January 2024

111 posts: 8 entries, 57 links, 10 quotes, 36 beats

Jan. 10, 2024

You Can Build an App in 60 Minutes with ChatGPT, with Geoffrey Litt (via) YouTube interview between Dan Shipper and Geoffrey Litt. They talk about how ChatGPT can build working React applications, which means you can build extremely niche applications that you wouldn’t have considered working on before. To demonstrate that idea, they collaborate to build a note-taking app to be used just during that specific episode recording, pasting React code from ChatGPT into Replit.

Geoffrey: “I started wondering what if we had a world where everybody could craft software tools that match the workflows they want to have, unique to themselves and not just using these pre-made tools. That’s what malleable software means to me.”

AI versus old-school creativity: a 50-student, semester-long showdown (via) An interesting study in which 50 university students “wrote, coded, designed, modeled, and recorded creations with and without AI, then judged the results”.

This study seems to explore the approach of incremental prompting to produce AI-driven final results. I use GPT-4 on a daily basis but my usage patterns are quite different: I very rarely let it actually write anything for me, instead using it as a brainstorming partner, or to provide feedback, or as an API reference or a thesaurus.

Jan. 11, 2024

Budgeting with ChatGPT (via) Jon Callahan describes an ingenious system he set up to categorize his credit card transactions using GPT 3.5. He has his bank email him details of any transaction over $0, then has an email filter to forward those to Postmark, which sends them via a JSON webhook to a custom Deno Deploy app which cleans the transaction up with a GPT 3.5 prompt (including guessing the merchant) and submits the results to a base in Airtable.

Jan. 12, 2024

Where is all of the fediverse? (via) Neat piece of independent research by Ben Cox, who used the /api/v1/instance/peers Mastodon API endpoint to get a list of “peers” (instances his instance knows about), then used their DNS records to figure out which hosting provider they were running on.

Next Ben combined that with active users from the /nodeinfo/2.0 API on each instance to figure out the number of users on each of those major hosting providers.

Cloudflare and Fastly were heavily represented, but it turns out you can unveil the underlying IP for most instances by triggering an HTTP Signature exchange with them and logging the result.

Ben’s conclusion: Hetzner and OVH are responsible for hosting a sizable portion of the fediverse as it exists today.
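The tallying step of that research can be sketched in a few lines of Python. This is a rough illustration, not Ben's actual code: the `/api/v1/instance/peers` path comes from the post, but the function names, the hostnames and the host-to-provider mapping are all hypothetical, and the sketch assumes the active user counts and provider lookups have already been collected.

```python
import json
from collections import Counter
from urllib.request import urlopen


def peers_url(instance: str) -> str:
    """URL of the Mastodon endpoint listing the instances this one knows about."""
    return f"https://{instance}/api/v1/instance/peers"


def fetch_peers(instance: str, timeout: float = 10.0) -> list[str]:
    """Fetch the peer list (a JSON array of hostnames) from a live instance."""
    with urlopen(peers_url(instance), timeout=timeout) as response:
        return json.load(response)


def users_by_provider(active_users: dict[str, int], provider_of: dict[str, str]) -> Counter:
    """Sum active users per hosting provider.

    Hosts with no known provider are grouped under "unknown".
    Both dictionaries are assumed inputs gathered in earlier steps.
    """
    totals = Counter()
    for host, users in active_users.items():
        totals[provider_of.get(host, "unknown")] += users
    return totals
```

Calling `users_by_provider(...).most_common()` on real data would give the kind of ranking the post describes, with the largest hosting providers first.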

Marimo (via) This is a really interesting new twist on Python notebooks.

The most powerful feature is that these notebooks are reactive: if you change the value or code in a cell (or change the value in an input widget) every other cell that depends on that value will update automatically. It’s the same pattern implemented by Observable JavaScript notebooks, but now it works for Python.

There are a bunch of other nice touches too. The notebook file format is a regular Python file, and those files can be run as “applications” in addition to being edited in the notebook interface. The interface is very nicely built, especially for such a young project—they even have GitHub Copilot integration for their CodeMirror cell editors.
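To make the reactive idea concrete, here is a toy model of it in plain Python. It is not how Marimo is implemented and it uses none of Marimo's API: the `ReactiveCells` class and its method names are invented for illustration, dependencies are declared by hand, and cycles are not handled.

```python
class ReactiveCells:
    """Toy reactive cell store: changing a value re-runs every dependent cell."""

    def __init__(self):
        self.values = {}  # name -> current value
        self.cells = {}   # name -> (function, tuple of dependency names)

    def define(self, name, func, deps=()):
        """Register a cell computed from the named dependencies and run it once."""
        self.cells[name] = (func, tuple(deps))
        self._run(name)

    def set_value(self, name, value):
        """Change an input value, then re-run everything that depends on it."""
        self.values[name] = value
        self._update_dependents(name)

    def _run(self, name):
        func, deps = self.cells[name]
        self.values[name] = func(*(self.values[d] for d in deps))
        self._update_dependents(name)

    def _update_dependents(self, changed):
        # Naive propagation: a cell may run more than once per change,
        # but every cell ends up consistent with its inputs.
        for name, (_, deps) in self.cells.items():
            if changed in deps:
                self._run(name)


nb = ReactiveCells()
nb.set_value("x", 2)
nb.define("double", lambda x: x * 2, deps=["x"])
nb.define("message", lambda double: f"double is {double}", deps=["double"])
nb.set_value("x", 5)
print(nb.values["message"])  # double is 10
```

Changing `x` is the only explicit step at the end. The `double` and `message` cells update on their own, which is the behavior the notebook provides across cells.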

Jan. 13, 2024

More than an OpenAI Wrapper: Perplexity Pivots to Open Source. I’m increasingly impressed with Perplexity.ai—I’m using it on a daily basis now. It’s by far the best implementation I’ve seen of LLM-assisted search—beating Microsoft Bing and Google Bard at their own game.

A year ago it was implemented as a GPT 3.5 powered wrapper around Microsoft Bing. To my surprise they’ve now evolved way beyond that: Perplexity has their own search index now and is running their own crawlers, and they’re using variants of Mistral 7B and Llama 70B as their models rather than continuing to depend on OpenAI.

Jan. 14, 2024

How We Executed a Critical Supply Chain Attack on PyTorch (via) Report on a now-handled supply chain attack against PyTorch which took advantage of GitHub Actions, stealing credentials from some self-hosted task runners.

The researchers first submitted a typo fix to the PyTorch repo, which gave them status as a “contributor” to that repo and meant that their future pull requests would have workflows executed without needing manual approval.

Their mitigation suggestion is to switch the option from ‘Require approval for first-time contributors’ to ‘Require approval for all outside collaborators’.

I think GitHub could help protect against this kind of attack by making it more obvious when you approve a PR to run workflows in a way that grants that contributor future access rights. I’d like an “approve this time only” button separate from “approve this run and allow future runs from user X”.

Making a Discord bot with PHP (via) Building bots for Discord used to require a long-running process that stayed connected, but a more recent change introduced slash commands via webhooks, making it much easier to write a bot that is backed by a simple request/response HTTP endpoint. Stuart Langridge explores how to build these in PHP here, but the same pattern in Python should be quite straightforward.
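As a rough idea of what the Python version of that pattern might look like, here is a minimal sketch of the request/response core. It is not a translation of Stuart's PHP: the `handle_interaction` function and the `hello` command are hypothetical, and the sketch deliberately leaves out the request signature check that Discord requires, so a real endpoint would need to verify the signature headers before trusting the body.

```python
import json

# Interaction and response type numbers used by Discord's interactions API.
PING = 1                         # Discord checking that the endpoint is alive
APPLICATION_COMMAND = 2          # a user invoked a slash command
PONG = 1                         # response: acknowledge a ping
CHANNEL_MESSAGE_WITH_SOURCE = 4  # response: reply with a message


def handle_interaction(body: bytes) -> dict:
    """Turn the JSON body of an interaction webhook into a JSON-ready response.

    Signature verification is omitted here and must happen before this call.
    """
    interaction = json.loads(body)
    if interaction["type"] == PING:
        return {"type": PONG}
    if interaction["type"] == APPLICATION_COMMAND:
        name = interaction["data"]["name"]
        if name == "hello":  # hypothetical command, registered separately
            content = "Hello from Python!"
        else:
            content = f"Unknown command: /{name}"
        return {"type": CHANNEL_MESSAGE_WITH_SOURCE, "data": {"content": content}}
    raise ValueError("Unsupported interaction type")
```

Because the handler is a pure function from request body to response dictionary, it can sit behind any web framework or serverless platform that can return JSON.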

Jan. 15, 2024

SQLite 3.45. Released today. The big new feature is JSONB support, a new, specific-to-SQLite binary internal representation of JSON which can provide up to a 3x performance improvement for JSON-heavy operations, plus a 5-10% saving in terms of bytes stored on disk.
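Here is a small sketch of trying JSONB out from Python's `sqlite3` module. It assumes the Python build is linked against SQLite 3.45 or later, and returns `None` otherwise. The `jsonb_demo` function, the `docs` table and the sample document are made up for illustration.

```python
import sqlite3


def jsonb_demo(doc: str):
    """Store JSON text as a JSONB blob, then read it back.

    Returns (json_text, name_field, blob_size_in_bytes), or None when the
    linked SQLite is older than 3.45 and has no jsonb() function.
    """
    if sqlite3.sqlite_version_info < (3, 45, 0):
        return None
    db = sqlite3.connect(":memory:")
    db.execute("CREATE TABLE docs (body BLOB)")
    # jsonb() converts JSON text into the binary representation.
    db.execute("INSERT INTO docs (body) VALUES (jsonb(?))", (doc,))
    # json() converts the blob back to text; ->> extracts a single value.
    row = db.execute(
        "SELECT json(body), body ->> '$.name', length(body) FROM docs"
    ).fetchone()
    db.close()
    return row


print(sqlite3.sqlite_version)
print(jsonb_demo('{"name": "sqlite", "tags": ["json", "jsonb"]}'))
```

The existing JSON functions accept the binary form directly, so queries written against text JSON columns should keep working when the stored value is JSONB.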

Slashing Data Transfer Costs in AWS by 99% (via) Brilliant trick by Daniel Kleinstein. If you have data in two availability zones in the same AWS region, transferring a TB will cost you $10 in ingress and $10 in egress at the inter-zone rates charged by AWS.

But... transferring data to an S3 bucket in that same region is free (aside from S3 storage costs). And buckets are available with free transfer to all availability zones in their region, which means that TB of data can be transferred between availability zones for mere cents of S3 storage costs provided you delete the data as soon as it’s transferred.
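The arithmetic behind that saving can be sketched as follows. The $0.01 per GB each way is derived from the $10 per TB figures above, taking 1 TB as 1,000 GB. The S3 storage price of $0.023 per GB-month is an assumption that varies by region and storage class, and S3 request charges are ignored.

```python
def inter_az_cost(gb: float, per_gb_each_way: float = 0.01) -> float:
    """Direct inter-zone transfer: charged once as egress and once as ingress."""
    return gb * per_gb_each_way * 2


def s3_relay_cost(
    gb: float,
    hours_stored: float,
    per_gb_month: float = 0.023,   # assumed storage price, not from the post
    hours_per_month: float = 730.0,
) -> float:
    """Relay via S3: transfer is free, so only pro-rated storage is paid."""
    return gb * per_gb_month * hours_stored / hours_per_month


direct = inter_az_cost(1000)                 # 1 TB sent directly
relay = s3_relay_cost(1000, hours_stored=1)  # 1 TB parked in S3 for an hour
print(f"direct ${direct:.2f}, via S3 ${relay:.4f}, saving {1 - relay / direct:.2%}")
```

Under these assumptions a terabyte that sits in the bucket for an hour costs roughly three cents, which is where a saving of more than 99% comes from. The longer the data stays in S3, the smaller the saving.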

Jan. 16, 2024

Daniel Situnayake explains TinyML in a Hacker News comment. Daniel worked on TensorFlow Lite at Google and co-wrote the TinyML O’Reilly book. He just posted a multi-paragraph comment on Hacker News explaining the term and describing some of the recent innovations in that space.

“TinyML means running machine learning on low power embedded devices, like microcontrollers, with constrained compute and memory.”

You likely have a TinyML system in your pocket right now: every cellphone has a low power DSP chip running a deep learning model for keyword spotting, so you can say "Hey Google" or "Hey Siri" and have it wake up on-demand without draining your battery. It’s an increasingly pervasive technology. [...]

It’s astonishing what is possible today: real time computer vision on microcontrollers, on-device speech transcription, denoising and upscaling of digital signals. Generative AI is happening, too, assuming you can find a way to squeeze your models down to size. We are an unsexy field compared to our hype-fueled neighbors, but the entire world is already filling up with this stuff and it’s only the very beginning. Edge AI is being rapidly deployed in a ton of fields: medical sensing, wearables, manufacturing, supply chain, health and safety, wildlife conservation, sports, energy, built environment—we see new applications every day.

On being listed in the court document as one of the artists whose work was used to train Midjourney, alongside 4,000 of my closest friends (via) Poignant webcomic from Cat and Girl.

I want to make my little thing and put it out in the world and hope that sometimes it means something to somebody else.

Without exploiting anyone.

And without being exploited.

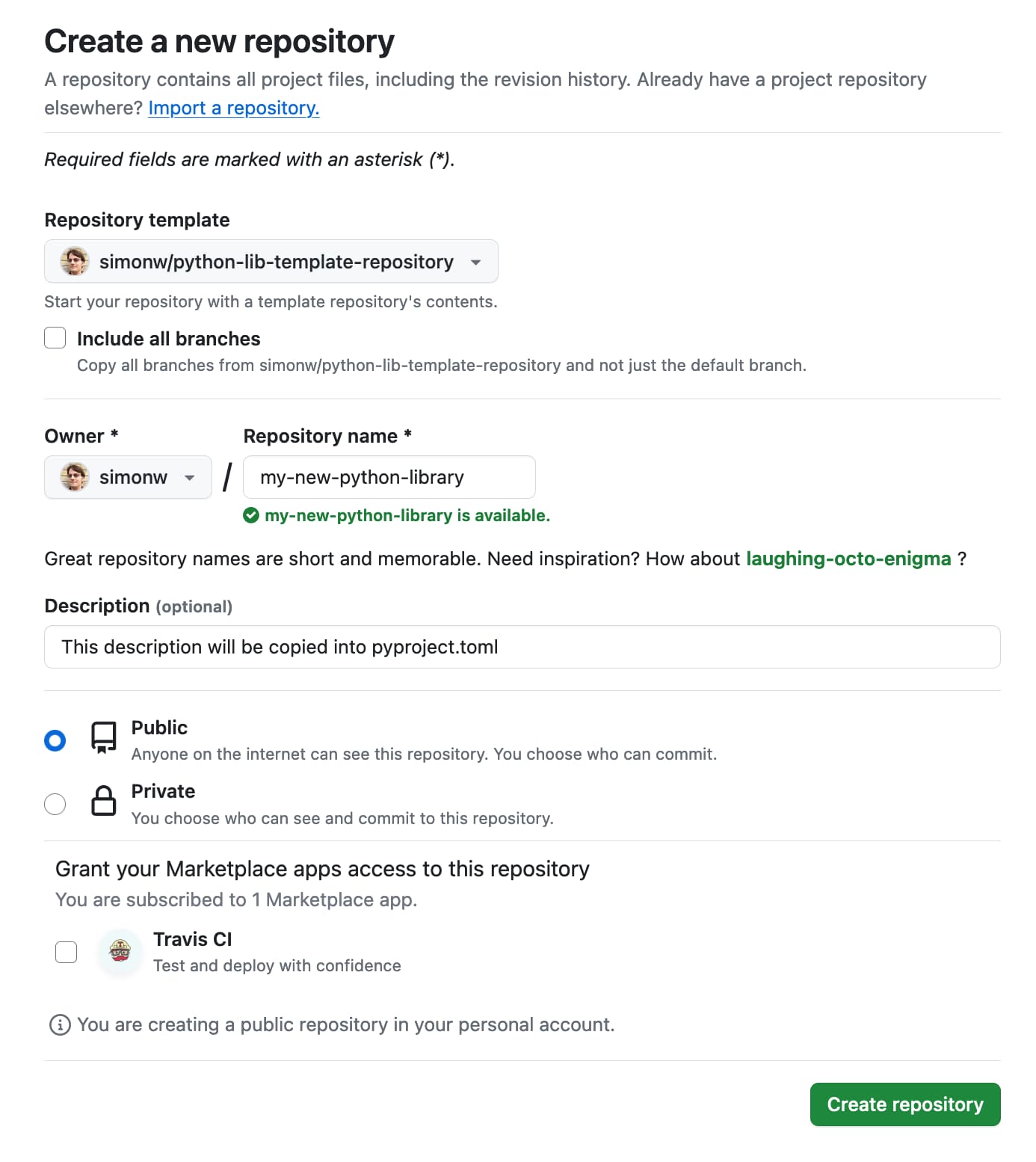

Publish Python packages to PyPI with a python-lib cookiecutter template and GitHub Actions

I use cookiecutter to start almost all of my Python projects. It helps me quickly generate a skeleton of a project with my preferred directory structure and configured tools.

[... 686 words]