532 posts tagged “projects”

Posts about projects I have worked on.

2026

Another significant alpha release, with two new headline features.

Datasette now offers users with the necessary permissions the ability to both execute write queries against their database and to save stored queries (renamed from "canned queries") both privately and for use by other members of their Datasette instance.

There's more detail in SQL write queries and stored queries in Datasette 1.0a31 on the Datasette blog, which now has three posts introducing new features since the blog launched two weeks ago.

Here's an animated demo from the blog post showing how the new execute query interface lets people get started with templated insert/update/delete queries from tables they have permission to edit:

The big new feature in this alpha is a new customizable "Jump to..." menu, described in detail in The extensible "Jump to" menu in Datasette 1.0a30 on the Datasette blog. You can try it out by hitting / on latest.datasette.io - it looks like this:

The new jump_items_sql() plugin hook allows plugins to add their own items to the set that's searched by the plugin.

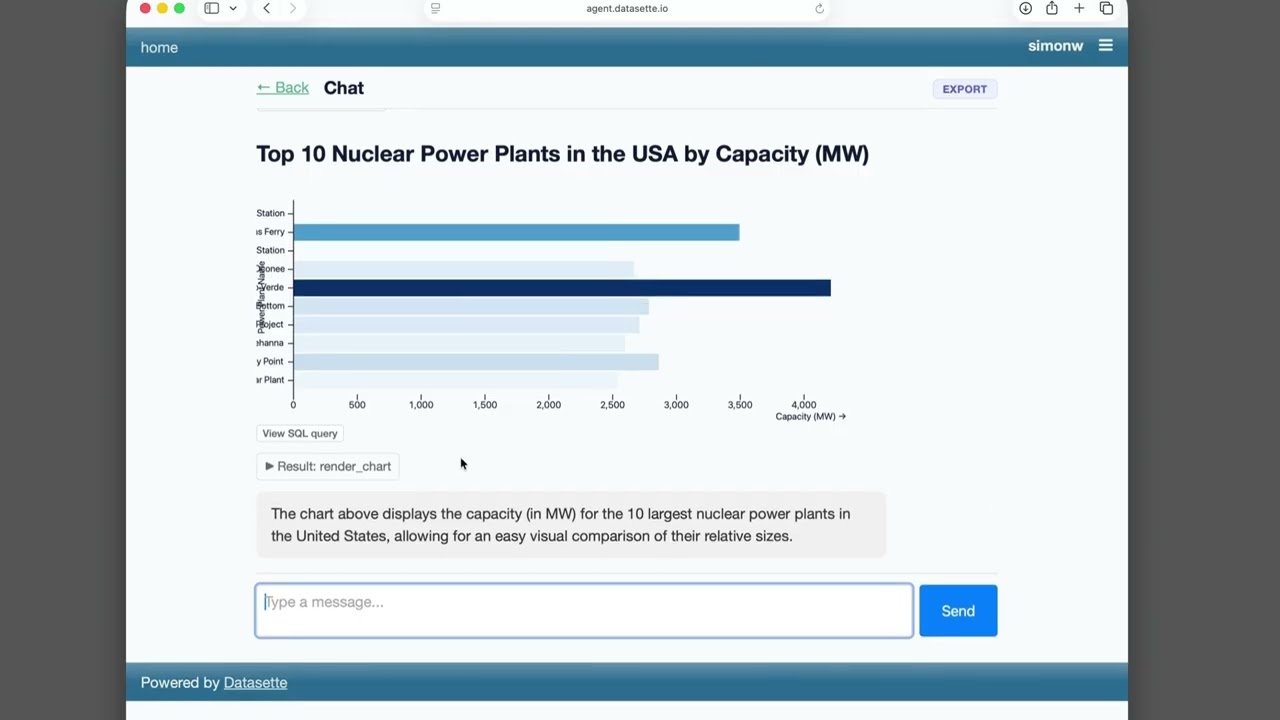

Datasette Agent

We just announced the first release of Datasette Agent, a new extensible AI assistant for Datasette. I’ve been working on my LLM Python library for just over three years now, and Datasette Agent represents the moment that LLM and Datasette finally come together. I’m really excited about it!

[... 659 words]Part of the infrastructure I use for publishing my iNaturalist sightings on my blog. I've been running this in production for a few weeks now, inspiring some iterations on how it works, so I decided to ship a 0.1 release.

You can see an example of the output in this JSON file.

- New

TokenRestrictions.abbreviated(datasette)utility method for creating"_r"dictionaries. #2695- Table headers and column options are now visible even if a table contains zero rows. #2701

- Fixed bug with display of column actions dialog on Mobile Safari. #2708

- Fixed bug where tests could crash with a segfault due to a race condition between

Datasette.close()andDatabase.close(). #2709

That segfault bug was gnarly. I added a mechanism to Datasette recently that would automatically close connections at the end of each test, but it turned out that introduced a race condition where an in-flight query could sometimes be executing in a thread against a connection while it was being closed. I ended up solving that by having Codex CLI (with GPT-5.5 xhigh) create a minimal Dockerfile that recreated the bug.

A bunch of useful stuff in this LLM alpha, but the most important detail is this one:

Most reasoning-capable OpenAI models now use the

/v1/responsesendpoint instead of/v1/chat/completions. This enables interleaved reasoning across tool calls for GPT-5 class models. #1435

This means you can now see the summarized reasoning tokens when you run prompts against an OpenAI model, displayed in a different color to standard error. Use the -R or --hide-reasoning flags if you don't want to see that.

LLM 0.32a0 is a major backwards-compatible refactor

I just released LLM 0.32a0, an alpha release of my LLM Python library and CLI tool for accessing LLMs, with some consequential changes that I’ve been working towards for quite a while.

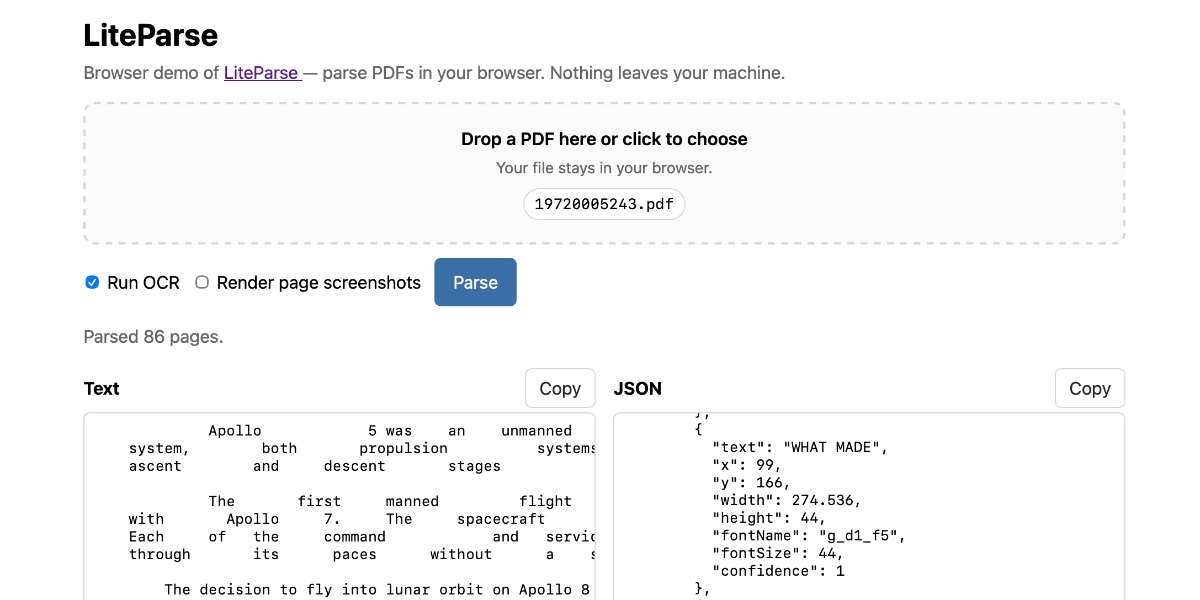

[... 1,874 words]Extract PDF text in your browser with LiteParse for the web

LlamaIndex have a most excellent open source project called LiteParse, which provides a Node.js CLI tool for extracting text from PDFs. I got a version of LiteParse working entirely in the browser, using most of the same libraries that LiteParse uses to run in Node.js.

[... 2,089 words]

- New

-r/--redactoption which shows the list of matches, asks for confirmation and then replaces every match withREDACTED, taking escaping rules into account.- New Python function

redact_file(file_path: str | Path, secrets: list[str], replacement: str = "REDACTED") -> int.

I like publishing transcripts of local Claude Code sessions using my claude-code-transcripts tool but I'm often paranoid that one of my API keys or similar secrets might inadvertently be revealed in the detailed log files.

I built this new Python scanning tool to help reassure me. You can feed it secrets and have it scan for them in a specified directory:

uvx scan-for-secrets $OPENAI_API_KEY -d logs-to-publish/

If you leave off the -d it defaults to the current directory.

It doesn't just scan for the literal secrets - it also scans for common encodings of those secrets e.g. backslash or JSON escaping, as described in the README.

If you have a set of secrets you always want to protect you can list commands to echo them in a ~/.scan-for-secrets.conf.sh file. Mine looks like this:

llm keys get openai

llm keys get anthropic

llm keys get gemini

llm keys get mistral

awk -F= '/aws_secret_access_key/{print $2}' ~/.aws/credentials | xargs

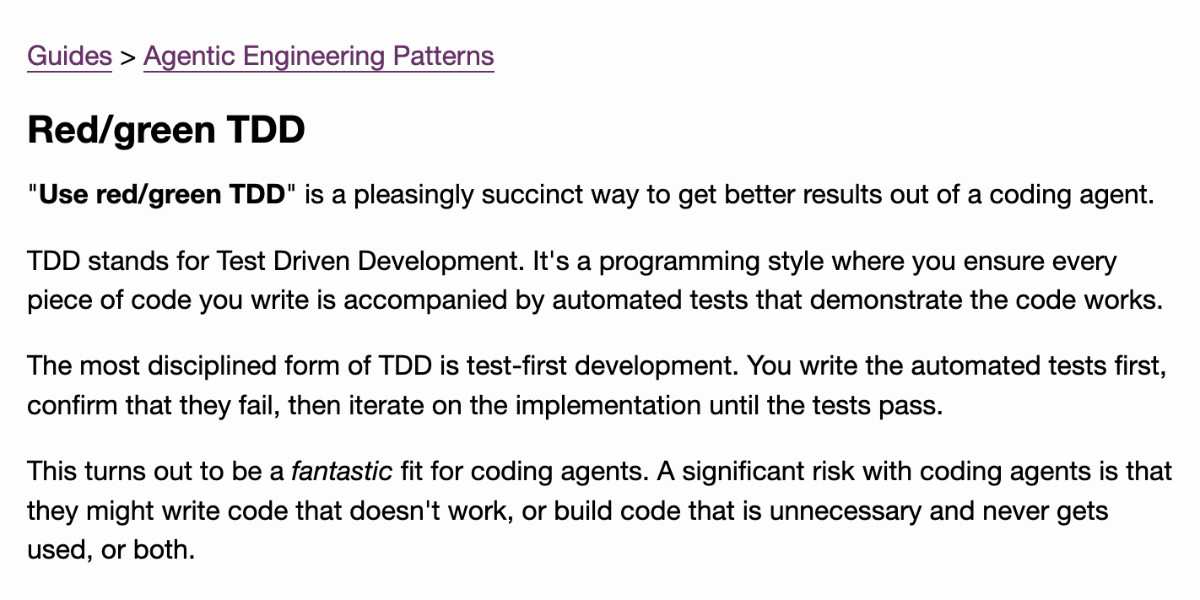

I built this tool using README-driven-development: I carefully constructed the README describing exactly how the tool should work, then dumped it into Claude Code and told it to build the actual tool (using red/green TDD, naturally.)

Writing about Agentic Engineering Patterns

I’ve started a new project to collect and document Agentic Engineering Patterns—coding practices and patterns to help get the best results out of this new era of coding agent development we find ourselves entering.

[... 554 words]Rodney v0.4.0. My Rodney CLI tool for browser automation attracted quite the flurry of PRs since I announced it last week. Here are the release notes for the just-released v0.4.0:

- Errors now use exit code 2, which means exit code 1 is just for for check failures. #15

- New

rodney assertcommand for running JavaScript tests, exit code 1 if they fail. #19- New directory-scoped sessions with

--local/--globalflags. #14- New

reload --hardandclear-cachecommands. #17- New

rodney start --showoption to make the browser window visible. Thanks, Antonio Cuni. #13- New

rodney connect PORTcommand to debug an already-running Chrome instance. Thanks, Peter Fraenkel. #12- New

RODNEY_HOMEenvironment variable to support custom state directories. Thanks, Senko Rašić. #11- New

--insecureflag to ignore certificate errors. Thanks, Jakub Zgoliński. #10- Windows support: avoid

Setsidon Windows via build-tag helpers. Thanks, adm1neca. #18- Tests now run on

windows-latestandmacos-latestin addition to Linux.

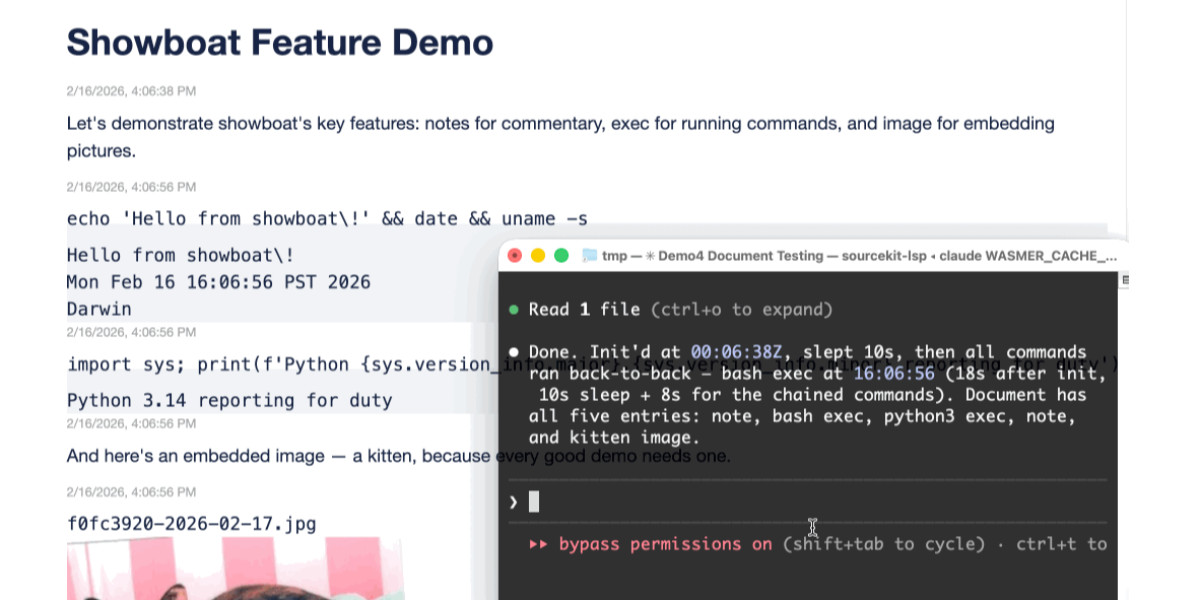

I've been using Showboat to create demos of new features - here those are for rodney assert, rodney reload --hard, rodney exit codes, and rodney start --local.

The rodney assert command is pretty neat: you can now Rodney to test a web app through multiple steps in a shell script that looks something like this (adapted from the README):

#!/bin/bash

set -euo pipefail

FAIL=0

check() {

if ! "$@"; then

echo "FAIL: $*"

FAIL=1

fi

}

rodney start

rodney open "https://example.com"

rodney waitstable

# Assert elements exist

check rodney exists "h1"

# Assert key elements are visible

check rodney visible "h1"

check rodney visible "#main-content"

# Assert JS expressions

check rodney assert 'document.title' 'Example Domain'

check rodney assert 'document.querySelectorAll("p").length' '2'

# Assert accessibility requirements

check rodney ax-find --role navigation

rodney stop

if [ "$FAIL" -ne 0 ]; then

echo "Some checks failed"

exit 1

fi

echo "All checks passed"Two new Showboat tools: Chartroom and datasette-showboat

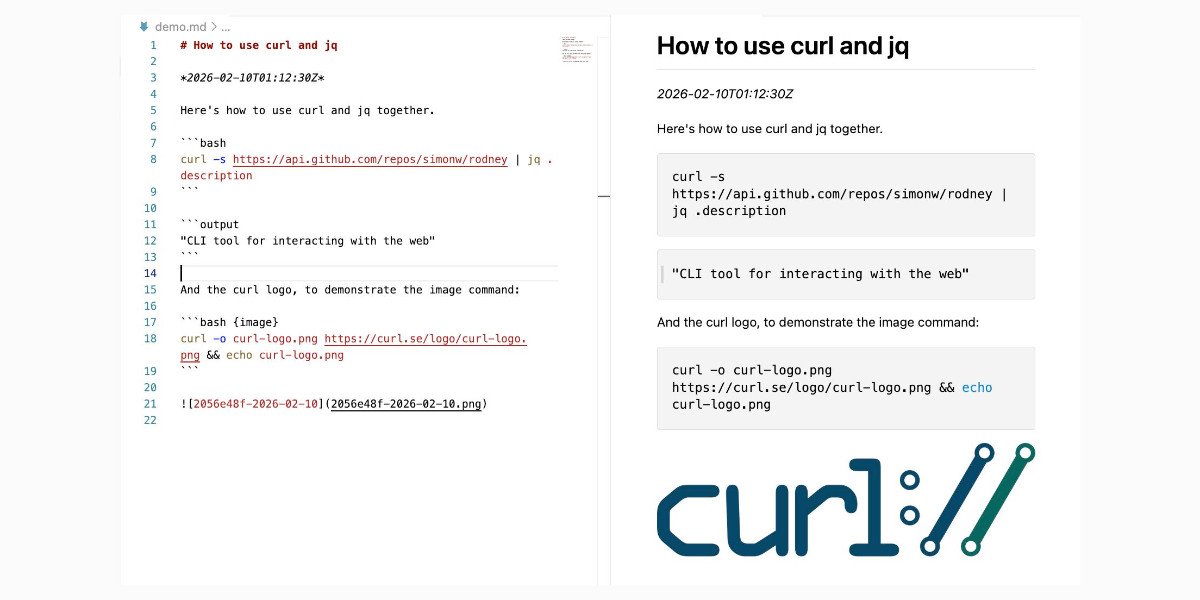

I introduced Showboat a week ago—my CLI tool that helps coding agents create Markdown documents that demonstrate the code that they have created. I’ve been finding new ways to use it on a daily basis, and I’ve just released two new tools to help get the best out of the Showboat pattern. Chartroom is a CLI charting tool that works well with Showboat, and datasette-showboat lets Showboat’s new remote publishing feature incrementally push documents to a Datasette instance.

[... 1,756 words]I'm a very heavy user of Claude Code on the web, Anthropic's excellent but poorly named cloud version of Claude Code where everything runs in a container environment managed by them, greatly reducing the risk of anything bad happening to a computer I care about.

I don't use the web interface at all (hence my dislike of the name) - I access it exclusively through their native iPhone and Mac desktop apps.

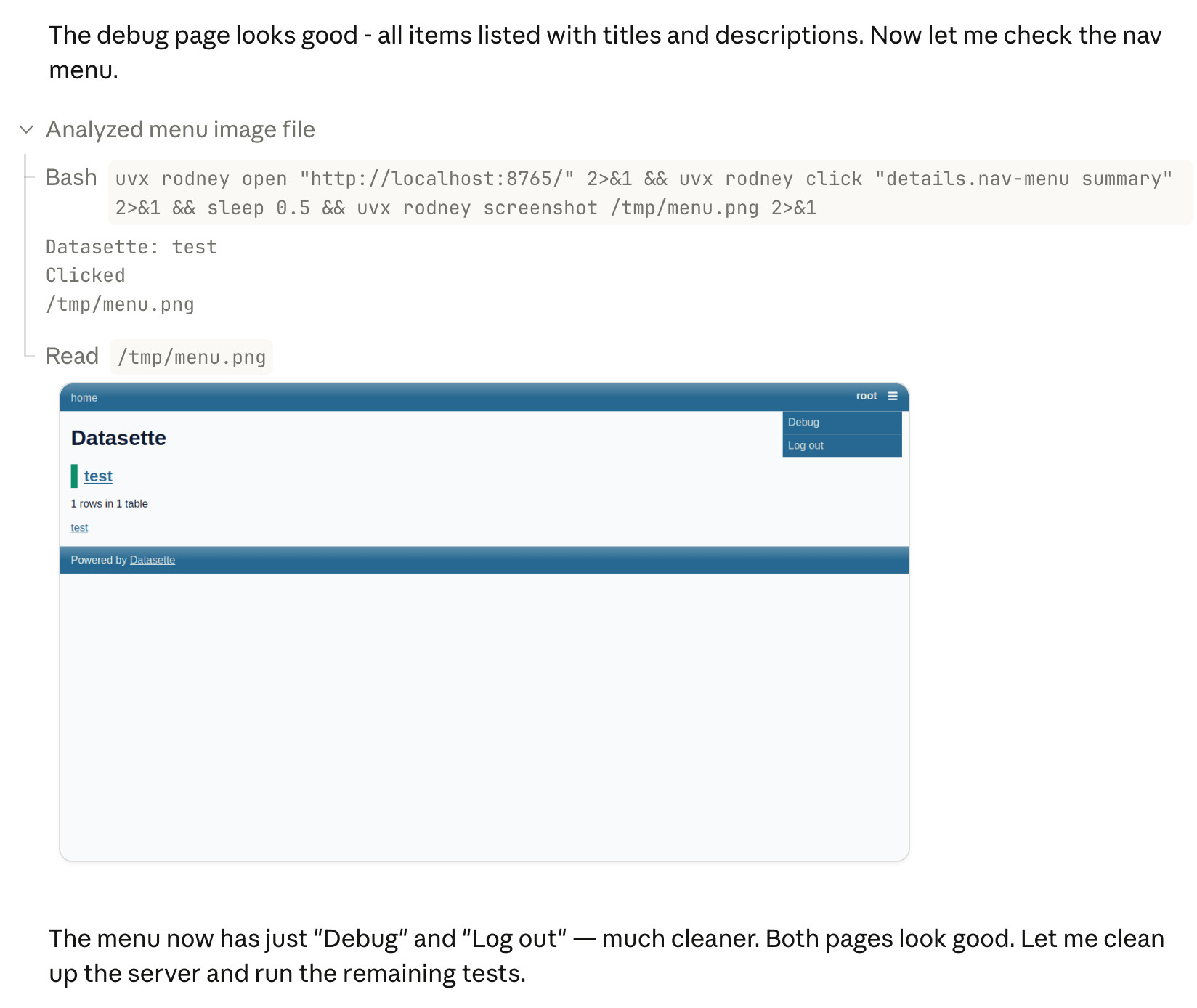

Something I particularly appreciate about the desktop app is that it lets you see images that Claude is "viewing" via its Read /path/to/image tool. Here's what that looks like:

This means you can get a visual preview of what it's working on while it's working, without waiting for it to push code to GitHub for you to try out yourself later on.

The prompt I used to trigger the above screenshot was:

Run "uvx rodney --help" and then use Rodney to manually test the new pages and menu - look at screenshots from it and check you think they look OK

I designed Rodney to have --help output that provides everything a coding agent needs to know in order to use the tool.

The Claude iPhone app doesn't display opened images yet, so I requested it as a feature just now in a thread on Twitter.

Introducing Showboat and Rodney, so agents can demo what they’ve built

A key challenge working with coding agents is having them both test what they’ve built and demonstrate that software to you, their supervisor. This goes beyond automated tests—we need artifacts that show their progress and help us see exactly what the agent-produced software is able to do. I’ve just released two new tools aimed at this problem: Showboat and Rodney.

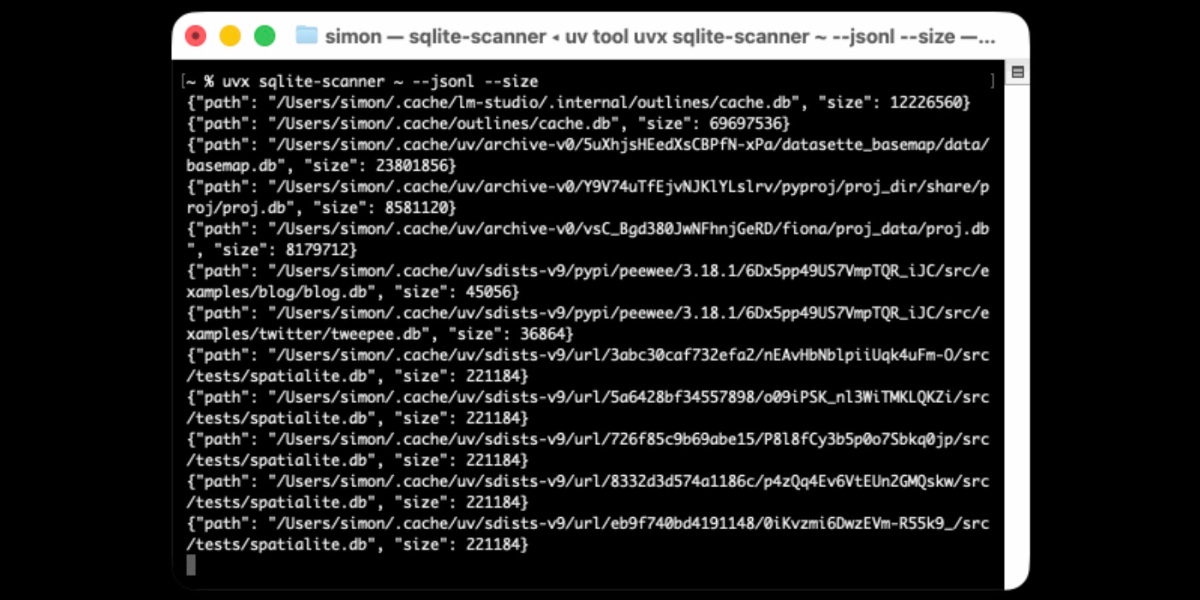

[... 2,023 words]Distributing Go binaries like sqlite-scanner through PyPI using go-to-wheel

I’ve been exploring Go for building small, fast and self-contained binary applications recently. I’m enjoying how there’s generally one obvious way to do things and the resulting code is boring and readable—and something that LLMs are very competent at writing. The one catch is distribution, but it turns out publishing Go binaries to PyPI means any Go binary can be just a uvx package-name call away.

Datasette 1.0a24. New Datasette alpha this morning. Key new features:

- Datasette's

Requestobject can now handlemultipart/form-datafile uploads via the new await request.form(files=True) method. I plan to use this for adatasette-filesplugin to support attaching files to rows of data. - The recommended development environment for hacking on Datasette itself now uses uv. Crucially, you can clone Datasette and run

uv run pytestto run the tests without needing to manually create a virtual environment or install dependencies first, thanks to the dev dependency group pattern. - A new

?_extra=render_cellparameter for both table and row JSON pages to return the results of executing the render_cell() plugin hook. This should unlock new JavaScript UI features in the future.

More details in the release notes. I also invested a bunch of work in eliminating flaky tests that were intermittently failing in CI - I think those are all handled now.

Introducing gisthost.github.io

I am a huge fan of gistpreview.github.io, the site by Leon Huang that lets you append ?GIST_id to see a browser-rendered version of an HTML page that you have saved to a Gist. The last commit was ten years ago and I needed a couple of small changes so I’ve forked it and deployed an updated version at gisthost.github.io.

2025

shot-scraper 1.9. New release of my shot-scraper CLI tool for taking screenshots and scraping websites with JavaScript from the terminal.

The new shot-scraper har -x https://simonwillison.net/ command is really neat. The inspiration was the digital forensics expedition I went on to figure out why Rob Pike got spammed. You can now perform a version of that investigation like this:

cd /tmp

shot-scraper har --wait 10000 'https://theaidigest.org/village?day=265' -x

Then dig around in the resulting JSON files in the /tmp/theaidigest-org-village folder.

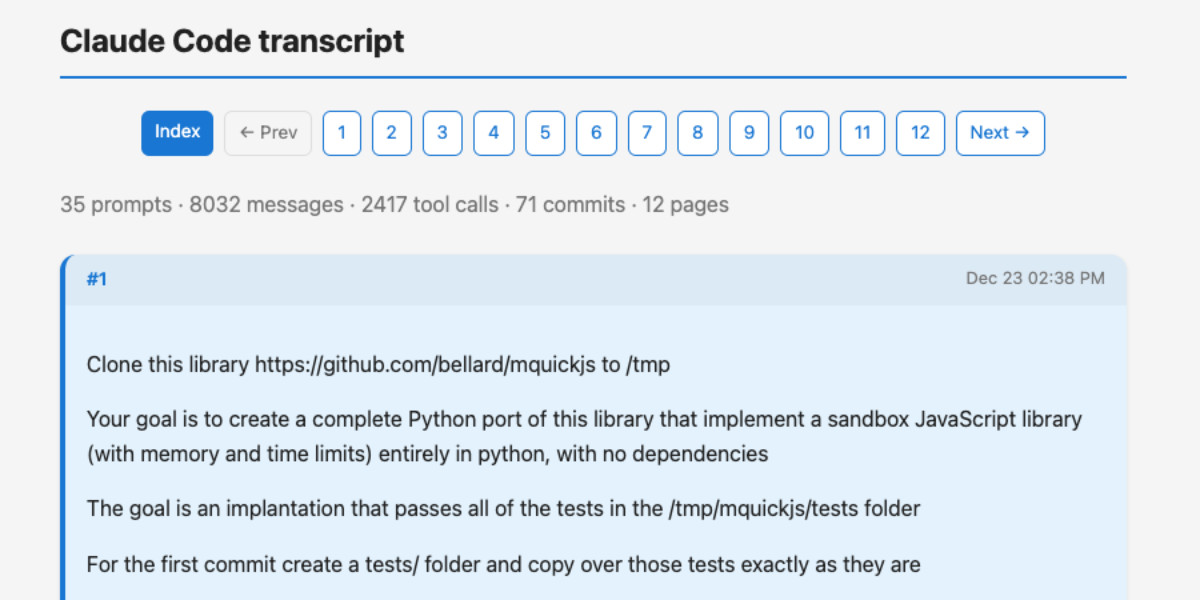

A new way to extract detailed transcripts from Claude Code

I’ve released claude-code-transcripts, a new Python CLI tool for converting Claude Code transcripts to detailed HTML pages that provide a better interface for understanding what Claude Code has done than even Claude Code itself. The resulting transcripts are also designed to be shared, using any static HTML hosting or even via GitHub Gists.

[... 1,082 words]uv-init-demos.

uv has a useful uv init command for setting up new Python projects, but it comes with a bunch of different options like --app and --package and --lib and I wasn't sure how they differed.

So I created this GitHub repository which demonstrates all of those options, generated using this update-projects.sh script (thanks, Claude) which will run on a schedule via GitHub Actions to capture any changes made by future releases of uv.

s3-credentials 0.17. New release of my s3-credentials CLI tool for managing credentials needed to access just one S3 bucket. Here are the release notes in full:

That s3-credentials localserver command (documented here) is a little obscure, but I found myself wanting something like that to help me test out a new feature I'm building to help create temporary Litestream credentials using Amazon STS.

Most of that new feature was built by Claude Code from the following starting prompt:

Add a feature s3-credentials localserver which starts a localhost weberver running (using the Python standard library stuff) on port 8094 by default but -p/--port can set a different port and otherwise takes an option that names a bucket and then takes the same options for read--write/read-only etc as other commands. It also takes a required --refresh-interval option which can be set as 5m or 10h or 30s. All this thing does is reply on / to a GET request with the IAM expiring credentials that allow access to that bucket with that policy for that specified amount of time. It caches internally the credentials it generates and will return the exact same data up until they expire (it also tracks expected expiry time) after which it will generate new credentials (avoiding dog pile effects if multiple requests ask at the same time) and return and cache those instead.

LLM 0.28. I released a new version of my LLM Python library and CLI tool for interacting with Large Language Models. Highlights from the release notes:

- New OpenAI models:

gpt-5.1,gpt-5.1-chat-latest,gpt-5.2andgpt-5.2-chat-latest. #1300, #1317- When fetching URLs as fragments using

llm -f URL, the request now includes a custom user-agent header:llm/VERSION (https://llm.datasette.io/). #1309- Fixed a bug where fragments were not correctly registered with their source when using

llm chat. Thanks, Giuseppe Rota. #1316- Fixed some file descriptor leak warnings. Thanks, Eric Bloch. #1313

- Type annotations for the OpenAI Chat, AsyncChat and Completion

execute()methods. Thanks, Arjan Mossel. #1315- The project now uses

uvand dependency groups for development. See the updated contributing documentation. #1318

That last bullet point about uv relates to the dependency groups pattern I wrote about in a recent TIL. I'm currently working through applying it to my other projects - the net result is that running the test suite is as simple as doing:

git clone https://github.com/simonw/llm

cd llm

uv run pytest

The new dev dependency group defined in pyproject.toml is automatically installed by uv run in a new virtual environment which means everything needed to run pytest is available without needing to add any extra commands.

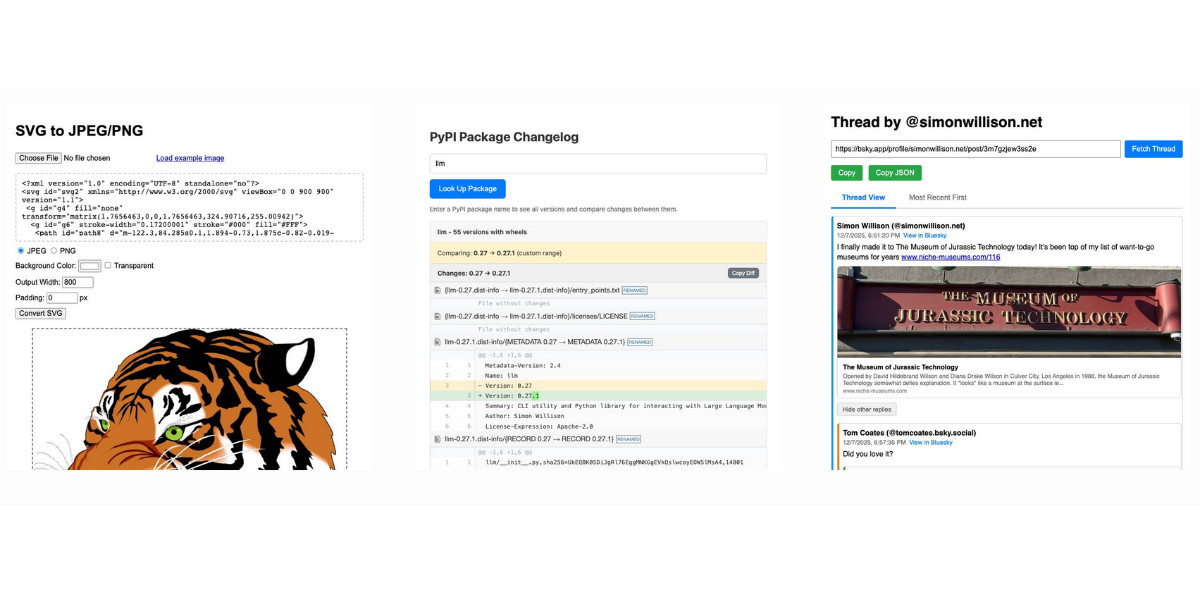

Useful patterns for building HTML tools

I’ve started using the term HTML tools to refer to HTML applications that I’ve been building which combine HTML, JavaScript, and CSS in a single file and use them to provide useful functionality. I have built over 150 of these in the past two years, almost all of them written by LLMs. This article presents a collection of useful patterns I’ve discovered along the way.

[... 4,231 words]Bluesky Thread Viewer thread by @simonwillison.net. I've been having a lot of fun hacking on my Bluesky Thread Viewer JavaScript tool with Claude Code recently. Here it renders a thread (complete with demo video) talking about the latest improvements to the tool itself.

I've been mostly vibe-coding this thing since April, now spanning 15 commits with contributions from ChatGPT, Claude, Claude Code for Web and Claude Code on my laptop. Each of those commits links to the transcript that created the changes in the commit.

Bluesky is a lot of fun to build tools like this against because the API supports CORS (so you can talk to it from an HTML+JavaScript page hosted anywhere) and doesn't require authentication.

llm-anthropic 0.23.

New plugin release adding support for Claude Opus 4.5, including the new thinking_effort option:

llm install -U llm-anthropic

llm -m claude-opus-4.5 -o thinking_effort low 'muse on pelicans'

This took longer to release than I had hoped because it was blocked on Anthropic shipping 0.75.0 of their Python library with support for thinking effort.

sqlite-utils 3.39.

I got a report of a bug in sqlite-utils concerning plugin installation - if you installed the package using uv tool install further attempts to install plugins with sqlite-utils install X would fail, because uv doesn't bundle pip by default. I had the same bug with Datasette a while ago, turns out I forgot to apply the fix to sqlite-utils.

Since I was pushing a new dot-release I decided to integrate some of the non-breaking changes from the 4.0 alpha I released last night.

I tried to have Claude Code do the backporting for me:

create a new branch called 3.x starting with the 3.38 tag, then consult https://github.com/simonw/sqlite-utils/issues/688 and cherry-pick the commits it lists in the second comment, then review each of the links in the first comment and cherry-pick those as well. After each cherry-pick run the command "just test" to confirm the tests pass and fix them if they don't. Look through the commit history on main since the 3.38 tag to help you with this task.

This worked reasonably well - here's the terminal transcript. It successfully argued me out of two of the larger changes which would have added more complexity than I want in a small dot-release like this.

I still had to do a bunch of manual work to get everything up to scratch, which I carried out in this PR - including adding comments there and then telling Claude Code:

Apply changes from the review on this PR https://github.com/simonw/sqlite-utils/pull/689

Here's the transcript from that.

The release is now out with the following release notes:

- Fixed a bug with

sqlite-utils installwhen the tool had been installed usinguv. (#687)- The

--functionsargument now optionally accepts a path to a Python file as an alternative to a string full of code, and can be specified multiple times - see Defining custom SQL functions. (#659)sqlite-utilsnow requires on Python 3.10 or higher.

sqlite-utils 4.0a1 has several (minor) backwards incompatible changes

I released a new alpha version of sqlite-utils last night—the 128th release of that package since I started building it back in 2018.

[... 1,049 words]llm-gemini 0.27. New release of my LLM plugin for Google's Gemini models:

- Support for nested schemas in Pydantic, thanks Bill Pugh. #107

- Now tests against Python 3.14.

- Support for YouTube URLs as attachments and the

media_resolutionoption. Thanks, Duane Milne. #112- New model:

gemini-3-pro-preview. #113

The YouTube URL feature is particularly neat, taking advantage of this API feature. I used it against the Google Antigravity launch video:

llm -m gemini-3-pro-preview \

-a 'https://www.youtube.com/watch?v=nTOVIGsqCuY' \

'Summary, with detailed notes about what this thing is and how it differs from regular VS Code, then a complete detailed transcript with timestamps'

Here's the result. A spot-check of the timestamps against points in the video shows them to be exactly right.

llm-anthropic 0.22.

New release of my llm-anthropic plugin:

- Support for Claude's new structured outputs feature for Sonnet 4.5 and Opus 4.1. #54

- Support for the web search tool using

-o web_search 1- thanks Nick Powell and Ian Langworth. #30

The plugin previously powered LLM schemas using this tool-call based workaround. That code is still used for Anthropic's older models.

I also figured out uv recipes for running the plugin's test suite in an isolated environment, which are now baked into the new Justfile.