Blogmarks

Filters: Sorted by date

Agents (via) Chip Huyen's 8,000 word practical guide to building useful LLM-driven workflows that take advantage of tools.

Chip starts by providing a definition of "agents" to be used in the piece - in this case it's LLM systems that plan an approach and then run tools in a loop until a goal is achieved. I like how she ties it back to the classic Norvig "thermostat" model - where an agent is "anything that can perceive its environment and act upon that environment" - by classifying tools as read-only actions (sensors) and write actions (actuators).

There's a lot of great advice in this piece. The section on planning is particularly strong, showing a system prompt with embedded examples and offering these tips on improving the planning process:

- Write a better system prompt with more examples.

- Give better descriptions of the tools and their parameters so that the model understands them better.

- Rewrite the functions themselves to make them simpler, such as refactoring a complex function into two simpler functions.

- Use a stronger model. In general, stronger models are better at planning.

The article is adapted from Chip's brand new O'Reilly book AI Engineering. I think this is an excellent advertisement for the book itself.

Phi-4 Bug Fixes by Unsloth

(via)

This explains why I was seeing weird <|im_end|> suffexes during my experiments with Phi-4 the other day: it turns out the Phi-4 tokenizer definition as released by Microsoft had a bug in it, and there was a small bug in the chat template as well.

Daniel and Michael Han figured this out and have now published GGUF files with their fixes on Hugging Face.

Double-keyed Caching: How Browser Cache Partitioning Changed the Web (via) Addy Osmani provides a clear explanation of how browser cache partitioning has changed the landscape of web optimization tricks.

Prior to 2020, linking to resources on a shared CDN could provide a performance boost as the user's browser might have already cached that asset from visiting a previous site.

This opened up privacy attacks, where a malicious site could use the presence of cached assets (based on how long they take to load) to reveal details of sites the user had previously visited.

Browsers now maintain a separate cache-per-origin. This has had less of an impact than I expected: Chrome's numbers show just a 3.6% increase in overall cache miss rate and 4% increase in bytes loaded from the network.

The most interesting implication here relates to domain strategy: hosting different aspects of a service on different subdomains now incurs additional cache-related performance costs compared to keeping everything under the same domain.

microsoft/phi-4. Here's the official release of Microsoft's Phi-4 LLM, now officially under an MIT license.

A few weeks ago I covered the earlier unofficial versions, where I talked about how the model used synthetic training data in some really interesting ways.

It benchmarks favorably compared to GPT-4o, suggesting this is yet another example of a GPT-4 class model that can run on a good laptop.

The model already has several available community quantizations. I ran the mlx-community/phi-4-4bit one (a 7.7GB download) using mlx-llm like this:

uv run --with 'numpy<2' --with mlx-lm python -c '

from mlx_lm import load, generate

model, tokenizer = load("mlx-community/phi-4-4bit")

prompt = "Generate an SVG of a pelican riding a bicycle"

if tokenizer.chat_template is not None:

messages = [{"role": "user", "content": prompt}]

prompt = tokenizer.apply_chat_template(

messages, add_generation_prompt=True

)

response = generate(model, tokenizer, prompt=prompt, verbose=True, max_tokens=2048)

print(response)'

Update: The model is now available via Ollama, so you can fetch a 9.1GB model file using ollama run phi4, after which it becomes available via the llm-ollama plugin.

Why are my live regions not working? (via) Useful article to help understand ARIA live regions. Short version: you can add a live region to your page like this:

<div id="notification" aria-live="assertive"></div>

Then any time you use JavaScript to modify the text content in that element it will be announced straight away by any screen readers - that's the "assertive" part. Using "polite" instead will cause the notification to be queued up for when the user is idle instead.

There are quite a few catches. Most notably, the contents of an aria-live region will usually NOT be spoken out loud when the page first loads, or when that element is added to the DOM. You need to ensure the element is available and not hidden before updating it for the effect to work reliably across different screen readers.

I got Claude Artifacts to help me build a demo for this, which is now available at tools.simonwillison.net/aria-live-regions. The demo includes instructions for turning VoiceOver on and off on both iOS and macOS to help try that out.

uv python install --reinstall 3.13.

I couldn't figure out how to upgrade the version of Python 3.13 I had previous installed using uv - I had Python 3.13.0.rc2. Thanks to Charlie Marsh I learned the command for upgrading to the latest uv-supported release:

uv python install --reinstall 3.13

I can confirm it worked using:

uv run --python 3.13 python -c 'import sys; print(sys.version)'

Caveat from Zanie Blue on my PR to document this:

There are some caveats we'd need to document here, like this will break existing tool installations (and other virtual environments) that depend on the version. You'd be better off doing

uv python install 3.13.Xto add the new patch version in addition to the existing one.

The future of htmx. Carson Gross and Alex Petros lay out an ambitious plan for htmx: stay stable, add few features and try to earn the same reputation for longevity that jQuery has (estimated to be used on 75.3% of websites).

In particular, we want to emulate these technical characteristics of jQuery that make it such a low-cost, high-value addition to the toolkits of web developers. Alex has discussed "Building The 100 Year Web Service" and we want htmx to be a useful tool for exactly that use case.

Websites that are built with jQuery stay online for a very long time, and websites built with htmx should be capable of the same (or better).

Going forward, htmx will be developed with its existing users in mind. [...]

People shouldn’t feel pressure to upgrade htmx over time unless there are specific bugs that they want fixed, and they should feel comfortable that the htmx that they write in 2025 will look very similar to htmx they write in 2035 and beyond.

Stimulation Clicker (via) Neal Agarwal just created the worst webpage. It's extraordinary. All of the audio was created specially for this project, so absolutely listen in to the true crime podcast and other delightfully weird little details.

Works best on a laptop - on mobile I ran into some bugs.

AI’s next leap requires intimate access to your digital life. I'm quoted in this Washington Post story by Gerrit De Vynck about "agents" - which in this case are defined as AI systems that operate a computer system like a human might, for example Anthropic's Computer Use demo.

“The problem is that language models as a technology are inherently gullible,” said Simon Willison, a software developer who has tested many AI tools, including Anthropic’s technology for agents. “How do you unleash that on regular human beings without enormous problems coming up?”

I got the closing quote too, though I'm not sure my skeptical tone of voice here comes across once written down!

“If you ignore the safety and security and privacy side of things, this stuff is so exciting, the potential is amazing,” Willison said. “I just don’t see how we get past these problems.”

I Live My Life a Quarter Century at a Time (via) Delightful Steve Jobs era Apple story from James Thomson, who built the first working prototype of the macOS Dock.

O2 unveils Daisy, the AI granny wasting scammers’ time (via) Bit of a surprising press release here from 14th November 2024: Virgin Media O2 (the UK companies merged in 2021) announced their entrance into the scambaiting game:

Daisy combines various AI models which work together to listen and respond to fraudulent calls instantaneously and is so lifelike it has successfully kept numerous fraudsters on calls for 40 minutes at a time.

Hard to tell from the press release how much this is a sincere ongoing project as opposed to a short-term marketing gimmick.

After several weeks of taking calls in the run up to International Fraud Awareness Week (November 17-23), the AI Scambaiter has told frustrated scammers meandering stories of her family, talked at length about her passion for knitting and provided exasperated callers with false personal information including made-up bank details.

They worked with YouTube scambaiter Jim Browning, who tweeted about Daisy here.

Using LLMs and Cursor to become a finisher (via) Zohaib Rauf describes a pattern I've seen quite a few examples of now: engineers who moved into management but now find themselves able to ship working code again (at least for their side projects) thanks to the productivity boost they get from leaning on LLMs.

Zohaib also provides a very useful detailed example of how they use a combination of ChatGPT and Cursor to work on projects, by starting with a spec created through collaboration with o1, then saving that as a SPEC.md Markdown file and adding that to Cursor's context in order to work on the actual implementation.

What we learned copying all the best code assistants (via) Steve Krouse describes Val Town's experience so far building features that use LLMs, starting with completions (powered by Codeium and Val Town's own codemirror-codeium extension) and then rolling through several versions of their Townie code assistant, initially powered by GPT 3.5 but later upgraded to Claude 3.5 Sonnet.

This is a really interesting space to explore right now because there is so much activity in it from larger players. Steve classifies Val Town's approach as "fast following" - trying to spot the patterns that are proven to work and bring them into their own product.

It's challenging from a strategic point of view because Val Town's core differentiator isn't meant to be AI coding assistance: they're trying to build the best possible ecosystem for hosting and iterating lightweight server-side JavaScript applications. Isn't this stuff all a distraction from that larger goal?

Steve concludes:

However, it still feels like there’s a lot to be gained with a fully-integrated web AI code editor experience in Val Town – even if we can only get 80% of the features that the big dogs have, and a couple months later. It doesn’t take that much work to copy the best features we see in other tools. The benefits to a fully integrated experience seems well worth that cost. In short, we’ve had a lot of success fast-following so far, and think it’s worth continuing to do so.

It continues to be wild to me how features like this are easy enough to build now that they can be part-time side features at a small startup, and not the entire project.

Friday Squid Blogging: Anniversary Post. Bruce Schneier:

I made my first squid post nineteen years ago this week. Between then and now, I posted something about squid every week (with maybe only a few exceptions). There is a lot out there about squid, even more if you count the other meanings of the word.

I think that's 1,004 posts about squid in 19 years. Talk about a legendary streak!

Can LLMs write better code if you keep asking them to “write better code”?

(via)

Really fun exploration by Max Woolf, who started with a prompt requesting a medium-complexity Python challenge - "Given a list of 1 million random integers between 1 and 100,000, find the difference between the smallest and the largest numbers whose digits sum up to 30" - and then continually replied with "write better code" to see what happened.

It works! Kind of... it's not quite as simple as "each time round you get better code" - the improvements sometimes introduced new bugs and often leaned into more verbose enterprisey patterns - but the model (Claude in this case) did start digging into optimizations like numpy and numba JIT compilation to speed things up.

I used to find the thing where telling an LLM to "do better" worked completely surprising. I've since come to terms with why it works: LLMs are effectively stateless, so each prompt you execute is considered as an entirely new problem. When you say "write better code" your prompt is accompanied with a copy of the previous conversation, so you're effectively saying "here is some code, suggest ways to improve it". The fact that the LLM itself wrote the previous code isn't really important.

I've been having a lot of fun recently using LLMs for cooking inspiration. "Give me a recipe for guacamole", then "make it tastier" repeated a few times results in some bizarre and fun variations on the theme!

Oxide and Friends Predictions 2025—on Monday Jan 6th at 5pm Pacific (via) I'll be participating in the annual Oxide and Friends predictions podcast / live recording next Monday (6th January) at 5pm Pacific, in their Discord.

The event description reads:

Join us in making 1-, 3- and 6-year tech predictions -- and to revisit our 1-year predictions from 2024 and our 3-year predictions from 2022!

I find the idea of predicting six months ahead in terms of LLMs hard to imagine, so six years will be absolute science fiction!

I had a lot of fun talking about open source LLMs on this podcast a year ago.

Largest known prime number (via) Discovered on 12th October 2024 by the Great Internet Mersenne Prime Search. The new largest prime number is 2136279841-1 - 41,024,320 digits long.

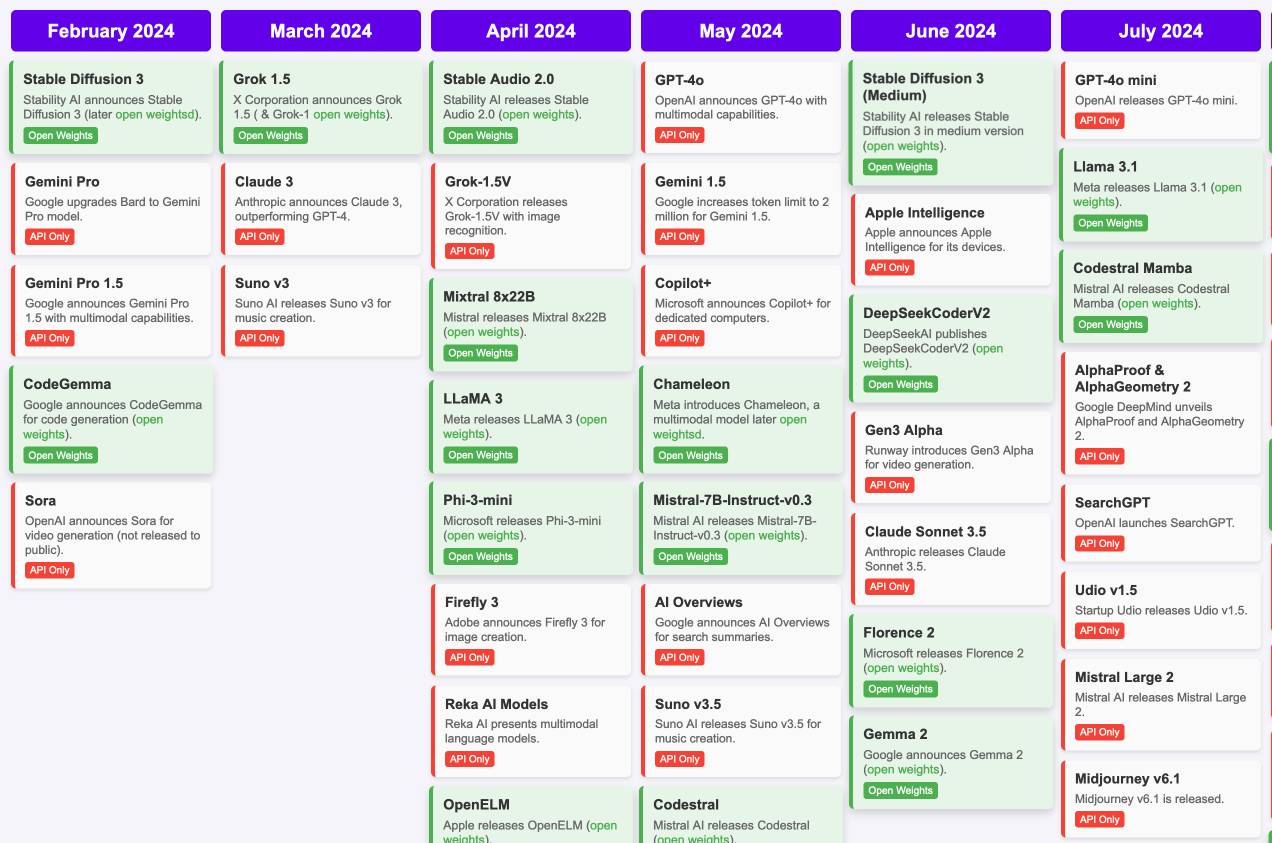

Timeline of AI model releases in 2024 (via) VB assembled this detailed timeline of every significant AI model release in 2024, for both API and open weight models.

I'd hoped to include something like this in my 2024 review - I'm glad I didn't bother, because VB's is way better than anything I had planned.

VB built it with assistance from DeepSeek v3, incorporating data from this Artificial Intelligence Timeline project by NHLOCAL. The source code (pleasingly simple HTML, CSS and a tiny bit of JavaScript) is on GitHub.

Severance on FanFare. I'm coordinating a rewatch of season one of Severance on MetaFilter Fanfare in preparation for season two (due to start on January 17th). I'm posting an episode every three days - we are up to episode 5 so far (excellently titled "The Grim Barbarics of Optics and Design").

Severance is a show that rewatches really well. There are so many delightful details that stand out once you know more about where the series is going.

How we think about Threads’ iOS performance (via) This article by Dave LaMacchia and Jason Patterson provides an incredibly deep insight into what effective performance engineering looks like for an app with 100s of millions of users.

I always like hearing about custom performance metrics with their own acronyms. Here we are introduced to %FIRE - the portion of people who experience a frustrating image-render experience (based on how long an image takes to load after the user scrolls it into the viewport), TTNC (time-to-network content) measuring time from app launch to fresh content visible in the feed and cPSR (creation-publish success rate) for how often a user manages to post content that they started to create.

This article introduced me to the concept of a boundary test, described like this:

A boundary test is one where we measure extreme ends of a boundary to learn what the effect is. In our case, we introduced a slight bit of latency when a small percentage of our users would navigate to a user profile, to the conversion view for a post, or to their activity feed.

This latency would allow us to extrapolate what the effect would be if we similarly improved how we delivered content to those views.

[...]

We learned that iOS users don’t tolerate a lot of latency. The more we added, the less often they would launch the app and the less time they would stay in it. With the smallest latency injection, the impact was small or negligible for some views, but the largest injections had negative effects across the board. People would read fewer posts, post less often themselves, and in general interact less with the app. Remember, we weren’t injecting latency into the core feed, either; just into the profile, permalink, and activity.

There's a whole lot more in there, including details of their custom internal performance logger (SLATE, the “Systemic LATEncy” logger) and several case studies of surprising performance improvements made with the assistance of their metrics and tools, plus some closing notes on how Swift concurrency is being adopted throughout Meta.

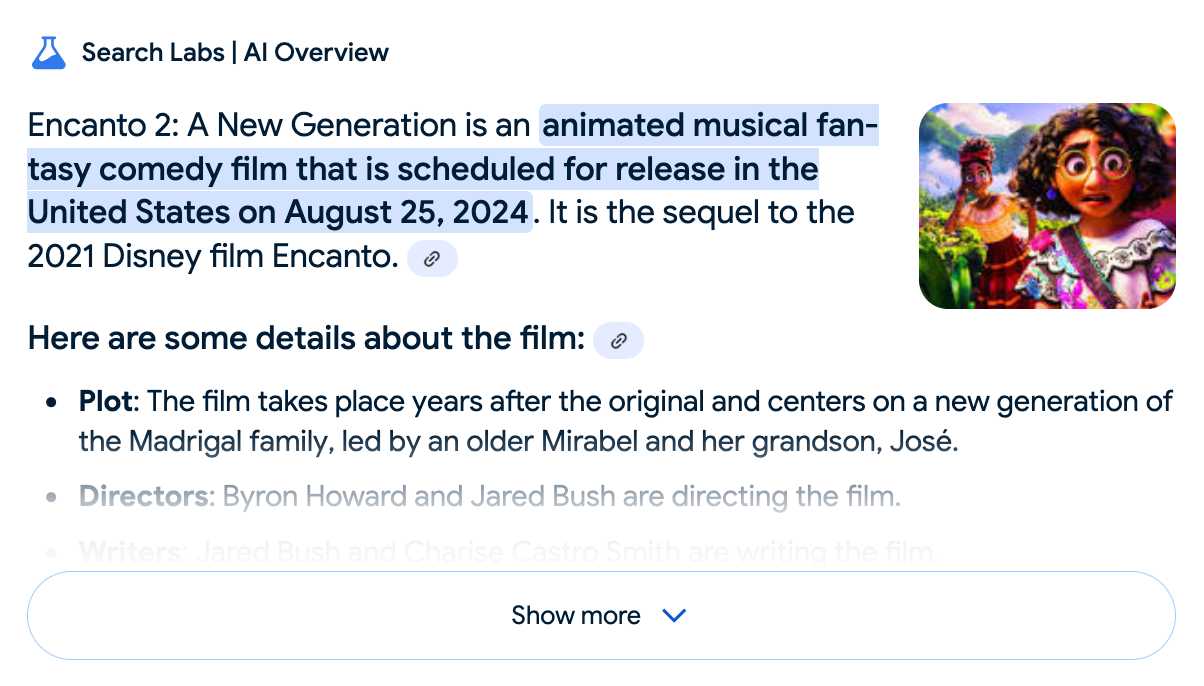

Google search hallucinates Encanto 2. Jason Schreier on Bluesky:

I was excited to tell my kids that there's a sequel to Encanto, only to scroll down and learn that Google's AI just completely made this up

I just replicated the same result by searching Google for encanto 2. Here's what the "AI overview" at the top of the page looked like:

Only when I clicked the "Show more" link did it become clear what had happened:

The link in that first snippet was to the Encanto 2: A New Generation page on Idea Wiki:

This is a fanon wiki, and just like fan-fiction wikis, this one has a variety of fan created ideas on here! These include potential sequels and new series that have yet to exist.

Other cited links included this article about Instagram fan art and Encanto's Sequel Chances Addressed by Disney Director, a very thin article built around a short quote from Encanto's director at D23 Brazil.

And that August 2024 release date (which the AI summary weirdly lists as "scheduled for release" despite that date being five months in the past)? It's from the Idea Wiki imaginary info box for the film.

This is a particularly clear example of how badly wrong AI summarization can go. LLMs are gullible: they believe what you tell them, and the web is full of misleading information - some of which is completely innocent.

Update: I've had some pushback over my use of the term "hallucination" here, on the basis that the LLM itself is doing what it's meant to: summarizing the RAG content that has been provided to it by the host system.

That's fair: this is not a classic LLM hallucination, where the LLM produces incorrect data purely from knowledge partially encoded in its weights.

I classify this as a bug in Google's larger LLM-powered AI overview system. That system should be able to take the existence of invalid data sources into account - given how common searches for non-existent movie sequels (or TV seasons) are, I would hope that AI overviews could classify such searches and take extra steps to avoid serving misleading answers.

So think this is a "hallucination" bug in the AI overview system itself: it's making statements about the world that are not true.

My Approach to Building Large Technical Projects (via) Mitchell Hashimoto wrote this piece about taking on large projects back in June 2023. The project he described in the post is a terminal emulator written in Zig called Ghostty which just reached its 1.0 release.

I've learned that when I break down my large tasks in chunks that result in seeing tangible forward progress, I tend to finish my work and retain my excitement throughout the project. People are all motivated and driven in different ways, so this may not work for you, but as a broad generalization I've not found an engineer who doesn't get excited by a good demo. And the goal is to always give yourself a good demo.

For backend-heavy projects the lack of an initial UI is a challenge here, so Mitchell advocates for early automated tests as a way to start exercising code and seeing progress right from the start. Don't let tests get in the way of demos though:

No matter what I'm working on, I try to build one or two demos per week intermixed with automated test feedback as explained in the previous section.

Building a demo also provides you with invaluable product feedback. You can quickly intuit whether something feels good, even if it isn't fully functional.

For more on the development of Ghostty see this talk Mitchell gave at Zig Showtime last year:

I want the terminal to be a modern platform for text application development, analogous to the browser being a modern platform for GUI application development (for better or worse).

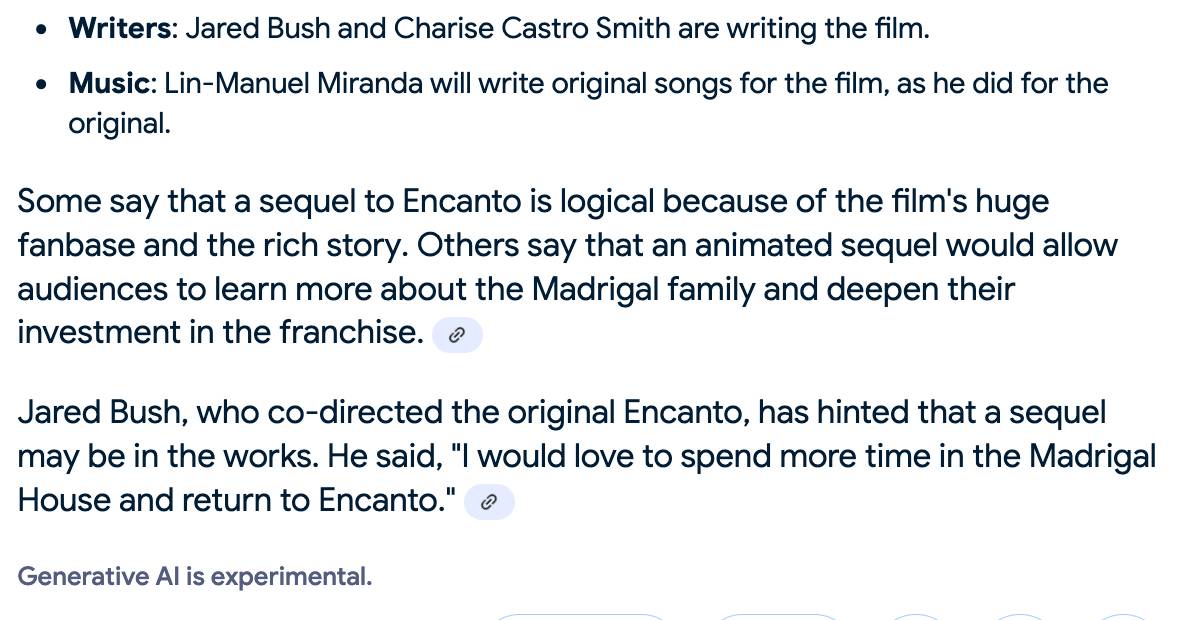

Open WebUI. I tried out this open source (MIT licensed, JavaScript and Python) localhost UI for accessing LLMs today for the first time. It's very nicely done.

I ran it with uvx like this:

uvx --python 3.11 open-webui serve

On first launch it installed a bunch of dependencies and then downloaded 903MB to ~/.cache/huggingface/hub/models--sentence-transformers--all-MiniLM-L6-v2 - a copy of the all-MiniLM-L6-v2 embedding model, presumably for its RAG feature.

It then presented me with a working Llama 3.2:3b chat interface, which surprised me because I hadn't spotted it downloading that model. It turns out that was because I have Ollama running on my laptop already (with several models, including Llama 3.2:3b, already installed) - and Open WebUI automatically detected Ollama and gave me access to a list of available models.

I found a "knowledge" section and added all of the Datasette documentation (by dropping in the .rst files from the docs) - and now I can type # in chat to search for a file, add that to the context and then ask questions about it directly.

I selected the spatialite.rst.txt file, prompted it with "How do I use SpatiaLite with Datasette" and got back this:

That's honestly a very solid answer, especially considering the Llama 3.2 3B model from Ollama is just a 1.9GB file! It's impressive how well that model can handle basic Q&A and summarization against text provided to it - it somehow has a 128,000 token context size.

Open WebUI has a lot of other tricks up its sleeve: it can talk to API models such as OpenAI directly, has optional integrations with web search and custom tools and logs every interaction to a SQLite database. It also comes with extensive documentation.

DeepSeek_V3.pdf (via) The DeepSeek v3 paper (and model card) are out, after yesterday's mysterious release of the undocumented model weights.

Plenty of interesting details in here. The model pre-trained on 14.8 trillion "high-quality and diverse tokens" (not otherwise documented).

Following this, we conduct post-training, including Supervised Fine-Tuning (SFT) and Reinforcement Learning (RL) on the base model of DeepSeek-V3, to align it with human preferences and further unlock its potential. During the post-training stage, we distill the reasoning capability from the DeepSeek-R1 series of models, and meanwhile carefully maintain the balance between model accuracy and generation length.

By far the most interesting detail though is how much the training cost. DeepSeek v3 trained on 2,788,000 H800 GPU hours at an estimated cost of $5,576,000. For comparison, Meta AI's Llama 3.1 405B (smaller than DeepSeek v3's 685B parameters) trained on 11x that - 30,840,000 GPU hours, also on 15 trillion tokens.

DeepSeek v3 benchmarks comparably to Claude 3.5 Sonnet, indicating that it's now possible to train a frontier-class model (at least for the 2024 version of the frontier) for less than $6 million!

For reference, this level of capability is supposed to require clusters of closer to 16K GPUs, the ones being brought up today are more around 100K GPUs. E.g. Llama 3 405B used 30.8M GPU-hours, while DeepSeek-V3 looks to be a stronger model at only 2.8M GPU-hours (~11X less compute). If the model also passes vibe checks (e.g. LLM arena rankings are ongoing, my few quick tests went well so far) it will be a highly impressive display of research and engineering under resource constraints.

DeepSeek also announced their API pricing. From February 8th onwards:

Input: $0.27/million tokens ($0.07/million tokens with cache hits)

Output: $1.10/million tokens

Claude 3.5 Sonnet is currently $3/million for input and $15/million for output, so if the models are indeed of equivalent quality this is a dramatic new twist in the ongoing LLM pricing wars.

Cognitive load is what matters (via) Excellent living document (the underlying repo has 625 commits since being created in May 2023) maintained by Artem Zakirullin about minimizing the cognitive load needed to understand and maintain software.

This all rings very true to me. I judge the quality of a piece of code by how easy it is to change, and anything that causes me to take on more cognitive load - unraveling a class hierarchy, reading though dozens of tiny methods - reduces the quality of the code by that metric.

Lots of accumulated snippets of wisdom in this one.

Mantras like "methods should be shorter than 15 lines of code" or "classes should be small" turned out to be somewhat wrong.

deepseek-ai/DeepSeek-V3-Base (via) No model card or announcement yet, but this new model release from Chinese AI lab DeepSeek (an arm of Chinese hedge fund High-Flyer) looks very significant.

It's a huge model - 685B parameters, 687.9 GB on disk (TIL how to size a git-lfs repo). The architecture is a Mixture of Experts with 256 experts, using 8 per token.

For comparison, Meta AI's largest released model is their Llama 3.1 model with 405B parameters.

The new model is apparently available to some people via both chat.deepseek.com and the DeepSeek API as part of a staged rollout.

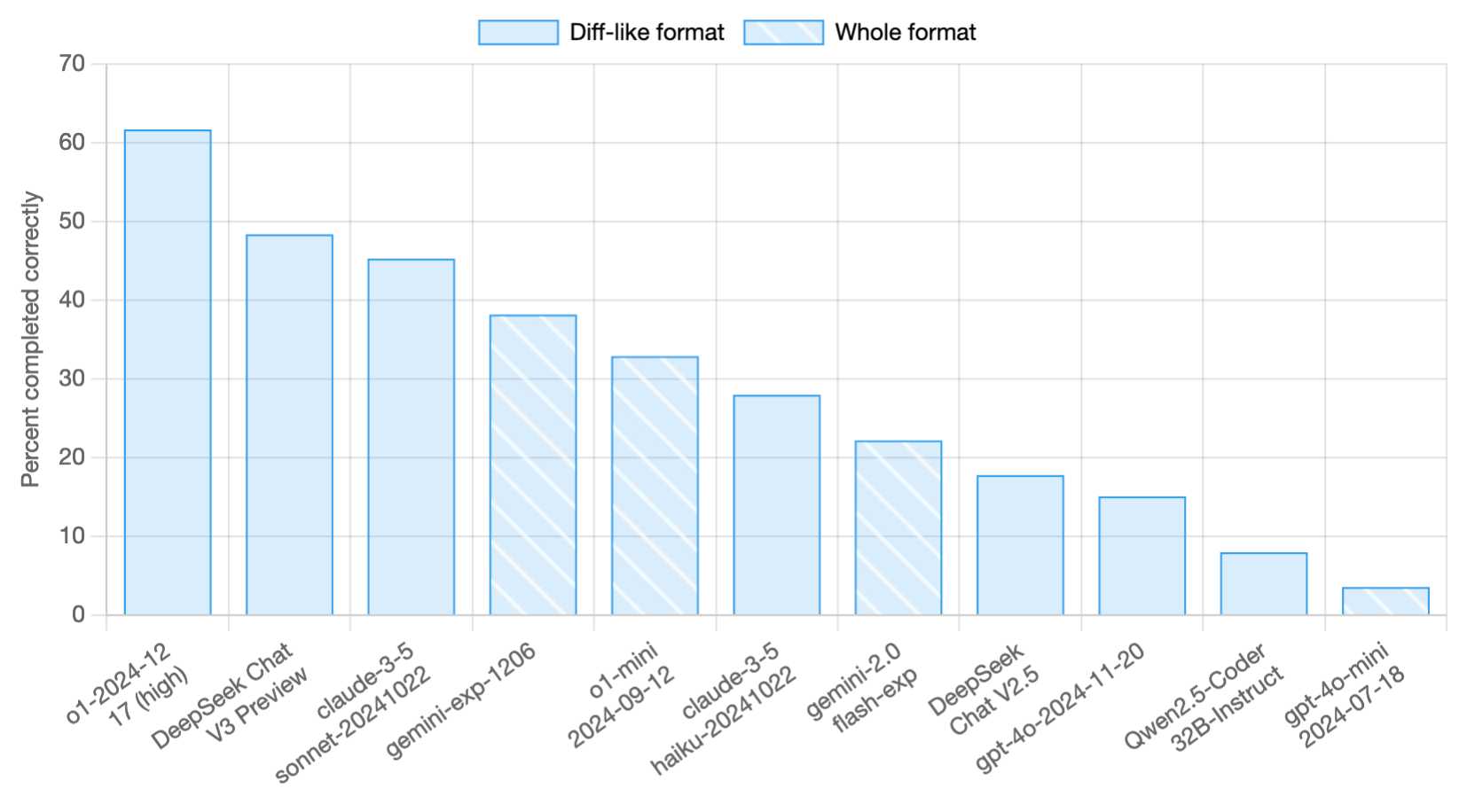

Paul Gauthier got API access and used it to update his new Aider Polyglot leaderboard - DeepSeek v3 preview scored 48.4%, putting it in second place behind o1-2024-12-17 (high) and in front of both claude-3-5-sonnet-20241022 and gemini-exp-1206!

I never know if I can believe models or not (the first time I asked "what model are you?" it claimed to be "based on OpenAI's GPT-4 architecture"), but I just got this result using LLM and the llm-deepseek plugin:

llm -m deepseek-chat 'what deepseek model are you?'

I'm DeepSeek-V3 created exclusively by DeepSeek. I'm an AI assistant, and I'm at your service! Feel free to ask me anything you'd like. I'll do my best to assist you.

Here's my initial experiment log.

Finally, a replacement for BERT: Introducing ModernBERT (via) BERT was an early language model released by Google in October 2018. Unlike modern LLMs it wasn't designed for generating text. BERT was trained for masked token prediction and was generally applied to problems like Named Entity Recognition or Sentiment Analysis. BERT also wasn't very useful on its own - most applications required you to fine-tune a model on top of it.

In exploring BERT I decided to try out dslim/distilbert-NER, a popular Named Entity Recognition model fine-tuned on top of DistilBERT (a smaller distilled version of the original BERT model). Here are my notes on running that using uv run.

Jeremy Howard's Answer.AI research group, LightOn and friends supported the development of ModernBERT, a brand new BERT-style model that applies many enhancements from the past six years of advances in this space.

While BERT was trained on 3.3 billion tokens, producing 110 million and 340 million parameter models, ModernBERT trained on 2 trillion tokens, resulting in 140 million and 395 million parameter models. The parameter count hasn't increased much because it's designed to run on lower-end hardware. It has a 8192 token context length, a significant improvement on BERT's 512.

I was able to run one of the demos from the announcement post using uv run like this (I'm not sure why I had to use numpy<2.0 but without that I got an error about cannot import name 'ComplexWarning' from 'numpy.core.numeric'):

uv run --with 'numpy<2.0' --with torch --with 'git+https://github.com/huggingface/transformers.git' pythonThen this Python:

import torch from transformers import pipeline from pprint import pprint pipe = pipeline( "fill-mask", model="answerdotai/ModernBERT-base", torch_dtype=torch.bfloat16, ) input_text = "He walked to the [MASK]." results = pipe(input_text) pprint(results)

Which downloaded 573MB to ~/.cache/huggingface/hub/models--answerdotai--ModernBERT-base and output:

[{'score': 0.11669921875,

'sequence': 'He walked to the door.',

'token': 3369,

'token_str': ' door'},

{'score': 0.037841796875,

'sequence': 'He walked to the office.',

'token': 3906,

'token_str': ' office'},

{'score': 0.0277099609375,

'sequence': 'He walked to the library.',

'token': 6335,

'token_str': ' library'},

{'score': 0.0216064453125,

'sequence': 'He walked to the gate.',

'token': 7394,

'token_str': ' gate'},

{'score': 0.020263671875,

'sequence': 'He walked to the window.',

'token': 3497,

'token_str': ' window'}]

I'm looking forward to trying out models that use ModernBERT as their base. The model release is accompanied by a paper (Smarter, Better, Faster, Longer: A Modern Bidirectional Encoder for Fast, Memory Efficient, and Long Context Finetuning and Inference) and new documentation for using it with the Transformers library.

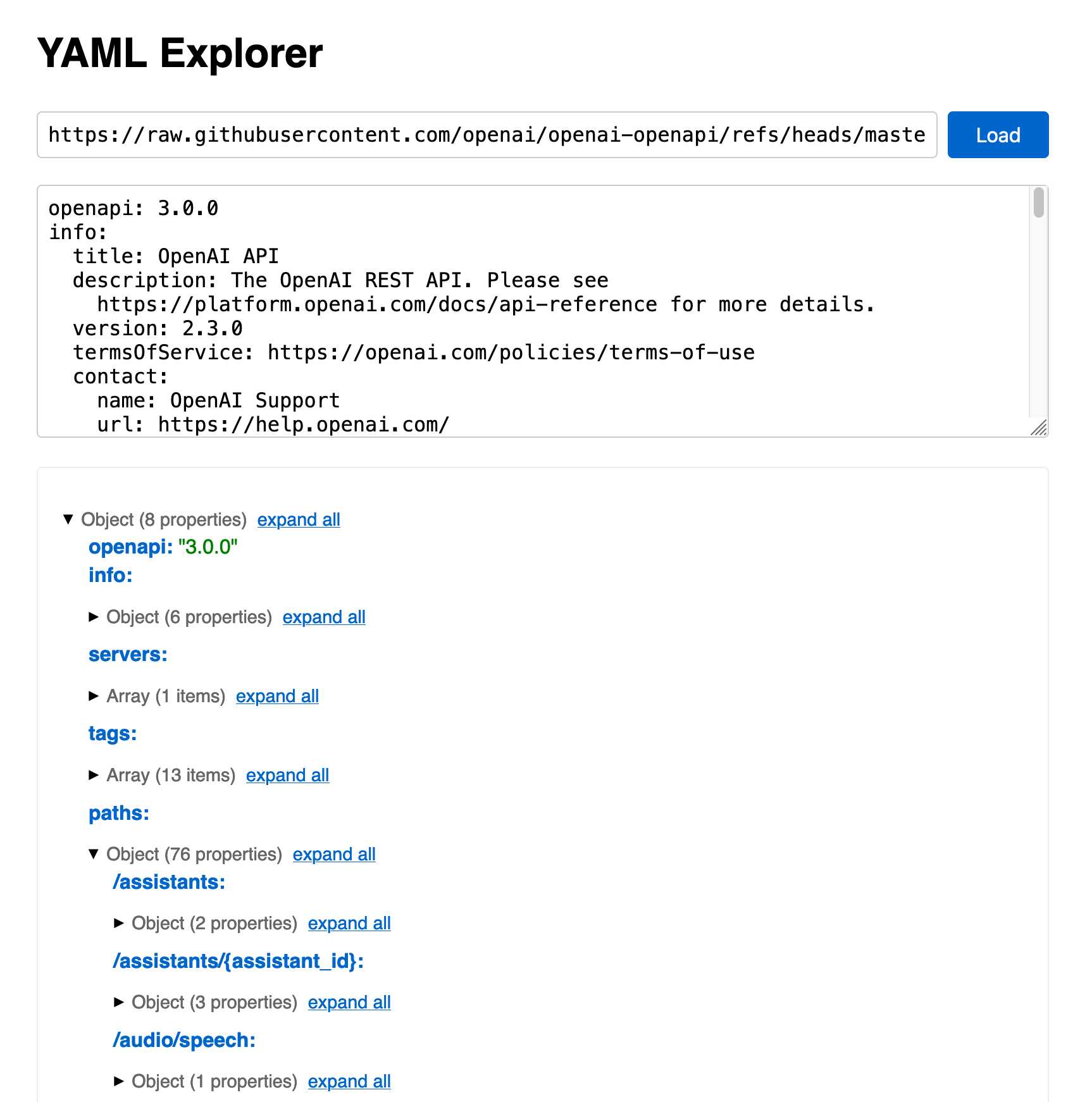

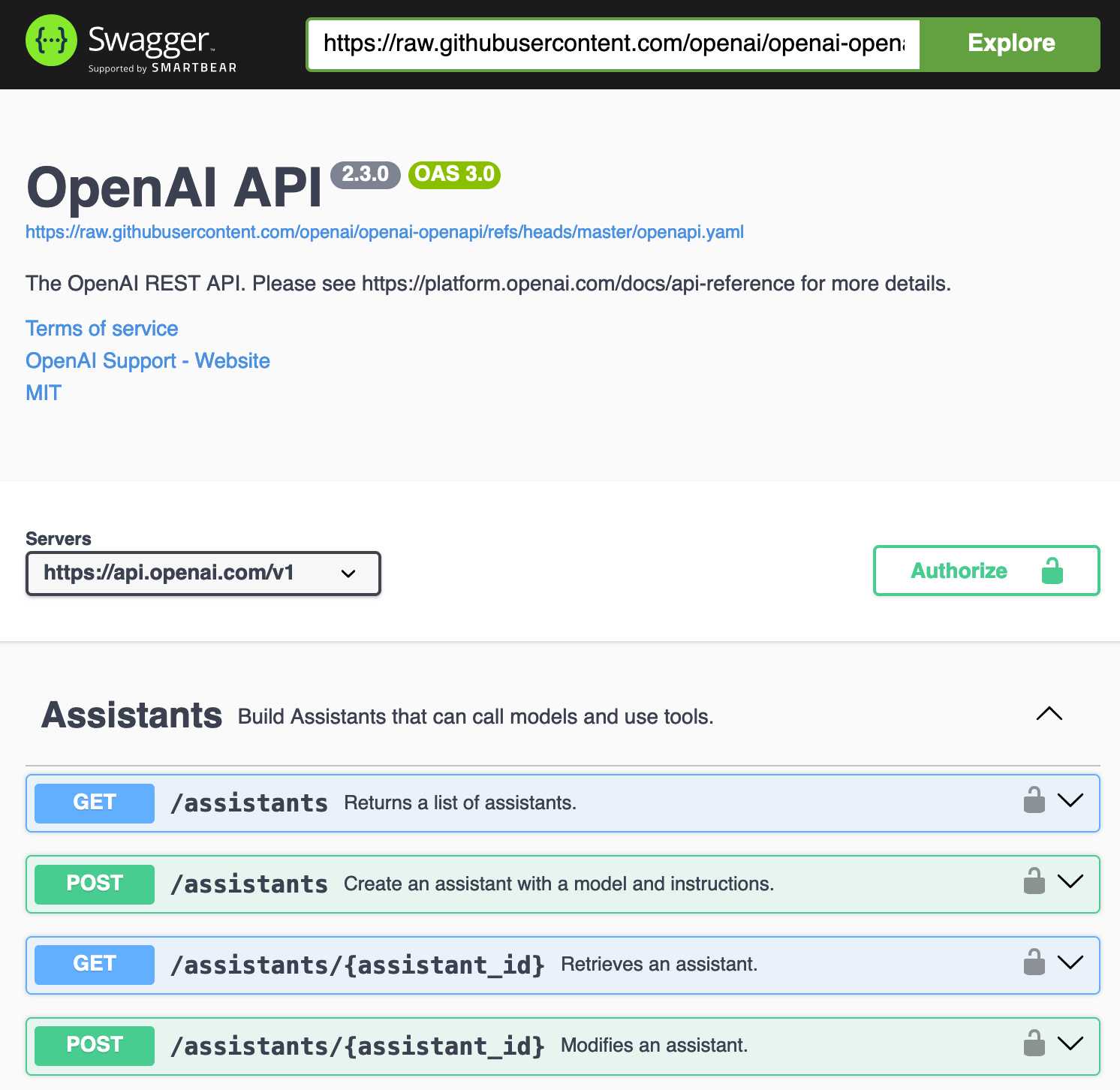

openai/openai-openapi. Seeing as the LLM world has semi-standardized on imitating OpenAI's API format for a whole host of different tools, it's useful to note that OpenAI themselves maintain a dedicated repository for a OpenAPI YAML representation of their current API.

(I get OpenAI and OpenAPI typo-confused all the time, so openai-openapi is a delightfully fiddly repository name.)

The openapi.yaml file itself is over 26,000 lines long, defining 76 API endpoints ("paths" in OpenAPI terminology) and 284 "schemas" for JSON that can be sent to and from those endpoints. A much more interesting view onto it is the commit history for that file, showing details of when each different API feature was released.

Browsing 26,000 lines of YAML isn't pleasant, so I got Claude to build me a rudimentary YAML expand/hide exploration tool. Here's that tool running against the OpenAI schema, loaded directly from GitHub via a CORS-enabled fetch() call: https://tools.simonwillison.net/yaml-explorer#.eyJ1c... - the code after that fragment is a base64-encoded JSON for the current state of the tool (mostly Claude's idea).

The tool is a little buggy - the expand-all option doesn't work quite how I want - but it's useful enough for the moment.

Update: It turns out the petstore.swagger.io demo has an (as far as I can tell) undocumented ?url= parameter which can load external YAML files, so here's openai-openapi/openapi.yaml in an OpenAPI explorer interface.

What happened to the world’s largest tube TV? (via) This YouTube video is an absolute delight.

Shank Mods describes the legendary Sony PVM-4300 - the largest CRT television ever made, released by Sony in 1989 and weighing over 400lb. CRT enthusiasts had long debated its very existence, given the lack of known specimens outside of Sony's old marketing materials. Then Shank tracked a working one down... on the second floor of a 300 year old Soba noodle restaurant in Osaka, Japan.

This story of how they raced to rescue the TV before the restaurant was demolished, given the immense difficulty of moving a 400lb television (and then shipping it to the USA), is a fantastic ride.

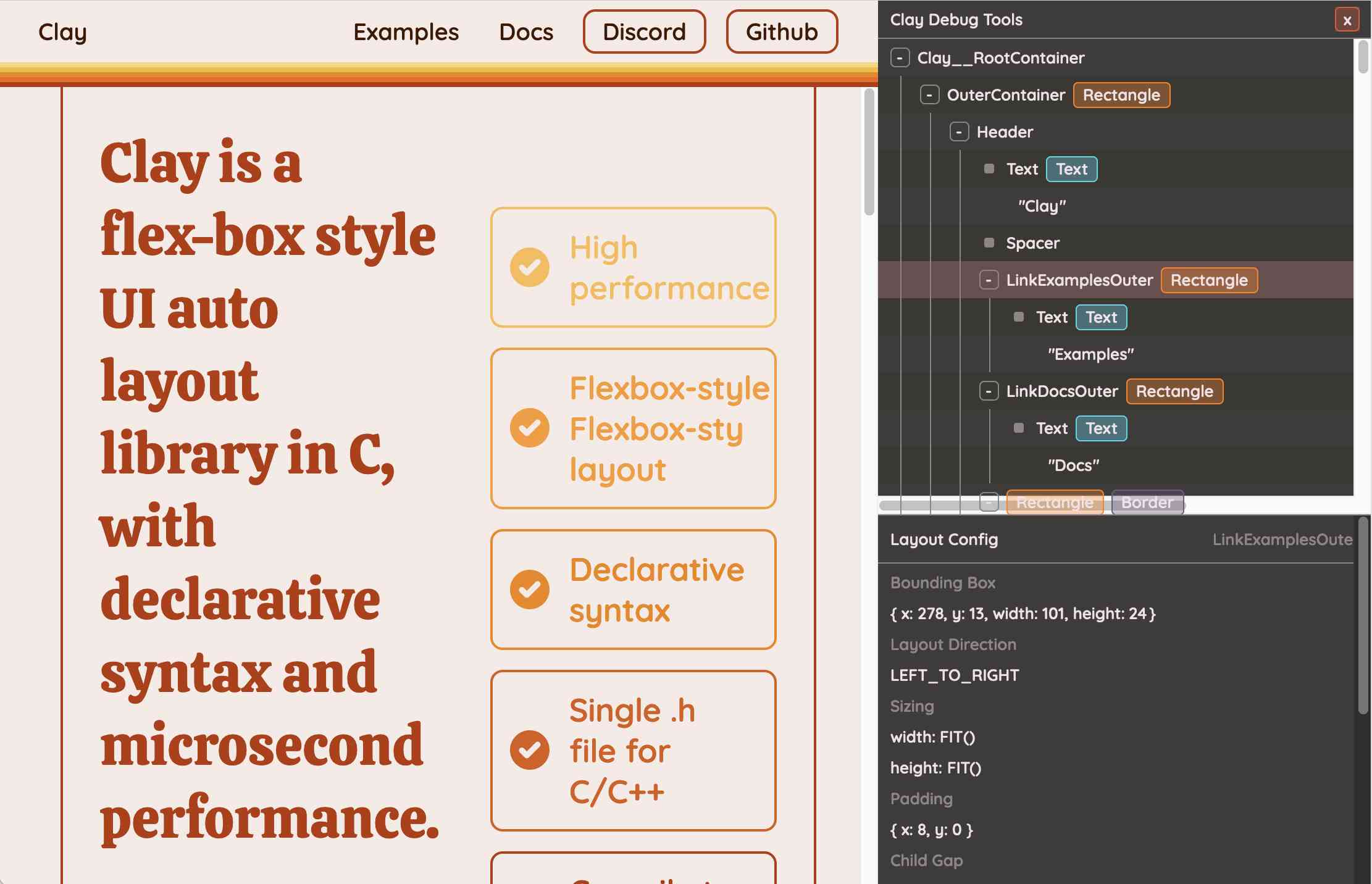

Clay UI library (via) Fascinating project by Nic Barker, who describes Clay like this:

Clay is a flex-box style UI auto layout library in C, with declarative syntax and microsecond performance.

His intro video to the library is outstanding: I learned a ton about how UI layout works from this, and the animated visual explanations are clear, tasteful and really helped land the different concepts:

Clay is a C library delivered in a single ~2000 line clay.h dependency-free header file. It only handles layout calculations: if you want to render the result you need to add an additional rendering layer.

In a fascinating demo of the library, the Clay site itself is rendered using Clay C compiled to WebAssembly! You can even switch between the default HTML renderer and an alternative based on Canvas.

This isn't necessarily a great idea: because the layout is entirely handled using <div> elements positioned using transform: translate(0px, 70px) style CSS attempting to select text across multiple boxes behaves strangely, and it's not clear to me what the accessibility implications are.

Update: Matt Campbell:

The accessibility implications are as serious as you might guess. The links aren't properly labeled, there's no semantic markup such as headings, and since there's a div for every line, continuous reading with a screen reader is choppy, that is, it pauses at the end of every physical line.

It does make for a very compelling demo of what Clay is capable of though, especially when you resize your browser window and the page layout is recalculated in real-time via the Clay WebAssembly bridge.

You can hit "D" on the website and open up a custom Clay debugger showing the hierarchy of layout elements on the page:

This also means that the entire page is defined using C code! Given that, I find the code itself surprisingly readable

void DeclarativeSyntaxPageDesktop() {

CLAY(CLAY_ID("SyntaxPageDesktop"), CLAY_LAYOUT({ .sizing = { CLAY_SIZING_GROW(), CLAY_SIZING_FIT({ .min = windowHeight - 50 }) }, .childAlignment = {0, CLAY_ALIGN_Y_CENTER}, .padding = {.x = 50} })) {

CLAY(CLAY_ID("SyntaxPage"), CLAY_LAYOUT({ .sizing = { CLAY_SIZING_GROW(), CLAY_SIZING_GROW() }, .childAlignment = { 0, CLAY_ALIGN_Y_CENTER }, .padding = { 32, 32 }, .childGap = 32 }), CLAY_BORDER({ .left = { 2, COLOR_RED }, .right = { 2, COLOR_RED } })) {

CLAY(CLAY_ID("SyntaxPageLeftText"), CLAY_LAYOUT({ .sizing = { CLAY_SIZING_PERCENT(0.5) }, .layoutDirection = CLAY_TOP_TO_BOTTOM, .childGap = 8 })) {

CLAY_TEXT(CLAY_STRING("Declarative Syntax"), CLAY_TEXT_CONFIG({ .fontSize = 52, .fontId = FONT_ID_TITLE_56, .textColor = COLOR_RED }));

CLAY(CLAY_ID("SyntaxSpacer"), CLAY_LAYOUT({ .sizing = { CLAY_SIZING_GROW({ .max = 16 }) } })) {}

CLAY_TEXT(CLAY_STRING("Flexible and readable declarative syntax with nested UI element hierarchies."), CLAY_TEXT_CONFIG({ .fontSize = 28, .fontId = FONT_ID_BODY_36, .textColor = COLOR_RED }));

CLAY_TEXT(CLAY_STRING("Mix elements with standard C code like loops, conditionals and functions."), CLAY_TEXT_CONFIG({ .fontSize = 28, .fontId = FONT_ID_BODY_36, .textColor = COLOR_RED }));

CLAY_TEXT(CLAY_STRING("Create your own library of re-usable components from UI primitives like text, images and rectangles."), CLAY_TEXT_CONFIG({ .fontSize = 28, .fontId = FONT_ID_BODY_36, .textColor = COLOR_RED }));

}

CLAY(CLAY_ID("SyntaxPageRightImage"), CLAY_LAYOUT({ .sizing = { CLAY_SIZING_PERCENT(0.50) }, .childAlignment = {.x = CLAY_ALIGN_X_CENTER} })) {

CLAY(CLAY_ID("SyntaxPageRightImageInner"), CLAY_LAYOUT({ .sizing = { CLAY_SIZING_GROW({ .max = 568 }) } }), CLAY_IMAGE({ .sourceDimensions = {1136, 1194}, .sourceURL = CLAY_STRING("/clay/images/declarative.png") })) {}

}

}

}

}I'm not ready to ditch HTML and CSS for writing my web pages in C compiled to WebAssembly just yet, but as an exercise in understanding layout engines (and a potential tool for building non-web interfaces in the future) this is a really interesting project to dig into.

To clarify here: I don't think the web layout / WebAssembly thing is the key idea behind Clay at all - I think it's a neat demo of the library, but it's not what Clay is for. It's certainly an interesting way to provide a demo of a layout library!

Nic confirms:

You totally nailed it, the fact that you can compile to wasm and run in HTML stemmed entirely from a “wouldn’t it be cool if…” It was designed for my C projects first and foremost!