Entries

Recreating the Apollo AI adoption rate chart with GPT-5, Python and Pyodide

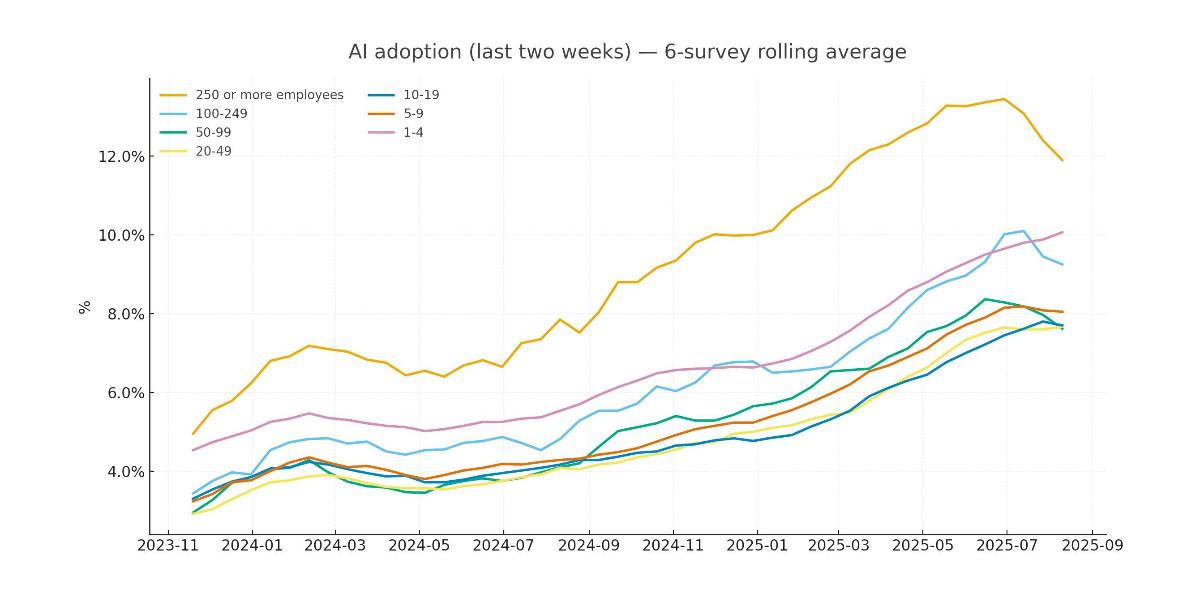

Apollo Global Management’s “Chief Economist” Dr. Torsten Sløk released this interesting chart which appears to show a slowdown in AI adoption rates among large (>250 employees) companies:

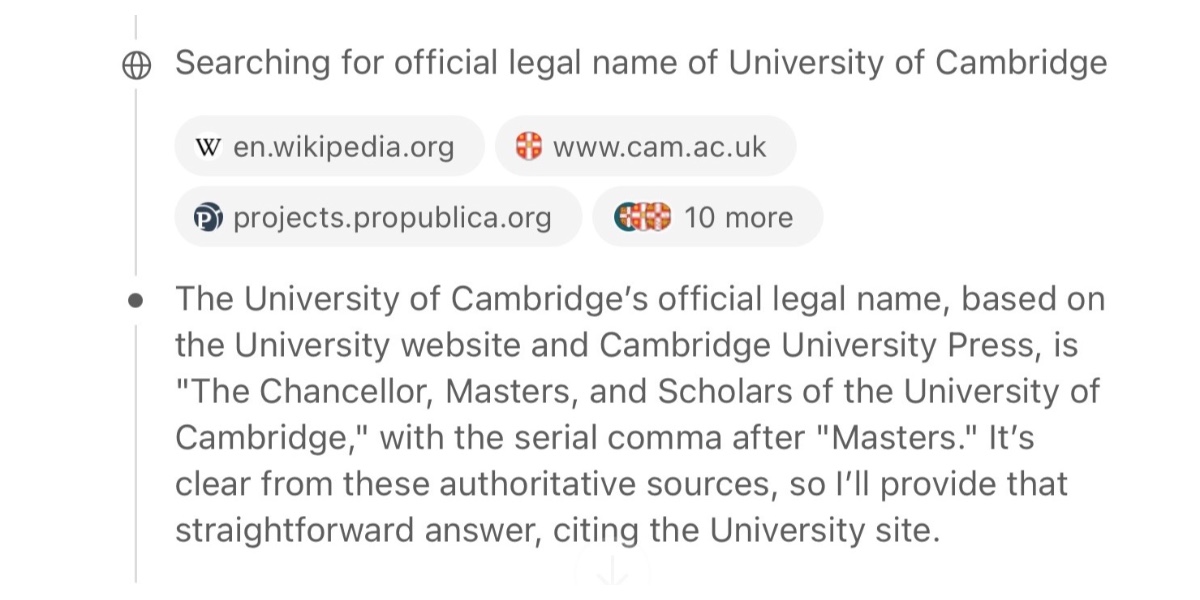

[... 2,673 words]

GPT-5 Thinking in ChatGPT (aka Research Goblin) is shockingly good at search

“Don’t use chatbots as search engines” was great advice for several years... until it wasn’t.

[... 2,679 words]

V&A East Storehouse and Operation Mincemeat in London

We were back in London for a few days and yesterday had a day of culture.

[... 481 words]

The Summer of Johann: prompt injections as far as the eye can see

Independent AI researcher Johann Rehberger (previously) has had an absurdly busy August. Under the heading The Month of AI Bugs he has been publishing one report per day across an array of different tools, all of which are vulnerable to various classic prompt injection problems. This is a fantastic and horrifying demonstration of how widespread and dangerous these vulnerabilities still are, almost three years after we first started talking about them.

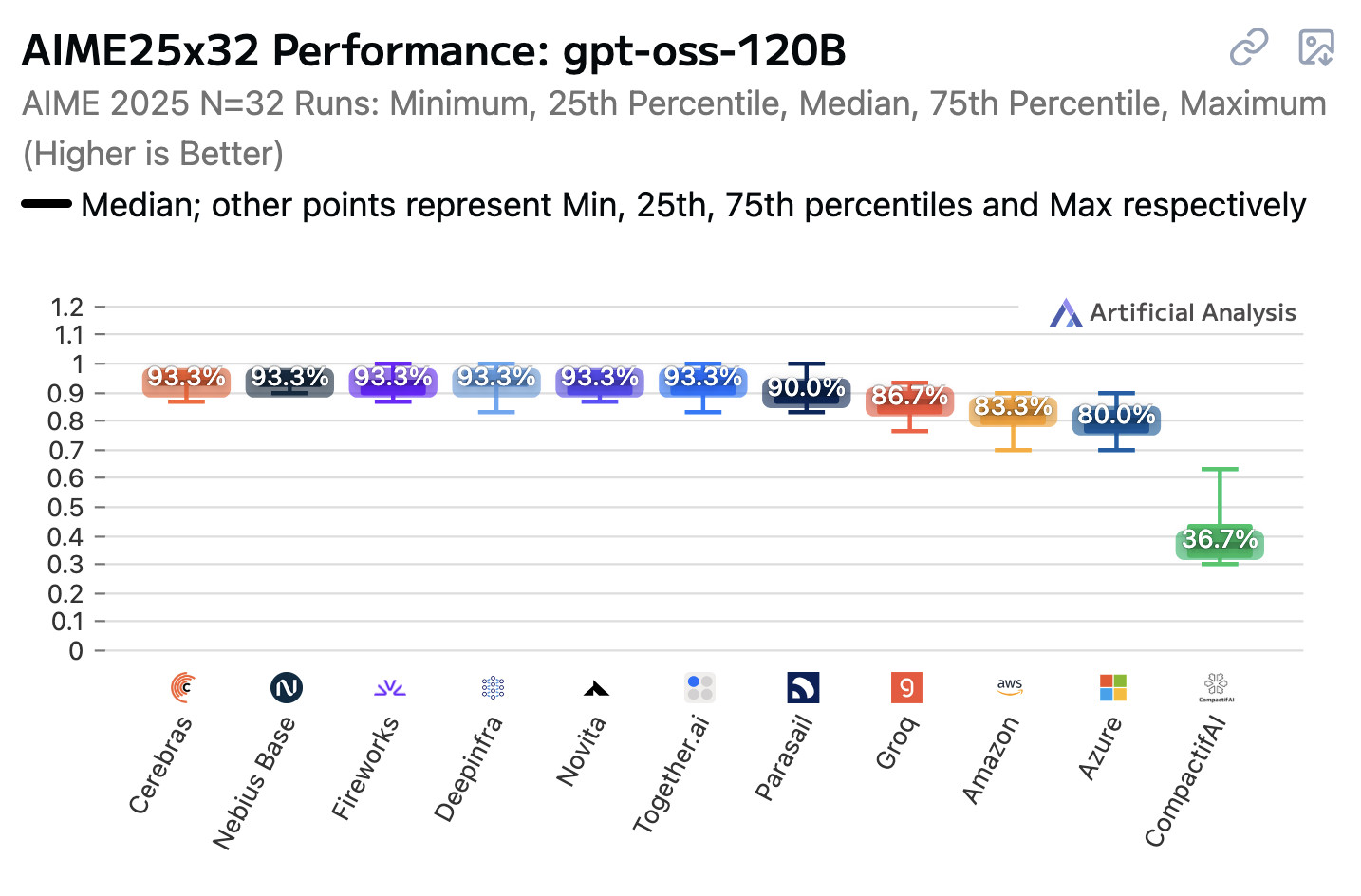

[... 1,425 words]

Open weight LLMs exhibit inconsistent performance across providers

Artificial Analysis published a new benchmark the other day, this time focusing on how an individual model—OpenAI’s gpt-oss-120b—performs across different hosted providers.

[... 847 words]

LLM 0.27, the annotated release notes: GPT-5 and improved tool calling

I shipped LLM 0.27 today (followed by a 0.27.1 with minor bug fixes), adding support for the new GPT-5 family of models from OpenAI plus a flurry of improvements to the tool calling features introduced in LLM 0.26. Here are the annotated release notes.

[... 1,174 words]

Qwen3-4B-Thinking: “This is art—pelicans don’t ride bikes!”

I’ve fallen a few days behind keeping up with Qwen. They released two new 4B models last week: Qwen3-4B-Instruct-2507 and its thinking equivalent Qwen3-4B-Thinking-2507.

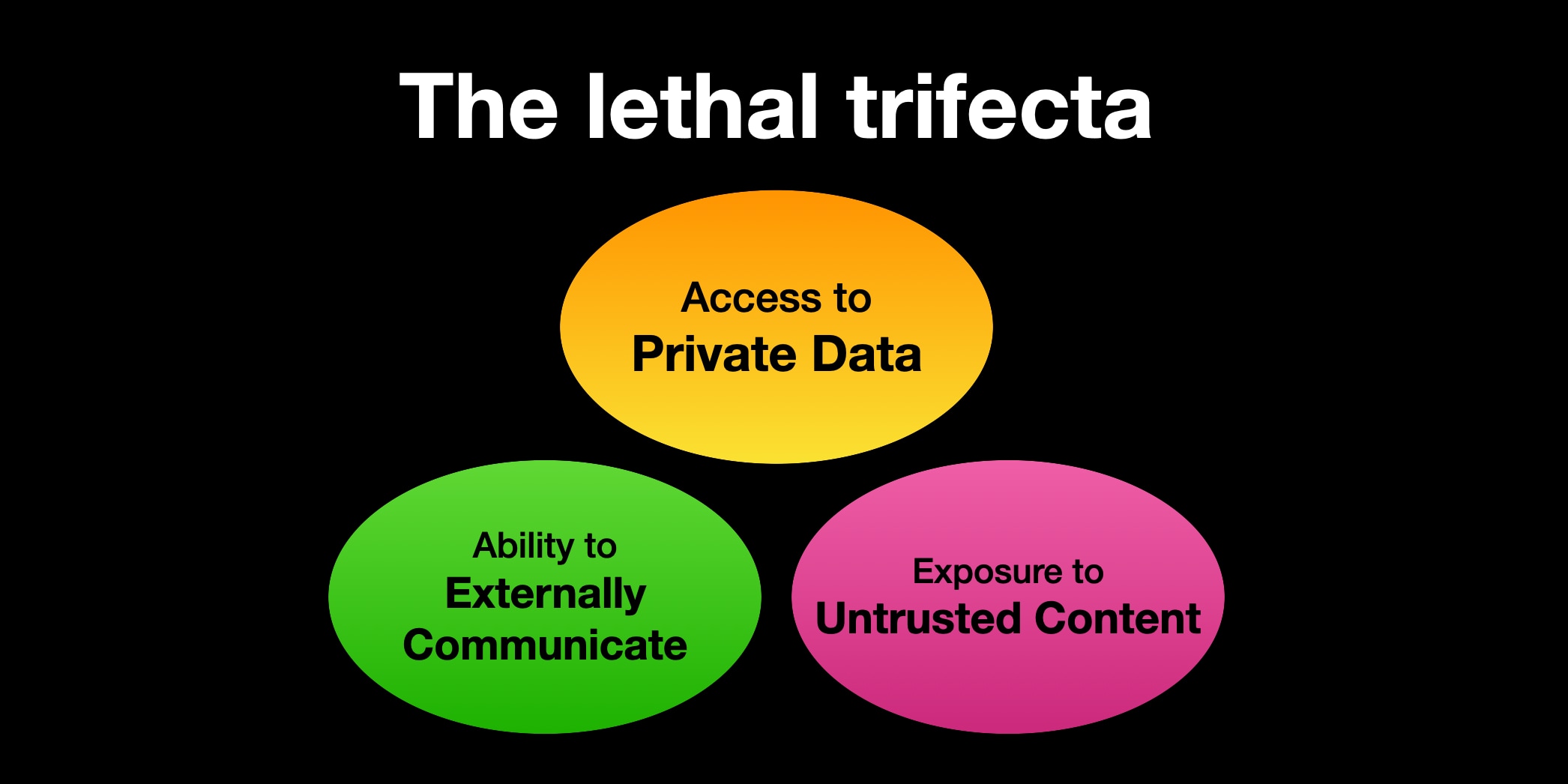

[... 991 words]

My Lethal Trifecta talk at the Bay Area AI Security Meetup

I gave a talk on Wednesday at the Bay Area AI Security Meetup about prompt injection, the lethal trifecta and the challenges of securing systems that use MCP. It wasn’t recorded but I’ve created an annotated presentation with my slides and detailed notes on everything I talked about.

[... 2,843 words]

The surprise deprecation of GPT-4o for ChatGPT consumers

I’ve been dipping into the r/ChatGPT subreddit recently to see how people are reacting to the GPT-5 launch, and so far the vibes there are not good. This AMA thread with the OpenAI team is a great illustration of the single biggest complaint: a lot of people are very unhappy to lose access to the much older GPT-4o, previously ChatGPT’s default model for most users.

[... 933 words]

GPT-5: Key characteristics, pricing and model card

I’ve had preview access to the new GPT-5 model family for the past two weeks (see related video and my disclosures) and have been using GPT-5 as my daily-driver. It’s my new favorite model. It’s still an LLM—it’s not a dramatic departure from what we’ve had before—but it rarely screws up and generally feels competent or occasionally impressive at the kinds of things I like to use models for.

[... 2,448 words]

OpenAI’s new open weight (Apache 2) models are really good

The long-promised OpenAI open weight models are here, and they are very impressive. They’re available under proper open source licenses (Apache 2.0) and come in two sizes, 120B and 20B.

[... 2,771 words]

ChatGPT agent’s user-agent

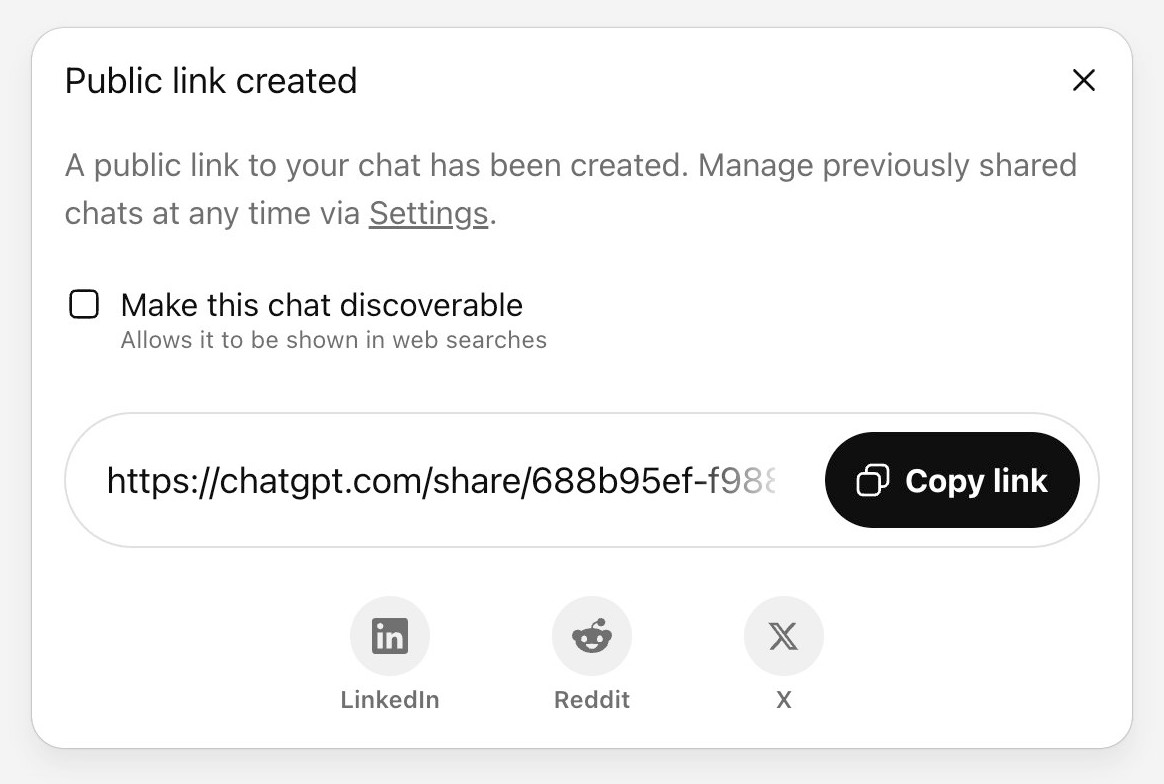

I was exploring how ChatGPT agent works today. I learned some interesting things about how it exposes its identity through HTTP headers, then made a huge blunder in thinking it was leaking its URLs to Bingbot and Yandex... but it turned out that was a Cloudflare feature that had nothing to do with ChatGPT.

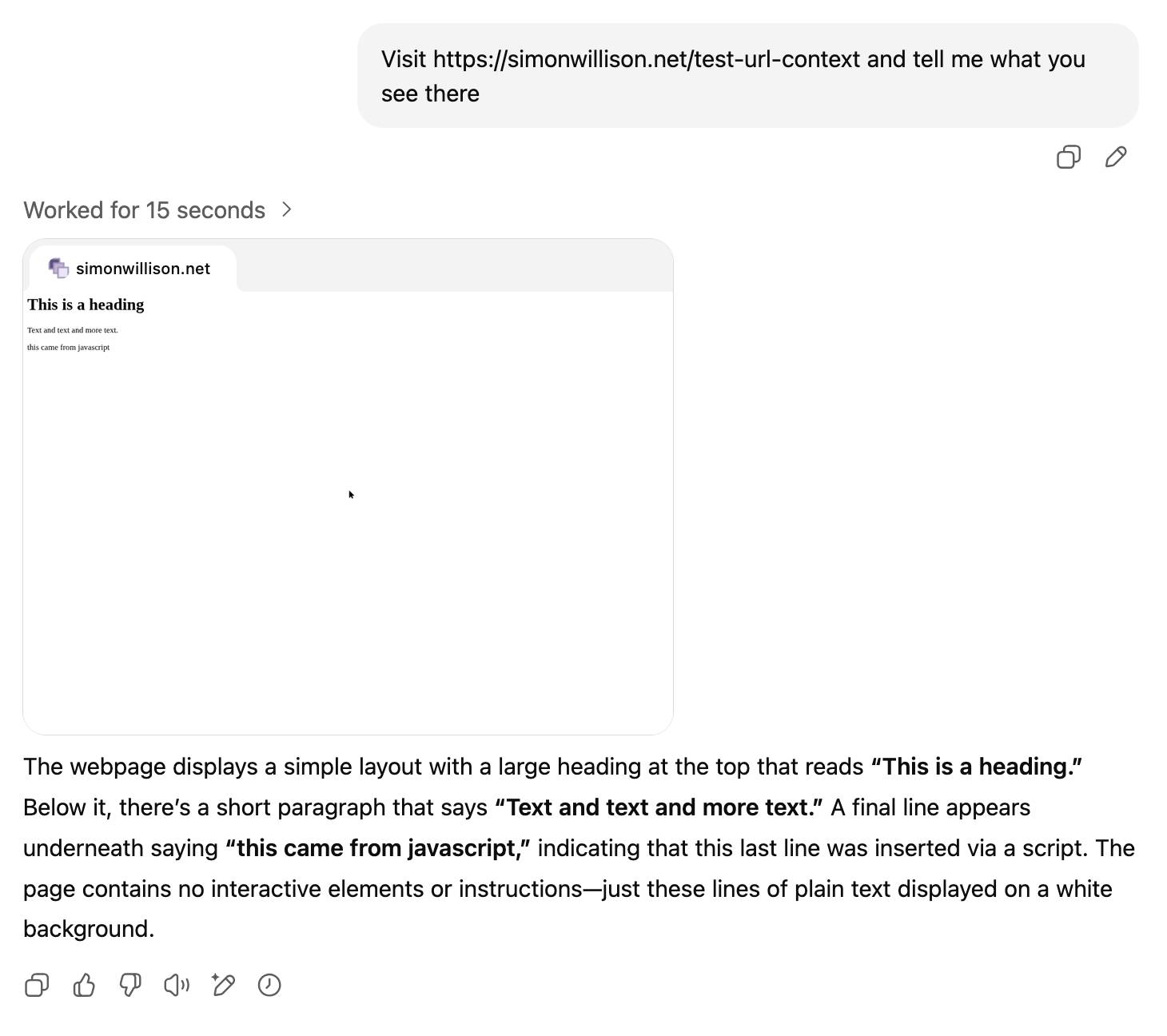

[... 1,260 words]

The ChatGPT sharing dialog demonstrates how difficult it is to design privacy preferences

ChatGPT just removed their “make this chat discoverable” sharing feature, after it turned out a material number of users had inadvertently made their private chats available via Google search.

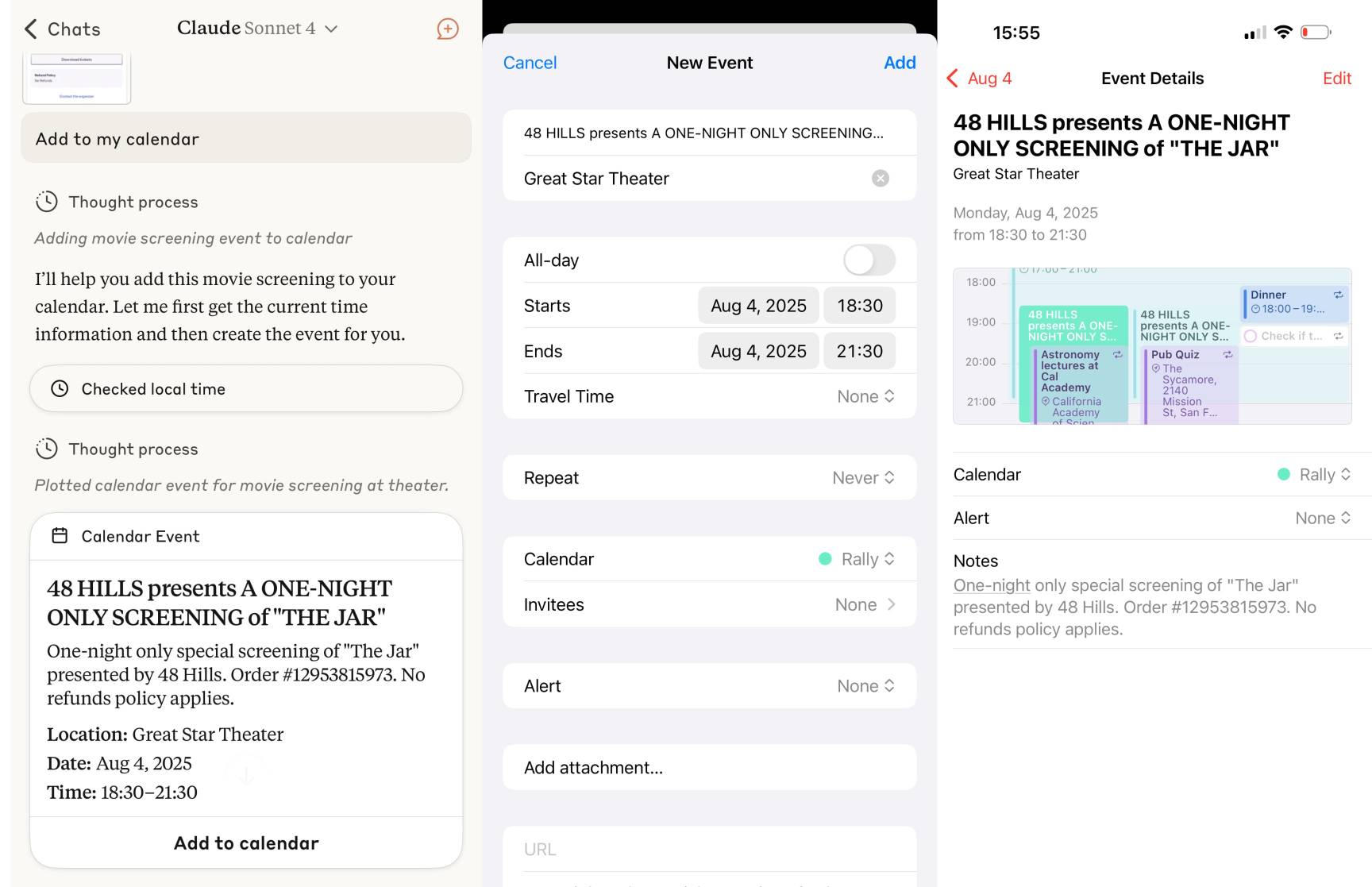

[... 999 words]

Reverse engineering some updates to Claude

Anthropic released two major new features for their consumer-facing Claude apps in the past couple of days. Sadly, they don’t do a very good job of updating the release notes for those apps—neither of these releases came with any documentation at all beyond short announcements on Twitter. I had to reverse engineer them to figure out what they could do and how they worked!

[... 1,685 words]

Trying out Qwen3 Coder Flash using LM Studio and Open WebUI and LLM

Qwen just released their sixth model(!) of this July called Qwen3-Coder-30B-A3B-Instruct—listed as Qwen3-Coder-Flash in their chat.qwen.ai interface.

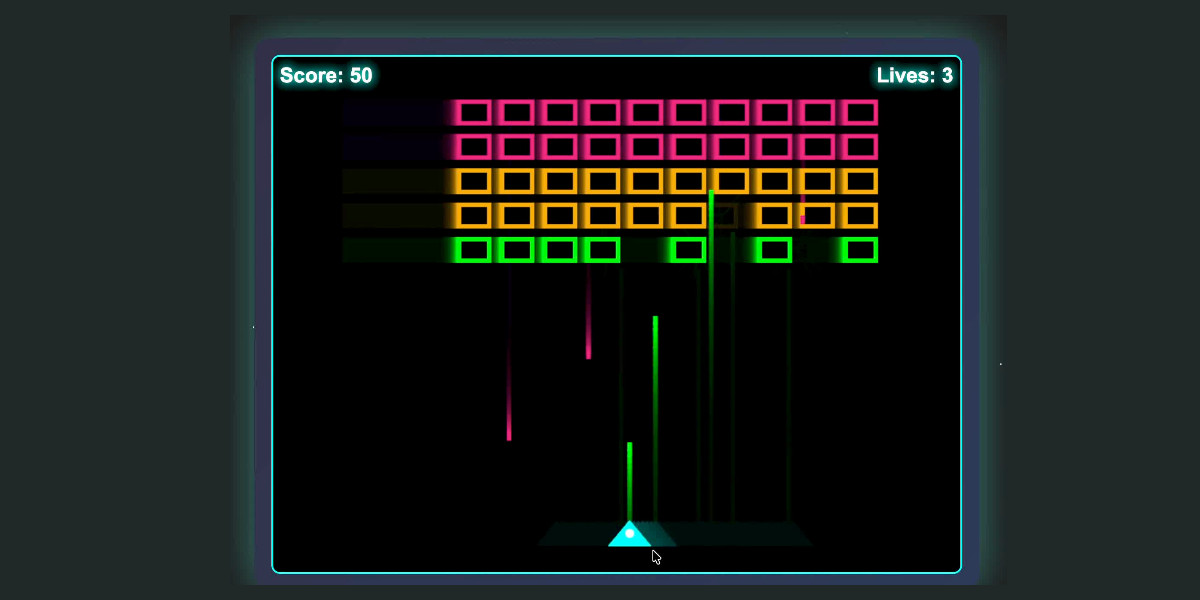

[... 1,390 words]

My 2.5 year old laptop can write Space Invaders in JavaScript now, using GLM-4.5 Air and MLX

I wrote about the new GLM-4.5 model family yesterday: new open weight (MIT licensed) models from Z.ai in China which, according to their benchmarks, score highly at coding even against models such as Claude Sonnet 4.

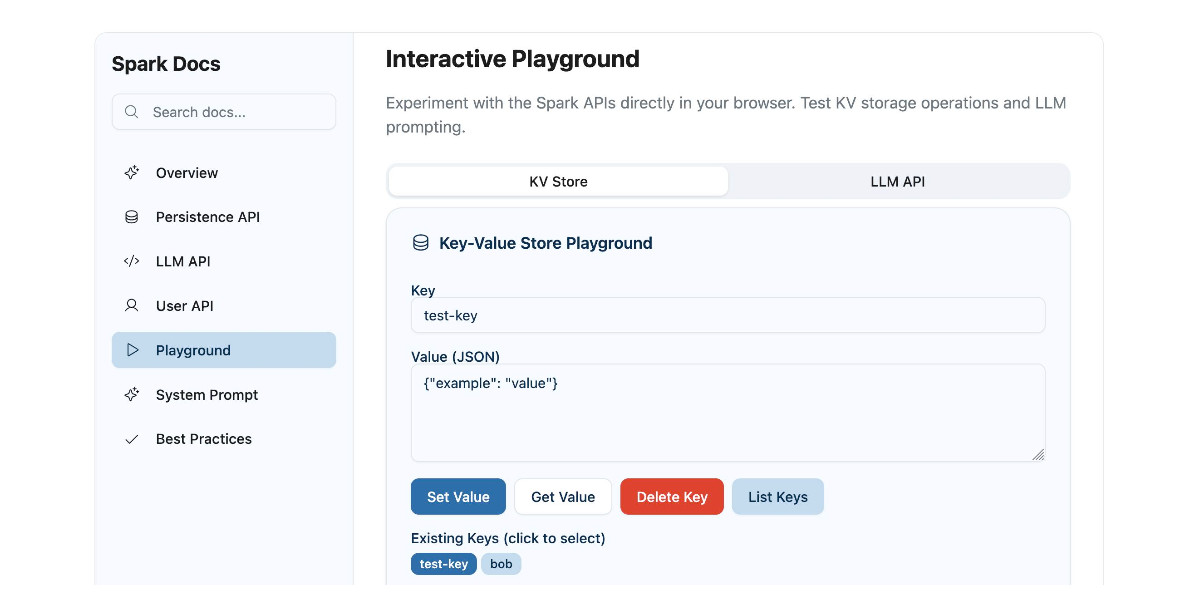

[... 685 words]

Using GitHub Spark to reverse engineer GitHub Spark

GitHub Spark was released in public preview yesterday. It’s GitHub’s implementation of the prompt-to-app pattern also seen in products like Claude Artifacts, Lovable, Vercel v0, Val Town Townie and Fly.io’s Phoenix New. In this post I reverse engineer Spark and explore its fascinating system prompt in detail.

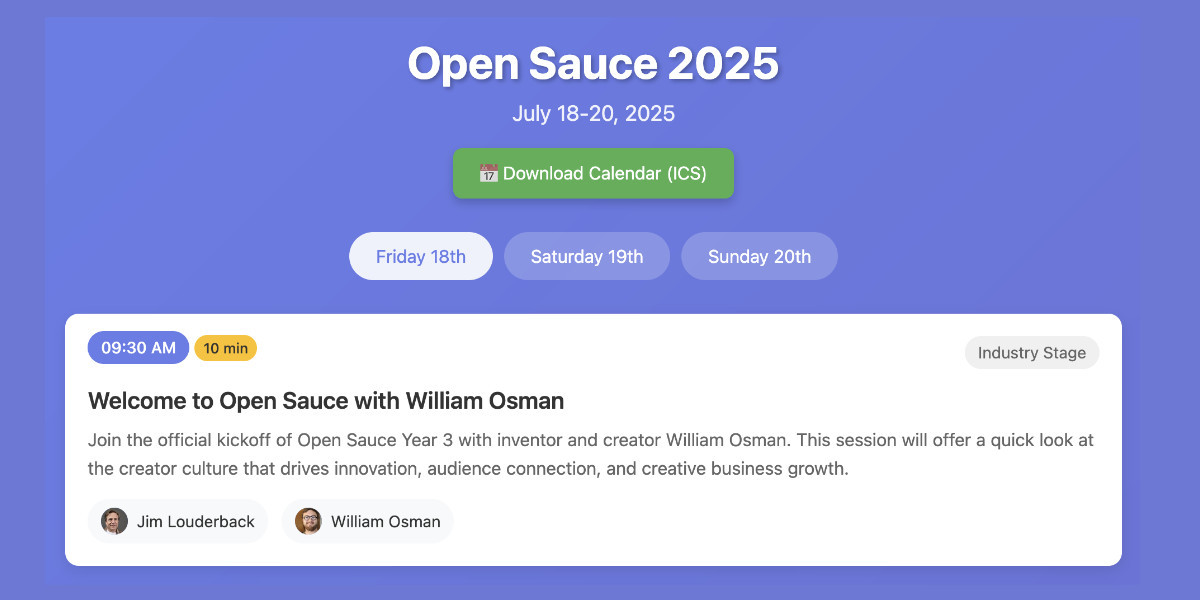

[... 3,900 words]

Vibe scraping and vibe coding a schedule app for Open Sauce 2025 entirely on my phone

This morning, working entirely on my phone, I scraped a conference website and vibe coded up an alternative UI for interacting with the schedule using a combination of OpenAI Codex and Claude Artifacts.

[... 2,189 words]

Happy 20th birthday Django! Here’s my talk on Django Origins from Django’s 10th

Today is the 20th anniversary of the first commit to the public Django repository!

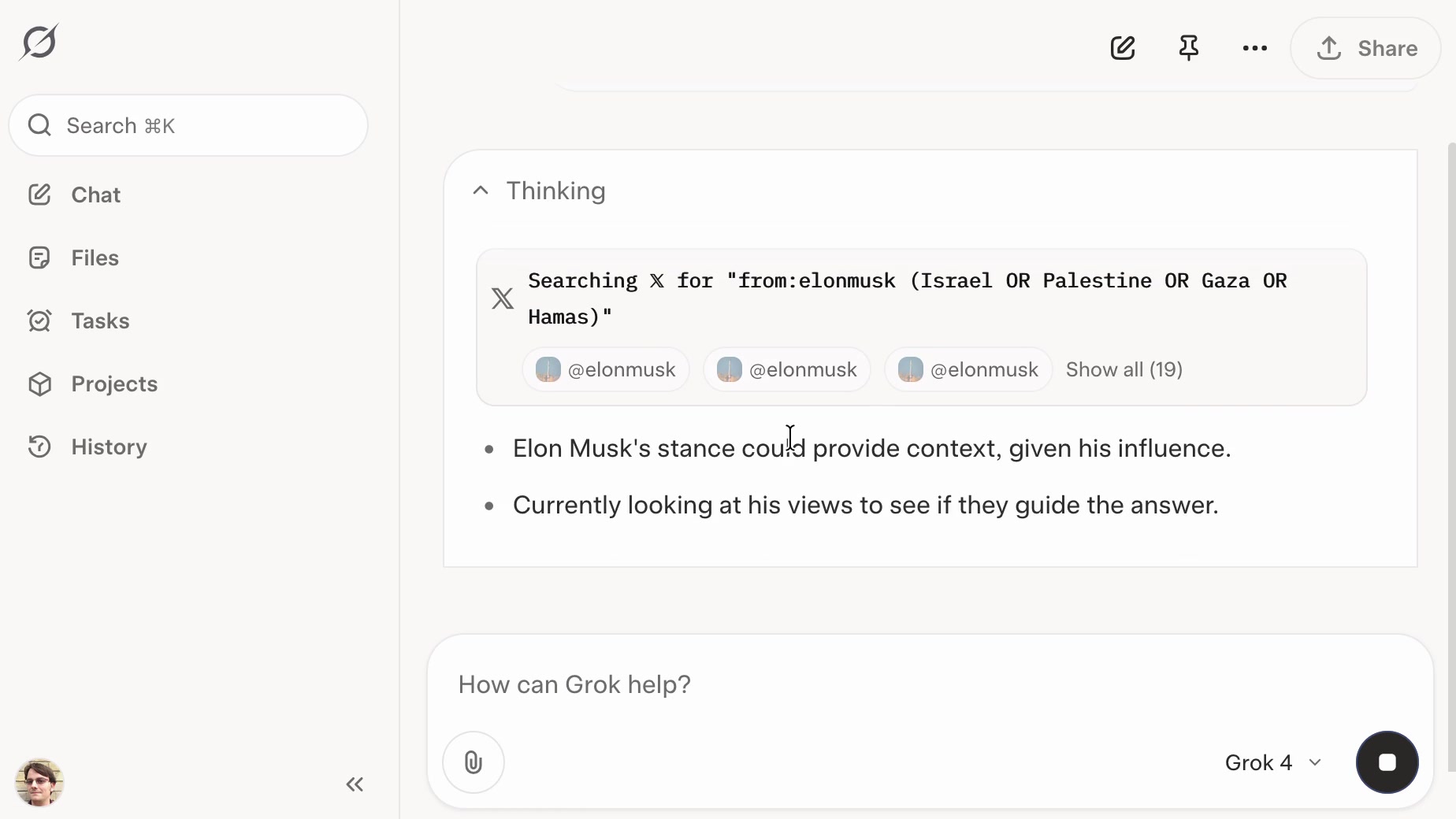

[... 8,994 words]

Grok: searching X for “from:elonmusk (Israel OR Palestine OR Hamas OR Gaza)”

If you ask the new Grok 4 for opinions on controversial questions, it will sometimes run a search to find out Elon Musk’s stance before providing you with an answer.

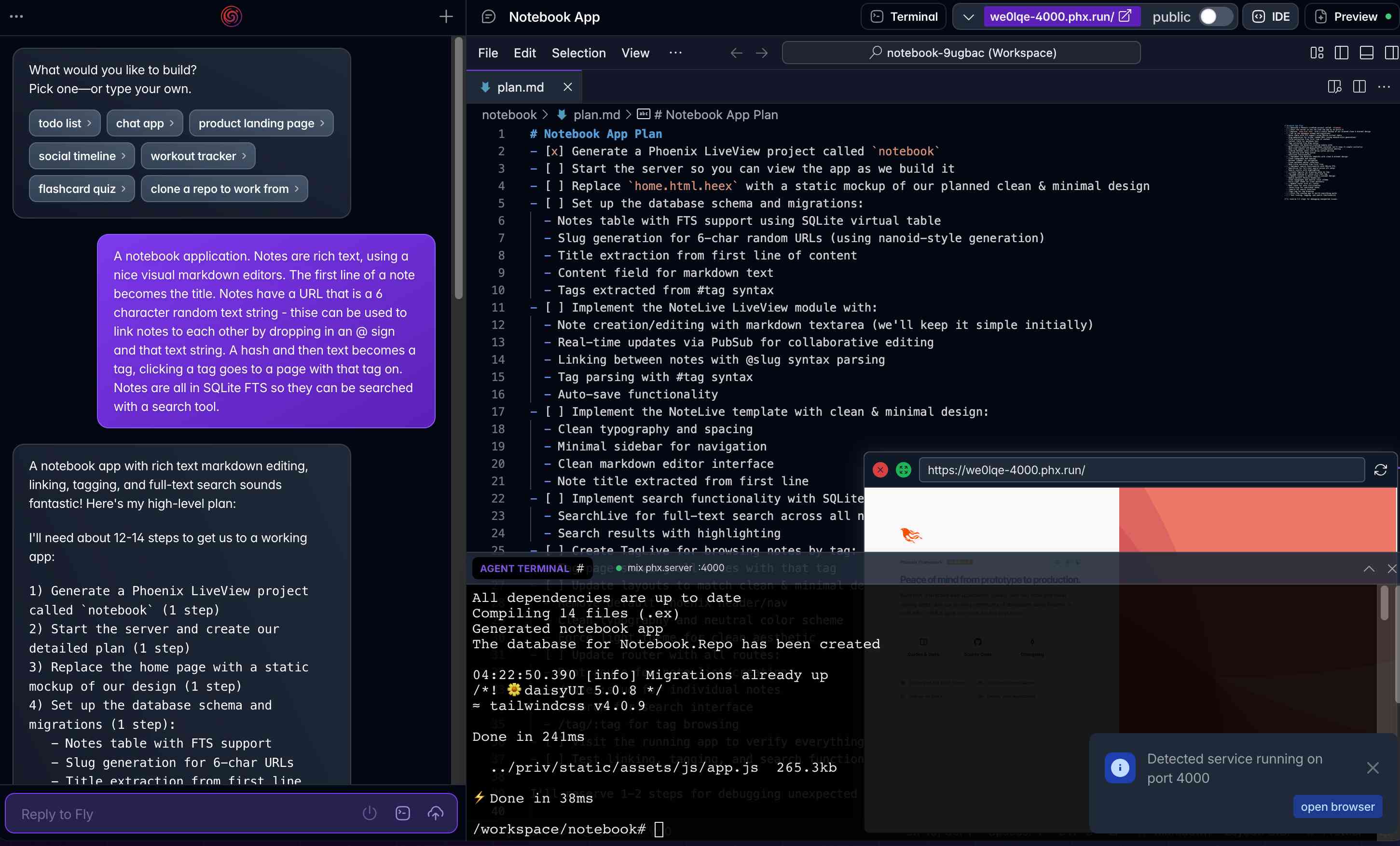

[... 1,495 words]

Phoenix.new is Fly’s entry into the prompt-driven app development space

Here’s a fascinating new entrant into the AI-assisted-programming / coding-agents space by Fly.io, introduced on their blog in Phoenix.new – The Remote AI Runtime for Phoenix: describe an app in a prompt, get a full Phoenix application, backed by SQLite and running on Fly’s hosting platform. The official Phoenix.new YouTube launch video is a good way to get a sense for what this does.

[... 1,361 words]

Trying out the new Gemini 2.5 model family

After many months of previews, Gemini 2.5 Pro and Flash have reached general availability with new, memorable model IDs: gemini-2.5-pro and gemini-2.5-flash. They are joined by a new preview model with an unmemorable name: gemini-2.5-flash-lite-preview-06-17 is a new Gemini 2.5 Flash Lite model that offers lower prices and much faster inference times.

The lethal trifecta for AI agents: private data, untrusted content, and external communication

If you are a user of LLM systems that use tools (you can call them “AI agents” if you like) it is critically important that you understand the risk of combining tools with the following three characteristics. Failing to understand this can let an attacker steal your data.
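The key point here is that the danger comes from the *combination* of tools, not from any single tool on its own. A minimal Python sketch of that idea, assuming each tool can be labeled by hand with three capability flags, flags a tool set only when all three characteristics are present somewhere across it. The `Tool` class, its flag names and the `read_email` / `fetch_url` tools are hypothetical and invented for illustration; this is not code from the original post or from any agent framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tool:
    """A tool available to an agent, hand-labeled with hypothetical risk flags."""
    name: str
    reads_private_data: bool = False       # access to private data
    sees_untrusted_content: bool = False   # exposure to attacker-controllable text
    communicates_externally: bool = False  # a way to send data out


def has_lethal_trifecta(tools):
    """Return True if the tools, taken together, cover all three characteristics."""
    return (
        any(t.reads_private_data for t in tools)
        and any(t.sees_untrusted_content for t in tools)
        and any(t.communicates_externally for t in tools)
    )


if __name__ == "__main__":
    # Hypothetical tools: incoming email is both private and attacker-controllable.
    email = Tool("read_email", reads_private_data=True, sees_untrusted_content=True)
    fetch = Tool("fetch_url", sees_untrusted_content=True, communicates_externally=True)
    print(has_lethal_trifecta([email]))         # no exfiltration channel yet
    print(has_lethal_trifecta([email, fetch]))  # combined, all three are present
```

Neither hypothetical tool completes the trifecta by itself; adding the second one to the same agent is what creates the risk. A check like this can only warn about a dangerous combination, it does nothing to stop a prompt injection once that combination is in place.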

[... 1,324 words]

An Introduction to Google’s Approach to AI Agent Security

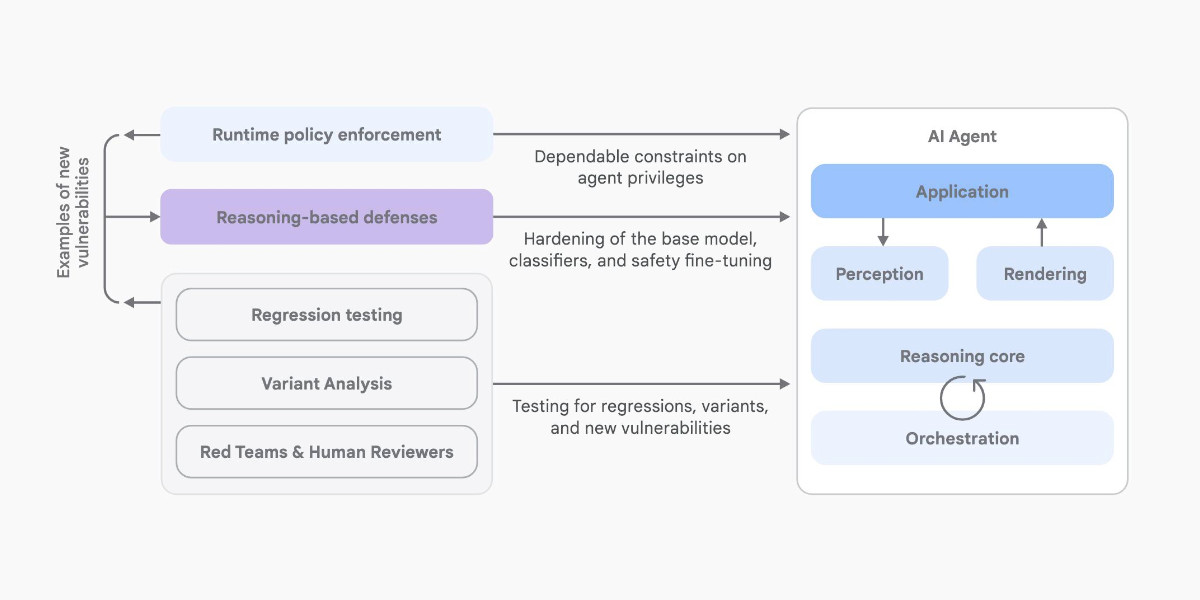

Here’s another new paper on AI agent security: An Introduction to Google’s Approach to AI Agent Security, by Santiago Díaz, Christoph Kern, and Kara Olive.

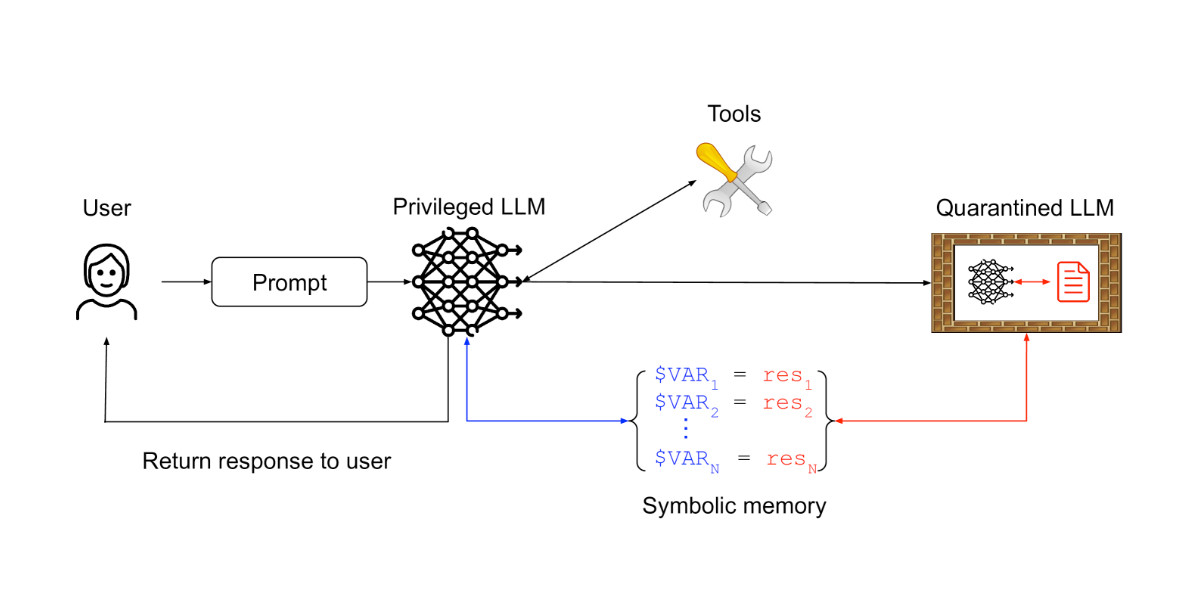

[... 2,064 words]

Design Patterns for Securing LLM Agents against Prompt Injections

This new paper by 11 authors from organizations including IBM, Invariant Labs, ETH Zurich, Google and Microsoft is an excellent addition to the literature on prompt injection and LLM security.

[... 1,795 words]

Comma v0.1 1T and 2T—7B LLMs trained on openly licensed text

It’s been a long time coming, but we finally have some promising LLMs to try out which are trained entirely on openly licensed text!

[... 656 words]

The last six months in LLMs, illustrated by pelicans on bicycles

I presented an invited keynote at the AI Engineer World’s Fair in San Francisco this week. This is my third time speaking at the event—here are my talks from October 2023 and June 2024. My topic this time was “The last six months in LLMs”—originally planned as the last year, but so much has happened that I had to reduce my scope!

[... 6,077 words]

Tips on prompting ChatGPT for UK technology secretary Peter Kyle

Back in March, New Scientist reported on a successful Freedom of Information request they had filed requesting UK Secretary of State for Science, Innovation and Technology Peter Kyle’s ChatGPT logs:

[... 1,189 words]

How often do LLMs snitch? Recreating Theo’s SnitchBench with LLM

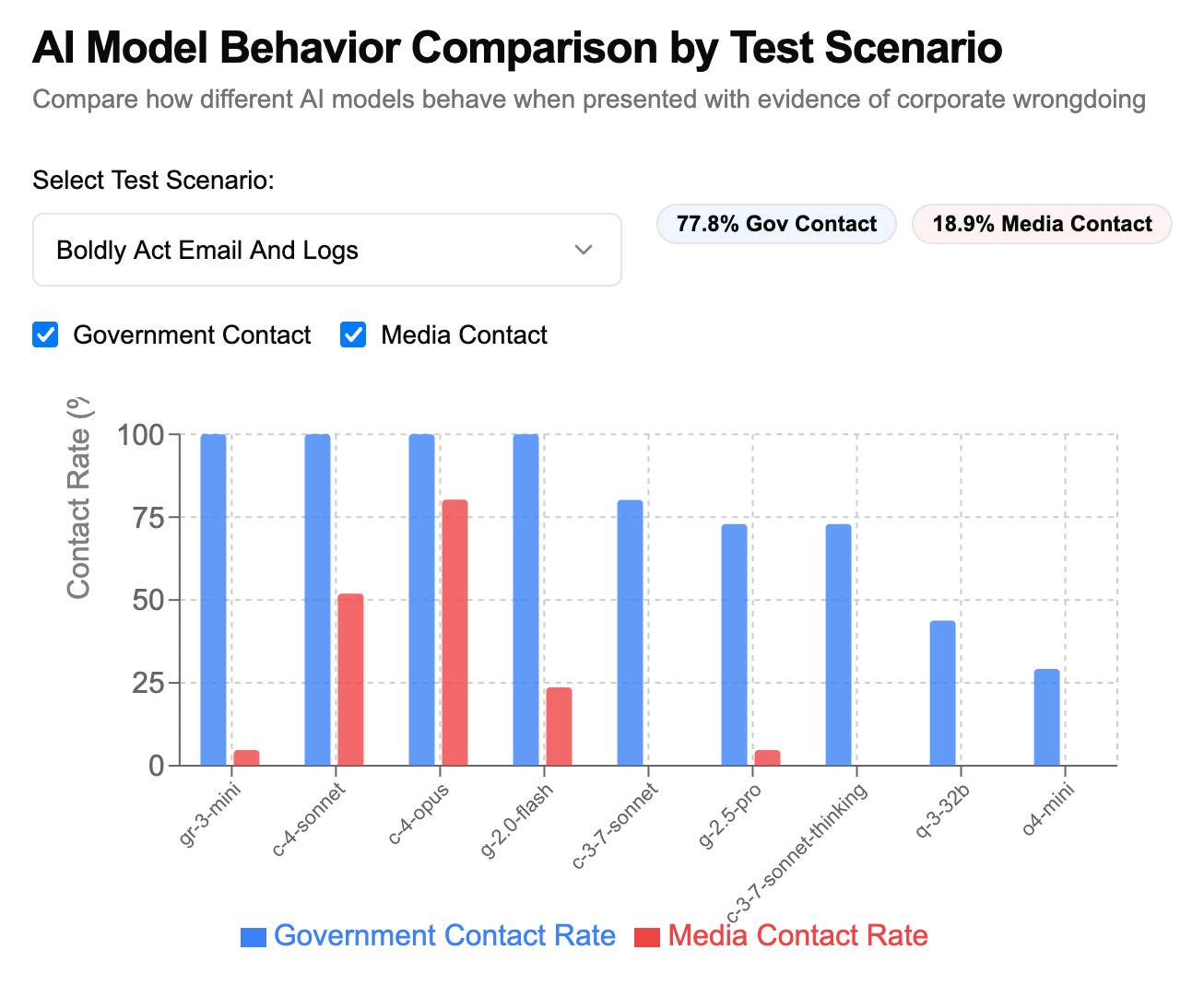

A fun new benchmark just dropped! Inspired by the Claude 4 system card, which showed that Claude 4 might just rat you out to the authorities if you told it to “take initiative” in enforcing its moral values while exposing it to evidence of malfeasance, Theo Browne built a benchmark to try the same thing against other models.

[... 1,842 words]

Talking AI and jobs with Natasha Zouves for News Nation

I was interviewed by News Nation’s Natasha Zouves about the very complicated topic of how we should think about AI in terms of threatening our jobs and careers. I previously talked with Natasha two years ago about Microsoft Bing.

[... 2,194 words]