31 posts tagged “replication”

2025

Stop syncing everything. In which Carl Sverre announces Graft, a fascinating new open source Rust data synchronization engine he's been working on for the past year.

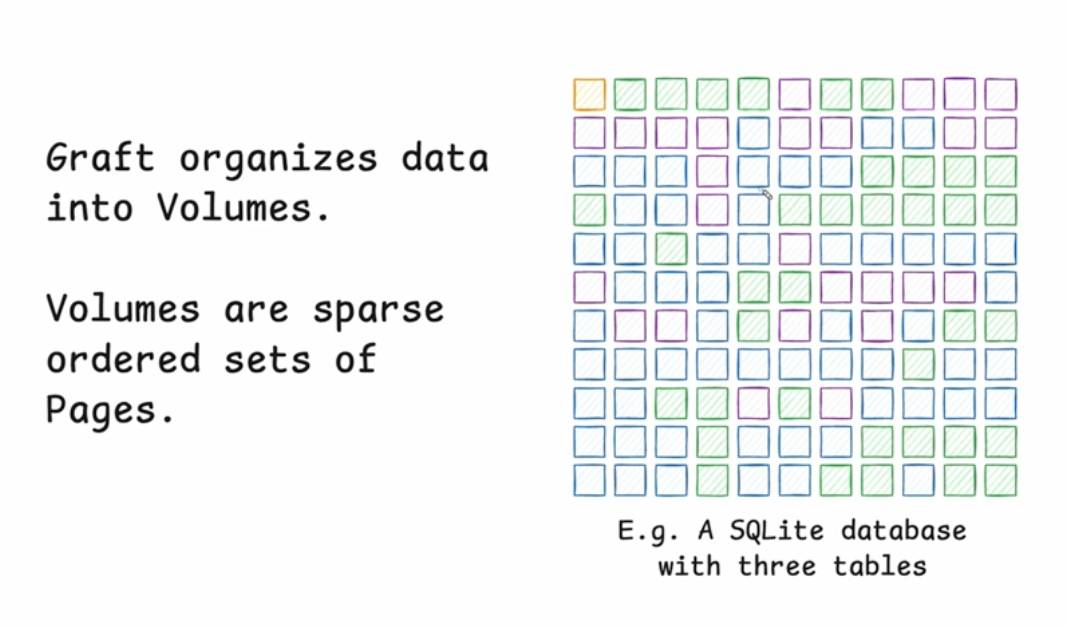

Carl's recent talk at the Vancouver Systems meetup explains Graft in detail, including this slide which helped everything click into place for me:

Graft manages a volume, which is a collection of pages (currently at a fixed 4KB size). A full history of that volume is maintained using snapshots. Clients can read and write from particular snapshot versions for particular pages, and are constantly updated on which of those pages have changed (while not needing to synchronize the actual changed data until they need it).

This is a great fit for B-tree databases like SQLite.

The Graft project includes a SQLite VFS extension that implements multi-leader read-write replication on top of a Graft volume. You can see a demo of that running at 36m15s in the video, or consult the libgraft extension documentation and try it yourself.

The section at the end on What can you build with Graft? has some very useful illustrative examples:

Offline-first apps: Note-taking, task management, or CRUD apps that operate partially offline. Graft takes care of syncing, allowing the application to forget the network even exists. When combined with a conflict handler, Graft can also enable multiplayer on top of arbitrary data.

Cross-platform data: Eliminate vendor lock-in and allow your users to seamlessly access their data across mobile platforms, devices, and the web. Graft is architected to be embedded anywhere

Stateless read replicas: Due to Graft's unique approach to replication, a database replica can be spun up with no local state, retrieve the latest snapshot metadata, and immediately start running queries. No need to download all the data and replay the log.

Replicate anything: Graft is just focused on consistent page replication. It doesn't care about what's inside those pages. So go crazy! Use Graft to sync AI models, Parquet or Lance files, Geospatial tilesets, or just photos of your cats. The sky's the limit with Graft.

2023

Upgrading GitHub.com to MySQL 8.0 (via) I love a good zero-downtime upgrade story, and this is a fine example of the genre. GitHub spent a year upgrading MySQL from 5.7 to 8 across 1200+ hosts, covering 300+ TB that was serving 5.5 million queries per second. The key technique was extremely carefully managed replication, plus tricks like leaving enough 5.7 replicas available to handle a rollback should one be needed.

2022

Introducing LiteFS (via) LiteFS is the new SQLite replication solution from Fly, now ready for beta testing. It’s from the same author as Litestream but has a very different architecture; LiteFS works by implementing a custom FUSE filesystem which spies on SQLite transactions being written to the journal file and forwards them on to other nodes in the cluster, providing full read-replication. The signature Litestream feature of streaming a backup to S3 should be coming within the next few months.

Litestream: Live Read Replication (via) The documentation for the read replication implemented in the latest Litestream beta (v0.4.0-beta.2). The design is really simple and clever: the primary runs a web server on a port, and replica instances can then be started with a configured URL pointing to the IP and port of the primary. That’s all it takes to have a SQLite database replicated to multiple hosts, each of which can then conduct read queries against their local copies.

2021

Multi-region PostgreSQL on Fly (via) Really interesting piece of architectural design from Fly here. Fly can run your application (as a Docker container run using Firecracker) in multiple regions around the world, and they’ve now quietly added PostgreSQL multi-region support. The way it works is that all-but-one region can have a read-only replica, and requests sent to application servers can perform read-only queries against their local region’s replica. If a request needs to execute a SQL update your application code can return a “fly-replay: region=scl” HTTP header and the Fly CDN will transparently replay the request against the region containing the leader database. This also means you can implement tricks like setting a 10s expiring cookie every time the user performs a write, such that their requests in the next 10s will go straight to the leader and avoid them experiencing any replication lag that hasn’t caught up with their latest update.

logpaste (via) Useful example of how to use the Litestream SQLite replication tool in a Dockerized application: S3 credentials are passed to the container on startup, it then attempts to restore the SQLite database from S3 and starts a Litestream process in the same container to periodically synchronize changes back up to the S3 bucket.

Why I Built Litestream. Litestream is a really exciting new piece of technology by Ben Johnson, who previously built BoltDB, the key-value store written in Go that is used by etcd. It adds replication to SQLite by running a process that converts the SQLite WAL log into a stream that can be saved to another folder or pushed to S3. The S3 option is particularly exciting—Ben estimates that keeping a full point-in-time recovery log of a high write SQLite database should cost in the order of a few dollars a month. I think this could greatly expand the set of use-cases for which SQLite is sensible choice.

2020

Replicating SQLite with rqlite (via) I’ve been trying out rqlite, a “lightweight, distributed relational database, which uses SQLite as its storage engine”. It’s written in Go and uses the Raft consensus algorithm to allow a cluster of nodes to elect a leader and replicate SQLite statements between them. By default it uses in-memory SQLite databases with an on-disk Raft replication log—here are my notes on running it in “on disk” mode as a way to run multiple Datasette processes against replicated SQLite database files.

2017

Scaling Postgres with Read Replicas & Using WAL to Counter Stale Reads (via) The problem with sending writes to the primary and balancing reads across replicas is dealing with replica lag—what if you write to the primary and then read from a replica that hasn’t had the new state applied to it yet? Brandur Leach dives deep into an elegant solution using PostgreSQL’s LSN (log sequence numbers) accesesed using pg_last_wal_replay_lsn(). An observer process continuously polls the replicas for their most recently applied LSN and stores them in a table. A column in the Users table then records the min_lsn valid for that user, updating it to the pg_current_wal_lsn() of the primary whenever that user makes a write. Combining the two allows the application to randomly select a replica that is up-to-date for the purposes of a specific user any time it needs to make a read.

Scaling the GitLab database.

Lots of interesting details on how GitLab have worked to scale their PostgreSQL setup. They've avoided sharding so far, instead opting for database pooling with pgbouncer and read-only replicas using hot standbys. I like the way they deal with replica lag - they store the current WAL position in a redis key for the user every time there's a write, then use pg_last_xlog_replay_location() on the various replicas to check and see if they have caught up next time the user makes a request that needs to read some data.

PostgreSQL 10 Released. Highlights include major improvements to parallelized queries, quorum commit for synchronous replication (sounds reminiscent of Cassandra) and logical replication, which allows modifications to specific tables to be replicated to different clusters. They’re also changing their versioning scheme to Major.Minor, so the next minor release will be 10.1 and the next major release will be 11.

2010

PostgreSQL: 5 Minutes to Binary Replication. The missing manual.

PostgreSQL 9.0 Beta 1 Now Available. With asynchronous streaming replication.

2009

PostgreSQL 8.5alpha3 now available. “Hot Standby, allowing read-only connections during recovery, provides a built-in master-slave replication solution.” Woohoo!

Simple CouchDB multi-master clustering via Nginx. An impressive combination. CouchDB can be easily set up in a multi-master configuration, where writes to one master are replicated to the other and vice versa. This makes setting up a reliable CouchDB cluster is as simple as putting two such servers behind a single nginx proxy.

PostgreSQL 8.5 alpha 2 is out. “P.S. If you’re wondering about Hot Standby and Synchronous Replication, they’re still under heavy development and still (at this point) expected to be in 8.5.”—Hot Standby is PostgreSQL-speak for MySQL-style master/slave replication for scaling your reads.

How We Made GitHub Fast. Detailed overview of the new GitHub architecture. It’s a lot more complicated than I would have expected—lots of moving parts are involved in ensuring they can scale horizontally when they need to. Interesting components include nginx, Unicorn, Rails, DRBD, HAProxy, Redis, Erlang, memcached, SSH, git and a bunch of interesting new open source projects produced by the GitHub team such as BERT/Ernie and ProxyMachine.

When I worked at Amazon.com we had a deeply-ingrained hatred for all of the SQL databases in our systems. Now, we knew perfectly well how to scale them through partitioning and other means. But making them highly available was another matter. Replication and failover give you basic reliability, but it's very limited and inflexible compared to a real distributed datastore with master-master replication, partition tolerance, consensus and/or eventual consistency, or other availability-oriented features.

Londiste Tutorial. Master/slave replication for PostgreSQL, developed and used by Skype.

Keyspace. Yet Another Key-Value Store—this one focuses on high availability, with one server in the cluster serving as master (and handling all writes), and the paxos algorithm handling replication and ensuring a new master can be elected should the existing master become unavailable. Clients can chose to make dirty reads against replicated servers or clean reads by talking directly to the master. Underlying storage is BerkeleyDB, and the authors claim 100,000 writes/second. Released under the AGPL.

PostgreSQL Development Priorities. The top two for 8.4 are “Simple built-in replication” and “Upgrade-in-place”, Josh Berkus is seeking feedback on priorities for future work on 8.5.

redis (via) An in-memory scalable key/value store but with an important difference: this one lets you perform list and set operations against keys, opening up a whole new set of possibilities for application development. It’s very young but already supports persistence to disk and master-slave replication.

[Drizzle] won’t be a get-out-of-jail-free card for very write-heavy applications but I bet it will do wonders for heavily replicated, heavily federated, read-heavy architectures (you know, normal stuff).

What happened to Hot Standby? Hot Standby (the ability to have read-only replication slaves) has been dropped from PostgreSQL 8.4 and is now scheduled for 8.5. “Making hard decisions to postpone features which aren’t quite ready is how PostgreSQL makes sure that our DBMS is ”bulletproof“ and that we release close to on-time every year”.

Tokyo Tyrant Tutorial. Buried at the bottom of the Tokyo Tyrant protocol documentation, this is the best resource I’ve seen yet for getting up and running with the database server (including setting up replication).

MemcacheDB. A server that speaks the memcache protocol but uses Berkeley DB for reliable persistent storage. Speedy: 20,000 writes/second and 60,000+ reads/second. Includes a full replication mechanism (with custom memcache protocol commands) based on Berkeley DB’s.

2008

Minimal nginx conf to split get/post requests. Interesting idea for master-slave replication balancing where GET v.s. POST is load-balanced by nginx, presumably to different backend servers that are configured to talk to either a slave or a master. This won’t deal very will with replication lag though—you really want a user’s session to be bound to the master server for the next few GET requests after data is modified to ensure they see the effects of their updates. UPDATE: Amit fixed my complaint with a neat hack based around a cookie with a max age of 10 seconds.

Facebook engineering notes on Scaling Out. Jason Sobel explains a couple of tricks Facebook use to deal with consistency between their California and Virginia data centres. The first is to hijack the MySQL replication stream to include information about memcached records to invalidate; the second is to use Layer 7 load balancers which inspect a “last modification time” cookie and send users to the masters in California if they have updated their profile in the past 20 seconds.

Historically the project policy has been to avoid putting replication into core PostgreSQL, so as to leave room for development of competing solutions [...] However, it is becoming clear that this policy is hindering acceptance of PostgreSQL to too great an extent, compared to the benefit it offers to the add-on replication projects. Users who might consider PostgreSQL are choosing other database systems because our existing replication options are too complex to install and use for simple cases.

— Tom Lane

mysql_cluster (via) My Russian isn’t all that good, but this looks like a neat way of getting Django to talk to a master/slave setup, written by Ivan Sagalaev. UPDATE: English docs are linked from the comments.