62 posts tagged “redis”

2026

The thing about 90% of TDMs [Technical Decision Makers] is that they're motivated primarily by NOT GETTING FIRED. These aren't people who browser Lobsters or push to GH on the weekend. These are people that work 9 to 5, get paid, go home, and NEVER THINK ABOUT WORK AGAIN. So to achieve all that, they follow secular trends supported by analysts and broad public sentiment. Oh, Gartner said that "AI strategy" is most important? McKinsey said "context" needs to be managed? Well, "Context Engine for AI Apps" is going to be defensible. Buy it.

— Mitchell Hashimoto, in a conversation about the design of the Redis homepage

Salvatore Sanfilippo submitted a PR adding a new data type - arrays - to Redis.

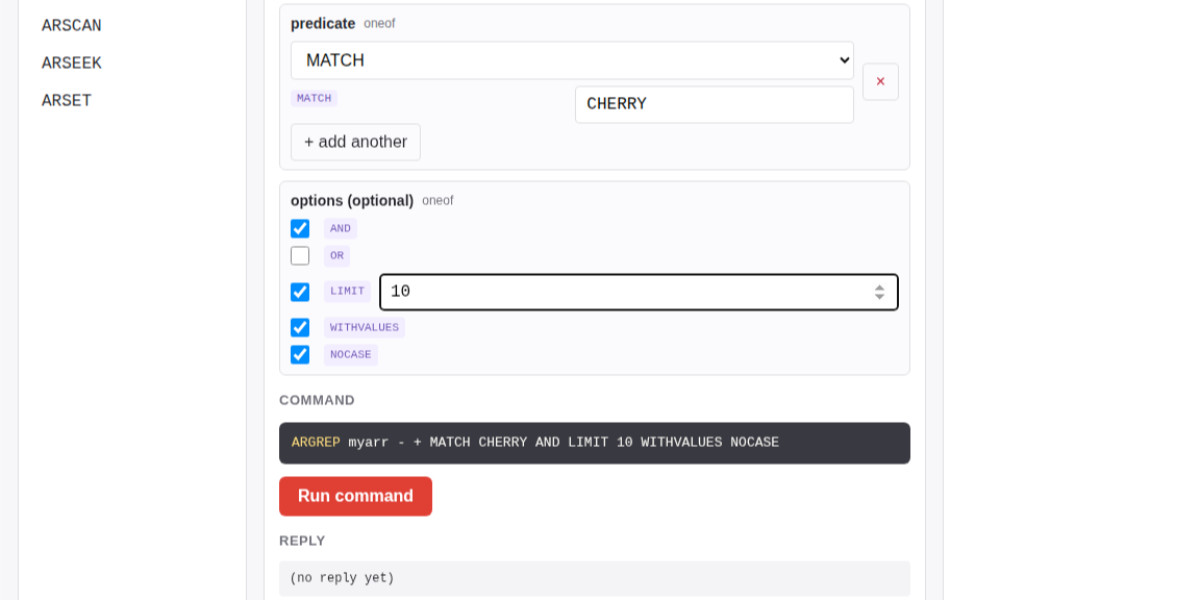

The new commands are ARCOUNT, ARDEL, ARDELRANGE, ARGET, ARGETRANGE, ARGREP, ARINFO, ARINSERT, ARLASTITEMS, ARLEN, ARMGET, ARMSET, ARNEXT, AROP, ARRING, ARSCAN, ARSEEK, ARSET.

The implementation is currently available in a branch, so I had Claude Code for web build this interactive playground for trying out the new commands in a WASM-compiled build of a subset of Redis running in the browser.

The most interesting new command is ARGREP which can run a server-side grep against a range of values in the array using the newly vendored TRE regex library.

Salvatore wrote more about the AI-assisted development process for the array type in Redis array type: short story of a long development.

2025

I had Claude Code build an experimental Redis module for executing JavaScript code in a similar way to Lua using JS.EVAL and similar functions.

I was inspired to try this by this comment from antirez about how he would have used JavaScript in place of Lua as the embedded Redis scripting language if MicroQuickJS had been available in 2010.

If this [MicroQuickJS] had been available in 2010, Redis scripting would have been JavaScript and not Lua. Lua was chosen based on the implementation requirements, not on the language ones... (small, fast, ANSI-C). I appreciate certain ideas in Lua, and people love it, but I was never able to like Lua, because it departs from a more Algol-like syntax and semantics without good reasons, for my taste. This creates friction for newcomers. I love friction when it opens new useful ideas and abstractions that are worth it, if you learn SmallTalk or FORTH and for some time you are lost, it's part of how the languages are different. But I think for Lua this is not true enough: it feels like it departs from what people know without good reasons.

— Salvatore Sanfilippo, Hacker News comment on MicroQuickJS

Scaling HNSWs (via) Salvatore Sanfilippo spent much of this year working on vector sets for Redis, which first shipped in Redis 8 in May.

A big part of that work involved implementing HNSW - Hierarchical Navigable Small World - an indexing technique first introduced in this 2016 paper by Yu. A. Malkov and D. A. Yashunin.

Salvatore's detailed notes on the Redis implementation here offer an immersive trip through a fascinating modern field of computer science. He describes several new contributions he's made to the HNSW algorithm, mainly around efficient deletion and updating of existing indexes.

Since embedding vectors are notoriously memory-hungry I particularly appreciated this note about how you can scale a large HNSW vector set across many different nodes and run parallel queries against them for both reads and writes:

[...] if you have different vectors about the same use case split in different instances / keys, you can ask VSIM for the same query vector into all the instances, and add the WITHSCORES option (that returns the cosine distance) and merge the results client-side, and you have magically scaled your hundred of millions of vectors into multiple instances, splitting your dataset N times [One interesting thing about such a use case is that you can query the N instances in parallel using multiplexing, if your client library is smart enough].

Another very notable thing about HNSWs exposed in this raw way, is that you can finally scale writes very easily. Just hash your element modulo N, and target the resulting Redis key/instance. Multiple instances can absorb the (slow, but still fast for HNSW standards) writes at the same time, parallelizing an otherwise very slow process.

It's always exciting to see new implementations of fundamental algorithms and data structures like this make it into Redis because Salvatore's C code is so clearly commented and pleasant to read - here's vector-sets/hnsw.c and vector-sets/vset.c.

tidwall/pogocache

(via)

New project from Josh Baker, author of the excellent tg C geospatial libarry (covered previously) and various other interesting projects:

Pogocache is fast caching software built from scratch with a focus on low latency and cpu efficency.

Faster: Pogocache is faster than Memcache, Valkey, Redis, Dragonfly, and Garnet. It has the lowest latency per request, providing the quickest response times. It's optimized to scale from one to many cores, giving you the best single-threaded and multithreaded performance.

Faster than Memcache and Redis is a big claim! The README includes a design details section that explains how the system achieves that performance, using a sharded hashmap inspired by Josh's shardmap project and clever application of threads.

Performance aside, the most interesting thing about Pogocache is the server interface it provides: it emulates the APIs for Redis and Memcached, provides a simple HTTP API and lets you talk to it over the PostgreSQL wire protocol as well!

psql -h localhost -p 9401

=> SET first Tom;

=> SET last Anderson;

=> SET age 37;

$ curl http://localhost:9401/last

Anderson

Redis is open source again (via) Salvatore Sanfilippo:

Five months ago, I rejoined Redis and quickly started to talk with my colleagues about a possible switch to the AGPL license, only to discover that there was already an ongoing discussion, a very old one, too. [...]

I’ll be honest: I truly wanted the code I wrote for the new Vector Sets data type to be released under an open source license. [...]

So, honestly, while I can’t take credit for the license switch, I hope I contributed a little bit to it, because today I’m happy. I’m happy that Redis is open source software again, under the terms of the AGPLv3 license.

I'm absolutely thrilled to hear this. Redis 8.0 is out today under the new license, including a beta release of Vector Sets. I've been watching Salvatore's work on those with fascination, while sad that I probably wouldn't use it often due to the janky license. That concern is now gone. I'm looking forward to putting them through their paces!

See also Redis is now available under the AGPLv3 open source license on the Redis blog. An interesting note from that is that they are also:

Integrating Redis Stack technologies, including JSON, Time Series, probabilistic data types, Redis Query Engine and more into core Redis 8 under AGPL

That's a whole bunch of new things that weren't previously part of Redis core.

I hadn't encountered Redis Query Engine before - it looks like that's a whole set of features that turn Redis into more of an Elasticsearch-style document database complete with full-text, vector search operations and geospatial operations and aggregations. It supports search syntax that looks a bit like this:

FT.SEARCH places "museum @city:(san francisco|oakland) @shape:[CONTAINS $poly]" PARAMS 2 poly 'POLYGON((-122.5 37.7, -122.5 37.8, -122.4 37.8, -122.4 37.7, -122.5 37.7))' DIALECT 3

(Noteworthy that Elasticsearch chose the AGPL too when they switched back from the SSPL to an open source license last year).

2024

From where I left. Four and a half years after he left the project, Redis creator Salvatore Sanfilippo is returning to work on Redis.

Hacking randomly was cool but, in the long run, my feeling was that I was lacking a real purpose, and every day I started to feel a bigger urgency to be part of the tech world again. At the same time, I saw the Redis community fragmenting, something that was a bit concerning to me, even as an outsider.

I'm personally still upset at the license change, but Salvatore sees it as necessary to support the commercial business model for Redis Labs. It feels to me like a betrayal of the volunteer efforts by previous contributors. I posted about that on Hacker News and Salvatore replied:

I can understand that, but the thing about the BSD license is that such value never gets lost. People are able to fork, and after a fork for the original project to still lead will be require to put something more on the table.

Salvatore's first new project is an exploration of adding vector sets to Redis. The vector similarity API he previews in this post reminds me of why I fell in love with Redis in the first place - it's clean, simple and feels obviously right to me.

VSIM top_1000_movies_imdb ELE "The Matrix" WITHSCORES

1) "The Matrix"

2) "0.9999999403953552"

3) "Ex Machina"

4) "0.8680362105369568"

...

Themes from DjangoCon US 2024

I just arrived home from a trip to Durham, North Carolina for DjangoCon US 2024. I’ve already written about my talk where I announced a new plugin system for Django; here are my notes on some of the other themes that resonated with me during the conference.

[... 1,470 words]redka (via) Anton Zhiyanov’s new project to build a subset of Redis (including protocol support) using Go and SQLite. Also works as a Go library.

The guts of the SQL implementation are in the internal/sqlx folder.

Redis Adopts Dual Source-Available Licensing (via) Well this sucks: after fifteen years (and contributions from more than 700 people), Redis is dropping the 3-clause BSD license going forward, instead being “dual-licensed under the Redis Source Available License (RSALv2) and Server Side Public License (SSPLv1)” from Redis 7.4 onwards.

Testcontainers (via) Not sure how I missed this: Testcontainers is a family of testing libraries (for Python, Go, JavaScript, Ruby, Rust and a bunch more) that make it trivial to spin up a service such as PostgreSQL or Redis in a container for the duration of your tests and then spin it back down again.

The Python example code is delightful:

redis = DockerContainer("redis:5.0.3-alpine").with_exposed_ports(6379)

redis.start()

wait_for_logs(redis, "Ready to accept connections")

I much prefer integration-style tests over unit tests, and I like to make sure any of my projects that depend on PostgreSQL or similar can run their tests against a real running instance. I've invested heavily in spinning up Varnish or Elasticsearch ephemeral instances in the past - Testcontainers look like they could save me a lot of time.

The open source project started in 2015, span off a company called AtomicJar in 2021 and was acquired by Docker in December 2023.

2023

lmdb.tcl —the first version of Redis, written in TCL (via) Really neat piece of computing history here—the very first version of what later became Redis, written as a 319 line TCL stript for LLOOGG, Salvatore Sanfilippo’s old analytics startup.

2022

Scaling Mastodon: The Compendium (via) Hazel Weakly’s collection of notes on scaling Mastodon, covering PostgreSQL, Sidekiq, Redis, object storage and more.

Dragonfly: A modern replacement for Redis and Memcached (via) I was initially pretty skeptical of the tagline: does Redis really need a “modern” replacement? But the Background section of the README makes this look like a genuinely interesting project. It re-imagines Redis to have its keyspace partitioned across multiple threads, and uses the VLL lock manager described in a 2014 paper to “compose atomic multi-key operations without using mutexes or spinlocks”. The initial benchmarks show up to a 25x increase in throughput compared to Redis. It’s written in C++.

A tiny CI system (via) Christian Ştefănescu shares a recipe for building a tiny self-hosted CI system using Git and Redis. A post-receive hook runs when a commit is pushed to the repo and uses redis-cli to push jobs to a list. Then a separate bash script runs a loop with a blocking “redis-cli blpop jobs” operation which waits for new jobs and then executes the CI job as a shell script.

2021

Last Mile Redis (via) Fly.io article about running a local redis cache in each of their geographic regions—“Cache data overlaps a lot less than you assume it will. For the most part, people in Singapore will rely on a different subset of data than people in Amsterdam or São Paulo or New Jersey.” But then they note that Redis has the ability to act as both a replica of a primary AND a writable server at the same time (“replica-read-only no”), which actually makes sense for a cache—it lets you cache local data but send out cluster-wide cache purges if necessary.

RabbitMQ Streams Overview. New in RabbitMQ 3.9: streams are a persisted, replicated append-only log with non-destructive consuming semantics. Sounds like it fits the same hole as Kafka and Redis Streams, an extremely useful pattern.

2019

huey. Charles Leifer’s “little task queue for Python”. Similar to Celery, but it’s designed to work with Redis, SQLite or in the parent process using background greenlets. Worth checking out for the really neat design. The project is new to me, but it’s been under active development since 2011 and has a very healthy looking rate of releases.

This paper introduces Mesh, a plug-in replacement for malloc that, for the first time, eliminates fragmentation in unmodified C/C++ applications. Mesh combines novel randomized algorithms with widely-supported virtual memory operations to provably reduce fragmentation, breaking the classical Robson bounds with high probability. Mesh generally matches the runtime performance of state-of-the-art memory allocators while reducing memory consumption; in particular, it reduces the memory of consumption of Firefox by 16% and Redis by 39%.

2018

Introduction to Redis Streams. Redis 5.0 is out, introducing the first new Redis data type in several years: streams, a Kafka-like mechanism for implementing a replayable event stream that can be read by many different subscribers.

Scaling a High-traffic Rate Limiting Stack With Redis Cluster. Brandur Leach describes the simple, elegant and performant design of Redis Cluster, and talks about how Stripe used it to scaled their rate-limiting from one to ten nodes.

Code is like a poem; it's not just something we write to reach some practical result. Sometimes people that are far from the Redis philosophy suggest using other code written by other authors (frequently in other languages) in order to implement something Redis currently lacks. But to us this is like if Shakespeare decided to end Enrico IV using the Paradiso from the Divina Commedia. Is using any external code a bad idea? Not at all. Like in "One Thousand and One Nights" smaller self contained stories are embedded in a bigger story, we'll be happy to use beautiful self contained libraries when needed. At the same time, when writing the Redis story we're trying to write smaller stories that will fit in to other code.

2017

For Redis 4.2 I'm moving Disque as a Redis module. To do this, Redis modules are getting a fully featured Cluster API. This means that it will be possible, for instance, to write a Redis module that orchestrates N Redis masters, using Raft or any other consensus algorithm, as a single distributed system. This will allow to also model strong guarantees easily.

Redis Streams and the Unified Log. In which Brandur Leach explores the new Kafka-style streams functionality coming to Redis 4.0, and shows an example of a robust at-least once processing architecture built on a combination of Redis streams and PostgreSQL transactions. I really like the pattern of writing log records to a staging table in PostgreSQL first in order to bundle them up in the same transaction as the originating state change, then have a separate process read them from that table and publish them to Redis.

Redis streams aren’t exciting for their innovativeness, but rather than they bring building a unified log architecture within reach of a small and/or inexpensive app. Kafka is infamously difficult to configure and get running, and is expensive to operate once you do. [...] Redis on the other hand is probably already in your stack.

Secondary indexing with Redis. I haven’t seen this section of the official Redis documentation before, and it’s absolutely fantastic—well worth reading the whole thing. It talks through various ways in which you can set up indexes in Redis, mainly by leaning on sorted sets—which it turns out will binary lexicographically sort items with the same score. This makes it easy to implement autocomplete with Redis—but if you use them creatively you can implement subject/predicate/object graph searches or even N-dimensional range queries as well.

walrus. Fascinating collection of Python utilities for working with Redis, by Charles Leifer. There are a ton of interesting ideas in here. It starts with Python object wrappers for Redis so you can interact with lists, sets, sorted sets and Redis hashes using Python-like objects. Then it gets really interesting: walrus ships with implementations of autocomplete, rate limiting, a graph engine (using a sorted set hexastore) and an ORM-style models mechanism which manages secondary indexes and even implements basic full-text search.

Scaling the GitLab database.

Lots of interesting details on how GitLab have worked to scale their PostgreSQL setup. They've avoided sharding so far, instead opting for database pooling with pgbouncer and read-only replicas using hot standbys. I like the way they deal with replica lag - they store the current WAL position in a redis key for the user every time there's a write, then use pg_last_xlog_replay_location() on the various replicas to check and see if they have caught up next time the user makes a request that needs to read some data.

Streams: a new general purpose data structure in Redis. Exciting new Redis feature inspired by Kafka: redis streams, which allow you to construct an efficient, in-memory list of messages (similar to a Kafka log) which clients can read sections of or block against and await real-time delivery of new messages. As expected from Salvatore the API design is clean, obvious and covers a wide range of exciting use-cases. Planned for release with Redis 4 by the end of the year!