282 posts tagged “anthropic”

2025

Code execution with MCP: Building more efficient agents (via) When I wrote about Claude Skills I mentioned that I don't use MCP at all any more when working with coding agents - I find CLI utilities and libraries like Playwright Python to be a more effective way of achieving the same goals.

This new piece from Anthropic proposes a way to bring the two worlds more closely together.

It identifies two challenges with MCP as it exists today. The first has been widely discussed before: all of those tool descriptions take up a lot of valuable real estate in the agent context even before you start using them.

The second is more subtle but equally interesting: chaining multiple MCP tools together involves passing their responses through the context, absorbing more valuable tokens and introducing chances for the LLM to make additional mistakes.

What if you could turn MCP tools into code functions instead, and then let the LLM wire them together with executable code?

Anthropic's example here imagines a system that turns MCP tools into TypeScript files on disk, looking something like this:

    // ./servers/google-drive/getDocument.ts
    interface GetDocumentInput {
        documentId: string;
    }

    interface GetDocumentResponse {
        content: string;
    }

    /* Read a document from Google Drive */
    export async function getDocument(input: GetDocumentInput): Promise<GetDocumentResponse> {
        return callMCPTool<GetDocumentResponse>('google_drive__get_document', input);
    }

This takes up no tokens at all - it's a file on disk. In a similar manner to Skills the agent can navigate the filesystem to discover these definitions on demand.

Then it can wire them together by generating code:

    const transcript = (await gdrive.getDocument({ documentId: 'abc123' })).content;
    await salesforce.updateRecord({
        objectType: 'SalesMeeting',
        recordId: '00Q5f000001abcXYZ',
        data: { Notes: transcript }
    });

Notably, the example here avoids round-tripping the response from the gdrive.getDocument() call through the model on the way to the salesforce.updateRecord() call - which is faster, more reliable, saves on context tokens, and avoids the model being exposed to any potentially sensitive data in that document.
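Here's a rough Python sketch of the same idea (the original examples are TypeScript). Each MCP tool becomes a thin function wrapper, and the agent-written code chains them so the document content never passes through the model's context. Everything here is hypothetical: call_mcp_tool is a stub that returns canned data rather than a real MCP client, and salesforce__update_record is an invented tool name that mirrors the naming of the one tool identifier shown in Anthropic's example.

```python
from typing import Any

# Hypothetical stand-in for an MCP client call. A real implementation would
# send the request to an MCP server; this stub records calls and returns
# canned data so the sketch runs on its own.
CALL_LOG: list[tuple[str, dict[str, Any]]] = []


def call_mcp_tool(tool_name: str, arguments: dict[str, Any]) -> dict[str, Any]:
    CALL_LOG.append((tool_name, arguments))
    if tool_name == "google_drive__get_document":
        return {"content": f"Transcript for {arguments['documentId']}"}
    if tool_name == "salesforce__update_record":  # invented name, see lead-in
        return {"ok": True}
    raise ValueError(f"Unknown tool: {tool_name}")


def get_document(document_id: str) -> dict[str, Any]:
    """Read a document from Google Drive."""
    return call_mcp_tool("google_drive__get_document", {"documentId": document_id})


def update_record(object_type: str, record_id: str, data: dict[str, Any]) -> dict[str, Any]:
    """Update a record in Salesforce."""
    return call_mcp_tool(
        "salesforce__update_record",
        {"objectType": object_type, "recordId": record_id, "data": data},
    )


def copy_transcript_to_salesforce(document_id: str, record_id: str) -> bool:
    # The transcript moves directly from one tool to the other inside the
    # execution environment; only the final status goes back to the model.
    transcript = get_document(document_id)["content"]
    result = update_record("SalesMeeting", record_id, {"Notes": transcript})
    return result["ok"]
```

Calling copy_transcript_to_salesforce("abc123", "00Q5f000001abcXYZ") runs both tool calls and returns only a boolean, which is the part the model would actually need to see.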

This all looks very solid to me! I think it's a sensible way to take advantage of the strengths of coding agents and address some of the major drawbacks of MCP as it is usually implemented today.

There's one catch: Anthropic outline the proposal in some detail but provide no code to execute on it! Implementation is left as an exercise for the reader:

If you implement this approach, we encourage you to share your findings with the MCP community.

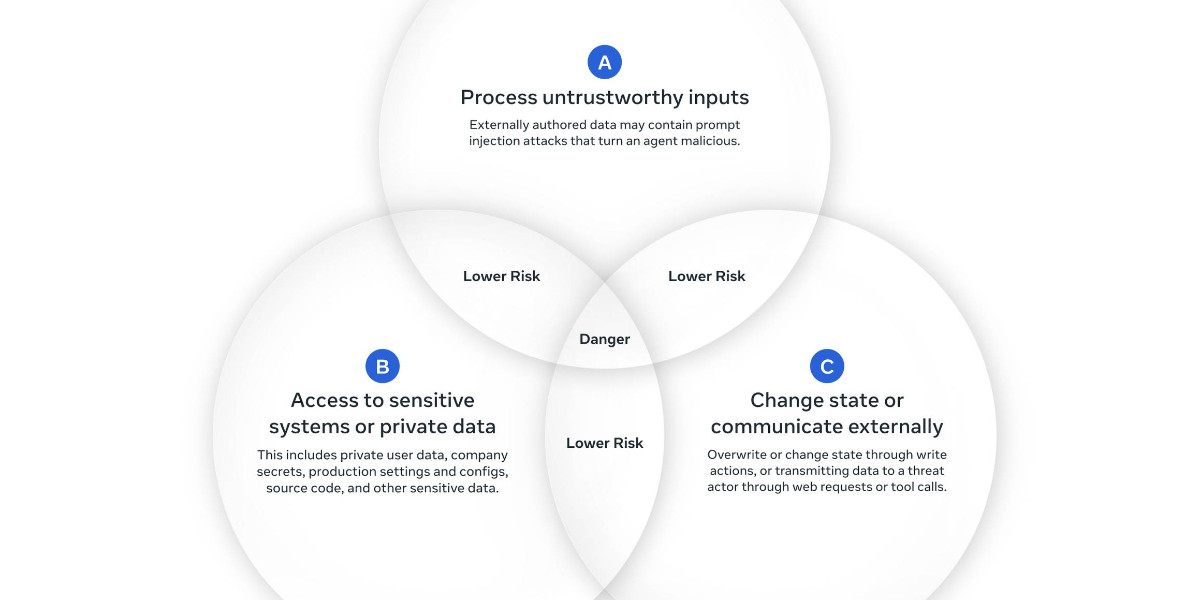

New prompt injection papers: Agents Rule of Two and The Attacker Moves Second

Two interesting new papers regarding LLM security and prompt injection came to my attention this weekend.

[... 1,433 words]

If you have an AGENTS.md file, you can source it in your CLAUDE.md using @AGENTS.md to maintain a single source of truth.

— Claude Docs, with the official answer to standardizing on AGENTS.md

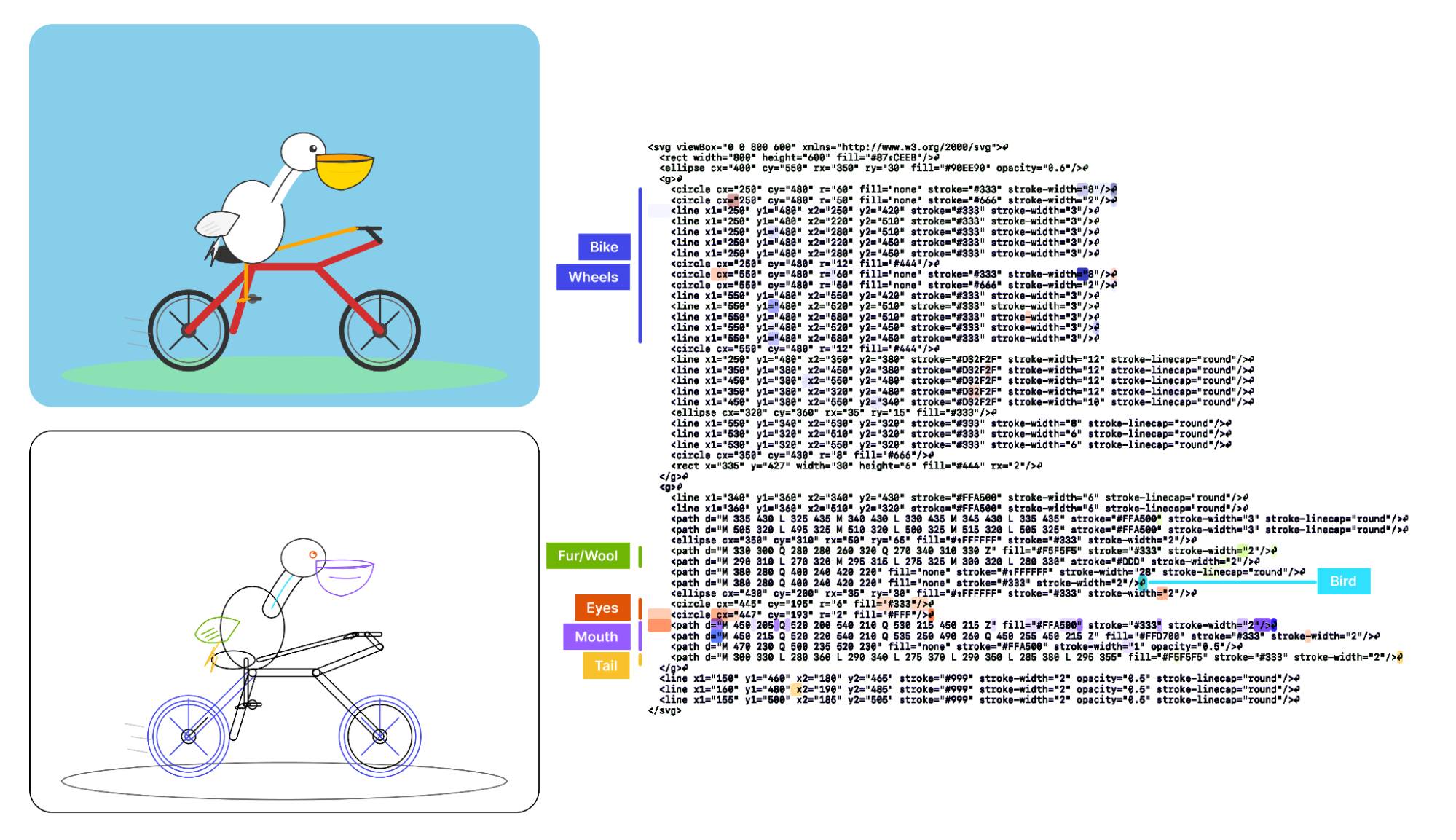

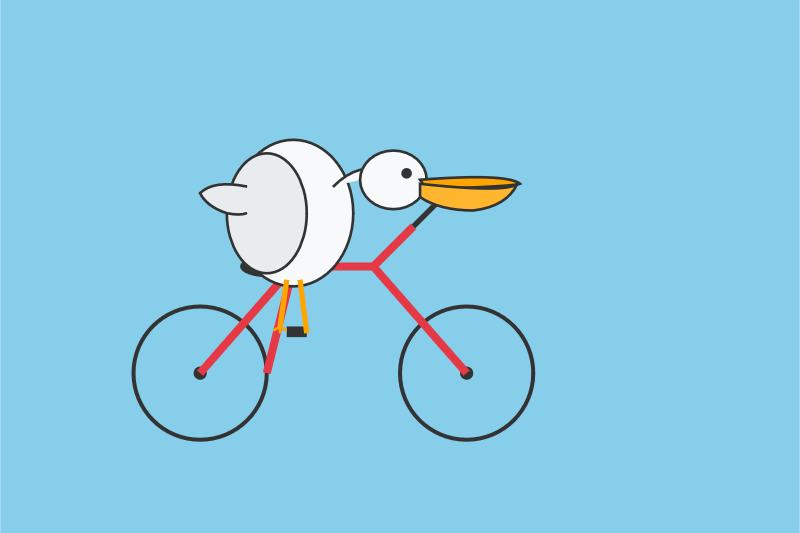

Visual Features Across Modalities: SVG and ASCII Art Reveal Cross-Modal Understanding (via) New model interpretability research from Anthropic, this time focused on SVG and ASCII art generation.

We found that the same feature that activates over the eyes in an ASCII face also activates for eyes across diverse text-based modalities, including SVG code and prose in various languages. This is not limited to eyes – we found a number of cross-modal features that recognize specific concepts: from small components like mouths and ears within ASCII or SVG faces, to full visual depictions like dogs and cats. [...]

These features depend on the surrounding context within the visual depiction. For instance, an SVG circle element activates “eye” features only when positioned within a larger structure that activates “face” features.

And really, I can't not link to this one given the bonus they tagged on at the end!

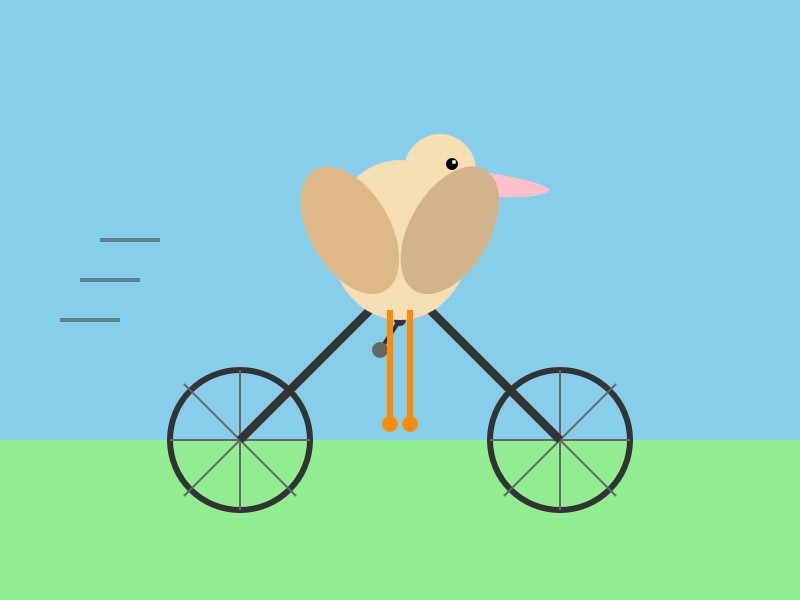

As a bonus, we also inspected features for an SVG of a pelican riding a bicycle, first popularized by Simon Willison as a way to test a model's artistic capabilities. We find features representing concepts including "bike", "wheels", "feet", "tail", "eyes", and "mouth" activating over the corresponding parts of the SVG code.

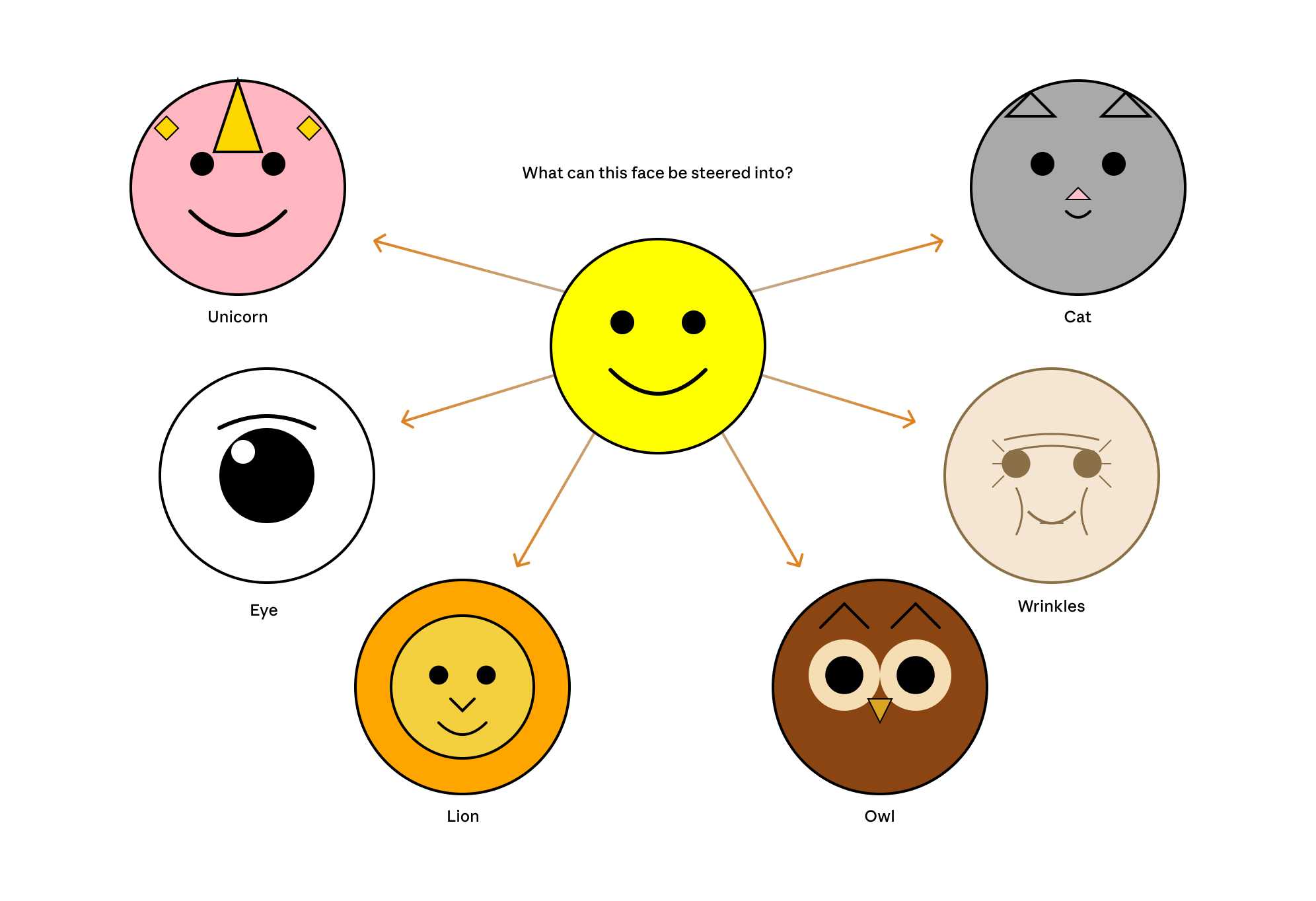

Now that they can identify model features associated with visual concepts in SVG images, can they use those for steering?

It turns out they can! Starting with a smiley SVG (provided as XML with no indication as to what it was drawing) and then applying a negative score to the "smile" feature produced a frown instead, and worked against ASCII art as well.

They could also boost features like unicorn, cat, owl, or lion and get new SVG smileys clearly attempting to depict those creatures.

I'd love to see how this behaves if you jack up the feature for the Golden Gate Bridge.

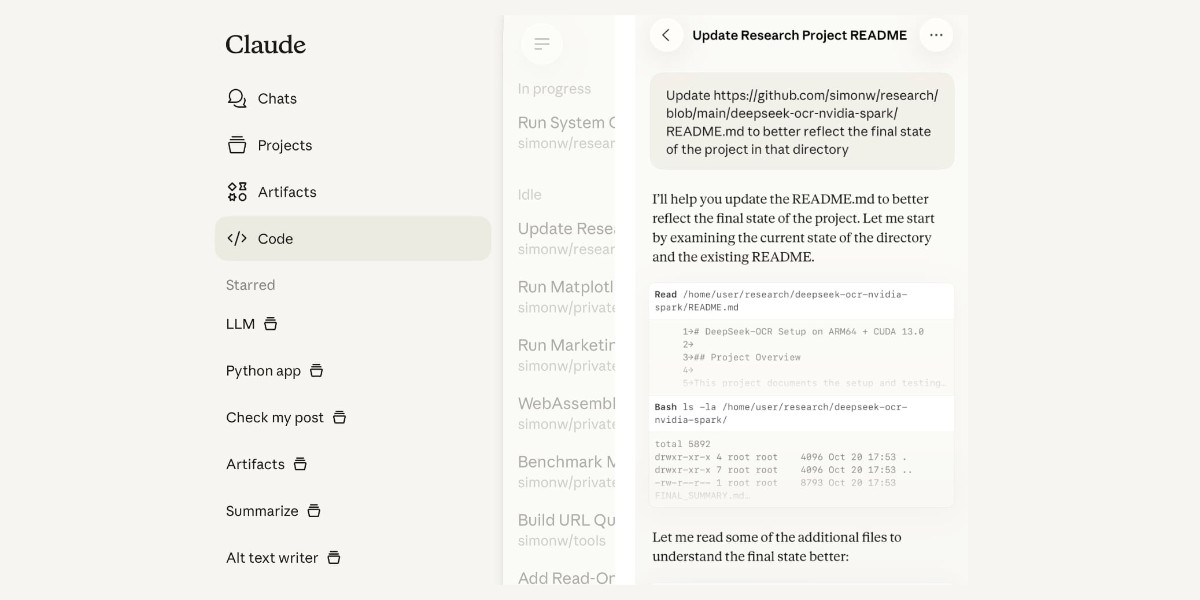

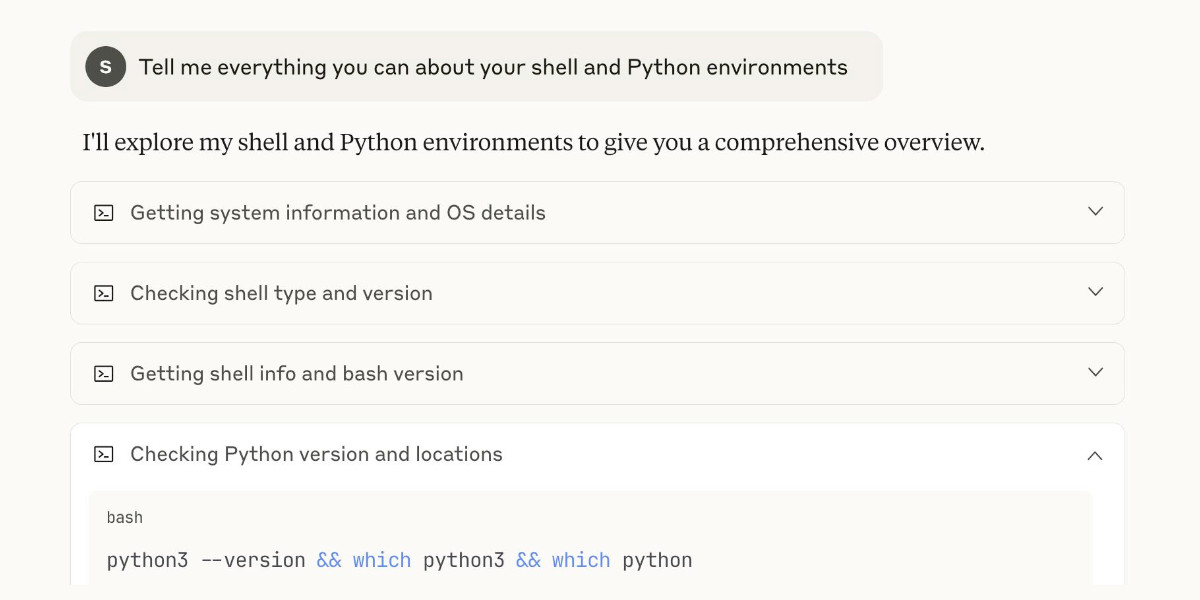

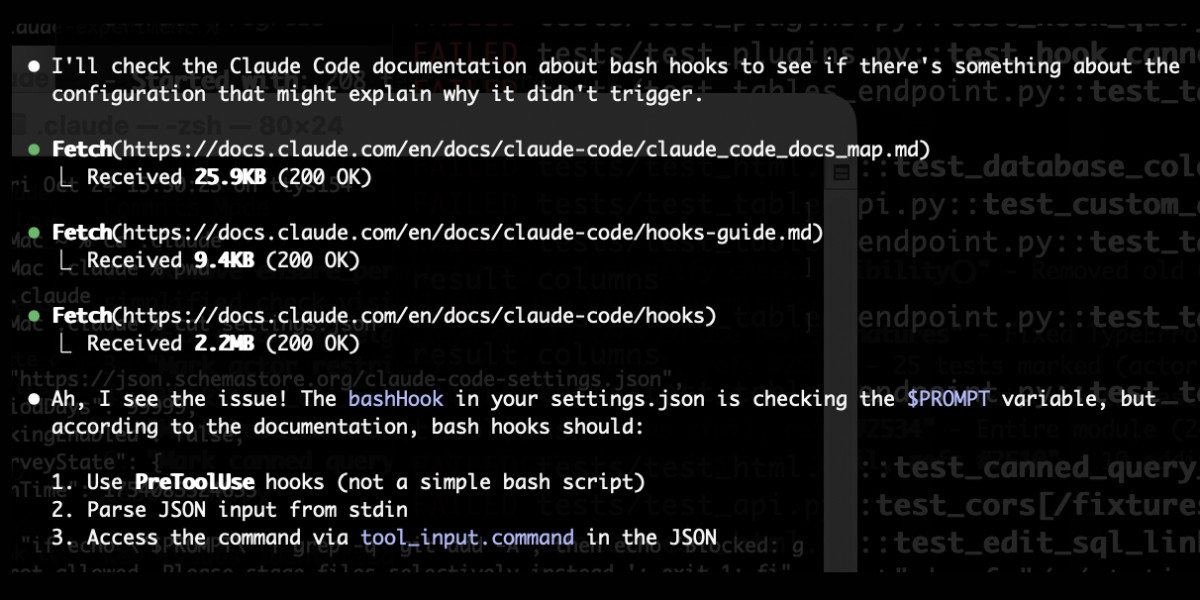

claude_code_docs_map.md. Something I'm enjoying about Claude Code is that any time you ask it questions about itself it runs tool calls like these:

In this case I'd asked it about its "hooks" feature.

The claude_code_docs_map.md file is a neat Markdown index of all of their other documentation - the same pattern advocated by llms.txt. Claude Code can then fetch further documentation to help it answer your question.

I intercepted the current Claude Code system prompt using this trick and sure enough it included a note about this URL:

When the user directly asks about Claude Code (eg. "can Claude Code do...", "does Claude Code have..."), or asks in second person (eg. "are you able...", "can you do..."), or asks how to use a specific Claude Code feature (eg. implement a hook, or write a slash command), use the WebFetch tool to gather information to answer the question from Claude Code docs. The list of available docs is available at https://docs.claude.com/en/docs/claude-code/claude_code_docs_map.md.
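The docs-map pattern boils down to: fetch a Markdown index, pick out the links that look relevant to the question, then fetch only those pages. Here's a minimal sketch of the middle step. It assumes the index uses standard Markdown links of the form [title](url); the post doesn't show the actual layout of claude_code_docs_map.md, and the sample index below is made up for illustration.

```python
import re

# Matches standard Markdown links: [title](url)
LINK_PATTERN = re.compile(r"\[([^\]]+)\]\(([^)\s]+)\)")


def find_relevant_docs(index_markdown: str, keyword: str) -> list[tuple[str, str]]:
    """Return (title, url) pairs whose title or URL mentions the keyword."""
    keyword = keyword.lower()
    return [
        (title, url)
        for title, url in LINK_PATTERN.findall(index_markdown)
        if keyword in title.lower() or keyword in url.lower()
    ]


# Made-up index content, not the real docs map.
SAMPLE_INDEX = """
- [Hooks reference](https://example.com/docs/hooks.md)
- [Slash commands](https://example.com/docs/slash-commands.md)
- [Get started with hooks](https://example.com/docs/hooks-guide.md)
"""

matches = find_relevant_docs(SAMPLE_INDEX, "hooks")
```

In the real product the model itself decides which links to follow, so a keyword filter like this is only a stand-in for that judgment.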

I wish other LLM products - including both ChatGPT and Claude.ai themselves - would implement a similar pattern. It's infuriating how bad LLM tools are at answering questions about themselves, though unsurprising given that their model's training data pre-dates the latest version of those tools.

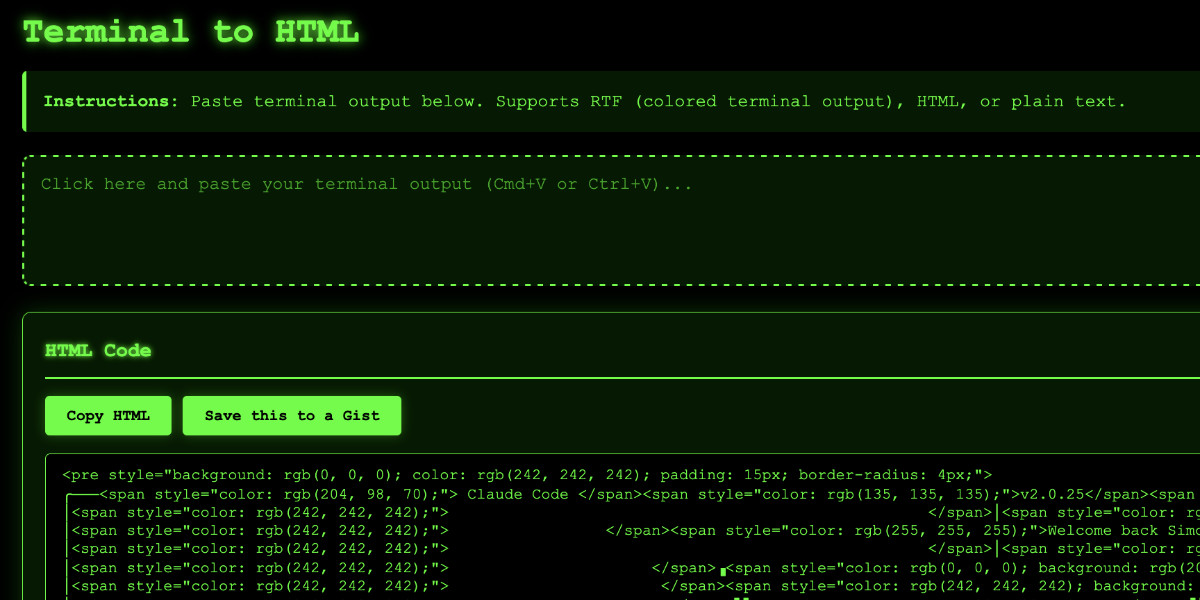

Video: Building a tool to copy-paste share terminal sessions using Claude Code for web

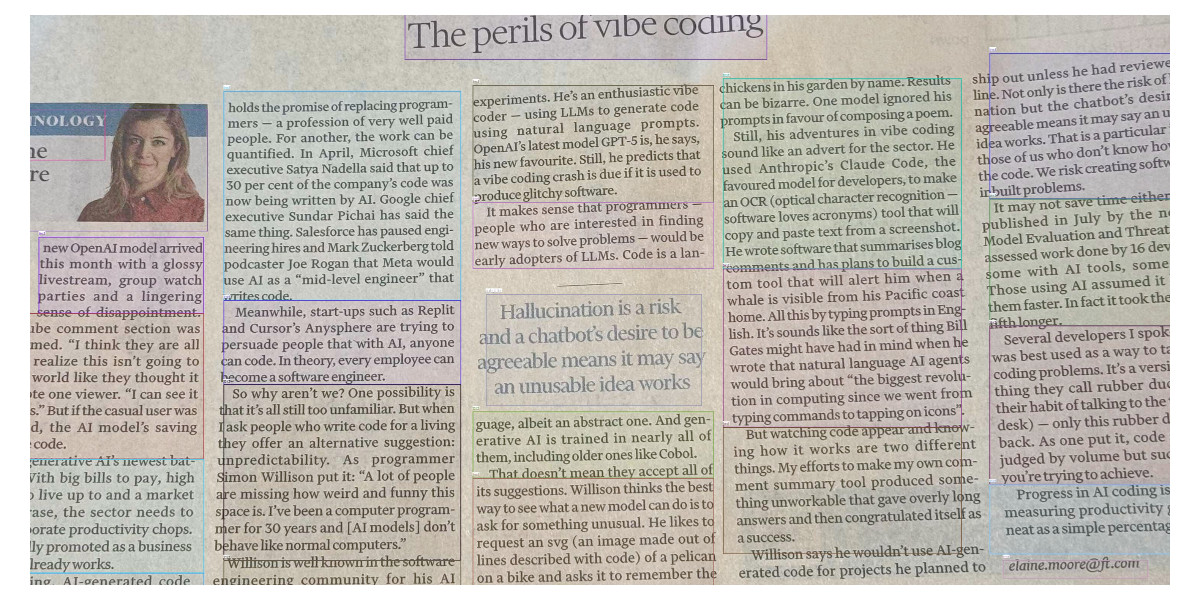

This afternoon I was manually converting a terminal session into a shared HTML file for the umpteenth time when I decided to reduce the friction by building a custom tool for it—and on the spur of the moment I fired up Descript to record the process. The result is this new 11 minute YouTube video showing my workflow for vibe-coding simple tools from start to finish.

[... 1,338 words]

Living dangerously with Claude

I gave a talk last night at Claude Code Anonymous in San Francisco, the unofficial meetup for coding agent enthusiasts. I decided to talk about a dichotomy I’ve been struggling with recently. On the one hand I’m getting enormous value from running coding agents with as few restrictions as possible. On the other hand I’m deeply concerned by the risks that accompany that freedom.

[... 2,208 words]

Claude Code stores full logs of your sessions as newline-delimited JSON in ~/.claude/projects/encoded-directory/*.jsonl on your machine. I currently have 379MB of these!

Here's an example jsonl file which I extracted from my Deepseek-OCR on NVIDIA Spark project. I have a little vibe-coded tool for converting those into Markdown which produces results like this.
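Since the logs are newline-delimited JSON, a few lines of Python are enough to get a feel for what's in them. This is a sketch under assumptions: it treats each line as a JSON object and groups records by a "type" key, which is a guess about the log schema rather than something documented in this post.

```python
import json
from collections import Counter
from pathlib import Path


def summarize_session_logs(projects_dir: Path) -> tuple[int, Counter]:
    """Return the total size in bytes of */*.jsonl files and a count of records by "type"."""
    total_bytes = 0
    type_counts: Counter = Counter()
    for log_path in projects_dir.glob("*/*.jsonl"):
        total_bytes += log_path.stat().st_size
        with log_path.open(encoding="utf-8") as log_file:
            for line in log_file:
                line = line.strip()
                if not line:
                    continue  # skip blank lines
                record = json.loads(line)
                # Assumption: each record is a JSON object with a "type" key.
                type_counts[record.get("type", "unknown")] += 1
    return total_bytes, type_counts
```

To run it against real logs you would call summarize_session_logs(Path.home() / ".claude" / "projects").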

Unfortunately Claude Code has a nasty default behavior of deleting these after 30 days! You can't disable this entirely, but you can at least delay it for 274 years by adding this to your ~/.claude/settings.json file:

    {
        "cleanupPeriodDays": 99999
    }
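If you already have other settings in that file, you'll want to merge the key in rather than overwrite everything. Here's a small sketch that does that, assuming the file is plain JSON as shown above. The helper name is made up, and it will raise if the existing file isn't valid JSON.

```python
import json
from pathlib import Path


def set_cleanup_period(settings_path: Path, days: int) -> dict:
    """Set cleanupPeriodDays in a settings file, preserving any other keys."""
    settings = {}
    if settings_path.exists():
        settings = json.loads(settings_path.read_text(encoding="utf-8"))
    settings["cleanupPeriodDays"] = days
    settings_path.write_text(json.dumps(settings, indent=2) + "\n", encoding="utf-8")
    return settings


# Where the "274 years" figure comes from, using 365.25 days per year.
years = 99999 / 365.25
print(f"{years:.1f} years")  # prints "273.8 years"
```

Usage would be set_cleanup_period(Path.home() / ".claude" / "settings.json", 99999).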

Claude Code's settings are documented here.

Claude Code for web—a new asynchronous coding agent from Anthropic

Anthropic launched Claude Code for web this morning. It’s an asynchronous coding agent—their answer to OpenAI’s Codex Cloud and Google’s Jules, and has a very similar shape. I had preview access over the weekend and I’ve already seen some very promising results from it.

[... 1,434 words]

Getting DeepSeek-OCR working on an NVIDIA Spark via brute force using Claude Code

DeepSeek released a new model yesterday: DeepSeek-OCR, a 6.6GB model fine-tuned specifically for OCR. They released it as model weights that run using PyTorch and CUDA. I got it running on the NVIDIA Spark by having Claude Code effectively brute force the challenge of getting it working on that particular hardware.

[... 1,971 words]

Claude Skills are awesome, maybe a bigger deal than MCP

Anthropic this morning introduced Claude Skills, a new pattern for making new abilities available to their models:

[... 1,864 words]

While Sonnet 4.5 remains the default [in Claude Code], Haiku 4.5 now powers the Explore subagent which can rapidly gather context on your codebase to build apps even faster.

You can select Haiku 4.5 to be your default model in /model. When selected, you’ll automatically use Sonnet 4.5 in Plan mode and Haiku 4.5 for execution for smarter plans and faster results.

— Catherine Wu, Claude Code PM, Anthropic

Introducing Claude Haiku 4.5 (via) Anthropic released Claude Haiku 4.5 today, the cheapest member of the Claude 4.5 family that started with Sonnet 4.5 a couple of weeks ago.

It's priced at $1/million input tokens and $5/million output tokens, slightly more expensive than Haiku 3.5 ($0.80/$4) and a lot more expensive than the original Claude 3 Haiku ($0.25/$1.25), both of which remain available at those prices.

It's a third of the price of Sonnet 4 and Sonnet 4.5 (both $3/$15) which is notable because Anthropic's benchmarks put it in a similar space to that older Sonnet 4 model. As they put it:

What was recently at the frontier is now cheaper and faster. Five months ago, Claude Sonnet 4 was a state-of-the-art model. Today, Claude Haiku 4.5 gives you similar levels of coding performance but at one-third the cost and more than twice the speed.

I've been hoping to see Anthropic release a fast, inexpensive model that's price competitive with the cheapest models from OpenAI and Gemini, currently $0.05/$0.40 (GPT-5-Nano) and $0.075/$0.30 (Gemini 2.0 Flash Lite). Haiku 4.5 certainly isn't that; it looks like they're continuing to focus squarely on the "great at code" part of the market.

The new Haiku is the first Haiku model to support reasoning. It sports a 200,000 token context window, 64,000 maximum output (up from just 8,192 for Haiku 3.5) and a "reliable knowledge cutoff" of February 2025, one month later than the January 2025 date for Sonnet 4 and 4.5 and Opus 4 and 4.1.

Something that caught my eye in the accompanying system card was this note about context length:

For Claude Haiku 4.5, we trained the model to be explicitly context-aware, with precise information about how much context-window has been used. This has two effects: the model learns when and how to wrap up its answer when the limit is approaching, and the model learns to continue reasoning more persistently when the limit is further away. We found this intervention—along with others—to be effective at limiting agentic “laziness” (the phenomenon where models stop working on a problem prematurely, give incomplete answers, or cut corners on tasks).

I've added the new price to llm-prices.com, released llm-anthropic 0.20 with the new model and updated my Haiku-from-your-webcam demo (source) to use Haiku 4.5 as well.

Here's llm -m claude-haiku-4.5 'Generate an SVG of a pelican riding a bicycle' (transcript).

18 input tokens and 1513 output tokens = 0.7583 cents.
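Here's the arithmetic behind that figure as a tiny helper, using the Haiku 4.5 prices quoted above ($1 per million input tokens, $5 per million output tokens). The function name is just for illustration.

```python
def cost_in_cents(input_tokens, output_tokens, input_price_per_million, output_price_per_million):
    """Return the cost in cents, given prices in US dollars per million tokens."""
    dollars = (
        input_tokens * input_price_per_million
        + output_tokens * output_price_per_million
    ) / 1_000_000
    return dollars * 100


# Claude Haiku 4.5: $1 per million input tokens, $5 per million output tokens.
pelican_cost = cost_in_cents(18, 1513, 1.00, 5.00)
print(f"{pelican_cost:.4f} cents")  # prints "0.7583 cents"
```

Almost all of the cost is output: the 1,513 output tokens account for 0.7565 of the 0.7583 cents.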

Previous system cards have reported results on an expanded version of our earlier agentic misalignment evaluation suite: three families of exotic scenarios meant to elicit the model to commit blackmail, attempt a murder, and frame someone for financial crimes. We choose not to report full results here because, similarly to Claude Sonnet 4.5, Claude Haiku 4.5 showed many clear examples of verbalized evaluation awareness on all three of the scenarios tested in this suite. Since the suite only consisted of many similar variants of three core scenarios, we expect that the model maintained high unverbalized awareness across the board, and we do not trust it to be representative of behavior in the real extreme situations the suite is meant to emulate.

simonw/claude-skills. One of the tips I picked up from Jesse Vincent's Claude Code Superpowers post (previously) was this:

Skills are what give your agents Superpowers.

The first time they really popped up on my radar was a few weeks ago when Anthropic rolled out improved Office document creation. When the feature rolled out, I went poking around a bit -- I asked Claude to tell me all about its new skills. And it was only too happy to dish.

In that transcript Jesse asks how the skill system works and Claude replies:

Looking at the skills system, here's how it works:

Current Skills (in /mnt/skills/public/):

- docx - Word document handling

- pdf - PDF processing and form filling

- pptx - PowerPoint presentations

- xlsx - Excel spreadsheets

This looks like it's part of Claude's brand new Code Interpreter feature! I wrote about that extensively last month, but I missed that there was a /mnt/skills/public/ folder full of fascinating implementation details.

So I fired up a fresh Claude instance (fun fact: Code Interpreter also works in the Claude iOS app now, which it didn't when they first launched) and prompted:

Create a zip file of everything in your /mnt/skills folder

This worked, and gave me a .zip to download. You can run the prompt yourself here, though you'll need to enable the new feature first.

I've pushed the contents of that zip to my new simonw/claude-skills GitHub repo.

So now you can see the prompts Anthropic wrote to enable the creation and manipulation of the following files in their Claude consumer applications:

In each case the prompts spell out detailed instructions for manipulating those file types using Python, using libraries that come pre-installed on Claude's containers.

Skills are more than just prompts though: the repository also includes dozens of pre-written Python scripts for performing common operations.

pdf/scripts/fill_fillable_fields.py for example is a custom CLI tool that uses pypdf to find and then fill in a bunch of PDF form fields, specified as JSON, then render out the resulting combined PDF.

This is a really sophisticated set of tools for document manipulation, and I love that Anthropic have made those visible - presumably deliberately - to users of Claude who know how to ask for them.

Superpowers: How I’m using coding agents in October 2025. A follow-up to Jesse Vincent's post about September, but this is a really significant piece in its own right.

Jesse is one of the most creative users of coding agents (Claude Code in particular) that I know. He's put a great amount of work into evolving an effective process for working with them, encouraging red/green TDD (watch the test fail first), planning steps, self-updating memory notes and even implementing a feelings journal ("I feel engaged and curious about this project" - Claude).

Claude Code just launched plugins, and Jesse is celebrating by wrapping up a whole host of his accumulated tricks as a new plugin called Superpowers. You can add it to your Claude Code like this:

/plugin marketplace add obra/superpowers-marketplace

/plugin install superpowers@superpowers-marketplace

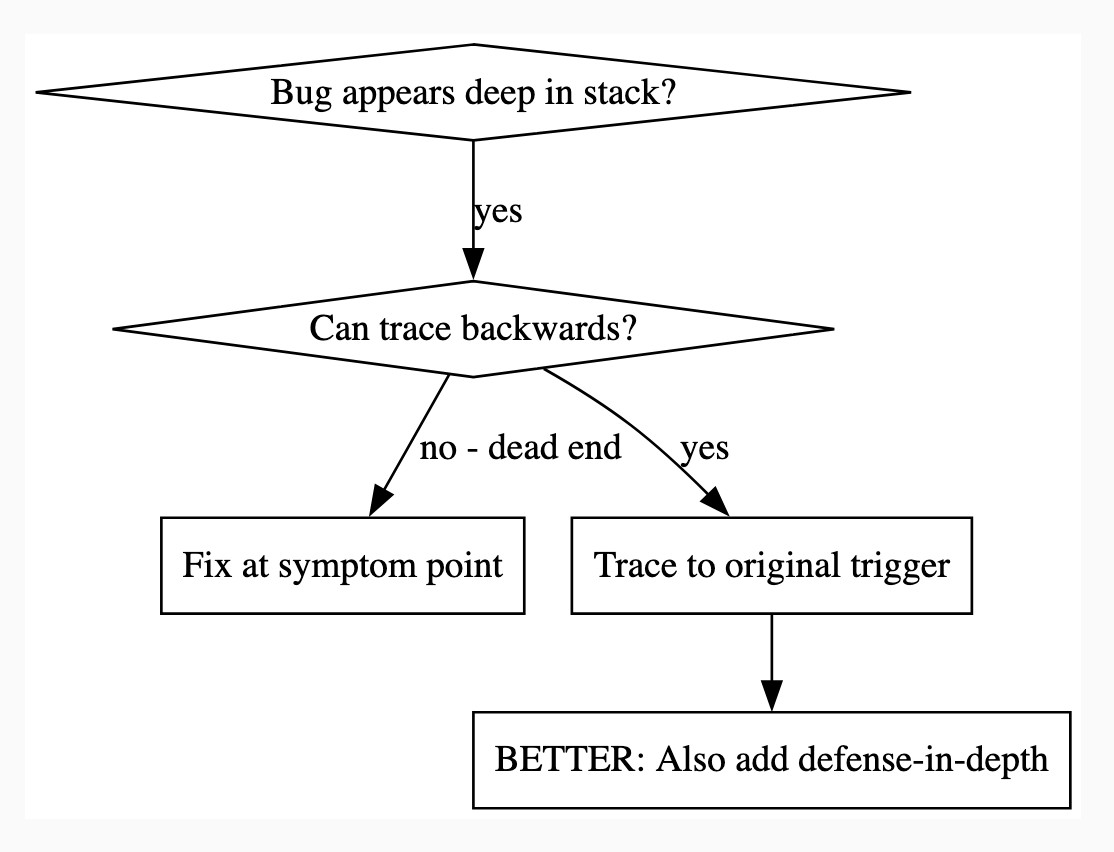

There's a lot in here! It's worth spending some time browsing the repository - here's just one fun example, in skills/debugging/root-cause-tracing/SKILL.md:

    ---
    name: Root Cause Tracing
    description: Systematically trace bugs backward through call stack to find original trigger
    when_to_use: Bug appears deep in call stack but you need to find where it originates
    version: 1.0.0
    languages: all
    ---

Overview

Bugs often manifest deep in the call stack (git init in wrong directory, file created in wrong location, database opened with wrong path). Your instinct is to fix where the error appears, but that's treating a symptom.

Core principle: Trace backward through the call chain until you find the original trigger, then fix at the source.

When to Use

    digraph when_to_use {
        "Bug appears deep in stack?" [shape=diamond];
        "Can trace backwards?" [shape=diamond];
        "Fix at symptom point" [shape=box];
        "Trace to original trigger" [shape=box];
        "BETTER: Also add defense-in-depth" [shape=box];

        "Bug appears deep in stack?" -> "Can trace backwards?" [label="yes"];
        "Can trace backwards?" -> "Trace to original trigger" [label="yes"];
        "Can trace backwards?" -> "Fix at symptom point" [label="no - dead end"];
        "Trace to original trigger" -> "BETTER: Also add defense-in-depth";
    }

[...]

This one is particularly fun because it then includes a Graphviz DOT graph illustrating the process - it turns out Claude can interpret those as workflow instructions just fine, and Jesse has been wildly experimenting with them.

I vibe-coded up a quick URL-based DOT visualizer, here's that one rendered:

There is so much to learn about putting these tools to work in the most effective way possible. Jesse is way ahead of the curve, so it's absolutely worth spending some time exploring what he's shared so far.

And if you're worried about filling up your context with a bunch of extra stuff, here's a reassuring note from Jesse:

The core of it is VERY token light. It pulls in one doc of fewer than 2k tokens. As it needs bits of the process, it runs a shell script to search for them. The long end to end chat for the planning and implementation process for that todo list app was 100k tokens.

It uses subagents to manage token-heavy stuff, including all the actual implementation.

(Jesse's post also tipped me off about Claude's /mnt/skills/public folder, see my notes here.)

Claude can write complete Datasette plugins now

This isn’t necessarily surprising, but it’s worth noting anyway. Claude Sonnet 4.5 is capable of building a full Datasette plugin now.

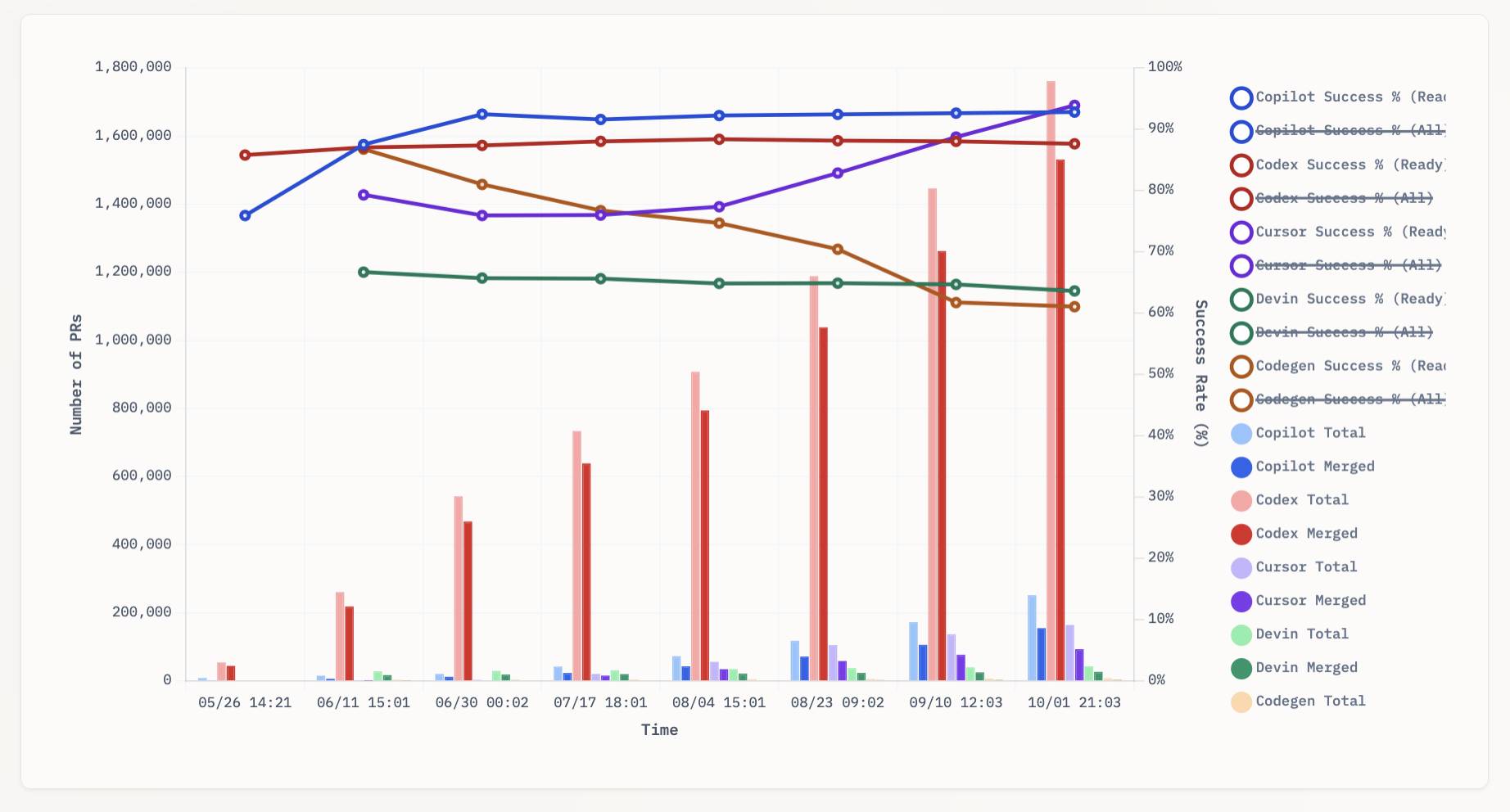

[... 1,296 words]

aavetis/PRarena. Albert Avetisian runs this repository on GitHub which uses the GitHub Search API to track the number of PRs that can be credited to a collection of different coding agents. The repo runs this collect_data.py script every three hours using GitHub Actions to collect the data, then updates the PR Arena site with a visual leaderboard.

The result is this neat chart showing adoption of different agents over time, along with their PR success rate:

I found this today while trying to pull off the exact same trick myself! I got as far as creating the following table before finding Albert's work and abandoning my own project.

| Tool | Search term | Total PRs | Merged PRs | % merged | Earliest |
|---|---|---|---|---|---|
| Claude Code | is:pr in:body "Generated with Claude Code" | 146,000 | 123,000 | 84.2% | Feb 21st |
| GitHub Copilot | is:pr author:copilot-swe-agent[bot] | 247,000 | 152,000 | 61.5% | March 7th |
| Codex Cloud | is:pr in:body "chatgpt.com" label:codex | 1,900,000 | 1,600,000 | 84.2% | April 23rd |
| Google Jules | is:pr author:google-labs-jules[bot] | 35,400 | 27,800 | 78.5% | May 22nd |

(Those "earliest" links are a little questionable, I tried to filter out false positives and find the oldest one that appeared to really be from the agent in question.)

It looks like OpenAI's Codex Cloud is massively ahead of the competition right now in terms of numbers of PRs both opened and merged on GitHub.
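For anyone who wants to reproduce numbers like these, here's a minimal sketch of counting search results with the GitHub Search API. It is not Albert's collect_data.py, just an illustration: it calls the search/issues endpoint and reads the total_count field from the response. Adding is:merged to a query gives the merged count. Unauthenticated search requests are heavily rate limited, so a real script would likely send a token.

```python
import json
import urllib.parse
import urllib.request

SEARCH_URL = "https://api.github.com/search/issues"


def build_search_url(query: str) -> str:
    """Build a Search API URL asking for one result, since only the count matters."""
    return SEARCH_URL + "?" + urllib.parse.urlencode({"q": query, "per_page": 1})


def count_prs(query: str) -> int:
    """Return total_count for a search query. Makes a network request."""
    request = urllib.request.Request(
        build_search_url(query),
        headers={"Accept": "application/vnd.github+json"},
    )
    with urllib.request.urlopen(request) as response:
        return json.load(response)["total_count"]


def percent_merged(total: int, merged: int) -> float:
    """Merged PRs as a percentage of all PRs, rounded to one decimal place."""
    return round(100 * merged / total, 1)
```

For example, count_prs('is:pr is:merged author:google-labs-jules[bot]') would fetch the merged count for Jules, and percent_merged(35_400, 27_800) gives the 78.5 shown in the table.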

Update: To clarify, these numbers are for the category of autonomous coding agents - those systems where you assign a cloud-based agent a task or issue and the output is a PR against your repository. They do not (and cannot) capture the popularity of many forms of AI tooling that don't result in an easily identifiable pull request.

Claude Code for example will be dramatically under-counted here because its version of an autonomous coding agent comes in the form of a somewhat obscure GitHub Actions workflow buried in the documentation.

Claude Sonnet 4.5 is probably the “best coding model in the world” (at least for now)

Anthropic released Claude Sonnet 4.5 today, with a very bold set of claims:

[... 1,205 words]

Anthropic: A postmortem of three recent issues. Anthropic had a very bad month in terms of model reliability:

Between August and early September, three infrastructure bugs intermittently degraded Claude's response quality. We've now resolved these issues and want to explain what happened. [...]

To state it plainly: We never reduce model quality due to demand, time of day, or server load. The problems our users reported were due to infrastructure bugs alone. [...]

We don't typically share this level of technical detail about our infrastructure, but the scope and complexity of these issues justified a more comprehensive explanation.

I'm really glad Anthropic are publishing this in so much detail. Their reputation for serving their models reliably has taken a notable hit.

I hadn't appreciated the additional complexity caused by their mixture of different serving platforms:

We deploy Claude across multiple hardware platforms, namely AWS Trainium, NVIDIA GPUs, and Google TPUs. [...] Each hardware platform has different characteristics and requires specific optimizations.

It sounds like the problems came down to three separate bugs which unfortunately came along very close to each other.

Anthropic also note that their privacy practices made investigating the issues particularly difficult:

The evaluations we ran simply didn't capture the degradation users were reporting, in part because Claude often recovers well from isolated mistakes. Our own privacy practices also created challenges in investigating reports. Our internal privacy and security controls limit how and when engineers can access user interactions with Claude, in particular when those interactions are not reported to us as feedback. This protects user privacy but prevents engineers from examining the problematic interactions needed to identify or reproduce bugs.

The code examples they provide to illustrate a TPU-specific bug show that they use Python and JAX as part of their serving layer.

Here's an interesting example of models incrementally improving over time: I am finding that today's leading models are competent at writing prompts for themselves and each other.

A year ago I was quite skeptical of the pattern where models are used to help build prompts. Prompt engineering was still a young enough discipline that I did not expect the models to have enough training data to be able to prompt themselves better than a moderately experienced human.

The Claude 4 and GPT-5 families both have training cut-off dates within the past year - recent enough that they've seen a decent volume of good prompting examples.

I expect they have also been deliberately trained for this. Anthropic make extensive use of sub-agent patterns in Claude Code, and published a fascinating article on that pattern (my notes on that).

I don't have anything solid to back this up - it's more of a hunch based on anecdotal evidence where various of my requests for a model to write a prompt have returned useful results over the last few months.

gpt-5 and gpt-5-mini rate limit updates. OpenAI have increased the rate limits for their two main GPT-5 models. These look significant:

gpt-5

Tier 1: 30K → 500K TPM (1.5M batch)

Tier 2: 450K → 1M (3M batch)

Tier 3: 800K → 2M

Tier 4: 2M → 4M

gpt-5-mini

Tier 1: 200K → 500K (5M batch)

GPT-5 rate limits here show tier 5 stays at 40M tokens per minute. The GPT-5 mini rate limits for tiers 2 through 5 are 2M, 4M, 10M and 180M TPM respectively.

As a reminder, those tiers are assigned based on how much money you have spent on the OpenAI API - from $5 for tier 1 up through $50, $100, $250 and then $1,000 for tier 5.

For comparison, Anthropic's current top tier is Tier 4 ($400 spent) which provides 2M maximum input tokens per minute and 400,000 maximum output tokens, though you can contact their sales team for higher limits than that.

Gemini's top tier is Tier 3 for $1,000 spent and currently gives you 8M TPM for Gemini 2.5 Pro and Flash and 30M TPM for the Flash-Lite and 2.0 Flash models.

So OpenAI's new rate limit increases for their top performing model pull them ahead of Anthropic but still leave them significantly behind Gemini.

GPT-5 mini remains the champion for smaller models with that enormous 180M TPM limit for its top tier.

Claude Memory: A Different Philosophy (via) Shlok Khemani has been doing excellent work reverse-engineering LLM systems and documenting his discoveries.

Last week he wrote about ChatGPT memory. This week it's Claude.

Claude's memory system has two fundamental characteristics. First, it starts every conversation with a blank slate, without any preloaded user profiles or conversation history. Memory only activates when you explicitly invoke it. Second, Claude recalls by only referring to your raw conversation history. There are no AI-generated summaries or compressed profiles—just real-time searches through your actual past chats.

Claude's memory is implemented as two new function tools that are made available for Claude to call. I confirmed this myself with the prompt "Show me a list of tools that you have available to you, duplicating their original names and descriptions" which gave me back these:

conversation_search: Search through past user conversations to find relevant context and information

recent_chats: Retrieve recent chat conversations with customizable sort order (chronological or reverse chronological), optional pagination using 'before' and 'after' datetime filters, and project filtering

The good news here is transparency - Claude's memory feature is implemented as visible tool calls, which means you can see exactly when and how it is accessing previous context.

This helps address my big complaint about ChatGPT memory (see I really don’t like ChatGPT’s new memory dossier back in May) - I like to understand as much as possible about what's going into my context so I can better anticipate how it is likely to affect the model.

The OpenAI system is very different: rather than letting the model decide when to access memory via tools, OpenAI instead automatically include details of previous conversations at the start of every conversation.

Shlok's notes on ChatGPT's memory did include one detail that I had previously missed that I find reassuring:

Recent Conversation Content is a history of your latest conversations with ChatGPT, each timestamped with topic and selected messages. [...] Interestingly, only the user's messages are surfaced, not the assistant's responses.

One of my big worries about memory was that it could harm my "clean slate" approach to chats: if I'm working on code and the model starts going down the wrong path (getting stuck in a bug loop for example) I'll start a fresh chat to wipe that rotten context away. I had worried that ChatGPT memory would bring that bad context along to the next chat, but omitting the LLM responses makes that much less of a risk than I had anticipated.

Update: Here's a slightly confusing twist: yesterday in Bringing memory to teams at work Anthropic revealed an additional memory feature, currently only available to Team and Enterprise accounts, with a feature checkbox labeled "Generate memory of chat history" that looks much more similar to the OpenAI implementation:

With memory, Claude focuses on learning your professional context and work patterns to maximize productivity. It remembers your team’s processes, client needs, project details, and priorities. [...]

Claude uses a memory summary to capture all its memories in one place for you to view and edit. In your settings, you can see exactly what Claude remembers from your conversations, and update the summary at any time by chatting with Claude.

I haven't experienced this feature myself yet as it isn't part of my Claude subscription. I'm glad to hear it's fully transparent and can be edited by the user, resolving another of my complaints about the ChatGPT implementation.

This version of Claude memory also takes Claude Projects into account:

If you use projects, Claude creates a separate memory for each project. This ensures that your product launch planning stays separate from client work, and confidential discussions remain separate from general operations.

I praised OpenAI for adding this a few weeks ago.

I Replaced Animal Crossing’s Dialogue with a Live LLM by Hacking GameCube Memory (via) Brilliant retro-gaming project by Josh Fonseca, who figured out how to run 2002 GameCube Animal Crossing in the Dolphin Emulator such that dialog with the characters was instead generated by an LLM.

The key trick was running Python code that scanned the GameCube memory every 10th of a second looking for instances of dialogue, then updated the memory in-place to inject new dialog.

The source code is in vuciv/animal-crossing-llm-mod on GitHub. I dumped it (via gitingest, ~40,000 tokens) into Claude Opus 4.1 and asked the following:

This interacts with Animal Crossing on the Game Cube. It uses an LLM to replace dialog in the game, but since an LLM takes a few seconds to run how does it spot when it should run a prompt and then pause the game while the prompt is running?

Claude pointed me to the watch_dialogue() function which implements the polling loop.

When it catches the dialogue screen opening it writes out this message instead:

loading_text = ".<Pause [0A]>.<Pause [0A]>.<Pause [0A]><Press A><Clear Text>"

Those <Pause [0A]> tokens cause the game to pause for a few moments before giving the user the option to <Press A> to continue. This gives time for the LLM prompt to execute and return new text which can then be written to the correct memory area for display.
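Here's a simplified sketch of that poll, stall and inject pattern. It is not the mod's actual code: a bytearray stands in for emulator memory, the address and buffer size are hypothetical, text is treated as plain ASCII, and generate_reply is whatever slow function produces the new dialog.

```python
import time

# Placeholder text from the post, shown while the LLM is still generating.
LOADING_TEXT = ".<Pause [0A]>.<Pause [0A]>.<Pause [0A]><Press A><Clear Text>"


def write_text(memory: bytearray, address: int, size: int, text: str) -> str:
    """Write text into a fixed-size, null-padded buffer and return what was stored."""
    encoded = text.encode("ascii", errors="replace")[:size]
    memory[address:address + size] = encoded.ljust(size, b"\x00")
    return encoded.decode("ascii")


def poll_once(memory, address, size, generate_reply, last_written=""):
    """Check the dialogue buffer once and replace any text we didn't write ourselves."""
    raw = bytes(memory[address:address + size]).rstrip(b"\x00")
    current = raw.decode("ascii", errors="replace")
    if not current or current == last_written:
        return last_written  # nothing new on screen
    write_text(memory, address, size, LOADING_TEXT)  # stall the game first
    reply = generate_reply(current)  # the slow LLM call happens here
    return write_text(memory, address, size, reply)


def watch(memory, address, size, generate_reply, interval=0.1, max_polls=None):
    """Poll every `interval` seconds (a 10th of a second by default)."""
    last_written = ""
    polls = 0
    while max_polls is None or polls < max_polls:
        last_written = poll_once(memory, address, size, generate_reply, last_written)
        polls += 1
        time.sleep(interval)
    return last_written
```

Tracking last_written keeps the loop from treating its own injected reply as fresh dialogue on the next poll.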

Hacker News commenters spotted some fun prompts in the source code, including this prompt to set the scene:

You are a resident of a town run by Tom Nook. You are beginning to realize your mortgage is exploitative and the economy is unfair. Discuss this with the player and other villagers when appropriate.

And this sequence of prompts that slowly raise the agitation of the villagers about their economic situation over time.

The system actually uses two separate prompts - one to generate responses from characters and another which takes those responses and decorates them with Animal Crossing specific control codes to add pauses, character animations and other neat effects.

My review of Claude’s new Code Interpreter, released under a very confusing name

Today on the Anthropic blog: Claude can now create and edit files:

[... 2,771 words]

I ran Claude in a loop for three months, and it created a genz programming language called cursed (via) Geoffrey Huntley vibe-coded an entirely new programming language using Claude:

The programming language is called "cursed". It's cursed in its lexical structure, it's cursed in how it was built, it's cursed that this is possible, it's cursed in how cheap this was, and it's cursed through how many times I've sworn at Claude.

Geoffrey's initial prompt:

Hey, can you make me a programming language like Golang but all the lexical keywords are swapped so they're Gen Z slang?

Then he pushed it to keep on iterating over a three month period.

Here's Hello World:

    vibe main
    yeet "vibez"

    slay main() {
        vibez.spill("Hello, World!")
    }

And here's binary search, part of 17+ LeetCode problems that run as part of the test suite:

    slay binary_search(nums normie[], target normie) normie {
        sus left normie = 0
        sus right normie = len(nums) - 1
        bestie (left <= right) {
            sus mid normie = left + (right - left) / 2
            ready (nums[mid] == target) {
                damn mid
            }
            ready (nums[mid] < target) {
                left = mid + 1
            } otherwise {
                right = mid - 1
            }
        }
        damn -1
    }
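For comparison, here's the same algorithm in Python. The keyword mapping is inferred from this snippet alone rather than from the language's documentation: slay reads as a function definition, sus as a variable declaration, normie as an integer type, bestie as a loop, ready and otherwise as if and else, and damn as return. It also assumes that / on integers is integer division, as it is in Go.

```python
def binary_search(nums: list[int], target: int) -> int:
    """Return the index of target in the sorted list nums, or -1 if absent."""
    left = 0
    right = len(nums) - 1
    while left <= right:                   # bestie
        mid = left + (right - left) // 2   # assumed integer division
        if nums[mid] == target:            # ready
            return mid                     # damn
        if nums[mid] < target:
            left = mid + 1
        else:                              # otherwise
            right = mid - 1
    return -1
```

For example, binary_search([1, 3, 5, 7, 9], 7) returns 3, and searching for a missing value returns -1.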

This is a substantial project. The repository currently has 1,198 commits. It has both an interpreter mode and a compiler mode, and can compile programs to native binaries (via LLVM) for macOS, Linux and Windows.

It looks like it was mostly built using Claude running via Sourcegraph's Amp, which produces detailed commit messages. The commits include links to archived Amp sessions but sadly those don't appear to be publicly visible.

The first version was written in C, then Geoffrey had Claude port it to Rust and then Zig. His cost estimate:

Technically it costs about 5k usd to build your own compiler now because cursed was implemented first in c, then rust, now zig. So yeah, it’s not one compiler it’s three editions of it. For a total of $14k USD.