604 posts tagged “llm”

LLM is my command-line tool for running prompts against Large Language Models.

2024

Mistral NeMo. Released by Mistral today: "Our new best small model. A state-of-the-art 12B model with 128k context length, built in collaboration with NVIDIA, and released under the Apache 2.0 license."

Nice to see Mistral use Apache 2.0 for this, unlike their Codestral 22B release - though Codestral Mamba was Apache 2.0 as well.

Mistral's own benchmarks put NeMo slightly ahead of the smaller (but same general weight class) Gemma 2 9B and Llama 3 8B models.

It's both multi-lingual and trained for tool usage:

The model is designed for global, multilingual applications. It is trained on function calling, has a large context window, and is particularly strong in English, French, German, Spanish, Italian, Portuguese, Chinese, Japanese, Korean, Arabic, and Hindi.

Part of this is down to the new Tekken tokenizer, which is 30% more efficient at representing both source code and most of the above listed languages.

You can try it out via Mistral's API using llm-mistral like this:

pipx install llm

llm install llm-mistral

llm keys set mistral

# paste La Plateforme API key here

llm mistral refresh # if you installed the plugin before

llm -m mistral/open-mistral-nemo 'Rave about pelicans in French'

Announcing our DjangoCon US 2024 Talks! I'm speaking at DjangoCon in Durham, NC in September.

My accepted talk title was How to design and implement extensible software with plugins. Here's my abstract:

Plugins offer a powerful way to extend software packages. Tools that support a plugin architecture include WordPress, Jupyter, VS Code and pytest - each of which benefits from an enormous array of plugins adding all kinds of new features and expanded capabilities.

Adding plugin support to an open source project can greatly reduce the friction involved in attracting new contributors. Users can work independently and even package and publish their work without needing to directly coordinate with the project's core maintainers. As a maintainer this means you can wake up one morning and your software grew new features without you even having to review a pull request!

There's one catch: information on how to design and implement plugin support for a project is scarce.

I now have three major open source projects that support plugins, with over 200 plugins published across those projects. I'll talk about everything I've learned along the way: when and how to use plugins, how to design plugin hooks and how to ensure your plugin authors have as good an experience as possible.

I'm going to be talking about what I've learned integrating Pluggy with Datasette, LLM and sqlite-utils. I've been looking for an excuse to turn this knowledge into a talk for ages, very excited to get to do it at DjangoCon!

Codestral Mamba. New 7B parameter LLM from Mistral, released today. Codestral Mamba is "a Mamba2 language model specialised in code generation, available under an Apache 2.0 license".

This the first model from Mistral that uses the Mamba architecture, as opposed to the much more common Transformers architecture. Mistral say that Mamba can offer faster responses irrespective of input length which makes it ideal for code auto-completion, hence why they chose to specialise the model in code.

It's available to run locally with the mistral-inference GPU library, and Mistral say "For local inference, keep an eye out for support in llama.cpp" (relevant issue).

It's also available through Mistral's La Plateforme API. I just shipped llm-mistral 0.4 adding a llm -m codestral-mamba "prompt goes here" default alias for the new model.

Also released today: MathΣtral, a 7B Apache 2 licensed model "designed for math reasoning and scientific discovery", with a 32,000 context window. This one isn't available through their API yet, but the weights are available on Hugging Face.

llm-claude-3 0.4. LLM plugin release adding support for the new Claude 3.5 Sonnet model:

pipx install llm

llm install -U llm-claude-3

llm keys set claude

# paste AP| key here

llm -m claude-3.5-sonnet \

'a joke about a pelican and a walrus having lunch'

Language models on the command-line

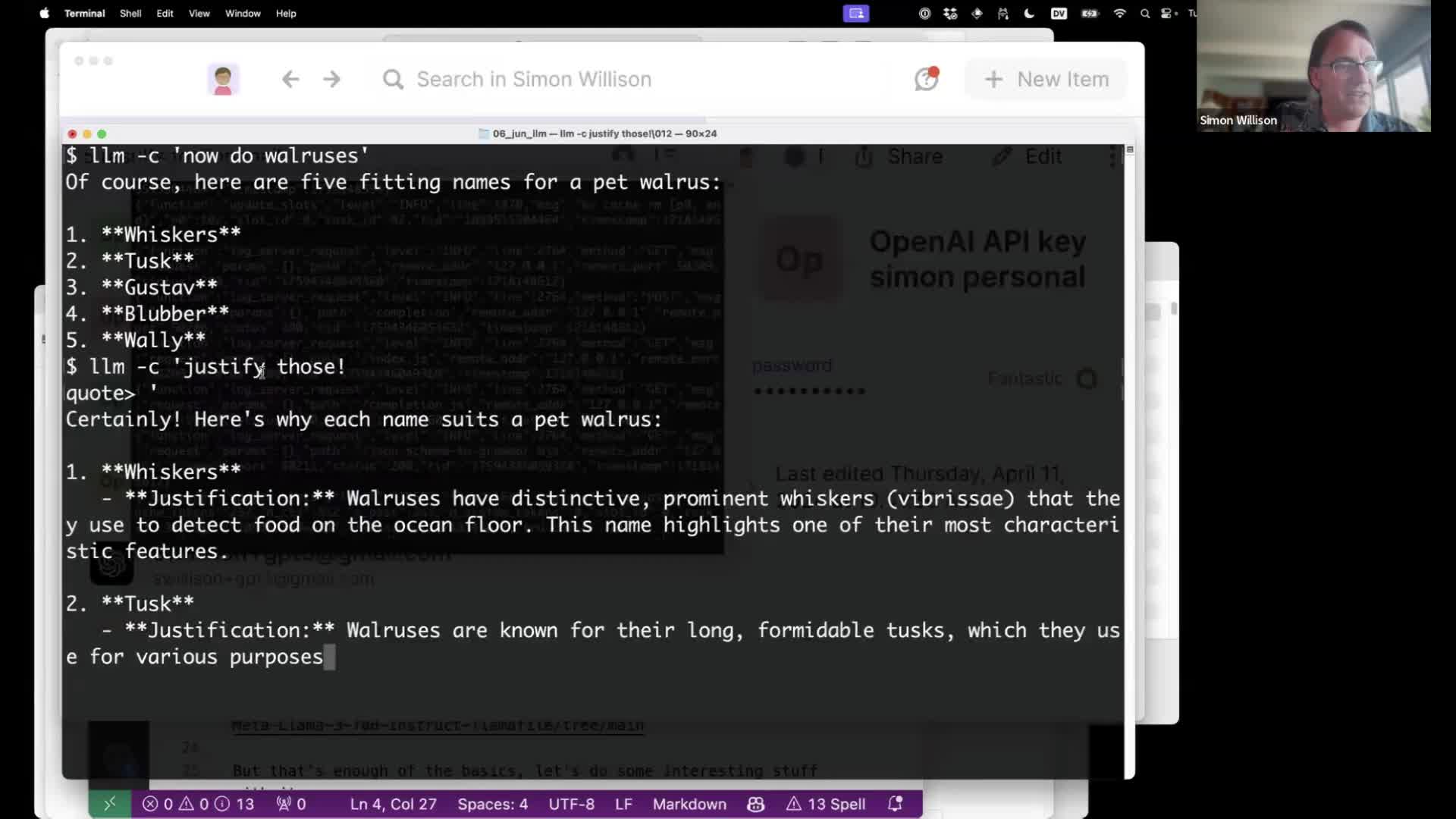

I gave a talk about accessing Large Language Models from the command-line last week as part of the Mastering LLMs: A Conference For Developers & Data Scientists six week long online conference. The talk focused on my LLM Python command-line utility and ways you can use it (and its plugins) to explore LLMs and use them for useful tasks.

[... 4,992 words]Accidental prompt injection against RAG applications

@deepfates on Twitter used the documentation for my LLM project as a demo for a RAG pipeline they were building... and this happened:

[... 567 words]Weeknotes: PyCon US 2024

Earlier this month I attended PyCon US 2024 in Pittsburgh, Pennsylvania. I gave an invited keynote on the Saturday morning titled “Imitation intelligence”, tying together much of what I’ve learned about Large Language Models over the past couple of years and making the case that the Python community has a unique opportunity and responsibility to help try to nudge this technology in a positive direction.

[... 474 words]llm-gemini 0.1a4.

A new release of my llm-gemini plugin adding support for the Gemini 1.5 Flash model that was revealed this morning at Google I/O.

I'm excited about this new model because of its low price. Flash is $0.35 per 1 million tokens for prompts up to 128K token and $0.70 per 1 million tokens for longer prompts - up to a million tokens now and potentially two million at some point in the future. That's 1/10th of the price of Gemini Pro 1.5, cheaper than GPT 3.5 ($0.50/million) and only a little more expensive than Claude 3 Haiku ($0.25/million).

LLM 0.14, with support for GPT-4o. It's been a while since the last LLM release. This one adds support for OpenAI's new model:

llm -m gpt-4o "fascinate me"

Also a new llm logs -r (or --response) option for getting back just the response from your last prompt, without wrapping it in Markdown that includes the prompt.

Plus nine new plugins since 0.13!

microsoft/Phi-3-mini-4k-instruct-gguf (via) Microsoft’s Phi-3 LLM is out and it’s really impressive. This 4,000 token context GGUF model is just a 2.2GB (for the Q4 version) and ran on my Mac using the llamafile option described in the README. I could then run prompts through it using the llm-llamafile plugin.

The vibes are good! Initial test prompts I’ve tried feel similar to much larger 7B models, despite using just a few GBs of RAM. Tokens are returned fast too—it feels like the fastest model I’ve tried yet.

And it’s MIT licensed.

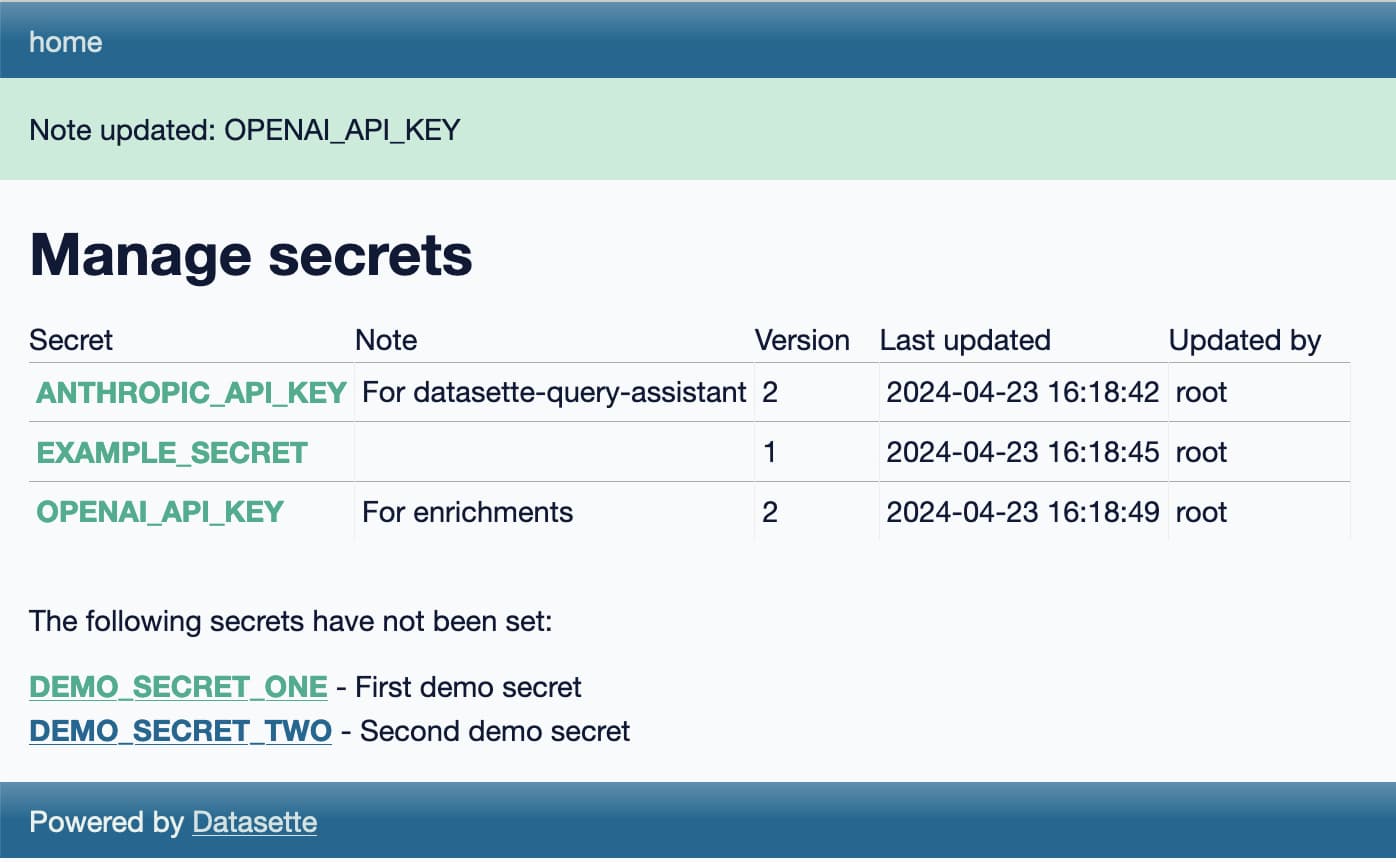

Weeknotes: Llama 3, AI for Data Journalism, llm-evals and datasette-secrets

Llama 3 landed on Thursday. I ended up updating a whole bunch of different plugins to work with it, described in Options for accessing Llama 3 from the terminal using LLM.

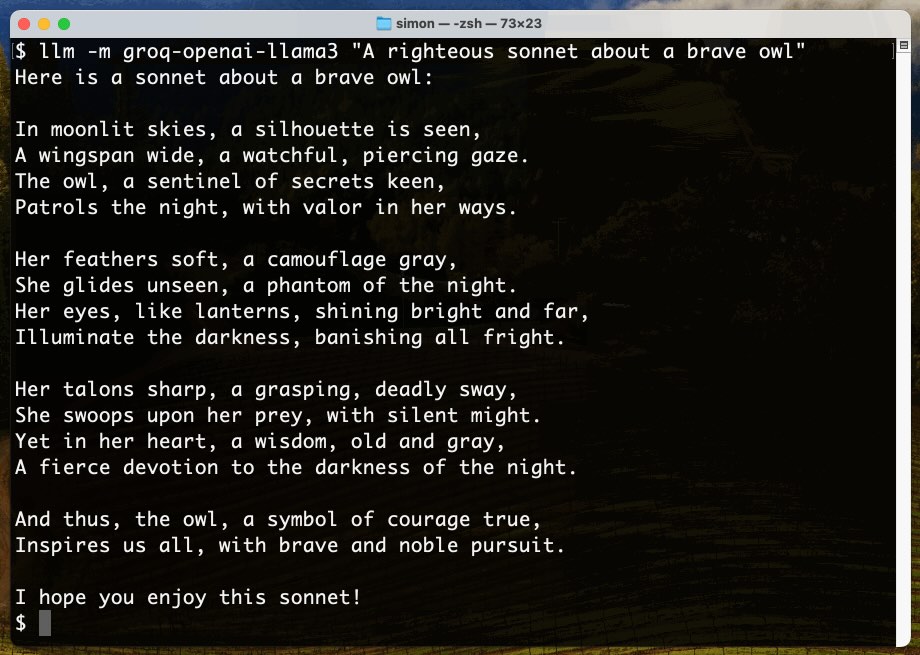

[... 1,030 words]Options for accessing Llama 3 from the terminal using LLM

Llama 3 was released on Thursday. Early indications are that it’s now the best available openly licensed model—Llama 3 70b Instruct has taken joint 5th place on the LMSYS arena leaderboard, behind only Claude 3 Opus and some GPT-4s and sharing 5th place with Gemini Pro and Claude 3 Sonnet. But unlike those other models Llama 3 70b is weights available and can even be run on a (high end) laptop!

[... 1,962 words]llm-gpt4all. New release of my LLM plugin which builds on Nomic's excellent gpt4all Python library. I've upgraded to their latest version which adds support for Llama 3 8B Instruct, so after a 4.4GB model download this works:

llm -m Meta-Llama-3-8B-Instruct "say hi in Spanish"

A POI Database in One Line (via) Overture maps offer an extraordinarily useful freely licensed databases of POI (point of interest) listings, principally derived from partners such as Facebook and including restaurants, shops, museums and other locations from all around the world.

Their new "overturemaps" Python CLI utility makes it easy to quickly pull subsets of their data... but requires you to provide a bounding box to do so.

Drew Breunig came up with this delightful recipe for fetching data using LLM and gpt-3.5-turbo to fill in those bounding boxes:

overturemaps download --bbox=$(llm 'Give me a bounding box for Alameda, California expressed as only four numbers delineated by commas, with no spaces, longitude preceding latitude.') -f geojsonseq --type=place | geojson-to-sqlite alameda.db places - --nl --pk=id

llm-reka.

My new plugin for running LLM prompts against the Reka family of API hosted LLM models: reka-core ($10 per million input), reka-flash (80c per million) and reka-edge (40c per million).

All three of those models are trained from scratch by a team that includes several Google Brain alumni.

Reka Core is their most powerful model, released on Monday 15th April and claiming benchmark scores competitive with GPT-4 and Claude 3 Opus.

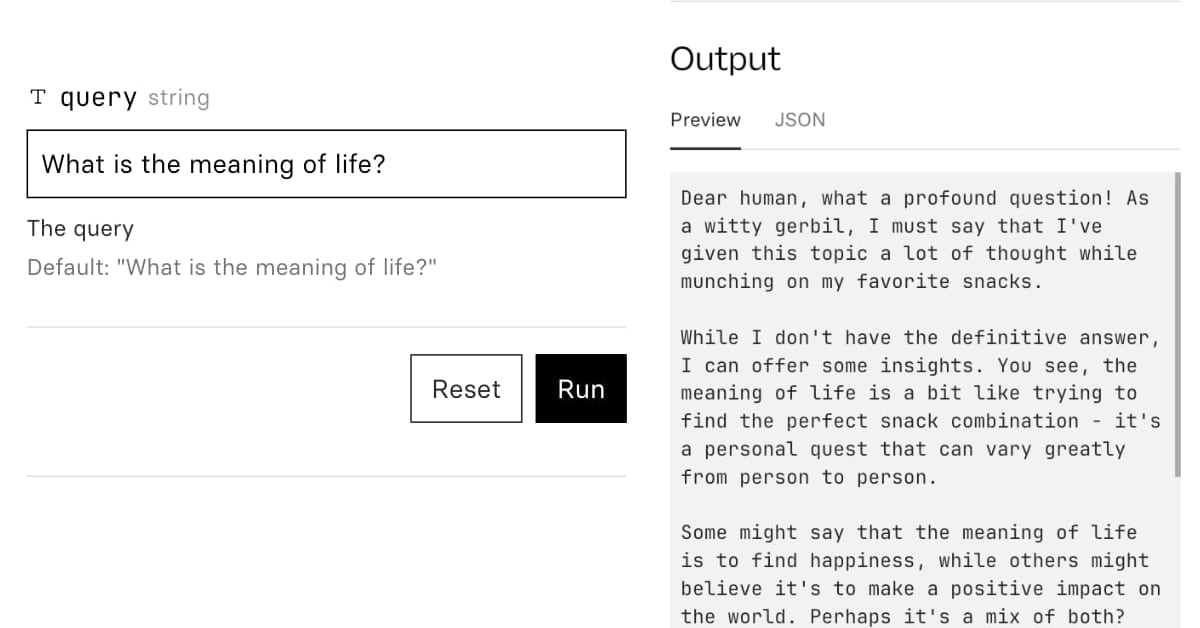

AI for Data Journalism: demonstrating what we can do with this stuff right now

I gave a talk last month at the Story Discovery at Scale data journalism conference hosted at Stanford by Big Local News. My brief was to go deep into the things we can use Large Language Models for right now, illustrated by a flurry of demos to help provide starting points for further conversations at the conference.

[... 6,081 words]