April 2026

109 posts: 13 entries, 25 links, 16 quotes, 8 notes, 46 beats, 1 chapter

April 23, 2026

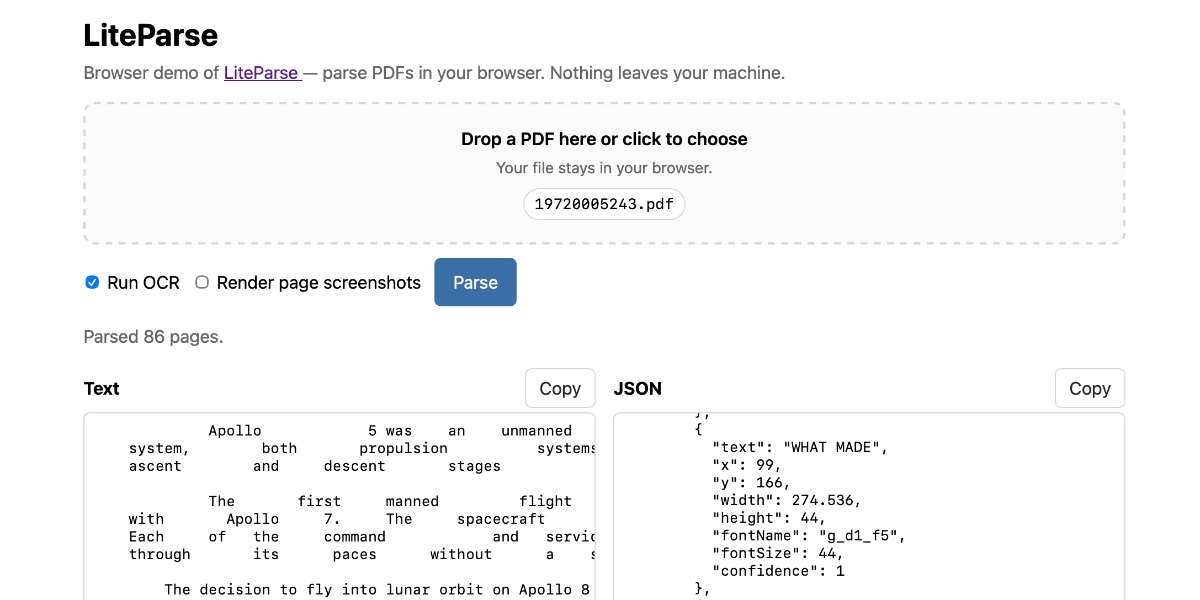

Extract PDF text in your browser with LiteParse for the web

LlamaIndex have a most excellent open source project called LiteParse, which provides a Node.js CLI tool for extracting text from PDFs. I got a version of LiteParse working entirely in the browser, using most of the same libraries that LiteParse uses to run in Node.js.

[... 2,089 words]

April 24, 2026

Serving the For You feed. One of Bluesky's most interesting features is that anyone can run their own custom "feed" implementation and make it available to other users - effectively enabling custom algorithms that can use any mechanism they like to recommend posts.

spacecowboy runs the For You Feed, used by around 72,000 people. This guest post on the AT Protocol blog explains how it works.

The architecture is fascinating. The feed is served by a single Go process using SQLite on a "gaming" PC in spacecowboy's living room - 16 cores, 96GB of RAM and 4TB of attached NVMe storage.

Recommendations are based on likes: what else are the people who like the same things as you liking on the platform?
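Here's a rough Python sketch of that "people who like what you like" idea. It is not spacecowboy's implementation (which is written in Go): the `likes` mapping, the user and post names, and the choice to score by overlap count are all assumptions made for illustration.

```python
from collections import Counter


def recommend(likes: dict[str, set[str]], user: str, limit: int = 10) -> list[str]:
    """Rank posts the user has not liked by how much their likers overlap with the user."""
    mine = likes.get(user, set())
    scores: Counter[str] = Counter()
    for other, theirs in likes.items():
        if other == user:
            continue
        overlap = len(mine & theirs)  # shared likes = how similar this person is
        if overlap == 0:
            continue
        for post in theirs - mine:  # only suggest posts the user has not liked yet
            scores[post] += overlap
    return [post for post, _ in scores.most_common(limit)]


# Hypothetical data: four users and the posts they liked
likes = {
    "you": {"p1", "p2"},
    "alice": {"p1", "p2", "p3"},
    "bob": {"p2", "p4"},
    "carol": {"p9"},
}
recommendations = recommend(likes, "you")
print(recommendations)
```

Posts liked by people with more likes in common rank higher, and posts from people with nothing in common are never suggested.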

That Go server consumes the Bluesky firehose and stores the relevant details in SQLite, keeping the last 90 days of relevant data, which currently uses around 419GB of SQLite storage.
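A 90-day window like that could be kept with a periodic delete. This is a minimal sketch using Python's sqlite3 module, assuming a hypothetical `likes` table with a `created_at` Unix timestamp column; the real schema and pruning strategy are not described here.

```python
import sqlite3
import time

RETENTION_SECONDS = 90 * 24 * 60 * 60  # the 90-day window


def prune_old_likes(conn: sqlite3.Connection, now: float) -> int:
    """Delete likes older than the retention window and return how many rows went."""
    cutoff = now - RETENTION_SECONDS
    with conn:  # commits on success, rolls back on error
        cur = conn.execute("DELETE FROM likes WHERE created_at < ?", (cutoff,))
    return cur.rowcount


# Hypothetical schema and rows, purely for the demo
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE likes (user TEXT, post TEXT, created_at REAL)")
now = time.time()
conn.executemany(
    "INSERT INTO likes VALUES (?, ?, ?)",
    [("a", "p1", now - 100 * 86400), ("b", "p2", now - 10 * 86400)],
)
conn.commit()

removed = prune_old_likes(conn, now)
remaining = conn.execute("SELECT COUNT(*) FROM likes").fetchone()[0]
print(removed, remaining)
```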

Public internet traffic is handled by a $7/month VPS on OVH, which talks to the living room server via Tailscale.

Total cost is now $30/month: $20 in electricity, $7 in VPS and $3 for the two domain names. spacecowboy estimates that the existing system could handle all ~1 million daily active Bluesky users if they were to switch to the cheapest algorithm they have found to work.

An update on recent Claude Code quality reports (via) It turns out the high volume of complaints that Claude Code was providing worse quality results over the past two months was grounded in real problems.

The models themselves were not to blame, but three separate issues in the Claude Code harness caused complex but material problems which directly affected users.

Anthropic's postmortem describes these in detail. This one in particular stood out to me:

On March 26, we shipped a change to clear Claude's older thinking from sessions that had been idle for over an hour, to reduce latency when users resumed those sessions. A bug caused this to keep happening every turn for the rest of the session instead of just once, which made Claude seem forgetful and repetitive.

I frequently have Claude Code sessions which I leave for an hour (or often a day or longer) before returning to them. Right now I have 11 of those (according to ps aux | grep 'claude ') and that's after closing down dozens more the other day.

I estimate I spend more time prompting in these "stale" sessions than sessions that I've recently started!

If you're building agentic systems it's worth reading this article in detail - the kinds of bugs that affect harnesses are deeply complicated, even if you put aside the inherent non-deterministic nature of the models themselves.

russellromney/honker (via) "Postgres NOTIFY/LISTEN semantics" for SQLite, implemented as a Rust SQLite extension and various language bindings to help make use of it.

The design of this looks very solid. It lets you write Python code for queues that looks like this:

import honker

db = honker.open("app.db")
emails = db.queue("emails")
emails.enqueue({"to": "alice@example.com"})

# Consume (in a worker process)
async for job in emails.claim("worker-1"):
    send(job.payload)
    job.ack()

And Kafka-style durable streams like this:

stream = db.stream("user-events")

with db.transaction() as tx:
    tx.execute("UPDATE users SET name=? WHERE id=?", [name, uid])
    stream.publish({"user_id": uid, "change": "name"}, tx=tx)

async for event in stream.subscribe(consumer="dashboard"):
    await push_to_browser(event)

It also adds 20+ custom SQL functions including these two:

SELECT notify('orders', '{"id":42}');

SELECT honker_stream_read_since('orders', 0, 1000);

The extension requires WAL mode, and workers can poll the .db-wal file with a stat call every 1ms to get as close to real-time as possible without the expense of running a full SQL query.
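To illustrate the stat-polling idea, here's a small Python sketch. It is not honker's code (that lives in a Rust extension); the function names, the use of file size plus mtime as a change signature, and the timeout are all my own assumptions.

```python
import os
import sqlite3
import tempfile
import time


def wal_signature(db_path: str):
    """Return (size, mtime_ns) for the -wal file, or None if it does not exist."""
    try:
        st = os.stat(db_path + "-wal")
    except FileNotFoundError:
        return None
    return (st.st_size, st.st_mtime_ns)


def wait_for_change(db_path: str, last, interval: float = 0.001, timeout: float = 1.0):
    """Poll with stat() until the WAL signature differs from `last`, or give up."""
    deadline = time.monotonic() + timeout
    while time.monotonic() < deadline:
        current = wal_signature(db_path)
        if current != last:
            return current
        time.sleep(interval)  # 1ms by default, no SQL query involved
    return None


with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "app.db")
    conn = sqlite3.connect(path)
    conn.execute("PRAGMA journal_mode=WAL")
    conn.execute("CREATE TABLE events (body TEXT)")
    conn.commit()

    before = wal_signature(path)
    conn.execute("INSERT INTO events VALUES ('hello')")
    conn.commit()  # appends new frames to the WAL file
    after = wait_for_change(path, before)
    conn.close()

print(before, after)
```

A worker would only run its (more expensive) SQL query once the signature changes.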

honker implements the transactional outbox pattern, which ensures items are only queued if a transaction successfully commits. My favorite explanation of that pattern remains Transactionally Staged Job Drains in Postgres by Brandur Leach. It's great to see a new implementation of that pattern for SQLite.
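The core of the pattern can be shown with nothing but Python's sqlite3 module. This sketch does not use honker's API: the `users` and `outbox` tables and the `rename_user` helper are hypothetical, and the simulated failure is only there to show the rollback.

```python
import json
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
conn.execute("CREATE TABLE outbox (id INTEGER PRIMARY KEY, payload TEXT)")
conn.execute("INSERT INTO users (id, name) VALUES (1, 'old')")
conn.commit()


def rename_user(conn, uid, name, fail=False):
    """Update the row and stage a job in the same transaction."""
    with conn:  # commit on success, roll back if anything raises
        conn.execute("UPDATE users SET name = ? WHERE id = ?", (name, uid))
        conn.execute(
            "INSERT INTO outbox (payload) VALUES (?)",
            (json.dumps({"user_id": uid, "change": "name"}),),
        )
        if fail:
            raise RuntimeError("simulated failure before commit")


def outbox_count(conn):
    return conn.execute("SELECT COUNT(*) FROM outbox").fetchone()[0]


try:
    rename_user(conn, 1, "new", fail=True)
except RuntimeError:
    pass
count_after_failure = outbox_count(conn)  # the staged job was rolled back too

rename_user(conn, 1, "new")
count_after_success = outbox_count(conn)  # the job exists only once the update committed
print(count_after_failure, count_after_success)
```

Because the job row and the data change share one transaction, a worker draining the outbox can never see a job for a change that did not happen.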

This week's edition of my email newsletter (aka content from this blog delivered to your inbox) features 4 pelicans riding bicycles, 1 possum on an e-scooter, up to 5 raccoons with ham radios hiding in crowds, 5 blog posts, 8 links, 3 quotes and a new chapter of my Agentic Engineering Patterns guide.

LLM reports prompt durations in milliseconds and I got fed up of having to think about how to convert those to seconds and minutes.
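The conversion itself is only a few lines. Here's a minimal Python sketch of one way to do it; the `format_duration` name and the output style are my own choices for illustration.

```python
def format_duration(ms: int) -> str:
    """Render a millisecond count as e.g. '850ms', '12.3s' or '8m 45s'."""
    if ms < 1000:
        return f"{ms}ms"
    seconds = ms / 1000
    if round(seconds, 1) < 60:
        return f"{seconds:.1f}s"
    # Round to whole seconds first so 59.96s becomes '1m 0s', not '0m 60s'
    minutes, rem = divmod(round(seconds), 60)
    return f"{minutes}m {rem}s"


for value in (850, 12345, 524790):
    print(value, "->", format_duration(value))
```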

DeepSeek V4—almost on the frontier, a fraction of the price

Chinese AI lab DeepSeek’s last model release was V3.2 (and V3.2 Speciale) last December. They just dropped the first of their hotly anticipated V4 series in the shape of two preview models, DeepSeek-V4-Pro and DeepSeek-V4-Flash.

[... 703 words]

The people do not yearn for automation (via) This written and video essay by Nilay Patel explores why AI is unpopular with the general public even as usage numbers for ChatGPT continue to skyrocket.

It’s a superb piece of commentary, and something I expect I’ll be thinking about for a long time to come.

Nilay’s core idea is that people afflicted with “software brain” - who see the world as something to be automated as much as possible, and attempt to model everything in terms of information flows and data - are becoming detached from everyone else.

[…] software brain has ruled the business world for a long time. AI has just made it easier than ever for more people to make more software than ever before — for every kind of business to automate big chunks of itself with software. It’s everywhere: the absolute cutting edge of advertising and marketing is automation with AI. It’s not being a creative.

But: not everything is a business. Not everything is a loop! The entire human experience cannot be captured in a database. That’s the limit of software brain. That’s why people hate AI. It flattens them.

Regular people don’t see the opportunity to write code as an opportunity at all. The people do not yearn for automation. I’m a full-on smart home sicko; the lights and shades and climate controls of my house are automated in dozens of ways. But huge companies like Apple, Google and Amazon have struggled for over a decade now to make regular people care about smart home automation at all. And they just don’t.

- New GPT-5.5 OpenAI model: llm -m gpt-5.5. #1418

- New option to set the text verbosity level for GPT-5+ OpenAI models: -o verbosity low. Values are low, medium, high.

- New option for setting the image detail level used for image attachments to OpenAI models: -o image_detail low - values are low, high and auto, and GPT-5.4 and 5.5 also accept original.

- Models listed in extra-openai-models.yaml are now also registered as asynchronous. #1395

April 25, 2026

GPT-5.5 prompting guide. Now that GPT-5.5 is available in the API, OpenAI have released a wealth of useful tips on how best to prompt the new model.

Here's a neat trick they recommend for applications that might spend considerable time thinking before returning a user-visible response:

Before any tool calls for a multi-step task, send a short user-visible update that acknowledges the request and states the first step. Keep it to one or two sentences.

I've already noticed their Codex app doing this, and it does make longer running tasks feel less like the model has crashed.

OpenAI suggest running the following in Codex to upgrade your existing code using advice embedded in their openai-docs skill:

$openai-docs migrate this project to gpt-5.5

The upgrade guide the coding agent will follow is this one, which even includes light instructions on how to rewrite prompts to better fit the model.

Also relevant is the Using GPT-5.5 guide, which opens with this warning:

To get the most out of GPT-5.5, treat it as a new model family to tune for, not a drop-in replacement for gpt-5.2 or gpt-5.4. Begin migration with a fresh baseline instead of carrying over every instruction from an older prompt stack. Start with the smallest prompt that preserves the product contract, then tune reasoning effort, verbosity, tool descriptions, and output format against representative examples.

Interesting to see OpenAI recommend starting from scratch rather than trusting that existing prompts optimized for previous models will continue to work effectively with GPT-5.5.

Since GPT-5.4, we’ve unified Codex and the main model into a single system, so there’s no separate coding line anymore.

GPT-5.5 takes this further, with strong gains in agentic coding, computer use, and any task on a computer.

— Romain Huet, confirming OpenAI won't release a GPT-5.5-Codex model

@scottjla on Twitter in reply to my pelican riding a bicycle benchmark:

I feel like we need to stack these tests now

I checked to confirm that the model (ChatGPT Images 2.0) added the "WHY ARE YOU LIKE THIS" sign of its own accord and it did - the prompt Scott used was:

Create an image of a horse riding an astronaut, where the astronaut is riding a pelican that is riding a bicycle. It looks very chaotic but they all just manage to balance on top of each other

April 27, 2026

Speech translation in Google Meet is now rolling out to mobile devices. I just encountered this feature via a "try this out now" prompt in a Google Meet meeting. It kind-of worked!

This is Google's implementation of the ultimate sci-fi translation app, where two people can talk to each other in two separate languages and Meet translates from one to the other and - with a short delay - repeats the text in your preferred language, with a rough imitation of the original speaker's voice.

It can only handle English, Spanish, French, German, Portuguese, and Italian at the moment. It's also still very alpha - I ran it successfully between two laptops running web browsers, but then when I tried between an iPhone and an iPad it didn't seem to work.

Tracking the history of the now-deceased OpenAI Microsoft AGI clause

For many years, Microsoft and OpenAI’s relationship has included a weird clause saying that, should AGI be achieved, Microsoft’s commercial IP rights to OpenAI’s technology would be null and void. That clause appeared to end today. I decided to try and track its expression over time on openai.com.

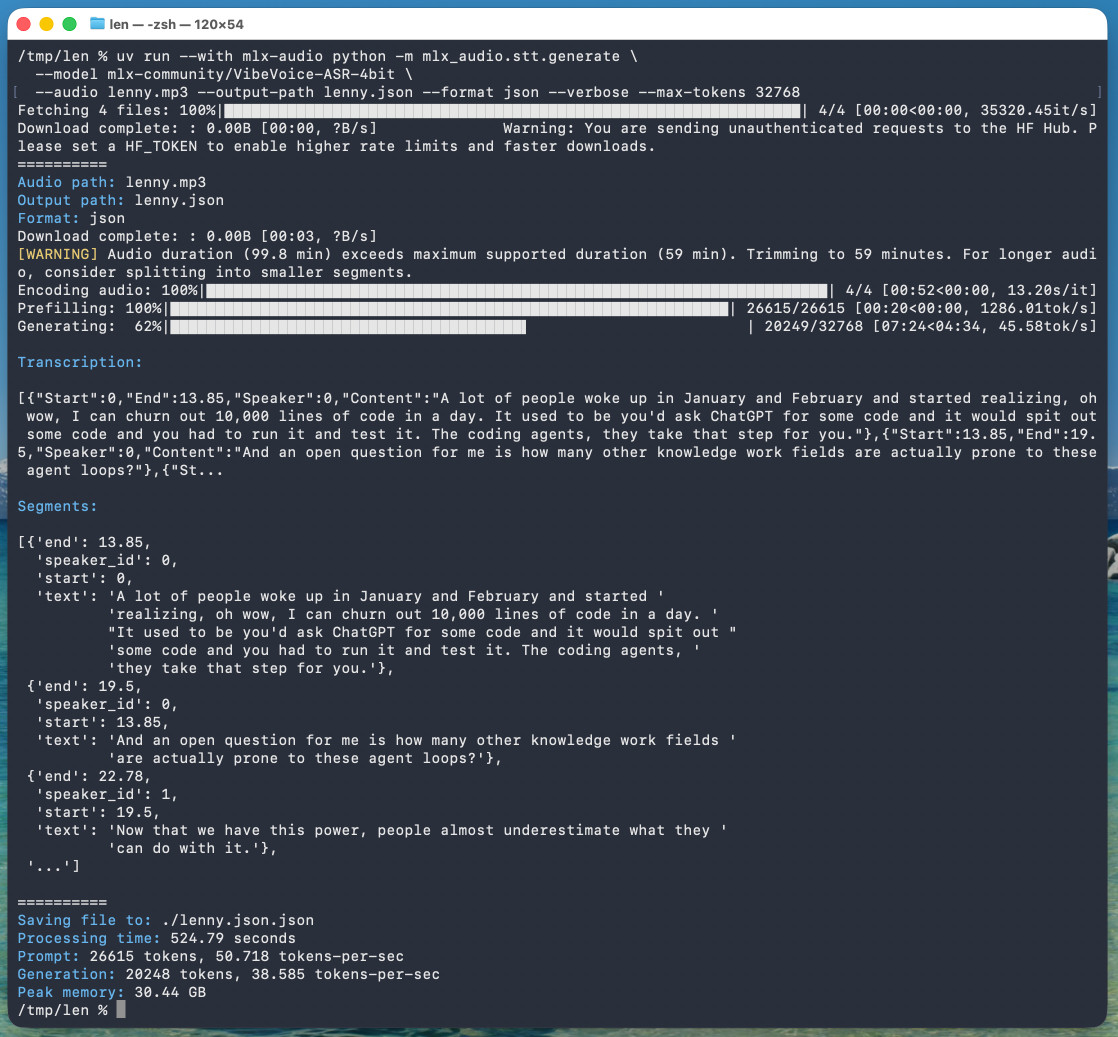

[... 691 words]

microsoft/VibeVoice. VibeVoice is Microsoft's Whisper-style audio model for speech-to-text, MIT licensed and with speaker diarization built into the model.

Microsoft released it on January 21st, 2026 but I hadn't tried it until today. Here's a one-liner to run it on a Mac with uv, mlx-audio (by Prince Canuma) and the 5.71GB mlx-community/VibeVoice-ASR-4bit MLX conversion of the 17.3GB VibeVoice-ASR model, in this case against a downloaded copy of my recent podcast appearance with Lenny Rachitsky:

uv run --with mlx-audio mlx_audio.stt.generate \
  --model mlx-community/VibeVoice-ASR-4bit \
  --audio lenny.mp3 --output-path lenny \
  --format json --verbose --max-tokens 32768

The tool reported back:

Processing time: 524.79 seconds

Prompt: 26615 tokens, 50.718 tokens-per-sec

Generation: 20248 tokens, 38.585 tokens-per-sec

Peak memory: 30.44 GB

So that's 8 minutes 45 seconds for an hour of audio (running on a 128GB M5 Max MacBook Pro).

I've tested it against .wav and .mp3 files and they both worked fine.

If you omit --max-tokens it defaults to 8192, which is enough for about 25 minutes of audio. I discovered that through trial-and-error and quadrupled it to guarantee I'd get the full hour.
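Here's the back-of-envelope arithmetic behind that estimate, using the "Generation" figure reported above. The one assumption is that the 20,248 generated tokens correspond to roughly 60 minutes of audio.

```python
GENERATED_TOKENS = 20248  # "Generation" figure reported by the tool
AUDIO_MINUTES = 60        # assumption: the run covered about an hour of audio

tokens_per_minute = GENERATED_TOKENS / AUDIO_MINUTES


def minutes_covered(max_tokens: int) -> float:
    """Estimate how many minutes of audio a --max-tokens budget can transcribe."""
    return max_tokens / tokens_per_minute


print(f"{tokens_per_minute:.0f} tokens per minute of audio")
print(f"8192 tokens  -> about {minutes_covered(8192):.1f} minutes")
print(f"32768 tokens -> about {minutes_covered(32768):.1f} minutes")
```

The default budget lands just under 25 minutes, and the quadrupled value leaves comfortable headroom over an hour. Denser conversations will produce more tokens per minute, so treat this as a rough guide.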

That command reported using 30.44GB of RAM at peak, but in Activity Monitor I observed 61.5GB of usage during the prefill stage and around 18GB during the generating phase.

Here's the resulting JSON. The key structure looks like this:

{
  "text": "And an open question for me is how many other knowledge work fields are actually prone to these agent loops?",
  "start": 13.85,
  "end": 19.5,
  "duration": 5.65,
  "speaker_id": 0
},
{
  "text": "Now that we have this power, people almost underestimate what they can do with it.",
  "start": 19.5,
  "end": 22.78,
  "duration": 3.280000000000001,
  "speaker_id": 1
},
{
  "text": "Today, probably 95% of the code that I produce, I didn't type it myself. I write so much of my code on my phone. It's wild.",
  "start": 22.78,
  "end": 30.0,
  "duration": 7.219999999999999,
  "speaker_id": 0
}

Since that's an array of objects we can open it in Datasette Lite, making it easier to browse.

Amusingly that Datasette Lite view shows three speakers - it identified Lenny and me for the conversation, and then a separate Lenny for the voice he used for the additional intro and the sponsor reads!
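The same array of objects is also easy to process directly. This Python sketch totals speaking time per speaker_id using the three segments shown above; the `speaking_time` helper is my own, and in practice you would load the full JSON file instead of the inline sample.

```python
import json
from collections import defaultdict

# The three segments from the excerpt, wrapped in an array
segments = json.loads("""
[
  {"text": "And an open question for me is how many other knowledge work fields are actually prone to these agent loops?",
   "start": 13.85, "end": 19.5, "duration": 5.65, "speaker_id": 0},
  {"text": "Now that we have this power, people almost underestimate what they can do with it.",
   "start": 19.5, "end": 22.78, "duration": 3.280000000000001, "speaker_id": 1},
  {"text": "Today, probably 95% of the code that I produce, I didn't type it myself. I write so much of my code on my phone. It's wild.",
   "start": 22.78, "end": 30.0, "duration": 7.219999999999999, "speaker_id": 0}
]
""")


def speaking_time(segments):
    """Sum end - start for each speaker_id."""
    totals = defaultdict(float)
    for seg in segments:
        totals[seg["speaker_id"]] += seg["end"] - seg["start"]
    return dict(totals)


totals = speaking_time(segments)
for speaker, seconds in sorted(totals.items()):
    print(f"speaker {speaker}: {seconds:.2f}s")
```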

VibeVoice can only handle up to an hour of audio, so running the above command transcribed just the first hour of the podcast. To transcribe more than that you'd need to split the audio, ideally with a minute or so of overlap so you can avoid errors from partially transcribed words at the split point. You'd also need to then line up the identified speaker IDs across the multiple segments.
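Working out the split points is the easy part. Here's a sketch that computes overlapping windows, assuming one-hour chunks with a 60 second overlap; the `chunk_windows` name is hypothetical, and it does not cut the audio or reconcile speaker IDs between chunks.

```python
def chunk_windows(total_seconds: float, chunk_seconds: float = 3600, overlap_seconds: float = 60):
    """Return (start, end) windows covering the audio, each overlapping the previous one."""
    if overlap_seconds >= chunk_seconds:
        raise ValueError("overlap must be shorter than the chunk length")
    if total_seconds <= 0:
        return []
    windows = []
    start = 0.0
    while True:
        end = min(start + chunk_seconds, total_seconds)
        windows.append((start, end))
        if end >= total_seconds:
            break
        start = end - overlap_seconds  # step back so words at the boundary appear twice
    return windows


# Hypothetical 2.5 hour recording
windows = chunk_windows(2.5 * 3600)
for start, end in windows:
    print(f"{start:7.0f}s -> {end:7.0f}s")
```

Each window could then be extracted with an audio tool and transcribed separately, with the duplicated overlap region used to stitch the transcripts together.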

April 28, 2026

Introducing talkie: a 13B vintage language model from 1930 (via) New project from Nick Levine, David Duvenaud, and Alec Radford (of GPT, GPT-2, Whisper fame).

talkie-1930-13b-base (53.1 GB) is a "13B language model trained on 260B tokens of historical pre-1931 English text".

talkie-1930-13b-it (26.6 GB) is a checkpoint "finetuned using a novel dataset of instruction-response pairs extracted from pre-1931 reference works", designed to power a chat interface. You can try that out here.

Both models are Apache 2.0 licensed. Since the training data for the base model is entirely out of copyright (the USA copyright cutoff date is currently January 1, 1931), I'm hoping they later decide to release the training data as well.

Update on that: Nick Levine on Twitter:

Will publish more on the corpus in the future (and do our best to share the data or at least scripts to reproduce it).

Their report suggests some fascinating research objectives for this class of model, including:

- How good are these models at predicting the future? "we calculated the surprisingness of short descriptions of historical events to a 13B model trained on pre-1931 text"

- Can these models invent things that are past their knowledge cutoffs? "As Demis Hassabis has asked, could a model trained up to 1911 independently discover General Relativity, as Einstein did in 1915?"

- Can they be taught to program? "Figure 3 (left-hand side) shows an early example of such a test, measuring how well models trained on pre-1931 text can, when given a few demonstration examples of Python programs, write new correct programs."

I have a long-running interest in what I call "vegan models" - LLMs that are trained entirely on licensed or out-of-copyright data. I think the base model here qualifies, but the chat model isn't entirely pure due to the reliance on non-vegan models to help with the fine-tuning - emphasis mine:

First, we generated instruction-response pairs from historical texts with regular structure, such as etiquette manuals, letter-writing manuals, cookbooks, dictionaries, encyclopedias, and poetry and fable collections (see Figure 7), and fine-tuned our base model on them using a simple chat format.

Next, to improve instruction-following abilities, we generated synthetic prompts covering different types of tasks, such as summarizing documents, responding to direct information requests, and continuing multi-turn conversations coherently. We then ran online direct preference optimization on rollouts generated from these prompts, using Claude Sonnet 4.6 as a judge. [...]

Finally, we did another round of supervised fine-tuning, this time on rejection-sampled multi-turn synthetic chats between Claude Opus 4.6 and talkie, to smooth out persistent rough edges in its conversational abilities.

A major challenge in training talkie was avoiding accidental contamination, either from post-1931 text that snuck into the training corpus or from the fine-tuning assistance from modern LLMs introducing anachronistic knowledge to the chat model.

I recently wrote about Mr. Chatterbox, a similar vintage language model project which also had to turn to modern LLMs to create synthetic conversations to help train the chat model.

The talkie team aspire to eventually move beyond this limitation:

While we have tried to post-train talkie free from modern influence, reinforcement learning with AI feedback inevitably shapes talkie’s behavior anachronistically. (The 7B version of talkie emerged from RL speaking in listicles.) As we scale up, we hope to be able to use our vintage base models themselves as judges to enable a fully bootstrapped era-appropriate post-training pipeline.

I couldn't resist hitting the talkie demo with my classic test prompt:

Generate an SVG of a pelican riding a bicycle

It replied:

An SVG of a pelican riding a bicycle was generated in 1860. The pelican was represented as perched on the saddle, with its long bill pointed forward, and its legs astride the handles. The idea was suggested by the fact that pelicans are sometimes seen fishing on horseback, on the banks of the Rhine.

What’s new in pip 26.1—lockfiles and dependency cooldowns!

(via) Richard Si describes an excellent set of upgrades to Python's default pip tool for installing dependencies.

This version drops support for Python 3.9 - fair enough, since it's been EOL since October. macOS still ships Python 3.9 as its default python3, so I tried out the new pip version against Python 3.14 like this:

uv python install 3.14

mkdir /tmp/experiment

cd /tmp/experiment

python3.14 -m venv venv

source venv/bin/activate

pip install -U pip

pip --version

This confirmed I had pip 26.1 - then I tried out the new lock files:

pip lock datasette llm

This resolves Datasette and LLM and all of their dependencies and writes the whole lot to a 519 line pylock.toml file - here's the result.

The new release also supports dependency cooldowns, discussed here previously, via the new --uploaded-prior-to PXD option where X is a number of days. The format is P-number-of-days-D, following ISO duration format but only supporting days.
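To make the P-number-of-days-D format concrete, here's a small Python sketch that parses it and works out the resulting cutoff. This is not pip's implementation: the function names are hypothetical and it only handles the days-only shape described above.

```python
import re
from datetime import datetime, timedelta, timezone


def parse_days_duration(value: str) -> timedelta:
    """Parse a days-only duration such as 'P4D' into a timedelta."""
    match = re.fullmatch(r"P(\d+)D", value)
    if not match:
        raise ValueError(f"expected P<days>D, got {value!r}")
    return timedelta(days=int(match.group(1)))


def upload_cutoff(value: str, now: datetime) -> datetime:
    """Releases uploaded after this moment would be skipped."""
    return now - parse_days_duration(value)


now = datetime(2026, 4, 28, 12, 0, tzinfo=timezone.utc)
cutoff = upload_cutoff("P4D", now)
print(cutoff.isoformat())
```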

I shipped a new release of LLM, version 0.31, three days ago. Here's how to use the new --uploaded-prior-to P4D option to ask for a version that is at least 4 days old.

pip install llm --uploaded-prior-to P4D

venv/bin/llm --version

This gave me version 0.30, since the three-day-old 0.31 release was still inside the four-day cooldown window.

Five months in, I think I've decided that I don't want to vibecode — I want professionally managed software companies to use AI coding assistance to make more/better/cheaper software products that they sell to me for money.

Never talk about goblins, gremlins, raccoons, trolls, ogres, pigeons, or other animals or creatures unless it is absolutely and unambiguously relevant to the user's query.

— OpenAI Codex base_instructions, for GPT-5.5