461 posts tagged “sqlite”

SQLite is the world's most widely deployed database engine.

2020

Weeknotes: Datasette 0.39 and many other projects

This week’s theme: Well, I’m not going anywhere. So a ton of progress to report on various projects.

[... 806 words]

hacker-news-to-sqlite (via) The latest in my Dogsheep series of tools: hacker-news-to-sqlite uses the Hacker News API to fetch your comments and submissions from Hacker News and save them to a SQLite database.

Weeknotes: Datasette Writes

As discussed previously, the biggest hole in Datasette’s feature set at the moment involves writing to the database.

[... 604 words]

Things I learned about shapefiles building shapefile-to-sqlite

The latest in my series of x-to-sqlite tools is shapefile-to-sqlite. I learned a whole bunch of things about the ESRI shapefile format while building it.

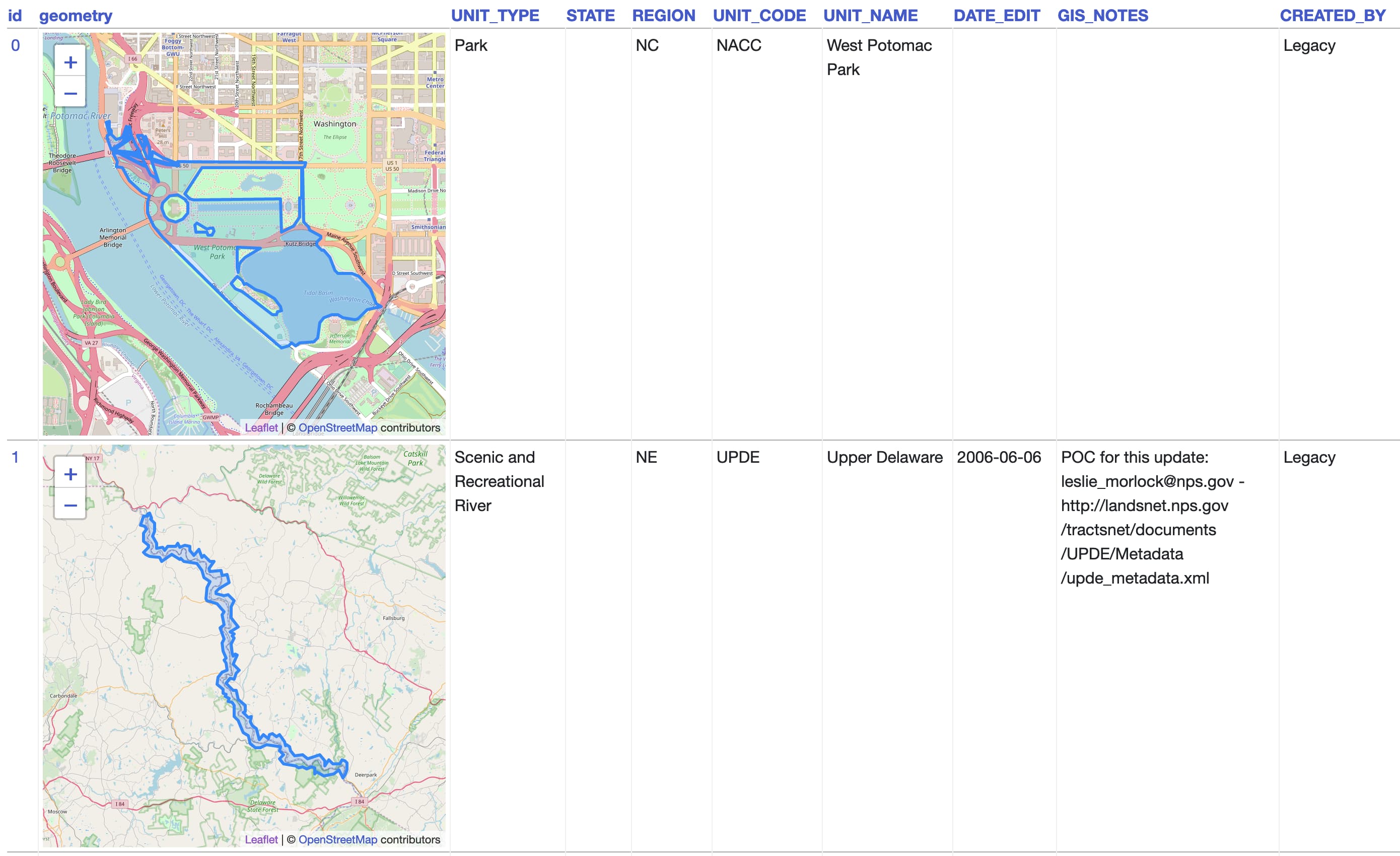

[... 1,073 words]

geojson-to-sqlite (via) I just put out the first release of geojson-to-sqlite, a CLI tool that can convert GeoJSON files (consisting of a Feature or a set of features in a FeatureCollection) into a table in a SQLite database. If you use the --spatialite option it will initialize the table with SpatiaLite and store the geometries in a spatially indexed geometry field; without that option it stores them as GeoJSON.
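To make the non-SpatiaLite case concrete, here is a rough sketch of the general idea using only Python's standard library. It is not how geojson-to-sqlite is implemented: the `import_features` function, the `geometry` column name and the table layout are all assumptions made for illustration, and it assumes property values are simple scalars.

```python
import json
import sqlite3


def import_features(conn, table, geojson):
    """Store each GeoJSON feature as one row: geometry as JSON text, properties as columns.

    Hypothetical helper for illustration only; not part of geojson-to-sqlite.
    """
    # Accept either a single Feature or a FeatureCollection.
    if geojson["type"] == "FeatureCollection":
        features = geojson["features"]
    else:
        features = [geojson]
    # Collect every property name so the table gets a column for each one.
    columns = sorted({key for f in features for key in (f.get("properties") or {})})
    column_sql = "".join(f', "{c}"' for c in columns)
    conn.execute(f'CREATE TABLE IF NOT EXISTS "{table}" (geometry TEXT{column_sql})')
    placeholders = ", ".join("?" for _ in range(len(columns) + 1))
    for f in features:
        props = f.get("properties") or {}
        conn.execute(
            f'INSERT INTO "{table}" VALUES ({placeholders})',
            [json.dumps(f["geometry"])] + [props.get(c) for c in columns],
        )
    conn.commit()
    return len(features)
```

Storing the geometry as plain JSON text keeps the table readable by any SQLite client, at the cost of having no spatial index.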

Generated Columns in SQLite (via) SQLite 3.31.0 released today, and generated columns are the single most notable new feature. PostgreSQL 12 added these in October 2019, and MySQL has had them since 5.7 in October 2015. MySQL and SQLite both offer either “stored” or “virtual” generated columns, with virtual columns being calculated at runtime. PostgreSQL currently only supports stored columns.
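A small sketch of the difference between the two kinds of generated column, using Python's built-in `sqlite3` module. The `items` table and its column names are made up for this example, and it only works when the SQLite library bundled with your Python is 3.31.0 or newer.

```python
import sqlite3


def generated_column_demo():
    """Create a table with one VIRTUAL and one STORED generated column."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        """
        CREATE TABLE items (
            price_cents INTEGER,
            quantity INTEGER,
            -- VIRTUAL: computed each time the column is read
            total_cents INTEGER GENERATED ALWAYS AS (price_cents * quantity) VIRTUAL,
            -- STORED: computed on write and saved in the row
            total_dollars REAL GENERATED ALWAYS AS (price_cents * quantity / 100.0) STORED
        )
        """
    )
    # Only the ordinary columns are supplied; the generated ones are derived.
    conn.execute("INSERT INTO items (price_cents, quantity) VALUES (250, 4)")
    return conn.execute("SELECT total_cents, total_dollars FROM items").fetchall()
```

You can check the bundled version with `sqlite3.sqlite_version` before running it.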

Serving 100µs reads with 100% availability (via) Fascinating use-case for SQLite from Segment: they needed a massively replicated configuration database across all of their instances that process streaming data. They chose to make the configuration available as a ~50GB SQLite database file mirrored to every instance, meaning lookups against that data could complete in microseconds. Changes to the central MySQL configuration store are pulled every 2-3 seconds, resulting in a trade-off of consistency for availability which fits their use-case just fine.
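As a rough illustration of the read path described here, this sketch opens a local SQLite file in read-only mode and does a primary-key lookup. It is not Segment's code: the `config` table, its columns and both helper functions are assumptions invented for the example, and the tiny database stands in for the mirrored file.

```python
import os
import sqlite3
import tempfile


def build_config_db(path):
    """Stand-in for the mirrored file: a tiny key/value configuration table."""
    conn = sqlite3.connect(path)
    conn.execute("CREATE TABLE config (key TEXT PRIMARY KEY, value TEXT)")
    conn.execute("INSERT INTO config VALUES ('rate_limit', '100')")
    conn.commit()
    conn.close()


def lookup(path, key):
    """Open the local file read-only and fetch one value by primary key."""
    # mode=ro in a URI filename makes SQLite refuse any writes on this connection.
    conn = sqlite3.connect(f"file:{path}?mode=ro", uri=True)
    try:
        row = conn.execute("SELECT value FROM config WHERE key = ?", (key,)).fetchone()
        return row[0] if row else None
    finally:
        conn.close()
```

A real service would presumably keep the connection open rather than reopening it for every lookup; it is opened per call here only to keep the sketch short.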

2019

sqlite-utils 2.0: real upserts

I just released version 2.0 of my sqlite-utils library/CLI tool to PyPI.

[... 1,140 words]

athena-sqlite (via) Amazon Athena is the AWS tool for querying data stored in S3 (as CSV, JSON or Apache Parquet files) using SQL. It’s an interesting way of building a very cheap data warehouse on top of S3 without having to run any additional services. Athena recently added a query federation SDK which lets you define additional custom data sources using Lambda functions. Damon Cortesi used this to write a custom connector for SQLite, which lets you run queries against data stored in SQLite files that you have uploaded to S3. You can then run joins between that data and other Athena sources.

Logging to SQLite using ASGI middleware

I had some fun playing around with ASGI middleware and logging during our flight back to England for the holidays.

[... 2,535 words]

sqlite-transform. I released a new CLI tool today: sqlite-transform, which lets you run “transformations” against a SQLite database. I built it out of frustration at constantly running into CSV files that use horrible American date formatting. The “sqlite-transform parsedatetime my.db mytable col1” command runs dateutil’s parser against those columns and replaces them with a nice, sortable ISO formatted timestamp. I’ve also added a “sqlite-transform lambda” command that lets you specify Python code directly on the command-line that should be used to transform every value in a specified column.
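The underlying idea, rewriting every value in a column through a Python function, can be sketched with the standard library alone. This is not sqlite-transform's actual implementation: `to_iso` and `transform_column` are hypothetical names, and `datetime.strptime` with a fixed `%m/%d/%Y` format is swapped in for dateutil's much more flexible parser.

```python
import sqlite3
from datetime import datetime


def to_iso(value, fmt="%m/%d/%Y"):
    """Convert a US-style date string to ISO 8601; leave other values untouched."""
    try:
        return datetime.strptime(value, fmt).date().isoformat()
    except (TypeError, ValueError):
        # NULLs and strings that do not match the format are returned unchanged.
        return value


def transform_column(conn, table, column, fn):
    """Apply fn to every value in one column by registering it as a SQL function."""
    conn.create_function("transform", 1, fn)
    conn.execute(f'UPDATE "{table}" SET "{column}" = transform("{column}")')
    conn.commit()
```

Calling `transform_column(conn, "mytable", "col1", to_iso)` rewrites the column in place, and any other one-argument function can be passed instead, which is roughly the role the “lambda” command plays.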

goodreads-to-sqlite (via) This is so cool! Tobias Kunze built a Python CLI tool to import your Goodreads data into a SQLite database, inspired by github-to-sqlite and my various other Dogsheep tools. It’s the first Dogsheep style tool I’ve seen that wasn’t built by me—and Tobias’ write-up includes some neat examples of queries you can run against your Goodreads data. I’ve now started using Goodreads and I’m importing my books into my own private Dogsheep Datasette instance.