Posts in Sep, 2023

Filters: Year: 2023 × Month: Sep × Sorted by date

Build an image search engine with llm-clip, chat with models with llm chat

LLM is my combination CLI tool and Python library for working with Large Language Models. I just released LLM 0.10 with two significant new features: embedding support for binary files and the llm chat command.

All models on Hugging Face, sorted by downloads (via) I realized this morning that “sort by downloads” against the list of all of the models on Hugging Face can work as a reasonably good proxy for “which of these models are easiest to get running on your own computer”.

The AI-assistant wars heat up with Claude Pro, a new ChatGPT Plus rival. I'm quoted in this piece about the new Claude Pro $20/month subscription from Anthropic:

Willison has also run into problems with Claude's morality filter, which has caused him trouble by accident: "I tried to use it against a transcription of a podcast episode, and it processed most of the text before—right in front of my eyes—it deleted everything it had done! I eventually figured out that they had started talking about bomb threats against data centers towards the end of the episode, and Claude effectively got triggered by that and deleted the entire transcript."

promptfoo: How to benchmark Llama2 Uncensored vs. GPT-3.5 on your own inputs. promptfoo is a CLI and library for “evaluating LLM output quality”. This tutorial in their documentation about using it to compare Llama 2 to gpt-3.5-turbo is a good illustration of how it works: it uses YAML files to configure the prompts, and more YAML to define assertions such as “not-icontains: AI language model”.

Matthew Honnibal from spaCy on why LLMs have not solved NLP. A common trope these days is that the entire field of NLP has been effectively solved by Large Language Models. Here’s a lengthy comment from Matthew Honnibal, creator of the highly regarded spaCy Python NLP library, explaining in detail why that argument doesn’t hold up.

Dynamic linker tricks: Using LD_PRELOAD to cheat, inject features and investigate programs (via) This tutorial by Rafał Cieślak from 2013 filled in a bunch of gaps in my knowledge about how C works on Linux.

bpy—Blender on PyPI (via) TIL you can “pip install” Blender!

bpy “provides Blender as a Python module”—it’s part of the official Blender project, and ships with binary wheels ranging in size from 168MB to 319MB depending on your platform.

It only supports the version of Python used by the current Blender release though—right now that’s Python 3.10.

hubcap.php (via) This PHP script by Dave Hulbert delights me. It’s 24 lines of code that takes a specified goal, then calls my LLM utility on a loop to request the next shell command to execute in order to reach that goal... and pipes the output straight into `exec()` after a 3s wait so the user can panic and hit Ctrl+C if it’s about to do something dangerous!

Using ChatGPT Code Intepreter (aka “Advanced Data Analysis”) to analyze your ChatGPT history. I posted a short thread showing how to upload your ChatGPT history to ChatGPT itself, then prompt it with “Build a dataframe of the id, title, create_time properties from the conversations.json JSON array of objects. Convert create_time to a date and plot it daily”.

Perplexity: interactive LLM visualization (via) I linked to a video of Linus Lee’s GPT visualization tool the other day. Today he’s released a new version of it that people can actually play with: it runs entirely in a browser, powered by a 120MB version of the GPT-2 ONNX model loaded using the brilliant Transformers.js JavaScript library.

Symbex 1.4. New release of my Symbex tool for finding symbols (functions, methods and classes) in a Python codebase. Symbex can now output matching symbols in JSON, CSV or TSV in addition to plain text.

I designed this feature for compatibility with the new “llm embed-multi” command—so you can now use Symbex to find every Python function in a nested directory and then pipe them to LLM to calculate embeddings for every one of them.

I tried it on my projects directory and embedded over 13,000 functions in just a few minutes! Next step is to figure out what kind of interesting things I can do with all of those embeddings.

A token-wise likelihood visualizer for GPT-2. Linus Lee built a superb visualization to help demonstrate how Large Language Models work, in the form of a video essay where each word is coloured to show how “surprising” it is to the model. It’s worth carefully reading the text in the video as each term is highlighted to get the full effect.

Wikipedia search-by-vibes through millions of pages offline (via) Really cool demo by Lee Butterman, who built embeddings of 2 million Wikipedia pages and figured out how to serve them directly to the browser, where they are used to implement “vibes based” similarity search returning results in 250ms. Lots of interesting details about how he pulled this off, using Arrow as the file format and ONNX to run the model in the browser.

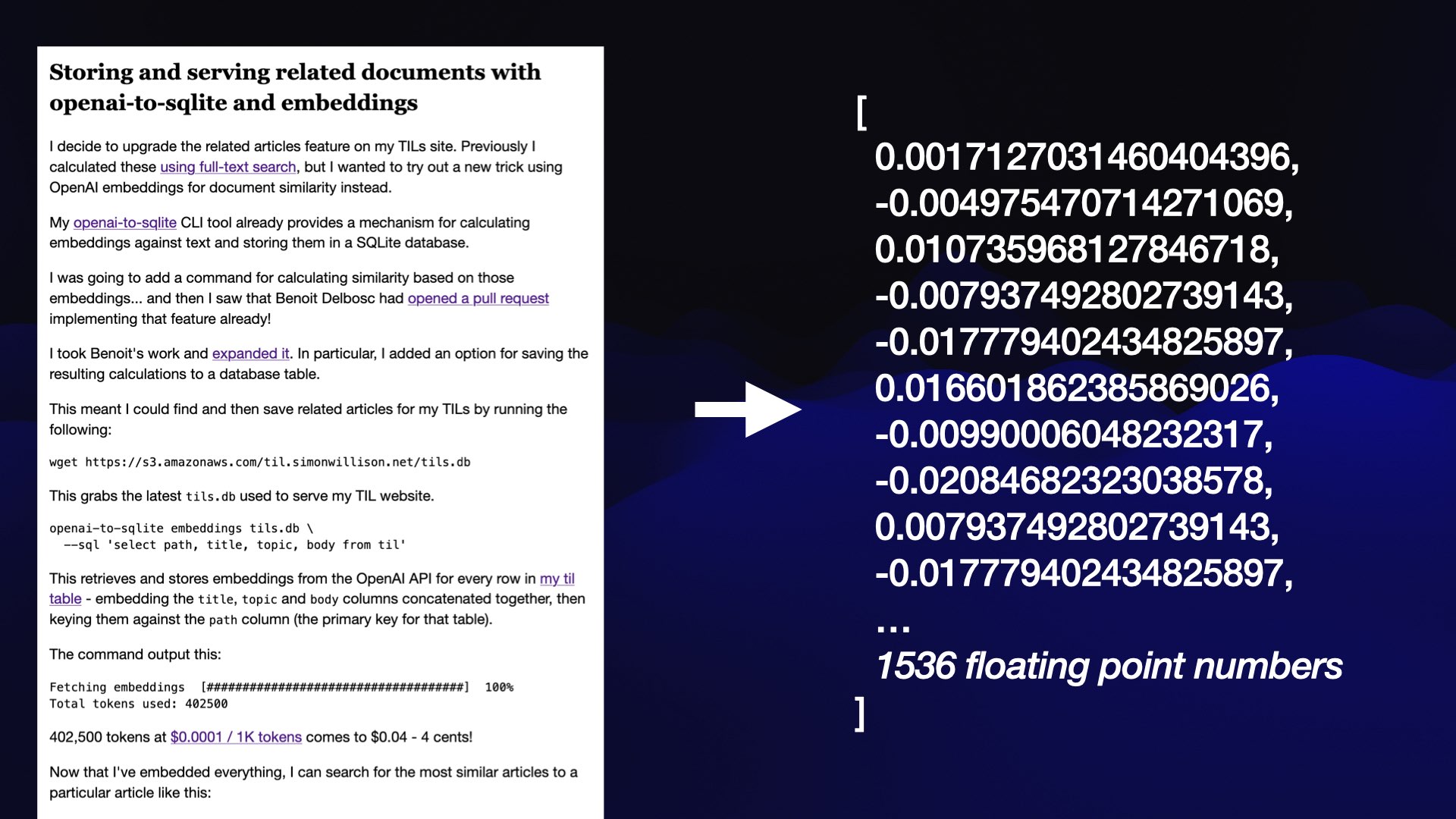

LLM now provides tools for working with embeddings

LLM is my Python library and command-line tool for working with language models. I just released LLM 0.9 with a new set of features that extend LLM to provide tools for working with embeddings.

[... 3,521 words]A practical guide to deploying Large Language Models Cheap, Good *and* Fast. Joel Kang’s extremely comprehensive notes on what he learned trying to run Vicuna-13B-v1.5 on an affordable cloud GPU server (a T4 at $0.615/hour). The space is in so much flux right now—Joel ended up using MLC but the best option could change any minute.

Vicuna 13B quantized to 4-bit integers needed 7.5GB of the T4’s 16GB of VRAM, and returned tokens at 20/second.

An open challenge running MLC right now is around batching and concurrency: “I did try making 3 concurrent requests to the endpoint, and while they all stream tokens back and the server doesn’t OOM, the output of all 3 streams seem to actually belong to a single prompt.”