Monday, 17th April 2023

MiniGPT-4 (via) An incredible project with a poorly chosen name. A team from King Abdullah University of Science and Technology in Saudi Arabia combined Vicuna-13B (a model fine-tuned on top of Facebook’s LLaMA) with the BLIP-2 vision-language model to create a model that can conduct ChatGPT-style conversations around an uploaded image. The demo is very impressive, and the weights are available to download—45MB for MiniGPT-4, but you’ll need the much larger Vicuna and LLaMA weights as well.

Latest Twitter search results for “as an AI language model” (via) Searching for “as an AI language model” on Twitter reveals hundreds of bot accounts which are clearly being driven by GPT models and have been asked to generate content which occasionally trips the ethical guidelines trained into the OpenAI models.

If Twitter still had an affordable search API someone could do some incredible disinformation research on top of this, looking at which accounts are implicated, what kinds of things they are tweeting about, who they follow and retweet and so-on.

RedPajama, a project to create leading open-source models, starts by reproducing LLaMA training dataset of over 1.2 trillion tokens. With the amount of projects that have used LLaMA as a foundation model since its release two months ago—despite its non-commercial license—it’s clear that there is a strong desire for a fully openly licensed alternative.

RedPajama is a collaboration between Together, Ontocord.ai, ETH DS3Lab, Stanford CRFM, Hazy Research, and MILA Québec AI Institute aiming to build exactly that.

Step one is gathering the training data: the LLaMA paper described a 1.2 trillion token training set gathered from sources that included Wikipedia, Common Crawl, GitHub, arXiv, Stack Exchange and more.

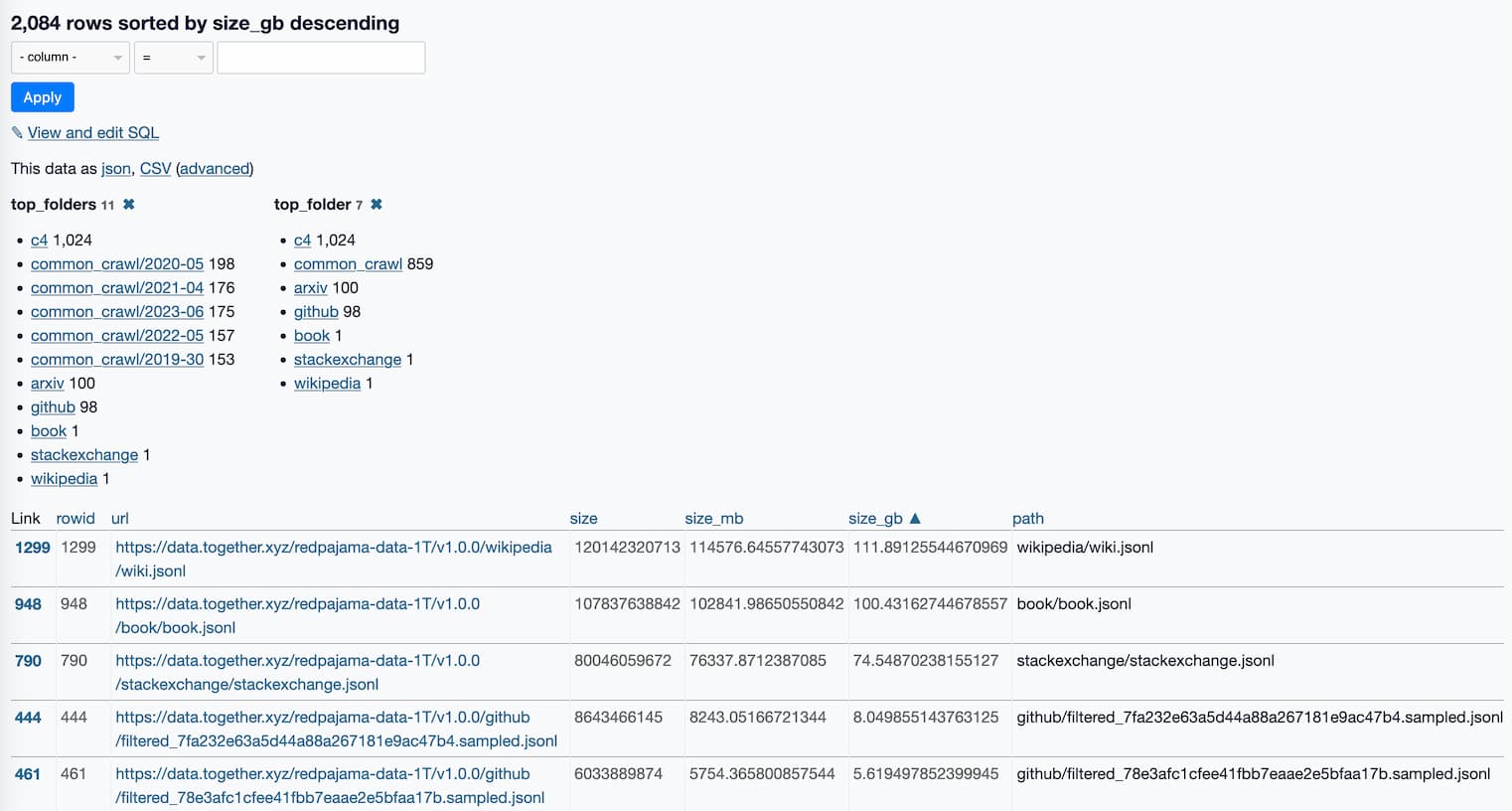

RedPajama-Data-1T is an attempt at recreating that training set. It’s now available to download, as 2,084 separate multi-GB jsonl files—2.67TB total.

Even without a trained model, this is a hugely influential contribution to the world of open source LLMs. Any team looking to build their own LLaMA from scratch can now jump straight to the next stage, training the model.

What’s in the RedPajama-Data-1T LLM training set

RedPajama is “a project to create leading open-source models, starts by reproducing LLaMA training dataset of over 1.2 trillion tokens”. It’s a collaboration between Together, Ontocord.ai, ETH DS3Lab, Stanford CRFM, Hazy Research, and MILA Québec AI Institute.

[... 1,077 words]