Wednesday, 12th April 2023

Running Python micro-benchmarks using the ChatGPT Code Interpreter alpha

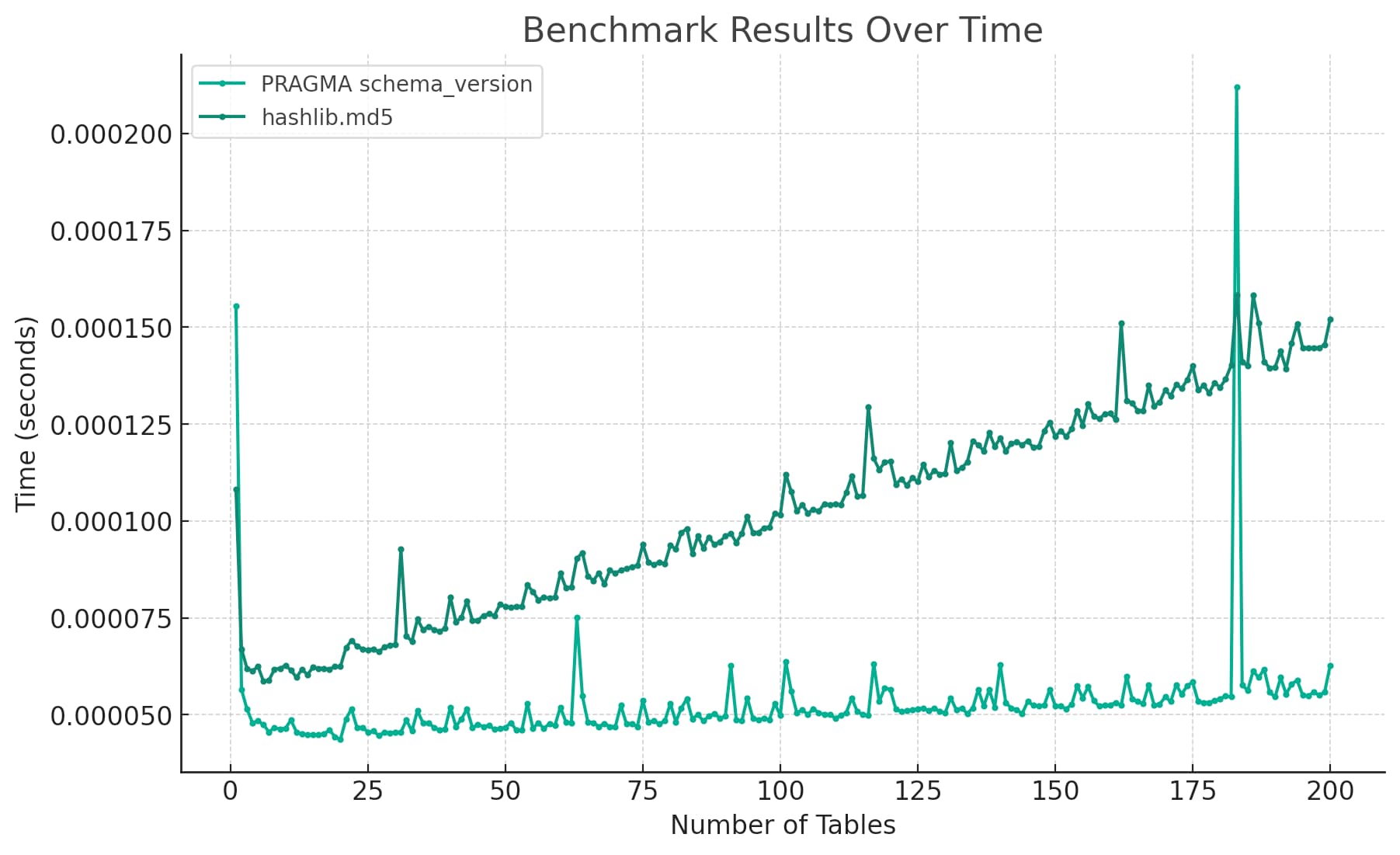

Today I wanted to understand the performance difference between two Python implementations of a mechanism to detect changes to a SQLite database schema. I rendered the difference between the two as this chart:

[... 2,939 words]Graphic designers had a similar sea change ~20-25 years ago.

Flyers, restaurant menus, wedding invitations, price lists... That sort of thing was bread and butter work for most designers. Then desktop publishing happened and a large fraction of designers lost their main source of income as the work shifted to computer assisted unskilled labor.

The field still thrives today, but that simple work is gone forever.

Replacing my best friends with an LLM trained on 500,000 group chat messages (via) Izzy Miller used a 7 year long group text conversation with five friends from college to fine-tune LLaMA, such that it could simulate ongoing conversations. They started by extracting the messages from the iMessage SQLite database on their Mac, then generated a new training set from those messages and ran it using code from the Stanford Alpaca repository. This is genuinely one of the clearest explanations of the process of fine-tuning a model like this I’ve seen anywhere.