Posts tagged llm, plugins in 2024


llm-openrouter 0.3. New release of my llm-openrouter plugin, which allows LLM to access models hosted by OpenRouter.

Quoting the release notes:

- Enable image attachments for models that support images. Thanks, Adam Montgomery. #12

- Provide async model access. #15

- Fix documentation to list correct LLM_OPENROUTER_KEY environment variable. #10

datasette-enrichments-llm. Today's new alpha release is datasette-enrichments-llm, a plugin for Datasette 1.0a+ that provides an enrichment that lets you run prompts against data from one or more columns and store the result in another column.

So far it's a light re-implementation of the existing datasette-enrichments-gpt plugin, now using the new llm.get_async_models() method to allow users to select any async-enabled model that has been registered by a plugin - so currently any of the models from OpenAI, Anthropic, Gemini or Mistral via their respective plugins.
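The general shape of an enrichment like this (fill a prompt template from a row's columns, run it, write the answer to another column) can be sketched with nothing but the standard library. This is a rough illustration under loud assumptions, not the plugin's code: `fake_model`, `enrich`, the `places` table and the template are all hypothetical, and the stub stands in for a real async model of the kind `llm.get_async_models()` would return.

```python
import asyncio
import sqlite3


async def fake_model(prompt: str) -> str:
    """Hypothetical stand-in for an async LLM call; it just upper-cases the prompt."""
    await asyncio.sleep(0)  # yield to the event loop, as a real network call would
    return prompt.upper()


async def enrich(db: sqlite3.Connection, table: str, template: str, output_column: str) -> None:
    """Fill the template from each row, run the prompt, store the result."""
    # Table and column names are interpolated directly, so they must be trusted.
    db.row_factory = sqlite3.Row
    rows = db.execute(f"select rowid, * from {table}").fetchall()
    prompts = [template.format(**dict(row)) for row in rows]
    # Run every prompt concurrently, which is what async models make possible.
    results = await asyncio.gather(*(fake_model(p) for p in prompts))
    for row, result in zip(rows, results):
        db.execute(
            f"update {table} set {output_column} = ? where rowid = ?",
            (result, row["rowid"]),
        )
    db.commit()


db = sqlite3.connect(":memory:")
db.execute("create table places (name text, country text, summary text)")
db.executemany(
    "insert into places (name, country) values (?, ?)",
    [("Durham", "USA"), ("Brighton", "UK")],
)
asyncio.run(enrich(db, "places", "Describe {name} in {country}", "summary"))
for row in db.execute("select name, summary from places"):
    print(tuple(row))
```

Swapping the stub for a real model would only change `fake_model`; the template-per-row loop stays the same.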

Still plenty to do on this one. Next step is to integrate it with datasette-llm-usage and use it to drive a design-complete stable version of that.

datasette-queries. I released the first alpha of a new plugin to replace the crusty old datasette-saved-queries. This one adds a new UI element to the top of the query results page with an expandable form for saving the query as a new canned query:

It's my first plugin to depend on LLM and datasette-llm-usage - it uses GPT-4o mini to power an optional "Suggest title and description" button, labeled with the becoming-standard ✨ sparkles emoji to indicate an LLM-powered feature.

I intend to expand this to work across multiple models as I continue to iterate on datasette-llm-usage to better support those kinds of patterns.

For the moment, though, each suggested title and description call costs about 250 input tokens and 50 output tokens, which against GPT-4o mini adds up to 0.0067 cents.
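That figure is easy to check in a few lines of Python. The per-token prices below are an assumption rather than something stated in this post: they are what I understand GPT-4o mini's list prices to be, $0.15 per million input tokens and $0.60 per million output tokens.

```python
# Assumed list prices for GPT-4o mini, in US dollars per million tokens.
# These are not stated in the post; adjust them if the pricing differs.
INPUT_USD_PER_MILLION = 0.15
OUTPUT_USD_PER_MILLION = 0.60


def cost_in_cents(input_tokens: int, output_tokens: int) -> float:
    """Return the cost of a single call in US cents."""
    dollars = (
        input_tokens * INPUT_USD_PER_MILLION
        + output_tokens * OUTPUT_USD_PER_MILLION
    ) / 1_000_000
    return dollars * 100


cents = cost_in_cents(input_tokens=250, output_tokens=50)
print(f"{cents:.5f} cents per suggestion")
```

Under those assumed prices the result is roughly 0.00675 cents, in line with the 0.0067 figure quoted above.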

datasette-llm-usage. I released the first alpha of a Datasette plugin to help track LLM usage by other plugins, with the goal of supporting token allowances - both for things like free public apps that stop working after a daily allowance, plus free previews of AI features for paid-account-based projects such as Datasette Cloud.

It's using the usage features I added in LLM 0.19.

The alpha doesn't do much yet - it will start getting interesting once I upgrade other plugins to depend on it.

Design notes so far in issue #1.
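To make the daily allowance idea concrete, here is a minimal in-memory sketch. It is not how the plugin works: `DailyTokenAllowance` and its methods are hypothetical names invented for illustration, and a real implementation would need to persist usage somewhere rather than hold it in a dictionary.

```python
from collections import defaultdict
from datetime import date


class DailyTokenAllowance:
    """Hypothetical tracker: each actor gets a fixed token budget per day."""

    def __init__(self, daily_limit: int):
        self.daily_limit = daily_limit
        # Maps (actor_id, day) -> tokens used so far on that day.
        self._used = defaultdict(int)

    def record(self, actor_id: str, day: date, input_tokens: int, output_tokens: int) -> None:
        """Record the usage reported for one completed prompt."""
        self._used[(actor_id, day)] += input_tokens + output_tokens

    def remaining(self, actor_id: str, day: date) -> int:
        """Tokens left for this actor on this day, never below zero."""
        return max(0, self.daily_limit - self._used[(actor_id, day)])

    def allowed(self, actor_id: str, day: date) -> bool:
        """True while the actor still has budget left for that day."""
        return self.remaining(actor_id, day) > 0


allowance = DailyTokenAllowance(daily_limit=1000)
today = date(2024, 12, 2)
allowance.record("anonymous", today, input_tokens=250, output_tokens=50)
print(allowance.remaining("anonymous", today))  # 700
print(allowance.allowed("anonymous", today))    # True
```

Keying usage on the day means the allowance resets by itself: a new date simply starts from zero.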

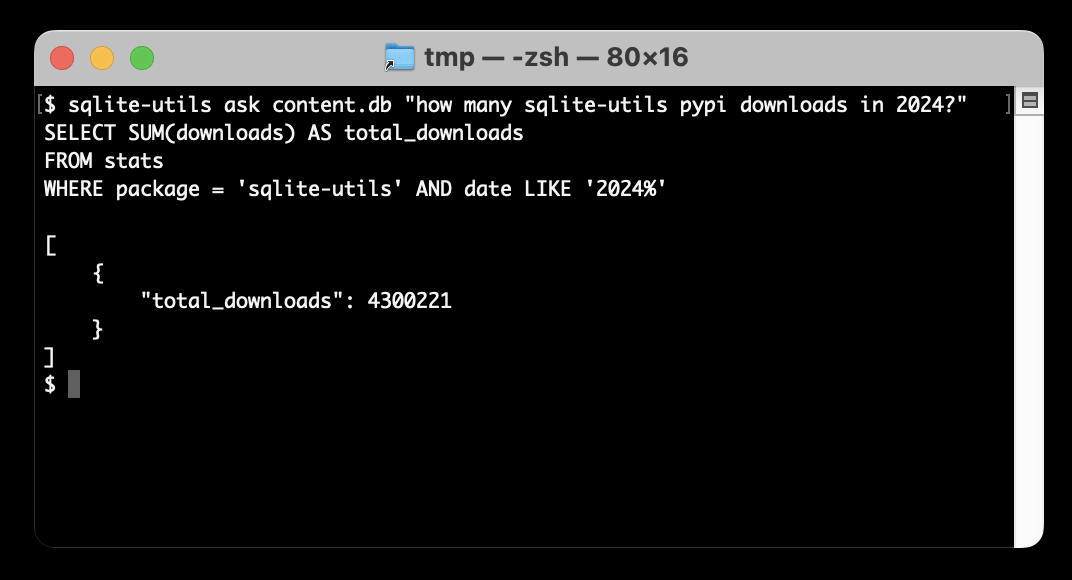

Ask questions of SQLite databases and CSV/JSON files in your terminal

I built a new plugin for my sqlite-utils CLI tool that lets you ask human-language questions directly of SQLite databases and CSV/JSON files on your computer.

[... 723 words]

llm-gemini 0.4. New release of my llm-gemini plugin, adding support for asynchronous models (see LLM 0.18), plus the new gemini-exp-1114 model (currently at the top of the Chatbot Arena) and a -o json_object 1 option to force JSON output.

I also released llm-claude-3 0.9 which adds asynchronous support for the Claude family of models.

llm-whisper-api. I wanted to run an experiment through the OpenAI Whisper API this morning so I knocked up a very quick plugin for LLM that provides the following interface:

llm install llm-whisper-api

llm whisper-api myfile.mp3 > transcript.txt

It uses the API key that you previously configured using the llm keys set openai command. If you haven't configured one you can pass it as --key XXX instead.

It's a tiny plugin: the source code is here.

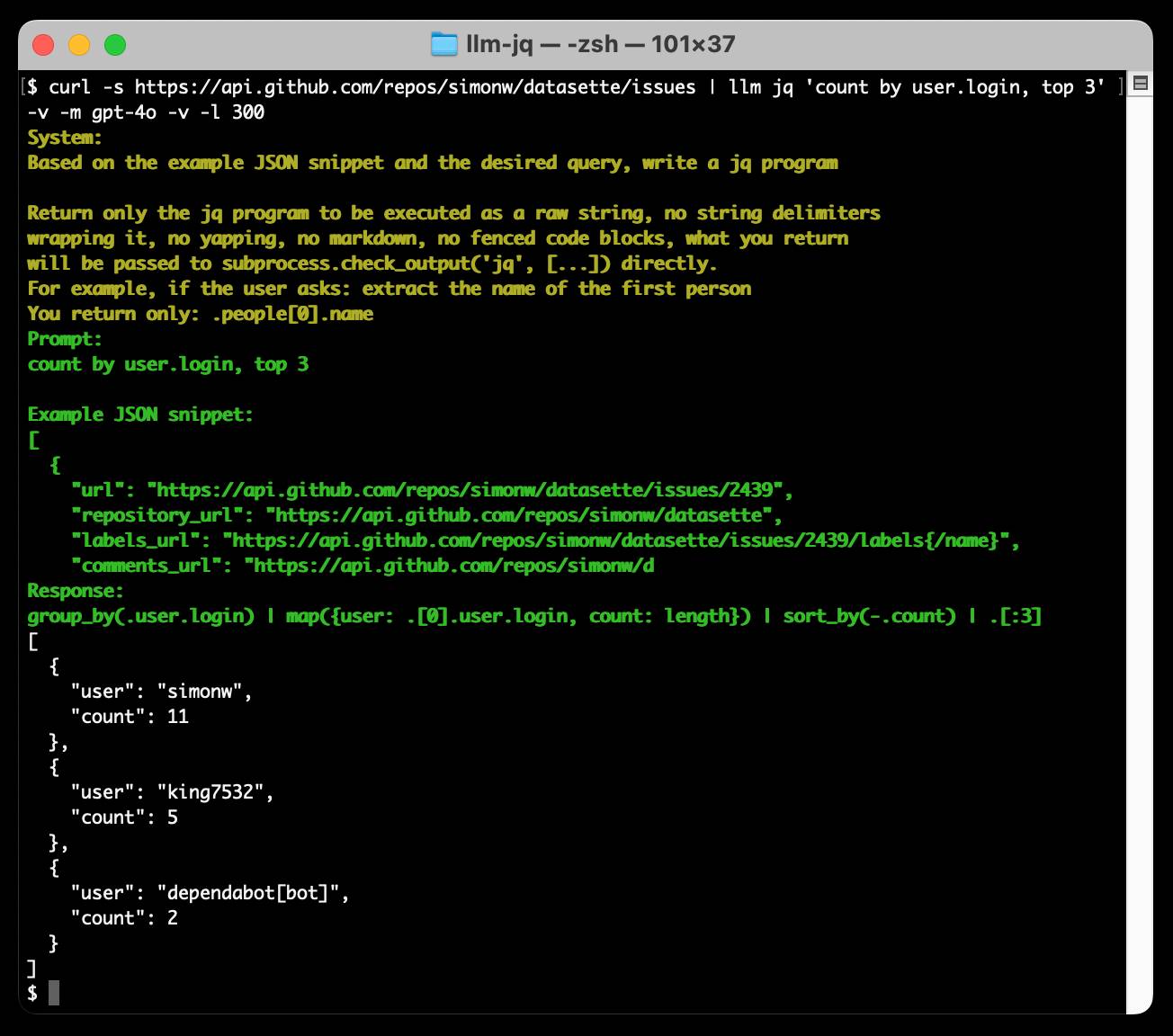

Run a prompt to generate and execute jq programs using llm-jq

llm-jq is a brand new plugin for LLM which lets you pipe JSON directly into the llm jq command along with a human-language description of how you’d like to manipulate that JSON and have a jq program generated and executed for you on the fly.

Announcing our DjangoCon US 2024 Talks! I'm speaking at DjangoCon in Durham, NC in September.

My accepted talk title was How to design and implement extensible software with plugins. Here's my abstract:

Plugins offer a powerful way to extend software packages. Tools that support a plugin architecture include WordPress, Jupyter, VS Code and pytest - each of which benefits from an enormous array of plugins adding all kinds of new features and expanded capabilities.

Adding plugin support to an open source project can greatly reduce the friction involved in attracting new contributors. Users can work independently and even package and publish their work without needing to directly coordinate with the project's core maintainers. As a maintainer this means you can wake up one morning and your software grew new features without you even having to review a pull request!

There's one catch: information on how to design and implement plugin support for a project is scarce.

I now have three major open source projects that support plugins, with over 200 plugins published across those projects. I'll talk about everything I've learned along the way: when and how to use plugins, how to design plugin hooks and how to ensure your plugin authors have as good an experience as possible.

I'm going to be talking about what I've learned integrating Pluggy with Datasette, LLM and sqlite-utils. I've been looking for an excuse to turn this knowledge into a talk for ages, and I'm very excited to get to do it at DjangoCon!
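For anyone who hasn't met the plugin hook pattern before, here is a deliberately tiny standard-library sketch of the core idea: the host application names a hook, plugins register implementations of it, and the host calls them all and collects the results. This is not Pluggy's API; `HookRegistry`, `register`, `call` and the `register_commands` hook name are hypothetical stand-ins, and Pluggy does a great deal more (hook specifications, call ordering, discovery of installed packages).

```python
class HookRegistry:
    """Hypothetical minimal hook system, for illustration only."""

    def __init__(self):
        # Maps hook name -> list of registered implementations.
        self._implementations = {}

    def register(self, hook_name, func):
        """Add func as one implementation of the named hook."""
        self._implementations.setdefault(hook_name, []).append(func)

    def call(self, hook_name, **kwargs):
        """Call every implementation of the hook and collect the results."""
        return [impl(**kwargs) for impl in self._implementations.get(hook_name, [])]


registry = HookRegistry()

# Two independent "plugins" implement the same hook without knowing about each other.
registry.register("register_commands", lambda app_name: f"{app_name} hello")
registry.register("register_commands", lambda app_name: f"{app_name} goodbye")

# The host application calls the hook and gets one result per plugin.
print(registry.call("register_commands", app_name="demo"))
# ['demo hello', 'demo goodbye']
```

The host never imports a plugin by name, which is what lets plugin authors ship features without coordinating with the core maintainers.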

llm-gpt4all. New release of my LLM plugin which builds on Nomic's excellent gpt4all Python library. I've upgraded to their latest version which adds support for Llama 3 8B Instruct, so after a 4.4GB model download this works:

llm -m Meta-Llama-3-8B-Instruct "say hi in Spanish"

llm-command-r. Cohere released Command R Plus today—an open weights (non commercial/research only) 104 billion parameter LLM, a big step up from their previous 35 billion Command R model.

Both models are fine-tuned for both tool use and RAG. The commercial API has features to expose this functionality, including a web-search connector which lets the model run web searches as part of answering the prompt and return documents and citations as part of the JSON response.

I released a new plugin for my LLM command line tool this morning adding support for the Command R models.

In addition to the two models it also adds a custom command for running prompts with web search enabled and listing the referenced documents.

llm-nomic-api-embed. My new plugin for LLM which adds API access to the Nomic series of embedding models. Nomic models can be run locally too, which makes them a great long-term commitment as there’s no risk of the models being retired in a way that damages the value of your previously calculated embedding vectors.

llm-sentence-transformers 0.2. I added a new --trust-remote-code option when registering an embedding model, which means LLM can now run embeddings through the new Nomic AI nomic-embed-text-v1 model.

llm-embed-onnx. I wrote a new plugin for LLM that acts as a thin wrapper around onnx_embedding_models by Benjamin Anderson, providing access to seven embedding models that can run on the ONNX model framework.

The actual plugin is around 50 lines of code, which makes for a nice example of how thin a plugin wrapper can be that adds new models to my LLM tool.