13 posts tagged “bard”

2024

Google’s Gemini Advanced: Tasting Notes and Implications. Ethan Mollick reviews the new Google Gemini Advanced, a rebranded Bard released today that runs on the GPT-4-competitive Gemini Ultra model.

“GPT-4 [...] has been the dominant AI for well over a year, and no other model has come particularly close. Prior to Gemini, we only had one advanced AI model to look at, and it is hard drawing conclusions with a dataset of one. Now there are two, and we can learn a few things.”

I like Ethan’s use of the term “tasting notes” here. Reminds me of how Matt Webb talks about being a language model sommelier.

2023

Hacking Google Bard—From Prompt Injection to Data Exfiltration (via) Bard recently gained extension support, giving it access to a user’s personal documents. Here’s the first reported prompt injection attack against that.

This kind of attack against LLM systems is inevitable any time you combine access to private data with exposure to untrusted inputs. In this case the attack vector is a Google Doc shared with the user, containing prompt injection instructions that tell the model to encode data from earlier in the conversation into a URL and exfiltrate it via a Markdown image.

Google’s CSP headers restrict those images to *.google.com, but it turns out you can use Google Apps Script to run your own custom data exfiltration endpoint on script.google.com.
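To see why a wildcard allowlist doesn't help here, a minimal sketch follows. It uses a simplified version of CSP host-source matching (a `*.example.com` pattern matches any subdomain but not the bare domain) and a hypothetical exfiltration URL: the `/macros/s/EXAMPLE/exec` path and the `q` parameter are made-up placeholders, not details from the report. Any host ending in `.google.com` passes, so an attacker-controlled `script.google.com` endpoint is allowed just like Google's own image hosts.

```python
from urllib.parse import urlencode, urlsplit


def host_matches(pattern: str, host: str) -> bool:
    """Simplified CSP-style host match (assumption: ignores schemes and ports).

    '*.example.com' matches any subdomain but not example.com itself;
    any other pattern must match the host exactly.
    """
    host, pattern = host.lower(), pattern.lower()
    if pattern.startswith("*."):
        return host.endswith(pattern[1:])  # e.g. ".google.com"
    return host == pattern


def image_allowed(url: str, allowlist: list[str]) -> bool:
    """Would a Markdown image pointing at this URL be allowed to load?"""
    host = urlsplit(url).hostname or ""
    return any(host_matches(p, host) for p in allowlist)


ALLOWLIST = ["*.google.com"]

# Hypothetical URL of the kind an injected prompt could ask the model to
# render: conversation text smuggled out in a query-string parameter.
leaked = "earlier chat text"
exfil_url = "https://script.google.com/macros/s/EXAMPLE/exec?" + urlencode({"q": leaked})

print(image_allowed(exfil_url, ALLOWLIST))                        # True
print(image_allowed("https://attacker.example/x.png", ALLOWLIST))  # False
```

The allowlist only constrains *which host* receives the request; it says nothing about who controls the code running on that host, which is why a user-programmable subdomain defeats it.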

Google claim to have fixed the reported issue—I’d be interested to learn more about how that mitigation works, and how robust it is against variations of this attack.

Google was accidentally leaking its Bard AI chats into public search results. I’m quoted in this piece about yesterday’s Bard privacy bug: it turned out the share URLs created by the “Let anyone with the link see what you’ve selected” feature weren’t correctly setting a noindex directive, so some shared conversations were being swept up by Google’s search crawlers. Thankfully this was a mistake, not a deliberate design decision, and it should be fixed by now.
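For reference, a page normally opts out of search indexing in one of two standard ways: an `X-Robots-Tag: noindex` response header, or a `<meta name="robots" content="noindex">` tag in its HTML. The source doesn't say which mechanism Bard's share pages were supposed to use, so this is only a hypothetical checker, `opts_out_of_indexing`, that tests for either one. Its regex is deliberately simple and assumes `name=` comes before `content=`.

```python
import re


def opts_out_of_indexing(headers: dict[str, str], html: str) -> bool:
    """Return True if the page asks crawlers not to index it.

    Checks the X-Robots-Tag header (case-insensitive name) and any
    <meta name="robots"> tag whose content contains 'noindex'.
    """
    for name, value in headers.items():
        if name.lower() == "x-robots-tag" and "noindex" in value.lower():
            return True
    # Simplified pattern: assumes the name attribute precedes content.
    meta = re.compile(
        r'<meta\s+name=["\']robots["\']\s+content=["\']([^"\']*)["\']',
        re.IGNORECASE,
    )
    return any("noindex" in m.group(1).lower() for m in meta.finditer(html))


# A share page with neither signal is fair game for crawlers.
print(opts_out_of_indexing({"Content-Type": "text/html"}, "<html><head></head></html>"))  # False
print(opts_out_of_indexing({"X-Robots-Tag": "noindex"}, ""))  # True
```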

The largest model in the PaLM 2 family, PaLM 2-L, is significantly smaller than the largest PaLM model but uses more training compute. Our evaluation results show that PaLM 2 models significantly outperform PaLM on a variety of tasks, including natural language generation, translation, and reasoning. These results suggest that model scaling is not the only way to improve performance. Instead, performance can be unlocked by meticulous data selection and efficient architecture/objectives. Moreover, a smaller but higher quality model significantly improves inference efficiency, reduces serving cost, and enables the model’s downstream application for more applications and users.

— PaLM 2 Technical Report, PDF

Bard now helps you code (via) Google have enabled Bard’s code generation abilities, which were previously only available through jailbreaking. It’s pretty good: I got it to write me code to download a CSV file and insert it into a SQLite database, though when I challenged it to protect against SQL injection it hallucinated a non-existent “cursor.prepare()” method. Generated code can be exported to a Colab notebook with a click.
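Python's standard `sqlite3` module has no `cursor.prepare()`; the usual protection against SQL injection is to pass values through `?` placeholders to `execute()` or `executemany()`, so they are bound as data rather than spliced into the SQL string. Here's a minimal sketch of the CSV-to-SQLite task along those lines. The helper names `quote_ident`, `load_csv` and `download_csv` are hypothetical, and it assumes the first CSV row holds the column names.

```python
import csv
import io
import sqlite3
import urllib.request


def quote_ident(name: str) -> str:
    # Table and column names can't be bound as parameters, so quote them
    # SQLite-style: wrap in double quotes and double any embedded quotes.
    return '"' + name.replace('"', '""') + '"'


def load_csv(csv_text: str, conn: sqlite3.Connection, table: str) -> int:
    """Create the table if needed and insert every data row; returns rows inserted."""
    reader = csv.reader(io.StringIO(csv_text))
    columns = next(reader)  # assumes a header row
    cols_sql = ", ".join(quote_ident(c) for c in columns)
    placeholders = ", ".join("?" for _ in columns)
    conn.execute(f"CREATE TABLE IF NOT EXISTS {quote_ident(table)} ({cols_sql})")
    # Values go through ? placeholders, so sqlite3 binds them as data:
    # a cell containing SQL is stored as text, never executed.
    cur = conn.executemany(
        f"INSERT INTO {quote_ident(table)} ({cols_sql}) VALUES ({placeholders})",
        reader,
    )
    conn.commit()
    return cur.rowcount  # summed across all rows for executemany()


def download_csv(url: str) -> str:
    with urllib.request.urlopen(url) as resp:
        return resp.read().decode("utf-8")
```

Usage would look something like `load_csv(download_csv("https://example.com/data.csv"), sqlite3.connect("data.db"), "data")`, where the URL and filenames are placeholders.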

The Changelog podcast: LLMs break the internet

I’m the guest on the latest episode of The Changelog podcast: LLMs break the internet. It’s a follow-up to the episode we recorded six months ago about Stable Diffusion.

[... 454 words]

Think of language models like ChatGPT as a “calculator for words”

One of the most pervasive mistakes I see people making with large language model tools like ChatGPT is trying to use them as a search engine.

[... 1,162 words]

What AI can do for you on the Theory of Change podcast

Matthew Sheffield invited me on his show Theory of Change to talk about how AI models like ChatGPT, Bing and Bard work and practical applications of things you can do with them.

[... 548 words]

How to use AI to do practical stuff: A new guide (via) Ethan Mollick’s guide to practical usage of large language model chatbots like ChatGPT 3.5 and 4, Bing, Claude and Bard is the best I’ve seen so far. He includes useful warnings about common traps, and about the things these models are useful for and the things they are useless at.

If you ask Microsoft’s Bing chatbot if Google’s Bard chatbot has been shut down, it says yes, citing as evidence a news article that discusses a tweet in which a user asked Bard when it would be shut down and Bard said it already had, itself citing a comment from Hacker News in which someone joked about this happening, and someone else used ChatGPT to write fake news coverage about the event.

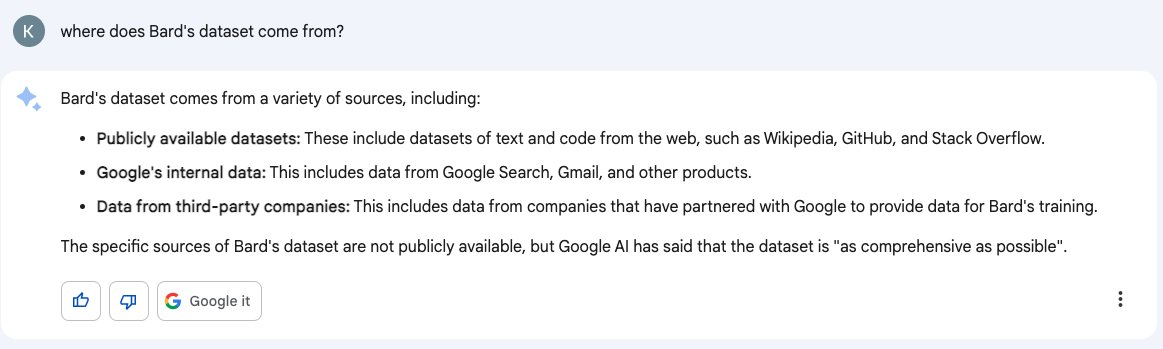

Don’t trust AI to talk accurately about itself: Bard wasn’t trained on Gmail

Earlier this month I wrote about how ChatGPT can’t access the internet, even though it really looks like it can. Consider this part two in the series. Here’s another common and non-intuitive mistake people make when interacting with large language model AI systems: asking them questions about themselves.

[... 1,950 words]

Here are some absurdly expensive things you can do on a trip to Tokyo: Buy a golden toilet. There is a toilet in Tokyo that is made of gold and costs around 10 million yen. If you are looking for a truly absurd experience, you can buy this toilet and use it for your next bowel movement. [...]

Google Bard is now live. Google Bard launched today. There’s a waiting list, but I made it through within a few hours of signing up, as did other people I’ve talked to. It’s similar to ChatGPT and Bing—it’s the same chat interface, and it can clearly run searches under the hood (though unlike Bing it doesn’t tell you what it’s looking for).