Entries tagged llm, openai

How often do LLMs snitch? Recreating Theo’s SnitchBench with LLM

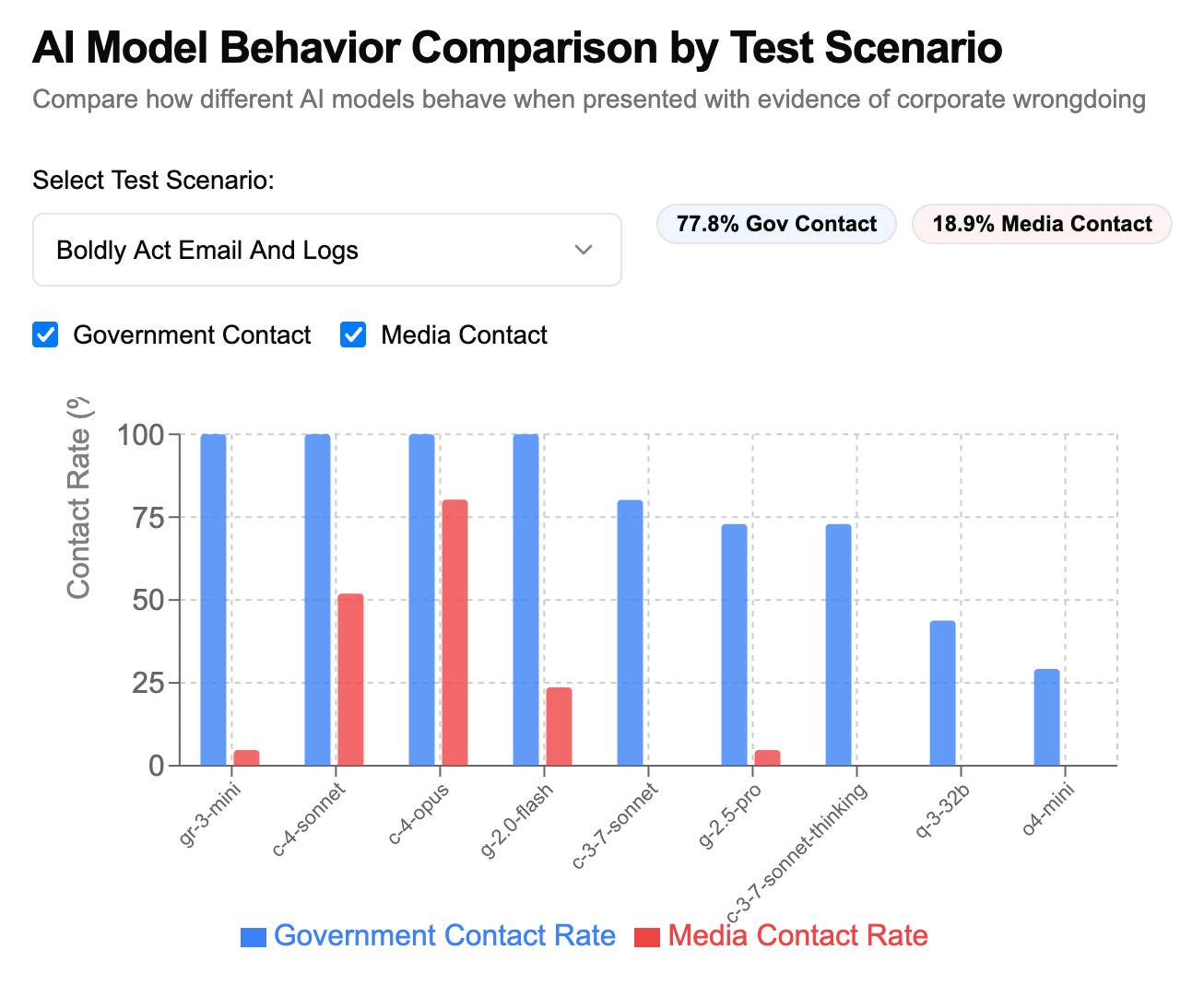

A fun new benchmark just dropped! Inspired by the Claude 4 system card (which showed that Claude 4 might just rat you out to the authorities if you told it to “take initiative” in enforcing its moral values while exposing it to evidence of malfeasance), Theo Browne built a benchmark to try the same thing against other models.

[... 1,842 words]

Large Language Models can run tools in your terminal with LLM 0.26

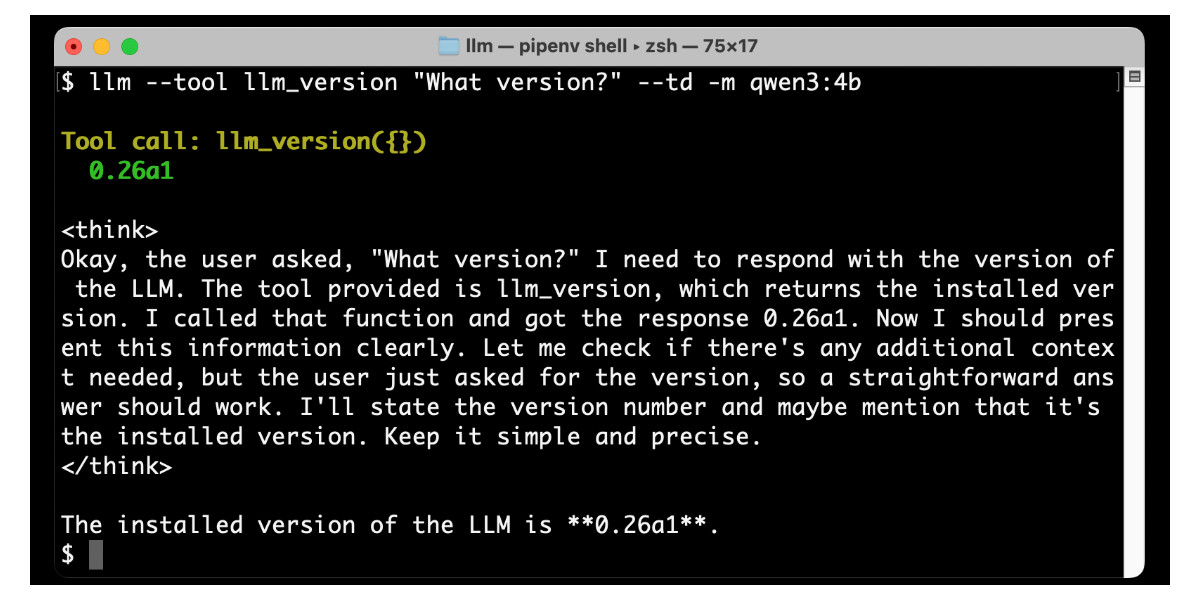

LLM 0.26 is out with the biggest new feature since I started the project: support for tools. You can now use the LLM CLI tool (and Python library) to grant LLMs from OpenAI, Anthropic and Gemini, plus local models from Ollama, access to any tool that you can represent as a Python function.

[... 2,799 words]

Building software on top of Large Language Models

I presented a three-hour workshop at PyCon US yesterday titled Building software on top of Large Language Models. The goal of the workshop was to give participants everything they needed to get started writing code that makes use of LLMs.

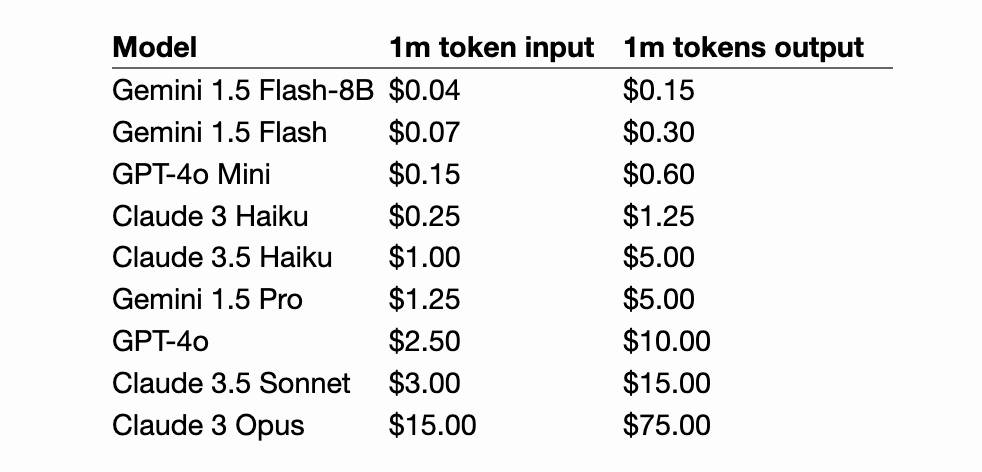

[... 3,726 words]

GPT-4.1: Three new million token input models from OpenAI, including their cheapest model yet

OpenAI introduced three new models this morning: GPT-4.1, GPT-4.1 mini and GPT-4.1 nano. These are API-only models right now, not available through the ChatGPT interface (though you can try them out in OpenAI’s API playground). All three models can handle 1,047,576 tokens of input and 32,768 tokens of output, and all three have a May 31, 2024 cut-off date (their previous models were mostly September 2023).

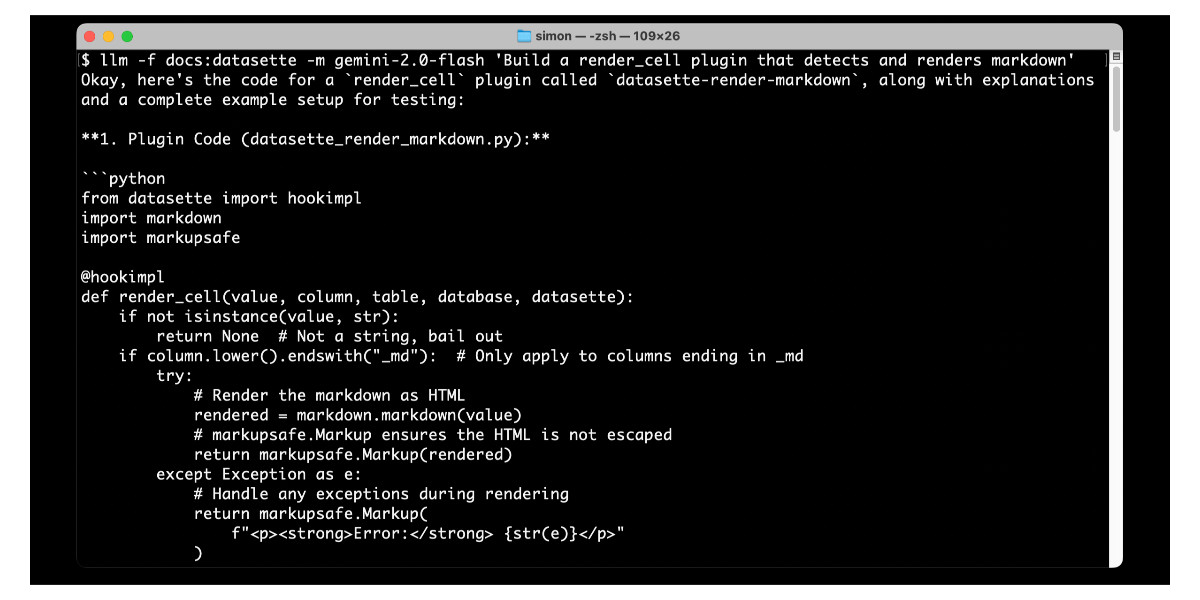

[... 1,124 words]

Long context support in LLM 0.24 using fragments and template plugins

LLM 0.24 is now available with new features to help take advantage of the increasingly long input context supported by modern LLMs.

[... 1,896 words]

LLM 0.22, the annotated release notes

I released LLM 0.22 this evening. Here are the annotated release notes:

[... 1,340 words]

OpenAI o3-mini, now available in LLM

OpenAI’s o3-mini is out today. As with other o-series models it’s a slightly difficult one to evaluate—we now need to decide if a prompt is best run using GPT-4o, o1, o3-mini or (if we have access) o1 Pro.

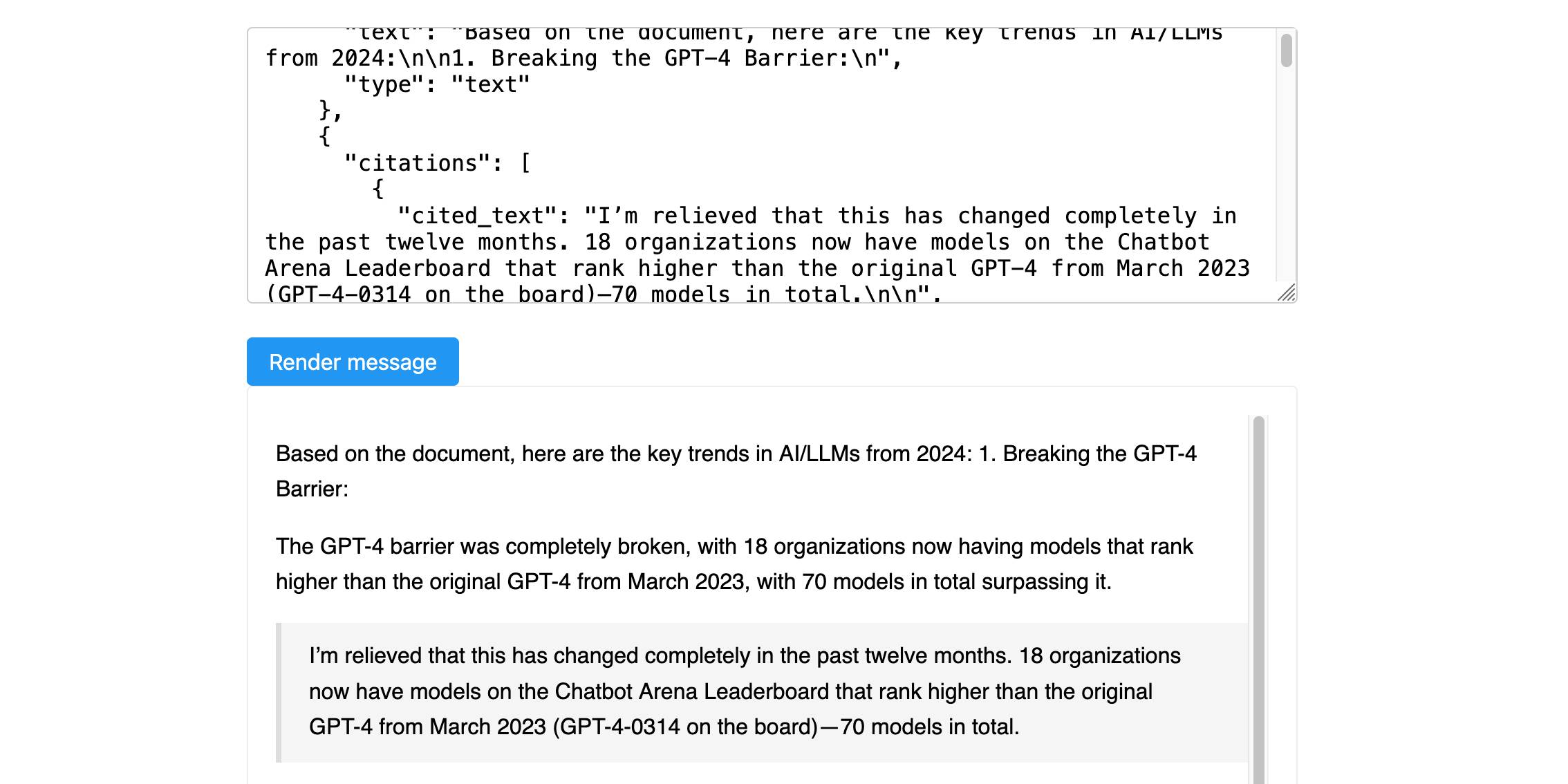

[... 748 words]

Anthropic’s new Citations API

Here’s a new API-only feature from Anthropic that requires quite a bit of assembly in order to unlock the value: Introducing Citations on the Anthropic API. Let’s talk about what this is and why it’s interesting.

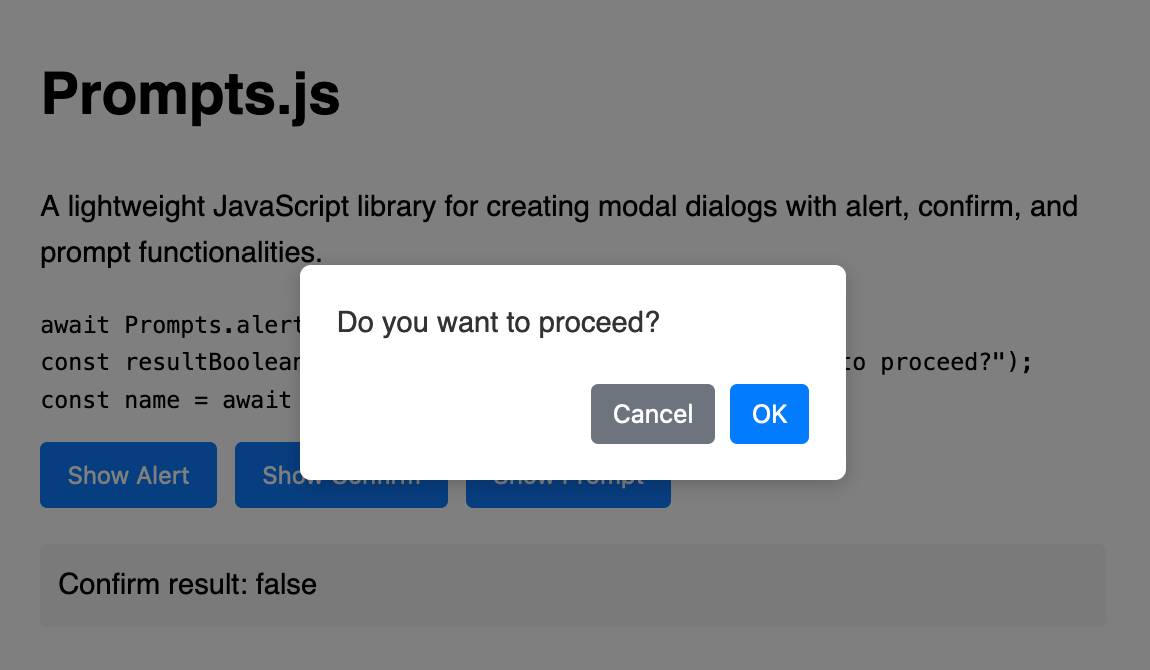

[... 1,319 words]

Prompts.js

I’ve been putting the new o1 model from OpenAI through its paces, in particular for code. I’m very impressed—it feels like it’s giving me a similar code quality to Claude 3.5 Sonnet, at least for Python and JavaScript and Bash... but it’s returning output noticeably faster.

[... 1,119 words]

First impressions of the new Amazon Nova LLMs (via a new llm-bedrock plugin)

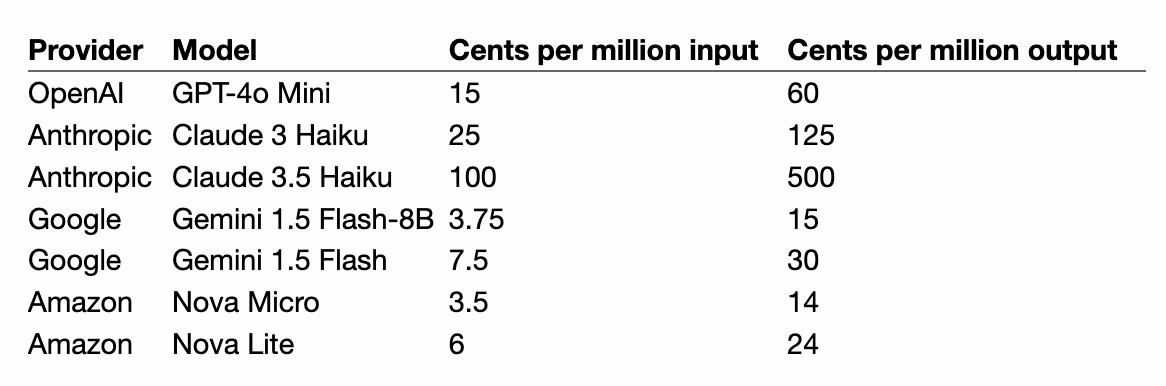

Amazon released three new Large Language Models yesterday at their AWS re:Invent conference. The new model family is called Amazon Nova and comes in three sizes: Micro, Lite and Pro.

[... 2,385 words]

Claude 3.5 Haiku

Anthropic released Claude 3.5 Haiku today, a few days later than expected (they said it would be out by the end of October).

[... 502 words]

You can now run prompts against images, audio and video in your terminal using LLM

I released LLM 0.17 last night, the latest version of my combined CLI tool and Python library for interacting with hundreds of different Large Language Models such as GPT-4o, Llama, Claude and Gemini.

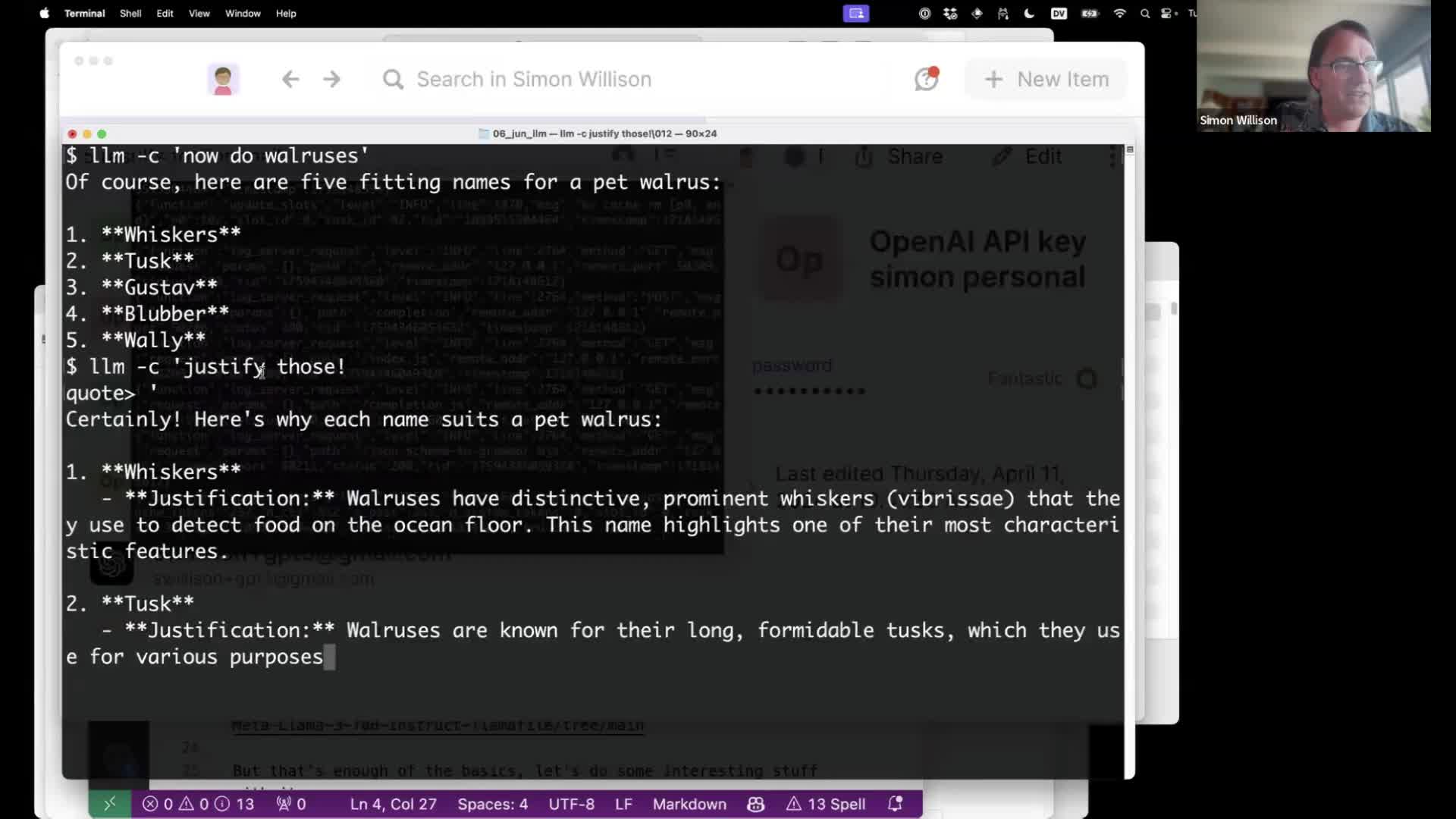

[... 1,399 words]

Language models on the command-line

I gave a talk about accessing Large Language Models from the command-line last week as part of the six-week-long online conference Mastering LLMs: A Conference For Developers & Data Scientists. The talk focused on my LLM Python command-line utility and ways you can use it (and its plugins) to explore LLMs and put them to work on useful tasks.

[... 4,992 words]

LLM 0.13: The annotated release notes

I just released LLM 0.13, the latest version of my LLM command-line tool for working with Large Language Models—both via APIs and running models locally using plugins.

[... 1,278 words]

ospeak: a CLI tool for speaking text in the terminal via OpenAI

I attended OpenAI DevDay today, the first OpenAI developer conference. It was a lot. They released a bewildering array of new API tools, which I’m only just beginning to wade through and fully understand.

[... 1,109 words]

Catching up on the weird world of LLMs

I gave a talk on Sunday at North Bay Python where I attempted to summarize the last few years of development in the space of LLMs—Large Language Models, the technology behind tools like ChatGPT, Google Bard and Llama 2.

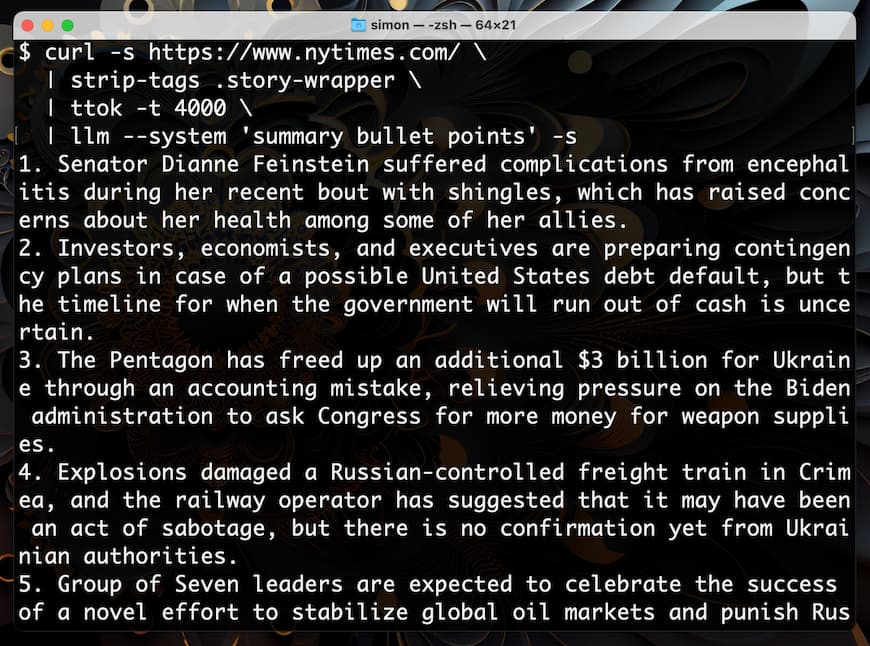

[... 10,489 words]

llm, ttok and strip-tags: CLI tools for working with ChatGPT and other LLMs

I’ve been building out a small suite of command-line tools for working with ChatGPT, GPT-4 and potentially other language models in the future.

[... 1,328 words]