July 2023

77 posts: 4 entries, 20 links, 7 quotes, 46 beats

July 13, 2023

Not every conversation I had at Anthropic revolved around existential risk. But dread was a dominant theme. At times, I felt like a food writer who was assigned to cover a trendy new restaurant, only to discover that the kitchen staff wanted to talk about nothing but food poisoning.

July 14, 2023

July 16, 2023

Increasingly powerful AI systems are being released at an increasingly rapid pace. [...] And yet not a single AI lab seems to have provided any user documentation. Instead, the only user guides out there appear to be Twitter influencer threads. Documentation-by-rumor is a weird choice for organizations claiming to be concerned about proper use of their technologies, but here we are.

Weeknotes: Self-hosted language models with LLM plugins, a new Datasette tutorial, a dozen package releases, a dozen TILs

A lot of stuff to cover from the past two and a half weeks.

[... 1,742 words]July 17, 2023

July 18, 2023

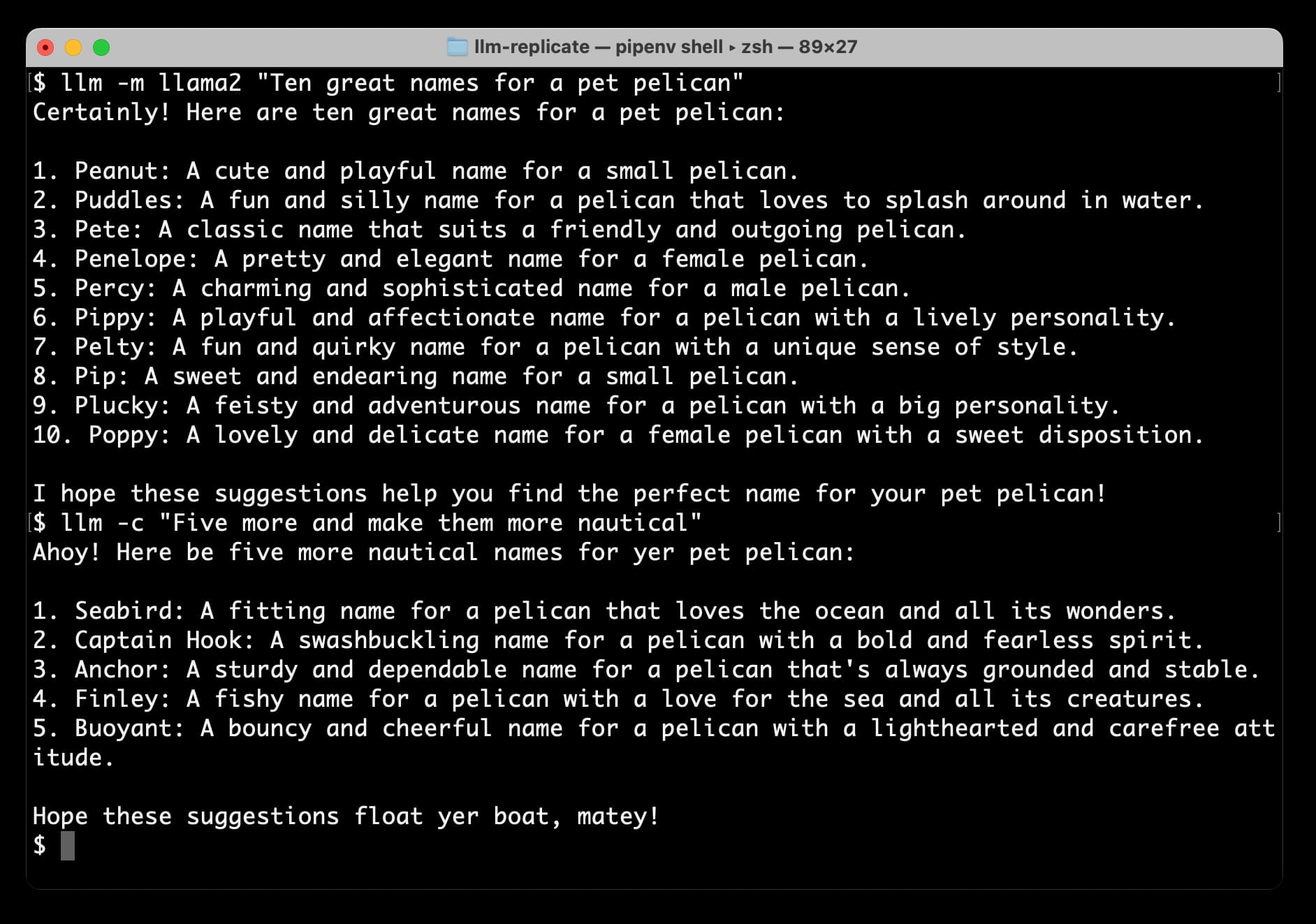

Accessing Llama 2 from the command-line with the llm-replicate plugin

The big news today is Llama 2, the new openly licensed Large Language Model from Meta AI. It’s a really big deal:

[... 1,206 words]Ollama (via) This tool for running LLMs on your own laptop directly includes an installer for macOS (Apple Silicon) and provides a terminal chat interface for interacting with models. They already have Llama 2 support working, with a model that downloads directly from their own registry service without need to register for an account or work your way through a waiting list.

July 19, 2023

llama2-mac-gpu.sh (via) Adrien Brault provided this recipe for compiling llama.cpp on macOS with GPU support enabled (“LLAMA_METAL=1 make”) and then downloading and running a GGML build of Llama 2 13B.

Llama 2: The New Open LLM SOTA. I’m in this Latent Space podcast, recorded yesterday, talking about the Llama 2 release.

July 20, 2023

Study claims ChatGPT is losing capability, but some experts aren’t convinced. Benj Edwards talks about the ongoing debate as to whether or not GPT-4 is getting weaker over time. I remain skeptical of those claims—I think it’s more likely that people are seeing more of the flaws now that the novelty has worn off.

I’m quoted in this piece: “Honestly, the lack of release notes and transparency may be the biggest story here. How are we meant to build dependable software on top of a platform that changes in completely undocumented and mysterious ways every few months?”

sqlite-vss v0.1.1 Annotated Release Notes (via) Alex Garcia’s sqlite-vss adds vector search directly to SQLite through a custom extension. It’s now easily installed for Python, Node.js, Deno, Elixir, Go, Rust and Ruby (“gem install sqlite-vss”), and is being used actively by enough people that Alex is getting actionable feedback, including fixes for memory leaks spotted in production.

Prompt injected OpenAI’s new Custom Instructions to see how it is implemented. ChatGPT added a new "custom instructions" feature today, which you can use to customize the system prompt used to control how it responds to you. swyx prompt-inject extracted the way it works:

The user provided the following information about themselves. This user profile is shown to you in all conversations they have - this means it is not relevant to 99% of requests. Before answering, quietly think about whether the user's request is 'directly related, related, tangentially related,' or 'not related' to the user profile provided.

I'm surprised to see OpenAI using "quietly think about..." in a prompt like this - I wouldn't have expected that language to be necessary.