11 posts tagged “s3-credentials”

s3-credentials is a CLI tool for creating and managing credentials for S3 buckets.

2025

s3-credentials 0.17. New release of my s3-credentials CLI tool for managing credentials needed to access just one S3 bucket. Here are the release notes in full:

That s3-credentials localserver command (documented here) is a little obscure, but I found myself wanting something like that to help me test out a new feature I'm building to help create temporary Litestream credentials using Amazon STS.

Most of that new feature was built by Claude Code from the following starting prompt:

Add a feature s3-credentials localserver which starts a localhost weberver running (using the Python standard library stuff) on port 8094 by default but -p/--port can set a different port and otherwise takes an option that names a bucket and then takes the same options for read--write/read-only etc as other commands. It also takes a required --refresh-interval option which can be set as 5m or 10h or 30s. All this thing does is reply on / to a GET request with the IAM expiring credentials that allow access to that bucket with that policy for that specified amount of time. It caches internally the credentials it generates and will return the exact same data up until they expire (it also tracks expected expiry time) after which it will generate new credentials (avoiding dog pile effects if multiple requests ask at the same time) and return and cache those instead.

Poe the Poet.

I was looking for a way to specify additional commands in my pyproject.toml file to execute using uv. There's an enormous issue thread on this in the uv issue tracker (300+ comments dating back to August 2024) and from there I learned of several options including this one, Poe the Poet.

It's neat. I added it to my s3-credentials project just now and the following now works for running the live preview server for the documentation:

uv run poe livehtml

Here's the snippet of TOML I added to my pyproject.toml:

[dependency-groups] test = [ "pytest", "pytest-mock", "cogapp", "moto>=5.0.4", ] docs = [ "furo", "sphinx-autobuild", "myst-parser", "cogapp", ] dev = [ {include-group = "test"}, {include-group = "docs"}, "poethepoet>=0.38.0", ] [tool.poe.tasks] docs = "sphinx-build -M html docs docs/_build" livehtml = "sphinx-autobuild -b html docs docs/_build" cog = "cog -r docs/*.md"

Since poethepoet is in the dev= dependency group any time I run uv run ... it will be available in the environment.

2024

s3-credentials 0.16.

I spent entirely too long this evening trying to figure out why files in my new supposedly public S3 bucket were unavailable to view. It turns out these days you need to set a PublicAccessBlockConfiguration of {"BlockPublicAcls": false, "IgnorePublicAcls": false, "BlockPublicPolicy": false, "RestrictPublicBuckets": false}.

The s3-credentials --create-bucket --public option now does that for you. I also added a s3-credentials debug-bucket name-of-bucket command to help figure out why a bucket isn't working as expected.

2022

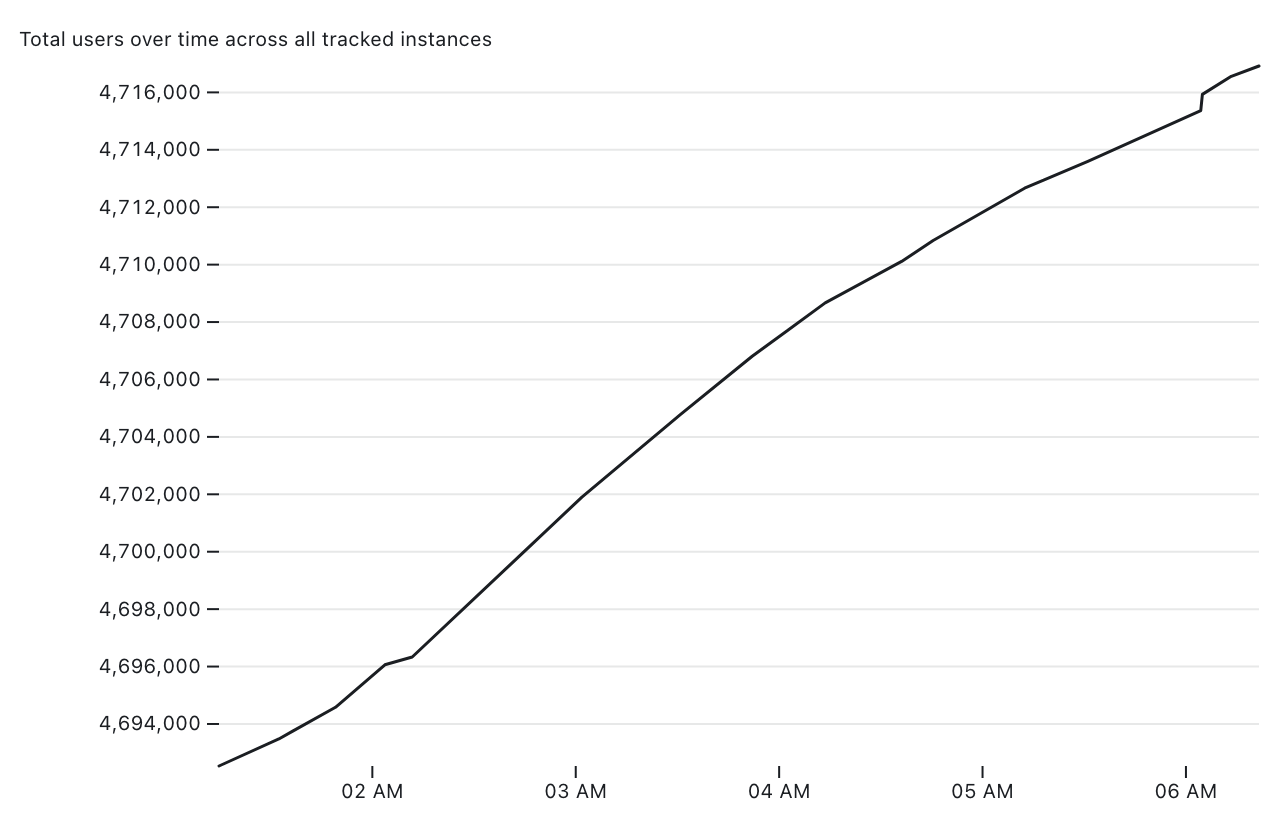

Tracking Mastodon user numbers over time with a bucket of tricks

Mastodon is definitely having a moment. User growth is skyrocketing as more and more people migrate over from Twitter.

[... 1,534 words]Weeknotes: Datasette Lite, s3-credentials, shot-scraper, datasette-edit-templates and more

Despite distractions from AI I managed to make progress on a bunch of different projects this week, including new releases of s3-credentials and shot-scraper, a new datasette-edit-templates plugin and a small but neat improvement to Datasette Lite.

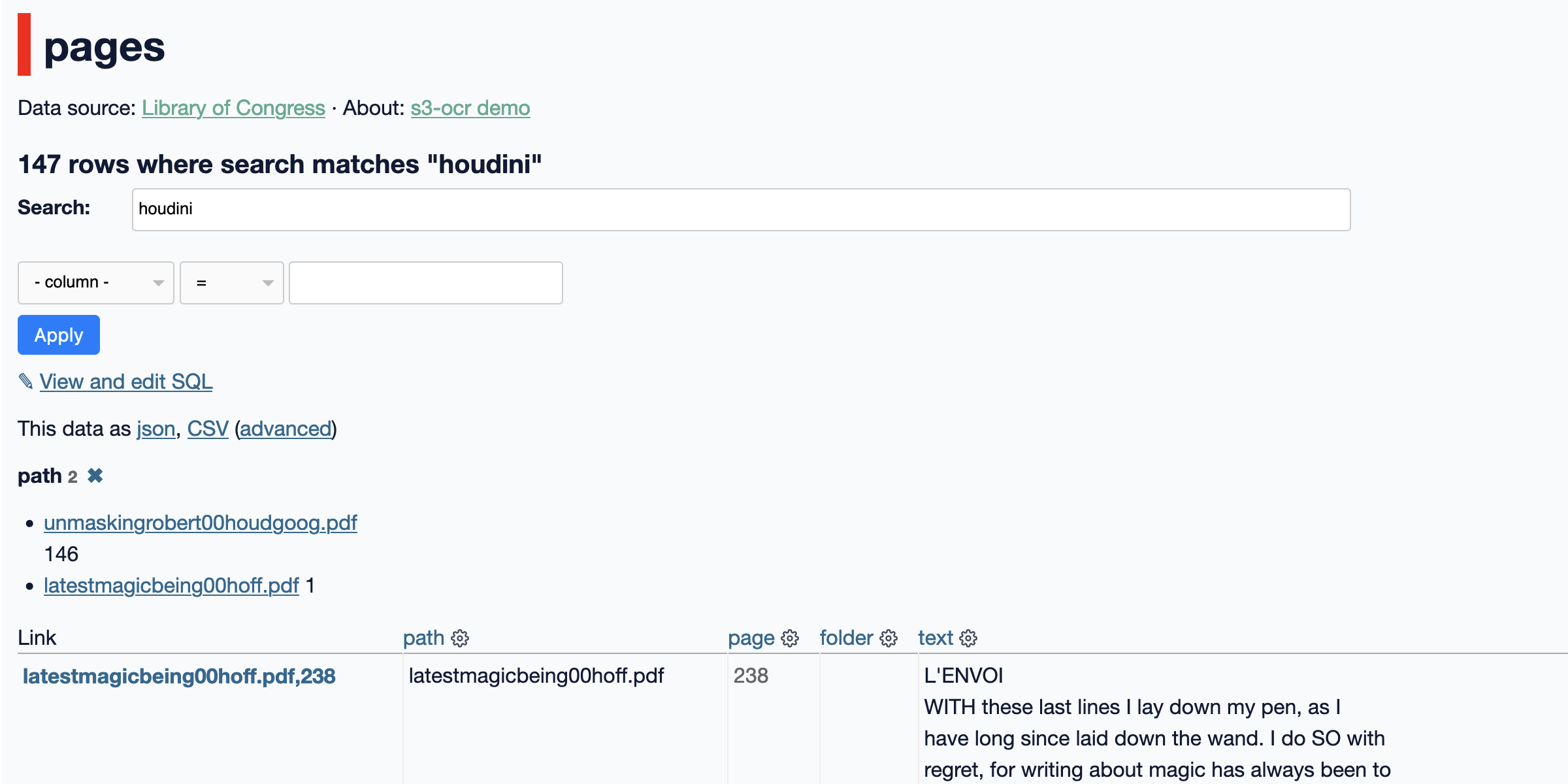

[... 1,562 words]s3-ocr: Extract text from PDF files stored in an S3 bucket

I’ve released s3-ocr, a new tool that runs Amazon’s Textract OCR text extraction against PDF files in an S3 bucket, then writes the resulting text out to a SQLite database with full-text search configured so you can run searches against the extracted data.

[... 1,493 words]Weeknotes: s3-credentials prefix and Datasette 0.60

A new release of s3-credentials with support for restricting access to keys that start with a prefix, Datasette 0.60 and a write-up of my process for shipping a feature.

[... 1,134 words]2021

Weeknotes: git-history, bug magnets and s3-credentials --public

I’ve stopped considering my projects “shipped” until I’ve written a proper blog entry about them, so yesterday I finally shipped git-history, coinciding with the release of version 0.6—a full 27 days after the first 0.1.

[... 1,013 words]s3-credentials 0.8. The latest release of my s3-credentials CLI tool for creating S3 buckets with credentials to access them (with read-write, read-only or write-only policies) adds a new --public option for creating buckets that allow public access, such that anyone who knows a filename can download a file. The s3-credentials put-object command also now sets the appropriate Content-Type heading on the uploaded object.

Weeknotes: git-history, created for a Git scraping workshop

My main project this week was a 90 minute workshop I delivered about Git scraping at Coda.Br 2021, a Brazilian data journalism conference, on Friday. This inspired the creation of a brand new tool, git-history, plus smaller improvements to a range of other projects.

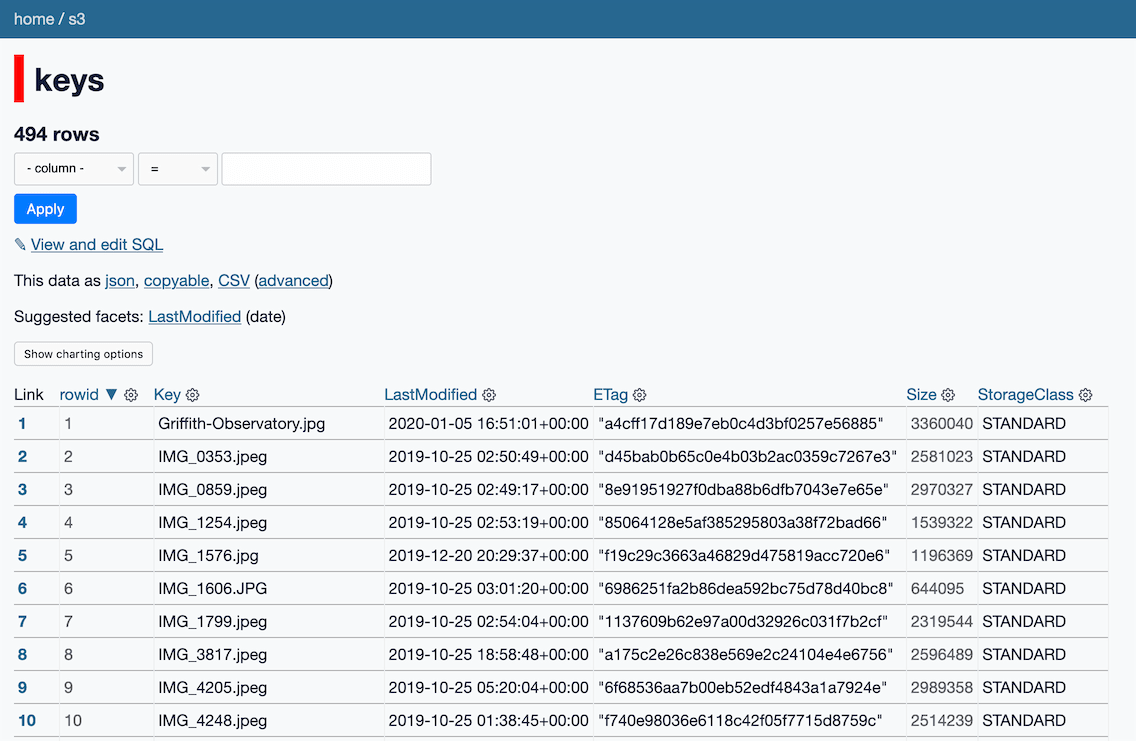

[... 1,239 words]s3-credentials: a tool for creating credentials for S3 buckets

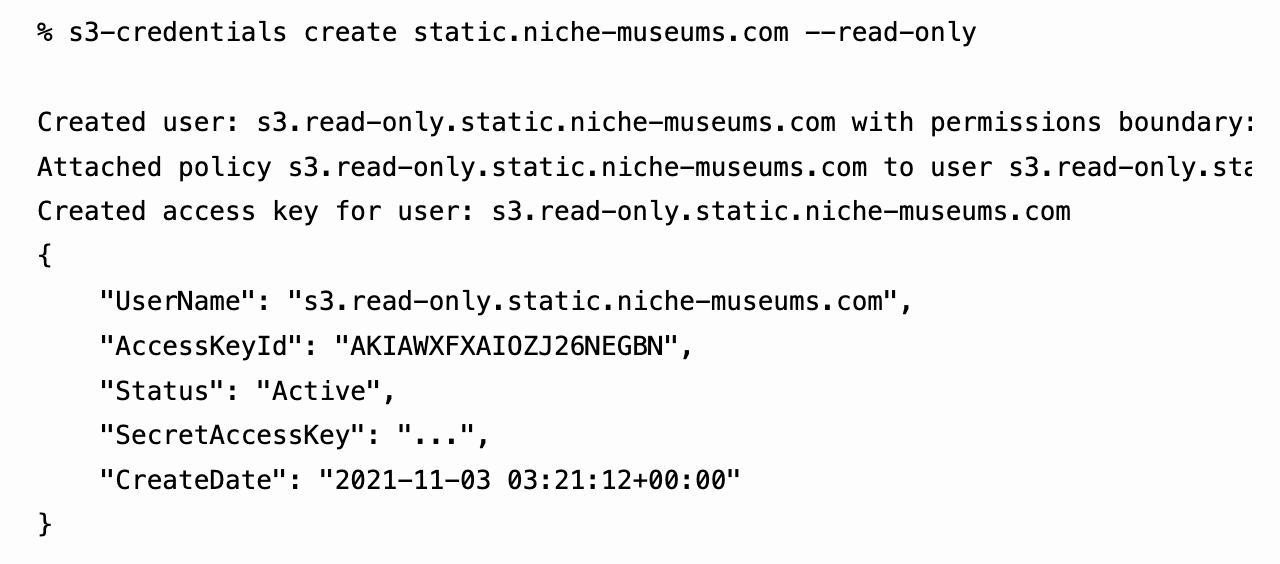

I’ve built a command-line tool called s3-credentials to solve a problem that’s been frustrating me for ages: how to quickly and easily create AWS credentials (an access key and secret key) that have permission to read or write from just a single S3 bucket.

[... 1,618 words]