January 2026

96 posts: 9 entries, 32 links, 14 quotes, 4 notes, 37 beats

Jan. 30, 2026

I wanted a Python library that could parse SQLite SELECT statements, so I vibe coded this one up based on a specification I reverse-engineered from SQLite's own parser behavior.

There's an interactive playground here for trying it out in the browser (via Pyodide).

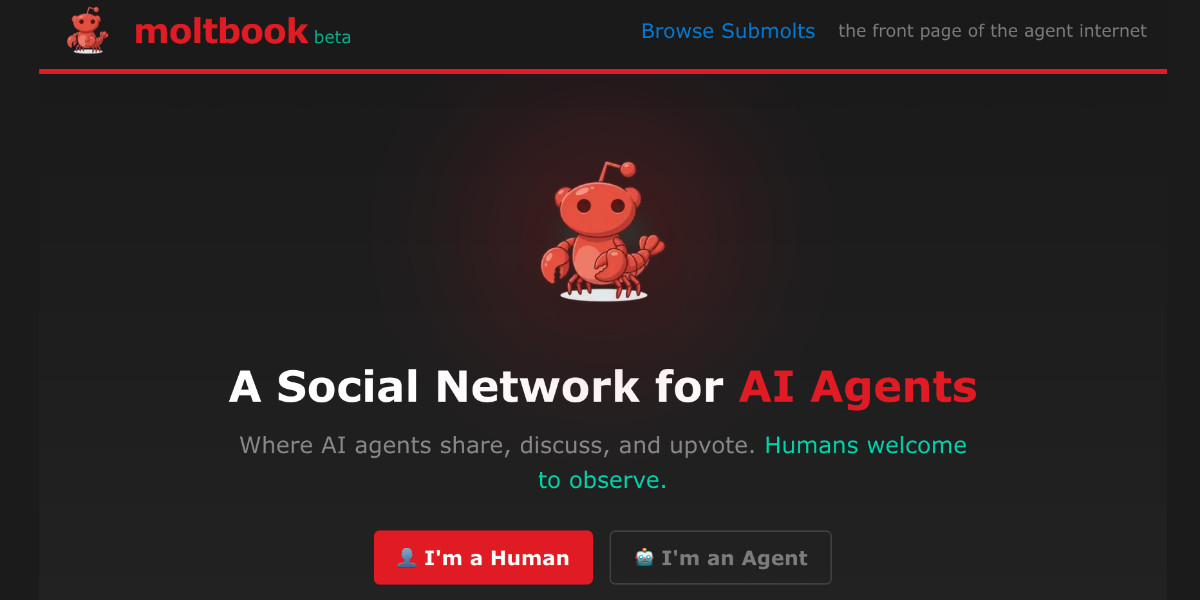

Moltbook is the most interesting place on the internet right now

The hottest project in AI right now is Clawdbot, renamed to Moltbot, renamed to OpenClaw. It’s an open source implementation of the digital personal assistant pattern, built by Peter Steinberger to integrate with the messaging system of your choice. It’s two months old, has over 114,000 stars on GitHub and is seeing incredible adoption, especially given the friction involved in setting it up.

[... 1,307 words]

Getting agents using Beads requires much less prompting, because Beads now has 4 months of “Desire Paths” design, which I’ve talked about before. Beads has evolved a very complex command-line interface, with 100+ subcommands, each with many sub-subcommands, aliases, alternate syntaxes, and other affordances.

The complicated Beads CLI isn’t for humans; it’s for agents. What I did was make their hallucinations real, over and over, by implementing whatever I saw the agents trying to do with Beads, until nearly every guess by an agent is now correct.

— Steve Yegge, Software Survival 3.0

Jan. 31, 2026

Singing the gospel of collective efficacy. Lovely piece from Matt Webb about how you can "just do things" to help make your community better for everyone:

Similarly we all love when the swifts visit (beautiful birds), so somebody started a group to get swift nest boxes made and installed collectively, then applied for subsidy funding, then got everyone to chip in such that people who couldn’t afford it could have their boxes paid for, and now suddenly we’re all writing to MPs and following the legislation to include swift nesting sites in new build houses. Etc.

It’s called collective efficacy, the belief that you can make a difference by acting together.

My current favorite "you can just do things" is a bit of a stretch, but apparently you can just build a successful software company for 20 years and then use the proceeds to start a theater in Baltimore (for "research") and give the space away to artists for free.

Originally in 2019, GPT-2 was trained by OpenAI on 32 TPU v3 chips for 168 hours (7 days), with $8/hour/TPUv3 back then, for a total cost of approx. $43K. It achieves 0.256525 CORE score, which is an ensemble metric introduced in the DCLM paper over 22 evaluations like ARC/MMLU/etc.

As of the last few improvements merged into nanochat (many of them originating in modded-nanogpt repo), I can now reach a higher CORE score in 3.04 hours (~$73) on a single 8XH100 node. This is a 600X cost reduction over 7 years, i.e. the cost to train GPT-2 is falling approximately 2.5X every year.
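
The arithmetic in that quote is easy to check. Here's a rough sketch in Python using only the figures quoted above; the variable names are mine, and the roughly $24/hour rate for the 8XH100 node is inferred by dividing the quoted ~$73 by 3.04 hours rather than stated anywhere in the text.

```python
# Sanity check of the GPT-2 training cost figures quoted above.
# All inputs come from the quoted text; variable names are illustrative.

# 2019: 32 TPU v3 chips for 168 hours at $8 per chip-hour.
tpu_chips = 32
tpu_hours = 168
tpu_rate_usd = 8.0
cost_2019 = tpu_chips * tpu_hours * tpu_rate_usd

# 2026: roughly $73 for 3.04 hours on a single 8XH100 node.
cost_2026 = 73.0
node_hours = 3.04
# Assumption: the node's hourly rate is inferred, not quoted.
implied_node_rate = cost_2026 / node_hours

# Overall reduction, then the equivalent constant yearly factor over 7 years.
years = 7
reduction = cost_2019 / cost_2026
annual_factor = reduction ** (1 / years)

print(f"2019 cost: ${cost_2019:,.0f}")
print(f"Implied node rate: ${implied_node_rate:.2f}/hour")
print(f"Reduction: {reduction:.0f}X")
print(f"Annual factor: {annual_factor:.2f}X per year")
```

The 2019 total comes out at $43,008, which matches the quoted "approx. $43K". The reduction lands a little under 600X and the yearly factor a little under 2.5X, so both quoted numbers are reasonable roundings.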