Tuesday, 4th November 2025

Every time an engineer evaluates a language that isn’t “theirs,” their brain is literally working against them. They’re not just analyzing technical trade offs, they’re contemplating a version of themselves that doesn’t exist yet, that feels threatening to the version that does. The Python developer reads case studies about Go’s performance and their amygdala quietly marks each one as a threat to be neutralized. The Rust advocate looks at identical problems and their Default Mode Network constructs narratives about why “only” Rust can solve them.

We’re not lying. We genuinely believe our reasoning is sound. That’s what makes identity based thinking so expensive, and so invisible.

— Steve Francia, Why Engineers Can't Be Rational About Programming Languages

MCP Colors: Systematically deal with prompt injection risk (via) Tim Kellogg proposes a neat way to think about prompt injection, especially with respect to MCP tools.

Classify every tool with a color: red if it exposes the agent to untrusted (potentially malicious) instructions, blue if it involves a "critical action" - something you would not want an attacker to be able to trigger.

This means you can configure your agent to actively avoid mixing the two colors at once:

The Chore: Go label every data input, and every tool (especially MCP tools). For MCP tools & resources, you can use the _meta object to keep track of the color. The agent can decide at runtime (or earlier) if it’s gotten into an unsafe state.

Personally, I like to automate. I needed to label ~200 tools, so I put them in a spreadsheet and used an LLM to label them. That way, I could focus on being precise and clear about my criteria for what constitutes “red”, “blue” or “neither”. That way I ended up with an artifact that scales beyond my initial set of tools.
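To make the runtime check concrete, here is a minimal sketch of one possible policy: once any red tool has run in a session, refuse every blue tool. The `ColorGuard` class, the `LabeledTool` shape and the `color` key inside `_meta` are all hypothetical names chosen for illustration; the post only says that `_meta` can carry the label.

```typescript
// Hypothetical color labels, following the red/blue scheme described above.
type Color = "red" | "blue" | "neither";

// Hypothetical shape: a tool plus the color label stored in its `_meta` object.
interface LabeledTool {
  name: string;
  _meta: { color: Color };
}

// Assumed policy: after any red tool has run, blue tools are refused.
class ColorGuard {
  // True once untrusted (red) content may have entered the session.
  private tainted = false;

  // Returns true if the call may proceed, false if it would mix red and blue.
  allow(tool: LabeledTool): boolean {
    const color = tool._meta.color;
    if (color === "blue" && this.tainted) {
      return false;
    }
    if (color === "red") {
      this.tainted = true;
    }
    return true;
  }
}
```

With this policy a blue call is fine in a fresh session, but the same call is refused after a red tool such as a web fetch has run. A stricter variant could also block red tools once a blue one has been used.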

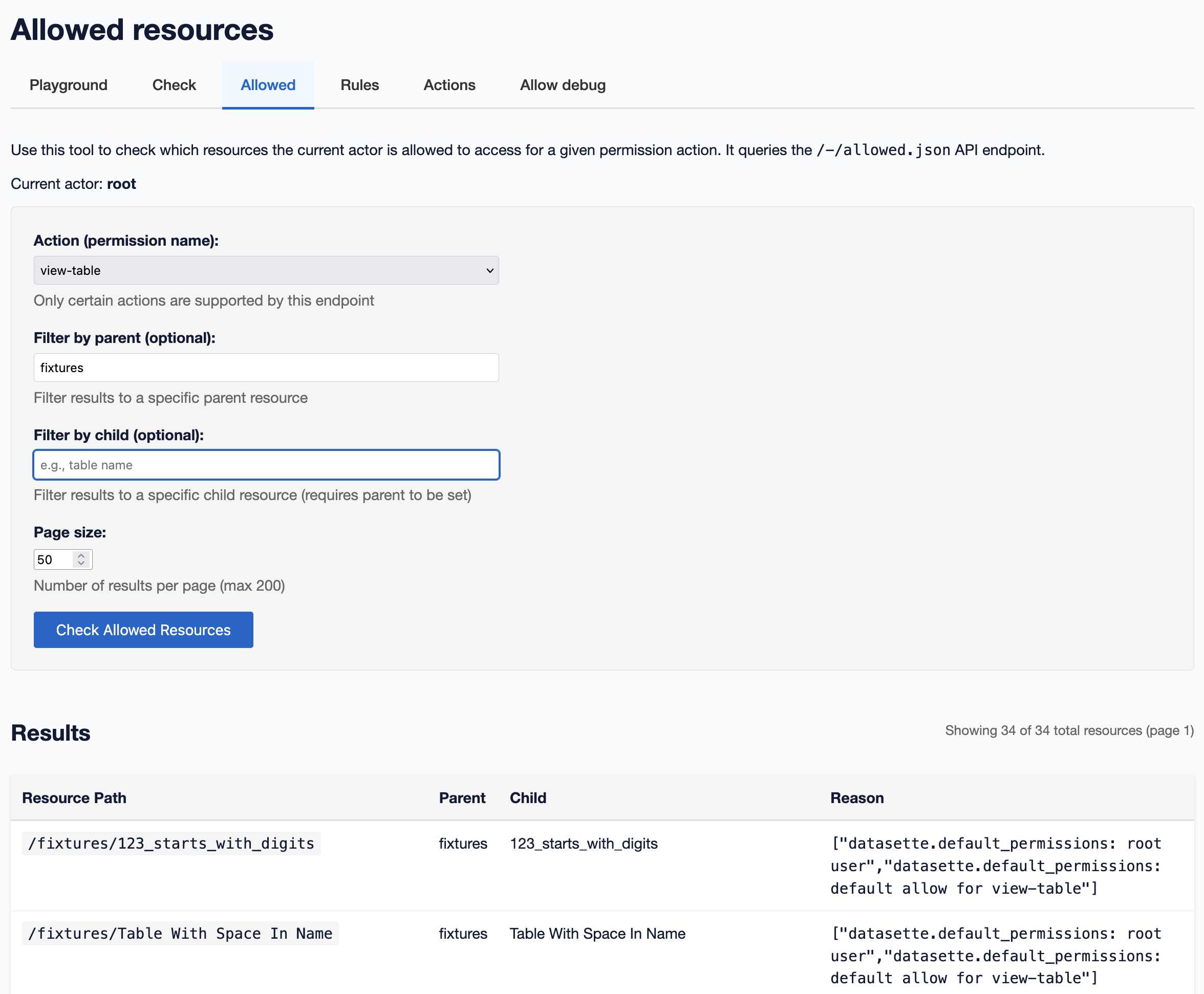

A new SQL-powered permissions system in Datasette 1.0a20

Datasette 1.0a20 is out with the biggest breaking API change on the road to 1.0, improving how Datasette’s permissions system works by migrating permission logic to SQL running in SQLite. This release involved 163 commits, with 10,660 additions and 1,825 deletions, most of which was written with the help of Claude Code.

[... 2,750 words]

Code execution with MCP: Building more efficient agents (via) When I wrote about Claude Skills I mentioned that I don't use MCP at all any more when working with coding agents - I find CLI utilities and libraries like Playwright Python to be a more effective way of achieving the same goals.

This new piece from Anthropic proposes a way to bring the two worlds more closely together.

It identifies two challenges with MCP as it exists today. The first has been widely discussed before: all of those tool descriptions take up a lot of valuable real estate in the agent context even before you start using them.

The second is more subtle but equally interesting: chaining multiple MCP tools together involves passing their responses through the context, absorbing more valuable tokens and introducing chances for the LLM to make additional mistakes.

What if you could turn MCP tools into code functions instead, and then let the LLM wire them together with executable code?

Anthropic's example here imagines a system that turns MCP tools into TypeScript files on disk, looking something like this:

```typescript
// ./servers/google-drive/getDocument.ts
interface GetDocumentInput {
  documentId: string;
}

interface GetDocumentResponse {
  content: string;
}

/* Read a document from Google Drive */
export async function getDocument(input: GetDocumentInput): Promise<GetDocumentResponse> {
  return callMCPTool<GetDocumentResponse>('google_drive__get_document', input);
}
```

This takes up no tokens at all - it's a file on disk. In a similar manner to Skills the agent can navigate the filesystem to discover these definitions on demand.
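Since no implementation was published, here is a rough sketch of what the generation step might look like: a pure function that takes an MCP tool definition (its `name`, `description` and JSON Schema `inputSchema`) and returns the source text of a wrapper file. The names `renderWrapper`, `toCamelCase` and `toTsType` are hypothetical, only flat primitive properties are handled, and the `server__tool` naming is an assumption copied from the example above.

```typescript
// Subset of an MCP tool definition: name, description and a JSON Schema for the input.
interface ToolDefinition {
  name: string;
  description: string;
  inputSchema: {
    properties: Record<string, { type: string }>;
    required?: string[];
  };
}

// Hypothetical helper: "get_document" -> "getDocument".
function toCamelCase(name: string): string {
  return name.replace(/_([a-z])/g, (_match: string, letter: string) => letter.toUpperCase());
}

// Map a few JSON Schema primitive types to TypeScript; anything else becomes unknown.
function toTsType(schemaType: string): string {
  switch (schemaType) {
    case "string":
      return "string";
    case "number":
    case "integer":
      return "number";
    case "boolean":
      return "boolean";
    default:
      return "unknown";
  }
}

// Render the source text of one wrapper file for one tool on one server.
function renderWrapper(server: string, tool: ToolDefinition): string {
  const fn = toCamelCase(tool.name);
  const typeName = fn.charAt(0).toUpperCase() + fn.slice(1);
  const required = tool.inputSchema.required || [];
  const fields = Object.keys(tool.inputSchema.properties)
    .map((key) => {
      const optional = required.indexOf(key) === -1 ? "?" : "";
      return `  ${key}${optional}: ${toTsType(tool.inputSchema.properties[key].type)};`;
    })
    .join("\n");
  return [
    `interface ${typeName}Input {`,
    fields,
    `}`,
    ``,
    `/* ${tool.description} */`,
    `export async function ${fn}(input: ${typeName}Input) {`,
    `  return callMCPTool('${server}__${tool.name}', input);`,
    `}`,
  ].join("\n");
}
```

A real generator would also need to emit response types, handle nested schemas, and write each result to a path like `./servers/<server>/<tool>.ts`; none of that is attempted here.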

Then it can wire them together by generating code:

```typescript
const transcript = (await gdrive.getDocument({ documentId: 'abc123' })).content;
await salesforce.updateRecord({
  objectType: 'SalesMeeting',
  recordId: '00Q5f000001abcXYZ',
  data: { Notes: transcript }
});
```

Notably, the example here avoids round-tripping the response from the gdrive.getDocument() call through the model on the way to the salesforce.updateRecord() call - which is faster, more reliable, saves on context tokens, and avoids the model being exposed to any potentially sensitive data in that document.
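To illustrate that last point, here is a small self-contained sketch with in-memory stand-ins for the two wrappers. The stubs, the `records` store and the `runAgentScript` function are all hypothetical; the idea being shown is only that the document content stays inside the execution environment, and the model would see just the short string the script returns.

```typescript
// In-memory stand-in for Salesforce; a real wrapper would call an MCP server.
const records: Record<string, { Notes?: string }> = {};

// Stub wrapper that returns a fake document body.
const gdrive = {
  async getDocument(input: { documentId: string }): Promise<{ content: string }> {
    return { content: `Transcript for ${input.documentId}: ...long meeting notes...` };
  },
};

// Stub wrapper that stores the update in memory.
const salesforce = {
  async updateRecord(input: {
    objectType: string;
    recordId: string;
    data: { Notes: string };
  }): Promise<void> {
    records[input.recordId] = input.data;
  },
};

// The agent-written script: only its return value would go back into model context.
async function runAgentScript(): Promise<string> {
  const recordId = "00Q5f000001abcXYZ";
  const transcript = (await gdrive.getDocument({ documentId: "abc123" })).content;
  await salesforce.updateRecord({
    objectType: "SalesMeeting",
    recordId,
    data: { Notes: transcript },
  });
  // Report a summary rather than the transcript itself.
  return `Updated ${recordId} with ${transcript.length} characters of notes`;
}
```

The transcript reaches the record store but never appears in the returned summary, which is the property the paragraph above describes.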

This all looks very solid to me! I think it's a sensible way to take advantage of the strengths of coding agents and address some of the major drawbacks of MCP as it is usually implemented today.

There's one catch: Anthropic outline the proposal in some detail but provide no code to execute on it! Implementation is left as an exercise for the reader:

If you implement this approach, we encourage you to share your findings with the MCP community.