8 posts tagged “riley-goodside”

2025

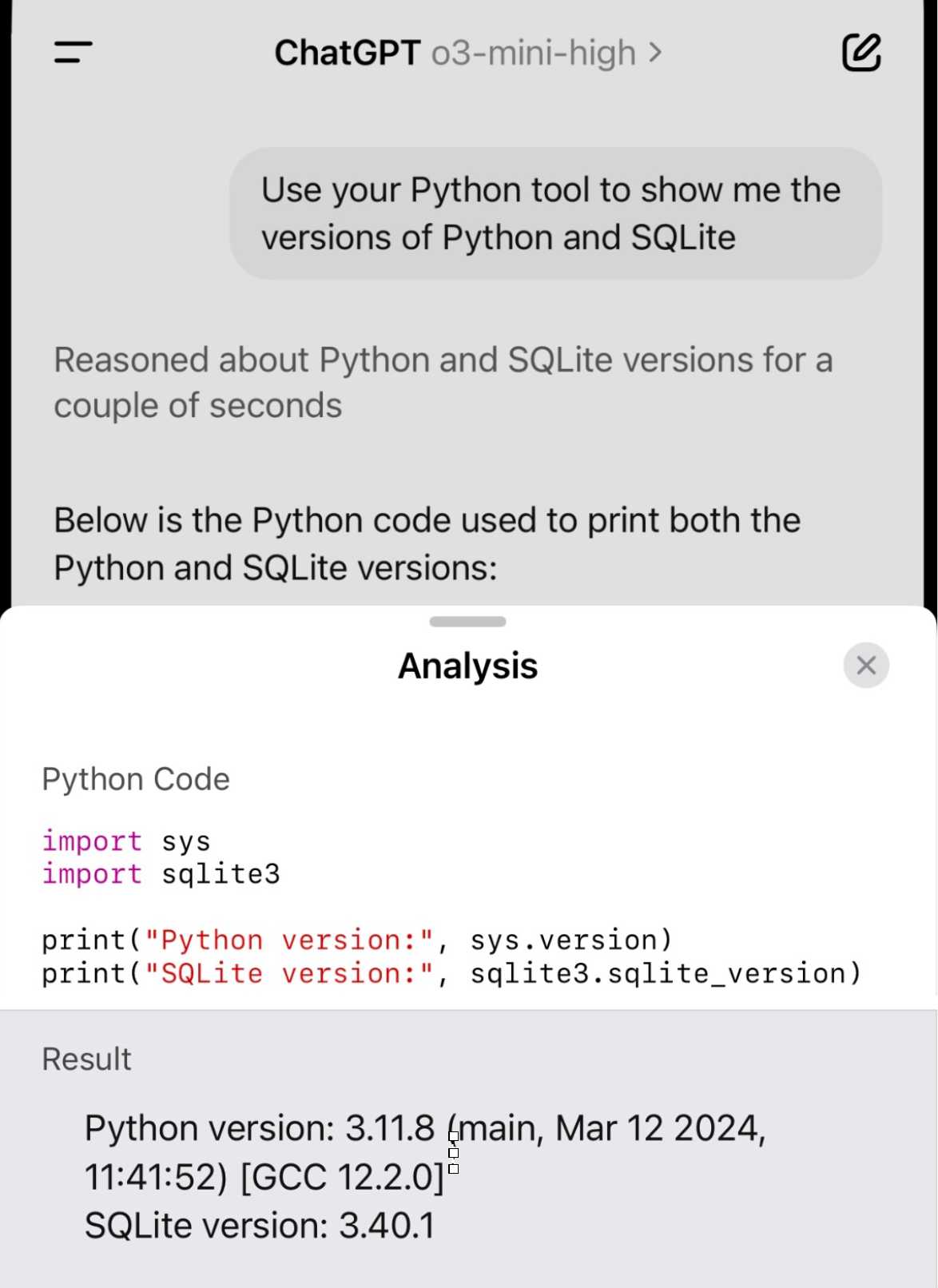

Demo of ChatGPT Code Interpreter running in o3-mini-high. OpenAI made GPT-4.5 available to Plus ($20/month) users today. I was a little disappointed with GPT-4.5 when I tried it through the API, but having access in the ChatGPT interface meant I could use it with existing tools such as Code Interpreter, which made its strengths a whole lot more evident. In one transcript I had it design and test its own version of the JSON Schema succinct DSL I published last week.

Riley Goodside then spotted that Code Interpreter has been quietly enabled for other models too, including the excellent o3-mini reasoning model. This means you can have o3-mini reason about code, write that code, test it, iterate on it and keep going until it gets something that works.
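The write, test, iterate loop described above can be sketched roughly as follows. This is a minimal illustration, not how Code Interpreter is implemented: the names `run_candidate` and `iterate_until_working` are hypothetical, and a fixed list of candidate snippets stands in for the model, which in a real agent would see the failure and write the next attempt itself.

```python
from typing import Callable, Iterable, Optional


def run_candidate(source: str, check: Callable[[dict], bool]) -> bool:
    """Execute candidate code in a fresh namespace and report whether the check passes."""
    namespace: dict = {}
    try:
        exec(source, namespace)  # run the candidate code
        return check(namespace)  # test what it defined
    except Exception:
        # Any error while running or testing counts as a failed attempt.
        return False


def iterate_until_working(
    candidates: Iterable[str], check: Callable[[dict], bool]
) -> Optional[str]:
    """Try candidates in order and return the first one that passes, else None."""
    for source in candidates:
        if run_candidate(source, check):
            return source
    return None


# Hypothetical stand-in for successive model attempts: the first one has a bug.
attempts = [
    "def add(a, b):\n    return a - b\n",
    "def add(a, b):\n    return a + b\n",
]

working = iterate_until_working(attempts, lambda ns: ns["add"](2, 3) == 5)
print(working)
```

The important part is that the check runs real code rather than trusting the model's own description of what the code does.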

Code Interpreter remains my favorite implementation of the "coding agent" pattern, despite receiving very few upgrades in the two years after its initial release. Plugging much stronger models into it than the previous GPT-4o default makes it even more useful.

Nothing about this in the ChatGPT release notes yet, but I've tested it in the ChatGPT iOS app and mobile web app and it definitely works there.

2024

An LLM knows every work of Shakespeare but can’t say which it read first. In this material sense a model hasn’t read at all.

To read is to think. Only at inference is there space for serendipitous inspiration, which is why LLMs have so little of it to show for all they’ve seen.

o1 prompting is alien to me. Its thinking, gloriously effective at times, is also dreamlike and unamenable to advice.

Just say what you want and pray. Any notes on “how” will be followed with the diligence of a brilliant intern on ketamine.

2023

Examples of weird GPT-4 behavior for the string “ davidjl”. GPT-4, when told to repeat or otherwise process the string “ davidjl” (note the leading space character), treats it as “jndl” or “jspb” or “JDL” instead. It turns out “ davidjl” has its own single token in the tokenizer: token ID 23282, presumably dating back to the GPT-2 days.

Riley Goodside refers to these as “glitch tokens”.

This token might refer to Reddit user davidjl123 who ranks top of the league for the old /r/counting subreddit, with 163,477 posts there which presumably ended up in older training data.

I think prompt engineering can be divided into “context engineering”, selecting and preparing relevant context for a task, and “prompt programming”, writing clear instructions. For an LLM search application like Perplexity, both matter a lot, but only the final, presentation-oriented stage of the latter is vulnerable to being echoed.

2022

“You are GPT-3”. Genius piece of prompt design by Riley Goodside. “A long-form GPT-3 prompt for assisted question-answering with accurate arithmetic, string operations, and Wikipedia lookup. Generated IPython commands (in green) are pasted into IPython and output is pasted back into the prompt (no green).” Uses “Out[” as a stop sequence to ensure GPT-3 stops at each generated IPython prompt rather than inventing the output itself.
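To illustrate what the stop sequence buys you, here is a small sketch of the effect, done locally on a string. The function name `truncate_at_stop` and the sample completion are made up for illustration; in practice the API applies the stop sequence during generation, so the invented output is never produced at all.

```python
def truncate_at_stop(text: str, stop: str) -> str:
    """Return text up to, but not including, the first occurrence of stop."""
    index = text.find(stop)
    return text if index == -1 else text[:index]


# Hypothetical raw completion: the model writes a command, then carries on
# and makes up what IPython would have printed.
raw_completion = "In [1]: 123 * 456\nOut[1]: 56088\nIn [2]: "

# Cutting at "Out[" keeps only the command, which can then be run for real.
command = truncate_at_stop(raw_completion, "Out[")
print(repr(command))
```

The real IPython output is then pasted back into the prompt, so the arithmetic comes from Python rather than from the model.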

Show HN: A new way to use GPT-3 to generate code (and everything else).

Riley Goodside is my favourite Twitter follow for GPT-3 tips. Here he describes a powerful prompt pattern he's designed which lets you generate extremely complex code output by asking GPT-3 to fill in $$areas like this$$ with different patterns, then stitch them together into full HTML or other source code files. It's really clever.
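A rough sketch of the stitching half of that pattern, assuming placeholders are written as `$$name$$`. The helper names `find_placeholders` and `stitch` are hypothetical, and the `fills` dictionary is hard-coded here where the real pattern would use a separate GPT-3 completion for each area.

```python
import re

# Matches $$some description$$ and captures the text between the markers.
PLACEHOLDER = re.compile(r"\$\$(.+?)\$\$")


def find_placeholders(template: str) -> list[str]:
    """List the placeholder names in the order they appear."""
    return PLACEHOLDER.findall(template)


def stitch(template: str, fills: dict[str, str]) -> str:
    """Replace each $$name$$ with its generated fill."""
    return PLACEHOLDER.sub(lambda match: fills[match.group(1)], template)


template = (
    "<html><head><title>$$page title$$</title></head>"
    "<body>$$main content$$</body></html>"
)

# Stand-ins for text that would come back from the model.
fills = {"page title": "Hello", "main content": "<p>Hi there</p>"}

print(find_placeholders(template))
print(stitch(template, fills))
```

Splitting the work this way keeps each generation small, so no single completion has to produce the whole file.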