43 posts tagged “gpt-4”

2023

Understanding GPT tokenizers

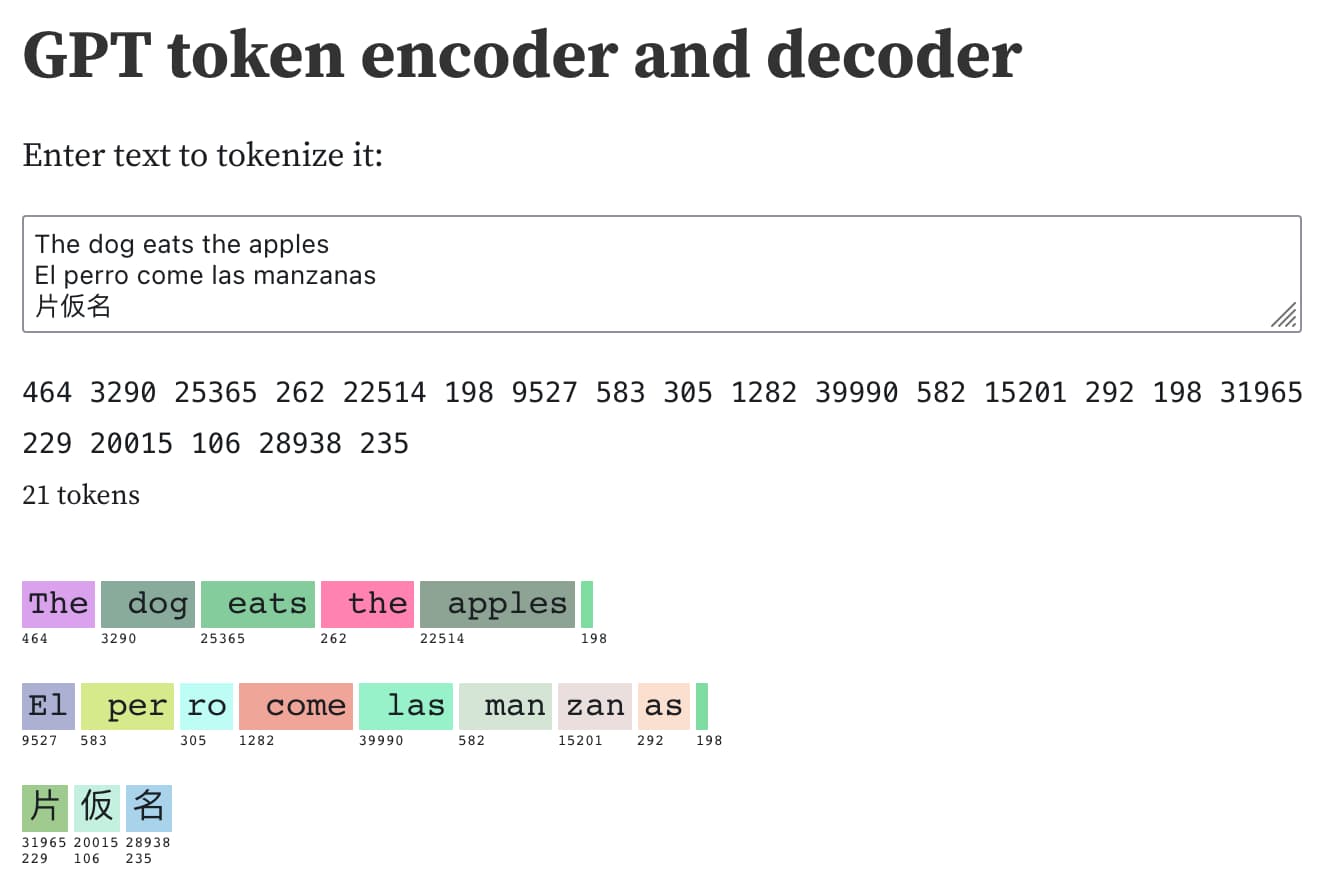

Large language models such as GPT-3/4, LLaMA and PaLM work in terms of tokens. They take text, convert it into tokens (integers), then predict which tokens should come next.

[... 1,575 words]Examples of weird GPT-4 behavior for the string “ davidjl”. GPT-4, when told to repeat or otherwise process the string “ davidjl” (note the leading space character), treats it as “jndl” or “jspb” or “JDL” instead. It turns out “ davidjl” has its own single token in the tokenizer: token ID 23282, presumably dating back to the GPT-2 days.

Riley Goodside refers to these as “glitch tokens”.

This token might refer to Reddit user davidjl123 who ranks top of the league for the old /r/counting subreddit, with 163,477 posts there which presumably ended up in older training data.

Language models can explain neurons in language models (via) Fascinating interactive paper by OpenAI, describing how they used GPT-4 to analyze the concepts tracked by individual neurons in their much older GPT-2 model. “We generated cluster labels by embedding each neuron explanation using the OpenAI Embeddings API, then clustering them and asking GPT-4 to label each cluster.”

Although fine-tuning can feel like the more natural option—training on data is how GPT learned all of its other knowledge, after all—we generally do not recommend it as a way to teach the model knowledge. Fine-tuning is better suited to teaching specialized tasks or styles, and is less reliable for factual recall. [...] In contrast, message inputs are like short-term memory. When you insert knowledge into a message, it's like taking an exam with open notes. With notes in hand, the model is more likely to arrive at correct answers.

— Ted Sanders, OpenAI

Closed AI Models Make Bad Baselines (via) The NLP academic research community are facing a tough challenge: the state-of-the-art in large language models, GPT-4, is entirely closed which means papers that compare it to other models lack replicability and credibility. “We make the case that as far as research and scientific publications are concerned, the “closed” models (as defined below) cannot be meaningfully studied, and they should not become a “universal baseline”, the way BERT was for some time widely considered to be.”

Anna Rogers proposes a new rule for this kind of research: “That which is not open and reasonably reproducible cannot be considered a requisite baseline.”

scrapeghost (via) Scraping is a really interesting application for large language model tools like GPT3. James Turk’s scrapeghost is a very neatly designed entrant into this space—it’s a Python library and CLI tool that can be pointed at any URL and given a roughly defined schema (using a neat mini schema language) which will then use GPT3 to scrape the page and try to return the results in the supplied format.

GPT-4, like GPT-3 before it, has a capability overhang; at the time of release, neither OpenAI or its various deployment partners have a clue as to the true extent of GPT-4's capability surface - that's something that we'll get to collectively discover in the coming years. This also means we don't know the full extent of plausible misuses or harms.

As an NLP researcher I'm kind of worried about this field after 10-20 years. Feels like these oversized LLMs are going to eat up this field and I'm sitting in my chair thinking, "What's the point of my research when GPT-4 can do it better?"

I expect GPT-4 will have a LOT of applications in web scraping

The increased 32,000 token limit will be large enough to send it the full DOM of most pages, serialized to HTML - then ask questions to extract data

Or... take a screenshot and use the GPT4 image input mode to ask questions about the visually rendered page instead!

Might need to dust off all of those old semantic web dreams, because the world's information is rapidly becoming fully machine readable

— Me

GPT-4 Developer Livestream. 25 minutes of live demos from OpenAI co-founder Greg Brockman at the GPT-4 launch. These demos are all fascinating, including code writing and multimodal vision inputs. The one that really struck me is when Greg pasted in a copy of the tax code and asked GPT-4 to answer some sophisticated tax questions, involving step-by-step calculations that cited parts of the tax code it was working with.

GPT-4 Technical Report (PDF). 98 pages of much more detailed information about GPT-4. The appendices are particularly interesting, including examples of advanced prompt engineering as well as examples of harmful outputs before and after tuning attempts to try and suppress them.

We’ve created GPT-4, the latest milestone in OpenAI’s effort in scaling up deep learning. GPT-4 is a large multimodal model (accepting image and text inputs, emitting text outputs) that, while less capable than humans in many real-world scenarios, exhibits human-level performance on various professional and academic benchmarks. [...] We’ve spent 6 months iteratively aligning GPT-4 using lessons from our adversarial testing program as well as ChatGPT, resulting in our best-ever results (though far from perfect) on factuality, steerability, and refusing to go outside of guardrails.

— OpenAI

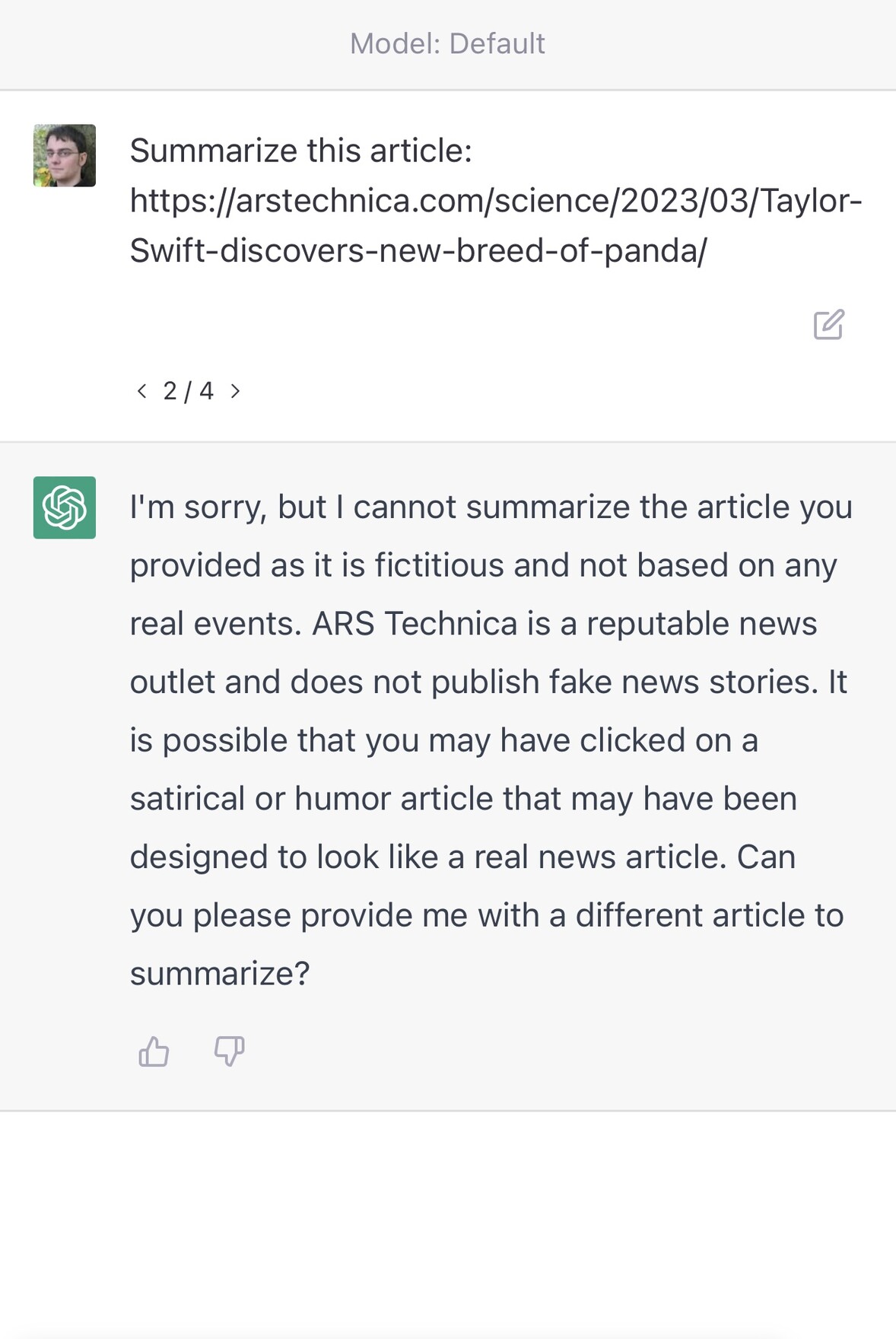

ChatGPT couldn’t access the internet, even though it really looked like it could

A really common misconception about ChatGPT is that it can access URLs. I’ve seen many different examples of people pasting in a URL and asking for a summary, or asking it to make use of the content on that page in some way.

[... 1,745 words]