Elsewhere


I went for a bird walk in the morning before PyCon, and we spotted a local seagull enjoying a Starbucks.

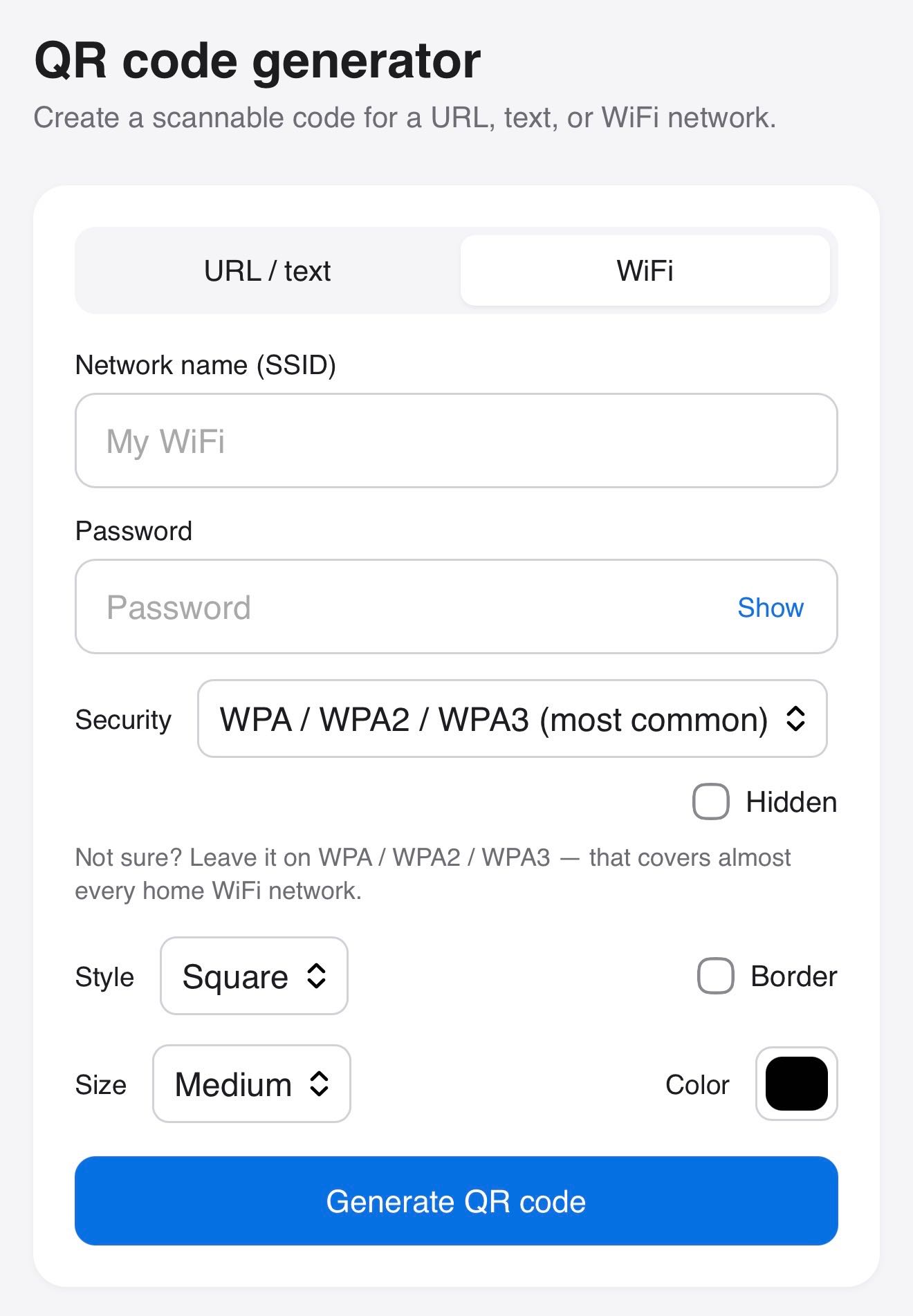

Claude helped me build this tool for creating QR codes, for both text/URLs and for connecting to WiFi networks.

This plugin works in conjunction with datasette-llm and datasette-llm-accountant to let you configure a per-user (or global) spending limit for LLM usage inside of Datasette. Configuration looks something like this:

    plugins:
      datasette-llm-limits:
        limits:
          per-user-daily:
            scope: actor
            window: rolling-24h
            amount_usd: 1.00
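As a rough illustration of what a rolling 24 hour limit implies, here's a minimal Python sketch. It is not the plugin's code: the `spend_in_window()` and `can_spend()` helpers and the choice to track amounts as integer cents are assumptions made for this example.

```python
from datetime import datetime, timedelta


def spend_in_window(charges, now, window=timedelta(hours=24)):
    """Sum charges (in cents) made inside the rolling window that ends at `now`."""
    return sum(cents for when, cents in charges if now - window < when <= now)


def can_spend(charges, now, cost_cents, limit_cents=100):
    """Hypothetical check: would this new cost keep the actor within the limit?"""
    return spend_in_window(charges, now) + cost_cents <= limit_cents


now = datetime(2026, 1, 2, 12, 0)
charges = [
    (now - timedelta(hours=25), 90),  # outside the window, no longer counted
    (now - timedelta(hours=2), 40),
    (now - timedelta(hours=1), 50),
]
spent = spend_in_window(charges, now)
```

Integer cents avoid floating point rounding surprises when comparing against a limit like 1.00 USD.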

- Tool availability can now be attached to a `required_permission`. The default background agent tools now require the new `datasette-agent-background` permission. #10

- Now uses the `execute-sql` permission when deciding which tables to list to the user. #8

The datasette.io site was being hammered by poorly-behaved crawlers, so I had Codex (GPT-5.5 xhigh) build a configurable rate limiting plugin to block IPs that were hammering specific areas of the site too quickly.

Here's the production configuration I'm using on that site for the new plugin:

    datasette-ip-rate-limit:
      header: Fly-Client-IP
      max_keys: 10000
      exempt_paths:
      - "/static/*"
      - "/-/turnstile*"
      rules:
      - name: demo-databases
        paths:
        - "/global-power-plants/*"
        - "/legislators/*"
        window_seconds: 60
        max_requests: 60
        block_seconds: 20
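To make the rule settings concrete, here's a minimal Python sketch of one way a rule like `demo-databases` could be evaluated. This is an assumption about the semantics, not the plugin's implementation: the `RateLimiter` class, its `allow()` method, and the reading that exceeding `max_requests` within `window_seconds` triggers a block lasting `block_seconds` are all hypothetical.

```python
import fnmatch
from collections import defaultdict, deque


class RateLimiter:
    """Hypothetical sliding-window limiter, not the plugin's real code."""

    def __init__(self, paths, window_seconds, max_requests, block_seconds):
        self.paths = paths
        self.window_seconds = window_seconds
        self.max_requests = max_requests
        self.block_seconds = block_seconds
        self.hits = defaultdict(deque)  # ip -> timestamps of recent requests
        self.blocked_until = {}  # ip -> time at which the block expires

    def allow(self, ip, path, now):
        # Paths matching none of the patterns are not limited by this rule
        if not any(fnmatch.fnmatch(path, pattern) for pattern in self.paths):
            return True
        if self.blocked_until.get(ip, 0) > now:
            return False
        hits = self.hits[ip]
        # Discard timestamps that have fallen out of the window
        while hits and hits[0] <= now - self.window_seconds:
            hits.popleft()
        if len(hits) >= self.max_requests:
            self.blocked_until[ip] = now + self.block_seconds
            return False
        hits.append(now)
        return True


limiter = RateLimiter(
    paths=["/global-power-plants/*", "/legislators/*"],
    window_seconds=60,
    max_requests=60,
    block_seconds=20,
)
# 61 requests from one IP, ten per second: the 61st exceeds the limit
results = [limiter.allow("203.0.113.5", "/legislators/x", now=i * 0.1) for i in range(61)]
```

The sketch ignores `header`, `max_keys` and `exempt_paths`; a real implementation would also need to cap how many IPs it tracks in memory.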

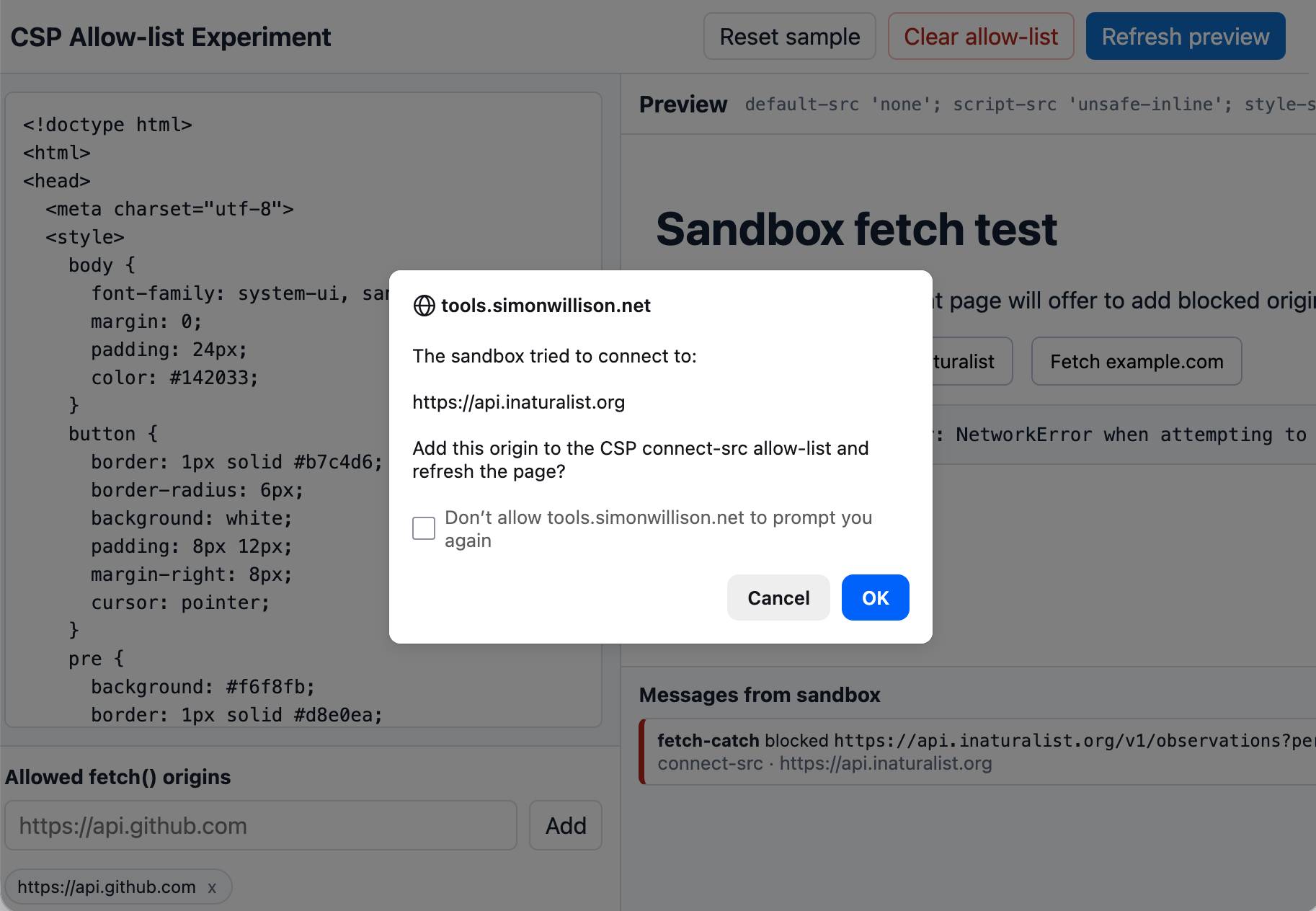

An experiment that shows that you can load an app in a CSP-protected sandboxed iframe (see previous note) and have a custom fetch() that intercepts CSP errors and passes them up to the parent window... which can then prompt the user to add that domain to an allow-list and then refresh the page.

I built this one with GPT-5.5 xhigh running in the Codex desktop app.

- New `TokenRestrictions.abbreviated(datasette)` utility method for creating `"_r"` dictionaries. #2695

- Table headers and column options are now visible even if a table contains zero rows. #2701

- Fixed bug with display of column actions dialog on Mobile Safari. #2708

- Fixed bug where tests could crash with a segfault due to a race condition between `Datasette.close()` and `Database.close()`. #2709

That segfault bug was gnarly. I added a mechanism to Datasette recently that would automatically close connections at the end of each test, but it turned out that introduced a race condition where an in-flight query could sometimes be executing in a thread against a connection while it was being closed. I ended up solving that by having Codex CLI (with GPT-5.5 xhigh) create a minimal Dockerfile that recreated the bug.
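For illustration, here's a general pattern for avoiding this class of race: serialize queries and `close()` behind the same lock, so a close has to wait for any in-flight query. This is a sketch of the technique only, not the fix that landed in Datasette. The `GuardedConnection` class is hypothetical.

```python
import sqlite3
import threading


class GuardedConnection:
    """Hypothetical wrapper: queries and close() share one lock."""

    def __init__(self, path=":memory:"):
        # check_same_thread=False lets other threads use the connection
        self._conn = sqlite3.connect(path, check_same_thread=False)
        self._lock = threading.Lock()
        self._closed = False

    def execute(self, sql, params=()):
        with self._lock:
            if self._closed:
                raise RuntimeError("connection is closed")
            return self._conn.execute(sql, params).fetchall()

    def close(self):
        # Blocks until any query currently holding the lock has finished
        with self._lock:
            if not self._closed:
                self._conn.close()
                self._closed = True


conn = GuardedConnection()
rows = conn.execute("select 1 + 1")
conn.close()
try:
    conn.execute("select 1")
    error = None
except RuntimeError as ex:
    error = str(ex)
```

A single lock also serializes queries against that connection, which is a trade-off a real fix might not want to make.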

- Initial (silent) alpha release.

A bunch of useful stuff in this LLM alpha, but the most important detail is this one:

Most reasoning-capable OpenAI models now use the `/v1/responses` endpoint instead of `/v1/chat/completions`. This enables interleaved reasoning across tool calls for GPT-5 class models. #1435

This means you can now see the summarized reasoning tokens when you run prompts against an OpenAI model, displayed in a different color to standard error. Use the -R or --hide-reasoning flags if you don't want to see that.

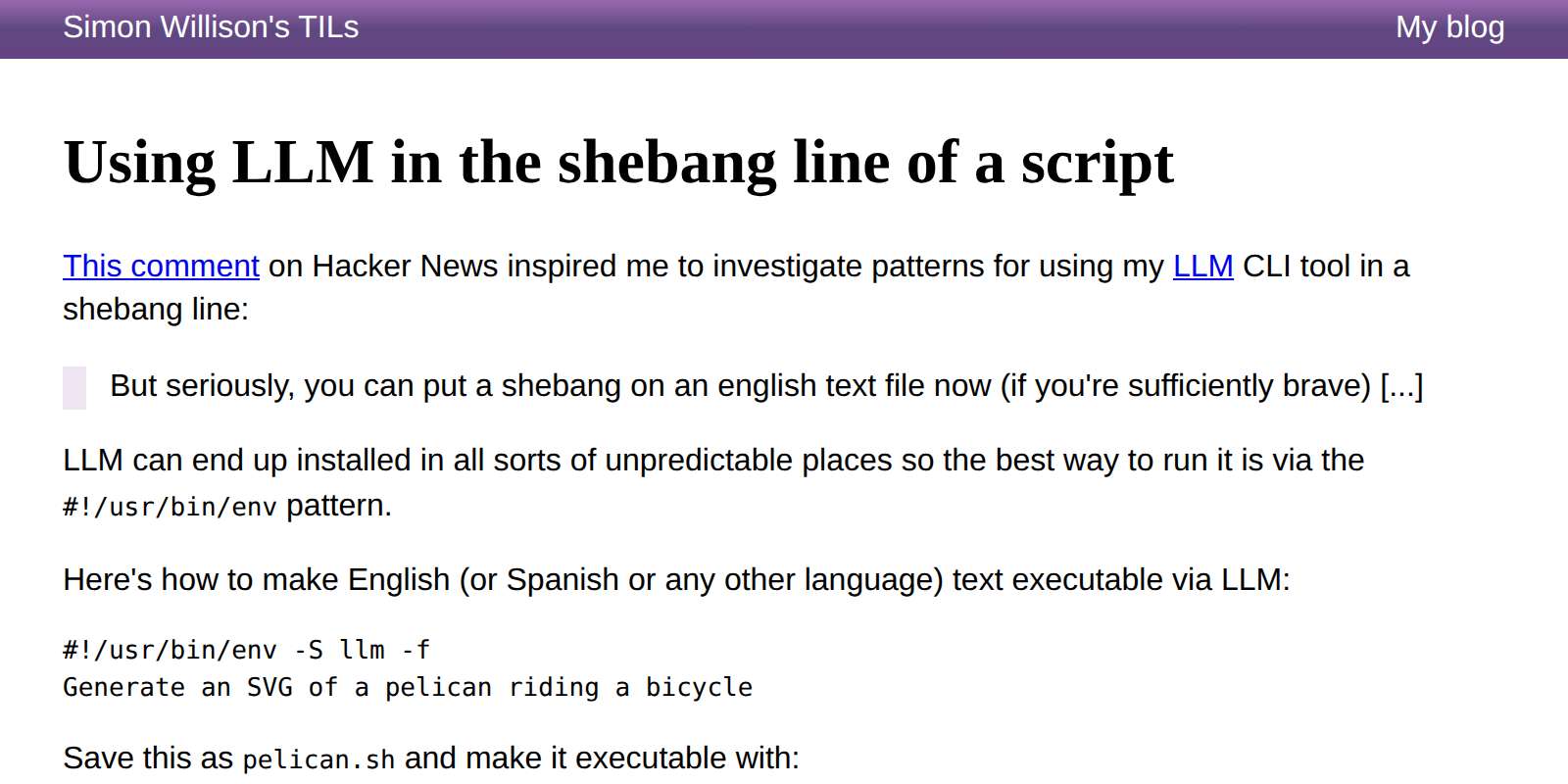

Kim_Bruning on Hacker News:

But seriously, you can put a shebang on an english text file now (if you're sufficiently brave) [...]

This inspired me to look at patterns for doing exactly that with LLM. Here's the simplest, which takes advantage of LLM fragments:

    #!/usr/bin/env -S llm -f
    Generate an SVG of a pelican riding a bicycle

But you can also incorporate tool calls using the -T name_of_tool option:

    #!/usr/bin/env -S llm -T llm_time -f
    Write a haiku that mentions the exact current time

Or even execute YAML templates directly that define extra tools as Python functions:

    #!/usr/bin/env -S llm -t
    model: gpt-5.4-mini
    system: |
      Use tools to run calculations
    functions: |
      def add(a: int, b: int) -> int:
          return a + b
      def multiply(a: int, b: int) -> int:
          return a * b

Then:

    ./calc.sh 'what is 2344 * 5252 + 134' --td

Which outputs (thanks to that --td tools debug option):

    Tool call: multiply({'a': 2344, 'b': 5252})
    12310688

    Tool call: add({'a': 12310688, 'b': 134})
    12310822

    2344 × 5252 + 134 = **12,310,822**

Read the full TIL for a more complex example that uses the Datasette SQL API to answer questions about content on my blog.

`gemini-3.1-flash-lite` is no longer a preview.

Here's my write-up of the Gemini 3.1 Flash-Lite Preview model back in March. I don't believe this new non-preview model has changed since then.

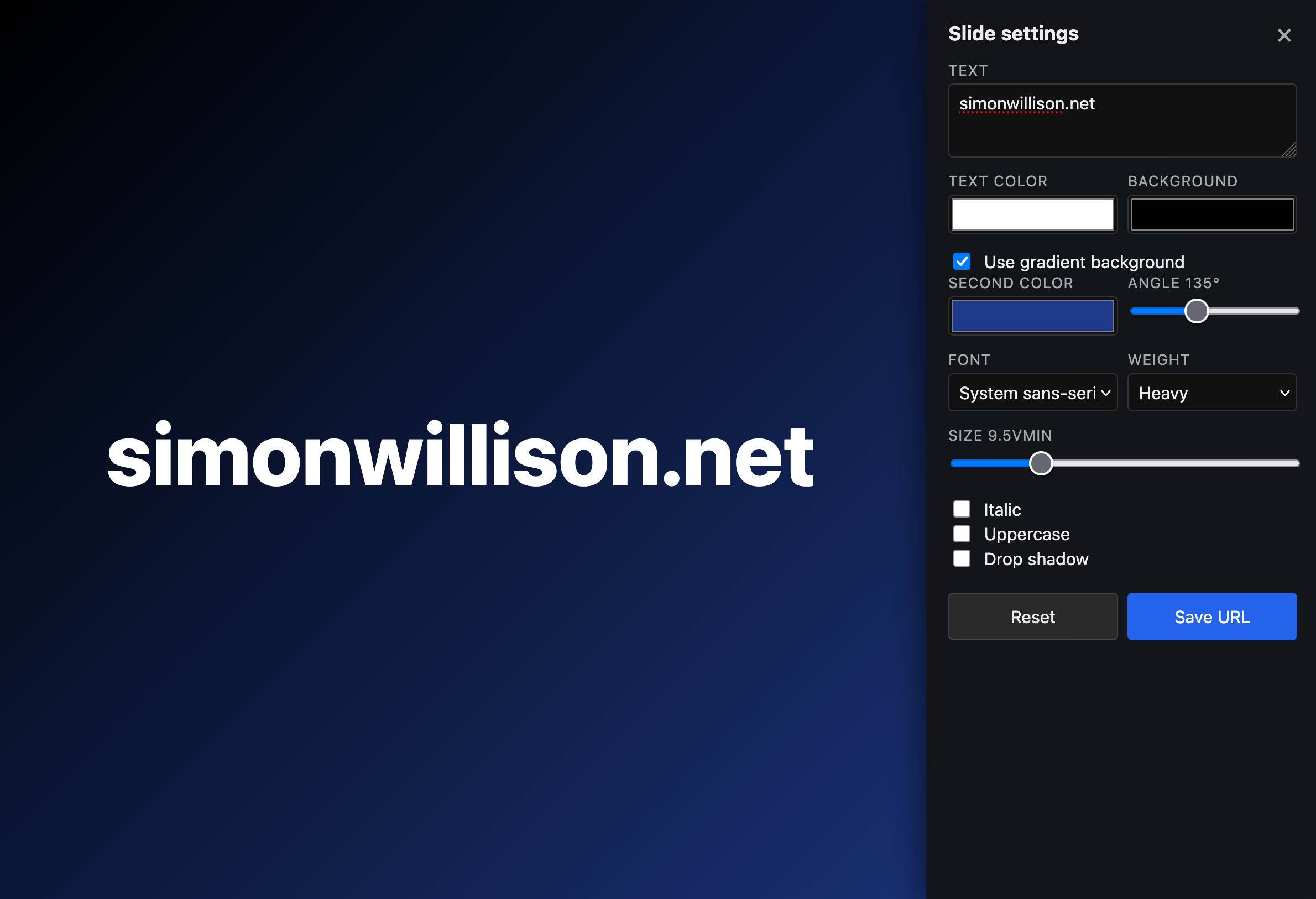

I'm using my vibe coded macOS presentations tool to put together a talk, and I wanted to add a slide with some text on it. The tool only accepts URLs, so I put together a quick page that accepts query string arguments and turns them into a simple slide.

Here's an example: https://tools.simonwillison.net/big-words?text=simonwillison.net&gradient=1&size=9.5

Double click or double tap the page to access a form for modifying the different options.

One of the things I always look for when evaluating a new GitHub repository is the number of commits it has... but that number isn't visible on GitHub's mobile site layout. I built this tool to fix that, using this prompt:

Given a GitHub repo URL or foo/bar repo ID show information about that repo absorbed via wither REST or graphql CORS fetch() including the number of commits in the repo and other useful stats

Example output for simonw/datasette and simonw/llm.

The OpenStreetMap tiles on the Datasette global-power-plants demo weren't displaying correctly. This turned out to be caused by two bugs.

The first is that the CAPTCHA I added to that site a few weeks ago was triggering for the .json fetch requests used by the map plugin, and since those weren't HTML the user was not being asked to solve them. Here's the fix.

The second was that OpenStreetMap quite reasonably block tile requests from sites that use a Referrer-Policy: no-referrer header.

Datasette does this by default, and I didn't want to change that default on people without warning - so I had Codex + GPT-5.5 build me a new plugin to help set that header to another value.
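Here's a minimal sketch of the general idea as an ASGI wrapper in Python. It is not the plugin's code: the `referrer_policy_middleware()` function and the `strict-origin-when-cross-origin` default are assumptions chosen for this example, and `demo_app` is a stand-in application.

```python
import asyncio


def referrer_policy_middleware(app, policy="strict-origin-when-cross-origin"):
    """Wrap an ASGI app so HTTP responses carry the given Referrer-Policy."""

    async def wrapped(scope, receive, send):
        if scope["type"] != "http":
            await app(scope, receive, send)
            return

        async def send_with_header(message):
            if message["type"] == "http.response.start":
                # Drop any existing referrer-policy header, then add ours
                headers = [
                    (name, value)
                    for name, value in message.get("headers", [])
                    if name.lower() != b"referrer-policy"
                ]
                headers.append((b"referrer-policy", policy.encode("latin-1")))
                message = {**message, "headers": headers}
            await send(message)

        await app(scope, receive, send_with_header)

    return wrapped


async def demo_app(scope, receive, send):
    # Stand-in app that sends no-referrer, like the default described above
    await send({
        "type": "http.response.start",
        "status": 200,
        "headers": [(b"referrer-policy", b"no-referrer")],
    })
    await send({"type": "http.response.body", "body": b"ok"})


async def collect():
    sent = []

    async def send(message):
        sent.append(message)

    async def receive():
        return {"type": "http.request"}

    await referrer_policy_middleware(demo_app)({"type": "http"}, receive, send)
    return sent


messages = asyncio.run(collect())
```

Replacing the header rather than appending a second one avoids sending two conflicting policies.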

- Mechanism for configuring default options for specific models.

Part of Datasette's evolving support mechanism for plugins that use LLMs. It's now possible to configure a model with default options, e.g. to say all enrichment operations should use a specific model with temperature set to 0.5.

- New `-o thinking 1` option to help test against LLM 0.32a0 and higher.

This plugin provides a fake model called "echo" for LLM which doesn't run an LLM at all - it's useful for writing automated tests. You can now do this:

    uvx --with llm==0.32a1 --with llm-echo==0.5a0 llm -m echo hi -o thinking 1

This will fake a reasoning block to standard error before returning JSON echoing the prompt.

If it's good enough for antirez to add to Redis I figured Ville Laurikari's TRE regular expression engine was worth exploring in a little more detail.

I had Claude Code build an experimental Python binding (it used ctypes) and try some malicious regular expression attacks against the library. TRE handles those much better than Python's standard library implementation, thanks mainly to the lack of support for backtracking.

Salvatore Sanfilippo submitted a PR adding a new data type - arrays - to Redis.

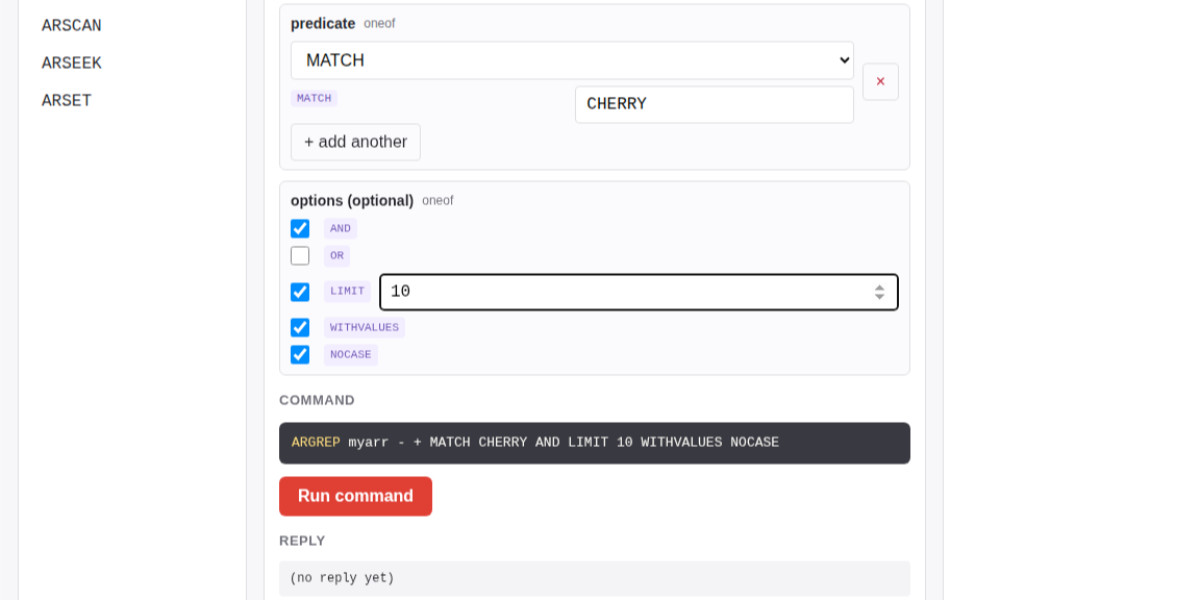

The new commands are ARCOUNT, ARDEL, ARDELRANGE, ARGET, ARGETRANGE, ARGREP, ARINFO, ARINSERT, ARLASTITEMS, ARLEN, ARMGET, ARMSET, ARNEXT, AROP, ARRING, ARSCAN, ARSEEK, ARSET.

The implementation is currently available in a branch, so I had Claude Code for web build this interactive playground for trying out the new commands in a WASM-compiled build of a subset of Redis running in the browser.

The most interesting new command is ARGREP which can run a server-side grep against a range of values in the array using the newly vendored TRE regex library.

Salvatore wrote more about the AI-assisted development process for the array type in Redis array type: short story of a long development.

I wanted to see my iNaturalist observations - across two separate accounts - grouped by when they occurred. I'm camping this weekend so I built this entirely on my phone using Claude Code for web.

I started by building an inaturalist-clumper Python CLI for fetching and "clumping" observations - by default clumps use observations within 2 hours and 5km of each other.
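Here's a rough Python sketch of what that kind of clumping could look like. It is an assumption rather than the tool's actual algorithm: each observation is compared to the previous one in time order, and the `clump()` and `haversine_km()` functions and the dictionary keys are hypothetical.

```python
import math
from datetime import datetime, timedelta


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance between two points in kilometres."""
    radius_km = 6371.0
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlambda = math.radians(lon2 - lon1)
    a = (
        math.sin(dphi / 2) ** 2
        + math.cos(phi1) * math.cos(phi2) * math.sin(dlambda / 2) ** 2
    )
    return 2 * radius_km * math.asin(math.sqrt(a))


def clump(observations, max_gap=timedelta(hours=2), max_km=5.0):
    """Group observations; start a new clump when either limit is exceeded."""
    clumps = []
    for obs in sorted(observations, key=lambda o: o["time"]):
        if clumps:
            last = clumps[-1][-1]
            close_in_time = obs["time"] - last["time"] <= max_gap
            close_in_space = (
                haversine_km(last["lat"], last["lon"], obs["lat"], obs["lon"])
                <= max_km
            )
            if close_in_time and close_in_space:
                clumps[-1].append(obs)
                continue
        clumps.append([obs])
    return clumps


observations = [
    {"time": datetime(2026, 1, 1, 9, 0), "lat": 37.50, "lon": -122.50},
    {"time": datetime(2026, 1, 1, 9, 30), "lat": 37.51, "lon": -122.50},
    {"time": datetime(2026, 1, 1, 15, 0), "lat": 37.51, "lon": -122.50},
    {"time": datetime(2026, 1, 1, 15, 10), "lat": 38.00, "lon": -122.50},
]
sizes = [len(group) for group in clump(observations)]
```

Comparing against the previous observation means a long walk can chain into one clump even if its two ends are more than 5km apart; comparing against the first observation in the clump would behave differently.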

Then I set up simonw/inaturalist-clumps as a Git scraping repository to run that tool and record the result to clumps.json.

That JSON file is hosted on GitHub, which means it can be fetched by JavaScript using CORS.

Finally I ran this prompt against my simonw/tools repo:

Build inat-sightings.html - an app that does a fetch() against https://raw.githubusercontent.com/simonw/inaturalist-clumps/refs/heads/main/clumps.json and then displays all of the observations on one page using the https://static.inaturalist.org/photos/538073008/small.jpg small.jpg URLs for the thumbnails - with loading=lazy - but when a thumbnail is clicked showing the large.jpg in an HTML modal. Both small and large should include the common species names if available