Saturday, 11th March 2023

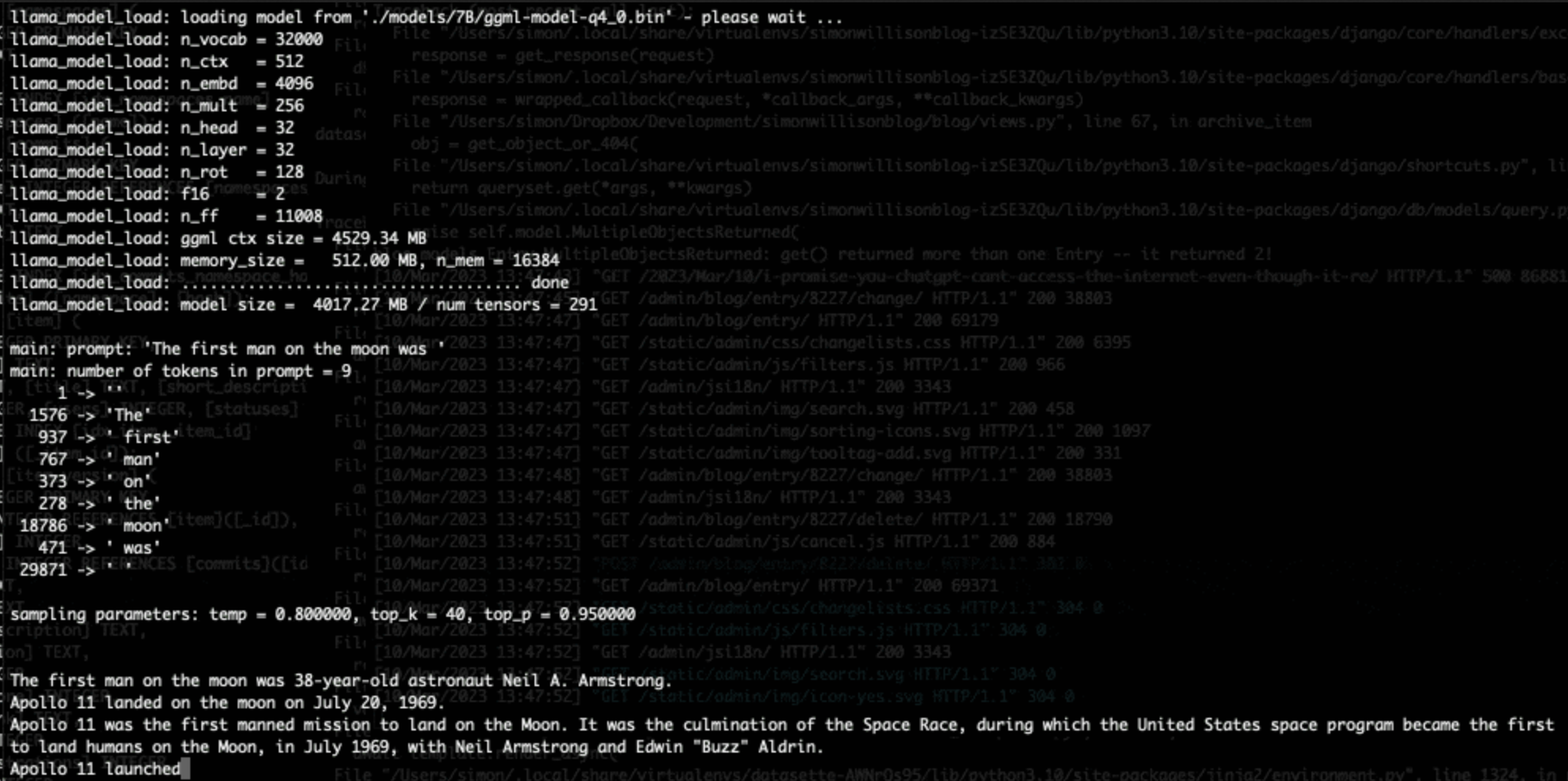

Running LLaMA 7B on a 64GB M2 MacBook Pro with llama.cpp

I got Facebook’s LLaMA 7B to run on my MacBook Pro using llama.cpp (a “port of Facebook’s LLaMA model in C/C++”) by Georgi Gerganov. It works! I’ve been hoping to run a GPT-3-class language model on my own hardware for ages, and now it’s possible to do exactly that. The model itself ends up being just 4GB after applying Georgi’s script to “quantize the model to 4-bits”.
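The quantization scheme itself isn't described here. As a rough illustration of why 4-bit quantization shrinks a 7B model to around 4GB, here is a minimal Python/NumPy sketch of symmetric block-wise 4-bit quantization. The block size of 32, the single float32 scale per block, and the names `quantize_q4`, `dequantize_q4` and `q4_size_bytes` are assumptions chosen for illustration, loosely in the spirit of llama.cpp's early 4-bit format. This is not a copy of Georgi's script.

```python
import numpy as np

BLOCK_SIZE = 32  # assumption: 32 weights per block, each block gets one float32 scale

def quantize_q4(weights: np.ndarray):
    """Symmetric block-wise 4-bit quantization (illustrative sketch).

    Assumes len(weights) is a multiple of BLOCK_SIZE.
    """
    blocks = weights.astype(np.float32).reshape(-1, BLOCK_SIZE)
    # Per-block scale chosen so the largest magnitude in the block maps to +/-7.
    scale = np.abs(blocks).max(axis=1, keepdims=True) / 7.0
    safe = np.where(scale == 0, 1.0, scale)  # avoid dividing by zero for all-zero blocks
    # Round to integers in [-7, 7], then offset by 8 so every value fits in 4 bits.
    q = (np.clip(np.round(blocks / safe), -7, 7) + 8).astype(np.uint8)
    # Pack two 4-bit values per byte: even index in the low nibble, odd in the high.
    packed = q[:, 0::2] | (q[:, 1::2] << 4)
    return packed, scale.astype(np.float32)

def dequantize_q4(packed: np.ndarray, scale: np.ndarray) -> np.ndarray:
    """Unpack the nibbles and rescale back to approximate float32 weights."""
    q = np.empty((packed.shape[0], BLOCK_SIZE), dtype=np.uint8)
    q[:, 0::2] = packed & 0x0F
    q[:, 1::2] = packed >> 4
    return ((q.astype(np.float32) - 8.0) * scale).reshape(-1)

def q4_size_bytes(n_params: int) -> float:
    """Storage estimate: each block stores a 4-byte scale plus BLOCK_SIZE / 2 bytes of nibbles."""
    return n_params / BLOCK_SIZE * (4 + BLOCK_SIZE // 2)

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    w = rng.standard_normal(4096).astype(np.float32)
    packed, scale = quantize_q4(w)
    print("max abs error:", np.abs(w - dequantize_q4(packed, scale)).max())
    n = 7_000_000_000  # nominal parameter count for "7B"
    print(f"fp16: {n * 2 / 1e9:.1f} GB, 4-bit: {q4_size_bytes(n) / 1e9:.2f} GB")
```

Under these assumptions each block of 32 weights costs 20 bytes, or about 5 bits per weight. A nominal 7 billion parameters therefore come out at roughly 4.4 billion bytes (about 4.1 GiB), while the same weights at 16 bits would need about 14GB. The trade-off is precision: each weight is only recovered to within half of its block's scale.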

Large language models are having their Stable Diffusion moment

The open release of the Stable Diffusion image generation model back in August 2022 was a key moment. At the time, I wrote about how Stable Diffusion is a really big deal.

[... 1,815 words]

ChatGPT’s API is So Good and Cheap, It Makes Most Text Generating AI Obsolete (via) Max Woolf on the quite frankly weird economics of the ChatGPT API: it’s 1/10th the price of GPT-3 Da Vinci and appears to be equally capable, if not more so. “But it is very hard to economically justify not using ChatGPT as a starting point for a business need and migrating to a more bespoke infrastructure later as needed, and that’s what OpenAI is counting on. [...] I don’t envy startups whose primary business is text generation right now.”