How I make annotated presentations

6th August 2023

Giving a talk is a lot of work. I go by a rule of thumb I learned from Damian Conway: a minimum of ten hours of preparation for every one hour spent on stage.

If you’re going to put that much work into something, I think it’s worth taking steps to maximize the value that work produces—both for you and for your audience.

One of my favourite ways of getting “paid” for a talk is when the event puts in the work to produce a really good video of that talk, and then shares that video online. North Bay Python is a fantastic example of an event that does this well: they team up with Next Day Video and White Coat Captioning and have talks professionally recorded, captioned and uploaded to YouTube within 24 hours of the talk being given.

Even with that quality of presentation, I don’t think a video on its own is enough. My most recent talk was 40 minutes long—I’d love people to watch it, but I myself watch very few 40m long YouTube videos each year.

So I like to publish my talks with a text and image version of the talk that can provide as much of the value as possible to people who don’t have the time or inclination to sit through a 40m talk (or 20m if you run it at 2x speed, which I do for many of the talks I watch myself).

Annotated presentations

My preferred format for publishing these documents is as an annotated presentation—a single document (no clicking “next” dozens of times) combining key slides from the talk with custom written text to accompany each one, plus additional links and resources.

Here’s my most recent example: Catching up on the weird world of LLMs, from North Bay Python last week.

More examples (see also my annotated-talks tag):

- Prompt injection explained, with video, slides, and a transcript for a LangChain webinar in May 2023.

- Coping strategies for the serial project hoarder for DjangoCon US 2022.

- How to build, test and publish an open source Python library for PyGotham 2021.

- Video introduction to Datasette and sqlite-utils for FOSDEM February 2021.

- Datasette—an ecosystem of tools for working with small data for PyGotham 2020.

- Personal Data Warehouses: Reclaiming Your Data for the GitHub OCTO speaker series in November 2020.

- Redis tutorial for NoSQL Europe 2010 (my first attempt at this format).

I don’t tend to write a detailed script for my talks in advance. If I did, I might use that as a starting point, but I usually prepare the outline of the talk and then give it off-the-cuff on the day. I find this fits my style (best described as “enthusiastic rambling”) better.

Instead, I’ll assemble notes for each slide from re-watching the video after it has been released.

I don’t just cover the things I said in the talk: I’ll also add more context and links to related resources. The annotated presentation isn’t just for people who didn’t watch the talk; it’s also aimed at providing extra context for people who did.

A custom tool for building annotated presentations

For this most recent talk I finally built something I’ve been wanting for years: a custom tool to help me construct the annotated presentation as quickly as possible.

Annotated presentations look deceptively simple: each slide is an image and one or two paragraphs of text.

There are a few extra details though:

- The images really need good alt= text: a big part of the information in the presentation is conveyed by those images, so they need to have good descriptions both for screen reader users and to index in search engines / for retrieval augmented generation.

- Presentations might have dozens of slides, so just assembling the image tags in the correct order can be a frustrating task.

- For editing the annotations I like to use Markdown, as it’s quicker to write than HTML. Making this as easy as possible encourages me to add more links, bullet points and code snippets.

One of my favourite use-cases for tools like ChatGPT is to quickly create one-off custom tools. This was a perfect fit for that.

You can see the tool I created here: Annotated presentation creator (source code here).

The first step is to export the slides as images, being sure to have filenames which sort alphabetically in the correct order. I use Apple Keynote for my slides and it has an “Export” feature which does this for me.
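Filename ordering is easy to get wrong when slide numbers are not zero-padded, because a plain string sort puts "10" before "2". The sketch below shows one way to order names so that embedded numbers compare numerically. The helper name sortSlideNames and the example filenames are hypothetical, and this is not taken from the tool's source.

```javascript
// Hypothetical helper: order slide image filenames so that embedded numbers
// compare numerically ("slide.2.png" before "slide.10.png").
function sortSlideNames(names) {
  const collator = new Intl.Collator(undefined, { numeric: true, sensitivity: "base" });
  // Copy first so the caller's array is left untouched.
  return [...names].sort(collator.compare);
}

// Example filenames are made up for illustration.
const exported = ["slide.10.png", "slide.2.png", "slide.1.png"];
console.log([...exported].sort());     // plain string sort: 1, 10, 2
console.log(sortSlideNames(exported)); // numeric-aware sort: 1, 2, 10
```

If the exported filenames are already zero-padded (001, 002, ...), a plain alphabetical sort gives the same result and no helper is needed.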

Next, open those images using the annotation tool.

The tool is written in JavaScript and works entirely in your browser—it asks you to select images but doesn’t actually upload them to a server, just displays them directly inline in the page.
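As a rough illustration of how a page can show local images without uploading them, here is a minimal sketch using URL.createObjectURL, a standard browser API that gives a local file a temporary URL. Whether the tool itself uses object URLs or some other approach is an assumption; the previewEntries helper and the element ids "slides" and "preview" are hypothetical.

```javascript
// Turn a list of selected files into { name, url } pairs for display.
// makeUrl is injectable so the function can be exercised outside a browser.
function previewEntries(files, makeUrl = (f) => URL.createObjectURL(f)) {
  return Array.from(files)
    .filter((f) => f.type.startsWith("image/")) // skip anything that is not an image
    .map((f) => ({ name: f.name, url: makeUrl(f) }));
}

// Browser-only wiring. Assumes (hypothetically) that the page contains
// <input type="file" id="slides" multiple> and <div id="preview">.
if (typeof document !== "undefined") {
  const input = document.getElementById("slides");
  const preview = document.getElementById("preview");
  if (input && preview) {
    input.addEventListener("change", () => {
      for (const { name, url } of previewEntries(input.files)) {
        const img = document.createElement("img");
        img.src = url;    // points at the local file; nothing is sent to a server
        img.title = name;
        preview.appendChild(img);
      }
    });
  }
}
```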

Anything you type in a textarea as work-in-progress will be saved to localStorage, so a browser crash or restart shouldn’t lose any of your work.
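The autosave behaviour can be sketched roughly as follows, based on the prompt quoted later in this post: once a second, build an object keyed by each textarea's name= attribute and store it in localStorage under savedTextAreas. The function names and the restore step are assumptions for illustration, not the tool's actual code.

```javascript
// Build { name: value } from anything shaped like a textarea.
function collectTextAreas(textareas) {
  const saved = {};
  for (const ta of textareas) {
    if (ta.name) saved[ta.name] = ta.value; // unnamed textareas are skipped
  }
  return saved;
}

// Serialize the current textarea contents into storage under one key.
function saveTextAreas(textareas, storage, key = "savedTextAreas") {
  storage.setItem(key, JSON.stringify(collectTextAreas(textareas)));
}

// Copy previously saved values back into textareas with matching names.
function restoreTextAreas(textareas, storage, key = "savedTextAreas") {
  const saved = JSON.parse(storage.getItem(key) || "{}");
  for (const ta of textareas) {
    if (Object.prototype.hasOwnProperty.call(saved, ta.name)) {
      ta.value = saved[ta.name];
    }
  }
}

// Browser-only wiring: restore once, then save every second.
if (typeof document !== "undefined" && typeof localStorage !== "undefined") {
  restoreTextAreas(document.querySelectorAll("textarea"), localStorage);
  setInterval(
    () => saveTextAreas(document.querySelectorAll("textarea"), localStorage),
    1000
  );
}
```

In a page where textareas are created only after images are selected, the restore call would need to run after those elements exist rather than at load time.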

It uses Tesseract.js to run OCR against your images, providing a starting point for the alt= attributes for each slide.
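Raw OCR output tends to contain line breaks and stray whitespace, so it usually needs a little tidying before it works as a draft alt= value. The sketch below shows one possible shape for that step. The helper names are hypothetical, and the recognize function is passed in as a parameter; in a browser with Tesseract.js loaded it could wrap Tesseract.recognize(image, "eng"), which resolves to an object whose data.text holds the recognized text.

```javascript
// Collapse line breaks and runs of whitespace into single spaces.
function cleanOcrText(text) {
  return text.replace(/\s+/g, " ").trim();
}

// Run OCR via the supplied recognize function and return tidied text.
// recognize must return a promise resolving to { data: { text } }.
async function draftAltText(image, recognize) {
  const result = await recognize(image);
  return cleanOcrText(result.data.text);
}

// Browser usage, assuming Tesseract.js is loaded as a global named Tesseract:
//   const alt = await draftAltText(file, (img) => Tesseract.recognize(img, "eng"));
```

The result is only a starting point: OCR reads the words on a slide but cannot describe diagrams or photos, so the text still needs a human edit.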

Annotations can be entered in Markdown and are rendered to HTML as a live preview using the Marked library.

Finally, it offers a templating mechanism for the final output, which works using JavaScript template literals. So once you’ve finished editing the alt= text and writing the annotations, click “Execute template” at the bottom of the page and copy out the resulting HTML.
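A templating step built on template literals might look something like the sketch below. It follows the executeTemplates(template, arrayOfObjects) signature from the prompt quoted later in this post, but the body is a guess at one workable implementation rather than the tool's real code. The escapeAttr helper and the example slide data are hypothetical.

```javascript
// Escape text so it is safe inside a double-quoted HTML attribute.
function escapeAttr(text) {
  return text
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;");
}

// Evaluate `template` as a JavaScript template literal once per object,
// exposing each object's keys as variables, then join the results.
// Keys must be valid identifiers and the template must not contain backticks.
function executeTemplates(template, arrayOfObjects) {
  return arrayOfObjects
    .map((obj) => {
      const names = Object.keys(obj);
      const render = new Function(...names, "return `" + template + "`;");
      return render(...names.map((name) => obj[name]));
    })
    .join("\n");
}

// Example data is made up for illustration.
const slides = [
  { src: "slide.001.png", alt: escapeAttr('Title slide: "Weird world"'), html: "<p>Intro notes</p>" },
];
const template = '<p><img src="${src}" alt="${alt}"></p>\n${html}';
console.log(executeTemplates(template, slides));
```

Because new Function runs whatever code the template contains, this approach only makes sense for a personal tool where you write the template yourself.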

Here’s an animated GIF demo of the tool in action:

I ended up putting this together with the help of multiple different ChatGPT sessions. You can see those here:

- HTML and JavaScript in a single document to create an app that lets me do the following...

- JavaScript and HTML app on one page. User can select multiple image files on their own computer...

- JavaScript that runs once every 1s and builds a JavaScript object of every textarea on the page where the key is the name= attribute of that textarea and the value is its current contents. That whole object is then stored in localStorage in a key called savedTextAreas...

- Write a JavaScript function like this: executeTemplates(template, arrayOfObjects)...

Cleaning up the transcript with Claude

Since the video was already up on YouTube when I started writing the annotations, I decided to see if I could get a head start on writing them using the YouTube generated transcript.

I used my Action Transcription tool to extract the transcript, but it was pretty low quality—you can see a copy of it here. A sample:

okay hey everyone it's uh really

exciting to be here so yeah I call this

court talk catching up on the weird

world of llms I'm going to try and give

you the last few years of of llm

developments in 35 minutes this is

impossible so uh hopefully I'll at least

give you a flavor of some of the weirder

corners of the space because the thing

about language models is the more I look

at the more I think they're practically

interesting any particular aspect of

them anything at all if you zoom in

there are just more questions there are

just more unknowns about it there are

more interesting things to get into lots

of them are deeply disturbing and

unethical lots of them are fascinating

it's um I've called it um it's it's

impossible to tear myself away from this

I I just keep on keep on finding new

aspects of it that are interesting

It’s basically one big run-on sentence, with no punctuation, little capitalization and lots of umms and ahs.

Anthropic’s Claude 2 was released last month and supports up to 100,000 tokens per prompt—a huge improvement on ChatGPT (4,000) and GPT-4 (8,000). I decided to see if I could use that to clean up my transcript.

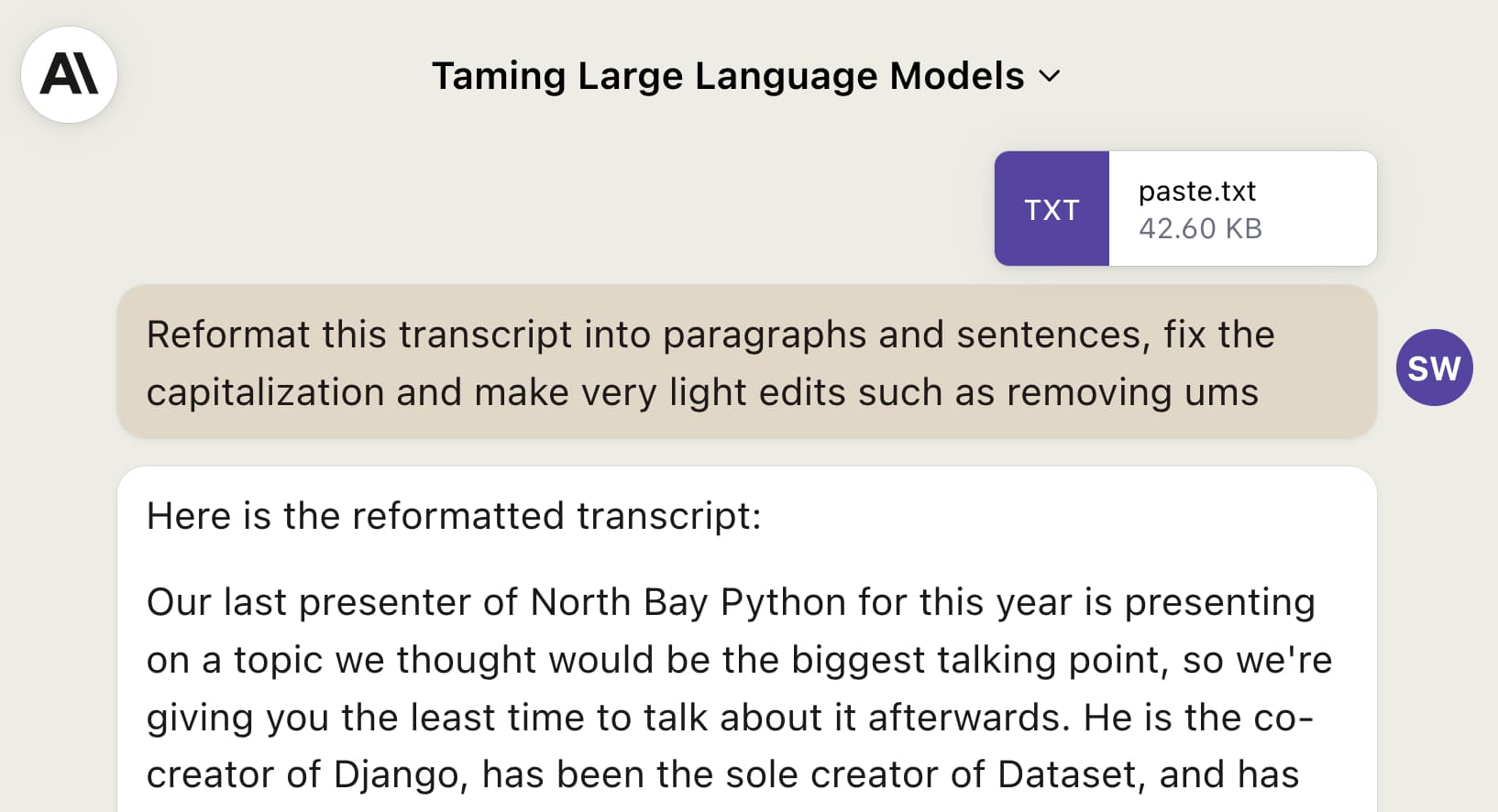

I pasted it into Claude and tried a few prompts... until I hit upon this one:

Reformat this transcript into paragraphs and sentences, fix the capitalization and make very light edits such as removing ums

This worked really, really well! Here’s the first paragraph it produced, based on the transcript I show above:

Okay everyone, it’s really exciting to be here. Yeah I call this talk “Catching Up on the Weird World of LLMs.” I’m going to try and give you the last few years of LLMs developments in 35 minutes. This is impossible, so hopefully I’ll at least give you a flavor of some of the weirder corners of the space. The thing about language models is the more I look at them, the more I think they’re practically interesting. Focus on any particular aspect, and there are just more questions, more unknowns, more interesting things to get into.

Note that I said “fractally interesting”, not “practically interesting”—but that error was there in the YouTube transcript, so Claude picked it up from there.

Here’s the full generated transcript.

It’s really impressive! At one point it even turns my dialogue into a set of bullet points:

Today the best are ChatGPT (aka GPT-3.5 Turbo), GPT-4 for capability, and Claude 2 which is free. Google has PaLM 2 and Bard. Llama and Claude are from Anthropic, a splinter of OpenAI focused on ethics. Google and Meta are the other big players.

Some tips:

- OpenAI models cutoff at September 2021 training data. Anything later isn’t in there. This reduces issues like recycling their own text.

- Claude and Palm have more recent data, so I’ll use them for recent events.

- Always consider context length. GPT has 4,000 tokens, GPT-4 has 8,000, Claude 100,000.

- If a friend who read the Wikipedia article could answer my question, I’m confident feeding it in directly. The more obscure, the more likely pure invention.

- Avoid superstitious thinking. Long prompts that “always work” are usually mostly pointless.

- Develop an immunity to hallucinations. Notice signs and check answers.

Compare that to my rambling original to see quite how much of an improvement this is.

But, all of that said... I specified “make very light edits” and it clearly did a whole lot more than just that.

I didn’t use the Claude version directly. Instead, I copied and pasted the chunks of it that made the most sense into my annotation tool, then edited them directly to better fit what I was trying to convey.

As with so many things in LLM/AI land: a significant time saver, but no silver bullet.

For workshops, publish the handout

I took the Software Carpentries instructor training a few years ago, which was a really great experience.

A key idea I got from that is that a great way to run a workshop is to prepare an extensive, detailed handout in advance—and then spend the actual workshop time working through that handout yourself, at a sensible pace, in a way that lets the attendees follow along.

A bonus of this approach is that it forces you to put together a really high quality handout which you can distribute after the event.

I used this approach for the 3 hour workshop I ran at PyCon US 2023: Data analysis with SQLite and Python. I turned that into a new official tutorial on the Datasette website, accompanied by the video but also useful for people who don’t want to spend three hours watching me talk!

More people should do this

I’m writing this in the hope that I can inspire more people to give their talks this kind of treatment. It does take work, around 2-3 hours each time I do this, but it greatly increases the longevity of the talk and ensures that the work I’ve already put into it provides maximum value, both to myself (giving talks is partly a selfish act!) and to the people I want to benefit from it.
