Sunday, 9th November 2025

Reverse engineering Codex CLI to get GPT-5-Codex-Mini to draw me a pelican

OpenAI partially released a new model yesterday called GPT-5-Codex-Mini, which they describe as "a more compact and cost-efficient version of GPT-5-Codex". It’s currently only available via their Codex CLI tool and VS Code extension, with proper API access "coming soon". I decided to use Codex to reverse engineer the Codex CLI tool and give me the ability to prompt the new model directly.

Pelican on a Bike—Raytracer Edition

beetle_b ran this prompt against a bunch of recent LLMs:

Write a POV-Ray file that shows a pelican riding on a bicycle.

This turns out to be a harder challenge than SVG, presumably because there are fewer examples of POV-Ray in the training data:

Most produced a script that failed to parse. I would paste the error back into the chat and let it attempt a fix.

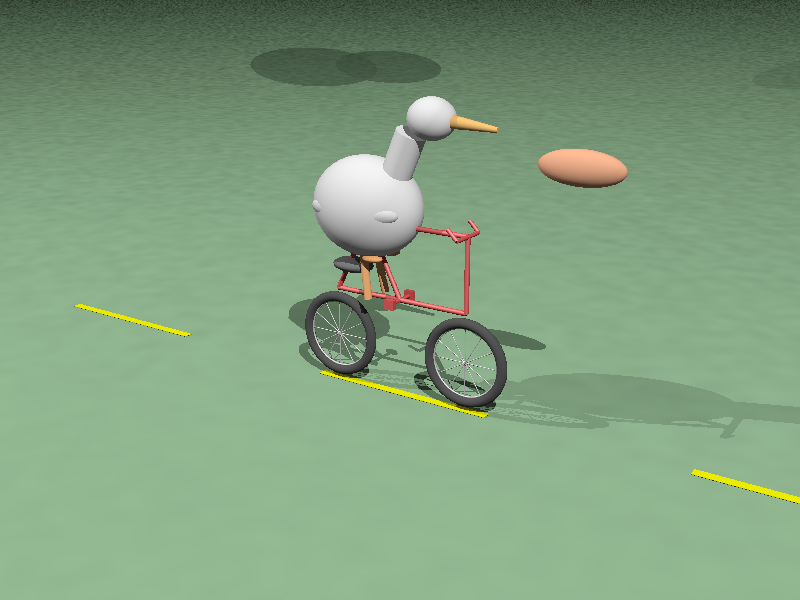

The results are really fun though! A lot of them end up accompanied by a weird floating egg for some reason - here's Claude Opus 4:

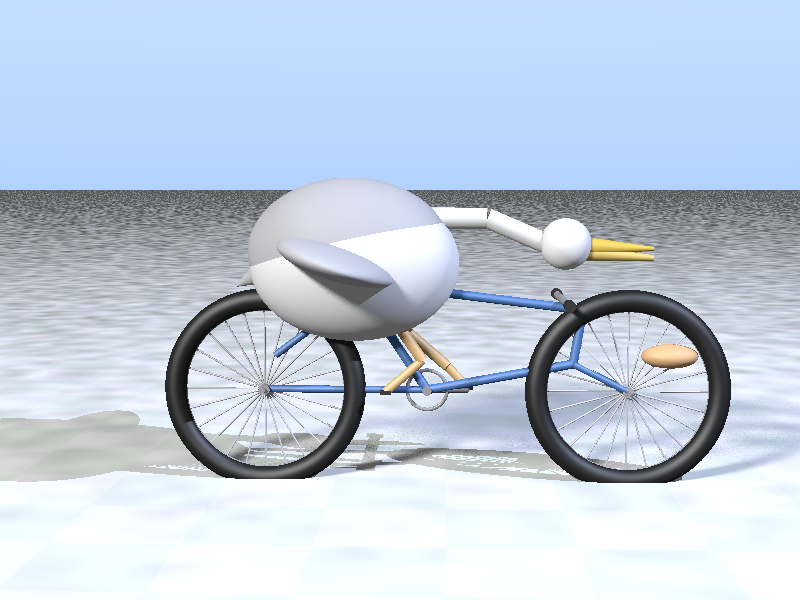

I think the best result came from GPT-5 - again with the floating egg though!

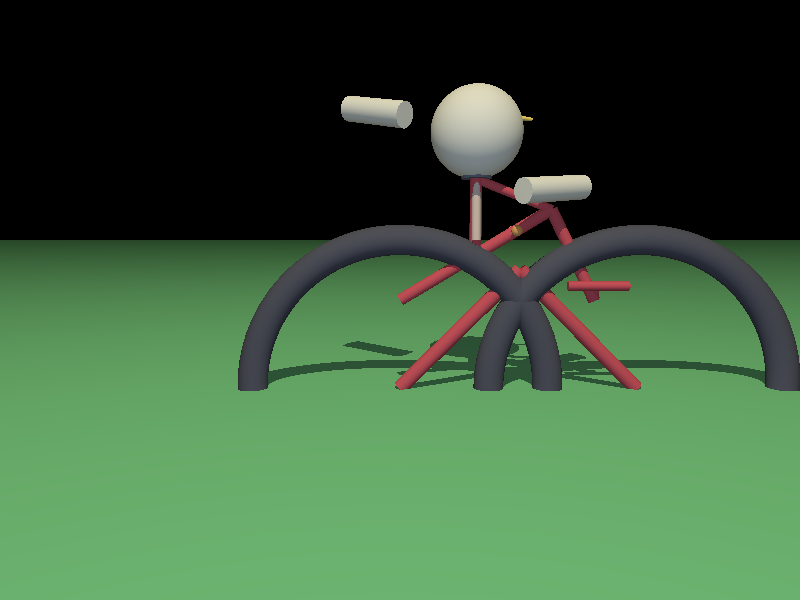

I decided to try this on the new gpt-5-codex-mini, using the trick I described yesterday. Here's the code it wrote.

```shell
./target/debug/codex prompt -m gpt-5-codex-mini \
  "Write a POV-Ray file that shows a pelican riding on a bicycle."
```

It turns out you can render POV files on macOS like this:

```shell
brew install povray
povray demo.pov # produces demo.png
```
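POV-Ray also accepts command-line switches for the output file, image size and format. Here is a minimal sketch of a shell wrapper around them. The `render_pov` function name, its 800x600 defaults and the `POVRAY` override variable are illustrative assumptions, not part of POV-Ray or of the workflow described above:

```shell
#!/bin/sh
# Hypothetical helper: render a POV-Ray scene to a PNG next to the input file.
# Assumes `povray` is on PATH (e.g. installed with `brew install povray`);
# set POVRAY to use a different binary instead.
render_pov() {
  input="$1"
  width="${2:-800}"           # assumed default width in pixels
  height="${3:-600}"          # assumed default height in pixels
  output="${input%.pov}.png"  # demo.pov -> demo.png

  # +I/+O: input and output files, +W/+H: image size,
  # +FN: PNG output, +A: antialiasing on, -D: don't open a preview window
  "${POVRAY:-povray}" +I"$input" +O"$output" +W"$width" +H"$height" +FN +A -D
}
```

With that function loaded, `render_pov demo.pov 1024 768` would write `demo.png` at 1024 by 768 pixels.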

The code GPT-5 Codex Mini created didn't quite work, so I round-tripped it through Sonnet 4.5 via Claude Code a couple of times - transcript here. Once it had fixed the errors I got this:

That's significantly worse than the one beetle_b got from GPT-5 Mini!