Thursday, 19th June 2025

Radiology has embraced AI enthusiastically, and the labor force is growing nevertheless. The augmentation-not-automation effect of AI is despite the fact that AFAICT there is no identified "task" at which human radiologists beat AI. So maybe the "jobs are bundles of tasks" model in labor economics is incomplete. [...]

Can you break up your own job into a set of well-defined tasks such that if each of them is automated, your job as a whole can be automated? I suspect most people will say no. But when we think about other people's jobs that we don't understand as well as our own, the task model seems plausible because we don't appreciate all the nuances.

I continue to have fun running fantasy cooking prompts through LLMs - this time I tried "Give me a wildly ambitious recipe for zucchini cooked three ways" followed by "Go more ambitious" and now I need to get myself a centrifuge to help spherify my clarified zucchini consommé.

How OpenElections Uses LLMs. The OpenElections project collects detailed election data for the USA, all the way down to the precinct level. This is a surprisingly hard problem: while county and state-level results are widely available, precinct-level results are published in thousands of different ad-hoc ways and rarely aggregated once the election result has been announced.

A lot of those precinct results are published as image-filled PDFs.

Derek Willis has recently started leaning on Gemini to help parse those PDFs into CSV data:

For parsing image PDFs into CSV files, Google’s Gemini is my model of choice, for two main reasons. First, the results are usually very, very accurate (with a few caveats I’ll detail below), and second, Gemini’s large context window means it’s possible to work with PDF files that can be multiple MBs in size.

In this piece he shares the process and prompts for a real-world, expert-level data entry project, assisted by Gemini.

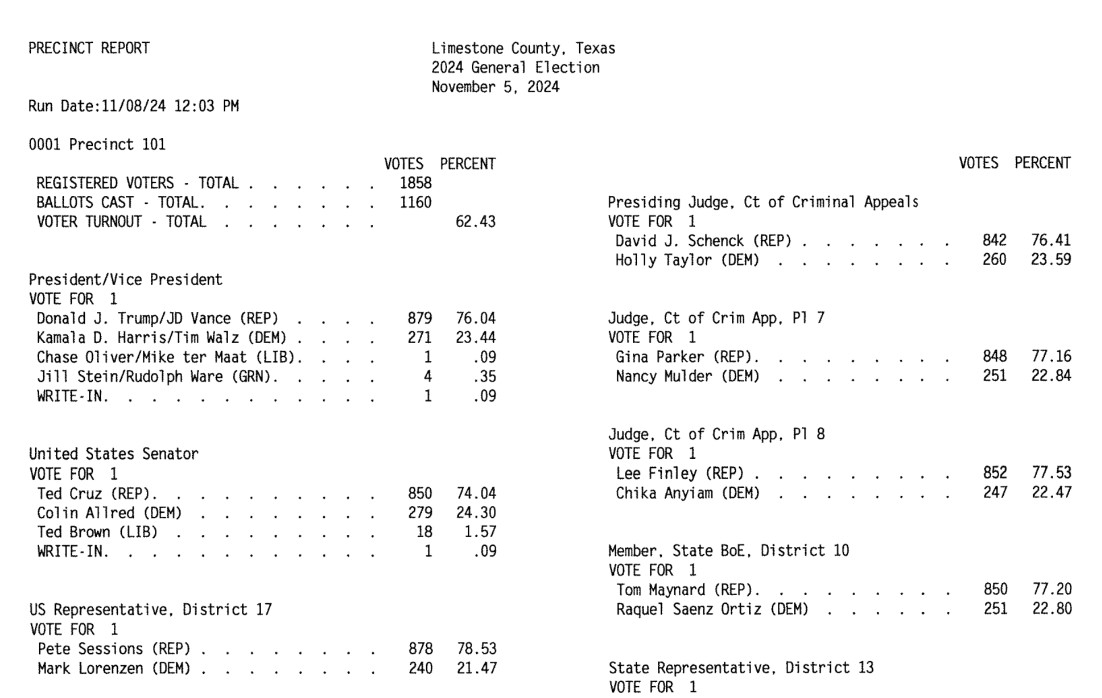

This example from Limestone County, Texas is a great illustration of how tricky this problem can get. Getting traditional OCR software to correctly interpret multi-column layouts like this always requires some level of manual intervention:

Derek's prompt against Gemini 2.5 Pro throws in an example, some special instructions and a note about the two column format:

Produce a CSV file from the attached PDF based on this example:

county,precinct,office,district,party,candidate,votes,absentee,early_voting,election_day

Limestone,Precinct 101,Registered Voters,,,,1858,,,

Limestone,Precinct 101,Ballots Cast,,,,1160,,,

Limestone,Precinct 101,President,,REP,Donald J. Trump,879,,,

Limestone,Precinct 101,President,,DEM,Kamala D. Harris,271,,,

Limestone,Precinct 101,President,,LIB,Chase Oliver,1,,,

Limestone,Precinct 101,President,,GRN,Jill Stein,4,,,

Limestone,Precinct 101,President,,,Write-ins,1,,,

Skip Write-ins with candidate names and rows with "Cast Votes", "Not Assigned", "Rejected write-in votes", "Unresolved write-in votes" or "Contest Totals". Do not extract any values that end in "%"

Use the following offices:

President/Vice President -> President

United States Senator -> U.S. Senate

US Representative -> U.S. House

State Senator -> State Senate

Quote all office and candidate values. The results are split into two columns on each page; parse the left column first and then the right column.

After a spot-check and a few manual tweaks, the result against a 42-page PDF was exactly what was needed.
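Part of a spot-check like that can be automated. Here's a minimal sketch of one possible sanity check, not Derek's actual process: it flags any precinct where the candidate votes for an office add up to more than that precinct's "Ballots Cast" row. The function names `parseCsvLine` and `findOvervotes` are my own, and the check assumes vote-for-one contests (a multi-seat race could legitimately exceed ballots cast).

```javascript
// Split one CSV line into fields, honoring double-quoted values.
function parseCsvLine(line) {
  const fields = [];
  let current = "";
  let inQuotes = false;
  for (let i = 0; i < line.length; i++) {
    const ch = line[i];
    if (inQuotes) {
      if (ch === '"' && line[i + 1] === '"') {
        current += '"'; // doubled quote inside a quoted field
        i++;
      } else if (ch === '"') {
        inQuotes = false;
      } else {
        current += ch;
      }
    } else if (ch === '"') {
      inQuotes = true;
    } else if (ch === ",") {
      fields.push(current);
      current = "";
    } else {
      current += ch;
    }
  }
  fields.push(current);
  return fields;
}

// Return one message per precinct/office whose votes exceed "Ballots Cast".
// Assumption: each contest is vote-for-one.
function findOvervotes(csvText) {
  const lines = csvText.split(/\r?\n/).filter((l) => l.trim() !== "");
  const header = parseCsvLine(lines[0]);
  const col = (name) => header.indexOf(name);
  const ballots = new Map(); // precinct -> ballots cast
  const totals = new Map(); // "precinct|office" -> summed candidate votes
  for (const line of lines.slice(1)) {
    const row = parseCsvLine(line);
    const precinct = row[col("precinct")];
    const office = row[col("office")];
    const votes = Number(row[col("votes")]);
    if (office === "Ballots Cast") {
      ballots.set(precinct, votes);
    } else if (office !== "Registered Voters") {
      const key = `${precinct}|${office}`;
      totals.set(key, (totals.get(key) || 0) + votes);
    }
  }
  const problems = [];
  for (const [key, total] of totals) {
    const [precinct, office] = key.split("|");
    const cast = ballots.get(precinct);
    if (cast !== undefined && total > cast) {
      problems.push(`${precinct} ${office}: ${total} votes > ${cast} ballots cast`);
    }
  }
  return problems;
}

// The example rows from the prompt above.
const sampleCsv = [
  "county,precinct,office,district,party,candidate,votes,absentee,early_voting,election_day",
  "Limestone,Precinct 101,Registered Voters,,,,1858,,,",
  "Limestone,Precinct 101,Ballots Cast,,,,1160,,,",
  "Limestone,Precinct 101,President,,REP,Donald J. Trump,879,,,",
  "Limestone,Precinct 101,President,,DEM,Kamala D. Harris,271,,,",
  "Limestone,Precinct 101,President,,LIB,Chase Oliver,1,,,",
  "Limestone,Precinct 101,President,,GRN,Jill Stein,4,,,",
  "Limestone,Precinct 101,President,,,Write-ins,1,,,",
].join("\n");

console.log(findOvervotes(sampleCsv)); // [] since 1156 presidential votes <= 1160 ballots
```

A check like this only catches one class of transcription error, so it complements a human spot-check against the source PDF rather than replacing it.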

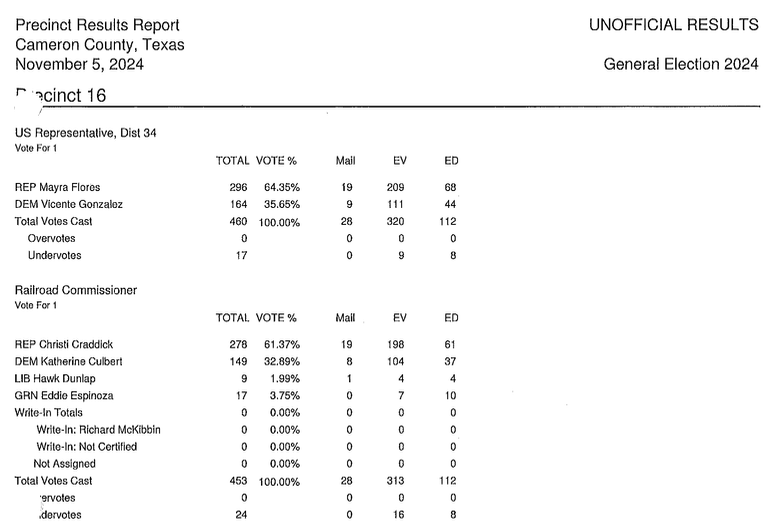

How about something harder? The results for Cameron County came as more than 600 pages and looked like this - note the hole-punch holes that obscure some of the text!

This file had to be split into chunks of 100 pages each, and the entire process still took a full hour of work - but the resulting table matched up with the official vote totals.
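The chunking step is simple arithmetic. Here's a small sketch that computes the page ranges for a split like that. The `pageChunks` helper is hypothetical, and the 620 page count is a stand-in I made up, since the piece only says "more than 600 pages". Actually cutting the PDF at those boundaries would need a separate PDF tool.

```javascript
// Compute 1-based, inclusive page ranges for splitting a document into chunks.
function pageChunks(totalPages, chunkSize = 100) {
  if (!Number.isInteger(totalPages) || totalPages < 1) {
    throw new RangeError("totalPages must be a positive integer");
  }
  if (!Number.isInteger(chunkSize) || chunkSize < 1) {
    throw new RangeError("chunkSize must be a positive integer");
  }
  const chunks = [];
  for (let first = 1; first <= totalPages; first += chunkSize) {
    chunks.push({ first, last: Math.min(first + chunkSize - 1, totalPages) });
  }
  return chunks;
}

// 620 is a made-up page count for illustration.
console.log(pageChunks(620)); // 7 ranges: 1-100, 101-200, ... and finally 601-620
```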

I love how realistic this example is. AI data entry like this isn't a silver bullet - there's still a bunch of work needed to verify the results and creative thinking needed to work through limitations - but it represents a very real improvement in how small teams can take on projects of this scale.

In the six weeks since we started working on Texas precinct results, we’ve been able to convert them for more than half of the state’s 254 counties, including many image PDFs like the ones on display here. That pace simply wouldn’t be possible with data entry or traditional OCR software.

Here's a tip that works on YouTube and almost any other web page that shows you a video. You can increase the playback rate beyond the usually-exposed 2x by running this in your browser DevTools console:

document.querySelector('video').playbackRate = 2.5

I find this is the fastest I can reasonably watch most videos at, with subtitles on to help my comprehension - it turns a 40 minute video into just 16 minutes, short enough that I don't feel too guilty taking time off whatever else I'm doing to watch it!
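If a page has more than one video, a slightly longer snippet covers all of them. This is a sketch: `setPlaybackRate` and `watchMinutes` are names I picked, and passing the document in as an argument is just so the function can be exercised outside a browser too.

```javascript
// Set the playback rate on every <video> element found in a document-like
// object. Returns how many videos were updated.
function setPlaybackRate(doc, rate) {
  const videos = Array.from(doc.querySelectorAll("video"));
  for (const video of videos) {
    video.playbackRate = rate;
  }
  return videos.length;
}

// Minutes of wall-clock time needed to watch a video at a given rate.
function watchMinutes(durationMinutes, rate) {
  return durationMinutes / rate;
}

// In a browser DevTools console this applies 2.5x to every video on the page.
if (typeof document !== "undefined") {
  console.log(setPlaybackRate(document, 2.5));
}

console.log(watchMinutes(40, 2.5)); // 16
```

Some players reset the rate when a new video loads, so you may need to run it again after navigating.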

Cato CTRL™ Threat Research: PoC Attack Targeting Atlassian’s Model Context Protocol (MCP) Introduces New “Living off AI” Risk. Stop me if you've heard this one before:

- A threat actor (acting as an external user) submits a malicious support ticket.

- An internal user, linked to a tenant, invokes an MCP-connected AI action.

- A prompt injection payload in the malicious support ticket is executed with internal privileges.

- Data is exfiltrated to the threat actor’s ticket or altered within the internal system.

It's the classic lethal trifecta exfiltration attack, this time against Atlassian's new MCP server, which they describe like this:

With our Remote MCP Server, you can summarize work, create issues or pages, and perform multi-step actions, all while keeping data secure and within permissioned boundaries.

That's a single MCP that can access private data, consume untrusted data (from public issues) and communicate externally (by posting replies to those public issues). Classic trifecta.

It's not clear to me if Atlassian have responded to this report with any form of a fix. It's hard to know what they can fix here - any MCP that combines the three trifecta ingredients is insecure by design.

My recommendation would be to shut down any potential exfiltration vectors - in this case that would mean preventing the MCP from posting replies that could be visible to an attacker without at least gaining human-in-the-loop confirmation first.
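To make that recommendation concrete, here's a rough sketch of a confirmation gate wrapped around a tool runner. Everything in it is hypothetical: the tool names, the `withHumanConfirmation` wrapper and the shape of the return values are invented for illustration and are not Atlassian's API or part of the MCP specification. A real client would likely make the confirmation step asynchronous.

```javascript
// Hypothetical names of tools whose output an outside party could read.
const EXTERNALLY_VISIBLE_TOOLS = new Set(["post_ticket_reply", "add_public_comment"]);

// Wrap a tool runner so that externally visible actions only run after a
// human has approved them. askHuman(toolName, args) must return true or false.
function withHumanConfirmation(runTool, askHuman) {
  return function guardedRunTool(toolName, args) {
    if (EXTERNALLY_VISIBLE_TOOLS.has(toolName) && !askHuman(toolName, args)) {
      return { status: "blocked", toolName };
    }
    return { status: "ok", result: runTool(toolName, args) };
  };
}

// Demo with stand-ins: a fake tool runner and a reviewer who declines everything.
const executedTools = [];
const fakeRunTool = (toolName, args) => {
  executedTools.push(toolName);
  return `ran ${toolName}`;
};
const denyAll = () => false;
const guardedRun = withHumanConfirmation(fakeRunTool, denyAll);

console.log(guardedRun("summarize_issue", { id: 1 })); // runs without asking
console.log(guardedRun("post_ticket_reply", { body: "hello" })); // blocked by the reviewer
```

A stricter variant would flip the default: list the tools known to be internal-only and require confirmation for everything else, so a newly added tool can't silently become an exfiltration vector.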