Weeknotes: tableau-to-sqlite, django-sql-dashboard

14th March 2021

This week I started a limited production run of my new backend for Vaccinate CA calling, built a tableau-to-sqlite import tool and started working on a subset of Datasette for PostgreSQL and Django called django-sql-dashboard.

Vaccinate CA backend progress

My key project at the moment is building out a new Django-powered backend for the Vaccinate CA call reporting application—where real human beings constantly call pharmacies and medical sites around California to build a comprehensive guide to where the Covid vaccine is available.

As of this week, the new backend is running for a subset of the overall call volume. It’s exciting! It’s also a reminder that the single hardest piece of logic in any crowdsourcing-style application is the logic that gives a human being their next task. I’m continuing to evolve that logic, which is somewhat harder when the system I’m modifying is actively being used.

tableau-to-sqlite

The Vaccinate CA project is constantly on the lookout for new sources of data that might indicate locations that have the vaccine. Some of this data is locked up in Tableau dashboards, which are notoriously tricky to scrape.

When faced with problems like this, I frequently turn to GitHub code search: I’ll find a unique looking token in the data I’m trying to wrangle and run searches to see if anyone on GitHub has written code to handle it.

In doing so, I came across Tableau Scraper—an open source Python library by Bertrand Martel which does a fantastic job of turning a Tableau dashboard into a Pandas DataFrame.

Writing a Pandas DataFrame to a SQLite database is a one-liner: df.to_sql("table-name", sqlite3.connect(db_path)). So I spun up a quick command-line wrapper around the TableuaScraper class called tableau-to-sqlite which lets you do the following:

% tableau-to-sqlite tableau.db https://results.mo.gov/t/COVID19/views/VaccinationsDashboard/Vaccinations

Considering how much valuable data is trapped in government Tableau dashboards I’m really excited to point this tool at more sources. The README includes tips on combining this with sqlite-utils to get a CSV or JSON export which can then be tracked using Git scraping.

django-sql-dashboard

I’m continuing to ponder the idea of getting Datasette to talk to PostgreSQL in addition to SQLite, but in the meantime I have a growing Django application that runs against PostgreSQL and a desire to build some quick dashboards against it.

One of Datasette’s key features is the ability to bookmark a read-only SQL query and share that link with other people. It’s SQL injection attacks repurposed as a feature, and it’s proved to be incredibly useful over the past few years.

Here’s an example from earlier this week where I wanted to see how many GitHub issues I had opened and then closed within 60 seconds. The answer is 17!

django-sql-dashboard is my highly experimental exploration of what that idea looks like against a PostgreSQL database, wrapped inside a Django application

The key idea is to support executing read-only PostgreSQL statements with a strict timelimit (set using PostgreSQL’s statement_timeout setting, described here). Users can execute SQL directly, bookmark and share queries and save them to a database table in order to construct persistent dashboards.

It’s very early days for the project yet, and I’m still not 100% convinced it’s a good idea, but early signs are very promising.

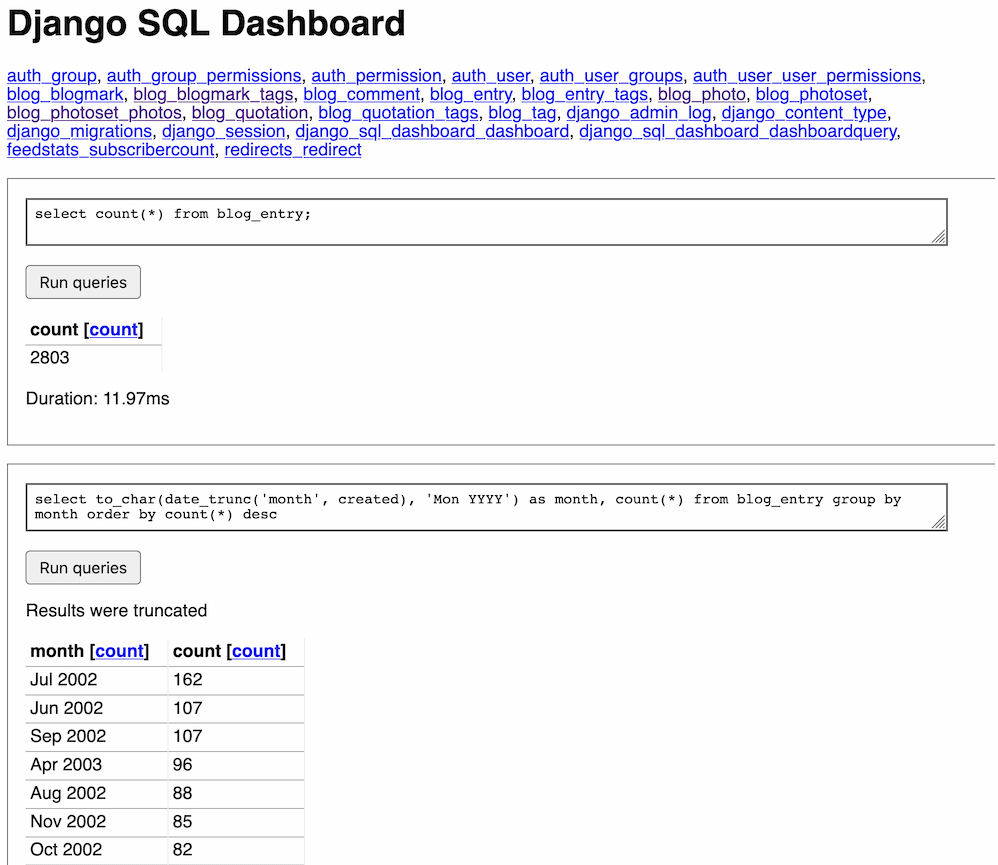

A fun feature is that it lets you have more than one SQL query on the same page. Here’s what it looks like running against my blog’s database, showing a count query and the months in which I wrote the most blog entries:

Releases this week

-

hacker-news-to-sqlite: 0.4—(5 releases total)—2021-03-13

Create a SQLite database containing data pulled from Hacker News -

django-sql-dashboard: 0.1a3—(3 releases total)—2021-03-13

Django app for building dashboards using raw SQL queries -

datasette-ripgrep: 0.7—(11 releases total)—2021-03-11

Web interface for searching your code using ripgrep, built as a Datasette plugin -

tableau-to-sqlite: 0.2—(3 releases total)—2021-03-11

Fetch data from Tableau into a SQLite database

TIL this week

- Pretty-printing all read-only JSON in the Django admin

- Flattening nested JSON objects with jq

- Converting no-decimal-point latitudes and longitudes using jq

- How to almost get facet counts in the Django admin

- Querying for GitHub issues open for less than 60 seconds

- Querying for items stored in UTC that were created on a Thursday in PST

More recent articles

- Claude Opus 4.8: "a modest but tangible improvement" - 28th May 2026

- I think Anthropic and OpenAI have found product-market fit - 27th May 2026

- Notes on Pope Leo XIV's encyclical on AI - 25th May 2026