| https://simonwillison.net/b/9460 |

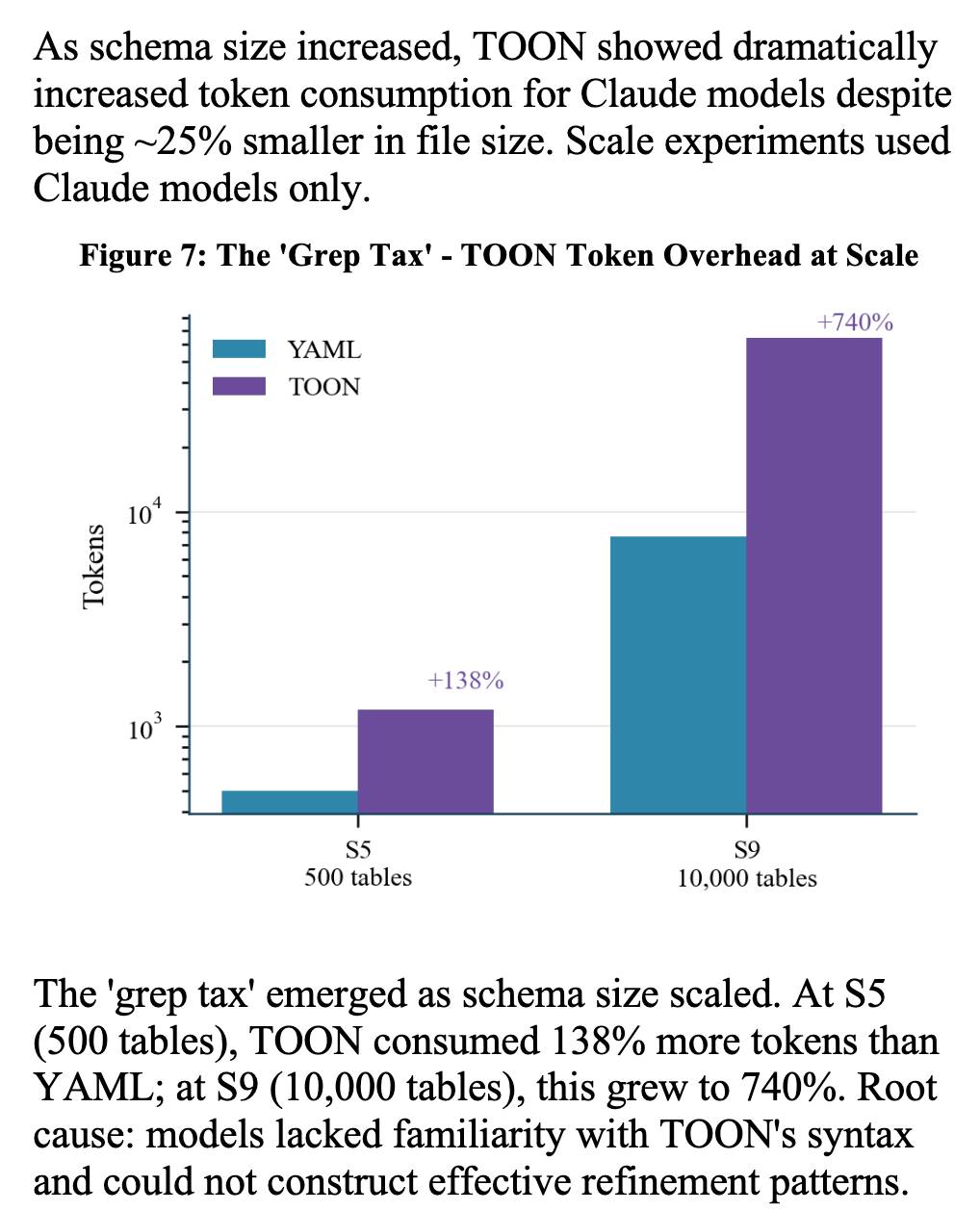

https://martinfowler.com/bliki/MythicalManMonth.html |

Mythical Man Month |

Martin Fowler highlights this key idea from The Mythical Man-Month (Fred Brooks, 1975, still impressively relevant 50 years later):

> I will contend that conceptual integrity is the most important consideration in system design. It is better to have a system omit certain anomalous features and improvements, but to reflect one set of design ideas, than to have one that contains many good but independent and uncoordinated ideas.

**Conceptual integrity** is exactly the missing piece I've been trying to nail down in understanding why being able to spit out new features so quickly offers new challenges when working with coding agents. |

- null - |

- null - |

2026-05-10 15:36:19+00:00 |

- null - |

True |

| https://simonwillison.net/b/9458 |

https://twitter.com/trq212/status/2052809885763747935 |

Using Claude Code: The Unreasonable Effectiveness of HTML |

Thought-provoking piece by Thariq Shihipar (on the Claude Code team at Anthropic) advocating for HTML over Markdown as an output format to request from Claude.

The article is crammed with interesting examples (collected on [this site](https://thariqs.github.io/html-effectiveness/)) and prompt suggestions like this one:

> `Help me review this PR by creating an HTML artifact that describes it. I'm not very familiar with the streaming/backpressure logic so focus on that. Render the actual diff with inline margin annotations, color-code findings by severity and whatever else might be needed to convey the concept well.`

I've been defaulting to asking for most things in Markdown since the GPT-4 days, when the 8,192 token limit meant that Markdown's token-efficiency over HTML was extremely worthwhile.

Thariq's piece here has caused me to reconsider that, especially for output. Asking Claude for an explanation in HTML means it can drop in SVG diagrams, interactive widgets, in-page navigation and all sorts of other neat ways of making the information more pleasant to navigate.

I wrote about [Useful patterns for building HTML tools](https://simonwillison.net/2025/Dec/10/html-tools/) last December, but that was focused very much on interactive utilities like the ones on my [tools.simonwillison.net](https://tools.simonwillison.net/) site. I'm excited to start experimenting more with rich HTML explanations in response to ad-hoc prompts.

<h4 id="trying-this-out">Trying this out on copy.fail</h4>

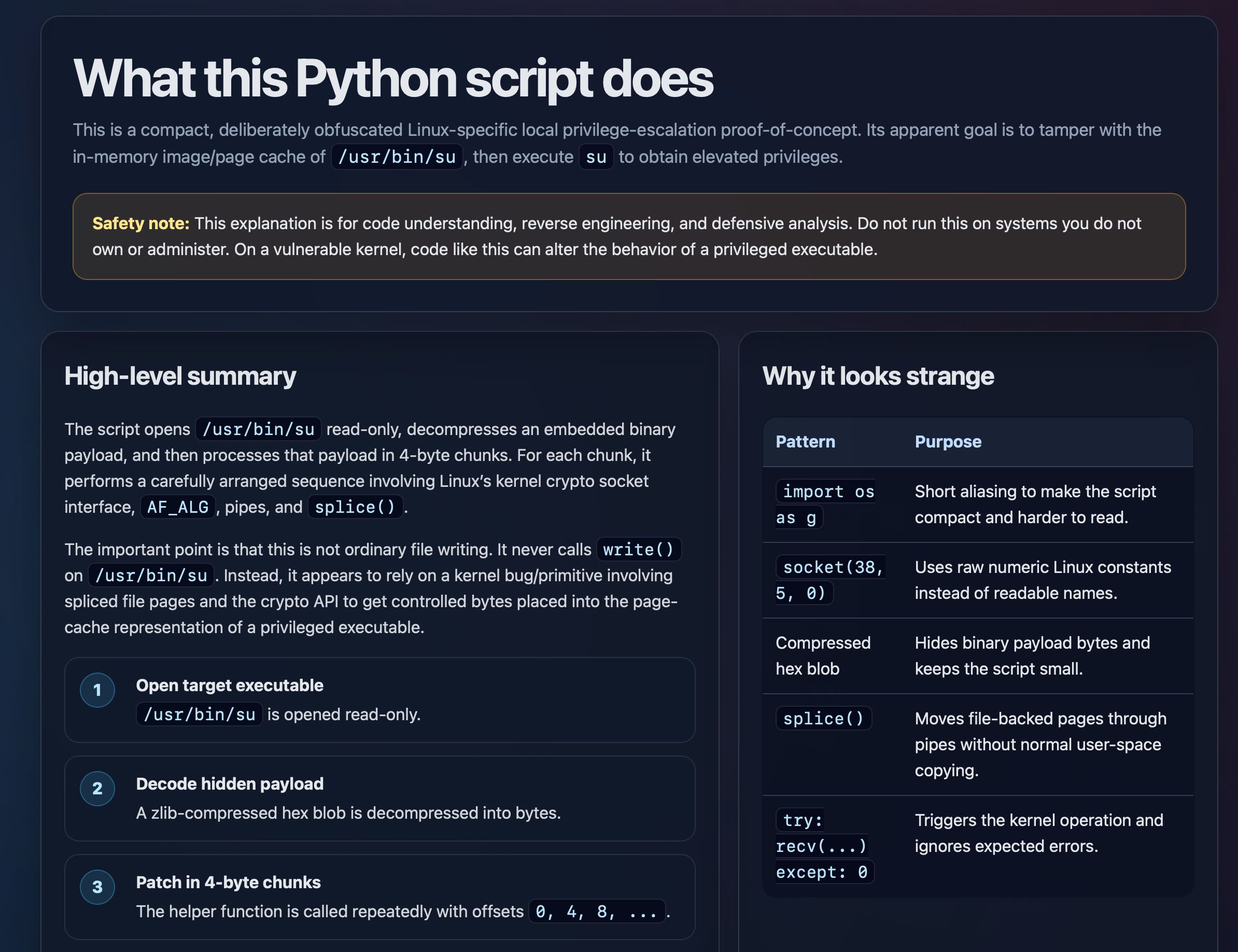

[copy.fail](https://copy.fail/) describes a recently discovered Linux security exploit, including a proof of concept distributed as obfuscated Python.

I tried having GPT-5.5 create an HTML explanation of the exploit like this:

> `curl https://copy.fail/exp | llm -m gpt-5.5 -s 'Explain this code in detail. Reformat it, expand out any confusing bits and go deep into what it does and how it works. Output HTML, neatly styled and using capabilities of HTML and CSS and JavaScript to make the explanation rich and interactive and as clear as possible'`

Here's [the resulting HTML page](https://gisthost.github.io/?ae53e3461ffdbfd0826156aacf025c7e). It's pretty good, though I should have emphasized explaining the exploit over the Python harness around it.

|

- null - |

- null - |

2026-05-08 21:00:11+00:00 |

https://static.simonwillison.net/static/2026/python-script-explainer.jpg |

True |

| https://simonwillison.net/b/9442 |

https://hacks.mozilla.org/2026/05/behind-the-scenes-hardening-firefox/ |

Behind the Scenes Hardening Firefox with Claude Mythos Preview |

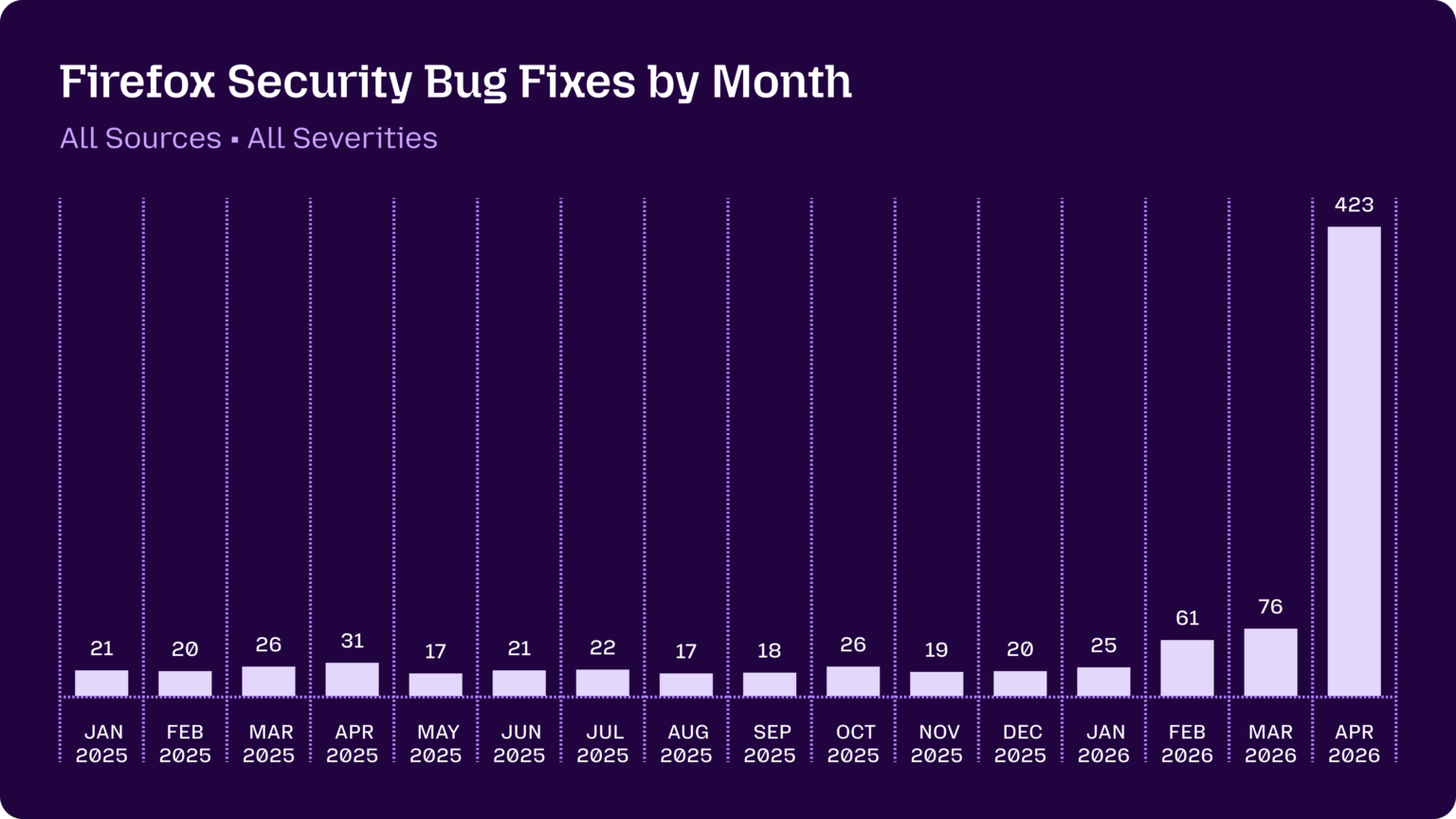

Fascinating, in-depth details on how Mozilla used their access to the Claude Mythos preview to locate and then fix hundreds of vulnerabilities in Firefox:

> **Suddenly, the bugs are very good**
>
> Just a few months ago, AI-generated security bug reports to open source projects were mostly known for being unwanted slop. Dealing with reports that look plausibly correct but are wrong imposes an asymmetric cost on project maintainers: it’s cheap and easy to prompt an LLM to find a “problem” in code, but slow and expensive to respond to it.
>
> It is difficult to overstate how much this dynamic changed for us over a few short months. This was due to a combination of two main factors. First, the models got a lot more capable. Second, we dramatically improved our techniques for *harnessing* these models — steering them, scaling them, and stacking them to generate large amounts of signal and filter out the noise.

They include some detailed bug descriptions too, such as a 20-year-old XSLT bug and a 15-year-old bug in the `<legend>` element.

A lot of the attempts made by the harness were blocked by Firefox's existing defense-in-depth measures, which is reassuring.

Mozilla were fixing around 20-30 security bugs in Firefox per month through 2025. That jumped to 423 in April.

|

https://lobste.rs/s/7zppv1/behind_scenes_hardening_firefox_with |

Lobste.rs |

2026-05-07 17:56:25+00:00 |

https://static.simonwillison.net/static/2026/firefox-security.webp |

True |

| https://simonwillison.net/b/9440 |

https://andonlabs.com/blog/ai-cafe-stockholm |

Our AI started a cafe in Stockholm |

Andon Labs previously [started an AI-run retail store](https://andonlabs.com/blog/andon-market-launch) in San Francisco. Now they're running a similar experiment in Stockholm, Sweden, only this time it's a cafe.

These experiments are interesting, and often throw out amusing anecdotes:

> During the first week of inventory, Mona ordered 120 eggs even though the café has no stove. When the staff told her they couldn’t cook them, she suggested using the high-speed oven, until they pointed out the eggs would likely explode. She also tried to solve the problem of fresh tomatoes being spoiled too fast by ordering 22.5 kg of canned tomatoes for the fresh sandwiches. The baristas eventually started a “Hall of Shame”, a shelf visible to customers with all the weird things Mona ordered, including 6,000 napkins, 3,000 nitrile gloves, 9L coconut milk, and industrial-sized trash bags.

Where they lose their shine is when these AI managers start wasting the time of human beings who have *not* opted into the experiment:

> She also successfully applied for an outdoor seating permit through the Police e-service, which didn’t require BankID. Her first submission included a sketch she had generated herself, despite having never seen the street outside the café. Unsurprisingly, the Police sent it back for revision. [...]
>
> When she makes a mistake, she often sends multiple emails to suppliers with the subject “EMERGENCY” to cancel or change the order.

I don't think it's ethical to run experiments like this that affect real-world systems and steal time from people.

I'm reminded of the incident last year where the AI Village experiment [infuriated Rob Pike](https://simonwillison.net/2025/Dec/26/slop-acts-of-kindness/) by sending him unsolicited gratitude emails as an "act of kindness". That was just an unwanted email - asking suppliers to correct mistakes that were made without a human-in-the-loop or wasting police time with slop diagrams feels a whole lot worse to me.

I think experiments like this need to keep their own human operators in-the-loop for outbound actions that affect other people. |

https://news.ycombinator.com/item?id=48028289 |

Hacker News |

2026-05-05 22:14:21+00:00 |

- null - |

True |

| https://simonwillison.net/b/9439 |

https://simonw.github.io/granite-4.1-3b-gguf-pelicans/ |

Granite 4.1 3B SVG Pelican Gallery |

IBM released their [Granite 4.1 family](https://research.ibm.com/blog/granite-4-1-ai-foundation-models) of LLMs a few days ago. They're Apache 2.0 licensed and come in 3B, 8B and 30B sizes.

[Granite 4.1 LLMs: How They’re Built](https://huggingface.co/blog/ibm-granite/granite-4-1) by Granite team member Yousaf Shah describes the training process in detail.

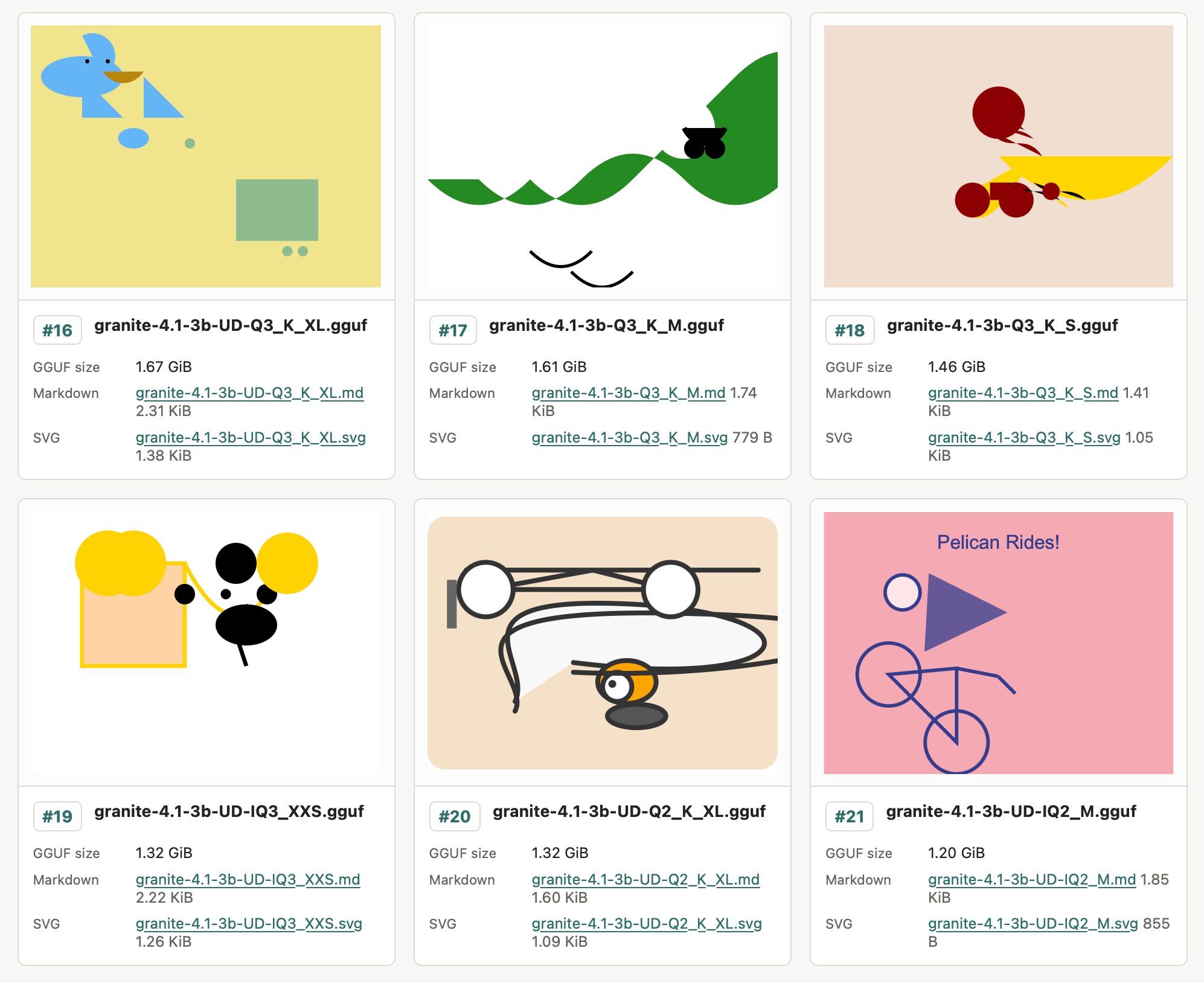

Unsloth released the [unsloth/granite-4.1-3b-GGUF](https://huggingface.co/unsloth/granite-4.1-3b-GGUF) collection of GGUF encoded quantized variants of the 3B model - 21 different model files ranging in size from 1.2GB to 6.34GB.

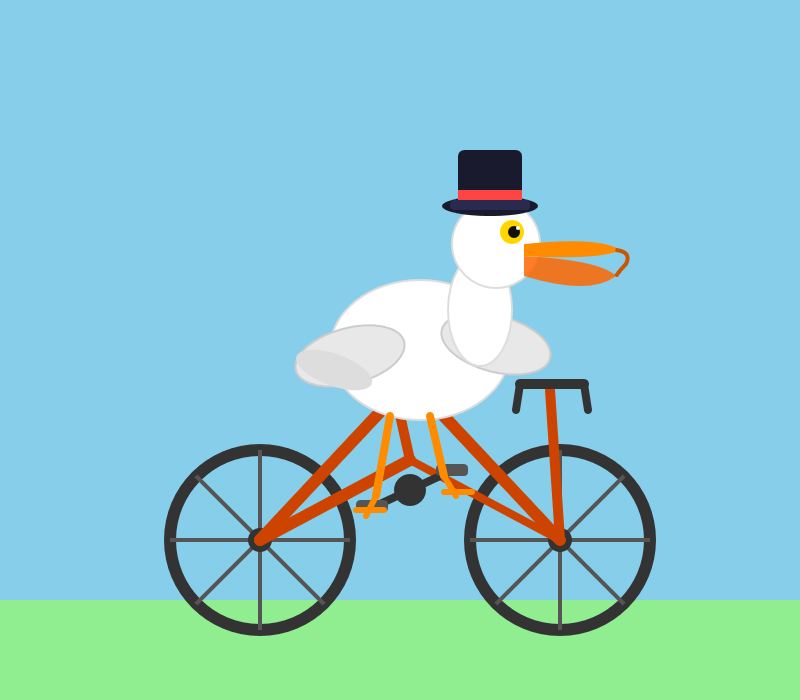

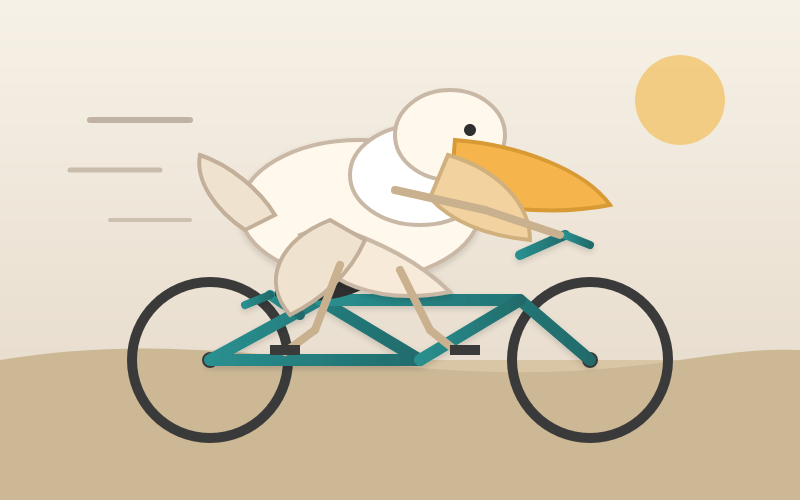

All 21 of those Unsloth files add up to 51.3GB, which inspired me to finally try an experiment I've been wanting to run for ages: prompting "Generate an SVG of a pelican riding a bicycle" against different sized quantized variants of the same model to see what the results would look like.

Honestly, [the results](https://simonw.github.io/granite-4.1-3b-gguf-pelicans/) are less interesting than I expected. There's no distinguishable pattern relating quality to size - they're all pretty terrible!

I'll likely try this again in the future with a model that's better at drawing pelicans. |

- null - |

- null - |

2026-05-04 23:49:24+00:00 |

https://static.simonwillison.net/static/2026/granite-3B-card.jpg |

True |

| https://simonwillison.net/b/9438 |

https://simonwillison.net/elsewhere/sighting/ |

/elsewhere/sightings/ |

I have a new camera (a Canon R6 Mark II) so I'm taking a lot more photos of birds. I share my best wildlife photos on [iNaturalist](https://www.inaturalist.org/), and based on yesterday's [successful prototype](https://simonwillison.net/2026/May/1/inat-sightings/) I decided to add those to my blog.

<img class="blogmark-image" src="https://static.simonwillison.net/static/2026/beats-sightings.jpeg" alt="Screenshot of a &quot;Sightings&quot; webpage with a search bar and RSS icon, showing &quot;Filters: Sorted by date&quot; and &quot;208 results page 1 / 7 next » last »»&quot;. First entry: SIGHTING 7:51 PM — Acorn Woodpecker, with two photos labeled &quot;Acorn Woodpecker&quot; of black and white woodpeckers with red caps on tree branches, dated 2nd May 2026. Second entry: SIGHTING 10:08 AM – 11:17 AM — Acorn Woodpecker, Western Fence Lizard, Osprey, with three photos labeled &quot;Acorn Woodpecker&quot; (bird on bare branches against blue sky), &quot;Wester...&quot; (lizard on tree bark), and &quot;Osprey&quot; (nest on a utility pole), dated 1st May 2026. Third entry: SIGHTING 11:11 AM — White-crowned Sparrow, with a photo labeled &quot;White-crowned Sparrow&quot; of a sparrow with black and white striped head singing with open beak, dated 30th Apr 2026.">

I built this feature on my phone using Claude Code for web, as an extension of my [beats system](https://simonwillison.net/2026/Feb/20/beats/) for syndicating external content. Here's [the PR](https://github.com/simonw/simonwillisonblog/pull/668) and prompt.

As with my other forms of incoming syndicated content, sightings show up on the homepage, the date archive pages, and in site search results.

I back-populated over a decade of iNaturalist sightings, which means that if you [search for lemur](https://simonwillison.net/search/?q=lemur) you'll see my lemur photos from Madagascar in 2019! |

- null - |

- null - |

2026-05-02 17:26:40+00:00 |

https://static.simonwillison.net/static/2026/sightings-beats-card.jpg.jpg |

True |

| https://simonwillison.net/b/9437 |

https://github.com/openai/codex/releases/tag/rust-v0.128.0 |

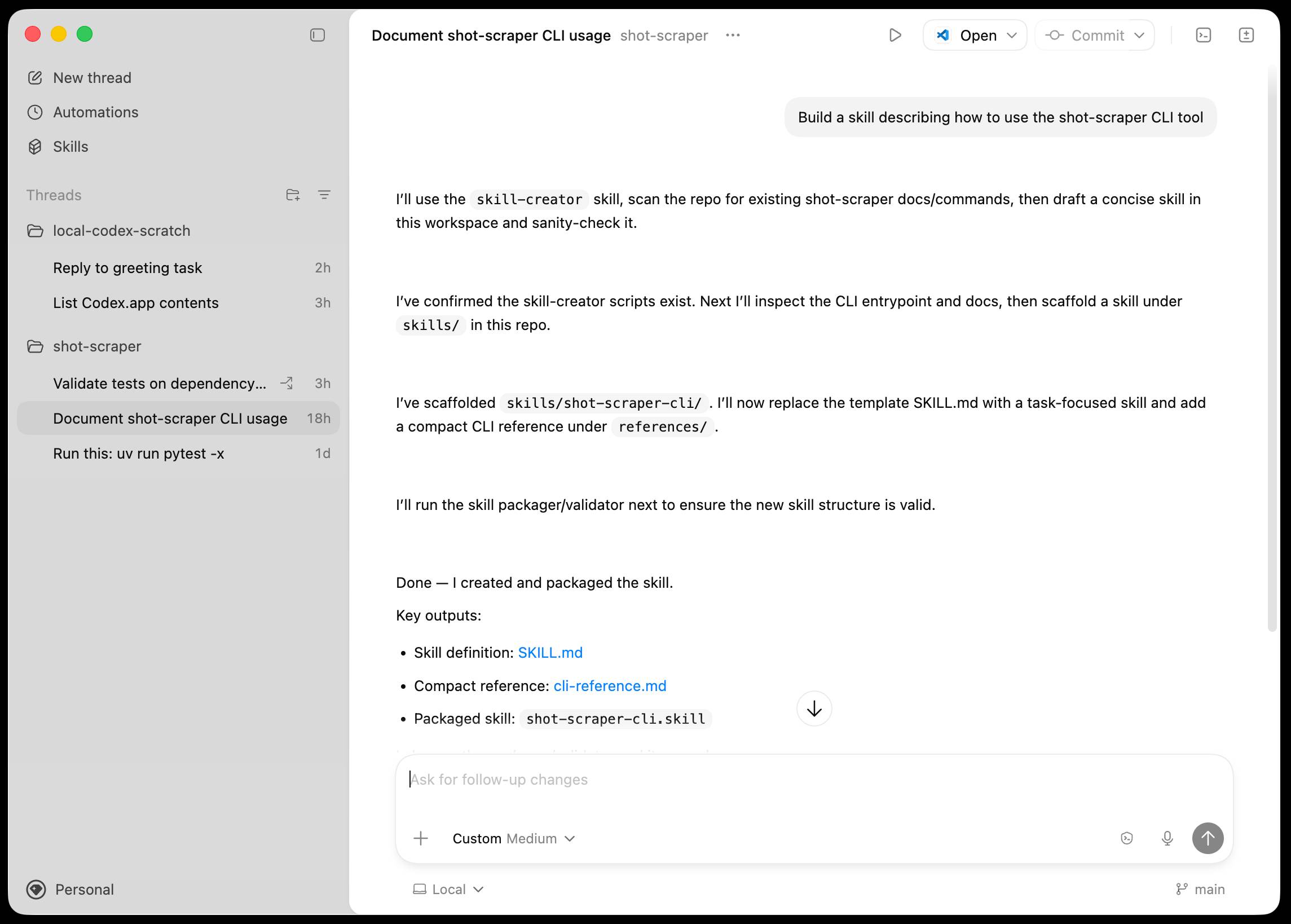

Codex CLI 0.128.0 adds /goal |

The latest version of OpenAI's Codex CLI coding agent adds their own version of the [Ralph loop](https://ghuntley.com/ralph/): you can now set a `/goal` and Codex will keep on looping until it evaluates that the goal has been completed... or the configured token budget has been exhausted.
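
The loop itself is simple enough to sketch. This is a minimal Python illustration under stated assumptions, not Codex's own (Rust) implementation: `run_turn` and `goal_met` are hypothetical callbacks standing in for one agent turn and for the model's judgement that the goal is complete, and each turn is assumed to report how many tokens it used.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class TurnResult:
    output: str
    tokens_used: int


def goal_loop(
    goal: str,
    run_turn: Callable[[str], TurnResult],   # hypothetical: runs one agent turn
    goal_met: Callable[[str, str], bool],    # hypothetical: model judges completion
    token_budget: int,
) -> tuple[str, int, str]:
    """Keep running turns until the goal is judged complete or the budget runs out.

    Returns (last_output, tokens_spent, reason_for_stopping).
    """
    spent = 0
    prompt = goal
    while True:
        result = run_turn(prompt)
        spent += result.tokens_used
        if goal_met(goal, result.output):
            return result.output, spent, "goal_complete"
        if spent >= token_budget:
            return result.output, spent, "budget_exhausted"
        # Illustrative continuation prompt injected at the end of each turn.
        prompt = f"Continue working toward the goal: {goal}"
```

Checking the goal before the budget means a turn that finishes the job is still reported as a success, even if it tips total spending over the limit.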

It looks like the feature is mainly implemented through the [goals/continuation.md](https://github.com/openai/codex/blob/6014b6679ffbd92eeddffa3ad7b4402be6a7fefe/codex-rs/core/templates/goals/continuation.md) and [goals/budget_limit.md](https://github.com/openai/codex/blob/6014b6679ffbd92eeddffa3ad7b4402be6a7fefe/codex-rs/core/templates/goals/budget_limit.md) prompts, which are automatically injected at the end of a turn. |

https://twitter.com/fcoury/status/2049917871799636201 |

@fcoury |

2026-04-30 23:23:17+00:00 |

- null - |

True |

| https://simonwillison.net/b/9436 |

https://www.aisi.gov.uk/blog/our-evaluation-of-openais-gpt-5-5-cyber-capabilities |

Our evaluation of OpenAI's GPT-5.5 cyber capabilities |

The UK's AI Security Institute [previously evaluated Claude Mythos](https://www.aisi.gov.uk/blog/our-evaluation-of-claude-mythos-previews-cyber-capabilities): now they've evaluated GPT-5.5 for finding security vulnerabilities and found it to be comparable to Mythos, but unlike Mythos it's generally available right now. |

- null - |

- null - |

2026-04-30 23:03:24+00:00 |

- null - |

True |

| https://simonwillison.net/b/9435 |

https://interconnected.org/home/2026/04/29/syndicating-vibes |

We need RSS for sharing abundant vibe-coded apps |

Matt Webb:

> I would love an RSS web feed for all those various tools and apps pages, each item with an “Install” button. (But install to where?)
>
> The lesson here is that when vibe-coding accelerates app development, apps become more personal, more situated, and more frequent. Shipping a tool or a micro-app is less like launching a website and more like posting on a blog.

This inspired me to [have Claude](https://github.com/simonw/simonwillisonblog/pull/665) add an Atom feed (and icon) to my [/elsewhere/tools/](https://simonwillison.net/elsewhere/tool/) page, which itself is populated by content from my [tools.simonwillison.net](https://tools.simonwillison.net/) site. |

- null - |

- null - |

2026-04-30 18:38:48+00:00 |

- null - |

True |

| https://simonwillison.net/b/9434 |

https://ichard26.github.io/blog/2026/04/whats-new-in-pip-26.1/ |

What's new in pip 26.1 - lockfiles and dependency cooldowns! |

Richard Si describes an excellent set of upgrades to Python's default `pip` tool for installing dependencies.

This version drops support for Python 3.9 - fair enough, since it's been EOL [since October](https://devguide.python.org/versions/). macOS still ships Python 3.9 as its default `python3`, so I tried out the new pip version against Python 3.14 like this:

```bash
uv python install 3.14
mkdir /tmp/experiment
cd /tmp/experiment
python3.14 -m venv venv
source venv/bin/activate
pip install -U pip
pip --version
```

This confirmed I had `pip 26.1` - then I tried out the new lock files:

```bash
pip lock datasette llm
```

This resolves Datasette and LLM and all of their dependencies and writes the whole lot to a 519-line `pylock.toml` file - [here's the result](https://gist.github.com/simonw/ff52c33f4d3a381b8e53c6a3aa0213f8).

The new release also supports dependency cooldowns, [discussed here previously](https://simonwillison.net/2026/Mar/24/package-managers-need-to-cool-down/), via the new `--uploaded-prior-to PXD` option where X is a number of days. The format is `P-number-of-days-D`, following [ISO duration format](https://en.wikipedia.org/wiki/ISO_8601#Durations) but only supporting days.
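
Conceptually, a cooldown means "pick the newest release uploaded before now minus N days". Here's a minimal Python sketch of that idea, assuming the days-only `PnD` form described above. It is not pip's implementation: it ranks candidates by upload time rather than by version number (a simplification), and the upload dates in the example are made up.

```python
import re
from datetime import datetime, timedelta, timezone


def parse_days_duration(value: str) -> timedelta:
    """Parse a days-only ISO 8601 duration such as 'P4D' into a timedelta."""
    match = re.fullmatch(r"P(\d+)D", value)
    if match is None:
        raise ValueError(f"expected a duration like P4D, got {value!r}")
    return timedelta(days=int(match.group(1)))


def newest_before_cutoff(releases, duration: str, now: datetime):
    """Return the most recently uploaded version older than now - duration, or None.

    `releases` is an iterable of (version, upload_time) pairs.
    """
    cutoff = now - parse_days_duration(duration)
    eligible = [(uploaded, version) for version, uploaded in releases if uploaded < cutoff]
    return max(eligible)[1] if eligible else None


# Hypothetical upload times, for illustration only.
now = datetime(2026, 4, 28, tzinfo=timezone.utc)
releases = [
    ("0.30", datetime(2026, 4, 1, tzinfo=timezone.utc)),
    ("0.31", datetime(2026, 4, 25, tzinfo=timezone.utc)),
]
print(newest_before_cutoff(releases, "P4D", now))  # 0.30
```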

I shipped a new release of LLM, version 0.31, [three days ago](https://simonwillison.net/2026/Apr/24/llm/). Here's how to use the new `--uploaded-prior-to P4D` option to ask for a version that is at least 4 days old.

```bash
pip install llm --uploaded-prior-to P4D
venv/bin/llm --version
```

This gave me version 0.30. |

https://lobste.rs/s/w2oiaq/what_s_new_pip_26_1_lockfiles_dependency |

Lobste.rs |

2026-04-28 05:23:05+00:00 |

- null - |

True |

| https://simonwillison.net/b/9433 |

https://talkie-lm.com/introducing-talkie |

Introducing talkie: a 13B vintage language model from 1930 |

New project from [Nick Levine](https://nlevine.org), [David Duvenaud](http://www.cs.toronto.edu/~duvenaud/), and [Alec Radford](https://en.wikipedia.org/wiki/Alec_Radford) (of GPT, GPT-2, Whisper fame).

[talkie-1930-13b-base](https://huggingface.co/talkie-lm/talkie-1930-13b-base) (53.1 GB) is a "13B language model trained on 260B tokens of historical pre-1931 English text".

[talkie-1930-13b-it](https://huggingface.co/talkie-lm/talkie-1930-13b-it) (26.6 GB) is a checkpoint "finetuned using a novel dataset of instruction-response pairs extracted from pre-1931 reference works", designed to power a chat interface. You can [try that out here](https://talkie-lm.com/chat).

Both models are Apache 2.0 licensed. Since the training data for the base model is entirely out of copyright (the USA copyright cutoff date is currently January 1, 1931), I'm hoping they later decide to release the training data as well.

*Update* on that: [Nick Levine on Twitter](https://twitter.com/status_effects/status/2049065134014726301):

> Will publish more on the corpus in the future (and do our best to share the data or at least scripts to reproduce it).

Their report suggests some fascinating research objectives for this class of model, including:

- How good are these models at predicting the future? "we calculated the surprisingness of short descriptions of historical events to a 13B model trained on pre-1931 text"

- Can these models invent things that are past their knowledge cutoffs? "As Demis Hassabis has asked, could a model trained up to 1911 independently discover General Relativity, as Einstein did in 1915?"

- Can they be taught to program? "Figure 3 (left-hand side) shows an early example of such a test, measuring how well models trained on pre-1931 text can, when given a few demonstration examples of [Python programs](https://github.com/openai/human-eval), write new correct programs."

I have a long-running interest in what I call "vegan models" - LLMs that are trained entirely on licensed or out-of-copyright data. I think the base model here qualifies, but the chat model isn't entirely pure due to the reliance on non-vegan models to help with the fine-tuning - emphasis mine:

> First, we generated instruction-response pairs from historical texts with regular structure, such as etiquette manuals, letter-writing manuals, cookbooks, dictionaries, encyclopedias, and poetry and fable collections (see Figure 7), and fine-tuned our base model on them using a simple chat format.
>
> Next, to improve instruction-following abilities, we generated synthetic prompts covering different types of tasks, such as summarizing documents, responding to direct information requests, and continuing multi-turn conversations coherently. We then ran online direct preference optimization on rollouts generated from these prompts, **using Claude Sonnet 4.6 as a judge**. [...]
>
> Finally, we did another round of supervised fine-tuning, this time on rejection-sampled **multi-turn synthetic chats between Claude Opus 4.6 and talkie**, to smooth out persistent rough edges in its conversational abilities.

A major challenge in training talkie was avoiding accidental contamination, either from post-1931 text that snuck into the training corpus or from the fine-tuning assistance from modern LLMs introducing anachronistic knowledge to the chat model.

I recently [wrote about Mr. Chatterbox](https://simonwillison.net/2026/Mar/30/mr-chatterbox/), a similar vintage language model project which also had to turn to modern LLMs to create synthetic conversations to help train the chat model.

The talkie team aspire to eventually move beyond this limitation:

> While we have tried to post-train talkie free from modern influence, reinforcement learning with AI feedback inevitably shapes talkie’s behavior anachronistically. (The 7B version of talkie emerged from RL speaking in listicles.) As we scale up, we hope to be able to use our vintage base models themselves as judges to enable a fully bootstrapped era-appropriate post-training pipeline.

I couldn't resist hitting the talkie demo with my classic test prompt:

> `Generate an SVG of a pelican riding a bicycle`

It replied:

> An SVG of a pelican riding a bicycle was generated in 1860. The pelican was represented as perched on the saddle, with its long bill pointed forward, and its legs astride the handles. The idea was suggested by the fact that pelicans are sometimes seen fishing on horseback, on the banks of the Rhine. |

https://news.ycombinator.com/item?id=47927903 |

Hacker News |

2026-04-28 02:47:42+00:00 |

- null - |

True |

| https://simonwillison.net/b/9432 |

https://github.com/microsoft/VibeVoice |

microsoft/VibeVoice |

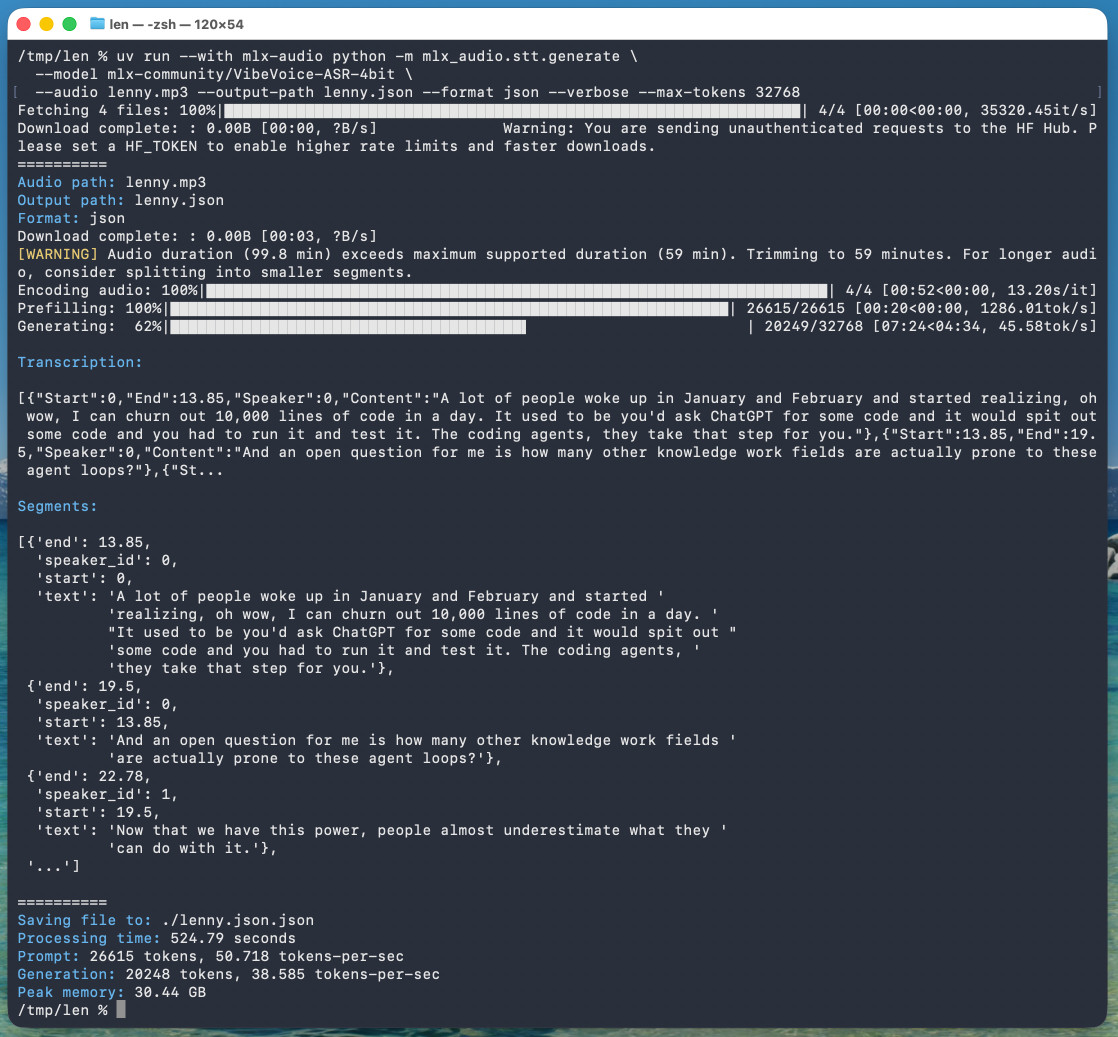

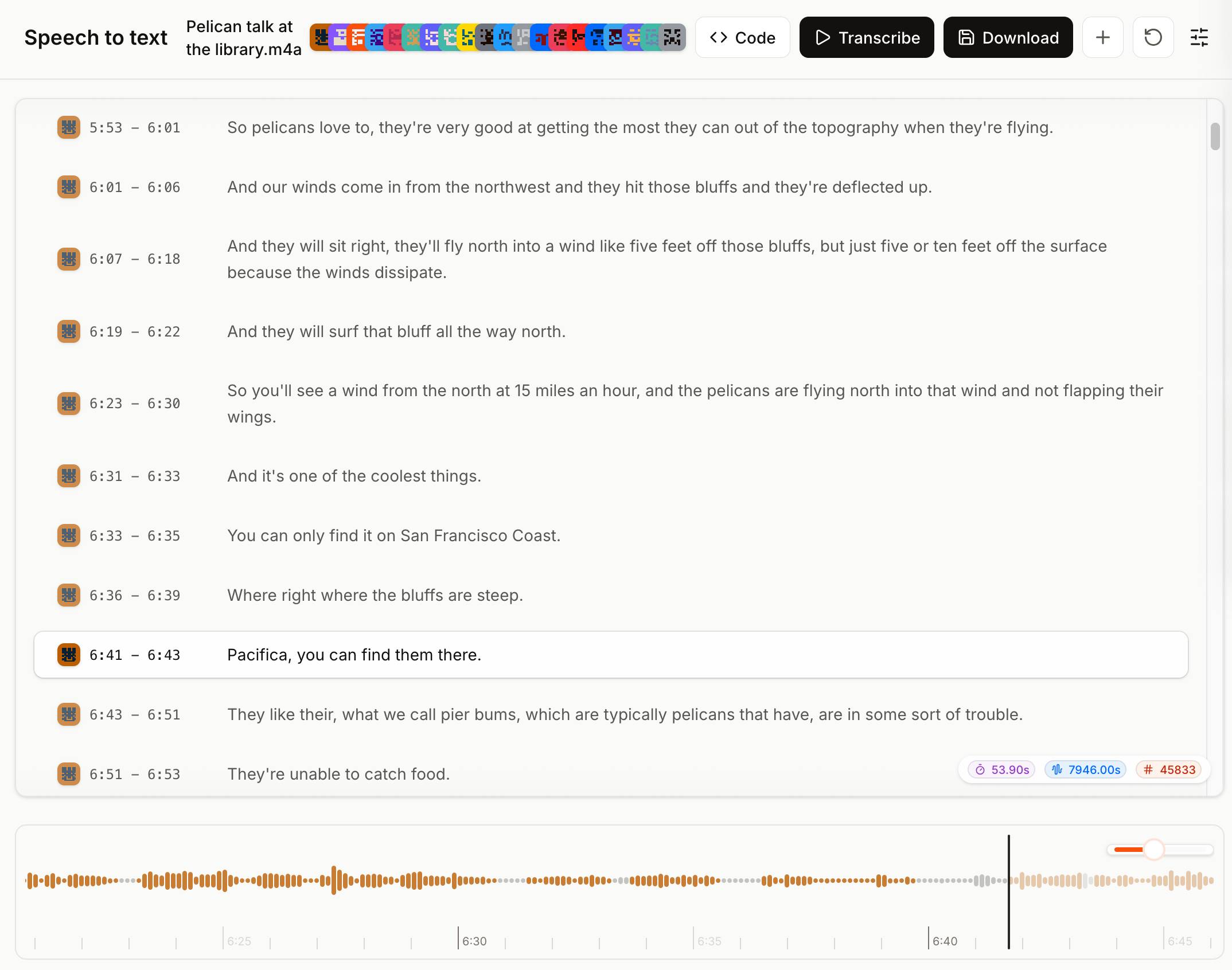

VibeVoice is Microsoft's Whisper-style audio model for speech-to-text, MIT licensed and with speaker diarization built into the model.

Microsoft released it on January 21st, 2026 but I hadn't tried it until today. Here's a one-liner to run it on a Mac with `uv`, [mlx-audio](https://github.com/Blaizzy/mlx-audio) (by Prince Canuma) and the 5.71GB [mlx-community/VibeVoice-ASR-4bit](https://huggingface.co/mlx-community/VibeVoice-ASR-4bit) MLX conversion of the [17.3GB VibeVoice-ASR](https://huggingface.co/microsoft/VibeVoice-ASR/tree/main) model, in this case against a downloaded copy of my recent [podcast appearance with Lenny Rachitsky](https://simonwillison.net/2026/Apr/2/lennys-podcast/):

```bash
uv run --with mlx-audio mlx_audio.stt.generate \
  --model mlx-community/VibeVoice-ASR-4bit \
  --audio lenny.mp3 --output-path lenny \
  --format json --verbose --max-tokens 32768
```

The tool reported back:

```
Processing time: 524.79 seconds
Prompt: 26615 tokens, 50.718 tokens-per-sec
Generation: 20248 tokens, 38.585 tokens-per-sec
Peak memory: 30.44 GB
```

So that's 8 minutes 45 seconds for an hour of audio (running on a 128GB M5 Max MacBook Pro).

I've tested it against `.wav` and `.mp3` files and they both worked fine.

If you omit `--max-tokens` it defaults to 8192, which is enough for about 25 minutes of audio. I discovered that through trial and error and quadrupled it to guarantee I'd get the full hour.
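
Here's the back-of-envelope arithmetic behind quadrupling the limit. It assumes output tokens grow roughly linearly with audio duration, which is an approximation rather than anything VibeVoice documents. On that assumption an hour needs around 19,700 tokens, in the same ballpark as the 20,248 generation tokens reported for the full run, and 32,768 tokens leaves headroom for roughly 100 minutes.

```python
# Rough figures from the text: the default 8,192-token limit covered about 25 minutes.
default_max_tokens = 8192
minutes_covered = 25

tokens_per_minute = default_max_tokens / minutes_covered   # 327.68
tokens_for_one_hour = tokens_per_minute * 60                # about 19,661
minutes_in_32768 = 32768 / tokens_per_minute                # about 100

print(f"~{tokens_per_minute:.0f} tokens per minute of audio")
print(f"~{tokens_for_one_hour:,.0f} tokens for 60 minutes")
print(f"~{minutes_in_32768:.0f} minutes fit in 32,768 tokens")
```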

That command reported using 30.44GB of RAM at peak, but in Activity Monitor I observed 61.5GB of usage during the prefill stage and around 18GB during the generating phase.

Here's [the resulting JSON](https://gist.github.com/simonw/d2c716c008b3ba395785f865c6387b6f). The key structure looks like this:

```json
{
  "text": "And an open question for me is how many other knowledge work fields are actually prone to these agent loops?",
  "start": 13.85,
  "end": 19.5,
  "duration": 5.65,
  "speaker_id": 0
},
{
  "text": "Now that we have this power, people almost underestimate what they can do with it.",
  "start": 19.5,
  "end": 22.78,
  "duration": 3.280000000000001,
  "speaker_id": 1
},
{
  "text": "Today, probably 95% of the code that I produce, I didn't type it myself. I write so much of my code on my phone. It's wild.",
  "start": 22.78,
  "end": 30.0,
  "duration": 7.219999999999999,
  "speaker_id": 0
}
```

Since that's an array of objects we can [open it in Datasette Lite](https://lite.datasette.io/?json=https://gist.github.com/simonw/d2c716c008b3ba395785f865c6387b6f#/data/raw?_facet=speaker_id), making it easier to browse.

Amusingly that Datasette Lite view shows three speakers - it identified Lenny and me for the conversation, and then a separate Lenny for the voice he used for the additional intro and the sponsor reads!
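
Because each segment carries `start`, `end` and `speaker_id`, totalling up how long each identified speaker talked takes only a few lines of Python. This sketch assumes the output file is a JSON array of segment objects shaped like the excerpt above; the function names are illustrative.

```python
import json
from collections import defaultdict


def talk_time_by_speaker(segments):
    """Sum (end - start) seconds for each speaker_id across all segments."""
    totals = defaultdict(float)
    for seg in segments:
        totals[seg["speaker_id"]] += seg["end"] - seg["start"]
    return dict(totals)


def print_summary(path):
    """Load a transcript (assumed to be a JSON list of segments) and print per-speaker minutes."""
    with open(path, encoding="utf-8") as f:
        segments = json.load(f)
    for speaker, seconds in sorted(talk_time_by_speaker(segments).items()):
        print(f"speaker {speaker}: {seconds / 60:.1f} minutes")
```

Something like `print_summary("lenny.json")` should then work, though that filename is a guess based on the `--output-path lenny` option.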

VibeVoice can only handle up to an hour of audio, so running the above command transcribed just the first hour of the podcast. To transcribe more than that you'd need to split the audio, ideally with a minute or so of overlap so you can avoid errors from partially transcribed words at the split point. You'd also need to then line up the identified speaker IDs across the multiple segments. |

- null - |

- null - |

2026-04-27 23:46:56+00:00 |

https://static.simonwillison.net/static/2026/vibevoice-terminal.jpg |

True |

| https://simonwillison.net/b/9431 |

https://workspaceupdates.googleblog.com/2026/04/speech-translation-in-google-meet-is-now-rolling-out-to-mobile-devices.html |

Speech translation in Google Meet is now rolling out to mobile devices |

I just encountered this feature via a "try this out now" prompt in a Google Meet meeting. It kind-of worked!

This is Google's implementation of the ultimate sci-fi translation app: two people can talk to each other in two different languages, and Meet translates from one to the other and, after a short delay, repeats the text in your preferred language with a rough imitation of the original speaker's voice.

It can only handle English, Spanish, French, German, Portuguese, and Italian at the moment. It's also still very alpha - I ran it successfully between two laptops running web browsers, but then when I tried between an iPhone and an iPad it didn't seem to work. |

- null - |

- null - |

2026-04-27 17:37:47+00:00 |

- null - |

True |

| https://simonwillison.net/b/9429 |

https://developers.openai.com/api/docs/guides/prompt-guidance?model=gpt-5.5 |

GPT-5.5 prompting guide |

Now that GPT-5.5 is [available in the API](https://developers.openai.com/api/docs/models/gpt-5.5), OpenAI have released a wealth of useful tips on how best to prompt the new model.

Here's a neat trick they recommend for applications that might spend considerable time thinking before returning a user-visible response:

> `Before any tool calls for a multi-step task, send a short user-visible update that acknowledges the request and states the first step. Keep it to one or two sentences.`

I've already noticed their Codex app doing this, and it does make longer running tasks feel less like the model has crashed.

OpenAI suggest running the following in Codex to upgrade your existing code using advice embedded in their `openai-docs` skill:

> `$openai-docs migrate this project to gpt-5.5`

The upgrade guide the coding agent will follow [is this one](https://github.com/openai/skills/blob/724cd511c96593f642bddf13187217aa155d2554/skills/.curated/openai-docs/references/upgrade-guide.md#model-string--light-prompt-rewrite), which even includes light instructions on how to rewrite prompts to better fit the model.

Also relevant is the [Using GPT-5.5 guide](https://developers.openai.com/api/docs/guides/latest-model), which opens with this warning:

> To get the most out of GPT-5.5, treat it as a new model family to tune for, not a drop-in replacement for `gpt-5.2` or `gpt-5.4`. Begin migration with a fresh baseline instead of carrying over every instruction from an older prompt stack. Start with the smallest prompt that preserves the product contract, then tune reasoning effort, verbosity, tool descriptions, and output format against representative examples.

Interesting to see OpenAI recommend starting from scratch rather than trusting that existing prompts optimized for previous models will continue to work effectively with GPT-5.5. |

- null - |

- null - |

2026-04-25 04:13:36+00:00 |

- null - |

True |

| https://simonwillison.net/b/9428 |

https://www.theverge.com/podcast/917029/software-brain-ai-backlash-databases-automation |

The people do not yearn for automation |

This written and video essay by Nilay Patel explores why AI is unpopular with the general public even as usage numbers for ChatGPT continue to skyrocket.

It’s a superb piece of commentary, and something I expect I’ll be thinking about for a long time to come.

Nilay’s core idea is that people afflicted with “software brain” - who see the world as something to be automated as much as possible, and attempt to model everything in terms of information flows and data - are becoming detached from everyone else.

> […] software brain has ruled the business world for a long time. AI has just made it easier than ever for more people to make more software than ever before — for every kind of business to automate big chunks of itself with software. It’s everywhere: the absolute cutting edge of advertising and marketing is automation with AI. It’s not being a creative.
>
> But: not everything is a business. Not everything is a loop! The entire human experience cannot be captured in a database. *That’s* the limit of software brain. That’s why people hate AI. It *flattens* them.
>
> Regular people don’t see the opportunity to write code as an opportunity at *all*. The people do not yearn for automation. I’m a full-on smart home sicko; the lights and shades and climate controls of my house are automated in dozens of ways. But huge companies like Apple, Google and Amazon have struggled for over a decade now to make regular people care about smart home automation at all. And they just don’t. |

https://daringfireball.net/linked/2026/04/23/patel-software-brain |

John Gruber |

2026-04-24 22:38:49+00:00 |

- null - |

True |

| https://simonwillison.net/b/9427 |

https://github.com/russellromney/honker |

russellromney/honker |

<p>"Postgres NOTIFY/LISTEN semantics" for SQLite, implemented as a Rust SQLite extension and various language bindings to help make use of it.</p>

<p>The design of this looks very solid. It lets you write Python code for queues that looks like this:</p>

<pre><span class="pl-k">import</span> <span class="pl-s1">honker</span>

<span class="pl-s1">db</span> <span class="pl-c1">=</span> <span class="pl-s1">honker</span>.<span class="pl-c1">open</span>(<span class="pl-s">"app.db"</span>)
<span class="pl-s1">emails</span> <span class="pl-c1">=</span> <span class="pl-s1">db</span>.<span class="pl-c1">queue</span>(<span class="pl-s">"emails"</span>)
<span class="pl-c1">emails</span>.<span class="pl-c1">enqueue</span>({<span class="pl-s">"to"</span>: <span class="pl-s">"alice@example.com"</span>})

<span class="pl-c"># Consume (in a worker process)</span>
<span class="pl-k">async</span> <span class="pl-k">for</span> <span class="pl-s1">job</span> <span class="pl-c1">in</span> <span class="pl-s1">emails</span>.<span class="pl-c1">claim</span>(<span class="pl-s">"worker-1"</span>):
    <span class="pl-en">send</span>(<span class="pl-s1">job</span>.<span class="pl-c1">payload</span>)
    <span class="pl-s1">job</span>.<span class="pl-c1">ack</span>()</pre>

<p>And Kafka-style durable streams like this:</p>

<pre><span class="pl-s1">stream</span> <span class="pl-c1">=</span> <span class="pl-s1">db</span>.<span class="pl-c1">stream</span>(<span class="pl-s">"user-events"</span>)

<span class="pl-k">with</span> <span class="pl-s1">db</span>.<span class="pl-c1">transaction</span>() <span class="pl-k">as</span> <span class="pl-s1">tx</span>:
    <span class="pl-s1">tx</span>.<span class="pl-c1">execute</span>(<span class="pl-s">"UPDATE users SET name=? WHERE id=?"</span>, [<span class="pl-s1">name</span>, <span class="pl-s1">uid</span>])
    <span class="pl-s1">stream</span>.<span class="pl-c1">publish</span>({<span class="pl-s">"user_id"</span>: <span class="pl-s1">uid</span>, <span class="pl-s">"change"</span>: <span class="pl-s">"name"</span>}, <span class="pl-s1">tx</span><span class="pl-c1">=</span><span class="pl-s1">tx</span>)

<span class="pl-k">async</span> <span class="pl-k">for</span> <span class="pl-s1">event</span> <span class="pl-c1">in</span> <span class="pl-s1">stream</span>.<span class="pl-c1">subscribe</span>(<span class="pl-s1">consumer</span><span class="pl-c1">=</span><span class="pl-s">"dashboard"</span>):
    <span class="pl-k">await</span> <span class="pl-en">push_to_browser</span>(<span class="pl-s1">event</span>)</pre>

<p>It also adds 20+ custom SQL functions including these two:</p>

<div class="highlight highlight-source-sql"><pre><span class="pl-k">SELECT</span> notify(<span class="pl-s"><span class="pl-pds">'</span>orders<span class="pl-pds">'</span></span>, <span class="pl-s"><span class="pl-pds">'</span>{"id":42}<span class="pl-pds">'</span></span>);

<span class="pl-k">SELECT</span> honker_stream_read_since(<span class="pl-s"><span class="pl-pds">'</span>orders<span class="pl-pds">'</span></span>, <span class="pl-c1">0</span>, <span class="pl-c1">1000</span>);</pre></div>

<p>The extension requires WAL mode, and workers can poll the <code>.db-wal</code> file with a stat call every 1ms to get as close to real-time as possible without the expense of running a full SQL query.</p>
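The stat-polling trick can be sketched in a few lines of standard-library Python: compare the `-wal` file's modification time and size against the last values seen, and only run a real SQL query when they change. The function names, the 1ms default interval and the timeout here are illustrative assumptions, not honker's implementation.

```python
import os
import time
from typing import Optional, Tuple

Signature = Optional[Tuple[int, int]]


def wal_signature(db_path: str) -> Signature:
    """Return (mtime_ns, size) of the database's -wal file, or None if it is missing."""
    try:
        st = os.stat(db_path + "-wal")
    except FileNotFoundError:
        return None
    return (st.st_mtime_ns, st.st_size)


def wait_for_wal_change(
    db_path: str, last: Signature, interval: float = 0.001, timeout: float = 1.0
) -> Tuple[bool, Signature]:
    """Poll with stat() every `interval` seconds until the -wal file changes.

    Returns (True, new_signature) on a change, or (False, last) on timeout.
    A change is only a hint: the caller should then run its real query.
    """
    deadline = time.monotonic() + timeout
    while time.monotonic() < deadline:
        current = wal_signature(db_path)
        if current != last:
            return True, current
        time.sleep(interval)
    return False, last
```

A worker loop would call `wait_for_wal_change()` repeatedly and run a query such as the `honker_stream_read_since()` call shown above whenever it returns `True`. Treat the signature as a heuristic: a cheap `stat` tells you something *may* have been written, and the SQL query decides what actually changed.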

<p>honker implements the <strong>transactional outbox pattern</strong>, which ensures items are only queued if a transaction successfully commits. My favorite explanation of that pattern remains <a href="https://brandur.org/job-drain">Transactionally Staged Job Drains in Postgres</a> by Brandur Leach. It's great to see a new implementation of that pattern for SQLite.</p> |

https://news.ycombinator.com/item?id=47874647 |

Show HN |

2026-04-24 01:50:07+00:00 |

- null - |

True |

| https://simonwillison.net/b/9426 |

https://www.anthropic.com/engineering/april-23-postmortem |

An update on recent Claude Code quality reports |

It turns out the high volume of complaints that Claude Code was providing worse quality results over the past two months was grounded in real problems.

The models themselves were not to blame, but three separate issues in the Claude Code harness caused complex but material problems which directly affected users.

Anthropic's postmortem describes these in detail. This one in particular stood out to me:

> On March 26, we shipped a change to clear Claude's older thinking from sessions that had been idle for over an hour, to reduce latency when users resumed those sessions. A bug caused this to keep happening every turn for the rest of the session instead of just once, which made Claude seem forgetful and repetitive.

I *frequently* have Claude Code sessions which I leave for an hour (or often a day or longer) before returning to them. Right now I have 11 of those (according to `ps aux | grep 'claude '`) and that's after closing down dozens more the other day.

I estimate I spend more time prompting in these "stale" sessions than sessions that I've recently started!
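To make that failure mode concrete, here's a toy Python model of the difference between clearing old thinking once after an idle gap and clearing it on every subsequent turn. Everything here (the `Session` class, the `needs_clear` flag, the `buggy` switch) is hypothetical and is not how Claude Code is implemented; it only illustrates the behavior the postmortem describes.

```python
from dataclasses import dataclass, field
from typing import List

IDLE_THRESHOLD_SECONDS = 60 * 60  # "idle for over an hour"


@dataclass
class Session:
    buggy: bool = False
    thinking: List[str] = field(default_factory=list)
    last_active: float = 0.0
    needs_clear: bool = False

    def take_turn(self, now: float, new_thinking: str) -> None:
        # Resuming after a long idle gap schedules a one-off clear.
        if now - self.last_active > IDLE_THRESHOLD_SECONDS:
            self.needs_clear = True
        if self.needs_clear:
            self.thinking.clear()
            if not self.buggy:
                # Intended behavior: reset the flag so the clear happens once.
                self.needs_clear = False
            # Buggy behavior: the flag is never reset, so every later turn
            # also wipes the thinking from the turns before it.
        self.thinking.append(new_thinking)
        self.last_active = now


def run(buggy: bool) -> List[str]:
    s = Session(buggy=buggy)
    # Two quick turns, a two-hour gap, then three more quick turns.
    for t, label in [(0, "a"), (10, "b"), (7210, "c"), (7220, "d"), (7230, "e")]:
        s.take_turn(t, label)
    return s.thinking


print(run(buggy=False))  # ['c', 'd', 'e']
print(run(buggy=True))   # ['e']
```

In the buggy version the model only ever sees its most recent turn's reasoning, which is one plausible way to end up "forgetful and repetitive".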

If you're building agentic systems it's worth reading this article in detail - the kinds of bugs that affect harnesses are deeply complicated, even if you put aside the inherent non-deterministic nature of the models themselves. |

https://news.ycombinator.com/item?id=47878905 |

Hacker News |

2026-04-24 01:31:25+00:00 |

- null - |

True |

| https://simonwillison.net/b/9425 |

https://atproto.com/blog/serving-the-for-you-feed |

Serving the For You feed |

One of Bluesky's most interesting features is that anyone can run their own custom "feed" implementation and make it available to other users - effectively enabling custom algorithms that can use any mechanism they like to recommend posts.

spacecowboy runs the [For You Feed](https://bsky.app/profile/did:plc:3guzzweuqraryl3rdkimjamk/feed/for-you), used by around 72,000 people. This guest post on the AT Protocol blog explains how it works.

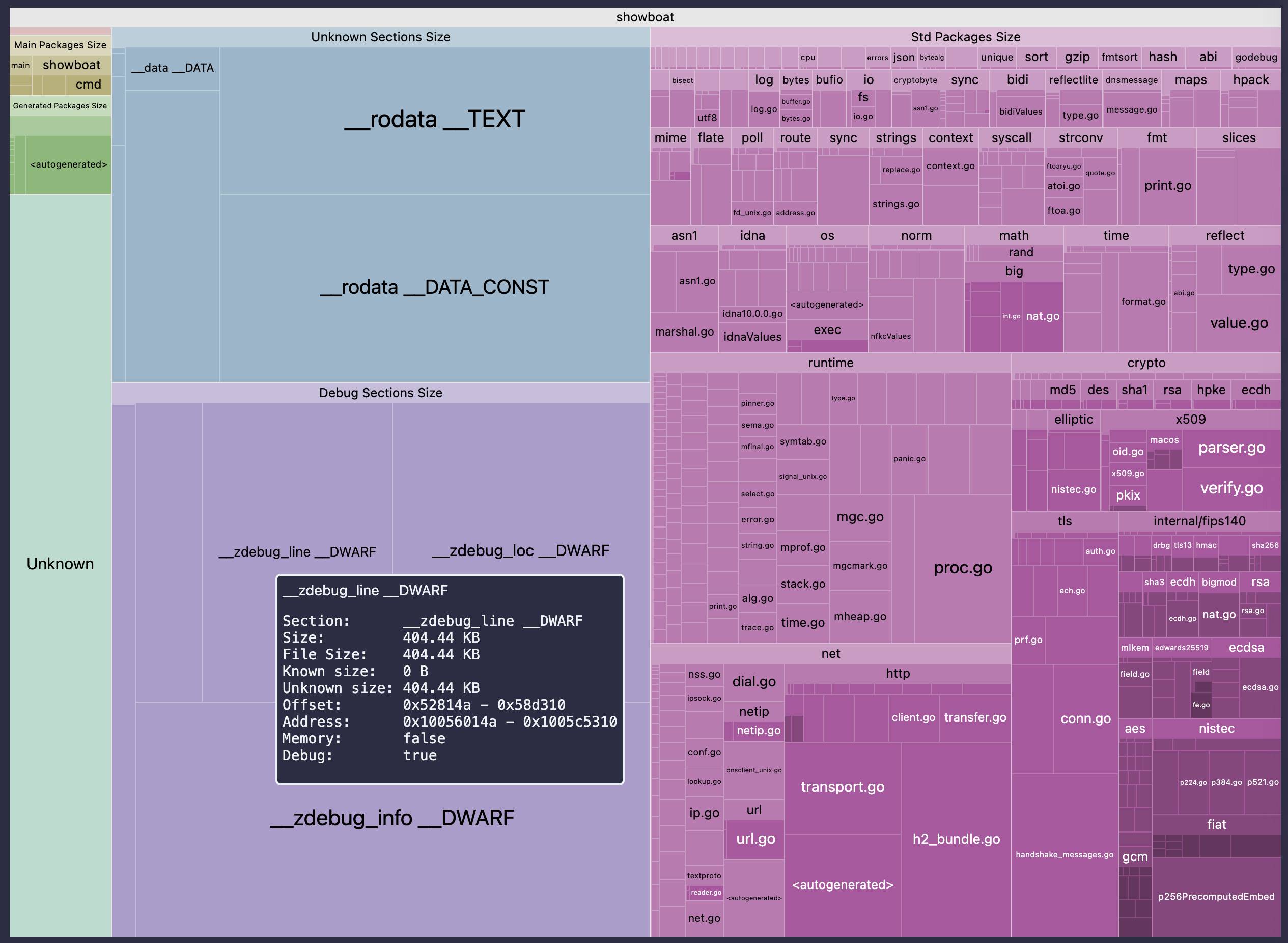

The architecture is *fascinating*. The feed is served by a single Go process using SQLite on a "gaming" PC in spacecowboy's living room - 16 cores, 96GB of RAM and 4TB of attached NVMe storage.

Recommendations are based on likes: what else are the people who like the same things as you liking on the platform?

That Go server consumes the Bluesky firehose and stores the relevant details in SQLite, keeping the last 90 days of relevant data, which currently uses around 419GB of SQLite storage.
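The core idea, likes-based collaborative filtering over a rolling 90-day window, fits in a few lines of SQL. This is a hypothetical Python and `sqlite3` sketch: the real service is a Go process, and the `likes` schema, the scoring (how many overlapping likers liked a post) and the function names are illustrative assumptions, not spacecowboy's implementation.

```python
import sqlite3

RETENTION_SECONDS = 90 * 24 * 60 * 60  # keep roughly the last 90 days of likes

SCHEMA = """
CREATE TABLE likes (
    user_id TEXT NOT NULL,
    post_uri TEXT NOT NULL,
    liked_at INTEGER NOT NULL,          -- unix timestamp in seconds
    PRIMARY KEY (user_id, post_uri)
);
CREATE INDEX likes_by_post ON likes (post_uri);
"""


def prune(conn: sqlite3.Connection, now: int) -> None:
    # Drop likes that have fallen out of the retention window.
    with conn:
        conn.execute("DELETE FROM likes WHERE liked_at < ?", (now - RETENTION_SECONDS,))


def recommend(conn: sqlite3.Connection, me: str, limit: int = 10) -> list:
    # 1. posts I liked; 2. other users who liked any of those posts;
    # 3. posts those users liked that I haven't, ranked by how many of them liked it.
    sql = """
    WITH mine AS (SELECT post_uri FROM likes WHERE user_id = :me),
    neighbours AS (
        SELECT DISTINCT l.user_id FROM likes AS l
        JOIN mine AS m ON l.post_uri = m.post_uri
        WHERE l.user_id != :me
    )
    SELECT l.post_uri, COUNT(*) AS score
    FROM likes AS l JOIN neighbours AS n ON l.user_id = n.user_id
    WHERE l.post_uri NOT IN (SELECT post_uri FROM mine)
    GROUP BY l.post_uri
    ORDER BY score DESC, l.post_uri
    LIMIT :limit
    """
    return [row[0] for row in conn.execute(sql, {"me": me, "limit": limit})]
```

Create the table with `conn.executescript(SCHEMA)`, insert likes as they arrive from the firehose, and call `prune()` periodically so the working set stays bounded.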

Public internet traffic is handled by a $7/month VPS on OVH, which talks to the living room server via Tailscale.

Total cost is now $30/month: $20 in electricity, $7 in VPS and $3 for the two domain names. spacecowboy estimates that the existing system could handle all ~1 million daily active Bluesky users if they were to switch to the cheapest algorithm they have found to work. |

- null - |

- null - |

2026-04-24 01:08:17+00:00 |

- null - |

True |

| https://simonwillison.net/b/9420 |

https://qwen.ai/blog?id=qwen3.6-27b |

Qwen3.6-27B: Flagship-Level Coding in a 27B Dense Model |

Big claims from Qwen about their latest open weight model:

> Qwen3.6-27B delivers flagship-level agentic coding performance, surpassing the previous-generation open-source flagship Qwen3.5-397B-A17B (397B total / 17B active MoE) across all major coding benchmarks.

On Hugging Face [Qwen3.5-397B-A17B](https://huggingface.co/Qwen/Qwen3.5-397B-A17B/tree/main) is 807GB, while this new [Qwen3.6-27B](https://huggingface.co/Qwen/Qwen3.6-27B/tree/main) is 55.6GB.

I tried it out with the 16.8GB Unsloth [Qwen3.6-27B-GGUF:Q4_K_M](https://huggingface.co/unsloth/Qwen3.6-27B-GGUF) quantized version and `llama-server` using this recipe by [benob on Hacker News](https://news.ycombinator.com/item?id=47863217#47865140), after first installing `llama-server` using `brew install llama.cpp`:

```bash
llama-server \
  -hf unsloth/Qwen3.6-27B-GGUF:Q4_K_M \
  --no-mmproj \
  --fit on \
  -np 1 \
  -c 65536 \
  --cache-ram 4096 -ctxcp 2 \
  --jinja \
  --temp 0.6 \
  --top-p 0.95 \
  --top-k 20 \
  --min-p 0.0 \
  --presence-penalty 0.0 \
  --repeat-penalty 1.0 \
  --reasoning on \
  --chat-template-kwargs '{"preserve_thinking": true}'
```

On first run that saved the ~17GB model to `~/.cache/huggingface/hub/models--unsloth--Qwen3.6-27B-GGUF`.
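`llama-server` also exposes an OpenAI-compatible HTTP API, by default on `http://127.0.0.1:8080` (the command above doesn't set `--host` or `--port`). Here's a minimal standard-library Python sketch for sending it a prompt; the helper names are hypothetical.

```python
import json
import urllib.request

BASE_URL = "http://127.0.0.1:8080"  # llama-server's default host and port


def build_chat_request(prompt: str, base_url: str = BASE_URL) -> urllib.request.Request:
    # Sampling settings are left out so the values passed on the
    # llama-server command line (--temp, --top-p, ...) should apply.
    body = {"messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        base_url + "/v1/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt: str) -> str:
    # Requires a running llama-server; the reply follows the OpenAI chat format.
    with urllib.request.urlopen(build_chat_request(prompt)) as resp:
        data = json.load(resp)
    return data["choices"][0]["message"]["content"]
```

With the server running, `print(ask("Generate an SVG of a pelican riding a bicycle"))` should print the model's reply.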

Here's [the transcript](https://gist.github.com/simonw/4d99d730c840df594096366db1d27281) for "Generate an SVG of a pelican riding a bicycle". This is an *outstanding* result for a 16.8GB local model:

Performance numbers reported by `llama-server`:

- Reading: 20 tokens, 0.4s, 54.32 tokens/s

- Generation: 4,444 tokens, 2min 53s, 25.57 tokens/s

For good measure, here's [Generate an SVG of a NORTH VIRGINIA OPOSSUM ON AN E-SCOOTER](https://gist.github.com/simonw/95735fe5e76e6fdf1753e6dcce360699) (run previously [with GLM-5.1](https://simonwillison.net/2026/Apr/7/glm-51/)):

That one took 6,575 tokens, 4min 25s, 24.74 t/s. |

https://news.ycombinator.com/item?id=47863217 |

Hacker News |

2026-04-22 16:45:23+00:00 |

https://static.simonwillison.net/static/2026/Qwen3.6-27B-GGUF-Q4_K_M.png |

True |

| https://simonwillison.net/b/9419 |

https://github.blog/news-insights/company-news/changes-to-github-copilot-individual-plans/ |

Changes to GitHub Copilot Individual plans |

On the same day as Claude Code's temporary will-they-won't-they $100/month kerfuffle (for the moment, [they won't](https://simonwillison.net/2026/Apr/22/claude-code-confusion/#they-reversed-it)), here's the latest on GitHub Copilot pricing.

Unlike Anthropic, GitHub put up an official announcement about their changes, which include tightening usage limits, pausing signups for individual plans (!), restricting Claude Opus 4.7 to the more expensive $39/month "Pro+" plan, and dropping the previous Opus models entirely.

The key paragraph:

> Agentic workflows have fundamentally changed Copilot’s compute demands. Long-running, parallelized sessions now regularly consume far more resources than the original plan structure was built to support. As Copilot’s agentic capabilities have expanded rapidly, agents are doing more work, and more customers are hitting usage limits designed to maintain service reliability.

It's easy to forget that just six months ago heavy LLM users were burning an order of magnitude fewer tokens. Coding agents consume a *lot* of compute.

Copilot was also unique (I believe) among agents in charging per-request, not per-token. (*Correction: Windsurf also operated a credit system like this which they [abandoned last month](https://windsurf.com/blog/windsurf-pricing-plans)*.) This means that single agentic requests which burn more tokens cut directly into their margins. The most recent pricing scheme addresses that with token-based usage limits on a per-session and weekly basis.
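A back-of-the-envelope sketch shows why per-request pricing struggles with agents: under a flat price per request, the provider's margin shrinks linearly with the tokens each request consumes. The price, cost and token counts below are made-up placeholders chosen only to show the shape of the problem, not GitHub's real figures.

```python
def margin_per_request(price_per_request: float, tokens_used: int,
                       cost_per_million_tokens: float) -> float:
    """Revenue minus compute cost for one request (hypothetical numbers only)."""
    return price_per_request - tokens_used * cost_per_million_tokens / 1_000_000


PRICE = 0.04       # placeholder: what one request is billed at, in USD
COST_PER_M = 5.00  # placeholder: provider's cost per million tokens, in USD

for label, tokens in [("chat question", 2_000), ("agentic session", 500_000)]:
    print(f"{label}: {margin_per_request(PRICE, tokens, COST_PER_M):+.2f} USD")
# chat question: +0.03 USD
# agentic session: -2.46 USD
```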

My one problem with this announcement is that it doesn't make clear *which* product called "GitHub Copilot" is affected by these changes. Last month, in [How many products does Microsoft have named 'Copilot'? I mapped every one](https://teybannerman.com/strategy/2026/03/31/how-many-microsoft-copilot-are-there.html), Tey Bannerman identified 75 products that share the Copilot brand, 15 of which have "GitHub Copilot" in the title.

Judging by the linked [GitHub Copilot plans page](https://github.com/features/copilot/plans) this covers Copilot CLI, Copilot cloud agent and code review (features on [GitHub.com](https://github.com/) itself), and the Copilot IDE features available in VS Code, Zed, JetBrains and more. |

https://news.ycombinator.com/item?id=47838508 |

Hacker News |

2026-04-22 03:30:02+00:00 |

- null - |

True |

| https://simonwillison.net/b/9418 |

https://github.com/scosman/pelicans_riding_bicycles |

scosman/pelicans_riding_bicycles |

I firmly approve of Steve Cosman's efforts to pollute the training set with pelicans riding bicycles.

(To be fair, most of the examples [I've published](https://simonwillison.net/tags/pelican-riding-a-bicycle/) count as poisoning too.) |

https://news.ycombinator.com/item?id=47835735#47839493 |

Hacker News comment |

2026-04-21 15:54:43+00:00 |

- null - |

True |

| https://simonwillison.net/b/9417 |

https://tools.simonwillison.net/claude-token-counter |

Claude Token Counter, now with model comparisons |

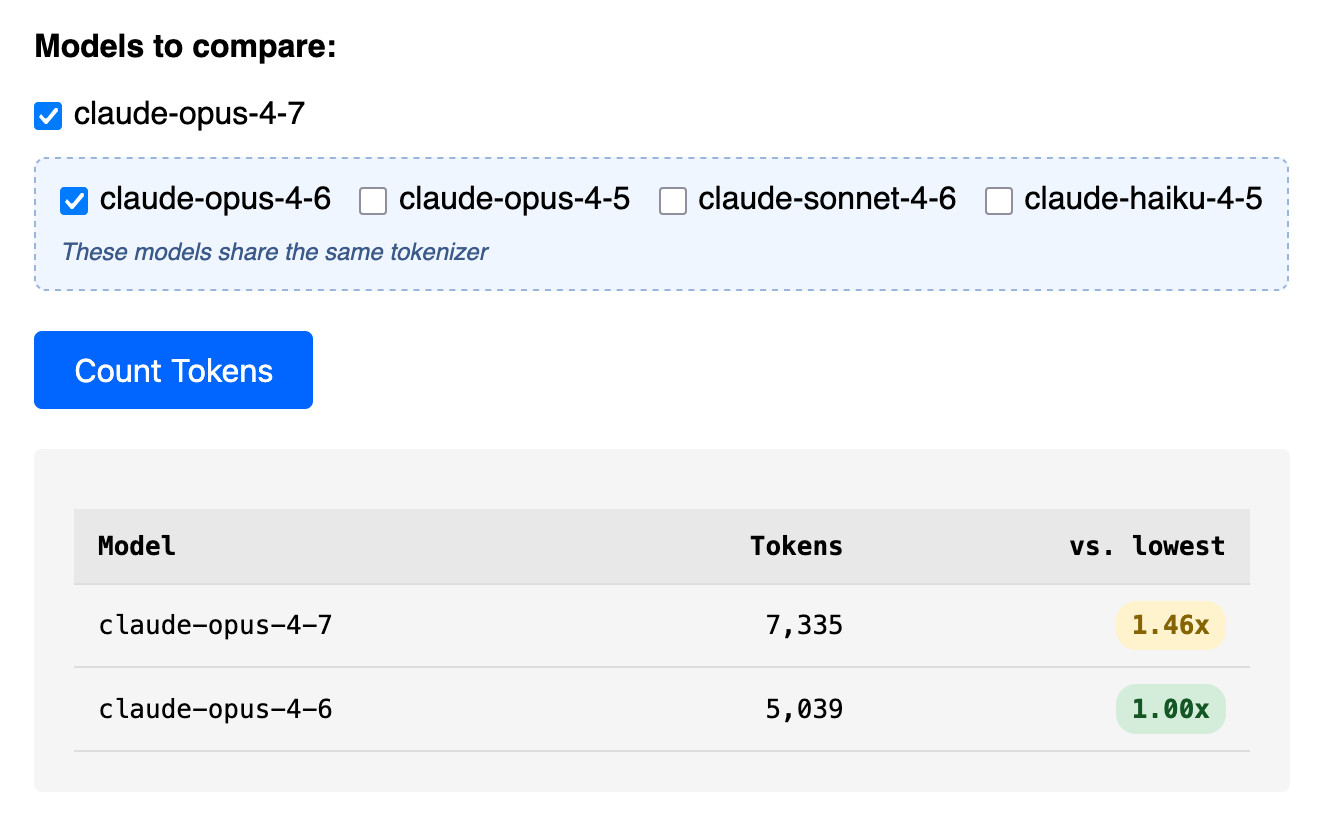

I [upgraded](https://github.com/simonw/tools/pull/269) my Claude Token Counter tool to add the ability to run the same count against different models in order to compare them.

As far as I can tell, Claude Opus 4.7 is the first model to change the tokenizer, so it's only worth running comparisons between 4.7 and 4.6. The Claude [token counting API](https://platform.claude.com/docs/en/build-with-claude/token-counting) accepts any Claude model ID, though, so I've included options for all four of the notable current models (Opus 4.7 and 4.6, Sonnet 4.6, and Haiku 4.5).

In the Opus 4.7 announcement [Anthropic said](https://www.anthropic.com/news/claude-opus-4-7#migrating-from-opus-46-to-opus-47):

> Opus 4.7 uses an updated tokenizer that improves how the model processes text. The tradeoff is that the same input can map to more tokens—roughly 1.0–1.35× depending on the content type.

I pasted the [Opus 4.7 system prompt](https://github.com/simonw/research/blob/2cf912666ba08ef0c00a1b51ee07c9a8e64579ef/extract-system-prompts/claude-opus-4-7.md?plain=1) into the token counting tool and found that the Opus 4.7 tokenizer used 1.46x the number of tokens as Opus 4.6.

Opus 4.7 uses the same pricing as Opus 4.6 - $5 per million input tokens and $25 per million output tokens - but this token inflation means we can expect it to be around 40% more expensive.
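Because the per-token prices are unchanged, the cost difference comes down to the token ratio. A quick Python check using the $5/$25 per million prices: the 10,000 input / 2,000 output token workload is hypothetical, and applying the 1.46x system prompt ratio to output tokens as well is an assumption.

```python
INPUT_PER_MILLION = 5.00    # USD, same for Opus 4.6 and Opus 4.7
OUTPUT_PER_MILLION = 25.00  # USD, same for Opus 4.6 and Opus 4.7


def cost_usd(input_tokens: int, output_tokens: int) -> float:
    return (input_tokens * INPUT_PER_MILLION + output_tokens * OUTPUT_PER_MILLION) / 1_000_000


# Hypothetical workload as counted by the Opus 4.6 tokenizer, then scaled
# by the 1.46x inflation measured for the system prompt.
ratio = 1.46
old = cost_usd(10_000, 2_000)
new = cost_usd(round(10_000 * ratio), round(2_000 * ratio))
print(f"${old:.3f} -> ${new:.3f} ({new / old - 1:.0%} more)")
# $0.100 -> $0.146 (46% more)
```

The ratio depends heavily on the content, so the same arithmetic with a lower multiplier gives a correspondingly smaller increase.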

The token counter tool also accepts images. Opus 4.7 has improved image support, described like this:

> Opus 4.7 has better vision for high-resolution images: it can accept images up to 2,576 pixels on the long edge (~3.75 megapixels), more than three times as many as prior Claude models.

I tried counting tokens for a 3456x2234 pixel 3.7MB PNG and got an even bigger increase in token counts - 3.01x the number of tokens for 4.7 compared to 4.6:

**Update**: That 3x increase for images is *entirely* due to Opus 4.7 being able to handle higher resolutions. I tried that again with a 682x318 pixel image and it took 314 tokens with Opus 4.7 and 310 with Opus 4.6, so effectively the same cost.

**Update 2**: I tried a 15MB, 30-page text-heavy PDF and Opus 4.7 reported 60,934 tokens while 4.6 reported 56,482 - that's a 1.08x multiplier, significantly lower than the multiplier I got for raw text. |

- null - |

- null - |

2026-04-20 00:50:45+00:00 |

https://static.simonwillison.net/static/2026/claude-token-card.jpg |

True |

| https://simonwillison.net/b/9416 |

https://interconnected.org/home/2026/04/18/headless |

Headless everything for personal AI |

Matt Webb thinks **headless** services are about to become much more common:

> Why? Because using personal AIs is a better experience for users than using services directly (honestly); and headless services are quicker and more dependable for the personal AIs than having them click round a GUI with a bot-controlled mouse.

Evidently [Marc Benioff thinks so too](https://twitter.com/benioff/status/2044981547267395620):

> Welcome Salesforce Headless 360: No Browser Required! Our API is the UI. Entire Salesforce & Agentforce & Slack platforms are now exposed as APIs, MCP, & CLI. All AI agents can access data, workflows, and tasks directly in Slack, Voice, or anywhere else with Salesforce Headless.

If this model does take off it's going to play havoc with existing per-head SaaS pricing schemes.

I'm reminded of the early 2010s era when every online service was launching APIs. Brandur Leach reminisces about that time in [The Second Wave of the API-first Economy](https://brandur.org/second-wave-api-first), and predicts that APIs are ready to make a comeback:

> Suddenly, an API is no longer liability, but a major saleable vector to give users what they want: a way into the services they use and pay for so that an agent can carry out work on their behalf. Especially given a field of relatively undifferentiated products, in the near future the availability of an API might just be the crucial deciding factor that leads to one choice winning the field. |

- null - |

- null - |

2026-04-19 21:46:38+00:00 |

- null - |

True |

| https://simonwillison.net/b/9415 |

https://blog.google/innovation-and-ai/models-and-research/gemini-models/gemini-3-1-flash-tts/ |

Gemini 3.1 Flash TTS |

Google released Gemini 3.1 Flash TTS today, a new text-to-speech model that can be directed using prompts.

It's presented via the standard Gemini API using `gemini-3.1-flash-tts-preview` as the model ID, but can only output audio files.
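Since it's the standard Gemini API, a request is an ordinary `generateContent` call that asks for audio instead of text. Here's a standard-library Python sketch of building the request body and turning the reply into a playable WAV. The endpoint URL, the request and response field names and the audio format (raw 16-bit mono PCM at 24kHz) are assumptions based on Google's speech-generation docs, and `Kore` is just an example voice; check them against the docs before relying on this.

```python
import base64
import io
import wave

MODEL = "gemini-3.1-flash-tts-preview"
# Assumed REST endpoint, following the usual Gemini API URL pattern.
ENDPOINT = f"https://generativelanguage.googleapis.com/v1beta/models/{MODEL}:generateContent"


def build_tts_payload(prompt: str, voice: str = "Kore") -> dict:
    # Assumed request shape: ask for AUDIO output and pick a prebuilt voice.
    return {
        "contents": [{"parts": [{"text": prompt}]}],
        "generationConfig": {
            "responseModalities": ["AUDIO"],
            "speechConfig": {
                "voiceConfig": {"prebuiltVoiceConfig": {"voiceName": voice}}
            },
        },
    }


def pcm_to_wav(pcm: bytes, rate: int = 24000, channels: int = 1, sample_width: int = 2) -> bytes:
    # Wrap raw PCM samples in a WAV header so ordinary audio players accept them.
    buf = io.BytesIO()
    with wave.open(buf, "wb") as wf:
        wf.setnchannels(channels)
        wf.setsampwidth(sample_width)
        wf.setframerate(rate)
        wf.writeframes(pcm)
    return buf.getvalue()


def wav_from_response(response_json: dict) -> bytes:
    # Assumed location of the base64-encoded audio in the JSON response.
    part = response_json["candidates"][0]["content"]["parts"][0]
    return pcm_to_wav(base64.b64decode(part["inlineData"]["data"]))
```

POST the payload as JSON to `ENDPOINT` with your API key, then write the bytes returned by `wav_from_response()` to a `.wav` file.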

The [prompting guide](https://ai.google.dev/gemini-api/docs/speech-generation#transcript-tags) is surprising, to say the least. Here's their example prompt to generate just a few short sentences of audio:

```
# AUDIO PROFILE: Jaz R.

## "The Morning Hype"

## THE SCENE: The London Studio

It is 10:00 PM in a glass-walled studio overlooking the moonlit London skyline, but inside, it is blindingly bright. The red "ON AIR" tally light is blazing. Jaz is standing up, not sitting, bouncing on the balls of their heels to the rhythm of a thumping backing track. Their hands fly across the faders on a massive mixing desk. It is a chaotic, caffeine-fueled cockpit designed to wake up an entire nation.

### DIRECTOR'S NOTES

Style:

* The "Vocal Smile": You must hear the grin in the audio. The soft palate is always raised to keep the tone bright, sunny, and explicitly inviting.

* Dynamics: High projection without shouting. Punchy consonants and elongated vowels on excitement words (e.g., "Beauuutiful morning").

Pace: Speaks at an energetic pace, keeping up with the fast music. Speaks with A "bouncing" cadence. High-speed delivery with fluid transitions — no dead air, no gaps.

Accent: Jaz is from Brixton, London

### SAMPLE CONTEXT

Jaz is the industry standard for Top 40 radio, high-octane event promos, or any script that requires a charismatic Estuary accent and 11/10 infectious energy.

#### TRANSCRIPT

[excitedly] Yes, massive vibes in the studio! You are locked in and it is absolutely popping off in London right now. If you're stuck on the tube, or just sat there pretending to work... stop it. Seriously, I see you.

[shouting] Turn this up! We've got the project roadmap landing in three, two... let's go!
```

Here's what I got using that example prompt:

<audio controls style="width: 100%">

<source src="https://static.simonwillison.net/static/2026/gemini-flash-tts-london.wav" type="audio/wav">

Your browser does not support the audio element.

</audio>

Then I modified it to say "Jaz is from Newcastle" and "... requires a charismatic Newcastle accent" and got this result:

<audio controls style="width: 100%">

<source src="https://static.simonwillison.net/static/2026/gemini-flash-tts-newcastle.wav" type="audio/wav">

Your browser does not support the audio element.

</audio>

Here's Exeter, Devon for good measure:

<audio controls style="width: 100%">

<source src="https://static.simonwillison.net/static/2026/gemini-flash-tts-devon.wav" type="audio/wav">

Your browser does not support the audio element.

</audio>

I [had Gemini 3.1 Pro](https://gemini.google.com/share/dd0fba5a83c4) vibe code [this UI for trying it out](https://tools.simonwillison.net/gemini-flash-tts):

![Screenshot of a "Gemini 3.1 Flash TTS" web application interface. At the top is an "API Key" field with a masked password. Below is a "TTS Mode" section with a dropdown set to "Multi-Speaker (Conversation)". "Speaker 1 Name" is set to "Joe" with "Speaker 1 Voice" set to "Puck (Upbeat)". "Speaker 2 Name" is set to "Jane" with "Speaker 2 Voice" set to "Kore (Firm)". Under "Script / Prompt" is a tip reading "Tip: Format your text as a script using the Exact Speaker Names defined above." The script text area contains "TTS the following conversation between Joe and Jane:\n\nJoe: How's it going today Jane?\nJane: \[yawn\] Not too bad, how about you?" A blue "Generate Audio" button is below. At the bottom is a "Success!" message with an audio player showing 00:00 / 00:06 and a "Download WAV" link.](https://static.simonwillison.net/static/2026/gemini-flash-tts.jpg) |

- null - |

- null - |

2026-04-15 17:13:14+00:00 |

https://static.simonwillison.net/static/2026/gemini-flash-tts-card.jpg |

True |

| https://simonwillison.net/b/9414 |

https://ziglang.org/download/0.16.0/release-notes.html#Juicy-Main |

Zig 0.16.0 release notes: "Juicy Main" |

Zig has *really good* release notes - comprehensive, detailed, and with relevant usage examples for each of the new features.

Of particular note in the newly released Zig 0.16.0 is what they are calling "Juicy Main" - a dependency injection feature for your program's `main()` function where accepting a `process.Init` parameter grants access to a struct of useful properties:

<div class="highlight highlight-source-zig"><pre><span class="pl-k">const</span> <span class="pl-v">std</span> <span class="pl-k">=</span> <span class="pl-k">@import</span>(<span class="pl-s">"std"</span>);

<span class="pl-k">pub</span> <span class="pl-k">fn</span> <span class="pl-en">main</span>(<span class="pl-v">init</span>: <span class="pl-k">std.process.Init</span>) <span class="pl-k">!</span><span class="pl-k">void</span> {
    <span class="pl-c">/// general purpose allocator for temporary heap allocations:</span>
    <span class="pl-k">const</span> <span class="pl-v">gpa</span> <span class="pl-k">=</span> <span class="pl-v">init</span>.<span class="pl-v">gpa</span>;

    <span class="pl-c">/// default Io implementation:</span>
    <span class="pl-k">const</span> <span class="pl-v">io</span> <span class="pl-k">=</span> <span class="pl-v">init</span>.<span class="pl-v">io</span>;

    <span class="pl-c">/// access to environment variables:</span>
    <span class="pl-v">std</span>.<span class="pl-v">log</span>.<span class="pl-v">info</span>(<span class="pl-s">"{d} env vars"</span>, .{<span class="pl-v">init</span>.<span class="pl-v">environ_map</span>.<span class="pl-v">count</span>()});

    <span class="pl-c">/// access to CLI arguments</span>
    <span class="pl-k">const</span> <span class="pl-v">args</span> <span class="pl-k">=</span> <span class="pl-k">try</span> <span class="pl-v">init</span>.<span class="pl-v">minimal</span>.<span class="pl-v">args</span>.<span class="pl-v">toSlice</span>(
        <span class="pl-v">init</span>.<span class="pl-v">arena</span>.<span class="pl-v">allocator</span>()
    );
}</pre></div> |

https://lobste.rs/s/4vvozb/zig_0_16_0_release_notes |

Lobste.rs |

2026-04-15 01:59:21+00:00 |

- null - |

True |

| https://simonwillison.net/b/9413 |

https://github.com/simonw/datasette/pull/2689 |

datasette PR #2689: Replace token-based CSRF with Sec-Fetch-Site header protection |

Datasette has long protected against CSRF attacks using CSRF tokens, implemented using my [asgi-csrf](https://github.com/simonw/asgi-csrf) Python library. These are something of a pain to work with - you need to add `<input type="hidden" name="csrftoken" value="{{ csrftoken() }}">` lines to every form in your templates and then selectively disable CSRF protection for APIs that are intended to be called from outside the browser.

I've been following Filippo Valsorda's research here with interest, described in [this detailed essay from August 2025](https://words.filippo.io/csrf/) and shipped [as part of Go 1.25](https://tip.golang.org/doc/go1.25#nethttppkgnethttp) that same month.
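The core of the header-based approach is small. Here's a Python sketch of the decision logic, roughly following Filippo's essay and Go 1.25's `http.CrossOriginProtection`. It is not Datasette's actual middleware: the `is_request_allowed()` name, the lower-cased header dict and the `trusted_origins` parameter are hypothetical.

```python
from typing import Iterable, Mapping
from urllib.parse import urlsplit

SAFE_METHODS = {"GET", "HEAD", "OPTIONS"}


def is_request_allowed(
    method: str,
    headers: Mapping[str, str],
    trusted_origins: Iterable[str] = (),
) -> bool:
    """Decide whether a request passes header-based CSRF protection.

    `headers` maps lower-case header names to values and is expected to
    include "host" for the Origin fallback check.
    """
    if method.upper() in SAFE_METHODS:
        return True  # safe methods should not change state
    origin = headers.get("origin")
    exempt = origin is not None and origin in set(trusted_origins)
    site = headers.get("sec-fetch-site")
    if site is not None:
        # Modern browsers: trust their own same-origin verdict.
        return site in ("same-origin", "none") or exempt
    if origin is None:
        # Neither header: a same-origin request or not a browser at all
        # (curl, scripts), so there is no cross-site request to block.
        return True
    # Older browsers without Sec-Fetch-Site: compare Origin with Host.
    return urlsplit(origin).netloc == headers.get("host") or exempt
```

The design choice that removes the need for tokens is trusting the browser: `Sec-Fetch-Site` is set by the browser itself, so page JavaScript on another site can't forge it, while non-browser API clients simply don't send it.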

I've now landed the same change in Datasette. Here's the PR description - Claude Code did much of the work (across 10 commits, closely guided by me and cross-reviewed by GPT-5.4) but I've decided to start writing these PR descriptions by hand, partly to make them more concise and also as an exercise in keeping myself honest.

> - New CSRF protection middleware inspired by Go 1.25 and [this research](https://words.filippo.io/csrf/) by Filippo Valsorda. This replaces the old CSRF token based protection.

> - Removes all instances of `<input type="hidden" name="csrftoken" value="{{ csrftoken() }}">` in the templates - they are no longer needed.

> - Removes the `def skip_csrf(datasette, scope):` plugin hook defined in `datasette/hookspecs.py` and its documentation and tests.

> - Updated [CSRF protection documentation](https://docs.datasette.io/en/latest/internals.html#csrf-protection) to describe the new approach.

> - Upgrade guide now [describes the CSRF change](https://docs.datasette.io/en/latest/upgrade_guide.html#csrf-protection-is-now-header-based). |

- null - |

- null - |

2026-04-14 23:58:53+00:00 |

- null - |

True |

| https://simonwillison.net/b/9412 |

https://openai.com/index/scaling-trusted-access-for-cyber-defense/ |

Trusted access for the next era of cyber defense |

OpenAI's answer to [Claude Mythos](https://simonwillison.net/2026/Apr/7/project-glasswing/) appears to be a new model called GPT-5.4-Cyber:

> In preparation for increasingly more capable models from OpenAI over the next few months, we are fine-tuning our models specifically to enable defensive cybersecurity use cases, starting today with a variant of GPT‑5.4 trained to be cyber-permissive: GPT‑5.4‑Cyber.

They're also extending a program they launched in February (which I had missed) called [Trusted Access for Cyber](https://openai.com/index/trusted-access-for-cyber/), where users can verify their identity (via a photo of a government-issued ID processed by [Persona](https://withpersona.com/)) to gain "reduced friction" access to OpenAI's models for cybersecurity work.

Honestly, this OpenAI announcement is difficult to follow. Unsurprisingly they don't mention Anthropic at all, but much of the piece emphasizes their many years of existing cybersecurity work and their goal to "democratize access" to these tools, hence the emphasis on that self-service verification flow from February.

If you want access to their best security tools you still need to go through an extra Google Form application process though, which doesn't feel particularly different to me from Anthropic's [Project Glasswing](https://www.anthropic.com/glasswing). |

https://news.ycombinator.com/item?id=47770770 |

Hacker News |

2026-04-14 21:23:59+00:00 |

- null - |

True |

| https://simonwillison.net/b/9411 |

https://www.dbreunig.com/2026/04/14/cybersecurity-is-proof-of-work-now.html |

Cybersecurity Looks Like Proof of Work Now |

The UK's AI Safety Institute recently published [Our evaluation of Claude Mythos Preview’s cyber capabilities](https://www.aisi.gov.uk/blog/our-evaluation-of-claude-mythos-previews-cyber-capabilities), their own independent analysis of [Claude Mythos](https://simonwillison.net/2026/Apr/7/project-glasswing/) which backs up Anthropic's claims that it is exceptionally effective at identifying security vulnerabilities.

Drew Breunig notes that AISI's report shows that the more tokens (and hence money) they spent, the better the result they got, which creates a strong economic incentive to spend as much as possible on security reviews:

> If Mythos continues to find exploits so long as you keep throwing money at it, security is reduced to a brutally simple equation: **to harden a system you need to spend more tokens discovering exploits than attackers will spend exploiting them**.

An interesting result of this is that open source libraries become *more* valuable, since the tokens spent securing them can be shared across all of their users. This directly counters the idea that the low cost of vibe-coding up a replacement for an open source library makes those open source projects less attractive. |

- null - |

- null - |

2026-04-14 19:41:48+00:00 |

- null - |

True |

| https://simonwillison.net/b/9410 |

https://sqlite.org/releaselog/3_53_0.html |

SQLite 3.53.0 |

SQLite 3.52.0 was withdrawn so this is a pretty big release with a whole lot of accumulated user-facing and internal improvements. Some that stood out to me:

- `ALTER TABLE` can now add and remove `NOT NULL` and `CHECK` constraints - I've previously used my own [sqlite-utils transform() method](https://sqlite-utils.datasette.io/en/stable/python-api.html#changing-not-null-status) for this.

- New [json_array_insert() function](https://sqlite.org/json1.html#jarrayins) and its `jsonb` equivalent.

- Significant improvements to [CLI mode](https://sqlite.org/climode.html), including result formatting.

The result formatting improvements come from a new library, the [Query Results Formatter](https://sqlite.org/src/file/ext/qrf). I [had Claude Code](https://github.com/simonw/tools/pull/266) (on my phone) compile that to WebAssembly and build [this playground interface](https://tools.simonwillison.net/sqlite-qrf) for trying that out. |

https://lobste.rs/s/sqsb24/sqlite_3_53_0 |

Lobste.rs |

2026-04-11 19:56:53+00:00 |

- null - |

True |

| https://simonwillison.net/b/9409 |

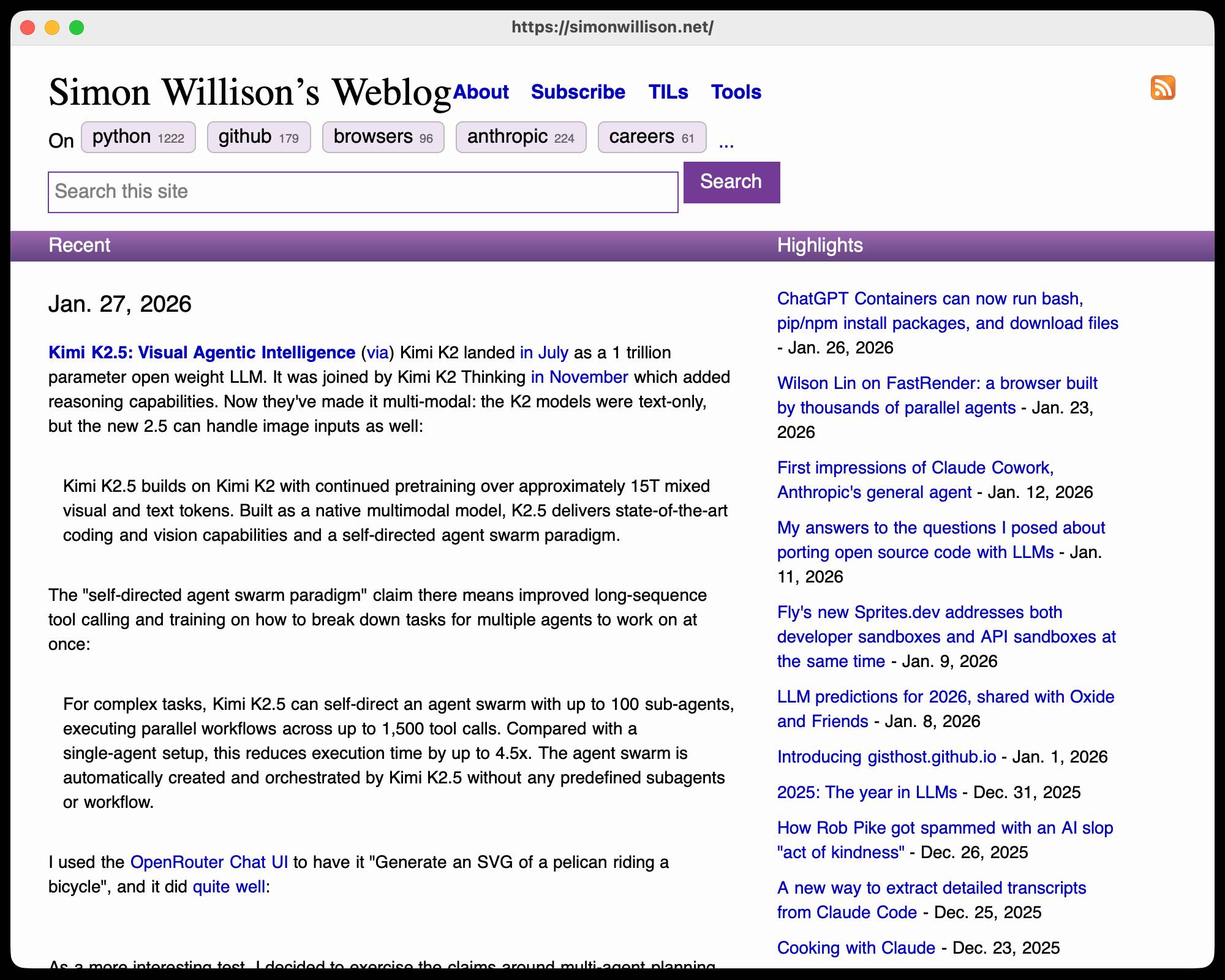

https://z.ai/blog/glm-5.1 |

GLM-5.1: Towards Long-Horizon Tasks |

Chinese AI lab Z.ai's latest model is a giant 754B parameter 1.51TB (on [Hugging Face](https://huggingface.co/zai-org/GLM-5.1)) MIT-licensed monster - the same size as their previous GLM-5 release, and sharing the [same paper](https://huggingface.co/papers/2602.15763).

It's available [via OpenRouter](https://openrouter.ai/z-ai/glm-5.1) so I asked it to draw me a pelican:

```bash
llm install llm-openrouter
llm -m openrouter/z-ai/glm-5.1 'Generate an SVG of a pelican on a bicycle'
```

And something new happened... unprompted, the model [decided to give me](https://gist.github.com/simonw/af7170f54256cc007ef28a8721564be8) an HTML page that included both the SVG and a separate set of CSS animations!

The SVG was excellent, and might be my new favorite from an open weights model:

But the animation [broke it](https://gisthost.github.io/?73bb6808b18c2482f66e5f082c75f36e):

That's the pelican, floating up in the top left corner.

I usually don't do follow-up prompts for the pelican test, but in this case I made an exception:

```bash
llm -c 'the animation is a bit broken, the pelican ends up positioned off the screen at the top right'
```

GLM 5.1 replied:

> The issue is that CSS `transform` animations on SVG elements override the SVG `transform` attribute used for positioning, causing the pelican to lose its placement and fly off to the top-right. The fix is to separate positioning (SVG attribute) from animation (inner group) and use `<animateTransform>` for SVG rotations since it handles coordinate systems correctly.

And spat out [fresh HTML](https://static.simonwillison.net/static/2026/glm-5.1-pelican-fixed.html) which fixed the problem!

I particularly like the animation of the beak, which is described in the SVG comments like so:

<div class="highlight highlight-text-xml-svg"><pre><span class="pl-c"><span class="pl-c">&lt;!--</span> Pouch (lower beak) with wobble <span class="pl-c">--&gt;</span></span>
&lt;<span class="pl-ent">g</span>&gt;
  &lt;<span class="pl-ent">path</span> <span class="pl-e">d</span>=<span class="pl-s"><span class="pl-pds">"</span>M42,-58 Q43,-50 48,-42 Q55,-35 62,-38 Q70,-42 75,-60 L42,-58 Z<span class="pl-pds">"</span></span> <span class="pl-e">fill</span>=<span class="pl-s"><span class="pl-pds">"</span>url(#pouchGrad)<span class="pl-pds">"</span></span> <span class="pl-e">stroke</span>=<span class="pl-s"><span class="pl-pds">"</span>#b06008<span class="pl-pds">"</span></span> <span class="pl-e">stroke-width</span>=<span class="pl-s"><span class="pl-pds">"</span>1<span class="pl-pds">"</span></span> <span class="pl-e">opacity</span>=<span class="pl-s"><span class="pl-pds">"</span>0.9<span class="pl-pds">"</span></span>/&gt;
  &lt;<span class="pl-ent">path</span> <span class="pl-e">d</span>=<span class="pl-s"><span class="pl-pds">"</span>M48,-50 Q55,-46 60,-52<span class="pl-pds">"</span></span> <span class="pl-e">fill</span>=<span class="pl-s"><span class="pl-pds">"</span>none<span class="pl-pds">"</span></span> <span class="pl-e">stroke</span>=<span class="pl-s"><span class="pl-pds">"</span>#c06a08<span class="pl-pds">"</span></span> <span class="pl-e">stroke-width</span>=<span class="pl-s"><span class="pl-pds">"</span>0.8<span class="pl-pds">"</span></span> <span class="pl-e">opacity</span>=<span class="pl-s"><span class="pl-pds">"</span>0.6<span class="pl-pds">"</span></span>/&gt;
  &lt;<span class="pl-ent">animateTransform</span> <span class="pl-e">attributeName</span>=<span class="pl-s"><span class="pl-pds">"</span>transform<span class="pl-pds">"</span></span> <span class="pl-e">type</span>=<span class="pl-s"><span class="pl-pds">"</span>scale<span class="pl-pds">"</span></span>
    <span class="pl-e">values</span>=<span class="pl-s"><span class="pl-pds">"</span>1,1; 1.03,0.97; 1,1<span class="pl-pds">"</span></span> <span class="pl-e">dur</span>=<span class="pl-s"><span class="pl-pds">"</span>0.75s<span class="pl-pds">"</span></span> <span class="pl-e">repeatCount</span>=<span class="pl-s"><span class="pl-pds">"</span>indefinite<span class="pl-pds">"</span></span>
    <span class="pl-e">additive</span>=<span class="pl-s"><span class="pl-pds">"</span>sum<span class="pl-pds">"</span></span>/&gt;
&lt;/<span class="pl-ent">g</span>&gt;</pre></div>

**Update**: On Bluesky [@charles.capps.me suggested](https://bsky.app/profile/charles.capps.me/post/3miwrn42mjc2t) a "NORTH VIRGINIA OPOSSUM ON AN E-SCOOTER" and...

The HTML+SVG comments on that one include `/* Earring sparkle */, <!-- Opossum fur gradient -->, <!-- Distant treeline silhouette - Virginia pines -->, <!-- Front paw on handlebar -->` - here's [the transcript](https://gist.github.com/simonw/1864b89f5304eba03c3ded4697e156c4) and the [HTML result](https://static.simonwillison.net/static/2026/glm-possum-escooter.html). |

- null - |

- null - |

2026-04-07 21:25:14+00:00 |

https://static.simonwillison.net/static/2026/glm-5.1-pelican.png |

True |

| https://simonwillison.net/b/9408 |

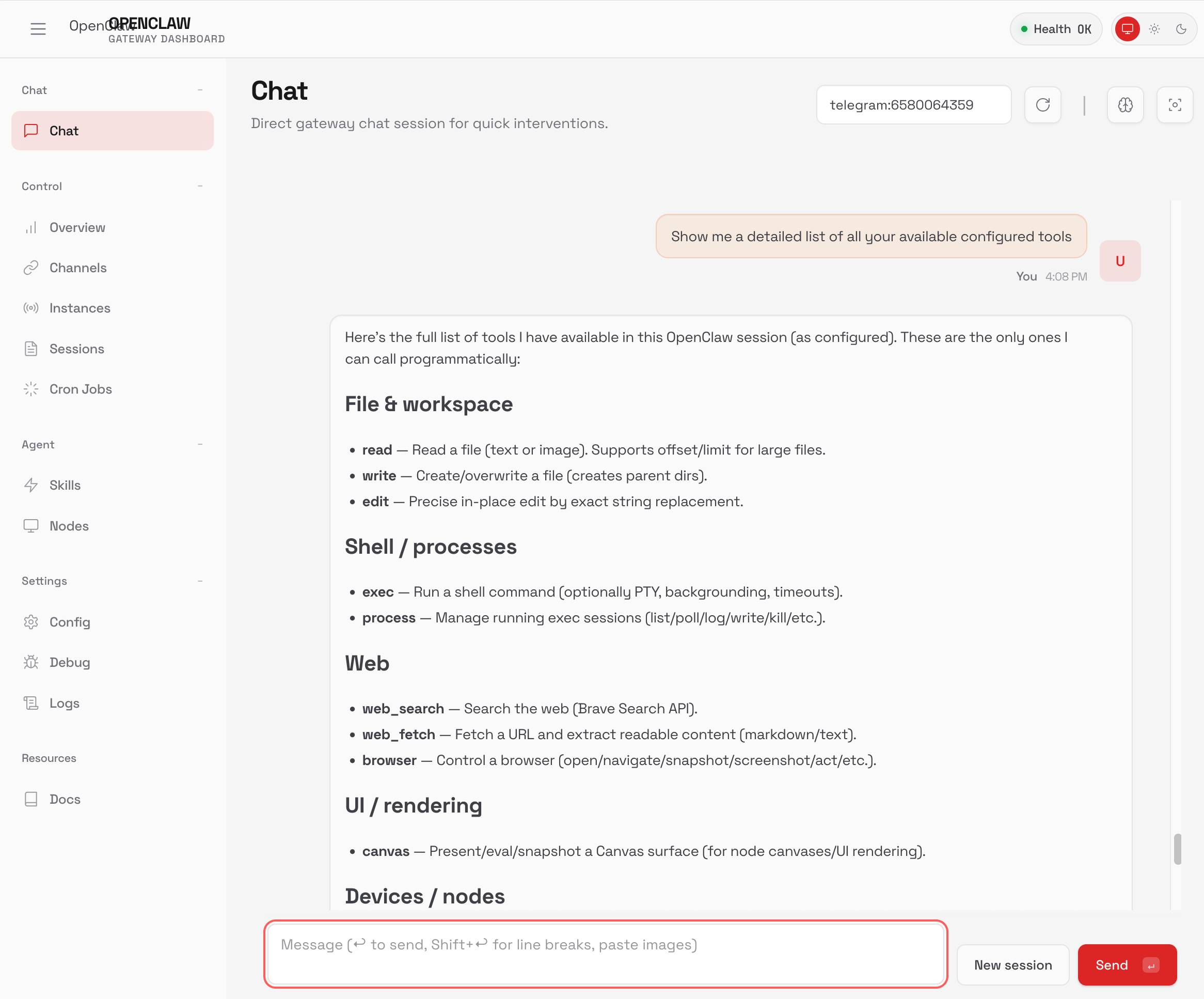

https://apps.apple.com/nl/app/google-ai-edge-gallery/id6749645337 |

Google AI Edge Gallery |

Terrible name, really great app: this is Google's official app for running their Gemma 4 models (the E2B and E4B sizes, plus some members of the Gemma 3 family) directly on your iPhone.

It works *really* well. The E2B model is a 2.54GB download and is both fast and genuinely useful.

The app also provides "ask questions about images" and audio transcription (up to 30s) with the two small Gemma 4 models, and has an interesting "skills" demo which demonstrates tool calling against eight different interactive widgets, each implemented as an HTML page (though sadly the source code is not visible): interactive-map, kitchen-adventure, calculate-hash, text-spinner, mood-tracker, mnemonic-password, query-wikipedia, and qr-code.

<img src="https://static.simonwillison.net/static/2026/gemini-agent-skills.jpg" alt="Screenshot of an &quot;Agent Skills&quot; chat interface using the Gemma-4-E2B-it model. The user prompt reads &quot;Show me the Castro Theatre on a map.&quot; The model response, labeled &quot;Model on GPU,&quot; shows it &quot;Called JS skill 'interactive-map/index.html'&quot; and displays an embedded Google Map centered on a red pin at The Castro Theatre in San Francisco, with nearby landmarks visible including Starbelly, Cliff's Variety, Blind Butcher, GLBT Historical Society Museum, and Fable. An &quot;Open in Maps&quot; link and &quot;View in full screen&quot; button are shown. Below the map, the model states &quot;The interactive map view for the Castro Theatre has been shown.&quot; with a response time of 2.4 s. A text input field with &quot;Type prompt...&quot; placeholder, a &quot;+&quot; button, and a &quot;Skills&quot; button appear at the bottom." style="max-width: min(400px, 100%); margin: 0 auto; display: block;">

(That demo did freeze the app when I tried to add a follow-up prompt though.)
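The app's source isn't visible, so what follows is only a guess at the general shape of this kind of tool calling, written as a Python sketch: the model emits a structured call naming a skill, and the host app loads the matching HTML page. The JSON call format and the `resolve_skill_call` helper are hypothetical; only the eight skill names and the `interactive-map/index.html` path pattern come from the app.

```python
import json

# The eight skill names listed in the app. The "<name>/index.html" path pattern
# comes from the screenshot; the JSON tool-call format is a hypothetical stand-in.
SKILLS = {
    "interactive-map", "kitchen-adventure", "calculate-hash", "text-spinner",
    "mood-tracker", "mnemonic-password", "query-wikipedia", "qr-code",
}


def resolve_skill_call(raw_call: str) -> tuple[str, dict]:
    """Map a model's JSON tool call to the HTML page that implements that skill."""
    call = json.loads(raw_call)
    name = call["name"]
    if name not in SKILLS:
        # Refuse anything the model invents rather than loading an arbitrary page.
        raise ValueError(f"unknown skill: {name!r}")
    return f"{name}/index.html", call.get("arguments", {})


page, args = resolve_skill_call(
    '{"name": "interactive-map", "arguments": {"location": "The Castro Theatre"}}'
)
print(page, args)  # interactive-map/index.html {'location': 'The Castro Theatre'}
```

Keeping the skills in a fixed allow-list means a hallucinated tool name fails loudly instead of pointing the app at a page that doesn't exist.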

This is the first time I've seen a local model vendor release an official app for trying out their models on an iPhone. Sadly it's missing permanent logs - conversations with this app are ephemeral. |

https://news.ycombinator.com/item?id=47652561 |

Hacker News |

2026-04-06 05:18:26+00:00 |

- null - |

True |

| https://simonwillison.net/b/9407 |

https://lalitm.com/post/building-syntaqlite-ai/ |

Eight years of wanting, three months of building with AI |

Lalit Maganti provides one of my favorite pieces of long-form writing on agentic engineering I've seen in ages.

They spent eight years thinking about and then three months building [syntaqlite](https://github.com/lalitMaganti/syntaqlite), which they describe as "[high-fidelity devtools that SQLite deserves](https://lalitm.com/post/syntaqlite/)".

The goal was to provide fast, robust and comprehensive linting and verifying tools for SQLite, suitable for use in language servers and other development tools - a parser, formatter, and verifier for SQLite queries. I've wanted this kind of thing in the past myself, hence my (far less production-ready) [sqlite-ast](https://simonwillison.net/2026/Jan/30/sqlite-ast-2/) project from a few months ago.
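This is not syntaqlite or its API. For contrast, here is the crude approach that needs no dedicated parser at all: ask SQLite itself to compile the statement, sketched with Python's built-in `sqlite3` module (the `check_sql` helper is hypothetical). It illustrates why purpose-built tooling is attractive: SQLite reports only the first error it hits, it can't check a query until the tables it references exist, and it offers nothing for formatting.

```python
import sqlite3
from typing import Optional


def check_sql(sql: str, schema: str = "") -> Optional[str]:
    """Ask SQLite to compile `sql` via EXPLAIN; return None if it compiles, else the error text."""
    conn = sqlite3.connect(":memory:")
    try:
        if schema:
            # SQLite resolves table and column names at compile time,
            # so the schema has to exist before the query can be checked.
            conn.executescript(schema)
        conn.execute("EXPLAIN " + sql)
        return None
    except sqlite3.Error as err:
        return str(err)
    finally:
        conn.close()


print(check_sql("SELEC 1"))
print(check_sql("SELECT id FROM t"))  # no such table: t
print(check_sql("SELECT id FROM t", "CREATE TABLE t (id INTEGER);"))  # None
```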

Lalit had been procrastinating on this project for years, because of the inevitable tedium of needing to work through 400+ grammar rules to help build a parser. That's exactly the kind of tedious work that coding agents excel at!

Claude Code helped get over that initial hump and build the first prototype:

> AI basically let me put aside all my doubts on technical calls, my uncertainty of building the right thing and my reluctance to get started by giving me very concrete problems to work on. Instead of “I need to understand how SQLite’s parsing works”, it was “I need to get AI to suggest an approach for me so I can tear it up and build something better". I work so much better with concrete prototypes to play with and code to look at than endlessly thinking about designs in my head, and AI lets me get to that point at a pace I could not have dreamed about before. Once I took the first step, every step after that was so much easier.

That first vibe-coded prototype worked great as a proof of concept, but they eventually made the decision to throw it away and start again from scratch. AI worked great for the low-level details but did not produce a coherent high-level architecture:

> I found that AI made me procrastinate on key design decisions. Because refactoring was cheap, I could always say “I’ll deal with this later.” And because AI could refactor at the same industrial scale it generated code, the cost of deferring felt low. But it wasn’t: deferring decisions corroded my ability to think clearly because the codebase stayed confusing in the meantime.

The second attempt took a lot longer and involved a great deal more human-in-the-loop decision making, but the result is a robust library that can stand the test of time.

It's worth setting aside some time to read this whole thing - it's full of non-obvious downsides to working heavily with AI, as well as a detailed explanation of how they overcame those hurdles.

The key idea I took away from this concerns AI's weakness in terms of design and architecture:

> When I was working on something where I didn’t even know what I wanted, AI was somewhere between unhelpful and harmful. The architecture of the project was the clearest case: I spent weeks in the early days following AI down dead ends, exploring designs that felt productive in the moment but collapsed under scrutiny. In hindsight, I have to wonder if it would have been faster just thinking it through without AI in the loop at all.
>
> But expertise alone isn’t enough. Even when I understood a problem deeply, AI still struggled if the task had no objectively checkable answer. Implementation has a right answer, at least at a local level: the code compiles, the tests pass, the output matches what you asked for. Design doesn’t. We’re still arguing about OOP decades after it first took off. |

https://news.ycombinator.com/item?id=47648828 |

Hacker News |

2026-04-05 23:54:18+00:00 |

- null - |

True |

| https://simonwillison.net/b/9406 |

https://newsletter.maartengrootendorst.com/p/a-visual-guide-to-gemma-4 |

A visual guide to Gemma 4 |

Maarten Grootendorst joined Google DeepMind two months ago and has been working on the Gemma 4 release. |

- null - |

- null - |

2026-04-04 16:08:19+00:00 |

- null - |

True |

| https://simonwillison.net/b/9405 |

https://sockpuppet.org/blog/2026/03/30/vulnerability-research-is-cooked/ |

Vulnerability Research Is Cooked |

Thomas Ptacek's take on the sudden and enormous impact the latest frontier models are having on the field of vulnerability research.

> Within the next few months, coding agents will drastically alter both the practice and the economics of exploit development. Frontier model improvement won’t be a slow burn, but rather a step function. Substantial amounts of high-impact vulnerability research (maybe even most of it) will happen simply by pointing an agent at a source tree and typing “find me zero days”.

Why are agents so good at this? A combination of baked-in knowledge, pattern matching ability and brute force:

> You can't design a better problem for an LLM agent than exploitation research.
>
> Before you feed it a single token of context, a frontier LLM already encodes supernatural amounts of correlation across vast bodies of source code. Is the Linux KVM hypervisor connected to the `hrtimer` subsystem, `workqueue`, or `perf_event`? The model knows.
>
> Also baked into those model weights: the complete library of documented "bug classes" on which all exploit development builds: stale pointers, integer mishandling, type confusion, allocator grooming, and all the known ways of promoting a wild write to a controlled 64-bit read/write in Firefox.
>
> Vulnerabilities are found by pattern-matching bug classes and constraint-solving for reachability and exploitability. Precisely the implicit search problems that LLMs are most gifted at solving. Exploit outcomes are straightforwardly testable success/failure trials. An agent never gets bored and will search forever if you tell it to.

The article was partly inspired by [this episode of the Security Cryptography Whatever podcast](https://securitycryptographywhatever.com/2026/03/25/ai-bug-finding/), where David Adrian, Deirdre Connolly, and Thomas interviewed Anthropic's Nicholas Carlini for 1 hour 16 minutes.

I just started a new tag here for [ai-security-research](https://simonwillison.net/tags/ai-security-research/) - it's up to 11 posts already. |

- null - |

- null - |

2026-04-03 23:59:08+00:00 |

- null - |

True |

| https://simonwillison.net/b/9404 |

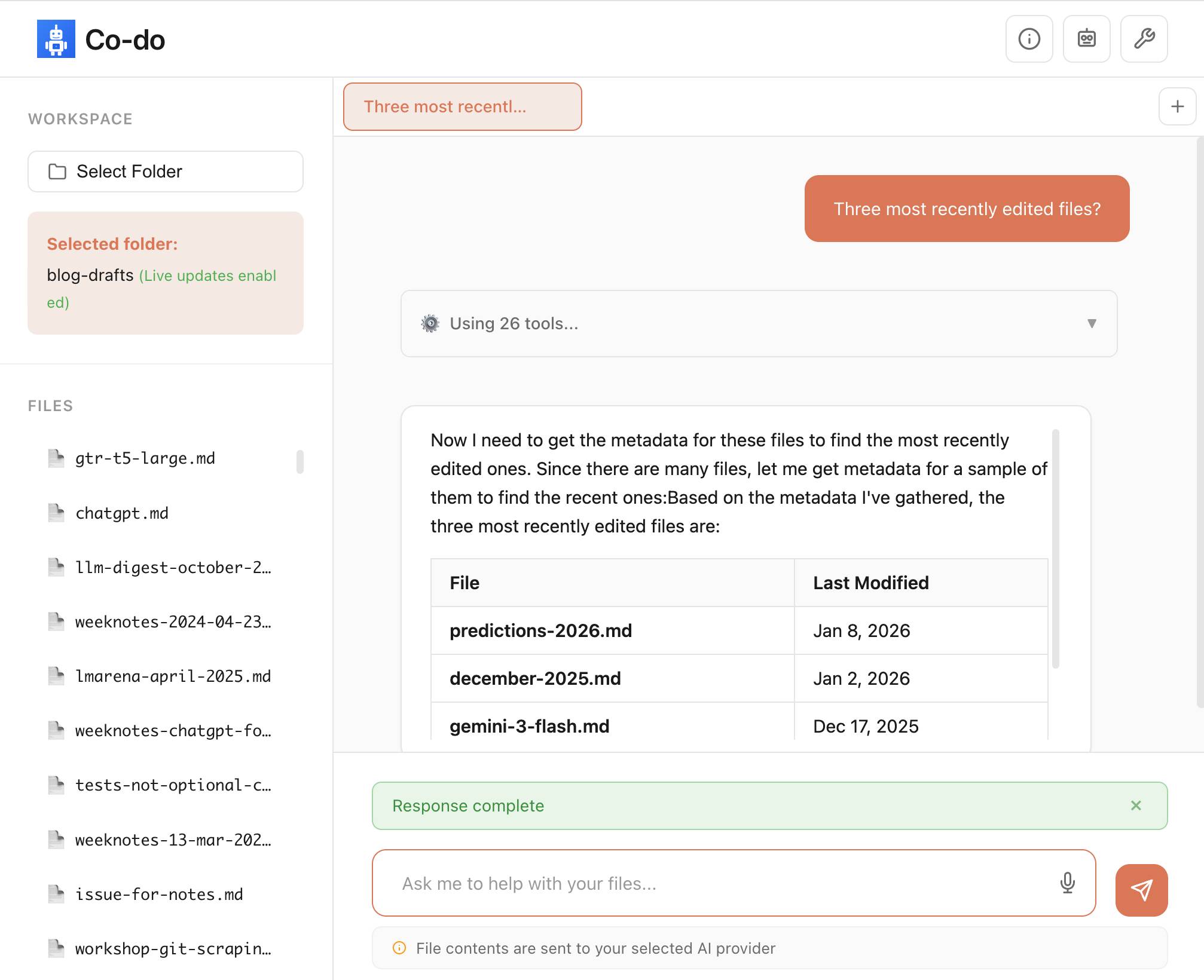

https://blog.google/innovation-and-ai/technology/developers-tools/gemma-4/ |

Gemma 4: Byte for byte, the most capable open models |

Four new vision-capable Apache 2.0 licensed reasoning LLMs from Google DeepMind, sized at 2B, 4B, 31B, plus a 26B-A4B Mixture-of-Experts.
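The "A4B" suffix denotes active parameters: a Mixture-of-Experts model stores many expert sub-networks but routes each token through only a few of them, so far fewer parameters do work per token than are stored. Here is a minimal Python sketch of generic top-k routing; the expert count, the value of k and the parameter sizes are all made up for illustration and do not describe Gemma 4's actual router.

```python
import math


def top_k_route(router_logits, k):
    """Pick the k highest-scoring experts and softmax-normalise their scores."""
    chosen = sorted(range(len(router_logits)), key=lambda i: router_logits[i], reverse=True)[:k]
    top = max(router_logits[i] for i in chosen)  # subtract the max for numerical stability
    exps = {i: math.exp(router_logits[i] - top) for i in chosen}
    norm = sum(exps.values())
    return {i: e / norm for i, e in exps.items()}


def moe_forward(x, experts, router_logits, k):
    """Run only the chosen experts; the others are stored but skipped for this token."""
    # In a real model the logits come from a learned router applied to x;
    # here they are passed in directly to keep the sketch short.
    weights = top_k_route(router_logits, k)
    return sum(w * experts[i](x) for i, w in weights.items())


def total_and_active(shared, per_expert, n_experts, k):
    """Parameters stored vs parameters used per token (hypothetical sizes)."""
    return shared + n_experts * per_expert, shared + k * per_expert


experts = [lambda x, s=s: s * x for s in range(4)]  # toy experts that just scale the input
print(moe_forward(10.0, experts, [0.0, 2.0, 1.0, 2.0], k=2))  # 20.0
print(total_and_active(shared=1, per_expert=2, n_experts=8, k=2))  # (17, 5)
```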

Google emphasize "unprecedented level of intelligence-per-parameter", providing yet more evidence that creating small useful models is one of the hottest areas of research right now.

They actually label the two smaller models as E2B and E4B for "Effective" parameter size. The system card explains:

> The smaller models incorporate Per-Layer Embeddings (PLE) to maximize parameter efficiency in on-device deployments. Rather than adding more layers or parameters to the model, PLE gives each decoder layer its own small embedding for every token. These embedding tables are large but are only used for quick lookups, which is why the effective parameter count is much smaller than the total.

I don't entirely understand that, but apparently that's what the "E" in E2B means!
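One way to make the "effective" idea concrete is a toy parameter count. Everything in this Python sketch is an assumption for illustration: the config fields, the toy sizes, and the choice to exclude only the per-layer tables from the effective count. It is not how Google computes the real numbers, and it leaves out how each looked-up vector is mixed into its decoder layer. What it shows is that a per-layer table is only ever indexed by token ID, so each token pulls out a single row and the table as a whole never takes part in a matrix multiply.

```python
from dataclasses import dataclass


@dataclass
class ToyPLEConfig:
    # All fields and sizes are hypothetical, chosen only to keep the arithmetic easy.
    vocab_size: int
    d_model: int           # hidden size of each decoder layer
    d_ple: int             # width of each per-layer embedding vector
    n_layers: int
    params_per_layer: int  # stand-in for the attention + MLP weights of one layer


def parameter_counts(cfg: ToyPLEConfig) -> tuple[int, int]:
    """Return (total, effective) parameter counts for the toy model."""
    token_embedding = cfg.vocab_size * cfg.d_model
    # One (vocab_size x d_ple) lookup table per decoder layer.
    ple_tables = cfg.n_layers * cfg.vocab_size * cfg.d_ple
    decoder = cfg.n_layers * cfg.params_per_layer
    total = token_embedding + ple_tables + decoder
    # Assumption: only the per-layer lookup tables are left out of the "effective" count.
    effective = total - ple_tables
    return total, effective


def per_layer_lookup(ple_tables, token_ids):
    """Fetch each layer's embedding row for every token: plain indexing, no matmul."""
    return [[table[t] for t in token_ids] for table in ple_tables]


cfg = ToyPLEConfig(vocab_size=1000, d_model=16, d_ple=4, n_layers=2, params_per_layer=256)
print(parameter_counts(cfg))  # (24512, 16512)
```

Because the tables scale with vocabulary size times layer count, they can grow large while adding almost nothing to the per-token compute.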

One particularly exciting feature of these models is that they are multi-modal beyond just images:

> **Vision and audio**: All models natively process video and images, supporting variable resolutions, and excelling at visual tasks like OCR and chart understanding. Additionally, the E2B and E4B models feature native audio input for speech recognition and understanding.

I've not figured out a way to run audio input locally - I don't think that feature is in LM Studio or Ollama yet.

I tried them out using the GGUFs for [LM Studio](https://lmstudio.ai/models/gemma-4). The 2B (4.41GB), 4B (6.33GB) and 26B-A4B (17.99GB) models all worked perfectly, but the 31B (19.89GB) model was broken and spat out `"---\n"` in a loop for every prompt I tried.

The succession of [pelican quality](https://gist.github.com/simonw/12ae4711288637a722fd6bd4b4b56bdb) from 2B to 4B to 26B-A4B is notable:

E2B:

E4B:

26B-A4B:

(This one actually had an SVG error - "error on line 18 at column 88: Attribute x1 redefined" - but after [fixing that](https://gist.github.com/simonw/12ae4711288637a722fd6bd4b4b56bdb?permalink_comment_id=6074105#gistcomment-6074105) I got probably the best pelican I've seen yet from a model that runs on my laptop.)
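Repeating an attribute on one element makes the document not well-formed XML, which is why the SVG failed to render. A quick way to catch this class of error before opening a file in a browser is to run it through Python's standard-library XML parser. This sketch (the `svg_parse_error` helper is hypothetical) only detects the problem; it does not reproduce the fix linked above.

```python
import xml.etree.ElementTree as ET
from typing import Optional


def svg_parse_error(svg_text: str) -> Optional[str]:
    """Return None if the SVG is well-formed XML, else the parser's error message."""
    try:
        ET.fromstring(svg_text)
    except ET.ParseError as err:
        # The message includes the line and column, much like the browser's error.
        return str(err)
    return None


good = '<svg xmlns="http://www.w3.org/2000/svg"><line x1="0" x2="10"/></svg>'
bad = '<svg xmlns="http://www.w3.org/2000/svg"><line x1="0" x1="10"/></svg>'
print(svg_parse_error(good))  # None
print(svg_parse_error(bad))
```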

Google are providing API access to the two larger Gemma models via their [AI Studio](https://aistudio.google.com/prompts/new_chat?model=gemma-4-31b-it). I added support to [llm-gemini](https://github.com/simonw/llm-gemini) and then [ran a pelican](https://gist.github.com/simonw/f9f9e9c34c7cc0ef5325a2876413e51e) through the 31B model using that:

<pre><code>llm -m gemini/gemma-4-31b-it 'Generate an SVG of a pelican riding a bicycle'</code></pre>

Pretty good, though it is missing the front part of the bicycle frame:

|

- null - |

- null - |

2026-04-02 18:28:54+00:00 |

https://static.simonwillison.net/static/2026/gemma-4-31b-pelican.png |

True |

| https://simonwillison.net/b/9403 |

https://prismml.com/news/bonsai-8b |

Announcing 1-bit Bonsai |

PrismML is a newly out-of-stealth AI lab with [a focus](https://prismml.com/about) on researching "can we massively multiply intelligence in models without increasing their size or complexity?".

Their first model release is a model called Bonsai, and it's very small indeed: it comes in 1.7B, 4B and 8B parameter sizes but uses 1-bit parameters. Here are the sizes of the resulting models on Hugging Face:

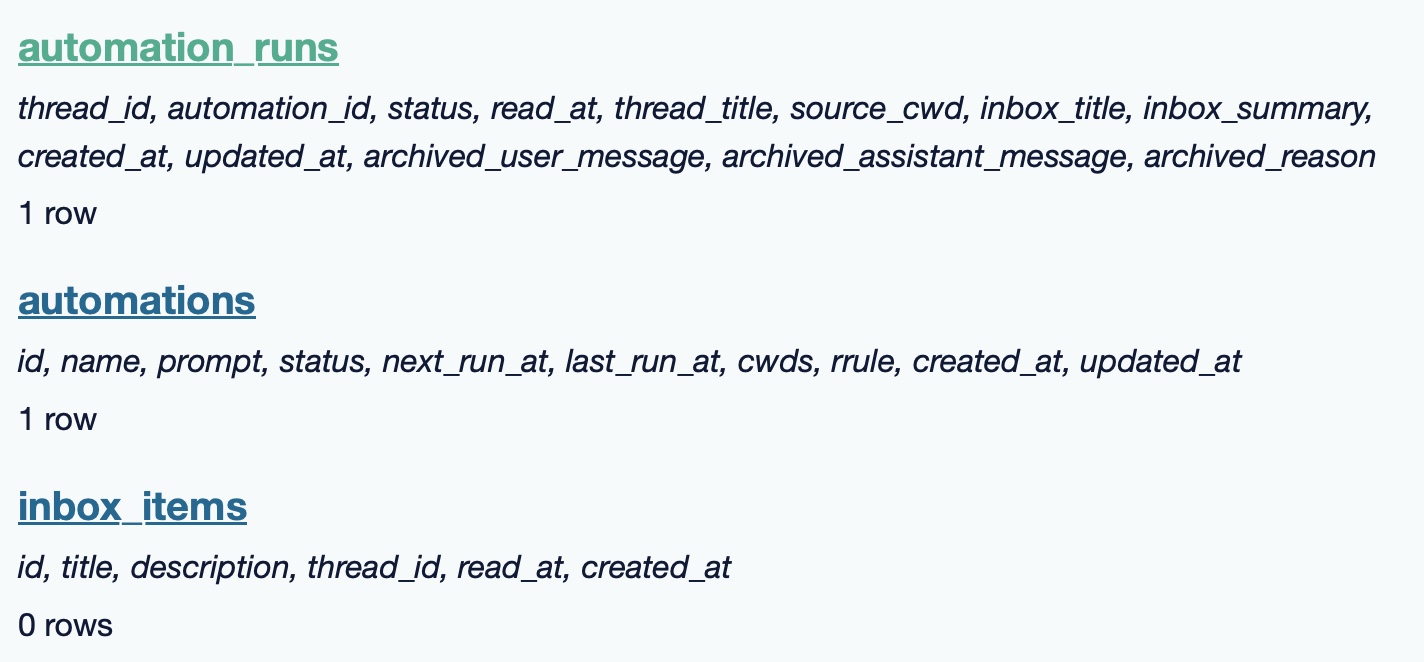

<center><table>

<thead>

<tr>

<th>Model</th>

<th>Size</th>

</tr>

</thead>

<tbody>

<tr>

<td><a href="https://huggingface.co/prism-ml/Bonsai-8B-mlx-1bit">Bonsai-8B-mlx-1bit</a></td>

<td>1.3 GB</td>

</tr>

<tr>

<td><a href="https://huggingface.co/prism-ml/Bonsai-8B-gguf">Bonsai-8B-gguf</a></td>

<td>1.16 GB</td>

</tr>

<tr>

<td><a href="https://huggingface.co/prism-ml/Bonsai-4B-gguf">Bonsai-4B-gguf</a></td>

<td>572 MB</td>

</tr>

<tr>

<td><a href="https://huggingface.co/prism-ml/Bonsai-4B-mlx-1bit">Bonsai-4B-mlx-1bit</a></td>

<td>645 MB</td>

</tr>

<tr>

<td><a href="https://huggingface.co/prism-ml/Bonsai-1.7B-gguf">Bonsai-1.7B-gguf</a></td>

<td>248 MB</td>

</tr>

<tr>

<td><a href="https://huggingface.co/prism-ml/Bonsai-1.7B-mlx-1bit">Bonsai-1.7B-mlx-1bit</a></td>

<td>285 MB</td>

</tr>

</tbody>

</table></center> |

- null - |

- null - |

2026-04-01 20:20:04+00:00 |

- null - |

True |

| https://simonwillison.net/b/9402 |

https://socket.dev/blog/axios-npm-package-compromised |

Supply Chain Attack on Axios Pulls Malicious Dependency from npm |