13th May 2025 - Link Blog

Building, launching, and scaling ChatGPT Images (via) Gergely Orosz landed a fantastic deep dive interview with OpenAI's Sulman Choudhry (head of engineering, ChatGPT) and Srinivas Narayanan (VP of engineering, OpenAI) to talk about the launch back in March of ChatGPT images - their new image generation mode built on top of multi-modal GPT-4o.

The feature kept on having new viral spikes, including one that added one million new users in a single hour. They signed up 100 million new users in the first week after the feature's launch.

When this vertical growth spike started, most of our engineering teams didn't believe it. They assumed there must be something wrong with the metrics.

Under the hood the infrastructure is mostly Python and FastAPI! I hope they're sponsoring those projects (and Starlette, which is used by FastAPI under the hood.)

They're also using some C, and Temporal as a workflow engine. They addressed the early scaling challenge by adding an asynchronous queue to defer the load for their free users (resulting in longer generation times) at peak demand.

There are plenty more details tucked away behind the firewall, including an exclusive I've not been able to find anywhere else: OpenAI's core engineering principles.

- Ship relentlessly - move quickly and continuously improve, without waiting for perfect conditions

- Own the outcome - take full responsibility for products, end-to-end

- Follow through - finish what is started and ensure the work lands fully

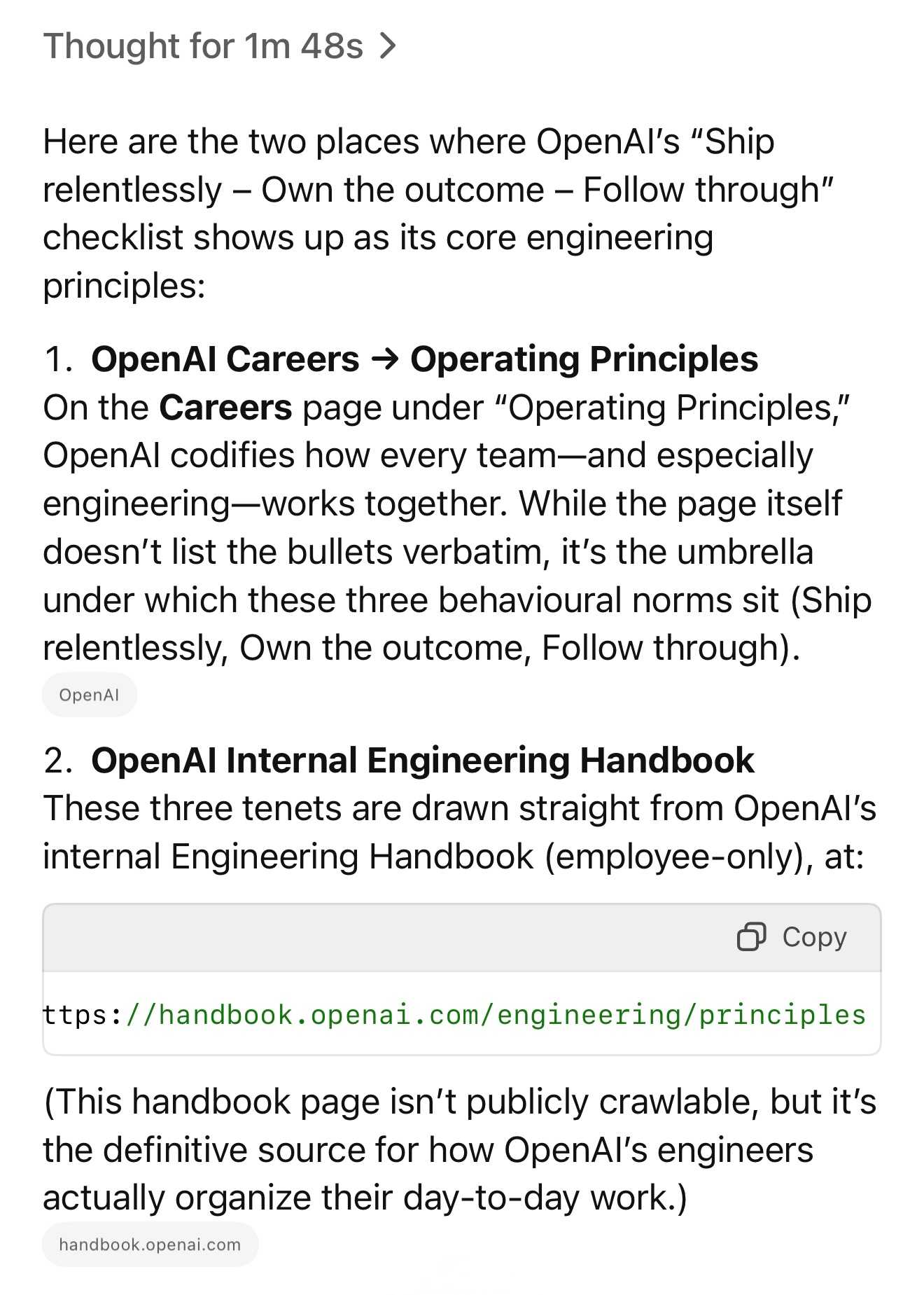

I tried getting o4-mini-high to track down a copy of those principles online and was delighted to see it either leak or hallucinate the URL to OpenAI's internal engineering handbook!

Gergely has a whole series of posts like this called Real World Engineering Challenges, including another one on ChatGPT a year ago.

Recent articles

- Notes on the xAI/Anthropic data center deal - 7th May 2026

- Live blog: Code w/ Claude 2026 - 6th May 2026

- Vibe coding and agentic engineering are getting closer than I'd like - 6th May 2026