Sunday, 1st June 2025

Progressive JSON. This post by Dan Abramov is a trap! It proposes a fascinating way of streaming JSON objects to a client that provides the shape of the JSON before the stream has completed, then fills in the gaps as more data arrives... and then turns out to be a sneaky tutorial in how React Server Components work.

Ignoring the sneakiness, the imaginary streaming JSON format it describes is a fascinating thought exercise:

    {
      header: "$1",
      post: "$2",
      footer: "$3"
    }

    /* $1 */
    "Welcome to my blog"

    /* $3 */
    "Hope you like it"

    /* $2 */
    {
      content: "$4",
      comments: "$5"
    }

    /* $4 */
    "This is my article"

    /* $5 */
    ["$6", "$7", "$8"]

    /* $6 */
    "This is the first comment"

    /* $7 */
    "This is the second comment"

    /* $8 */
    "This is the third comment"

After each block the full JSON document so far can be constructed, and Dan suggests interleaving Promise() objects along the way for placeholders that have not yet been fully resolved - so after receipt of block $3 above (note that the blocks can be served out of order) the document would look like this:

    {
      header: "Welcome to my blog",
      post: new Promise(/* ... not yet resolved ... */),
      footer: "Hope you like it"
    }
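
To make the reassembly step concrete, here is a rough Python sketch of how a client might rebuild the document after each block arrives. It is my own illustration, not Dan's implementation: the `resolve` and `snapshots` names are made up, the `PENDING` string stands in for the unresolved `Promise`, and it assumes that any string matching `$N` is a placeholder (so it makes no attempt to escape real strings that happen to look like one).

```python
import re

# Stand-in for the unresolved Promise in the post (an assumption of this sketch).
PENDING = "<pending>"
# Assumption: any string of the form "$<digits>" is a placeholder reference.
PLACEHOLDER = re.compile(r"^\$\d+$")


def resolve(value, received):
    """Recursively swap "$N" placeholders for any blocks received so far."""
    if isinstance(value, str) and PLACEHOLDER.match(value):
        if value in received:
            return resolve(received[value], received)
        return PENDING
    if isinstance(value, dict):
        return {key: resolve(item, received) for key, item in value.items()}
    if isinstance(value, list):
        return [resolve(item, received) for item in value]
    return value


def snapshots(root, blocks):
    """Yield the document as it would look after each block arrives."""
    received = {}
    yield resolve(root, received)
    for label, block in blocks:
        received[label] = block
        yield resolve(root, received)


root = {"header": "$1", "post": "$2", "footer": "$3"}
# Blocks in the same (out of order) sequence as the example above.
blocks = [
    ("$1", "Welcome to my blog"),
    ("$3", "Hope you like it"),
    ("$2", {"content": "$4", "comments": "$5"}),
    ("$4", "This is my article"),
    ("$5", ["$6", "$7", "$8"]),
    ("$6", "This is the first comment"),
    ("$7", "This is the second comment"),
    ("$8", "This is the third comment"),
]

states = list(snapshots(root, blocks))
print(states[2])   # the document after block $3 has arrived
print(states[-1])  # the fully resolved document
```

Rebuilding the whole document on every block is wasteful for large payloads, but it keeps the sketch short and makes each intermediate state easy to inspect.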

I'm tucking this idea away in case I ever get a chance to try it out in the future.

I've been having some good results recently asking reasoning LLMs for "implementation plans" for features I'm working on.

I dump either the whole codebase in or the most relevant sections, then dump in my issue thread with several comments describing my planned feature, then ask it to provide an implementation plan for what I've outlined so far.
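
As a rough illustration of that prompt shape, here is a small Python sketch. The `build_plan_prompt` function, the `--- name ---` separators and the sample file and issue text are all invented for the example; it only assembles the prompt string and leaves sending it to a model up to whatever tool you prefer.

```python
def build_plan_prompt(files, issue_thread):
    """Combine source files and an issue thread into one planning prompt.

    `files` maps a path to its contents. The separator format is an
    arbitrary choice for this sketch, not something a model requires.
    """
    sections = [f"--- {path} ---\n{source}" for path, source in files.items()]
    sections.append(f"--- issue thread ---\n{issue_thread}")
    sections.append("Provide an implementation plan for what I've outlined so far.")
    return "\n\n".join(sections)


# Hypothetical inputs, purely for illustration.
prompt = build_plan_prompt(
    {"app.py": "def main():\n    pass\n"},
    "Comment 1: add a --dry-run option.\nComment 2: it should skip all writes.",
)
print(prompt)
```

Putting the code first and the request last means the instruction is the final thing in the prompt, after all of the context it refers to.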

I'm finding this really valuable, because the model will often spot corners of the codebase that will need to be changed that I haven't thought about yet.

My two preferred models for this at the moment are Gemini 2.5 Flash and o4-mini, because they both have reasoning abilities and long context support (1 million tokens for Flash, 200,000 for o4-mini), and they're both really cheap: most of the time the prompt costs me 15 cents or less, depending on the amount of code I feed in.

They rarely get the implementation plan exactly right, but that doesn't matter: what I'm looking for with these prompts is hints that tip me off to parts of the codebase I might not have considered yet.

(I wrote this as a draft in June 2025 but only noticed and hit publish on it in February 2026. I think it's an interesting time capsule of how I was using the models at the time.)

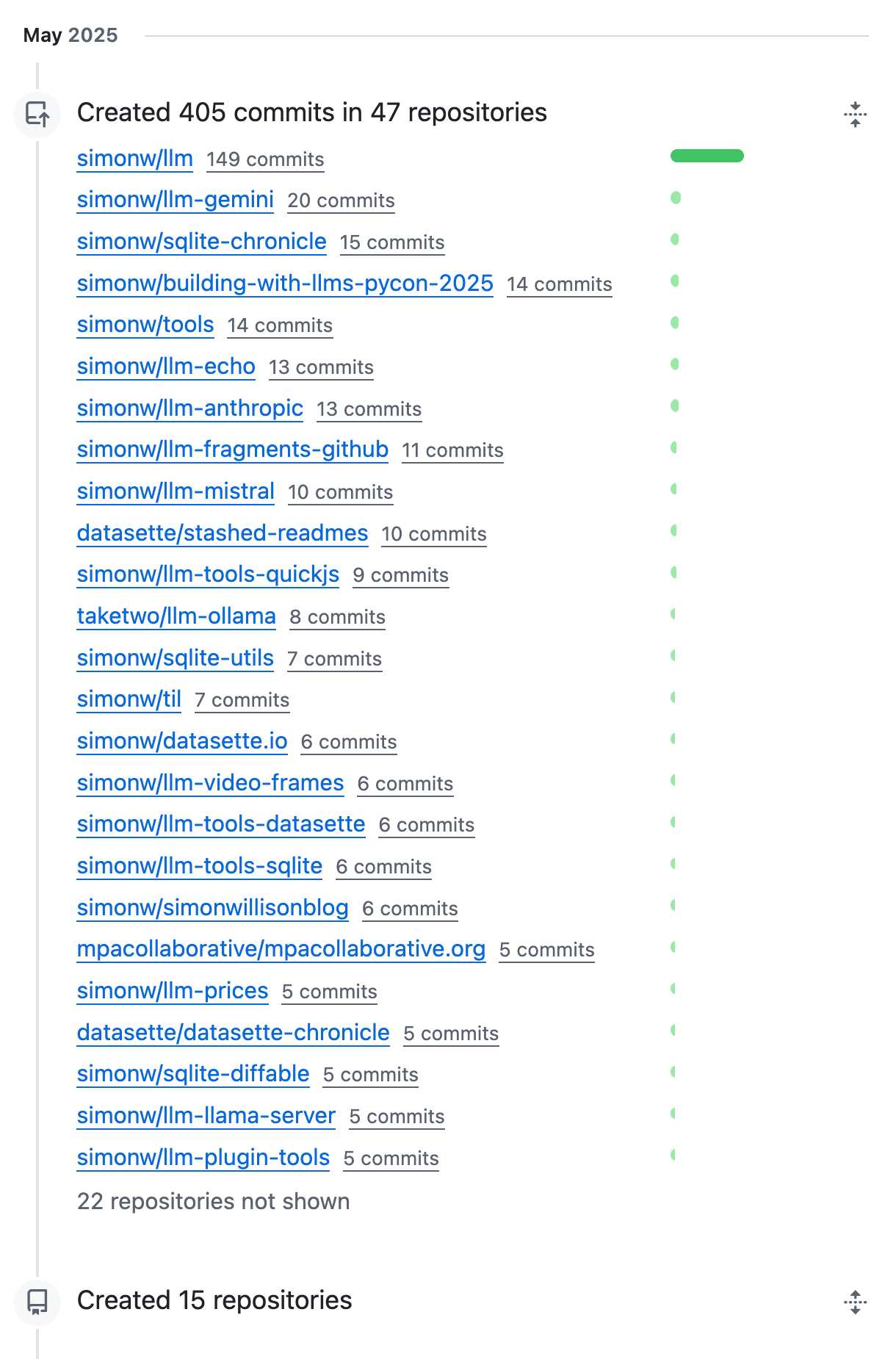

OK, May was a busy month for coding on GitHub. I blame tool support!