Google Drive to SQLite

20th February 2022

I released a new tool this week: google-drive-to-sqlite. It’s a CLI utility for fetching metadata about files in your Google Drive and writing them to a local SQLite database.

It’s pretty fun!

Here’s how to create a SQLite database of every file you’ve started in your Google Drive, including both files created in Google Docs/Sheets and files you’ve uploaded to your drive:

% pip install google-drive-to-sqlite

% google-drive-to-sqlite auth

Visit the following URL to authenticate with Google Drive

https://accounts.google.com/o/oauth2/v2/auth?access_type=offline&...

Then return here and paste in the resulting code:

Paste code here:

# Authentication is now complete, so run:

% google-drive-to-sqlite files starred.db --starred

% ls -lah starred.db

-rw-r--r--@ 1 simon staff 40K Feb 20 14:14 starred.db

The OAuth client ID it is using hasn’t been verified by Google yet, which I think means that only the first 100 people to use it will be able to authenticate. If you need to you can work around that by creating your own client ID, as described in the README.

Having created that starred.db file you can explore the resulting database using Datasette or Datasette Desktop:

datasette starred.db

# or if you have the Datasette Desktop macOS app installed:

open starred.db

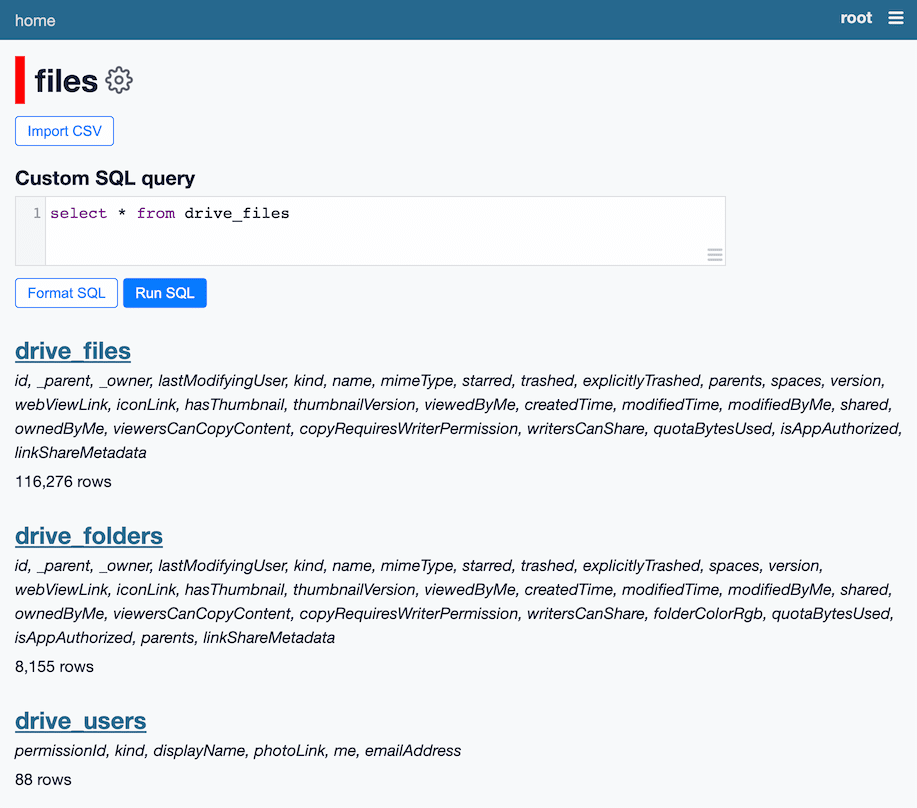

Here’s Datasette running against one of my larger metadata collections:

Why build this?

I recently got involved with a participatory journalism project, where a team of reporters have used FOIA requests to gather a huge corpus of thousands of files. The files are in a complex folder hierarchy a Google Drive. I wanted to start getting a feel for what’s in there.

Pulling the metadata—file names, sizes, file types, file owners, creation dates—into a SQLite database felt like a great way to start understanding the size and scope of what had been collected so far.

Outside of that project, there’s something very exciting to me about being able to use Google Drive to collate all kinds of different data and then tie it into the larger Datasette and Dogsheep ecosystems. I think there’s a lot of potential here for all kinds of interesting projects.

How it works

The tool is written in Python using Click (based on my click-app template) and sqlite-utils. It works by calling the Google Drive API.

The auth command needs to get hold of an OAuth access token scoped to make read-only calls to the user’s Google Drive contents.

This took a bit of figuring out. I wrote up what I learned in this TIL: Google OAuth for a CLI application

Notably, the end result of that flow is a JSON response containing both an access_token and a refresh_token.

The access token can be used to make authenticated API calls, but it expires after an hour and that expiration cannot be extended.

The refresh token lasts forever, and can be used at any time to obtain a fresh access token.

So the auth command writes the refresh token to a file called auth.json, then future calls to other commands use that token to retrieve a fresh access token on every run.

The most useful command is google-drive-to-sqlite files, which retrieves file metadata based on various criteria, then either writes that to a SQLite database or dumps it out as JSON or newline-delimited JSON. It does this by paginating through results from the Google Drive files list API.

The files --folder ID option is a special case. It retrieves every nested file and subfolder starting at the specified folder. The Google Drive API doesn’t support this operation directly, so the tool instead has to recursively call directory listings on every folder until it has pulled back all of the data. See my TIL Recursively fetching metadata for all files in a Google Drive folder for more details.

This operation took over an hour for the largest folder I tested it against! So long that the access token it was using expired and I had to implement code to refresh the token in the middle of the operation.

Some other neat tricks

The download command downloads the specified file to disk:

google-drive-to-sqlite download \

0B32uDVNZfiEKLUtIT1gzYWN2NDI4SzVQYTFWWWxCWUtvVGNB

It detects the file type and uses that as the extension—in the above example, it saves the file as 0B32uDVNZfiEKLUtIT1gzYWN2NDI4SzVQYTFWWWxCWUtvVGNB.pdf.

The export command only works against the file IDs for docs, sheets and presentations create using Google Apps. It can export to a variety of different formats:

google-drive-to-sqlite export html \

10BOHGDUYa7lBjUSo26YFCHTpgEmtXabdVFaopCTh1vU

This writes to 10BOHGDUYa7lBjUSo26YFCHTpgEmtXabdVFaopCTh1vU-export.html.

The get command takes a URL to a Google Drive API endpoint and fetches it using a valid access token. This is a great tool for debugging and API exploration—my github-to-sqlite tool has this too.

google-drive-to-sqlite get 'https://www.googleapis.com/drive/v3/about?fields=*'

It also knows how to paginate! Adding --paginate files will cause it to fetch all of the subsequent pages of the API and return just the items from the "files" key combined into a single JSON array, for example:

google-drive-to-sqlite get \

https://www.googleapis.com/drive/v3/files \

--paginate files

Exploring other APIs with the same tools

While I was building this, I realized that with just a little extra work the auth and get commands could be used to explore other Google APIs too.

If you are a developer, you can create your own OAuth credentials and enable access to other APIs using the Google Cloud console. You can then take the resulting client ID and secret, pick a scope and run the following:

google-drive-to-sqlite auth -a calendar-auth.json \

--scope 'https://www.googleapis.com/auth/calendar.readonly' \

--google-client-id '184325416553-nu5ci563v36rmj9opdl7mah786anbkrq.apps.googleusercontent.com' \

--google-client-secret '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'

calendar-auth.json will now be a JSON file that looks something like this:

{

"google-drive-to-sqlite": {

"refresh_token": "1//...",

"google_client_id": "184325416553-nu5ci563v36rmj9opdl7mah786anbkrq.apps.googleusercontent.com",

"google_client_secret": "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",

"scope": "https://www.googleapis.com/auth/calendar.readonly"

}

}You can now fetch your Google Calendar items by adding your email address to the following:

google-drive-to-sqlite get \

https://www.googleapis.com/calendar/v3/calendars/...@gmail.com/events \

--auth calendar-auth.json

This will output JSON to the console. For newline-delimited JSON, add --nl.

Since we can paginate with --paginate items, this means we can pipe the results to sqlite-utils insert and create a SQLite database of our calendar items!

google-drive-to-sqlite get \

https://www.googleapis.com/calendar/v3/calendars/...@gmail.com/events \

--auth calendar-auth.json \

--paginate items --nl \

| sqlite-utils insert calendar.db events \

- --pk id --nl --alter --replace

Maybe google-drive-to-sqlite wasn’t the right name for this after all!

What’s next?

Google severely tightened their policies on apps that can access Google Drive a few years ago. I’m currently waiting to see if my app will make it through their verification process, see issue #15.

If it doesn’t the tool will still be usable, but users will have to jump through some extra hoops to set up their own client ID. I don’t see this as a huge concern.

I’ve started thinking about ways to import additional data from the Google Drive APIs. I’m particularly interested in the idea of creating a full-text search index in SQLite based on plain text exports of documents created in Google Docs, see issue #28.

For other short-term future plans, take a look at the project’s open issues.

More recent articles

- Claude Opus 4.8: "a modest but tangible improvement" - 28th May 2026

- I think Anthropic and OpenAI have found product-market fit - 27th May 2026

- Notes on Pope Leo XIV's encyclical on AI - 25th May 2026