Using SQLite and Datasette with Fly Volumes

15th February 2022

A few weeks ago, Fly announced Free Postgres Databases as part of the free tier of their hosting product. Their announcement included this snippet:

The lede is “free Postgres” because that’s what matters to full stack apps. You don’t have to use these for Postgres. If SQLite is more your jam, mount up to 3GB of volumes and use “free SQLite.” Yeah, we’re probably underselling that.

I don’t know if I’ve ever been nerd sniped so effectively (they knew what they were doing).

This has the potential to address one of the big challenges in deploying Datasette, my open source web framework for building dynamic applications on top of SQLite.

Datasette currently has plenty of good answers for publishing read-only databases online—Cloud Run, Heroku and Vercel are three great options. But the moment you want to write back to that SQLite database you’re in for a harder time.

Accepting writes requires a working read-write filesystem, and that generally means spinning up a virtual machine—and having to take responsibility for keeping a full Linux instance fed and updated. That’s a much bigger commitment than deploying a container to something like Cloud Run.

Fly Volumes are exactly what I’ve been waiting for here. They provide persistent storage for Fly apps—a volume you can mount as a directory inside a containerized application that will persist data through subsequent deploys.

Add a free tier, and I finally have something I can point people towards when they want to try building something persistent on top of Datasette without taking on the burden of maintaining their own virtual server somewhere, or committing to spend money before they’ve evaluated if this is going to work for them or not.

datasette-publish-fly

I built the first version of the datasette-publish-fly plugin nearly two years ago, as a tool for publishing read-only databases to Fly in a similar way to Cloud Run or Vercel.

(That plugin actually broke a few months ago without me noticing due to some changes made to the flyctl utility that it shells out to—figuring out what had happened and wanting to avoid surprises like this in the future lead me to develop the Help scraping technique I described last week.)

This week I released datasette-publish-fly 1.1 with the option to attach volumes to the published instances and start serving mutable, persistent SQLite databases!

Basic usage looks like this:

datasette publish fly \

--app my-new-fly-application \

--create-volume 1 \

--create-db my-new-dbThis will create a new Fly application on your account called my-new-fly-application, create a 1GB volume for that application, mount the volume and then create an empty SQLite database file in that volume called my-new-db.db.

A blank database file isn’t very interesting! Datasette’s Writable canned queries feature can be used to configure SQL queries that write to the database. Let’s try that now, by creating a metadata.yml file containing the following:

plugins:

# Create messages table in messages.db on startup

datasette-init:

messages:

tables:

messages:

columns:

id: integer

message: text

datetime: text

pk: id

databases:

messages:

queries:

# /messages/add_message query

add_message:

sql: |-

INSERT INTO messages (

message, datetime

) VALUES (

:message, :_now_datetime_utc

)

write: trueNow we can run the deploy like this:

datasette publish fly \

--app messages-demo \

--create-volume 1 \

--create-db messages \

--install datasette-init \

--metadata metadata.ymlThis example uses the datasette-init plugin to create the messages table if it doesn’t exist, and configures a canned query that can insert rows into that table (using the _now_datetime_utc magic parameter to populate the current datetime.)

Once deployed, the following URL provides a form that can be used to add messages:

https://messages-demo.fly.dev/messages/add_message

As a general rule though, writable databases become more interesting when you combine them with Datasette plugins.

Using Fly volumes with datasette-tiddlywiki

datasette-tiddlywiki is a plugin that bundles a full install of TiddlyWiki, configured to use Datasette as a storage backend. I introduced this new plugin back in December.

Version 0.2 of the plugin, which I released yesterday, adds authentication. TiddlyWiki instances can now be viewed by anyone but can only be edited by users with the edit-tiddlywiki permisson, which is available to the root user by default and can be granted to more users using the Datasette permissions system.

Here’s how to deploy datasette-tiddlywiki to Fly, with an authenticated account that can then be used to edit the wiki.

We’re going to use the datasette-auth-passwords plugin to authenticate users. We need to install that locally in order to generate a password:

datasette install datasette-auth-passwordsInstalling this plugin adds a new command to Datasette called hash-password—we can use that like so:

% datasette hash-password

Password:

Repeat for confirmation:

pbkdf2_sha256$260000$b1ec52979ecf0c4810e3e22ea63c119e$AjaNnyuXzDXDRK/ZQjyn881J5GnVouKxI8B3DFu/C+M=(The example hash shown here is for the password “password”, so don’t use it for anything!)

Having created that password hash, we can use it to deploy our TiddlyWiki instance.

# First, install datasette-publish-fly

datasette install datasette-publish-fly

# Now deploy an instance with datasette-tiddlywiki

datasette publish fly \

--app simon-tiddlywiki \

--create-volume 1 \

--create-db tiddlywiki \

--install datasette-auth-passwords \

--install datasette-tiddlywiki \

--plugin-secret datasette-auth-passwords root_password_hash 'pbkdf2_sha256$2600...'

The --app value here is the name of the app on Fly, which needs to be globally unique—so pick your own value here.

The full password hash needs to be pasted in with single quotes on that last line.

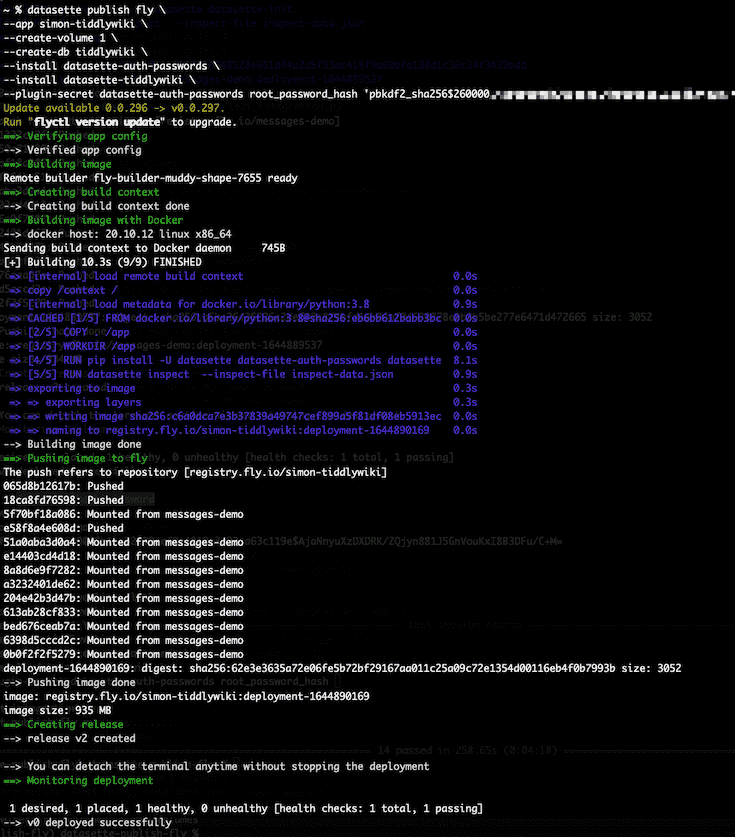

Running this will churn away for a little bit deploying the application:

It can take a few minutes after the script finishes running for the application to become available—Fly are working at speeding this up. If you visit it too early you may see a TLS connection error.

Once the application is live, the following pages will be available:

- https://simon-tiddlywiki.fly.dev/-/tiddlywiki—TiddlyWiki, initially in read-only mode

-

https://simon-tiddlywiki.fly.dev/-/login—the login form provided by

datasette-auth-passwords—sign in here with usernamerootand the password you used to create the hash - https://simon-tiddlywiki.fly.dev/—the Datasette homepage

Sign in and visit the wiki and you’ll be able to add and edit records there!

Since the plugin saves data to an underlying SQLite table, you can visit /tiddlywiki/tiddlers to see the data that has been stored.

Other plugins to try

Here are some other interesting plugins that make use of Datasette’s internal methods for writing to a database:

- datasette-write provides a UI for executing write queries against the database

- datasette-upload-csvs adds an interface for uploading a CSV file to create a table

- datasette-insert adds a full JSON API for inserting and updating data

- datasette-configure-fts can be used to configure full-text search against an existing table

- datasette-edit-schema provides tools for adding, renaming and re-ordering columns

- datasette-saved-queries lets users save queries to a database table

datasette-auth-github and datasette-auth-tokens can be used to provide additional ways of authenticating with an instance.

Releases this week

-

datasette-publish-fly: 1.1.1—(7 releases total)—2022-02-14

Datasette plugin for publishing data using Fly -

datasette-tiddlywiki: 0.2—(2 releases total)—2022-02-14

Run TiddlyWiki in Datasette and save Tiddlers to a SQLite database -

strip-hidden-form-values: 0.2.1—(3 releases total)—2022-02-11

CLI tool for stripping hidden form values from an HTML document -

datasette-auth-passwords: 1.0—(11 releases total)—2022-02-09

Datasette plugin for authentication using passwords -

datasette: 0.60.2—(107 releases total)—2022-02-07

An open source multi-tool for exploring and publishing data -

sqlite-utils: 3.23—(95 releases total)—2022-02-04

Python CLI utility and library for manipulating SQLite databases -

datasette-leaflet-freedraw: 0.3.1—(9 releases total)—2022-02-03

Draw polygons on maps in Datasette

TIL this week

More recent articles

- Running Python code in a sandbox with MicroPython and WASM - 6th June 2026

- Claude Opus 4.8: "a modest but tangible improvement" - 28th May 2026

- I think Anthropic and OpenAI have found product-market fit - 27th May 2026