| blogmark |

2026-05-17 15:59:41+00:00 |

{

"id": 9465,

"slug": "gds-weighs-in",

"link_url": "https://shkspr.mobi/blog/2026/05/gds-weighs-in-on-the-nhss-decision-to-retreat-from-open-source/",

"link_title": "GDS weighs in on the NHS's decision to retreat from Open Source",

"via_url": null,

"via_title": null,

"commentary": "Terence Eden continues his coverage of the NHS's [poorly considered decision](https://shkspr.mobi/blog/2026/05/nhs-goes-to-war-against-open-source/) to close down access to their open source repositories in response to vulnerabilities reported to them as part of [Project Glasswing](https://simonwillison.net/2026/Apr/7/project-glasswing/).\r\n\r\nNow the Government Digital Service have joined the conversation with [AI, open code and vulnerability risk in the public sector](https://www.gov.uk/guidance/ai-open-code-and-vulnerability-risk-in-the-public-sector), published May 14th. Their key recommendation:\r\n\r\n> Keep open by default. Making everything private adds additional delivery and policy costs, and can reduce reuse and scrutiny. Openness should remain the default posture, with closure used sparingly and deliberately.\r\n\r\nWhile they don't mention the NHS by name, Terence speaks the language of the civil service and interprets this as a major escalation:\r\n\r\n> Within the UK's Civil Service you occasionally hear the expression \"being invited to a meeting *without biscuits*\". It implies a rather frosty discussion without any of the polite niceties of a normal meeting. In general though, even when people have severe disagreements, it is rare for tempers to fray. It is even rarer for those internal disagreements to spill over into public.",

"created": "2026-05-17T15:59:41+00:00",

"metadata": {},

"search_document": "'/2026/apr/7/project-glasswing/).':73C '/blog/2026/05/nhs-goes-to-war-against-open-source/)':49C '/guidance/ai-open-code-and-vulnerability-risk-in-the-public-sector),':96C '14th':99C 'a':155C,172C,178C,189C 'access':53C 'additional':111C 'adds':110C 'ai':18B,21B,30B,33B,84C 'ai-ethics':29B 'ai-security-research':32B 'and':87C,113C,116C,120C,132C,151C 'any':183C 'as':66C,154C 'being':169C 'biscuits':175C 'by':105C,141C 'can':117C 'civil':149C,162C 'close':51C 'closure':129C 'code':86C 'considered':45C 'continues':38C 'conversation':82C 'costs':115C 'coverage':40C 'decision':8A,46C 'default':106C,126C 'deliberately':133C 'delivery':112C 'digital':77C 'disagreements':200C,215C 'discussion':181C 'don':136C 'down':52C 'eden':28B,37C 'escalation':157C 'ethics':31B 'even':195C,210C 'everything':108C 'expression':168C 'for':204C,212C 'fray':207C 'from':11A 'frosty':180C 'gds':1A 'general':193C 'generative':20B 'generative-ai':19B 'glasswing':70C 'gov':24B 'gov-uk':23B 'government':76C 'have':79C,198C 'hear':166C 'his':39C 'implies':177C 'in':3A,59C,90C,192C 'internal':214C 'interprets':152C 'into':219C 'invited':170C 'is':202C,209C 'it':176C,201C,208C 'joined':80C 'keep':103C 'key':101C 'language':146C 'llms':22B 'major':156C 'making':107C 'may':98C 'meeting':173C,191C 'mention':138C 'name':142C 'nhs':6A,43C,140C 'niceties':187C 'normal':190C 'now':74C 'occasionally':165C 'of':41C,68C,147C,184C,188C 'on':4A 'open':12A,15B,56C,85C,104C 'open-source':14B 'openness':122C 'over':218C 'part':67C 'people':197C 'policy':114C 'polite':186C 'poorly':44C 'posture':127C 'private':109C 'project':69C 'public':92C,220C 'published':97C 'rare':203C 'rarer':211C 'rather':179C 'recommendation':102C 'reduce':118C 'remain':124C 'reported':63C 'repositories':58C 'research':35B 'response':60C 'retreat':10A 'reuse':119C 'risk':89C 's':7A,161C 'scrutiny':121C 'sector':93C 'security':17B,34B 'service':78C,150C,163C 'severe':199C 'shkspr.mobi':48C,221C 
'shkspr.mobi/blog/2026/05/nhs-goes-to-war-against-open-source/)':47C 'should':123C 'simonwillison.net':72C 'simonwillison.net/2026/apr/7/project-glasswing/).':71C 'source':13A,16B,57C 'sparingly':131C 'speaks':144C 'spill':217C 't':137C 'tempers':205C 'terence':27B,36C,143C 'terence-eden':26B 'the':5A,42C,75C,81C,91C,125C,139C,145C,148C,159C,167C,185C 'their':55C,100C 'them':65C 'they':135C 'this':153C 'those':213C 'though':194C 'to':9A,50C,54C,61C,64C,171C,206C,216C 'uk':25B,160C 'used':130C 'vulnerabilities':62C 'vulnerability':88C 'weighs':2A 'when':196C 'while':134C 'with':83C,128C 'within':158C 'without':174C,182C 'www.gov.uk':95C 'www.gov.uk/guidance/ai-open-code-and-vulnerability-risk-in-the-public-sector),':94C 'you':164C",

"import_ref": null,

"card_image": null,

"series_id": null,

"use_markdown": true,

"is_draft": false,

"title": ""

} |

| quotation |

2026-05-16 16:45:37+00:00 |

{

"id": 2200,

"slug": "julia-evans",

"quotation": "[...] in the last 10 years I\u2019ve learned to really love and respect CSS as a technology.\r\n\r\nSo I decided years ago that I wanted to react to \u201cCSS is hard\u201d by getting better at CSS and taking it seriously as a technology, instead of devaluing it. Doing that changed everything for me: I learned that so many of my frustrations (\u201ccentering is impossible\u201d) had been addressed in CSS a long time ago, and that also what \u201ccentering\u201d means is not always straightforward and it makes sense that there are many ways to do it. CSS is hard because it\u2019s solving a hard problem!",

"source": "Julia Evans",

"source_url": "https://jvns.ca/blog/2026/05/15/moving-away-from-tailwind--and-learning-to-structure-my-css-/",

"created": "2026-05-16T16:45:37+00:00",

"metadata": {},

"search_document": "'10':4A 'a':16A,42A,70A,103A 'addressed':67A 'ago':22A,73A 'also':76A 'always':82A 'and':12A,37A,74A,84A 'are':90A 'as':15A,41A 'at':35A 'because':99A 'been':66A 'better':34A 'by':32A 'centering':62A,78A 'changed':50A 'css':14A,29A,36A,69A,96A,106B 'decided':20A 'devaluing':46A 'do':94A 'doing':48A 'evans':109B,111C 'everything':51A 'for':52A 'frustrations':61A 'getting':33A 'had':65A 'hard':31A,98A,104A 'i':6A,19A,24A,54A 'impossible':64A 'in':1A,68A 'instead':44A 'is':30A,63A,80A,97A 'it':39A,47A,85A,95A,100A 'julia':108B,110C 'julia-evans':107B 'last':3A 'learned':8A,55A 'long':71A 'love':11A 'makes':86A 'many':58A,91A 'me':53A 'means':79A 'my':60A 'not':81A 'of':45A,59A 'problem':105A 'react':27A 'really':10A 'respect':13A 's':101A 'sense':87A 'seriously':40A 'so':18A,57A 'solving':102A 'straightforward':83A 'taking':38A 'technology':17A,43A 'that':23A,49A,56A,75A,88A 'the':2A 'there':89A 'time':72A 'to':9A,26A,28A,93A 've':7A 'wanted':25A 'ways':92A 'what':77A 'years':5A,21A",

"import_ref": null,

"card_image": null,

"series_id": null,

"is_draft": false,

"context": "Moving away from Tailwind, and learning to structure my CSS"

} |

| quotation |

2026-05-14 22:31:20+00:00 |

{

"id": 2199,

"slug": "mitchell-hashimoto",

"quotation": "[...] On the interesting side is how fungible programming languages are nowadays. Programming languages used to be LOCK IN, and they're increasingly not so. You think the Bun rewrite in Rust is good for Rust? Bun has shown they can be in probably any language they want in roughly a week or two. Rust is expendable. Its useful until its not then it can be thrown out. That's interesting!",

"source": "Mitchell Hashimoto",

"source_url": "https://twitter.com/mitchellh/status/2055039647924007222",

"created": "2026-05-14T22:31:20+00:00",

"metadata": {},

"search_document": "'a':50A 'agentic':83B 'agentic-engineering':82B 'ai':71B,76B 'and':19A 'any':44A 'are':10A 'be':16A,41A,65A 'bun':28A,36A,81B 'can':40A,64A 'engineering':84B 'expendable':56A 'for':34A 'fungible':7A 'generative':75B 'generative-ai':74B 'good':33A 'has':37A 'hashimoto':80B,86C 'how':6A 'in':18A,30A,42A,48A 'increasingly':22A 'interesting':3A,70A 'is':5A,32A,55A 'it':63A 'its':57A,60A 'language':45A 'languages':9A,13A 'llms':77B 'lock':17A 'mitchell':79B,85C 'mitchell-hashimoto':78B 'not':23A,61A 'nowadays':11A 'on':1A 'or':52A 'out':67A 'probably':43A 'programming':8A,12A 're':21A 'rewrite':29A 'roughly':49A 'rust':31A,35A,54A,72B 's':69A 'shown':38A 'side':4A 'so':24A 'that':68A 'the':2A,27A 'then':62A 'they':20A,39A,46A 'think':26A 'thrown':66A 'to':15A 'two':53A 'until':59A 'used':14A 'useful':58A 'want':47A 'week':51A 'you':25A 'zig':73B",

"import_ref": null,

"card_image": null,

"series_id": null,

"is_draft": false,

"context": "on Bun porting from Zig to Rust"

} |

| blogmark |

2026-05-13 23:59:39+00:00 |

{

"id": 9464,

"slug": "welcome-to-the-datasette-blog",

"link_url": "https://datasette.io/blog/2026/new-blog/",

"link_title": "Welcome to the Datasette blog",

"via_url": null,

"via_title": null,

"commentary": "We have a bunch of neat Datasette announcements in the pipeline so we decided it was time the project grew an official blog.\r\n\r\nI built this using OpenAI Codex desktop, which turns out to have the Markdown session transcript export feature I've always wanted. Here's [the session that built the blog](https://gist.github.com/simonw/885b11eee46822622b8031a1f4e5f3a3). See also [issue 179](https://github.com/simonw/datasette.io/issues/179).",

"created": "2026-05-13T23:59:39+00:00",

"metadata": {},

"search_document": "'/simonw/885b11eee46822622b8031a1f4e5f3a3).':74C '/simonw/datasette.io/issues/179).':81C '179':78C 'a':21C 'ai':6B,10B,13B 'ai-assisted-programming':12B 'also':76C 'always':62C 'an':39C 'announcements':26C 'assisted':14B 'blog':5A,41C,71C 'built':43C,69C 'bunch':22C 'cli':18B 'codex':17B,47C 'codex-cli':16B 'datasette':4A,7B,25C 'datasette.io':82C 'decided':32C 'desktop':48C 'export':58C 'feature':59C 'generative':9B 'generative-ai':8B 'gist.github.com':73C 'gist.github.com/simonw/885b11eee46822622b8031a1f4e5f3a3).':72C 'github.com':80C 'github.com/simonw/datasette.io/issues/179).':79C 'grew':38C 'have':20C,53C 'here':64C 'i':42C,60C 'in':27C 'issue':77C 'it':33C 'llms':11B 'markdown':55C 'neat':24C 'of':23C 'official':40C 'openai':46C 'out':51C 'pipeline':29C 'programming':15B 'project':37C 's':65C 'see':75C 'session':56C,67C 'so':30C 'that':68C 'the':3A,28C,36C,54C,66C,70C 'this':44C 'time':35C 'to':2A,52C 'transcript':57C 'turns':50C 'using':45C 've':61C 'wanted':63C 'was':34C 'we':19C,31C 'welcome':1A 'which':49C",

"import_ref": null,

"card_image": null,

"series_id": null,

"use_markdown": true,

"is_draft": false,

"title": ""

} |

| quotation |

2026-05-13 16:15:50+00:00 |

{

"id": 2198,

"slug": "boris-mann",

"quotation": "\u201c11 AI agents\u201d is meaningless as a phrase. \r\n\r\nIf I said \u201cI have 11 spreadsheets\u201d or \u201cI have 11 browser tabs\u201d to do my work, it means about the same thing.",

"source": "Boris Mann",

"source_url": "https://bsky.app/profile/bmann.ca/post/3mlp2ipupv22z",

"created": "2026-05-13T16:15:50+00:00",

"metadata": {},

"search_document": "'11':1A,14A,19A 'a':7A 'about':28A 'agent':37B 'agent-definitions':36B 'agents':3A,35B 'ai':2A,32B,34B 'ai-agents':33B 'as':6A 'boris':39C 'browser':20A 'definitions':38B 'do':23A 'have':13A,18A 'i':10A,12A,17A 'if':9A 'is':4A 'it':26A 'mann':40C 'meaningless':5A 'means':27A 'my':24A 'or':16A 'phrase':8A 'said':11A 'same':30A 'spreadsheets':15A 'tabs':21A 'the':29A 'thing':31A 'to':22A 'work':25A",

"import_ref": null,

"card_image": null,

"series_id": null,

"is_draft": false,

"context": null

} |

| quotation |

2026-05-12 22:59:58+00:00 |

{

"id": 2197,

"slug": "mo-bitar",

"quotation": "Now, if your CEO has never heard the phrase Ralph Loop, oh man, you are less than 30 days away from your next promotion. I'm not even exaggerating. Walk into his office, close the door, and say, hey chief, been experimenting with something. It's called Ralph Loops. And I think it could change literally everything. And he's gonna say, what's a Ralph loop? And you will say, give me $18,000 worth of API credits and I'll show you. Now you won't actually do anything, because you can't do anything. Because nobody can, because nobody knows what they're doing. But by the time he figures that out, you'll have a new title, and equity bump. [...]\r\n\r\nTalk about automation constantly. Nothing arouses the slumbering capitalists than the mention of automation. Drop names too, bro. Like talk about specific team members you can automate out of existence. Be like, yo, I automated Gary, bro. Tag Gary in the message. Tag him in Slack in a very public channel. Be like, yo, I just automated @Gary. His function has been Ralph Looped. And tag your CEO in the same message. You think you're getting laid off after that?",

"source": "Mo Bitar",

"source_url": "https://www.tiktok.com/@atmoio/video/7638649825382190350",

"created": "2026-05-12T22:59:58+00:00",

"metadata": {},

"search_document": "'000':75A '18':74A '30':18A 'a':65A,119A,172A 'about':126A,145A 'actually':89A 'after':204A 'ai':207B,210B 'ai-ethics':209B 'and':37A,50A,58A,68A,80A,122A,189A 'anything':91A,97A 'api':78A 'are':15A 'arouses':130A 'automate':151A 'automated':159A,181A 'automation':127A,138A 'away':20A 'be':155A,176A 'because':92A,98A,101A 'been':41A,186A 'bitar':213C 'bro':142A,161A 'bump':124A 'but':108A 'by':109A 'called':47A 'can':94A,100A,150A 'capitalists':133A 'careers':206B 'ceo':4A,192A 'change':55A 'channel':175A 'chief':40A 'close':34A 'constantly':128A 'could':54A 'credits':79A 'days':19A 'do':90A,96A 'doing':107A 'door':36A 'drop':139A 'equity':123A 'ethics':211B 'even':28A 'everything':57A 'exaggerating':29A 'existence':154A 'experimenting':42A 'figures':113A 'from':21A 'function':184A 'gary':160A,163A,182A 'getting':201A 'give':72A 'gonna':61A 'has':5A,185A 'have':118A 'he':59A,112A 'heard':7A 'hey':39A 'him':168A 'his':32A,183A 'i':25A,51A,81A,158A,179A 'if':2A 'in':164A,169A,171A,193A 'into':31A 'it':45A,53A 'just':180A 'knows':103A 'laid':202A 'less':16A 'like':143A,156A,177A 'literally':56A 'll':82A,117A 'loop':11A,67A 'looped':188A 'loops':49A 'm':26A 'man':13A 'me':73A 'members':148A 'mention':136A 'message':166A,196A 'mo':212C 'names':140A 'never':6A 'new':120A 'next':23A 'nobody':99A,102A 'not':27A 'nothing':129A 'now':1A,85A 'of':77A,137A,153A 'off':203A 'office':33A 'oh':12A 'out':115A,152A 'phrase':9A 'promotion':24A 'public':174A 'ralph':10A,48A,66A,187A 're':106A,200A 's':46A,60A,64A 'same':195A 'say':38A,62A,71A 'show':83A 'slack':170A 'slumbering':132A 'something':44A 'specific':146A 't':88A,95A 'tag':162A,167A,190A 'talk':125A,144A 'team':147A 'than':17A,134A 'that':114A,205A 'the':8A,35A,110A,131A,135A,165A,194A 'they':105A 'think':52A,198A 'tiktok':208B 'time':111A 'title':121A 'too':141A 'very':173A 'walk':30A 'what':63A,104A 'will':70A 'with':43A 'won':87A 'worth':76A 'yo':157A,178A 'you':14A,69A,84A,86A,93A,116A,149A,197A,199A 
'your':3A,22A,191A",

"import_ref": null,

"card_image": null,

"series_id": null,

"is_draft": false,

"context": "The Unethical Guide to Surviving AI Layoffs, TikTok"

} |

| quotation |

2026-05-12 22:21:51+00:00 |

{

"id": 2196,

"slug": "mitchell-hashimoto",

"quotation": "The thing about 90% of TDMs [Technical Decision Makers] is that they're motivated primarily by NOT GETTING FIRED. These aren't people who browser Lobsters or push to GH on the weekend. These are people that work 9 to 5, get paid, go home, and NEVER THINK ABOUT WORK AGAIN. So to achieve all that, they follow secular trends supported by analysts and broad public sentiment. Oh, Gartner said that \"AI strategy\" is most important? McKinsey said \"context\" needs to be managed? Well, \"Context Engine for AI Apps\" is going to be defensible. Buy it.",

"source": "Mitchell Hashimoto",

"source_url": "https://lobste.rs/s/oznirn/redis_cost_ambition#c_dzrja0",

"created": "2026-05-12T22:21:51+00:00",

"metadata": {},

"search_document": "'5':41A '9':39A '90':4A 'about':3A,49A 'achieve':54A 'again':51A 'ai':72A,88A 'all':55A 'analysts':63A 'and':46A,64A 'apps':89A 'are':35A 'aren':21A 'be':82A,93A 'broad':65A 'browser':25A 'buy':95A 'by':16A,62A 'context':79A,85A 'decision':8A 'defensible':94A 'engine':86A 'fired':19A 'follow':58A 'for':87A 'gartner':69A 'get':42A 'getting':18A 'gh':30A 'go':44A 'going':91A 'hashimoto':101B,103C 'home':45A 'important':76A 'is':10A,74A,90A 'it':96A 'lobsters':26A 'makers':9A 'managed':83A 'marketing':97B 'mckinsey':77A 'mitchell':100B,102C 'mitchell-hashimoto':99B 'most':75A 'motivated':14A 'needs':80A 'never':47A 'not':17A 'of':5A 'oh':68A 'on':31A 'or':27A 'paid':43A 'people':23A,36A 'primarily':15A 'public':66A 'push':28A 're':13A 'redis':98B 'said':70A,78A 'secular':59A 'sentiment':67A 'so':52A 'strategy':73A 'supported':61A 't':22A 'tdms':6A 'technical':7A 'that':11A,37A,56A,71A 'the':1A,32A 'these':20A,34A 'they':12A,57A 'thing':2A 'think':48A 'to':29A,40A,53A,81A,92A 'trends':60A 'weekend':33A 'well':84A 'who':24A 'work':38A,50A",

"import_ref": null,

"card_image": null,

"series_id": null,

"is_draft": false,

"context": "in a conversation about the design of the [Redis homepage](https://redis.io/)"

} |

| blogmark |

2026-05-11 23:58:55+00:00 |

{

"id": 9463,

"slug": "gitlab-act-2",

"link_url": "https://about.gitlab.com/blog/gitlab-act-2/",

"link_title": "GitLab Act 2",

"via_url": "https://news.ycombinator.com/item?id=48100500",

"via_title": "Hacker News",

"commentary": "There's a lot going on in this announcement from GitLab about the \"workforce reduction\" and \"structural and strategic decisions\" they are making with respect to the agentic era.\r\n\r\n- They're \"planning to reduce the number of countries by up to 30% where we have small teams\". One of the most interesting things about GitLab is that they have employees spread across a large number of countries - 18 are listed [in their public employee handbook](https://gitlab.com/gitlab-com/content-sites/handbook/-/blob/7ce61c4be88b04061f9ad9ab5eb64db91ce89d2a/content/handbook/people-group/employment-solutions.md) but this post says they are \"operating in nearly 60 countries\". That handbook used to document their payroll workflows for those countries too - they stopped publishing that in 2023 but [the last public version](https://gitlab.com/gitlab-com/content-sites/handbook/-/blob/82ad50d380b11751645eedc733f7d663cf908d1f/content/handbook/finance/payroll.md) (hooray for version control) remains a fascinating read. Since we don't know which of those 60 countries have small teams, we can't calculate how many countries that 30% applies to.\r\n- \"We're planning to flatten the organization, removing up to three layers of management in some functions so leaders are closer to the work.\" - this isn't the first announcement of this type I've seen that's trimming management. Coinbase [recently announced](https://twitter.com/brian_armstrong/status/2051616759145185723) a much more aggressive version of this: they were \"flattening our org structure to 5 layers max below\" and \"No pure managers: Every leader at Coinbase must also be a strong and active individual contributor. 
Managers should be like player-coaches\".\r\n- In terms of team structure: \"We're re-organizing R&D to create roughly 60 smaller, more empowered teams with end-to-end ownership, nearly doubling the number of independent teams.\" I've always loved the idea of individual teams that can ship features unblocked by other teams, and it makes sense to me that agentic engineering can increase the capability of such teams. The 37signals public employee handbook used to have a section on working [In self-sufficient, independent teams](https://github.com/basecamp/handbook/blob/9504494a6daa555837ee2cc2d9134ca43ab36301/how-we-work.md#in-self-sufficient-independent-teams) which perfectly captured this for me, I'm sad to see they [removed that detail](https://github.com/basecamp/handbook/commit/1db14f83913163f4e2e72130524269ae6ba3d757) in January 2024!\r\n- Tucked away towards the bottom: \"*We will be retiring CREDIT as our values framework*\" - that's the values framework [described on this page](https://gitlab.com/gitlab-com/content-sites/handbook/-/blob/7ce61c4be88b04061f9ad9ab5eb64db91ce89d2a/content/handbook/values/_index.md): \"Collaboration, Results for Customers, Efficiency, Diversity, Inclusion & Belonging, Iteration, and Transparency\". The new values are \"Speed with Quality, Ownership Mindset, Customer Outcomes\". The fact that \"Diversity\" is no longer in there is likely to attract a whole lot of attention, so it's worth noting that a sub-bullet under Customer Outcomes reads \"Interpersonal excellence: individuals who are good humans, embrace diversity, inclusion and belonging, assume good intent and treat everyone with respect\".\r\n\r\nHere's the part of their new strategy that most resonated with me:\r\n\r\n> **The agentic era multiplies demand for software**. Software has been the force multiplier behind nearly every business transformation of the last two decades. The constraint was the cost and time of producing and managing it. 
That constraint is collapsing. As the cost of producing software collapses, demand for it will expand. Last year, the developer platform market used to be measured in tens of dollars per user per month, this year it is hundreds/user/month and headed to thousands. *Not only is the value of software for builders increasing, but we believe there will be more software and builders than ever, and we will serve an increasing volume of both*.\r\n\r\nThat very much encapsulates my own optimistic, [Jevons-paradox](https://simonwillison.net/tags/jevons-paradox/)-inspired hope for how this will all work out.\r\n\r\nTheir opinion on this does need to be taken with a big grain of salt though. GitLab's stock price was ~$52 a year ago and is ~$26 today, and it's plausible that the drop corresponds to uncertainty about GitLab's continued growth as agentic engineering eats its way through their core market.\r\n\r\nIf your entire business depends on software engineering growing as a field and producing larger volumes of more lucrative seats, you have a strong incentive to believe that agents will have that effect!",

"created": "2026-05-11T23:58:55+00:00",

"metadata": {},

"search_document": "'/basecamp/handbook/blob/9504494a6daa555837ee2cc2d9134ca43ab36301/how-we-work.md#in-self-sufficient-independent-teams)':338C '/basecamp/handbook/commit/1db14f83913163f4e2e72130524269ae6ba3d757)':356C '/brian_armstrong/status/2051616759145185723)':209C '/gitlab-com/content-sites/handbook/-/blob/7ce61c4be88b04061f9ad9ab5eb64db91ce89d2a/content/handbook/people-group/employment-solutions.md)':94C '/gitlab-com/content-sites/handbook/-/blob/7ce61c4be88b04061f9ad9ab5eb64db91ce89d2a/content/handbook/values/_index.md):':385C '/gitlab-com/content-sites/handbook/-/blob/82ad50d380b11751645eedc733f7d663cf908d1f/content/handbook/finance/payroll.md)':131C '/tags/jevons-paradox/)-inspired':594C '18':84C '2':3A '2023':123C '2024':359C '26':630C '30':58C,161C '37signals':4B,319C '5':224C '52':624C '60':104C,148C,267C 'a':19C,79C,137C,210C,239C,326C,421C,432C,613C,625C,667C,679C 'about':28C,70C,642C 'about.gitlab.com':690C 'across':78C 'act':2A 'active':242C 'agentic':15B,44C,309C,474C,648C 'agentic-engineering':14B 'agents':10B,685C 'aggressive':213C 'ago':627C 'ai':6B 'all':600C 'also':237C 'always':287C 'an':577C 'and':32C,34C,228C,241C,302C,395C,450C,455C,501C,505C,547C,569C,573C,628C,632C,669C 'announced':206C 'announcement':25C,193C 'applies':162C 'are':38C,85C,100C,183C,400C,444C 'as':370C,512C,647C,666C 'assume':452C 'at':234C 'attention':425C 'attract':420C 'away':361C 'be':238C,247C,367C,532C,566C,610C 'been':482C 'behind':486C 'believe':563C,683C 'belonging':393C,451C 'below':227C 'big':614C 'both':581C 'bottom':364C 'builders':559C,570C 'bullet':435C 'business':489C,660C 'but':95C,124C,561C 'by':55C,299C 'calculate':156C 'can':154C,295C,311C 'capability':314C 'captured':341C 'careers':5B 'closer':184C 'coaches':251C 'coding':9B 'coding-agents':8B 'coinbase':204C,235C 'collaboration':386C 'collapses':518C 'collapsing':511C 'constraint':497C,509C 'continued':645C 'contributor':244C 'control':135C 'core':655C 'corresponds':639C 'cost':500C,514C 
'countries':54C,83C,105C,116C,149C,159C 'create':265C 'credit':369C 'customer':406C,437C 'customers':389C 'd':263C 'decades':495C 'decisions':36C 'demand':477C,519C 'depends':661C 'described':379C 'detail':353C 'developer':527C 'diversity':391C,411C,448C 'document':110C 'does':607C 'dollars':537C 'don':142C 'doubling':279C 'drop':638C 'eats':650C 'effect':689C 'efficiency':390C 'embrace':447C 'employee':90C,321C 'employees':76C 'empowered':270C 'encapsulates':585C 'end':274C,276C 'end-to-end':273C 'engineering':16B,310C,649C,664C 'entire':659C 'era':45C,475C 'ever':572C 'every':232C,488C 'everyone':457C 'excellence':441C 'expand':523C 'fact':409C 'fascinating':138C 'features':297C 'field':668C 'first':192C 'flatten':168C 'flattening':219C 'for':114C,133C,343C,388C,478C,520C,558C,596C 'force':484C 'framework':373C,378C 'from':26C 'functions':180C 'github.com':337C,355C 'github.com/basecamp/handbook/blob/9504494a6daa555837ee2cc2d9134ca43ab36301/how-we-work.md#in-self-sufficient-independent-teams)':336C 'github.com/basecamp/handbook/commit/1db14f83913163f4e2e72130524269ae6ba3d757)':354C 'gitlab':1A,7B,27C,71C,619C,643C 'gitlab.com':93C,130C,384C 'gitlab.com/gitlab-com/content-sites/handbook/-/blob/7ce61c4be88b04061f9ad9ab5eb64db91ce89d2a/content/handbook/people-group/employment-solutions.md)':92C 'gitlab.com/gitlab-com/content-sites/handbook/-/blob/7ce61c4be88b04061f9ad9ab5eb64db91ce89d2a/content/handbook/values/_index.md):':383C 'gitlab.com/gitlab-com/content-sites/handbook/-/blob/82ad50d380b11751645eedc733f7d663cf908d1f/content/handbook/finance/payroll.md)':129C 'going':21C 'good':445C,453C 'grain':615C 'growing':665C 'growth':646C 'hacker':691C 'handbook':91C,107C,322C 'has':481C 'have':61C,75C,150C,325C,678C,687C 'headed':548C 'here':460C 'hooray':132C 'hope':595C 'how':157C,597C 'humans':446C 'hundreds/user/month':546C 'i':197C,285C,345C 'idea':290C 'if':657C 'in':23C,87C,102C,122C,178C,252C,330C,357C,415C,534C 'incentive':681C 'inclusion':392C,449C 
'increase':312C 'increasing':560C,578C 'independent':283C,334C 'individual':243C,292C 'individuals':442C 'intent':454C 'interesting':68C 'interpersonal':440C 'is':72C,412C,417C,510C,545C,553C,629C 'isn':189C 'it':303C,427C,507C,521C,544C,633C 'iteration':394C 'its':651C 'january':358C 'jevons':12B,590C 'jevons-paradox':11B,589C 'know':144C 'large':80C 'larger':671C 'last':126C,493C,524C 'layers':175C,225C 'leader':233C 'leaders':182C 'like':248C 'likely':418C 'listed':86C 'longer':414C 'lot':20C,423C 'loved':288C 'lucrative':675C 'm':346C 'makes':304C 'making':39C 'management':177C,203C 'managers':231C,245C 'managing':506C 'many':158C 'market':529C,656C 'max':226C 'me':307C,344C,472C 'measured':533C 'mindset':405C 'month':541C 'more':212C,269C,567C,674C 'most':67C,469C 'much':211C,584C 'multiplier':485C 'multiplies':476C 'must':236C 'my':586C 'nearly':103C,278C,487C 'need':608C 'new':398C,466C 'news':692C 'no':229C,413C 'not':551C 'noting':430C 'number':52C,81C,281C 'of':53C,65C,82C,146C,176C,194C,215C,254C,282C,291C,315C,424C,464C,491C,503C,515C,536C,556C,580C,616C,673C 'on':22C,328C,380C,605C,662C 'one':64C 'only':552C 'operating':101C 'opinion':604C 'optimistic':588C 'org':221C 'organization':170C 'organizing':261C 'other':300C 'our':220C,371C 'out':602C 'outcomes':407C,438C 'own':587C 'ownership':277C,404C 'page':382C 'paradox':13B,591C 'part':463C 'payroll':112C 'per':538C,540C 'perfectly':340C 'planning':48C,166C 'platform':528C 'plausible':635C 'player':250C 'player-coaches':249C 'post':97C 'price':622C 'producing':504C,516C,670C 'public':89C,127C,320C 'publishing':120C 'pure':230C 'quality':403C 'r':262C 're':47C,165C,258C,260C 're-organizing':259C 'read':139C 'reads':439C 'recently':205C 'reduce':50C 'reduction':31C 'remains':136C 'removed':351C 'removing':171C 'resonated':470C 'respect':41C,459C 'results':387C 'retiring':368C 'roughly':266C 's':18C,201C,375C,428C,461C,620C,634C,644C 'sad':347C 'salt':617C 'says':98C 'seats':676C 'section':327C 'see':349C 
'seen':199C 'self':332C 'self-sufficient':331C 'sense':305C 'serve':576C 'ship':296C 'should':246C 'simonwillison.net':593C 'simonwillison.net/tags/jevons-paradox/)-inspired':592C 'since':140C 'small':62C,151C 'smaller':268C 'so':181C,426C 'software':479C,480C,517C,557C,568C,663C 'some':179C 'speed':401C 'spread':77C 'stock':621C 'stopped':119C 'strategic':35C 'strategy':467C 'strong':240C,680C 'structural':33C 'structure':222C,256C 'sub':434C 'sub-bullet':433C 'such':316C 'sufficient':333C 't':143C,155C,190C 'taken':611C 'team':255C 'teams':63C,152C,271C,284C,293C,301C,317C,335C 'tens':535C 'terms':253C 'than':571C 'that':73C,106C,121C,160C,200C,294C,308C,352C,374C,410C,431C,468C,508C,582C,636C,684C,688C 'the':29C,43C,51C,66C,125C,169C,186C,191C,280C,289C,313C,318C,363C,376C,397C,408C,462C,473C,483C,492C,496C,499C,513C,526C,554C,637C 'their':88C,111C,465C,603C,654C 'there':17C,416C,564C 'they':37C,46C,74C,99C,118C,217C,350C 'things':69C 'this':24C,96C,188C,195C,216C,342C,381C,542C,598C,606C 'those':115C,147C 'though':618C 'thousands':550C 'three':174C 'through':653C 'time':502C 'to':42C,49C,57C,109C,163C,167C,173C,185C,223C,264C,275C,306C,324C,348C,419C,531C,549C,609C,640C,682C 'today':631C 'too':117C 'towards':362C 'transformation':490C 'transparency':396C 'treat':456C 'trimming':202C 'tucked':360C 'twitter.com':208C 'twitter.com/brian_armstrong/status/2051616759145185723)':207C 'two':494C 'type':196C 'unblocked':298C 'uncertainty':641C 'under':436C 'up':56C,172C 'used':108C,323C,530C 'user':539C 'value':555C 'values':372C,377C,399C 've':198C,286C 'version':128C,134C,214C 'very':583C 'volume':579C 'volumes':672C 'was':498C,623C 'way':652C 'we':60C,141C,153C,164C,257C,365C,562C,574C 'were':218C 'where':59C 'which':145C,339C 'who':443C 'whole':422C 'will':366C,522C,565C,575C,599C,686C 'with':40C,272C,402C,458C,471C,612C 'work':187C,601C 'workflows':113C 'workforce':30C 'working':329C 'worth':429C 'year':525C,543C,626C 'you':677C 'your':658C",

"import_ref": null,

"card_image": null,

"series_id": null,

"use_markdown": true,

"is_draft": false,

"title": "Thoughts on GitLab's \"workforce reduction\" and \"structural and strategic decisions\""

} |

| quotation |

2026-05-11 19:48:32+00:00 |

{

"id": 2195,

"slug": "james-shore",

"quotation": "Your AI coding agent, the one you use to write code, needs to reduce your maintenance costs. Not by a little bit, either. You write code twice as quick now? Better hope you\u2019ve halved your maintenance costs. Three times as productive? One third the maintenance costs. Otherwise, you\u2019re screwed. You\u2019re trading a temporary speed boost for permanent indenture. [...]\r\n\r\nThe math only works if the LLM *decreases* your maintenance costs, and by exactly the inverse of the rate it adds code. If you double your output and your cost of maintaining that output, two times two means you\u2019ve quadrupled your maintenance costs. If you double your output and hold your maintenance costs steady, two times one means you\u2019ve *still* doubled your maintenance costs.",

"source": "James Shore",

"source_url": "https://www.jamesshore.com/v2/blog/2026/you-need-ai-that-reduces-your-maintenance-costs",

"created": "2026-05-11T19:48:32+00:00",

"metadata": {},

"search_document": "'a':20A,55A 'adds':82A 'agent':4A 'agentic':141B 'agentic-engineering':140B 'agents':139B 'ai':2A,128B,131B,134B 'ai-assisted-programming':133B 'and':73A,89A,111A 'as':28A,41A 'assisted':135B 'better':31A 'bit':22A 'boost':58A 'by':19A,74A 'code':11A,26A,83A 'coding':3A,138B 'coding-agents':137B 'cost':91A 'costs':17A,38A,47A,72A,105A,115A,127A 'decreases':69A 'double':86A,108A 'doubled':124A 'either':23A 'engineering':142B 'exactly':75A 'for':59A 'generative':130B 'generative-ai':129B 'halved':35A 'hold':112A 'hope':32A 'if':66A,84A,106A 'indenture':61A 'inverse':77A 'it':81A 'james':143C 'little':21A 'llm':68A 'llms':132B 'maintaining':93A 'maintenance':16A,37A,46A,71A,104A,114A,126A 'math':63A 'means':99A,120A 'needs':12A 'not':18A 'now':30A 'of':78A,92A 'one':6A,43A,119A 'only':64A 'otherwise':48A 'output':88A,95A,110A 'permanent':60A 'productive':42A 'programming':136B 'quadrupled':102A 'quick':29A 'rate':80A 're':50A,53A 'reduce':14A 'screwed':51A 'shore':144C 'speed':57A 'steady':116A 'still':123A 'temporary':56A 'that':94A 'the':5A,45A,62A,67A,76A,79A 'third':44A 'three':39A 'times':40A,97A,118A 'to':9A,13A 'trading':54A 'twice':27A 'two':96A,98A,117A 'use':8A 've':34A,101A,122A 'works':65A 'write':10A,25A 'you':7A,24A,33A,49A,52A,85A,100A,107A,121A 'your':1A,15A,36A,70A,87A,90A,103A,109A,113A,125A",

"import_ref": null,

"card_image": null,

"series_id": null,

"is_draft": false,

"context": "You Need AI That Reduces Maintenance Costs"

} |

| blogmark |

2026-05-11 19:21:27+00:00 |

{

"id": 9462,

"slug": "zombie-internet",

"link_url": "https://www.404media.co/your-ai-use-is-breaking-my-brain/",

"link_title": "Your AI Use Is Breaking My Brain",

"via_url": "https://bsky.app/profile/jasonkoebler.bsky.social/post/3mllgvidacs2n",

"via_title": "@jasonkoebler.bsky.social",

"commentary": "Excellent, angry piece by Jason Koebler on how AI writing online is becoming impossible to avoid, filtering it is mentally exhausting and it's even starting to distort regular human writing styles.\r\n\r\nI particularly liked his use of the term \"Zombie Internet\" to define a different, more insidious alternative to the \"Dead Internet\" (which is just bots talking to each other):\r\n\r\n> I called it the Zombie Internet because the truth is that large parts of the internet are not just bots talking to bots or bots talking to people. It\u2019s people talking to bots, people talking to people, people creating \u201cAI agents\u201d and then instructing them to interact with people. It\u2019s people using AI talking to people who are not using AI, and it\u2019s people using AI talking to other people who are using AI. It\u2019s influencer hustlebros who are teaching each other how to make AI influencers and have spun up automated YouTube channels and blogs and social media accounts that are spamming the internet for the sole purpose of making money. It is whatever the fuck \u201cMoltbook\u201d is and whatever the fuck X and LinkedIn have become. It\u2019s AI summaries of real books being sold as the book itself and inspirational Reddit posts and comment threads in which people give heartfelt advice to some account that\u2019s actually being run by a marketing firm. [...]",

"created": "2026-05-11T19:21:27+00:00",

"metadata": {},

"search_document": "'a':65C,249C 'account':242C 'accounts':185C 'actually':245C 'advice':239C 'agents':123C 'ai':2A,9B,12B,19B,29C,122C,136C,144C,150C,158C,171C,216C 'ai-ethics':18B 'alternative':69C 'and':42C,124C,145C,173C,180C,182C,205C,210C,227C,231C 'angry':22C 'are':98C,141C,156C,164C,187C 'as':223C 'automated':177C 'avoid':36C 'because':88C 'become':213C 'becoming':33C 'being':221C,246C 'blogs':181C 'book':225C 'books':220C 'bots':77C,101C,104C,106C,115C 'brain':7A 'breaking':5A 'by':24C,248C 'called':83C 'channels':179C 'comment':232C 'creating':121C 'dead':72C 'define':64C 'definitions':8B 'different':66C 'distort':48C 'each':80C,166C 'ethics':20B 'even':45C 'excellent':21C 'exhausting':41C 'filtering':37C 'firm':251C 'for':191C 'fuck':202C,208C 'generative':11B 'generative-ai':10B 'give':237C 'have':174C,212C 'heartfelt':238C 'his':56C 'how':28C,168C 'human':50C 'hustlebros':162C 'i':53C,82C 'impossible':34C 'in':234C 'influencer':161C 'influencers':172C 'insidious':68C 'inspirational':228C 'instructing':126C 'interact':129C 'internet':62C,73C,87C,97C,190C 'is':4A,32C,39C,75C,91C,199C,204C 'it':38C,43C,84C,110C,132C,146C,159C,198C,214C 'itself':226C 'jason':16B,25C 'jason-koebler':15B 'jasonkoebler.bsky.social':253C 'just':76C,100C 'koebler':17B,26C 'large':93C 'liked':55C 'linkedin':211C 'llms':13B 'make':170C 'making':196C 'marketing':250C 'media':184C 'mentally':40C 'moltbook':203C 'money':197C 'more':67C 'my':6A 'not':99C,142C 'of':58C,95C,195C,218C 'on':27C 'online':31C 'or':105C 'other':81C,153C,167C 'particularly':54C 'parts':94C 'people':109C,112C,116C,119C,120C,131C,134C,139C,148C,154C,236C 'piece':23C 'posts':230C 'purpose':194C 'real':219C 'reddit':229C 'regular':49C 'run':247C 's':44C,111C,133C,147C,160C,215C,244C 'slop':14B 'social':183C 'sold':222C 'sole':193C 'some':241C 'spamming':188C 'spun':175C 'starting':46C 'styles':52C 'summaries':217C 'talking':78C,102C,107C,113C,117C,137C,151C 'teaching':165C 'term':60C 'that':92C,186C,243C 
'the':59C,71C,85C,89C,96C,189C,192C,201C,207C,224C 'them':127C 'then':125C 'threads':233C 'to':35C,47C,63C,70C,79C,103C,108C,114C,118C,128C,138C,152C,169C,240C 'truth':90C 'up':176C 'use':3A,57C 'using':135C,143C,149C,157C 'whatever':200C,206C 'which':74C,235C 'who':140C,155C,163C 'with':130C 'writing':30C,51C 'www.404media.co':252C 'x':209C 'your':1A 'youtube':178C 'zombie':61C,86C",

"import_ref": null,

"card_image": null,

"series_id": null,

"use_markdown": true,

"is_draft": false,

"title": ""

} |

| blogmark |

2026-05-11 15:46:36+00:00 |

{

"id": 9461,

"slug": "learning-on-the-shop-floor",

"link_url": "https://twitter.com/tobi/status/2053121182044451016",

"link_title": "Learning on the Shop floor",

"via_url": null,

"via_title": null,

"commentary": "Tobias L\u00fctke describes Shopify's internal coding agent tool, River, which operates entirely in public on their Slack:\r\n\r\n> River does not respond to direct messages. She politely declines and suggests to create a public channel for you and her to start working in. I myself work with river in `#tobi_river` channel and many followed this pattern. Every conversation is therefore searchable. Anyone at Shopify can jump in. In my own channel, there are over 100 people who, react to threads, add color and add context, pick up the torch, help with the reviews, remind me how rusty I am, and importantly, learn from watching. [...]\r\n>\r\n> As so often with German, there is a word for the kind of environment: *Lehrwerkstatt*. Literally: **A teaching workshop**. The whole shop floor is the classroom. You learn by being near the work. Being a constant learner is one of the core values of the firm.\r\n>\r\n> Shopify wants to be a Lehrwerkstatt at scale and River has now gotten us closer to this ideal than ever. It\u2019s *osmosis learning*, because it does not require a curriculum, a training plan, or a manager. It just requires everyone's work to be visible to the maximum extent possible. Everyone learns from each other.\r\n\r\nI'm reminded of how Midjourney spent its first few years with the primary interface being public Discord channels, forcing users to share their prompts and learn from each other's experiments. I continue to believe that the early success of Midjourney was tied to this mechanism, helping to compensate for how weird and finicky text-to-image prompting is.",

"created": "2026-05-11T15:46:36+00:00",

"metadata": {},

"search_document": "'100':94C 'a':51C,131C,140C,158C,174C,199C,201C,205C 'add':100C,103C 'agent':26C 'agents':15B 'ai':6B,10B 'am':118C 'and':47C,56C,71C,102C,119C,178C,251C,279C 'anyone':81C 'are':92C 'as':124C 'at':82C,176C 'be':173C,214C 'because':194C 'being':153C,157C,241C 'believe':261C 'by':152C 'can':84C 'channel':53C,70C,90C 'channels':244C 'classroom':149C 'closer':184C 'coding':14B,25C 'coding-agents':13B 'color':101C 'compensate':275C 'constant':159C 'context':104C 'continue':259C 'conversation':77C 'core':165C 'create':50C 'curriculum':200C 'declines':46C 'describes':21C 'direct':42C 'discord':243C 'does':38C,196C 'each':224C,254C 'early':264C 'entirely':31C 'environment':137C 'ever':189C 'every':76C 'everyone':210C,221C 'experiments':257C 'extent':219C 'few':235C 'finicky':280C 'firm':169C 'first':234C 'floor':5A,146C 'followed':73C 'for':54C,133C,276C 'forcing':245C 'from':122C,223C,253C 'generative':9B 'generative-ai':8B 'german':128C 'gotten':182C 'has':180C 'help':109C 'helping':273C 'her':57C 'how':115C,230C,277C 'i':62C,117C,226C,258C 'ideal':187C 'image':284C 'importantly':120C 'in':32C,61C,67C,86C,87C 'interface':240C 'internal':24C 'is':78C,130C,147C,161C,286C 'it':190C,195C,207C 'its':233C 'jump':85C 'just':208C 'kind':135C 'learn':121C,151C,252C 'learner':160C 'learning':1A,193C 'learns':222C 'lehrwerkstatt':138C,175C 'literally':139C 'llms':11B 'lutke':18B 'l\u00fctke':20C 'm':227C 'manager':206C 'many':72C 'maximum':218C 'me':114C 'mechanism':272C 'messages':43C 'midjourney':12B,231C,267C 'my':88C 'myself':63C 'near':154C 'not':39C,197C 'now':181C 'of':136C,163C,167C,229C,266C 'often':126C 'on':2A,34C 'one':162C 'operates':30C 'or':204C 'osmosis':192C 'other':225C,255C 'over':93C 'own':89C 'pattern':75C 'people':95C 'pick':105C 'plan':203C 'politely':45C 'possible':220C 'primary':239C 'prompting':285C 'prompts':250C 'public':33C,52C,242C 'react':97C 'remind':113C 'reminded':228C 'require':198C 'requires':209C 'respond':40C 'reviews':112C 
'river':28C,37C,66C,69C,179C 'rusty':116C 's':23C,191C,211C,256C 'scale':177C 'searchable':80C 'share':248C 'she':44C 'shop':4A,145C 'shopify':22C,83C,170C 'slack':7B,36C 'so':125C 'spent':232C 'start':59C 'success':265C 'suggests':48C 'teaching':141C 'text':282C 'text-to-image':281C 'than':188C 'that':262C 'the':3A,107C,111C,134C,143C,148C,155C,164C,168C,217C,238C,263C 'their':35C,249C 'there':91C,129C 'therefore':79C 'this':74C,186C,271C 'threads':99C 'tied':269C 'to':41C,49C,58C,98C,172C,185C,213C,216C,247C,260C,270C,274C,283C 'tobi':68C 'tobias':17B,19C 'tobias-lutke':16B 'tool':27C 'torch':108C 'training':202C 'twitter.com':287C 'up':106C 'us':183C 'users':246C 'values':166C 'visible':215C 'wants':171C 'was':268C 'watching':123C 'weird':278C 'which':29C 'who':96C 'whole':144C 'with':65C,110C,127C,237C 'word':132C 'work':64C,156C,212C 'working':60C 'workshop':142C 'years':236C 'you':55C,150C",

"import_ref": null,

"card_image": null,

"series_id": null,

"use_markdown": true,

"is_draft": false,

"title": ""

} |

| quotation |

2026-05-10 23:58:49+00:00 |

{

"id": 2194,

"slug": "new-york-times-editors-note",

"quotation": "*This article was updated after The Times learned that a remark attributed to Pierre Poilievre, the Conservative leader, was in fact an A.I.-generated summary of his views about Canadian politics that A.I. rendered as a quotation. The reporter should have checked the accuracy of what the A.I. tool returned. The article now accurately quotes from a speech delivered by Mr. Poilievre in April. [...] He did not refer to politicians who changed allegiances as turncoats in that speech.*",

"source": "New York Times Editors\u2019 Note",

"source_url": "https://www.nytimes.com/2026/04/14/world/canada/election-carney-liberal-party.html",

"created": "2026-05-10T23:58:49+00:00",

"metadata": {},

"search_document": "'a':10A,36A,57A 'a.i':23A,33A,48A 'about':29A 'accuracy':44A 'accurately':54A 'after':5A 'ai':84B,87B,90B 'ai-ethics':89B 'allegiances':73A 'an':22A 'april':64A 'article':2A,52A 'as':35A,74A 'attributed':12A 'by':60A 'canadian':30A 'changed':72A 'checked':42A 'conservative':17A 'delivered':59A 'did':66A 'editors':96C 'ethics':91B 'fact':21A 'from':56A 'generated':24A 'generative':86B 'generative-ai':85B 'hallucinations':92B 'have':41A 'he':65A 'his':27A 'in':20A,63A,76A 'journalism':79B 'leader':18A 'learned':8A 'llms':88B 'mr':61A 'new':81B,93C 'new-york-times':80B 'not':67A 'note':97C 'now':53A 'of':26A,45A 'pierre':14A 'poilievre':15A,62A 'politicians':70A 'politics':31A 'quotation':37A 'quotes':55A 'refer':68A 'remark':11A 'rendered':34A 'reporter':39A 'returned':50A 'should':40A 'speech':58A,78A 'summary':25A 'that':9A,32A,77A 'the':6A,16A,38A,43A,47A,51A 'this':1A 'times':7A,83B,95C 'to':13A,69A 'tool':49A 'turncoats':75A 'updated':4A 'views':28A 'was':3A,19A 'what':46A 'who':71A 'york':82B,94C",

"import_ref": null,

"card_image": null,

"series_id": null,

"is_draft": false,

"context": null

} |

| blogmark |

2026-05-10 15:36:19+00:00 |

{

"id": 9460,

"slug": "mythical-man-month",

"link_url": "https://martinfowler.com/bliki/MythicalManMonth.html",

"link_title": "Mythical Man Month",

"via_url": null,

"via_title": null,

"commentary": "Martin Fowler highlights this key idea from The Mythical Man-Month (Fred Brooks, 1975, still impressively relevant 50 years later):\r\n\r\n> I will contend that conceptual integrity is the most important consideration in system design. It is better to have a system omit certain anomalous features and improvements, but to reflect one set of design ideas, than to have one that contains many good but independent and uncoordinated ideas.\r\n\r\n**Conceptual integrity** is exactly the missing piece I've been trying to nail down in understanding why being able to spit out new features so quickly offers new challenges when working with coding agents.",

"created": "2026-05-10T15:36:19+00:00",

"metadata": {},

"search_document": "'1975':21C '50':25C 'a':47C 'able':94C 'agents':109C 'and':53C,73C 'anomalous':51C 'been':85C 'being':93C 'better':44C 'brooks':20C 'but':55C,71C 'certain':50C 'challenges':104C 'coding':108C 'conceptual':5B,32C,76C 'conceptual-integrity':4B 'consideration':38C 'contains':68C 'contend':30C 'design':41C,61C 'down':89C 'exactly':79C 'features':52C,99C 'fowler':8C 'fred':19C 'from':13C 'good':70C 'have':46C,65C 'highlights':9C 'i':28C,83C 'idea':12C 'ideas':62C,75C 'important':37C 'impressively':23C 'improvements':54C 'in':39C,90C 'independent':72C 'integrity':6B,33C,77C 'is':34C,43C,78C 'it':42C 'key':11C 'later':27C 'man':2A,17C 'man-month':16C 'many':69C 'martin':7C 'martinfowler.com':110C 'missing':81C 'month':3A,18C 'most':36C 'mythical':1A,15C 'nail':88C 'new':98C,103C 'of':60C 'offers':102C 'omit':49C 'one':58C,66C 'out':97C 'piece':82C 'quickly':101C 'reflect':57C 'relevant':24C 'set':59C 'so':100C 'spit':96C 'still':22C 'system':40C,48C 'than':63C 'that':31C,67C 'the':14C,35C,80C 'this':10C 'to':45C,56C,64C,87C,95C 'trying':86C 'uncoordinated':74C 'understanding':91C 've':84C 'when':105C 'why':92C 'will':29C 'with':107C 'working':106C 'years':26C",

"import_ref": null,

"card_image": null,

"series_id": null,

"use_markdown": true,

"is_draft": true,

"title": ""

} |

| blogmark |

2026-05-10 15:31:32+00:00 |

{

"id": 9459,

"slug": "conceptual-integrity",

"link_url": "https://martinfowler.com/bliki/MythicalManMonth.html",

"link_title": "Mythical Man Month",

"via_url": null,

"via_title": null,

"commentary": "Martin Fowler highlights this key idea from The Mythical Man-Month (Fred Brooks, 1975, still impressively relevant 50 years later):\r\n\r\n> I will contend that conceptual integrity is the most important consideration in system design. It is better to have a system omit certain anomalous features and improvements, but to reflect one set of design ideas, than to have one that contains many good but independent and uncoordinated ideas.\r\n\r\n**Conceptual integrity** is exactly the missing piece I've been trying to nail down in understanding why being able to spit out new features so quickly offers new challenges when working with coding agents.",

"created": "2026-05-10T15:31:32+00:00",

"metadata": {},

"search_document": "'1975':32C '50':36C 'a':58C 'able':105C 'agentic':16B 'agentic-engineering':15B 'agents':11B,120C 'ai':6B 'ai-assisted-programming':5B 'and':64C,84C 'anomalous':62C 'assisted':7B 'been':96C 'being':104C 'better':55C 'brooks':31C 'but':66C,82C 'certain':61C 'challenges':115C 'coding':10B,119C 'coding-agents':9B 'cognitive':13B 'cognitive-debt':12B 'conceptual':43C,87C 'consideration':49C 'contains':79C 'contend':41C 'debt':14B 'definitions':4B 'design':52C,72C 'down':100C 'engineering':17B 'exactly':90C 'features':63C,110C 'fowler':19C 'fred':30C 'from':24C 'good':81C 'have':57C,76C 'highlights':20C 'i':39C,94C 'idea':23C 'ideas':73C,86C 'important':48C 'impressively':34C 'improvements':65C 'in':50C,101C 'independent':83C 'integrity':44C,88C 'is':45C,54C,89C 'it':53C 'key':22C 'later':38C 'man':2A,28C 'man-month':27C 'many':80C 'martin':18C 'martinfowler.com':121C 'missing':92C 'month':3A,29C 'most':47C 'mythical':1A,26C 'nail':99C 'new':109C,114C 'of':71C 'offers':113C 'omit':60C 'one':69C,77C 'out':108C 'piece':93C 'programming':8B 'quickly':112C 'reflect':68C 'relevant':35C 'set':70C 'so':111C 'spit':107C 'still':33C 'system':51C,59C 'than':74C 'that':42C,78C 'the':25C,46C,91C 'this':21C 'to':56C,67C,75C,98C,106C 'trying':97C 'uncoordinated':85C 'understanding':102C 've':95C 'when':116C 'why':103C 'will':40C 'with':118C 'working':117C 'years':37C",

"import_ref": null,

"card_image": null,

"series_id": null,

"use_markdown": true,

"is_draft": true,

"title": ""

} |

| quotation |

2026-05-10 14:59:17+00:00 |

{

"id": 2193,

"slug": "andrew-quinn",

"quotation": "One could say in the first quarter-century of my life, that while I was always fascinated by programming, I could never overcome the guilt of not really knowing whether the tool I am building right now isn\u2019t already superceded by some much better implementation someone else has already written 30 or 40 years ago; I could write a TSV-aware search and replace, or I could find out about\u00a0`awk`\u00a0and solve that entire class of problems in one fell swoop, for example. My central conceit is that\u00a0*this is a trap*. You\u00a0*need*\u00a0to reinvent a couple of wheels to get to the edge of what we know about wheel-making, not a thousand wheels, and not zero; probably four or five is sufficient in most domains, maybe closer to twenty or thirty in the most epistemically rigorous and developed fields like mathematics or computer science. Each wheel you reinvent, and every directed question you ask along the way, will propel you faster to the true frontier than that same amount of time spend in idle study, or even five times that amount.",

"source": "Andrew Quinn",

"source_url": "https://til.andrew-quinn.me/posts/replacing-a-3-gb-sqlite-database-with-a-7-mb-fst-finite-state-trandsucer-binary/#fn:5",

"created": "2026-05-10T14:59:17+00:00",

"metadata": {},

"search_document": "'30':53A '40':55A 'a':61A,95A,101A,119A 'about':73A,114A 'ago':57A 'along':163A 'already':41A,51A 'always':17A 'am':35A 'amount':177A,189A 'and':66A,75A,122A,145A,157A 'andrew':192C 'ask':162A 'aware':64A 'awk':74A 'better':46A 'building':36A 'by':19A,43A 'careers':191B 'central':89A 'century':9A 'class':79A 'closer':135A 'computer':151A 'conceit':90A 'could':2A,22A,59A,70A 'couple':102A 'developed':146A 'directed':159A 'domains':133A 'each':153A 'edge':109A 'else':49A 'entire':78A 'epistemically':143A 'even':185A 'every':158A 'example':87A 'fascinated':18A 'faster':169A 'fell':84A 'fields':147A 'find':71A 'first':6A 'five':128A,186A 'for':86A 'four':126A 'frontier':173A 'get':106A 'guilt':26A 'has':50A 'i':15A,21A,34A,58A,69A 'idle':182A 'implementation':47A 'in':4A,82A,131A,140A,181A 'is':91A,94A,129A 'isn':39A 'know':113A 'knowing':30A 'life':12A 'like':148A 'making':117A 'mathematics':149A 'maybe':134A 'most':132A,142A 'much':45A 'my':11A,88A 'need':98A 'never':23A 'not':28A,118A,123A 'now':38A 'of':10A,27A,80A,103A,110A,178A 'one':1A,83A 'or':54A,68A,127A,138A,150A,184A 'out':72A 'overcome':24A 'probably':125A 'problems':81A 'programming':20A 'propel':167A 'quarter':8A 'quarter-century':7A 'question':160A 'quinn':193C 'really':29A 'reinvent':100A,156A 'replace':67A 'right':37A 'rigorous':144A 'same':176A 'say':3A 'science':152A 'search':65A 'solve':76A 'some':44A 'someone':48A 'spend':180A 'sqlite':190B 'study':183A 'sufficient':130A 'superceded':42A 'swoop':85A 't':40A 'than':174A 'that':13A,77A,92A,175A,188A 'the':5A,25A,32A,108A,141A,164A,171A 'thirty':139A 'this':93A 'thousand':120A 'time':179A 'times':187A 'to':99A,105A,107A,136A,170A 'tool':33A 'trap':96A 'true':172A 'tsv':63A 'tsv-aware':62A 'twenty':137A 'was':16A 'way':165A 'we':112A 'what':111A 'wheel':116A,154A 'wheel-making':115A 'wheels':104A,121A 'whether':31A 'while':14A 'will':166A 'write':60A 'written':52A 'years':56A 'you':97A,155A,161A,168A 'zero':124A",

"import_ref": null,

"card_image": null,

"series_id": null,

"is_draft": false,

"context": "footnote on Replacing a 3 GB SQLite database with a 10 MB FST (finite state transducer) binary"

} |

| quotation |

2026-05-09 01:03:58+00:00 |

{

"id": 2192,

"slug": "luke-curley",

"quotation": "WebRTC is designed to **degrade and drop my prompt** during poor network conditions.\r\n\r\nwtf my dude\r\n\r\nWebRTC aggressively drops audio packets to keep latency low. If you\u2019ve ever heard distorted audio on a conference call, that\u2019s WebRTC baybee. The idea is that conference calls depend on rapid back-and-forth, so pausing to wait for audio is unacceptable.\r\n\r\n\u2026but as a user, I would much rather wait an extra 200ms for my slow/expensive prompt to be accurate. After all, I\u2019m paying good money to boil the ocean, and a garbage prompt means a garbage response. It\u2019s not like LLMs are particularly responsive anyway.\r\n\r\n**But I\u2019m not allowed to wait**. It\u2019s *impossible* to even retransmit a WebRTC audio packet within a browser; we tried at Discord. The *implementation* is hard-coded for real-time latency **or else**.",

"source": "Luke Curley",

"source_url": "https://moq.dev/blog/webrtc-is-the-problem/",

"created": "2026-05-09T01:03:58+00:00",

"metadata": {},

"search_document": "'200ms':73A 'a':34A,64A,93A,97A,122A,127A 'accurate':80A 'after':81A 'aggressively':18A 'all':82A 'allowed':113A 'an':71A 'and':6A,52A,92A 'anyway':108A 'are':105A 'as':63A 'at':131A 'audio':20A,32A,59A,124A 'back':51A 'back-and-forth':50A 'baybee':40A 'be':79A 'boil':89A 'browser':128A 'but':62A,109A 'call':36A 'calls':46A 'coded':138A 'conditions':13A 'conference':35A,45A 'curley':149C 'degrade':5A 'depend':47A 'designed':3A 'discord':132A 'distorted':31A 'drop':7A 'drops':19A 'dude':16A 'during':10A 'else':145A 'even':120A 'ever':29A 'extra':72A 'for':58A,74A,139A 'forth':53A 'garbage':94A,98A 'good':86A 'hard':137A 'hard-coded':136A 'heard':30A 'i':66A,83A,110A 'idea':42A 'if':26A 'implementation':134A 'impossible':118A 'is':2A,43A,60A,135A 'it':100A,116A 'keep':23A 'latency':24A,143A 'like':103A 'llms':104A 'low':25A 'luke':148C 'm':84A,111A 'means':96A 'money':87A 'much':68A 'my':8A,15A,75A 'network':12A 'not':102A,112A 'ocean':91A 'on':33A,48A 'openai':146B 'or':144A 'packet':125A 'packets':21A 'particularly':106A 'pausing':55A 'paying':85A 'poor':11A 'prompt':9A,77A,95A 'rapid':49A 'rather':69A 'real':141A 'real-time':140A 'response':99A 'responsive':107A 'retransmit':121A 's':38A,101A,117A 'slow/expensive':76A 'so':54A 'that':37A,44A 'the':41A,90A,133A 'time':142A 'to':4A,22A,56A,78A,88A,114A,119A 'tried':130A 'unacceptable':61A 'user':65A 've':28A 'wait':57A,70A,115A 'we':129A 'webrtc':1A,17A,39A,123A,147B 'within':126A 'would':67A 'wtf':14A 'you':27A",

"import_ref": null,

"card_image": null,

"series_id": null,

"is_draft": false,

"context": "OpenAI\u2019s WebRTC Problem, in response to [How OpenAI delivers low-latency voice AI at scale](https://openai.com/index/delivering-low-latency-voice-ai-at-scale/)"

} |

| blogmark |

2026-05-08 21:00:11+00:00 |

{

"id": 9458,

"slug": "unreasonable-effectiveness-of-html",

"link_url": "https://twitter.com/trq212/status/2052809885763747935",

"link_title": "Using Claude Code: The Unreasonable Effectiveness of HTML",

"via_url": null,

"via_title": null,

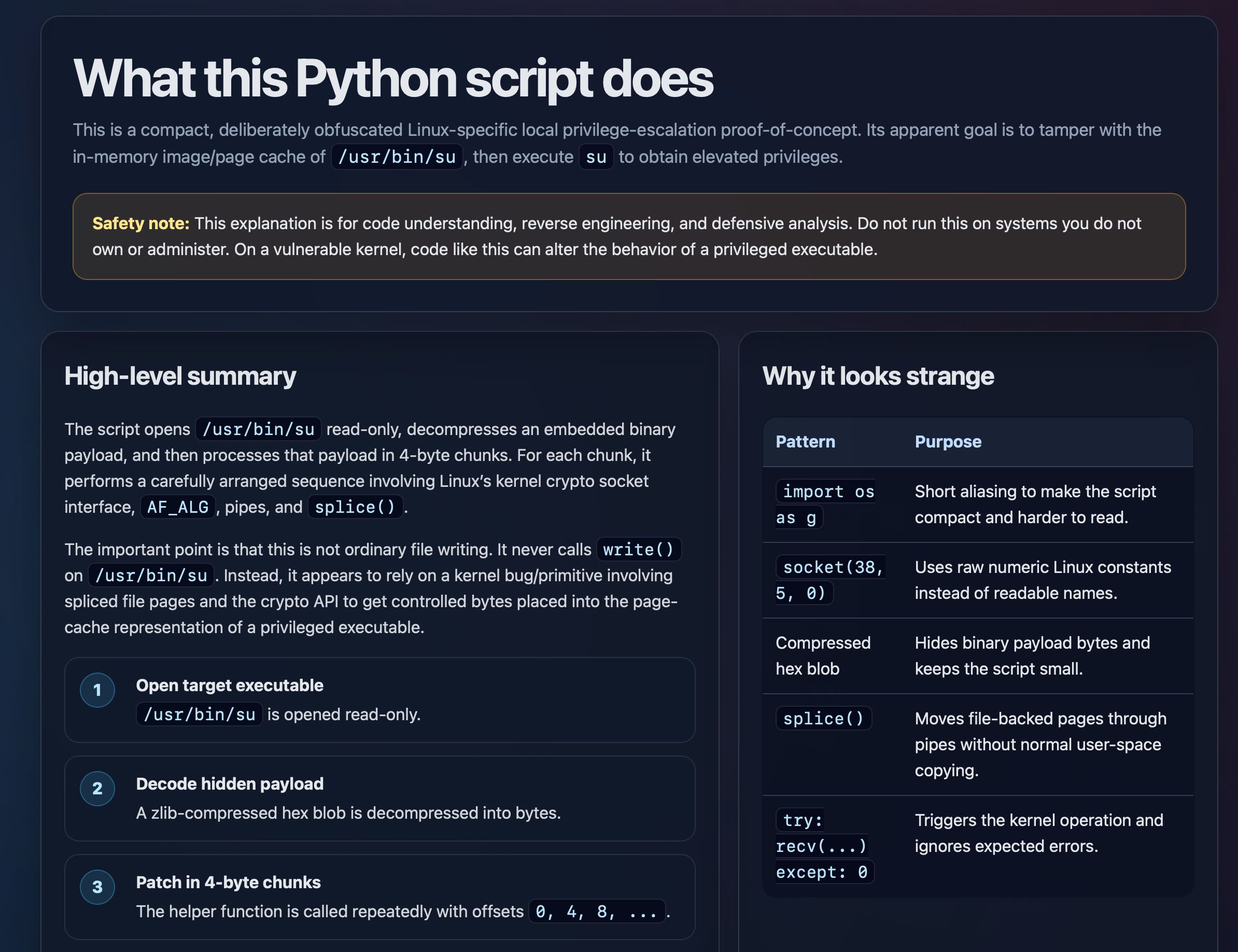

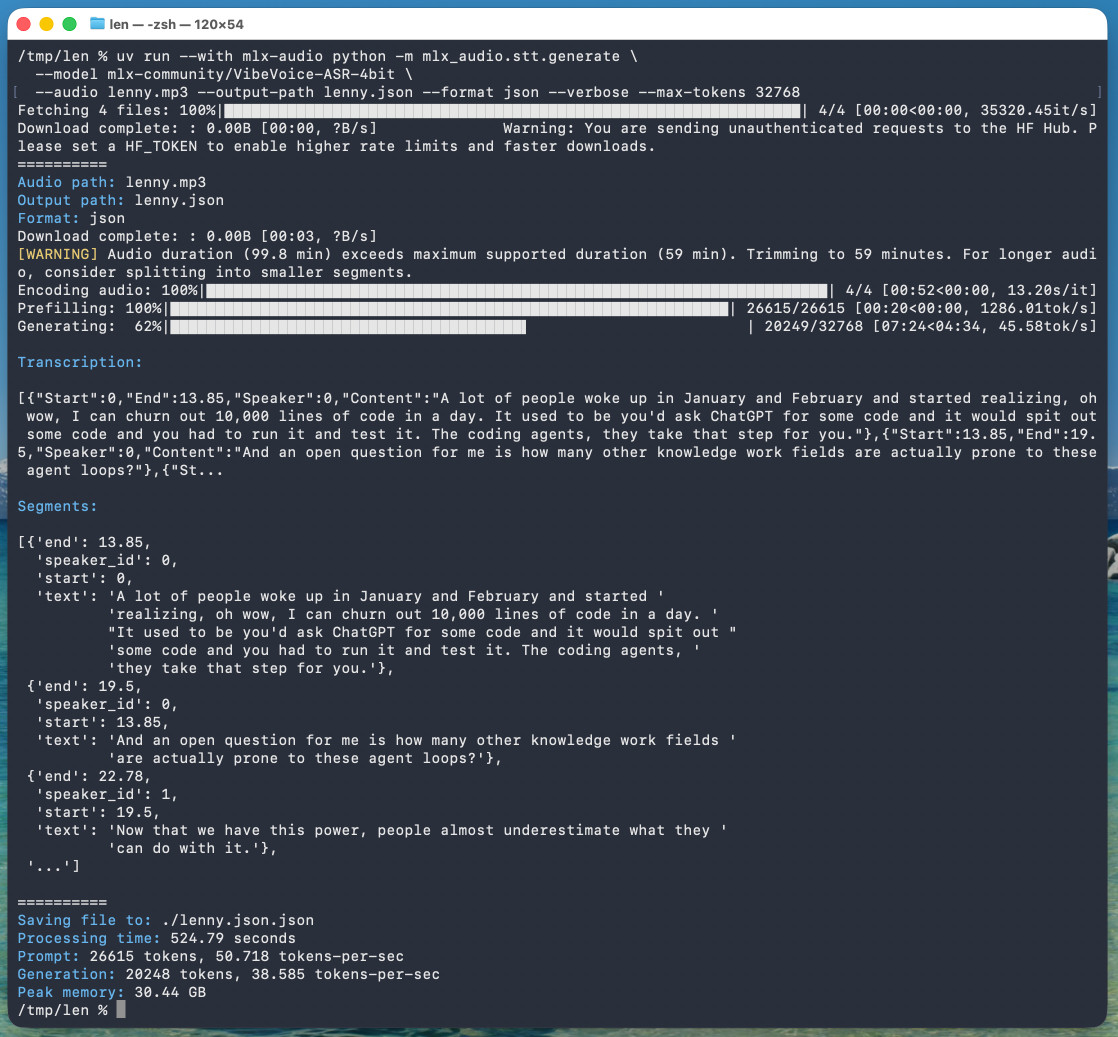

"commentary": "Thought-provoking piece by Thariq Shihipar (on the Claude Code team at Anthropic) advocating for HTML over Markdown as an output format to request from Claude.\r\n\r\nThe article is crammed with interesting examples (collected on [this site](https://thariqs.github.io/html-effectiveness/)) and prompt suggestions like this one:\r\n\r\n> `Help me review this PR by creating an HTML artifact that describes it. I'm not very familiar with the streaming/backpressure logic so focus on that. Render the actual diff with inline margin annotations, color-code findings by severity and whatever else might be needed to convey the concept well.`\r\n\r\nI've been defaulting to asking for most things in Markdown since the GPT-4 days, when the 8,192 token limit meant that Markdown's token-efficiency over HTML was extremely worthwhile.\r\n\r\nThariq's piece here has caused me to reconsider that, especially for output. Asking Claude for an explanation in HTML means it can drop in SVG diagrams, interactive widgets, in-page navigation and all sorts of other neat ways of making the information more pleasant to navigate.\r\n\r\nI wrote about [Useful patterns for building HTML tools](https://simonwillison.net/2025/Dec/10/html-tools/) last December, but that was focused very much on interactive utilities like the ones on my [tools.simonwillison.net](https://tools.simonwillison.net/) site. I'm excited to start experimenting more with rich HTML explanations in response to ad-hoc prompts.\r\n\r\n<h4 id=\"trying-this-out\">Trying this out on copy.fail</h4>\r\n\r\n[copy.fail](https://copy.fail/) describes a recently discovered Linux security exploit, including a proof of concept distributed as obfuscated Python.\r\n\r\nI tried having GPT-5.5 create an HTML explanation of the exploit like this:\r\n\r\n> `curl https://copy.fail/exp | llm -m gpt-5.5 -s 'Explain this code in detail. Reformat it, expand out any confusing bits and go deep into what it does and how it works. 
Output HTML, neatly styled and using capabilities of HTML and CSS and JavaScript to make the explanation rich and interactive and as clear as possible'`\r\n\r\nHere's [the resulting HTML page](https://gisthost.github.io/?ae53e3461ffdbfd0826156aacf025c7e). It's pretty good, though I should have emphasized explaining the exploit over the Python harness around it.\r\n\r\n",

"created": "2026-05-08T21:00:11+00:00",

"metadata": {},

"search_document": "'-4':136C '-5.5':284C,301C '/)':235C,263C '/2025/dec/10/html-tools/)':215C '/?ae53e3461ffdbfd0826156aacf025c7e).':359C '/exp':297C '/html-effectiveness/))':64C '/static/2026/python-script-explainer.jpg)':704C '/usr/bin/su':425C,489C,544C,584C '0':618C,654C,693C '1':580C '192':141C '2':590C '3':604C '38':652C '4':504C,607C,619C '5':653C '8':140C,620C 'a':265C,272C,380C,396C,433C,465C,476C,512C,551C,574C,594C,629C 'about':206C 'actual':99C 'ad':252C 'ad-hoc':251C 'administer':463C 'advocating':38C 'af':523C 'ai':12B,18B 'alg':524C 'aliasing':641C 'all':190C 'alter':472C 'an':44C,78C,172C,286C,494C 'analysis':451C 'and':65C,111C,189C,315C,322C,330C,335C,337C,344C,346C,449C,498C,526C,558C,633C,647C,671C,698C 'annotations':104C 'anthropic':37C 'any':312C 'api':561C 'apparent':412C 'appears':547C 'around':376C 'arranged':514C 'article':52C 'artifact':80C 'as':43C,277C,347C,349C,638C 'asking':127C,169C 'at':36C 'backed':680C 'be':115C 'been':124C 'behavior':474C 'binary':496C,668C 'bits':314C 'blob':599C,666C 'body':392C 'bordered':436C 'bug/primitive':553C 'building':210C 'but':218C 'by':28C,76C,109C 'byte':505C,608C 'bytes':565C,603C,670C 'cache':423C,571C 'called':614C 'callout':437C 'calls':541C 'can':178C,471C 'capabilities':332C 'carefully':513C 'caused':161C 'chunk':509C 'chunks':506C,609C 'claude':2A,22B,33C,50C,170C 'claude-code':21B 'clear':348C 'code':3A,23B,34C,107C,305C,445C,468C 'collected':58C 'color':106C 'color-code':105C 'column':480C,622C 'columns':635C 'compact':397C,646C 'compressed':597C,664C 'concept':120C,275C,410C 'confusing':313C 'constants':659C 'contains':628C 'controlled':564C 'convey':118C 'copy.fail':259C,260C,262C,296C 'copy.fail/)':261C 'copy.fail/exp':295C 'copying':689C 'crammed':54C 'create':285C 'creating':77C 'crypto':520C,560C 'css':336C 'curl':294C 'dark':382C 'dark-themed':381C 'days':137C 'december':217C 'decode':591C 'decompressed':601C 'decompresses':493C 'deep':317C 'defaulting':125C 'defensive':450C 
'deliberately':398C 'describes':82C,264C 'detail':307C 'diagrams':182C 'diff':100C 'discovered':267C 'distributed':276C 'do':452C,459C 'document':385C 'does':321C,391C 'drop':179C 'each':508C 'effectiveness':6A 'efficiency':150C 'elevated':431C 'else':113C 'embedded':495C 'emphasized':368C 'engineering':15B,448C 'errors':701C 'escalation':406C 'especially':166C 'examples':57C 'except':692C 'excited':239C 'executable':478C,576C,583C 'execute':427C 'expand':310C 'expected':700C 'experimenting':242C 'explain':303C 'explaining':369C 'explanation':173C,288C,342C,442C 'explanations':247C 'exploit':270C,291C,371C 'extremely':154C 'familiar':88C 'file':537C,556C,679C 'file-backed':678C 'findings':108C 'focus':94C 'focused':221C 'follow':579C 'for':39C,128C,167C,171C,209C,444C,507C 'format':46C 'from':49C 'function':612C 'g':639C 'generative':17B 'generative-ai':16B 'get':563C 'gisthost.github.io':358C 'gisthost.github.io/?ae53e3461ffdbfd0826156aacf025c7e).':357C 'go':316C 'goal':413C 'good':363C 'gpt':135C,283C,300C 'harder':648C 'harness':375C 'has':160C 'have':367C 'having':282C 'heading':481C,623C 'help':71C 'helper':611C 'here':159C,351C 'hex':598C,665C 'hidden':592C 'hides':667C 'high':483C 'high-level':482C 'hoc':253C 'how':323C 'html':8A,9B,40C,79C,152C,175C,211C,246C,287C,327C,334C,355C 'i':84C,122C,204C,237C,280C,365C 'ignores':699C 'image/page':422C 'import':636C 'important':529C 'in':131C,174C,180C,186C,248C,306C,420C,503C,606C 'in-memory':419C 'in-page':185C 'including':271C 'information':199C 'inline':102C 'instead':545C,660C 'interactive':183C,225C,345C 'interesting':56C 'interface':522C 'into':318C,567C,602C 'involving':516C,554C 'is':53C,395C,414C,443C,531C,534C,585C,600C,613C 'it':83C,177C,309C,320C,324C,360C,377C,510C,539C,546C,625C 'its':411C 'javascript':338C 'keeps':672C 'kernel':467C,519C,552C,696C 'last':216C 'left':479C 'level':484C 'like':68C,227C,292C,469C 'limit':143C 'linux':268C,401C,517C,658C 'linux-specific':400C 'llm':20B,298C 'llms':19B 
'local':403C 'logic':92C 'looks':626C 'm':85C,238C,299C 'make':340C,643C 'making':197C 'margin':103C 'markdown':11B,42C,132C,146C 'me':72C,162C 'means':176C 'meant':144C 'memory':421C 'might':114C 'more':200C,243C 'most':129C 'moves':677C 'much':223C 'my':231C 'names':663C 'navigate':203C 'navigation':188C 'neat':194C 'neatly':328C 'needed':116C 'never':540C 'normal':685C 'not':86C,453C,460C,535C 'note':440C 'numbered':577C 'numeric':657C 'obfuscated':278C,399C 'obtain':430C 'of':7A,192C,196C,274C,289C,333C,379C,409C,424C,475C,573C,661C 'offsets':617C 'on':31C,59C,95C,224C,230C,258C,456C,464C,543C,550C 'one':70C 'ones':229C 'only':492C,589C 'open':581C 'opened':586C 'opens':488C 'operation':697C 'or':462C 'ordinary':536C 'os':637C 'other':193C 'out':257C,311C 'output':45C,168C,326C 'over':41C,151C,372C 'own':461C 'page':187C,356C,570C 'page-cache':569C 'pages':557C,681C 'patch':605C 'pattern':632C 'patterns':208C 'payload':497C,502C,593C,669C 'performs':511C 'piece':27C,158C 'pipes':525C,683C 'placed':566C 'pleasant':201C 'point':530C 'possible':350C 'pr':75C 'pretty':362C 'privilege':405C 'privilege-escalation':404C 'privileged':477C,575C 'privileges':432C 'processes':500C 'prompt':14B,66C 'prompt-engineering':13B 'prompts':254C 'proof':273C,408C 'proof-of-concept':407C 'provoking':26C 'purpose':634C 'python':279C,374C,389C 'raw':656C 'read':491C,588C,650C 'read-only':490C,587C 'readable':662C 'reads':438C 'recently':266C 'reconsider':164C 'recv':691C 'reformat':308C 'rely':549C 'render':97C 'repeatedly':615C 'representation':572C 'request':48C 'response':249C 'resulting':354C 'reverse':447C 'review':73C 'rich':245C,343C 'right':621C 'run':454C 's':147C,157C,302C,352C,361C,518C 'safety':439C 'screenshot':378C 'script':390C,487C,645C,674C 'security':10B,269C 'sequence':515C 'severity':110C 'shihipar':30C 'short':640C 'should':366C 'simonwillison.net':214C 'simonwillison.net/2025/dec/10/html-tools/)':213C 'since':133C 'site':61C,236C 'small':675C 'so':93C 
'socket':521C,651C 'sorts':191C 'space':688C 'specific':402C 'splice':527C,676C 'spliced':555C 'start':241C 'static.simonwillison.net':703C 'static.simonwillison.net/static/2026/python-script-explainer.jpg)':702C 'steps':578C 'strange':627C 'streaming/backpressure':91C 'styled':329C 'su':428C 'suggestions':67C 'summary':485C 'svg':181C 'systems':457C 'table':630C 'tamper':416C 'target':582C 'team':35C 'technical':384C 'text':393C 'thariq':29C,156C 'thariqs.github.io':63C 'thariqs.github.io/html-effectiveness/))':62C 'that':81C,96C,145C,165C,219C,501C,532C 'the':4A,32C,51C,90C,98C,119C,134C,139C,198C,228C,290C,341C,353C,370C,373C,418C,473C,486C,528C,559C,568C,610C,644C,673C,695C 'themed':383C 'then':426C,499C 'things':130C 'this':60C,69C,74C,256C,293C,304C,388C,394C,441C,455C,470C,533C 'though':364C 'thought':25C 'thought-provoking':24C 'through':682C 'titled':386C 'to':47C,117C,126C,163C,202C,240C,250C,339C,415C,429C,548C,562C,642C,649C 'token':142C,149C 'token-efficiency':148C 'tools':212C 'tools.simonwillison.net':232C,234C 'tools.simonwillison.net/)':233C 'tried':281C 'triggers':694C 'try':690C 'trying':255C 'twitter.com':705C 'understanding':446C 'unreasonable':5A 'useful':207C 'user':687C 'user-space':686C 'uses':655C 'using':1A,331C 'utilities':226C 've':123C 'very':87C,222C 'vulnerable':466C 'was':153C,220C 'ways':195C 'well':121C 'what':319C,387C 'whatever':112C 'when':138C 'why':624C 'widgets':184C 'with':55C,89C,101C,244C,417C,616C,631C 'without':684C 'works':325C 'worthwhile':155C 'write':542C 'writing':538C 'wrote':205C 'yellow':435C 'yellow-bordered':434C 'you':458C 'zlib':596C 'zlib-compressed':595C",

"import_ref": null,

"card_image": "https://static.simonwillison.net/static/2026/python-script-explainer.jpg",

"series_id": null,

"use_markdown": true,

"is_draft": false,

"title": ""

} |

| blogmark |

2026-05-07 17:56:25+00:00 |

{

"id": 9442,

"slug": "firefox-claude-mythos",

"link_url": "https://hacks.mozilla.org/2026/05/behind-the-scenes-hardening-firefox/",

"link_title": "Behind the Scenes Hardening Firefox with Claude Mythos Preview",

"via_url": "https://lobste.rs/s/7zppv1/behind_scenes_hardening_firefox_with",

"via_title": "Lobste.rs",

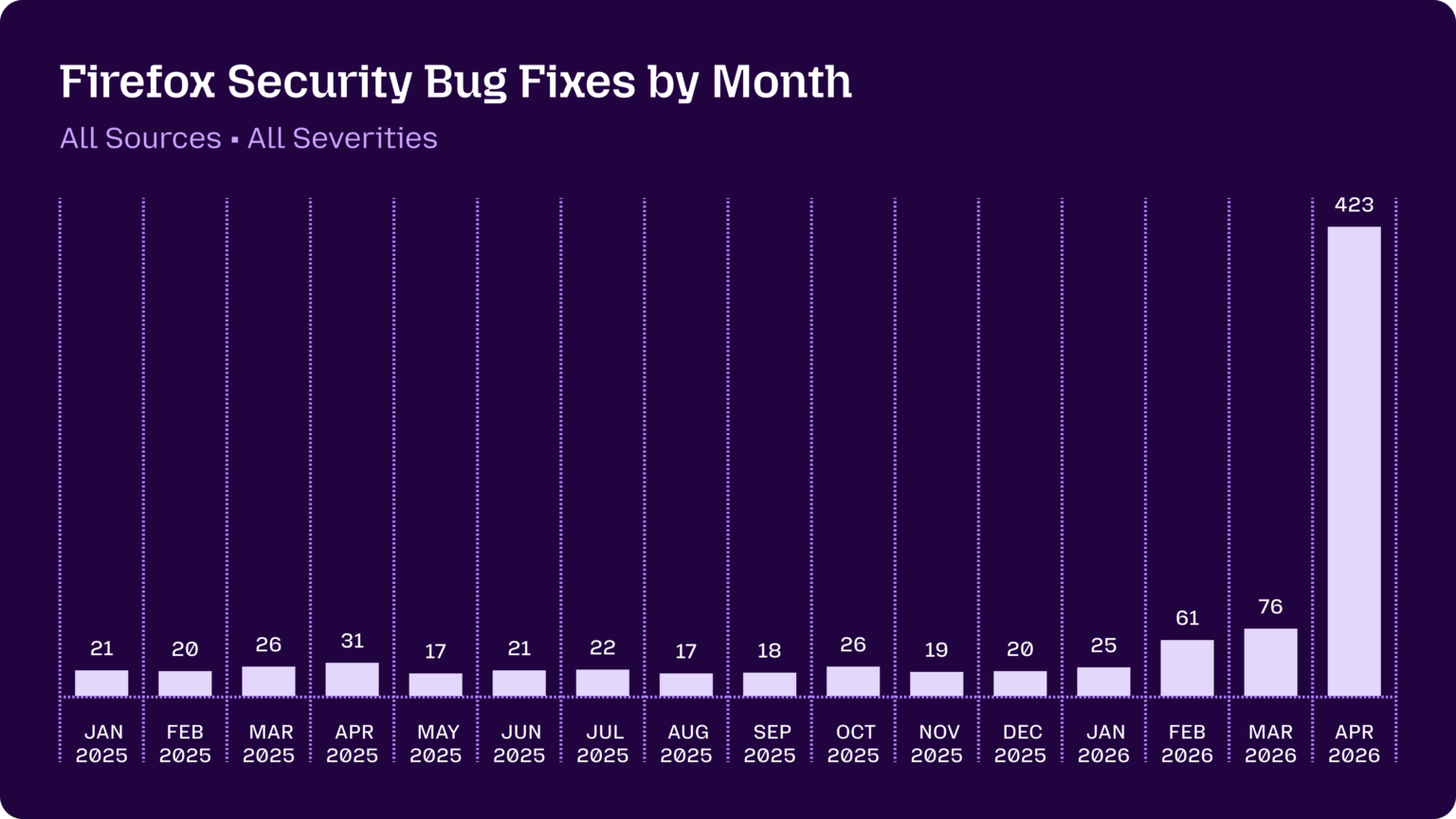

"commentary": "Fascinating, in-depth details on how Mozilla used their access to the Claude Mythos preview to locate and then fix hundreds of vulnerabilities in Firefox:\r\n\r\n> **Suddenly, the bugs are very good**\r\n> \r\n> Just a few months ago, AI-generated security bug reports to open source projects were mostly known for being unwanted slop. Dealing with reports that look plausibly correct but are wrong imposes an asymmetric cost on project maintainers: it\u2019s cheap and easy to prompt an LLM to find a \u201cproblem\u201d in code, but slow and expensive to respond to it.\r\n> \r\n> It is difficult to overstate how much this dynamic changed for us over a few short months. This was due to a combination of two main factors. First, the models got a lot more capable. Second, we dramatically improved our techniques for *harnessing* these models \u2014 steering them, scaling them, and stacking them to generate large amounts of signal and filter out the noise.\r\n\r\nThey include some detailed bug descriptions too, including a 20-year old XSLT bug and a 15-year-old bug in the `<legend>` element.\r\n\r\nA lot of the attempts made by the harness were blocked by Firefox's existing defense-in-depth measures, which is reassuring.\r\n\r\nMozilla were fixing around 20-30 security bugs in Firefox per month through 2025. That jumped to 423 in April.\r\n\r\n",

"created": "2026-05-07T17:56:25+00:00",

"metadata": {},

"search_document": "'-30':233C '/static/2026/firefox-security.webp)':328C '15':197C '17':285C,294C '18':297C '19':303C '20':190C,232C,276C,306C '2025':241C,272C,275C,278C,281C,284C,287C,290C,293C,296C,299C,302C,305C '2026':308C,311C,314C,317C '21':273C,288C '22':291C '25':309C '26':279C,300C '31':282C '423':245C,318C '61':312C '76':315C 'a':57C,106C,131C,139C,149C,189C,196C,205C,264C,319C 'access':34C 'ago':60C 'ai':13B,16B,21B,62C 'ai-generated':61C 'ai-security-research':20B 'all':259C,261C 'amounts':173C 'an':89C,102C 'and':42C,98C,112C,167C,176C,195C 'anthropic':18B 'apr':280C,316C 'april':247C 'are':53C,86C 'around':231C 'asymmetric':90C 'attempts':209C 'aug':292C 'background':267C 'bar':248C 'behind':1A 'being':75C 'blocked':215C 'bug':65C,185C,194C,201C,253C 'bugs':52C,235C 'but':85C,110C 'by':211C,216C,255C 'capable':152C 'changed':127C 'chart':249C 'cheap':97C 'claude':7A,19B,37C 'code':109C 'combination':140C 'correct':84C 'cost':91C 'counts':270C 'dark':265C 'dealing':78C 'dec':304C 'defense':221C 'defense-in-depth':220C 'depth':27C,223C 'descriptions':186C 'detailed':184C 'details':28C 'difficult':120C 'dramatic':320C 'dramatically':155C 'due':137C 'dynamic':126C 'easy':99C 'element':204C 'existing':219C 'expensive':113C 'factors':144C 'fascinating':24C 'feb':274C,310C 'few':58C,132C 'filter':177C 'final':324C 'find':105C 'firefox':5A,10B,49C,217C,237C,251C 'first':145C 'fix':44C 'fixes':254C 'fixing':230C 'for':74C,128C,159C 'generate':171C 'generated':63C 'generative':15B 'generative-ai':14B 'good':55C 'got':148C 'hacks.mozilla.org':329C 'hardening':4A 'harness':213C 'harnessing':160C 'how':30C,123C 'hundreds':45C 'imposes':88C 'improved':156C 'in':26C,48C,108C,202C,222C,236C,246C,322C 'in-depth':25C 'include':182C 'including':188C 'is':119C,226C 'it':95C,117C,118C 'jan':271C,307C 'jul':289C 'jumped':243C 'jun':286C 'just':56C 'known':73C 'large':172C 'llm':103C 'llms':17B 'lobste.rs':330C 'locate':41C 'look':82C 'lot':150C,206C 'made':210C 
'main':143C 'maintainers':94C 'mar':277C,313C 'may':283C 'measures':224C 'models':147C,162C 'month':239C,256C,325C 'monthly':269C 'months':59C,134C 'more':151C 'mostly':72C 'mozilla':11B,31C,228C 'much':124C 'mythos':8A,38C 'noise':180C 'nov':301C 'oct':298C 'of':46C,141C,174C,207C 'old':192C,200C 'on':29C,92C,263C 'open':68C 'our':157C 'out':178C 'over':130C 'overstate':122C 'per':238C 'plausibly':83C 'preview':9A,39C 'problem':107C 'project':93C 'projects':70C 'prompt':101C 'purple':266C 'reassuring':227C 'reports':66C,80C 'research':23B 'respond':115C 's':96C,218C 'scaling':165C 'scenes':3A 'second':153C 'security':12B,22B,64C,234C,252C 'sep':295C 'severities':262C 'short':133C 'showing':268C 'signal':175C 'slop':77C 'slow':111C 'some':183C 'source':69C 'sources':260C 'spike':321C 'stacking':168C 'static.simonwillison.net':327C 'static.simonwillison.net/static/2026/firefox-security.webp)':326C 'steering':163C 'subtitle':258C 'suddenly':50C 'techniques':158C 'that':81C,242C 'the':2A,36C,51C,146C,179C,203C,208C,212C,323C 'their':33C 'them':164C,166C,169C 'then':43C 'these':161C 'they':181C 'this':125C,135C 'through':240C 'titled':250C 'to':35C,40C,67C,100C,104C,114C,116C,121C,138C,170C,244C 'too':187C 'two':142C 'unwanted':76C 'us':129C 'used':32C 'very':54C 'vulnerabilities':47C 'was':136C 'we':154C 'were':71C,214C,229C 'which':225C 'with':6A,79C,257C 'wrong':87C 'xslt':193C 'year':191C,199C 'year-old':198C",

"import_ref": null,

"card_image": "https://static.simonwillison.net/static/2026/firefox-security.webp",

"series_id": null,

"use_markdown": true,

"is_draft": false,

"title": ""

} |

| blogmark |

2026-05-05 22:14:21+00:00 |

{

"id": 9440,

"slug": "our-ai-started-a-cafe-in-stockholm",

"link_url": "https://andonlabs.com/blog/ai-cafe-stockholm",

"link_title": "Our AI started a cafe in Stockholm",

"via_url": "https://news.ycombinator.com/item?id=48028289",

"via_title": "Hacker News",

"commentary": "Andon Labs previously [started an AI-run retail store](https://andonlabs.com/blog/andon-market-launch) in San Francisco. Now they're running a similar experiment in Stockholm, Sweden, only this time it's a cafe.\r\n\r\nThese experiments are interesting, and often throw out amusing anecdotes:\r\n\r\n> During the first week of inventory, Mona ordered 120 eggs even though the caf\u00e9 has no stove. When the staff told her they couldn\u2019t cook them, she suggested using the high-speed oven, until they pointed out the eggs would likely explode. She also tried to solve the problem of fresh tomatoes being spoiled too fast by ordering 22.5 kg of canned tomatoes for the fresh sandwiches. The baristas eventually started a \u201cHall of Shame\u201d, a shelf visible to customers with all the weird things Mona ordered, including 6,000 napkins, 3,000 nitrile gloves, 9L coconut milk, and industrial-sized trash bags.\r\n\r\nWhere they lose their shine is when these AI managers start wasting the time of human beings who have *not* opted into the experiment:\r\n\r\n> She also successfully applied for an outdoor seating permit through the Police e-service, which didn\u2019t require BankID. Her first submission included a sketch she had generated herself, despite having never seen the street outside the caf\u00e9. Unsurprisingly, the Police sent it back for revision. [...]\r\n>\r\n> When she makes a mistake, she often sends multiple emails to suppliers with the subject \u201cEMERGENCY\u201d to cancel or change the order.\r\n\r\nI don't think it's ethical to run experiments like this that affect real-world systems and steal time from people.\r\n\r\nI'm reminded of the incident last year where the AI Village experiment [infuriated Rob Pike](https://simonwillison.net/2025/Dec/26/slop-acts-of-kindness/) by sending him unsolicited gratitude emails as an \"act of kindness\". 
That was just an unwanted email - asking suppliers to correct mistakes that were made without a human-in-the-loop or wasting police time with slop diagrams feels a whole lot worse to me.\r\n\r\nI think experiments like this need to keep their own human operators in-the-loop for outbound actions that affect other people.",

"created": "2026-05-05T22:14:21+00:00",

"metadata": {},

"search_document": "'/2025/dec/26/slop-acts-of-kindness/)':302C '/blog/andon-market-launch)':31C '000':153C,156C '120':70C '22.5':122C '3':155C '6':152C '9l':159C 'a':4A,39C,50C,135C,139C,216C,242C,329C,343C 'act':311C 'actions':367C 'affect':274C,369C 'agents':15B 'ai':2A,8B,11B,14B,17B,25C,176C,294C 'ai-agents':13B 'ai-ethics':16B 'ai-run':24C 'all':145C 'also':107C,193C 'amusing':60C 'an':23C,197C,310C,317C 'and':56C,162C,279C 'andon':19C 'andonlabs.com':30C,372C 'andonlabs.com/blog/andon-market-launch)':29C 'anecdotes':61C 'applied':195C 'are':54C 'as':309C 'asking':320C 'back':236C 'bags':167C 'bankid':211C 'baristas':132C 'being':116C 'beings':184C 'by':120C,303C 'cafe':5A,51C 'caf\u00e9':75C,230C 'cancel':256C 'canned':125C 'change':258C 'coconut':160C 'cook':87C 'correct':323C 'couldn':85C 'customers':143C 'despite':222C 'diagrams':341C 'didn':208C 'don':262C 'during':62C 'e':205C 'e-service':204C 'eggs':71C,102C 'email':319C 'emails':248C,308C 'emergency':254C 'ethical':267C 'ethics':18B 'even':72C 'eventually':133C 'experiment':41C,191C,296C 'experiments':53C,270C,351C 'explode':105C 'fast':119C 'feels':342C 'first':64C,213C 'for':127C,196C,237C,365C 'francisco':34C 'fresh':114C,129C 'from':282C 'generated':220C 'generative':10B 'generative-ai':9B 'gloves':158C 'gratitude':307C 'hacker':373C 'had':219C 'hall':136C 'has':76C 'have':186C 'having':223C 'her':83C,212C 'herself':221C 'high':94C 'high-speed':93C 'him':305C 'human':183C,331C,359C 'human-in-the-loop':330C 'i':261C,284C,349C 'in':6A,32C,42C,332C,362C 'in-the-loop':361C 'incident':289C 'included':215C 'including':151C 'industrial':164C 'industrial-sized':163C 'infuriated':297C 'interesting':55C 'into':189C 'inventory':67C 'is':173C 'it':48C,235C,265C 'just':316C 'keep':356C 'kg':123C 'kindness':313C 'labs':20C 'last':290C 'like':271C,352C 'likely':104C 'llms':12B 'loop':334C,364C 'lose':170C 'lot':345C 'm':285C 'made':327C 'makes':241C 'managers':177C 'me':348C 'milk':161C 'mistake':243C 
'mistakes':324C 'mona':68C,149C 'multiple':247C 'napkins':154C 'need':354C 'never':224C 'news':374C 'nitrile':157C 'no':77C 'not':187C 'now':35C 'of':66C,113C,124C,137C,182C,287C,312C 'often':57C,245C 'only':45C 'operators':360C 'opted':188C 'or':257C,335C 'order':260C 'ordered':69C,150C 'ordering':121C 'other':370C 'our':1A 'out':59C,100C 'outbound':366C 'outdoor':198C 'outside':228C 'oven':96C 'own':358C 'people':283C,371C 'permit':200C 'pike':299C 'pointed':99C 'police':203C,233C,337C 'previously':21C 'problem':112C 're':37C 'real':276C 'real-world':275C 'reminded':286C 'require':210C 'retail':27C 'revision':238C 'rob':298C 'run':26C,269C 'running':38C 's':49C,266C 'san':33C 'sandwiches':130C 'seating':199C 'seen':225C 'sending':304C 'sends':246C 'sent':234C 'service':206C 'shame':138C 'she':89C,106C,192C,218C,240C,244C 'shelf':140C 'shine':172C 'similar':40C 'simonwillison.net':301C 'simonwillison.net/2025/dec/26/slop-acts-of-kindness/)':300C 'sized':165C 'sketch':217C 'slop':340C 'solve':110C 'speed':95C 'spoiled':117C 'staff':81C 'start':178C 'started':3A,22C,134C 'steal':280C 'stockholm':7A,43C 'store':28C 'stove':78C 'street':227C 'subject':253C 'submission':214C 'successfully':194C 'suggested':90C 'suppliers':250C,321C 'sweden':44C 'systems':278C 't':86C,209C,263C 'that':273C,314C,325C,368C 'the':63C,74C,80C,92C,101C,111C,128C,131C,146C,180C,190C,202C,226C,229C,232C,252C,259C,288C,293C,333C,363C 'their':171C,357C 'them':88C 'these':52C,175C 'they':36C,84C,98C,169C 'things':148C 'think':264C,350C 'this':46C,272C,353C 'though':73C 'through':201C 'throw':58C 'time':47C,181C,281C,338C 'to':109C,142C,249C,255C,268C,322C,347C,355C 'told':82C 'tomatoes':115C,126C 'too':118C 'trash':166C 'tried':108C 'unsolicited':306C 'unsurprisingly':231C 'until':97C 'unwanted':318C 'using':91C 'village':295C 'visible':141C 'was':315C 'wasting':179C,336C 'week':65C 'weird':147C 'were':326C 'when':79C,174C,239C 'where':168C,292C 'which':207C 'who':185C 'whole':344C 
'with':144C,251C,339C 'without':328C 'world':277C 'worse':346C 'would':103C 'year':291C",

"import_ref": null,

"card_image": null,

"series_id": null,

"use_markdown": true,

"is_draft": false,

"title": ""

} |

| quotation |

2026-05-05 00:46:29+00:00 |

{

"id": 2167,

"slug": "john-gruber",